Research and show me a benchmark comparison of GPT-5.5 and DeepSeek V4.


Key takeaways

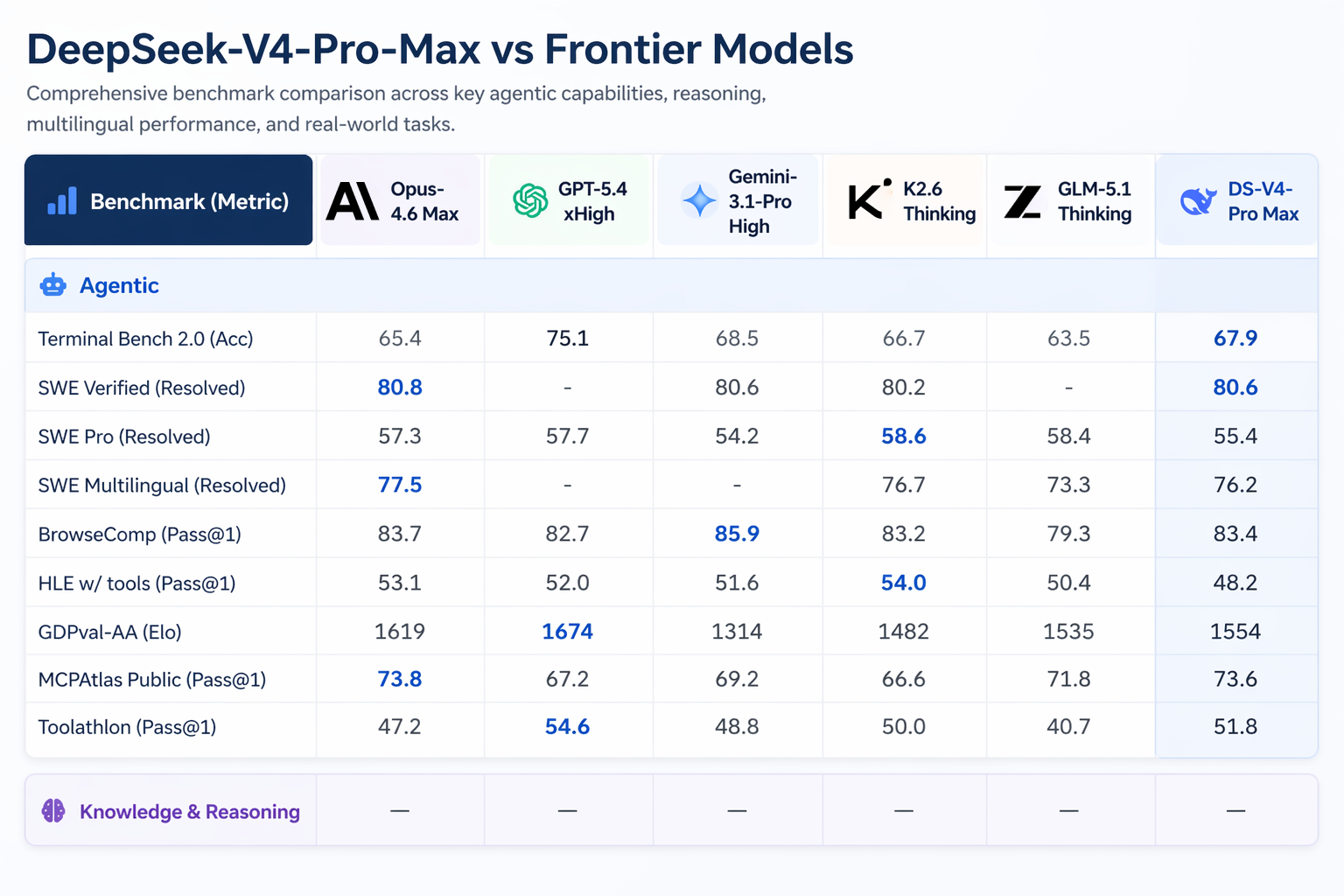

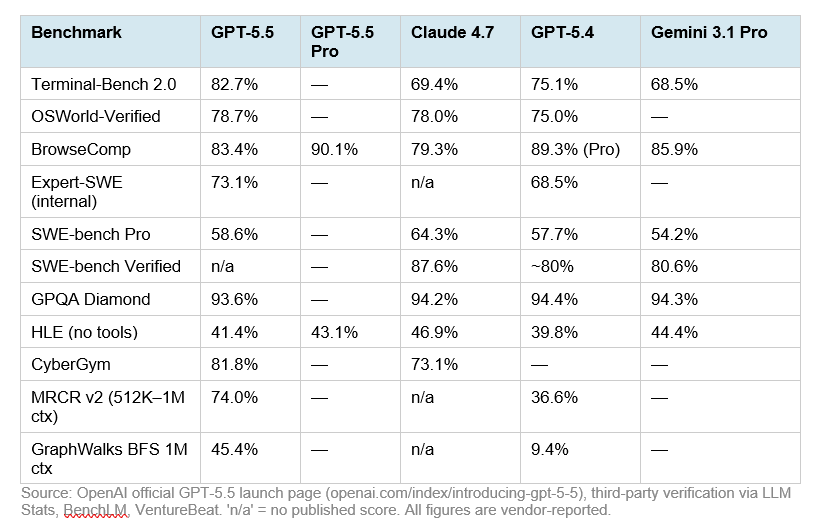

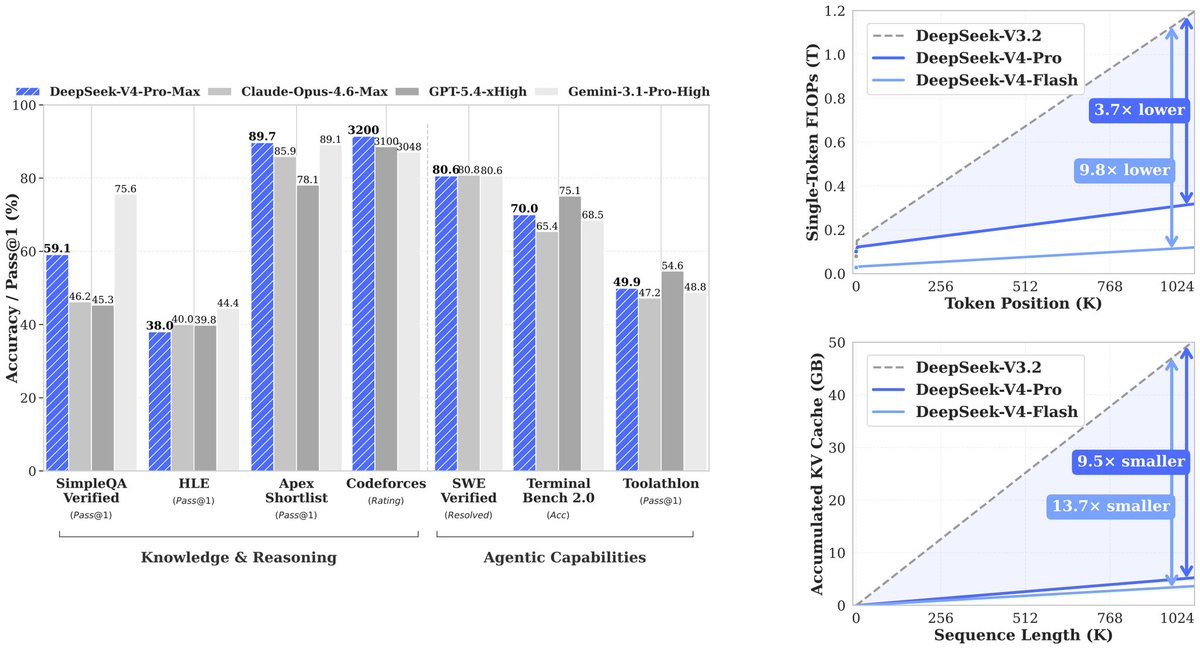

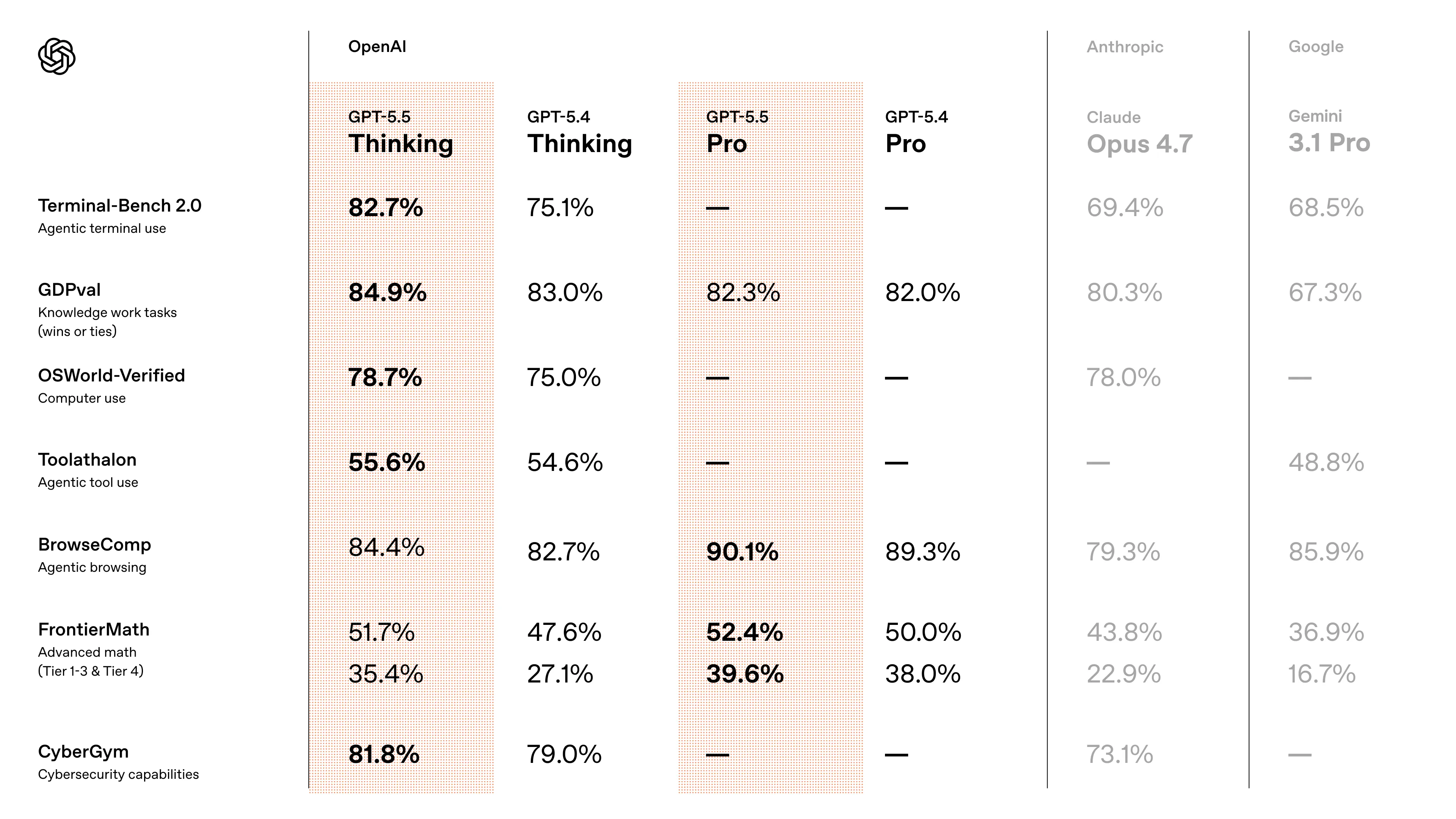

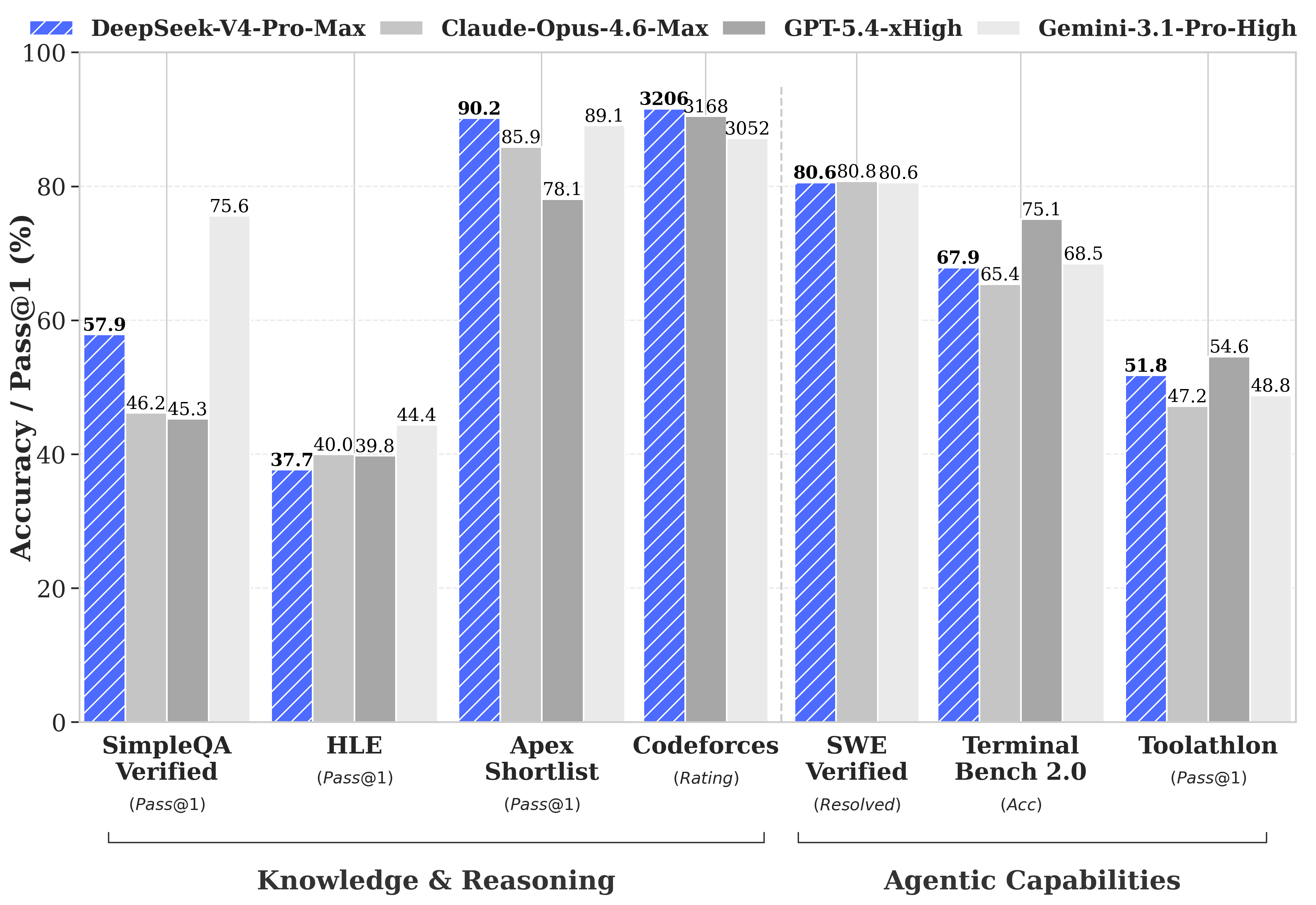

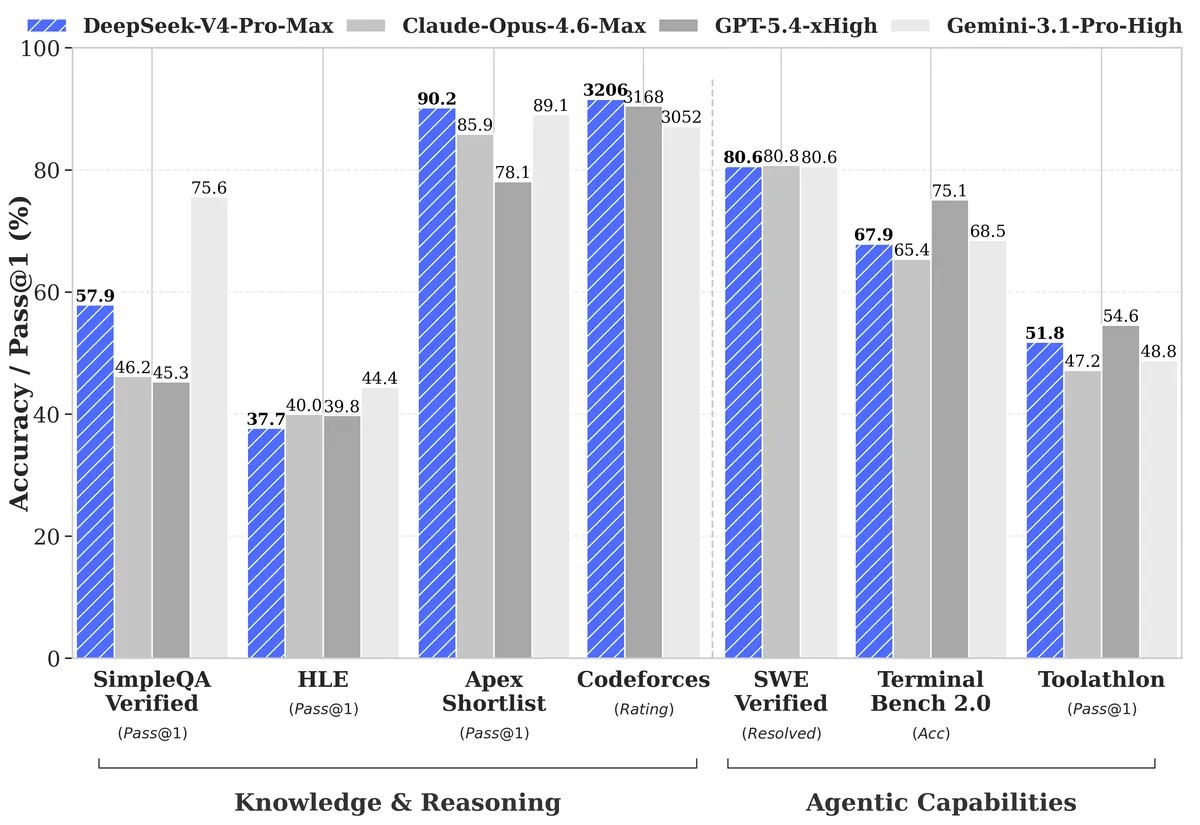

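- **Coding benchmarks: DeepSeek V4 Flash High leads GPT-5.5.** The available evidence shows DeepSeek V4 Flash High averaging 72.2 in the coding category versus 58.6 for GPT-5.5. The comparison names Terminal-Bench 2.0 as the sub-test with the widest gap [13].

- **Agentic tasks: GPT-5.5 leads DeepSeek V4 Flash High.** The same third-party comparison gives GPT-5.5 the edge in the agentic-tasks category, averaging 81.8 versus 55.4. BrowseComp is the sub-test with the widest gap [13].

- **Official positioning: OpenAI recommends GPT-5.5 for complex reasoning and coding.** OpenAI's API model documentation says to start with gpt-5.5 for complex reasoning and coding. For lower-latency, lower-cost workloads it suggests gpt-5.4-mini or gpt-5.4-nano [24].

- **Pricing: several reports describe the DeepSeek V4 series as much cheaper.** One report puts DeepSeek V4 Flash at $0.14 per million input tokens and $0.28 per million output tokens. By that report, it undercuts GPT-5.4 Nano, Gemini 3.1 Flash, GPT-5.4 Mini and Claude Haiku 4.5 [1].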


Research answer

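The available evidence supports only a limited comparison. GPT-5.5 is listed in OpenAI's API documentation, which recommends it for complex reasoning and coding workloads [24]. The direct benchmark evidence for DeepSeek V4 comes mainly from a third-party comparison page. That page shows DeepSeek V4 Flash High ahead of GPT-5.5 on the coding-category average, and GPT-5.5 ahead in the agentic-tasks category [13]. Official DeepSeek benchmarks, complete per-test scores and consistent version definitions are all missing. The overall conclusion should therefore be treated as a preliminary comparison. Insufficient evidence.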

Key findings

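- **Coding benchmarks: DeepSeek V4 Flash High leads GPT-5.5.** The available evidence shows DeepSeek V4 Flash High averaging 72.2 in the coding category versus 58.6 for GPT-5.5. The comparison names Terminal-Bench 2.0 as the sub-test with the widest gap [13].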

- **Agentic tasks: GPT-5.5 leads DeepSeek V4 Flash High.** The same third-party comparison gives GPT-5.5 the edge in the agentic-tasks category, averaging 81.8 versus 55.4. BrowseComp is the sub-test with the widest gap [13].

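- **Official positioning: OpenAI recommends GPT-5.5 for complex reasoning and coding.** OpenAI's API model documentation says to start with gpt-5.5 for complex reasoning and coding. For lower-latency, lower-cost workloads it suggests gpt-5.4-mini or gpt-5.4-nano [24].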

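- **Pricing: several reports describe the DeepSeek V4 series as much cheaper.** One report puts DeepSeek V4 Flash at $0.14 per million input tokens and $0.28 per million output tokens. By that report, it undercuts GPT-5.4 Nano, Gemini 3.1 Flash, GPT-5.4 Mini and Claude Haiku 4.5 [1].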

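- **GPT-5.5 pricing comes from media reports, not official sources.** One report gives these prices [2]:
  - GPT-5.5: $5 per million input tokens and $30 per million output tokens.
  - GPT-5.5 Pro: $30 per million input tokens and $180 per million output tokens.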

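- **Claims about the price advantage of DeepSeek V4 Preview / Pro are not fully consistent.** One report says DeepSeek V4 Preview costs about 85% less than GPT-5.5 [3]. Another report's headline says the V4 Pro version costs 98% less than GPT-5.5 Pro [2]. The reports also disagree on V4 Pro's input price: $0.145 per million tokens in [1] versus $1.74 in [2]. Both give $3.48 per million output tokens.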
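Percentage-savings claims depend on which prices are compared and on the mix of input and output tokens. Below is a minimal Python sketch, not a cost model from any of the sources. It assumes the following:
- Prices are the media-reported list prices from [1] and [2], using the $1.74 V4-Pro input price from [2].
- The workload is a hypothetical job of 2M input tokens and 500k output tokens.
- `Price`, `REPORTED`, `job_cost` and `savings_pct` are illustrative names, not part of any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """List price in USD per million tokens, as reported by the media (not official)."""
    input_usd: float
    output_usd: float


# Media-reported prices [1][2]. V4-Pro input is $1.74 in [2] but $0.145 in [1];
# this sketch uses the [2] figure.
REPORTED = {
    "gpt-5.5": Price(5.00, 30.00),
    "gpt-5.5-pro": Price(30.00, 180.00),
    "deepseek-v4-pro": Price(1.74, 3.48),
    "deepseek-v4-flash": Price(0.14, 0.28),
}


def job_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one job with the given input and output token counts."""
    return (input_tokens * price.input_usd + output_tokens * price.output_usd) / 1_000_000


def savings_pct(cheaper: float, pricier: float) -> float:
    """Percentage by which `cheaper` undercuts `pricier`."""
    return 100.0 * (1.0 - cheaper / pricier)


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens and 500k output tokens.
    inp, out = 2_000_000, 500_000
    v4_pro = job_cost(REPORTED["deepseek-v4-pro"], inp, out)
    gpt_pro = job_cost(REPORTED["gpt-5.5-pro"], inp, out)
    print(f"V4-Pro ${v4_pro:.2f} vs GPT-5.5 Pro ${gpt_pro:.2f}: "
          f"{savings_pct(v4_pro, gpt_pro):.1f}% cheaper")
    # Output price alone: 1 - 3.48/180 is about 98.1%, consistent with the "98%" headline.
    print(f"Output price only: {savings_pct(3.48, 180.00):.1f}% cheaper")
```

For that hypothetical mix, the sketch reports V4-Pro as about 96.5% cheaper than GPT-5.5 Pro. The output price alone gives about 98.1%. Because the percentage shifts with the input/output ratio, headline figures from different reports are hard to compare directly.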

Benchmark comparison

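| Dimension | GPT-5.5 | DeepSeek V4 | What the evidence supports so far |
|---|---|---|---|
| Coding average | 58.6 | 72.2 (DeepSeek V4 Flash High) | DeepSeek V4 Flash High leads in the available coding comparison [13] |
| Terminal-Bench 2.0 | Not given in [13] | Not given in [13] | Named as the sub-test behind the largest coding gap, but [13] gives no per-test scores. VentureBeat reports 82.7% for GPT-5.5 versus 67.9% for DeepSeek-V4-Pro-Max, a different variant [16] |
| Agentic tasks | 81.8 (leads) | 55.4 (DeepSeek V4 Flash High) | GPT-5.5 leads in agentic tasks; BrowseComp shows the widest gap [13] |
| Official positioning (complex reasoning / coding) | Officially recommended for complex reasoning and coding | No official DeepSeek positioning found | GPT-5.5's official positioning is clearer [24] |
| Price / cost | Reported at $5 input / $30 output per million tokens; Pro at $30 input / $180 output per million tokens | V4 Flash reported at $0.14 input / $0.28 output per million tokens | The DeepSeek V4 series is clearly cheaper in the available reports, but the price evidence is not a complete official comparison [1][2] |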
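The two category averages in the table point in opposite directions. Any single "winner" therefore depends on how the categories are weighted.

Below is a minimal Python sketch that uses only the BenchLM category averages [13]. BenchLM's own weighting and sub-test composition are not given in the source. The two-category blend and its `coding_weight` parameter are hypothetical illustrations, not BenchLM's methodology.

```python
# Category averages from the BenchLM comparison of DeepSeek V4 Flash (High) vs GPT-5.5 [13].
SCORES = {
    "coding": {"deepseek-v4-flash-high": 72.2, "gpt-5.5": 58.6},
    "agentic": {"deepseek-v4-flash-high": 55.4, "gpt-5.5": 81.8},
}


def gap(category: str) -> float:
    """DeepSeek minus GPT-5.5 in one category; positive means DeepSeek leads."""
    s = SCORES[category]
    return s["deepseek-v4-flash-high"] - s["gpt-5.5"]


def blended_gap(coding_weight: float) -> float:
    """Hypothetical two-category blend: coding_weight on coding, the rest on agentic tasks."""
    if not 0.0 <= coding_weight <= 1.0:
        raise ValueError("coding_weight must be between 0 and 1")
    return coding_weight * gap("coding") + (1.0 - coding_weight) * gap("agentic")


def break_even_weight() -> float:
    """Coding weight at which the blended gap is zero (solves w*c + (1-w)*a = 0)."""
    c, a = gap("coding"), gap("agentic")
    return a / (a - c)


if __name__ == "__main__":
    print(f"coding gap {gap('coding'):+.1f}, agentic gap {gap('agentic'):+.1f}")
    print(f"DeepSeek leads the blend only if coding weight > {break_even_weight():.2f}")
```

With these numbers the coding gap is +13.6 and the agentic gap is -26.4. The blend favors DeepSeek V4 Flash High only when coding carries more than about 66% of the weight.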

Evidence notes

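- The most direct benchmark evidence is a third-party comparison snippet of DeepSeek V4 Flash High vs GPT-5.5. It states only category averages: 72.2 vs 58.6 for coding, and 55.4 vs 81.8 for agentic tasks [13].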

- The existence of GPT-5.5 and its official intended use are backed by OpenAI's API documentation, so this part of the evidence is stronger [24].

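- DeepSeek V4's pricing and relative cost advantage come mainly from media reports and third-party articles. They do not come from an official DeepSeek price list or a full benchmark paper. The price comparison is therefore less reliable than official documentation [1][2][3][8].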

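- The available material mixes the names DeepSeek V4, V4 Flash High, V4 Preview and V4 Pro. These may not denote the same model or the same reasoning setting. The V4 Flash High coding score therefore cannot be treated as representative of every DeepSeek V4 version [1][2][3][8][13].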
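One safeguard against letting one variant's score stand in for another is to key each score by model, variant and reasoning setting. Lookups then fail when the exact combination is missing.

Below is a minimal Python sketch of that idea, not a tool used by any of the sources. The `Result` fields and the normalized labels are hypothetical. The three Terminal-Bench 2.0 figures are the ones quoted in [16] and [21].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    model: str      # model family as named by the source, e.g. "DeepSeek V4"
    variant: str    # e.g. "Pro" or "Flash"; "" when the source names no variant
    setting: str    # reasoning setting as labelled by the source, e.g. "Max"; "" if unstated
    benchmark: str
    score: float    # percent
    source: str     # citation label used in this document


# Terminal-Bench 2.0 figures quoted in the sources; the DeepSeek rows are two different variants.
RESULTS = [
    Result("DeepSeek V4", "Pro", "Max", "Terminal-Bench 2.0", 67.9, "[16]"),
    Result("DeepSeek V4", "Flash", "Max", "Terminal-Bench 2.0", 56.9, "[21]"),
    Result("GPT-5.5", "", "", "Terminal-Bench 2.0", 82.7, "[16]"),
]


def lookup(results: list[Result], model: str, variant: str, setting: str, benchmark: str) -> Result:
    """Return the one result matching every field exactly; never fall back to another variant."""
    wanted = (model, variant, setting, benchmark)
    matches = [r for r in results if (r.model, r.variant, r.setting, r.benchmark) == wanted]
    if len(matches) != 1:
        raise LookupError(f"expected 1 result for {wanted}, found {len(matches)}")
    return matches[0]


if __name__ == "__main__":
    print(lookup(RESULTS, "DeepSeek V4", "Pro", "Max", "Terminal-Bench 2.0").score)  # 67.9
    try:
        lookup(RESULTS, "DeepSeek V4", "Flash", "High", "Terminal-Bench 2.0")
    except LookupError as err:
        print("no Flash High figure:", err)
```

Asking for V4 Flash High raises an error instead of silently returning the Pro-Max or Flash-Max figure. This is the kind of substitution the note above warns against.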

Limitations / uncertainty

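- Insufficient evidence. The available evidence does not describe any of the following [13]:
  - the full benchmark suite or test methodology;
  - sample sizes or temperature settings;
  - tool-use configuration or context length;
  - the cost-normalization method or statistical significance.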

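- Insufficient evidence. The available evidence contains none of the following [24][13]:
  - official DeepSeek benchmarks;
  - an official OpenAI comparison table of GPT-5.5 benchmarks;
  - a complete, reproducible evaluation by an independent organization.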

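- Insufficient evidence. The available evidence lacks complete data on the following dimensions, so they cannot yet be compared reliably [24][13]: math, long context, knowledge QA, multimodality, safety, hallucination rate, tool-calling reliability and latency.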
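Missing sample sizes matter because the same score gap can be noise or signal, depending on how many tasks were run.

Below is a minimal sketch that computes Wilson score intervals for a pass rate. It assumes a score is a simple pass rate over n independent tasks. Category averages such as 72.2 and 58.6 are not simple pass rates, so this is illustrative only. The task counts are hypothetical because the sources do not report them.

```python
import math


def wilson_interval(passes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a pass rate; z = 1.96 gives roughly 95% coverage."""
    if n <= 0 or not 0 <= passes <= n:
        raise ValueError("need n > 0 and 0 <= passes <= n")
    p = passes / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


def overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True if two intervals overlap (a rough screen, not a formal significance test)."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    # Hypothetical task counts: the same 72% vs 59% pass rates at two sample sizes.
    for n in (100, 1000):
        a = wilson_interval(round(0.72 * n), n)
        b = wilson_interval(round(0.59 * n), n)
        print(n, [round(x, 3) for x in a], [round(x, 3) for x in b], "overlap:", overlap(a, b))
```

With 100 tasks per model, the intervals for 72% and 59% overlap; with 1,000 tasks they do not. Overlapping intervals are only a rough screen. A two-proportion test on the raw counts would be the more direct check, if the counts were published.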



Sources

- [1] DeepSeek previews new AI model that 'closes the gap' with frontier ... (techcrunch.com)

Notably, DeepSeek V4 is much more affordable than any frontier model available today. The smaller V4 Flash model costs $0.14 per million input tokens and $0.28 per million output tokens, undercutting GPT-5.4 Nano, Gemini 3.1 Flash, GPT-5.4 Mini, and Claude Haiku 4.5. The larger V4 Pro model, meanwhile, costs $0.145 per million input tokens and $3.48 per million output tokens, also undercutting Gemini 3.1 Pro, GPT-5.5, Claude Opus 4.7, and GPT-5.4. The launch comes a day after the U.S. accused China of stealing American AI labs’ IP on an indus…

- [2] DeepSeek V4 Is Here—Its Pro Version Costs 98% Less Than GPT 5.5 Pro (tech.yahoo.com)

And this ended up with Deepseek being able to offer a much cheaper price per token than its competitors, while providing comparable results. To put that in dollar terms: GPT-5.5 launched yesterday at $5 input and $30 output per million tokens with GPT-5.5 Pro priced at $30 per million input tokens and $180 per million output tokens. Deepseek V4-Pro is $1.74 input and $3.48 output. V4-Flash is $0.14 input and $0.28 output. Cline CEO Saoud Rizwan pointed out that if Uber had used DeepSeek instead of Claude, its 2026 AI budget—reportedly enough for four months of usage—would have lasted seven ye…

- [3] DeepSeek V4 is here: How it compares to ChatGPT, Claude, Gemini (sea.mashable.com)

DeepSeek V4 Preview costs about 85 percent less than GPT-5.5. By Timothy Beck Werth. Anything you can do I can do better... That may as well be the motto for the AI arms race, which is unfolding across multiple dimensions in 2026. There's the competition between Silicon Valley AI labs like Anthropic, OpenAI, and Google DeepMind, the race for chips and compute power, and, of course, the fierce competitio…

- [4] DeepSeek V4 Pro (Reasoning, Max Effort) vs GPT-5.5 (xhigh) (artificialanalysis.ai)

Model comparison (DeepSeek V4 Pro (Reasoning, Max Effort) / GPT-5.5 (xhigh)):
- Creator: DeepSeek / OpenAI.
- Context window: 1000k tokens (~1500 A4 pages of size 12 Arial font) / 922k tokens (~1383 A4 pages of size 12 Arial font). DeepSeek V4 Pro (Reasoning, Max Effort) is larger than GPT-5.5 (xhigh).
- Release date: April, 2026 / April, 2026. DeepSeek V4 Pro (Reasoning, Max Effort) has a more recent release date than GPT-5.5 (xhigh).
- Image input support: GPT-5.5 (xhigh) has image input support while DeepSeek V4 Pro (Re…

- [5] DeepSeek V4: Features, Benchmarks, and Comparisons - DataCamp (datacamp.com)

Discover DeepSeek V4 features, pricing, and 1M context efficiency. We compare V4 Pro and Flash benchmarks against frontier models like GPT-5.5 and Opus 4.7. Apr 23, 2026 · 7 min read. After months of rumors and hot on the heels of the new GPT-5.5 and Claude Opus 4.7, DeepSeek has finally released DeepSeek V4. The release comes in the form of two preview models, V4-Pro and V4-Flash, hitting the market with aggressive pricing and near-frontier performance. DeepSeek V4-Pro boasts 1.6 trillion total parameters with a 1-million-token context wind…

- [6] GPT-5.5: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

GPT-5.5 · OpenAI · Apr 2026 · Proprietary. GPT-5.5 is OpenAI's smartest model yet, designed for real work across agentic coding, computer use, knowledge work, and early scientific research. It matches GPT-5.4 per-token latency in real-world serving while reaching a much higher…

- [7] OpenAI's GPT-5.5 Launches With 91.7% Benchmark Score (mexc.com)

Timothy Morano, Apr 23, 2026 18:49. OpenAI’s GPT-5.5 debuts with enhanced legal AI capabilities, scoring 91.7% on benchmarks. Available now for ChatGPT Plus and Pro users. OpenAI has officially unveiled GPT-5.5, its latest AI model, on April 23, 2026, pushing the boundaries of artificial intelligence in professional and technical applications. Early evaluations show the model scoring an impressive 91.7% on Harvey.ai’s BigLaw Bench evaluation suite, edging out GPT-5.4’s already strong 91.0%. This marks a new high for OpenAI’s generative models and positions GPT-5.5 as a powerful tool for legal a…

- [8] DeepSeek V4 vs GPT-5.5: Benchmarks, Costs & Security - LockLLM (lockllm.com)

General reasoning benchmarks indicate a tightening race at the frontier, with models trading marginal percentage points across standardized tests. In the MMLU-Pro metric, which measures multidisciplinary academic knowledge, Gemini 3.1-Pro High leads the cohort at 91.0 percent, followed closely by Opus-4.6 Max at 89.1 percent, with DeepSeek V4-Pro-Max scoring 87.5 percent. When evaluating performance on extremely difficult scientific reasoning, such as the GPQA Diamond benchmark, the frontier models converge at the highest echelons. Gemini 3.1-Pro achieves 94.3 percent, GPT-5.4 xHigh reaches 9…

- [9] GPT-5.5 Benchmarks 2026: Scores, Rankings & Performance (benchlm.ai)

According to BenchLM.ai, GPT-5.5 ranks #5 out of 112 models on the provisional leaderboard with an overall score of 89/100. It also ranks #2 out of 16 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. GPT-5.5 is a proprietary model with a 1M token context window. It uses explicit chain-of-thought reasoning, which typically improves performance on math and complex reasoning tasks at the cost of…

- [10] DeepSeek vs GPT-4: Real Developer Benchmarks & Performance ... (sitepoint.com)

DeepSeek vs GPT-4: Real Developer Benchmarks & Performance Comparison 2026. SitePoint Team. The LLM market is saturated with competing models and conflicting performance claims. For developers evaluating DeepSeek vs GPT-4o benchmarks, marketing collateral is insufficient. This article presents head-to-head benchmark data, pricing analysis, runnable Node.js code examples for reproducing tests, and a final verdict organized by use case.

- [11] AI Model Benchmarks Apr 2026 | Compare GPT-5, Claude 4.5 ... (lmcouncil.ai)

Factorio Learning Environment:

| Rank | Model | Score |
|---|---|---|
| 1 | Claude 3.7 Sonnet | 29.1 |
| 2 | Claude 3.5 Sonnet (Jun '24) | 28.1 |
| 3 | Gemini 2.5 Pro Exp (Mar '25) | 18.4 |
| 4 | GPT-4o (Nov '24) | 16.6 |
| 5 | DeepSeek-V3 | 15.1 |

GeoBench:

| Rank | Model | Score |
|---|---|---|
| 1 | Gemini 3 Pro Preview | 3893 |
| 2 | Gemini 2.0 Flash Thinking Exp | 3873 |
| 3 | Gemini 2.5 Pro Exp (Mar '25) | 3871 |
| 4 | Gemini 2.5 Pro Preview (Jun '25) | 3836 |
| 5 | o3 (high) | 3789 |

Last updated Mar 6th, 2026. Data source: Epoch AI & Scale AI. [...] AI Model Benchmarks Apr 2026: 18 benchmarks - the world's…

- [12] DeepSeek vs ChatGPT 2026: The $0 AI That Rivals GPT-5 [Full Comparison] (tech-insider.org)

Is DeepSeek as good as ChatGPT in 2026? DeepSeek V4 matches or exceeds ChatGPT GPT-5 on most coding and reasoning benchmarks. ChatGPT has the edge in multimodal tasks (image generation, web browsing) and conversational polish. For pure technical performance, DeepSeek is comparable at a fraction of the cost. Is DeepSeek free? DeepSeek offers a free tier with generous daily limits and open-weight models that can be self-hosted at zero cost. API pricing is dramatically lower than ChatGPT — roughly 90% cheaper per million tokens for comparable model tiers. Is DeepSeek safe to use? Is…

- [13] DeepSeek V4 Flash (High) vs GPT-5.5: AI Benchmark Comparison 2026 | BenchLM.ai (benchlm.ai)

DeepSeek V4 Flash (High) has the edge for coding in this comparison, averaging 72.2 versus 58.6. Inside this category, Terminal-Bench 2.0 is the benchmark that creates the most daylight between them. Which is better for agentic tasks, DeepSeek V4 Flash (High) or GPT-5.5? GPT-5.5 has the edge for agentic tasks in this comparison, averaging 81.8 versus 55.4. Inside this category, BrowseComp is the benchmark that creates the most daylight between them.

- [14] DeepSeek V4 Preview: The Complete 2026 Guide - o-mega | AI (o-mega.ai)

6. Head-to-Head: DeepSeek V4 vs GPT-5.5 The comparison between DeepSeek V4-Pro and GPT-5.5 is the headline matchup, and the nuances matter more than the top-line numbers suggest. GPT-5.5 holds clear advantages in certain areas, DeepSeek V4-Pro leads in others, and the pricing gap creates a gravitational pull that shapes the decision for most teams. On SWE-bench Verified, GPT-5.5 leads with 88.7% versus V4-Pro's 80.6%, an 8.1-point gap. This is the benchmark where GPT-5.5 shows its strongest advantage, and it matters because SWE-bench Verified is the closest proxy for real-world software en…

- [15] DeepSeek V4 vs GPT-5.5: The Ultimate AI Model Comparison Guide (skywork.ai)

- [16] DeepSeek-V4 arrives with near state-of-the-art intelligence at 1/6th ... (venturebeat.com)

On Terminal-Bench 2.0, DeepSeek scores 67.9%, close to Claude Opus 4.7’s 69.4%, but far behind GPT-5.5’s 82.7%.

| Benchmark | DeepSeek-V4-Pro-Max | GPT-5.5 | GPT-5.5 Pro, where shown | Claude Opus 4.7 | Best result among these |
|---|---|---|---|---|---|
| GPQA Diamond | 90.1% | 93.6% | n/a | 94.2% | Claude Opus 4.7 |
| Humanity’s Last Exam, no tools | 37.7% | 41.4% | 43.1% | 46.9% | Claude Opus 4.7 |
| Humanity’s Last Exam, with tools | 48.2% | 52.2% | 57.2% | 54.7% | GPT-5.5 Pro |
| Terminal-Bench 2.0 | 67.9% | 82.7% | n/a | 69.4% | GPT-5.5 |
| SWE-Bench Pro / SWE Pro | 55.4% | 58.6% | n/a | 64… | |

- [17] GPT-5.5 vs DeepSeek V4: Closed Premium vs Open Budget (2026) - TokenMix Blog (tokenmix.ai)

Benchmark head-to-head:

| Benchmark | GPT-5.5 | V4-Pro | V4-Flash |
|---|---|---|---|
| SWE-Bench Verified | 88.7% | ~85% | ~78% |
| SWE-Bench Pro | 58.6% | ~55% | ~48% |
| Terminal-Bench 2.0 | 82.7% | ~75% | ~68% |
| MMLU | 92.4% | ~89% | ~84% |
| AIME 2025 | n/a | ~94 | ~88 |
| Context | 256K | 1M | 1M |
| Hallucination (-60% vs predecessor) | Claimed | n/a | n/a |
| Omnimodal (text+image+audio+video) | Yes | No | No |

The gap analysis: on SWE-Bench Verified, GPT-5.5 leads V4-Pro by 3.7 points; on Terminal-Bench 2.0, GPT-5.5 leads V4-Pro by ~7 points; on context, V4 leads GPT-5.5 by 4×; on openness, V4 wi…

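- [18] Kimi K2.6, DeepSeek V4, GPT-5.5, Claude Opus 4.7: which should you test first? | LaoZhang AI Blog (blog.laozhang.ai)

| Contract item | Kimi K2.6 | DeepSeek V4 | GPT-5.5 | Claude Opus 4.7 |
|---|---|---|---|---|
| Route | Kimi platform | DeepSeek API | ChatGPT and Codex first | Anthropic API and cloud platforms |
| Deployment label | kimi-k2.6 | deepseek-v4-flash or deepseek-v4-pro | recheck once the API docs are public | claude-opus-4-7 |
| Context | 256k class | 1M, max output 384K | API context to be rechecked | 1M |
| Pricing source | Kimi RMB price page | DeepSeek USD price page | the current API guide has no GPT-5.5 API price row | Anthropic USD price page |

Sources checked on April 24, 2026: DeepSeek V4 release notes, DeepSeek pricing page, Kimi K2.6 pricing page, OpenAI latest-model guide, Claude model overview and Claude pricing page. Recheck before changing production defaults. Why DeepSeek V4 changes the comparison: DeepSeek V4 is not just a new name in the headline. It gives the DeepSeek route a current model ID, a price row, a context length and a compatible interface. Flash is the cheap default candidate, Pr…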

- [19] After more than a year... DeepSeek releases new V4 model, million-token context becomes standard | Mainland Politics & Economy | Cross-Strait | Economic Daily News (money.udn.com)

Just hours after OpenAI released GPT-5.5, mainland Chinese AI startup DeepSeek (深度求索) announced on the 24th that preview versions of its new DeepSeek-V4 model series are live and open-sourced at the same time. The new models can handle ultra-long contexts of up to one million characters. They lead among mainland Chinese and open-source models in agent capabilities, world knowledge and reasoning performance. Mainland media assess that the new models use Huawei Ascend chips. The release comes 15 months after DeepSeek's last major version update in January of last year. Shanghai's Yicai reports that V4 comes in two sizes, Pro and Flash; the Pro version has 1.6 trillion parameters, with activated parameters of 49…

- [20] DeepSeek-V4 and GPT-5.5 launch on the same day! What are the highlights of the new DeepSeek? | deepseek_NetEase Subscription (163.com)

- [21] DeepSeek V4 vs GPT-5.5: full benchmark data comparison - AtomGit open-source community (gitcode.csdn.net)

Table 2: math, long-context and agent capabilities

| Benchmark | DS-V4-Pro Max | DS-V4-Flash Max | GPT-5.5 | GPT-5.4 xHigh (reference) | Best model |
|---|---|---|---|---|---|
| HMMT 2026 Feb (Pass@1) | 95.2 | 94.8 | (not provided) | 97.7 | GPT-5.4 xHigh |
| FrontierMath Tier 1-3 | (see Tier 4) | (see Tier 4) | 51.7% | 47.6% | GPT-5.5 |
| FrontierMath Tier 4 | (by analogy 35.4) | (by analogy 35.4) | 35.4% | 27.1% | GPT-5.5 |
| Apex Shortlist (reasoning) | 90.2 | 85.7 | (not provided) | 78.1 | DS-V4-Pro Max |
| MRCR 1M (long-context retrieval) | 83.5 | 78.7 | (512K-1M: 74.0%) | 36.6% | DS-V4-Pro Max |
| Terminal-Bench 2.0 (agent) | 67.9 | 56.9 | 82.7% | 75.1% | GPT-5.5 |
| Toolathlon (tool calling) | 51.8 | 47… | | | |

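- [22] DeepSeek V4 official release deep dive: 1.6T parameters, million-token context, all-domestic compute. Was launching on the same day as GPT-5.5 a coincidence? _java_拾-光 - AtomGit open-source community (gitcode.csdn.net)

On SWE Verified, four flagship models tie at 80.6%. This is fairly rare in benchmark history and suggests the metric is approaching the current technical ceiling.

Knowledge and reasoning:

| Benchmark | V4-Pro-Max | Opus 4.6 Max | GPT-5.4 xHigh | Gemini-3.1-Pro |
|---|---|---|---|---|
| Apex Shortlist | 90.2% 🥇 | ~85% | ~88% | ~87% |
| AIME 2026 | 99.4% | ~96% | ~97% | ~98% |
| IMO Answer Bench | 88.4% | ~82% | ~85% | ~86% |
| SimpleQA Verified | 57.9% | ~48% | ~55% | 75.6% 🥇 |
| MMLU | 92.8% | 91.5% | 93.0% 🥇 | 92.5% |

SimpleQA-Verified (factual QA, no making things up): Gemini still leads at 75.6%, a traditional Gemini strength. V4's 57.9% already exceeds every evaluated open-source model by about 20 percentage points.

Developers' subjective assessment: DeepSeek says the Pro version's user experience is "better than Sonnet 4.5, with delivery quality close to Opus 4.6 in non-thinking mode, but compared with Opus 4.6 in thinking mode there remains a…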

- [23] GPT-5.5 officially released, DeepSeek-V4 preview launched and open-sourced: impact and related concepts _Caifuhao_East Money (caifuhao.eastmoney.com)

股海欢腾, April 24, 2026, 15:17, Beijing.

- [24] Models | OpenAI API (developers.openai.com)

Start with gpt-5.5 for complex reasoning and coding, or choose gpt-5.4-mini and gpt-5.4-nano for lower-latency, lower-cost workloads. 4 hours ago

- [25] Introducing GPT-Rosalind for life sciences research - OpenAI (openai.com)

OpenAI introduces GPT-Rosalind, a frontier reasoning model built to accelerate drug discovery, genomics analysis, protein reasoning, ... Apr 16, 2026

- [26] Introducing GPT-5 - OpenAI (openai.com)

A smarter, more widely useful model. GPT‑5 not only outperforms previous models on benchmarks and answers questions more quickly, but—most ... Aug 7, 2025

- [27] Introducing GPT-5.5 - OpenAI (openai.com)

Introducing GPT-5.5, our smartest model yet—faster, more capable, and built for complex tasks like coding, research, and data analysis ... 2 days ago

- [28] [PDF] GeneBench: Assessing AI Agents for Multi-Stage Inference ... - OpenAI (cdn.openai.com)

We introduce GeneBench, a benchmark for AI agents on realistic multi-stage scientific data analysis in genetics and quantitative biology. 2 days ago

- [29] GPT-5.5 is here! Available in the API, Codex and ChatGPT today (community.openai.com)

Introducing GPT-5.5 A new class of intelligence for real work and powering agents, built to understand complex goals, use tools, ... 2 days ago

- [30] GPT-5.5 System Card - OpenAI (openai.com)

GPT‑5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing information, ... 2 days ago

- [31] Introducing OpenAI Privacy Filter (openai.com)

Introducing OpenAI Privacy Filter. Our state of the art model for masking personally identifiable information (PII) in text. 3 days ago

- [32] OpenAI Deployment Safety Hub: System cards & other updates (deploymentsafety.openai.com)

Apr 23, 2026. GPT-5.5 System Card. GPT-5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing… Apr 21, ...

- [33] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

GPT-5.5 is the highest scoring model on this benchmark, achieving a median score of of 50.5%, but does not significantly improve over GPT ... 2 days ago

- [34] DeepSeek-V4 Pro vs GPT-5 Pro - Detailed Performance & Feature Comparison (docsbot.ai)

| Benchmark | DeepSeek-V4 Pro | GPT-5 Pro |
|---|---|---|
| MCP-Atlas (tool-use benchmark focused on MCP (Model Context Protocol) tasks; typically reported on a public subset) | 73.6%, public set, Pass@1, Think Max | Not available |
| Codeforces (competitive programming benchmark reported as Codeforces rating; higher is better) | 3206 rating, Think Max | Not available |
| IMO Answer Bench (olympiad-style math benchmark focused on solution/answer correctness) | 89.8%, Pass@1, Think Max | Not available |
| Terminal-Bench 2.0 (evaluates agentic terminal coding capabilities using the Terminus-2 agent framework) | 67.9%, Terminal Bench 2.0, Ac… | |

- [35] DeepSeek-V4 Pro vs GPT-5.5 - Detailed Performance & Feature Comparison (docsbot.ai)

| Benchmark | DeepSeek-V4 Pro | GPT-5.5 |
|---|---|---|
| Codeforces (competitive programming benchmark reported as Codeforces rating; higher is better) | 3206 rating, Think Max | Not available |
| IMO Answer Bench (olympiad-style math benchmark focused on solution/answer correctness) | 89.8%, Pass@1, Think Max | Not available |
| Tau2Bench Telecom (evaluates function calling capabilities in telecom domain scenarios) | Not available | Original prompts, no prompt adjustment |
| Terminal-Bench 2.0 (evaluates agentic terminal coding capabilities using the Terminus-2 agent framework) | Terminal Bench 2.0, Acc, Think Max | Terminal-… |

- [36] DeepSeek-V4 Pro vs GPT‑5 mini - Detailed Performance & Feature Comparison (docsbot.ai)

| Benchmark | DeepSeek-V4 Pro | GPT‑5 mini |
|---|---|---|
| Codeforces (competitive programming benchmark reported as Codeforces rating; higher is better) | 3206 rating, Think Max | Not available |
| IMO Answer Bench (olympiad-style math benchmark focused on solution/answer correctness) | 89.8%, Pass@1, Think Max | Not available |
| Tau2Bench Telecom (evaluates function calling capabilities in telecom domain scenarios) | Not available | 74.1% |
| Terminal-Bench 2.0 (evaluates agentic terminal coding capabilities using the Terminus-2 agent framework) | 67.9%, Terminal Bench 2.0, Acc, Think Max | 38.2%, Terminal-Bench 2.0 … |

- [37] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performance (llm-stats.com)

LiveCodeBench: LiveCodeBench is a holistic and contamination-free evaluation benchmark for large language models for code. It continuously collects new problems from programming contests (LeetCode, AtCoder, CodeForces) and evaluates four different scenarios: code generation, self-repair, code execution, and test output prediction. Problems are annotated with release dates to enable evaluation on unseen problems released after a model's training cutoff. Rank #1, 0.94/1 (methodology: Pass@1; source: huggingface.co). [...] GPQA: #13 of 11. A challenging dataset of 448 mu…

- [38] GPT-5.5 vs DeepSeek-V4 Pro - DocsBot AI (docsbot.ai)

| Benchmark | GPT-5.5 | DeepSeek-V4 Pro |
|---|---|---|
| FrontierMath (Tier 4) (advanced mathematics benchmark covering FrontierMath Tier 4 tasks with Python assistance) | 35.4% | Not available |
| GPQA Diamond (GPQA Diamond tier, graduate-level physics assessment) | 93.6% | 90.1%, Pass@1, Think Max |
| GDPval (wins or ties) (economic knowledge work evaluation measuring win/tie rates versus industry professionals across 44 occupations) | 84.9% wins or ties vs industry professionals across 44 occupations | Not available |
| GDPval-AA (economic knowledge work evaluation focused on accuracy-adjusted GDPval results) | Not availabl… | |

- [39] DeepSeek V4: The Next Frontier of Open-Source AI - Agentic AI (kenhuangus.substack.com)

High mode (the default) activates standard reasoning chains, suitable for most applications. Max mode allocates the maximum compute budget for deep reasoning, achieving the highest benchmark scores but at greater latency and cost. In Max mode, V4-Pro scores 91.2 on MMLU-Pro, 90.1 on GPQA Diamond, 93.5 on LiveCodeBench, and achieves a Codeforces Elo rating of 3,206, outperforming 96.3% of human competitive programmers.