Research the benchmark performance of GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4, and compare the models on the basis of those benchmarks.

Key takeaways

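- No credible overall ranking of the four models is possible: the material you provided contains no shared benchmark scores and no benchmark evidence at all for GPT-5.5.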

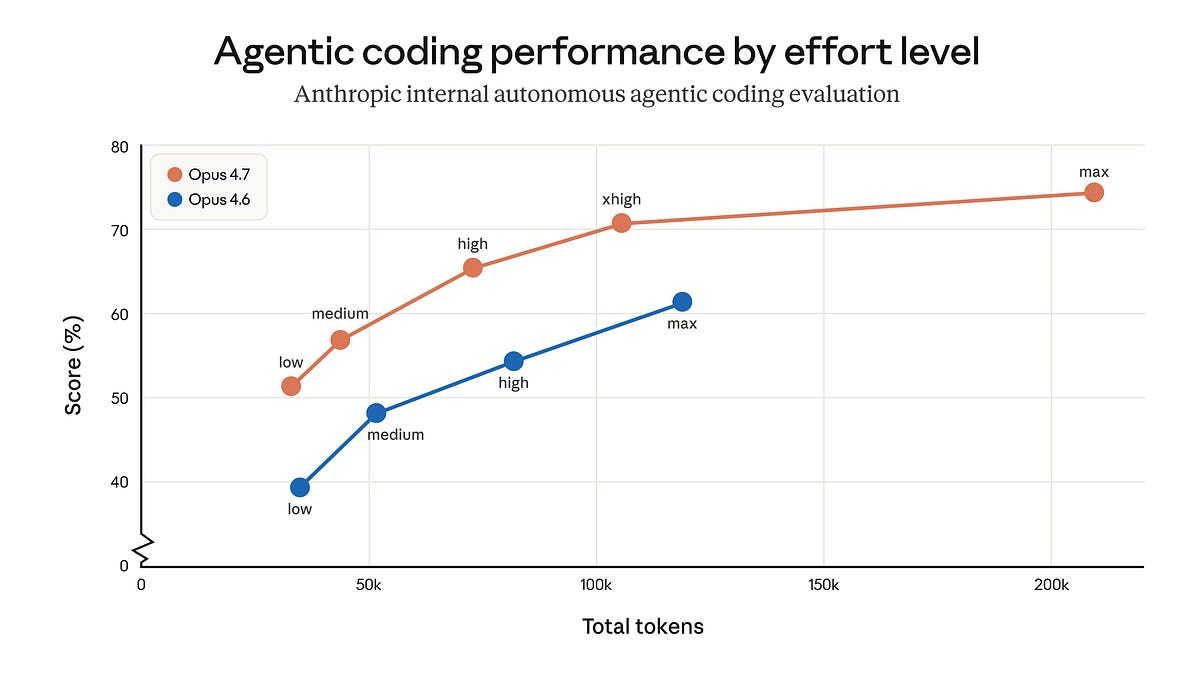

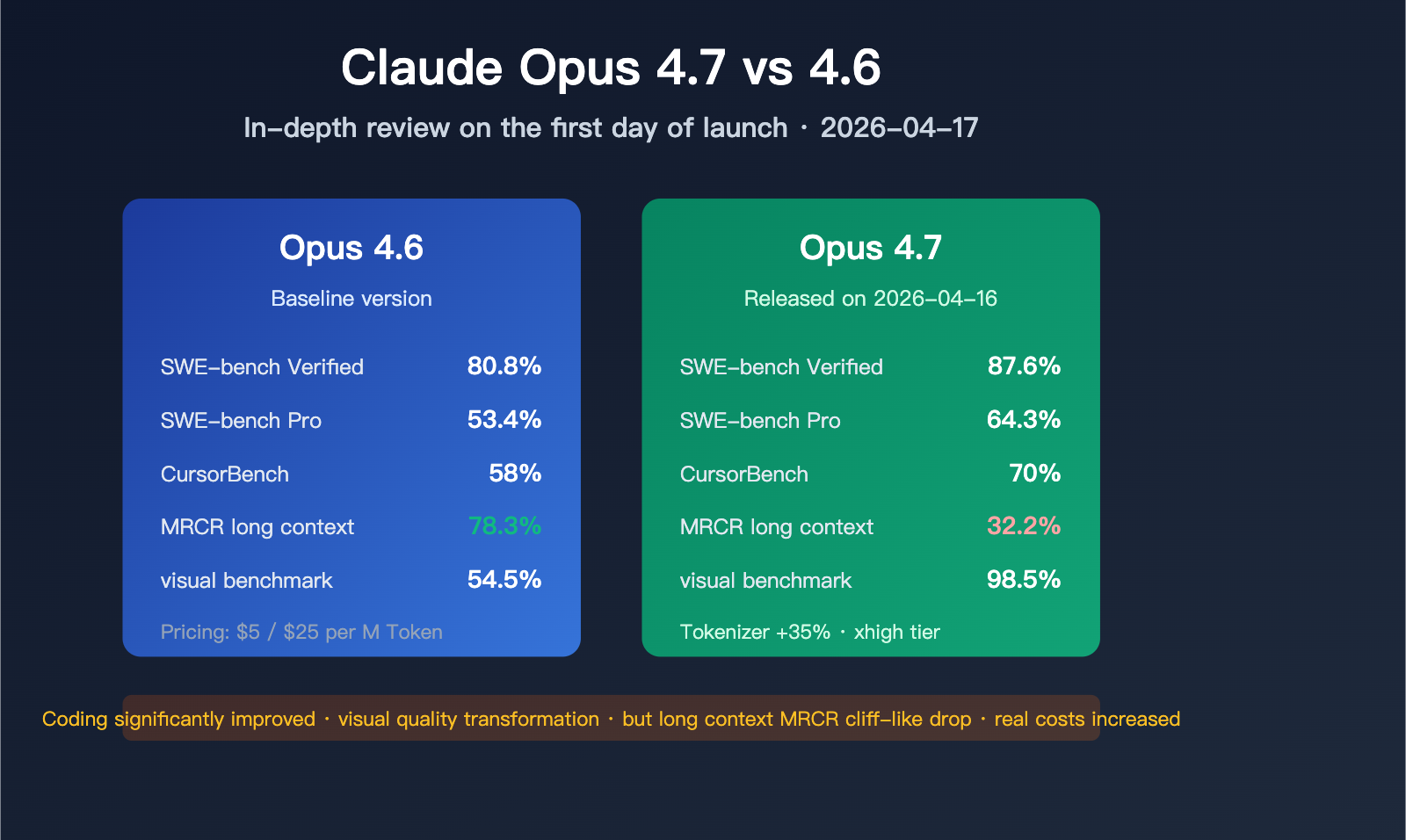

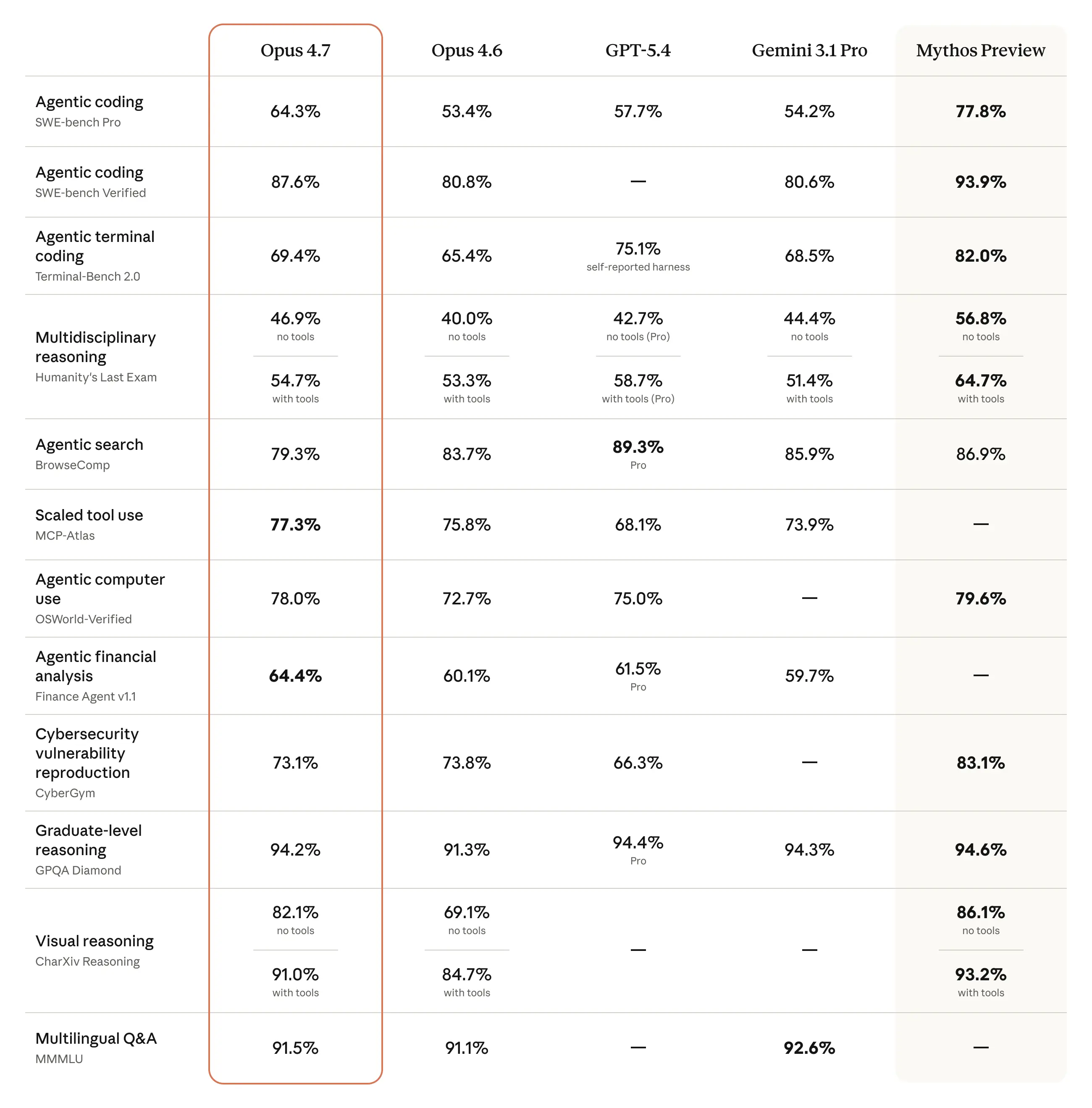

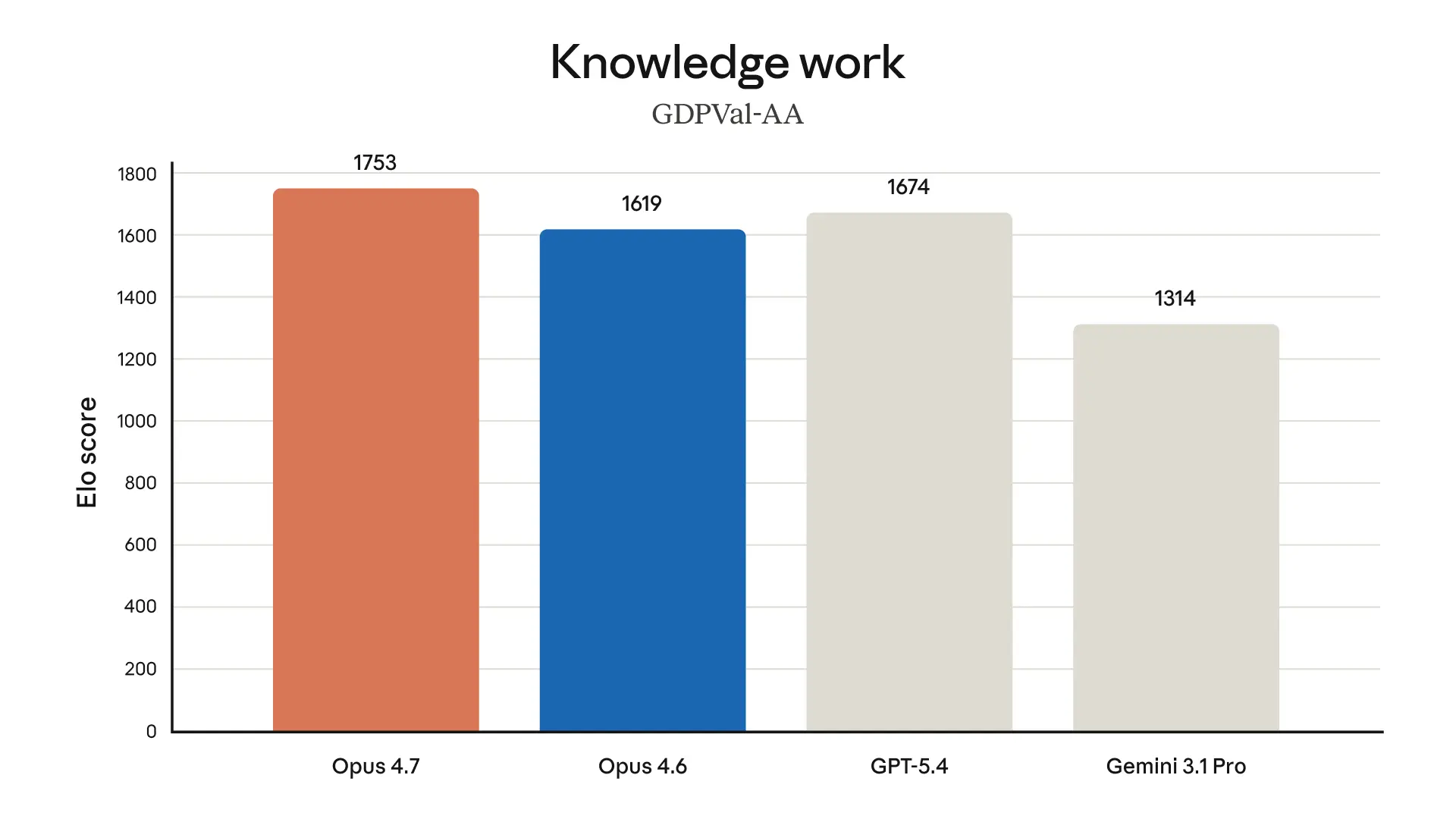

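- The evidence for Claude Opus 4.7's strengths is concentrated in vision and computer-use workflows. Anthropic's official documentation says the release brings performance gains on vision-heavy workloads, particularly for computer use and screenshot, artifact, and document understanding workflows, and that mapping coordinates to images is now simpler. VentureBeat adds that resolution is three times that of the previous generation, and its headline describes the model as narrowly retaking the lead among generally available LLMs.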

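- DeepSeek V4-Pro has the most explicit benchmark-related competitive claims, but they come mainly from company statements relayed by the media. Reports say it clearly leads other open-source models on world knowledge benchmarks and trails the top closed-source models only slightly; an analyst also argues, based on its benchmark profile, that it may deliver strong agent capabilities at lower cost.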

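- Kimi K2.6 can currently only be confirmed as Moonshot AI's native multimodal agentic model, with Day 0 support on Workers AI as of 2026-04-20; the evidence you provided contains no comparable benchmark scores for it.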

Research answer

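Based solely on the evidence you provided, a rigorous and fair overall benchmark ranking of GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 is not yet possible: the material does not include complete scores on the same benchmarks under the same version settings, and it contains no benchmark data for GPT-5.5 at all. The conclusions that hold up better are these. Claude Opus 4.7 has the clearest official evidence of marked improvement in vision and computer-use workflows,[2] and secondary reporting says it has narrowly retaken the lead among generally available LLMs.[5] DeepSeek V4-Pro has company claims, relayed by the media, that it clearly leads other open-source models on world knowledge benchmarks.[6] Kimi K2.6 has only product-positioning and launch information in the current evidence and lacks directly comparable benchmark results.[1]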

Key findings

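- No credible overall ranking of the four models is possible: the material you provided contains no shared benchmark scores and no benchmark evidence at all for GPT-5.5.

- The evidence for Claude Opus 4.7's strengths is concentrated in vision and computer-use workflows. Anthropic's official documentation says the release brings performance gains on vision-heavy workloads, particularly for computer use and screenshot, artifact, and document understanding workflows, and that mapping coordinates to images is now simpler.[2] VentureBeat adds that resolution is three times that of the previous generation, and its headline describes the model as narrowly retaking the lead among generally available LLMs.[5]

- DeepSeek V4-Pro has the most explicit benchmark-related competitive claims, but they come mainly from company statements relayed by the media. Reports say it clearly leads other open-source models on world knowledge benchmarks and trails the top closed-source models only slightly; an analyst also argues, based on its benchmark profile, that it may deliver strong agent capabilities at lower cost.[6][7]

- Kimi K2.6 can currently only be confirmed as Moonshot AI's native multimodal agentic model, with Day 0 support on Workers AI as of 2026-04-20; the evidence you provided contains no comparable benchmark scores for it.[1]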

Confirmed facts

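- Kimi K2.6 became available on Workers AI on 2026-04-20; Cloudflare says this is Day 0 support in partnership with Moonshot AI, and the model is described as a native multimodal agentic model.[1]

- Anthropic's official documentation says the changes in Claude Opus 4.7 bring performance gains on vision-heavy workloads and particularly help computer use and screenshot, artifact, and document understanding workflows; mapping coordinates to images has also become simpler.[2]

- VentureBeat reports that Claude Opus 4.7 delivers a three-fold increase in resolution over the previous generation, and its headline describes the model as narrowly retaking the lead as the "most powerful generally available LLM".[5]

- Media reports say DeepSeek offers two versions, DeepSeek V4-Pro and DeepSeek V4-Flash; V4-Pro is described as clearly leading other open-source models on world knowledge benchmarks and trailing the top closed-source models only slightly.[6]

- CNBC reports that DeepSeek V4 has been optimized for agent tools such as Claude Code and OpenClaw; Wei Sun of Counterpoint argues that its benchmark profile suggests it may deliver excellent agent capabilities at lower cost.[7]

- A Hugging Face discussion requests community evaluation results for DeepSeek-V4-Pro on GPQA, GSM8K, HLE, MMLU-Pro, SWE-Bench Pro, SWE-Bench Verified, Terminal-Bench 2.0, and other benchmarks.[4]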

What remains inference

-

把 Claude Opus 4.7 判定為四者整體第一,仍屬推論;現有證據沒有同一組 benchmark 的分數表可直接支持這個結論。[

2][

5]

-

把 DeepSeek V4-Pro 判定為所有開源任務全面第一,也仍屬推論;目前可見的是媒體轉述的公司說法,缺少你提供證據中的原始分數表。[

6]

-

把 Kimi K2.6 放在任何明確名次,幾乎純屬猜測;目前只知道它的產品定位,沒有硬 benchmark 成績。[

1]

-

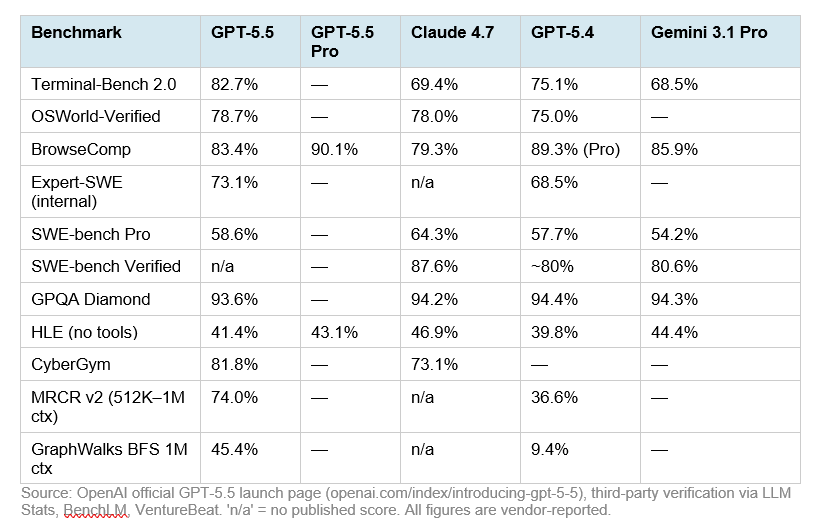

對 GPT-5.5 作任何 benchmark 結論都沒有證據基礎,因為提供材料裡沒有它的 benchmark 資料。

What the evidence suggests

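- For vision and computer-use tasks alone, Claude Opus 4.7 has the strongest evidence: it is the only model whose official documentation explicitly claims performance gains for these workflows, and secondary reporting adds detail on the resolution increase.[2][5]

- Judged by the most explicit competitive benchmark description in the available material, DeepSeek V4-Pro stands out on world knowledge benchmarks: it is the only model directly described as clearly leading other open-source models.[6]

- On agent tooling and cost-effectiveness, DeepSeek V4 also has relatively clear external analysis behind it: reports mention optimization for Claude Code and OpenClaw, and an analyst infers a strong ratio of agent capability to cost from its benchmark profile.[7]

- Kimi K2.6 appears to be positioned around multimodal and agentic capabilities, but this evidence set lacks enough benchmark data for a quantitative comparison with Claude or DeepSeek.[1]

- Overall ranking of the four models: Insufficient evidence.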

Conflicting evidence or uncertainty

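- The biggest uncertainty is not "who wins" but "whether comparable data exists": no scores have been seen for the four models on the same benchmark, at the same version, under the same prompt or tool settings.

- The "leading" narrative for Claude comes mainly from the overview and headline of secondary reporting, not from an original official benchmark table in the evidence you provided.[5]

- DeepSeek's strongest benchmark claim is a company statement relayed by the media, so it is less credible than an official technical report or an independent third-party evaluation.[6]

- Kimi K2.6's benchmark capability is almost a blank in this evidence set, so its scores cannot be inferred from product descriptions or launch speed.[1]

- A Reddit post warns that the SWE-bench leaderboard may mix different versions and different benchmark tasks, which suggests cross-leaderboard comparisons can be distorted; this is a low-authority source, though, and serves at most as a weak caution.[65]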
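The comparability concern above can be made concrete with a small sketch. It assumes each reported score is recorded together with its benchmark, version, and tool setting. The `Result` fields, the `comparable_groups` helper, and the placeholder names (`model-a`, `bench-x`) and scores are all hypothetical illustrations, not data from any cited source. The code groups scores by setting and keeps only the settings in which every model of interest has a score:

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    model: str
    benchmark: str
    version: str         # benchmark version or split
    tools_allowed: bool  # whether tools/browsing were permitted
    score: float


def comparable_groups(results, required_models):
    """Map (benchmark, version, tools_allowed) -> {model: score},
    keeping only settings where every required model has a score."""
    groups = defaultdict(dict)
    for r in results:
        groups[(r.benchmark, r.version, r.tools_allowed)][r.model] = r.score
    required = set(required_models)
    return {key: scores for key, scores in groups.items()
            if required <= set(scores)}


# Placeholder records (hypothetical names and values, not real results).
results = [
    Result("model-a", "bench-x", "v1", False, 0.50),
    Result("model-b", "bench-x", "v1", False, 0.60),
    Result("model-a", "bench-y", "v1", True, 0.70),
    Result("model-b", "bench-y", "v2", True, 0.65),  # different version
]
groups = comparable_groups(results, ["model-a", "model-b"])
```

Here only `bench-x` at `v1` without tools survives, because `bench-y` was reported at different versions for the two models. An empty result for the four models in question would correspond to the "Insufficient evidence" verdict.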

Open questions

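- What are GPT-5.5's scores on MMLU-Pro, GPQA, HLE, SWE-Bench Verified/Pro, Terminal-Bench 2.0, and multimodal benchmarks?

- Do Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 have a benchmark table at matching versions that uses consistent tool permissions, context length, temperature, and scoring rules?

- Which specific tests make up DeepSeek V4-Pro's "world knowledge benchmarks", what are the raw scores, and which models were used for comparison?[6]

- Does Kimi K2.6 have independent third-party benchmarks yet, beyond launch and product-positioning information?[1]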

Sources worth trusting most

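- [2] is Anthropic's official documentation and is the most credible source on Claude Opus 4.7's product-level capability changes and applicable workflows.[2]

- [1] is Cloudflare's official changelog and is the most credible source on Kimi K2.6's launch date, availability, and product positioning.[1]

- [6] and [7] are relatively reliable media reports, suitable for understanding DeepSeek's public benchmark narrative and external analysis, but they remain secondary sources.[6][7]

- [5] is useful as a reference for Claude Opus 4.7's competitive positioning, but for hard benchmarks it is weaker than official technical documentation.[5]

- [4] only shows that the community is filling in evaluation results for DeepSeek-V4-Pro; it cannot be treated as final benchmark evidence.[4]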
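The source weighting above can be sketched as a simple tier lookup. This is a minimal illustration, assuming a three-level scale (official, media, community) that simplifies the distinctions drawn in the list; the `Tier` enum, the `SOURCES` mapping, and `best_tier` are hypothetical names introduced here, and the tier assigned to each citation label follows the descriptions given in the list:

```python
from enum import IntEnum


class Tier(IntEnum):
    OFFICIAL = 0   # vendor documentation or changelog
    MEDIA = 1      # secondary reporting
    COMMUNITY = 2  # forum threads and discussions


# Citation labels as characterized in the list above (assumed mapping).
SOURCES = {
    "[1]": Tier.OFFICIAL,
    "[2]": Tier.OFFICIAL,
    "[5]": Tier.MEDIA,
    "[6]": Tier.MEDIA,
    "[7]": Tier.MEDIA,
    "[4]": Tier.COMMUNITY,
}


def best_tier(citations):
    """Return the strongest tier among a claim's citations, or None if
    the claim cites nothing that appears in SOURCES."""
    tiers = [SOURCES[c] for c in citations if c in SOURCES]
    return min(tiers) if tiers else None
```

For example, `best_tier(["[2]", "[5]"])` returns `Tier.OFFICIAL`, while a claim backed only by `"[6]"` resolves to `Tier.MEDIA`, which is a quick way to flag conclusions that rest on relayed company statements alone.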

Recommended next step

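- For a truly defensible comparison, the next step is to collect only raw score tables for the four models on shared benchmarks, prioritizing MMLU-Pro, GPQA, HLE, SWE-Bench Verified/Pro, Terminal-Bench 2.0, and multimodal evaluations, and to require every result to state whether tools, browsing, test-time compute, and differing decoding settings were allowed.

- Under the current evidence, the safest provisional conclusion is: Claude Opus 4.7 has the strongest evidence for vision/computer-use capability,[2][5] DeepSeek V4-Pro has the strongest world knowledge benchmark narrative among open-source comparisons,[6] and neither Kimi K2.6 nor GPT-5.5 has enough evidence to be included in a fair ranking.[1]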

Summary

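On the current evidence, no reliable overall benchmark ranking of GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 is possible. The more reliable partial conclusions are: Claude Opus 4.7 has the strongest positive evidence in vision/computer-use scenarios,[2][5] DeepSeek V4-Pro has the most explicit claim of leading open-source benchmarks,[6][7] Kimi K2.6 lacks benchmark data,[1] and GPT-5.5 has no usable data at all. For a strict comparison, the most honest answer at this stage is: Insufficient evidence.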

Sources

- [1] What's new in Claude Opus 4.7 - Claude API Docs (platform.claude.com)

What's new in Claude Opus 4.7 - Claude API Docs Loading... . This change should unlock performance gains on vision-heavy workloads, and is particularly important for computer use and screenshot/artifact/document understanding workflows. Additionally, operations like mapping coordinates to images are now simpler — the model's coordinates are 1:1 with actual pixels, so there's no scale-factor math required. High-res images use more tokens. If the additional image fidelity is unnecessary, downsample images before sending to Claude to avoid token-usage increases. Beyond resolution, Claude Opus…

- [2] Anthropic releases Claude Opus 4.7, narrowly retaking lead for most ... (venturebeat.com)

This represents a three-fold increase in resolution compared to previous iterations. For developers building "computer-use" agents that must navigate dense, high-DPI interfaces or for analysts extracting data from intricate technical diagrams, this change effectively removes the "blurry vision" ceiling that previously limited autonomous navigation. This visual acuity is reflected in benchmarks from XBOW, where the model jumped from a 54.5% success rate in visual-acuity tests to 98.5%. On the benchmark front, Opus 4.7 has claimed the top spot in several critical categories: [...] But also, it'…

- [3] Claude Opus 4.7 Benchmark Breakdown: Vision, Coding, and ... (mindstudio.ai)

Claude Opus 4.7 posted 82.4% on SWE-bench Verified, up roughly 11 points from Opus 4.6 — the most meaningful coding benchmark available. Vision improvements were the largest percentage gains: MathVista jumped 9.5 points, enabling reliable visual math reasoning and structured chart interpretation. FinanceBench performance of 82.7% makes Opus 4.7 a strong choice for financial document analysis, handling most standard extraction and calculation tasks accurately. Opus 4.7 leads GPT-5.4 and Gemini 3.1 Pro across all five core benchmarks in this breakdown, with the most meaningful gap on SWE-bench.…

- [4] Claude Opus 4.7: Pricing, Benchmarks & Context Window (almcorp.com)

For coding, the official materials point to several standout numbers. Anthropic says Opus 4.7 improved resolution by 13% over Opus 4.6 on a 93-task coding benchmark. AWS cites 64.3% on SWE-bench Pro, 87.6% on SWE-bench Verified, and 69.4% on Terminal-Bench 2.0. Anthropic also highlights gains such as 70% on CursorBench versus 58% for Opus 4.6, along with reports that the model resolves more production-grade tasks in internal and partner evaluations. Those numbers do not mean every coding workflow becomes 13% or 20% better overnight. What they do suggest is that Opus 4.7 is moving up where adv…

- [5] Introducing Claude Opus 4.7 (anthropic.com)

> Claude Opus 4.7 feels like a real step up in intelligence. Code quality is noticeably improved, it’s cutting out the meaningless wrapper functions and fallback scaffolding that used to pile up, and fixes its own code as it goes. It’s the cleanest jump we’ve seen since the move from Sonnet 3.7 to the Claude 4 series.
>
> Ben Lafferty, Senior Staff Engineer

> For the computer-use work that sits at the heart of XBOW’s autonomous penetration testing, the new Claude Opus 4.7 is a step change: 98.5% on our visual-acuity benchmark versus 54.5% for Opus 4.6. Our sin…

- [6] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safety (mashable.com)

Claude Mythos scored 56.8 percent on HLE; Claude Opus 4.7 scored 46.9 percent; Gemini 3.1 Pro scored 44.4 percent; GPT-5-4 Pro scored 42.7 percent; Claude Opus 4.6 scored 40.0 percent. With tools, GPT-5-4-Pro scored 58.7 percent compared to Opus 4.7’s 54.7 percent. Mythos beat them both with 64.7 percent.

- [7] Anthropic’s Claude Opus 4.7 Is Here, and It’s Already Outperforming Its Competitiors - Ohio Society of Association Professionals (ohiosap.org)

This comes shortly after Anthropic launched Claude Opus 4.6 in February. And the model is "less broadly capable" than its most recent offering, Claude Mythos Preview. But at this time Anthropic has no plans to release Claude Mythos Preview to the general public. It says the effort is aimed at understanding how models of that caliber could eventually be deployed at scale. How Does Opus 4.7 Compare? According to The Next Web, the most striking gains are in software engineering: on SWE-bench Pro, an AI evaluation benchmark, Opus 4.7 scored 64.3 percent—up from 53.4 percent on Opus 4.6 and ahead…

- [8] Claude Opus 4.7 (anthropic.com)

Enterprise workflows Opus 4.7 sets the standard for enterprise workflows, carrying context across sessions to manage complex, multi-day projects end-to-end with professional polish and strong performance on spreadsheets, slides, and docs. ## Benchmarks Claude Opus 4.7 is our most capable, generally available model, performing at the frontier across coding, agentic, and knowledge work capabilities. Image 3 ## Trust and safety Extensive testing and evaluation ensures the release of Opus 4.7 meets Anthropic’s standards for safety, security, and reliability. The accompanying model card covers…

- [9] Claude Opus 4.7 Benchmarks Explained (vellum.ai)

Coding is the clear headline. SWE-bench Verified jumps from 80.8% to 87.6%, a nearly 7-point gain that puts Opus 4.7 ahead of Gemini 3.1 Pro (80.6%). On SWE-bench Pro, the harder multi-language variant, Opus 4.7 goes from 53.4% to 64.3%, leapfrogging both GPT-5.4 (57.7%) and Gemini (54.2%). Computer use takes a meaningful step. OSWorld-Verified climbs from 72.7% to 78.0%, ahead of GPT-5.4 (75.0%) and within 1.6 points of Mythos Preview (79.6%). Pair that with the 3x vision resolution upgrade and you have a model that's genuinely more capable at real UI interaction. Tool use is best-in-class.…

- [10] Claude Opus 4.7: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)


- [11] Anthropic Promised Claude Opus 4.7 Would Change ... - Towards AI (pub.towardsai.net)

Anthropic Promised Claude Opus 4.7 Would Change Everything. Here’s What Actually Happened. By Adi Insights and Innovations, Apr 2026, Towards AI. Member-only story. _The benchmarks say it’s th…

- [12] Claude Opus 4.7 results: early benchmarks, real-world feedback ... (boringbot.substack.com)

The Claude Opus 4.7 benchmarks on software engineering tasks show the clearest improvement. On SWE-Bench, the industry-standard benchmark for evaluating autonomous code repair across real GitHub issues, Opus 4.7 shows a meaningful step up from Opus 4.6, with early reported scores suggesting improvements in the range of 8–12 percentage points depending on task category (Source: community-reported testing via r/ClaudeAI and independent evaluations). On HumanEval, which tests functional code generation, Opus 4.7 continues to perform competitively. [...] Definitive head-to-head benchmarks for the…

- [13] Official Benchmark of Anthropic's latest frontier model, ... (facebook.com)

Creator Inside: Official Benchmark of Anthropic's latest frontier model, Claude Opus 4.7

- [14] Claude Opus 4.7 benchmarks : r/singularity - Reddit (reddit.com)

How can there be a benchmark if the model is not even out not on API or the App? ILikeAnanas. • 9d ago · https://www.anthropic.com/news/claude-

- [15] Claude Opus 4.7 benchmarked 1 day after release vs Opus 4.6 ... (reddit.com)

Claude Opus 4.7 (high) unexpectedly performs significantly worse than Opus 4.6 (high) on the Thematic Generalization Benchmark: 80.6 → 72.8.

- [16] Claude Opus 4.7 is a downgrade - Medium (medium.com)

I've been running benchmark evaluations on Opus 4.7 over the past few days, and almost all of the results are worse than the old Opus 4.6.

- [17] Notes on moving to Opus 4.7 for an AI SRE : r/Anthropic - Reddit (reddit.com)

Models are already smart enough: this model is obviously better and does improve our performance, but we only saw an uplift of 75% -> 81%

- [18] Claude Opus 4.7 Full Breakdown + Testing Results : r/PostAI - Reddit (reddit.com)

4.5K subscribers in the PostAI community. Welcome to PostAI, a dedicated community for all things artificial intelligence.

- [19] GPT-5.5: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

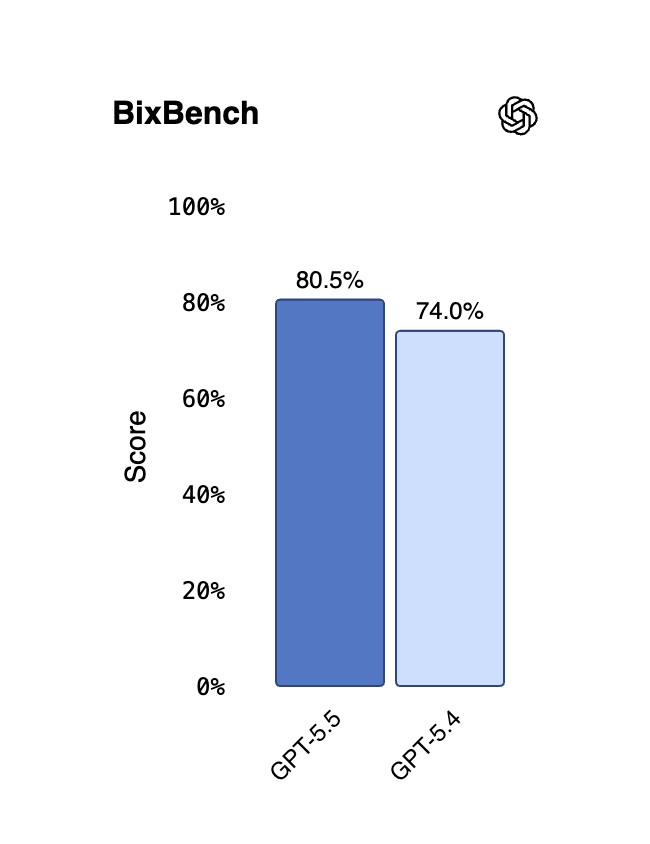

GPT-5.5 (OpenAI, Apr 2026, proprietary). GPT-5.5 is OpenAI's smartest model yet, designed for real work across agentic coding, computer use, knowledge work, and early scientific research. It matches GPT-5.4 per-token latency in real-world serving while reaching a much higher...m…

- [20] I put GPT-5.5 through a 10-round test: It scored 93/100, losing points only for exuberance | ZDNET (zdnet.com)

Overall test results Overall, the tests can reward up to 100 points. The current version, GPT-5.5, scored 93. GPT 5.2 scored 92. GPT-5.1 scored 91. You might think this latest build would do better than a point or two improvement over the previous versions, but the model's own overeagerness brought it down. On the first test, the one asking about current news, I asked the AI to summarize one source. Instead, it looked for the same news from six separate sources. It overreached and lost points. [...] Available points: 10 Awarded points: 10 This test is among the most fun in the entire quest…

- [21] Introducing GPT-5.5 (openai.com)

Long context

| Eval | GPT-5.5 | GPT‑5.4 | GPT-5.5 Pro | GPT‑5.4 Pro | Claude Opus 4.7 | Gemini 3.1 Pro |
|---|---|---|---|---|---|---|
| Graphwalks BFS 256k f1 | 73.7% | 62.5% | - | - | 76.9% | - |
| Graphwalks BFS 1mil f1 | 45.4% | 9.4% | - | - | 41.2% (Opus 4.6) | - |
| Graphwalks parents 256k f1 | 90.1% | 82.8% | - | - | 93.6% | - |
| Graphwalks parents 1mil f1 | 58.5% | 44.4% | - | - | 72.0% (Opus 4.6) | - |
| OpenAI MRCR v2 8-needle 4K-8K | 98.1% | 97.3% | - | - | - | - |
| OpenAI MRCR v2 8-needle 8K-16K | 93.0% | 91.4% | - | - | - | - |
| OpenAI MRCR v2 8-needle 16K-32K | 96.5% | 97.2% | - | - | - | - |
| OpenAI MRCR v2 8-needle 32K-64K | 90.0% | 90.5% | - | - | - | - |
| OpenAI MRCR v2 8-needle 64K-128K | 83.1% | 86.0% | - | - | - | - |
| OpenAI MRCR v2 8-needle 128K-256K | 87.5% | 79.3% | - | - | 59.2% | - |

OpenAI MRCR v2 8-needle 256K…

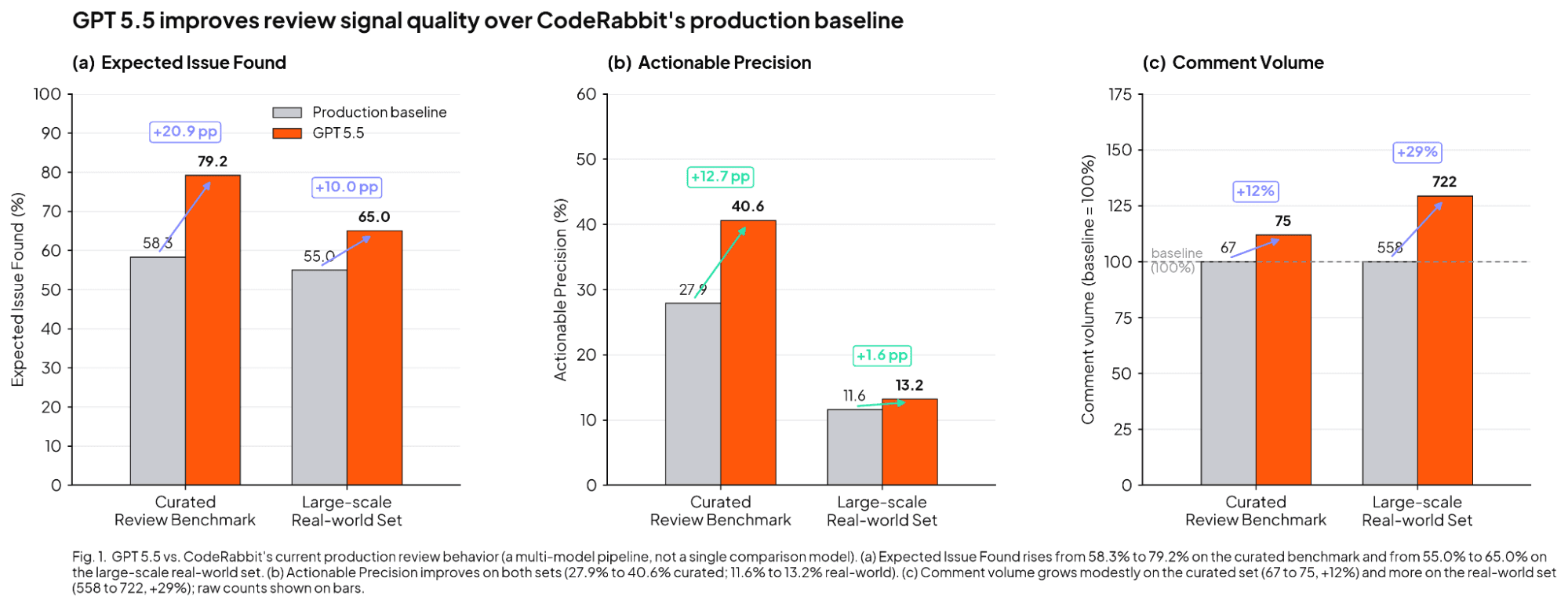

- [22] OpenAI GPT-5.5 Benchmark (CodeRabbit) (coderabbit.ai)

In our early testing with GPT-5.5, the agent reached 79.2% expected issue found on our curated review benchmark versus 58.3%, improved precision from 27.9% to 40.6%, and produced 75 comments versus the baseline's 67. That means it found substantially more useful issues with only a modest increase in comment volume. In testing, GPT-5.5 improved performance across several key metrics on our large-scale, real-world review set. Specifically, the expected issue found rate increased from a 55.0% baseline to 65.0%. Furthermore, precision improved from 11.6% to 13.2%. The agent also became more verbo…

- [23] OpenAI GPT-5.5: an evaluation - Sonar (sonarsource.com)

GPT-5.5 is the latest model from OpenAI, and it delivers huge improvements in a key area: security. In fact, its security numbers are some of the best we’ve seen. Vulnerability density is low, consistent across runs, and flat across severity levels. That's the headline. But, as with all models, there’s a more nuanced, complex story when we dig below the surface. We ran GPT-5.5 through Sonar's LLM evaluation framework, which is designed to measure LLM-generated code against the same rules as a developer-written codebase. ## What was measured Model: GPT-5.5 Language: Java Benchmark: 4,444 tasks…

- [24] OpenAI's GPT-5.5 is the new leading AI model - Artificial Analysis (artificialanalysis.ai)

Image 2 OpenAI leads five of our headline evaluations and places second to Gemini 3.1 Pro Preview on three. Image 3 Effort variants provide a clear ladder to balance intelligence and cost. GPT-5.5 (xhigh) is ~20% more expensive to run our Index than its predecessor, but 30% cheaper than Claude Opus 4.7 (max) Image 4 GPT-5.5 (xhigh) uses ~40% fewer output tokens to run our Index than its predecessor Image 5 GPT-5.5 (xhigh) leads GDPval-AA with an Elo of 1785 Image 6 GPT-5.5 (xhigh) records our highest ever AA-Omniscience accuracy score but trails the frontier on hallucination See Artificial An…

- [25] OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark ... (interestingengineering.com)

— OpenAI (@OpenAI) April 23, 2026 OpenAI said the improvements go beyond benchmarks. Early testers reported that GPT-5.5 better understands system architecture and failure points. It can identify where fixes belong and predict downstream impacts across a codebase. The company emphasized efficiency alongside capability. GPT-5.5 matches GPT-5.4’s per-token latency despite higher intelligence. It also uses fewer tokens to complete the same tasks, lowering computational cost. “GPT-5.5 delivers this step up in intelligence without compromising on speed,” OpenAI noted. It added that the model perfo…

- [26] GPT-5.5 System Card - OpenAI Deployment Safety Hub (deploymentsafety.openai.com)

| evaluation | GPT-5 | GPT-5.1 | GPT-5.2 | GPT-5.4 | GPT-5.5 |
|---|---|---|---|---|---|
| HealthBench length-adjusted | 57.7 (63.1, 2904) | 50.9 (64.2, 4222) | 56.8 (60.7, 2645) | 54.0 (55.7, 2275) | 56.5 (58.4, 2313) |
| HealthBench Hard length-adjusted | 34.7 (41.6, 2880) | 25.4 (41.4, 4049) | 34.3 (38.9, 2585) | 29.1 (30.3, 2161) | 31.5 (33.8, 2289) |
| HealthBench Consensus length-adjusted | 95.6 (96.0, 2880) | 95.0 (95.8, 4171) | 94.4 (94.7, 2615) | 96.3 (96.4, 2238) | 95.6 (95.7, 2259) |
| HealthBench Professional length-adjusted | 46.2 (51.0, 3616) | 39.6 (48.0, 4863) | 45.9 (50.0, 3400) | 48.1 (51.9, 3308) |…

- [27] OpenAI releases GPT-5.5 with improved coding and research capabilities (ca.finance.yahoo.com)

OpenAI releases GPT-5.5 with improved coding and research capabilities. Louis Juricic, 1 min read. Investing.com -- OpenAI announced Thursday the release of GPT-5.5, its latest AI model now available to Plus, Pro, Business, and Enterprise users through ChatGPT and Codex platforms. The model achieved 82.7% accuracy on Terminal-Bench 2.0, which tests command-line workflows, and 58.6% on SWE-Bench Pro, which evaluates GitHub issue resolution, according to benchmark results provided…

- [28] OpenAI's GPT-5.5: Benchmarks, Safety Classification, and Availability (datacamp.com)

GPT-5.5 Benchmark Results Here is what I think is the most interesting model eval from the release. It is a measure of long-context performance. These benchmarks evaluate an underlying question: If I hand you a massive amount of text, can you still reason over it? The MRCR needle tests are essentially a "needle in a haystack" test, hence the name. The model is given a long document with specific pieces of info - "needles" - hidden inside it, then asked to retrieve them - the needles. We see the test done multiple times with different context sizes. The higher the score, the more the model…

- [29] GPT-5.5 Is 'Our Smartest Model Yet,' Says Company With History of ... (mediacopilot.ai)

OpenAI said GPT-5.5 outperformed its predecessor on every major coding and agent benchmark the company tested, while using fewer tokens and running at the same speed as the older model. On one third-party coding index, it matched leading rivals at about half the cost. Keeping a larger model that fast required rebuilding inference as a single system rather than a patchwork of tweaks, the company said. GPT-5.5 was designed, trained and served on NVIDIA’s latest hardware, and OpenAI credited its own Codex tool and GPT-5.5 itself with helping hit the efficiency targets. Early testers told the com…

- [30] OpenAI unveils GPT-5.5, claims a "new class of intelligence" at ... (the-decoder.com)

So far, OpenAI has only shared GPT-5.5 Pro benchmark results for three of nine tests: BrowseComp, FrontierMath Tier 1-3, and FrontierMath Tier 4. It beats the base model in all three. ## Cybersecurity capabilities rated "High" OpenAI classifies the biological, chemical, and cybersecurity capabilities of GPT-5.5 as "High" in its Preparedness Framework, the same rating as its recent predecessors, but not "Critical." The model shows improved cybersecurity performance compared to GPT-5.4, scoring 81.8 percent on the CyberGym benchmark (versus 79.0 percent) and 88.1 percent on internal capture-the…

- [31] OpenAI Just Dropped GPT-5.5 and Says the New Model Intuits What ... (inc.com)

According to the company's official blog, GPT-5.5 outperformed or tied with human workers on about 85 percent of the benchmarked tasks, compared

- [32] OpenAI GPT-5.5 meaningfully advances enterprise content use cases (blog.box.com)

In a head-to-head comparison against GPT 5.4, GPT 5.5 achieved a 10-percentage-point lead in overall agent accuracy, scoring 77% against 67%

- [33] OpenAI Releases GPT-5.5 With State-of-the-Art Scores on Coding, Science, and Computer Use (linkedin.com)

On Terminal-Bench 2.0, which measures complex command-line workflows requiring planning and tool coordination, GPT-5.5 reaches 82.7%, up from GPT-5.4's 75.1%. On OSWorld-Verified, which tests whether a model can operate real computer interfaces without human guidance, it hits 78.7%, compared to 75.0% for GPT-5.4. The model achieves these results while using fewer output tokens on both benchmarks, a detail that matters for the economics of deploying AI at scale. ### The Coding Case [...] ### What This Adds Up To Taken together, the benchmark results and early-access accounts describe a model t…

- [34] We Tested GPT-5.5 for 3 Weeks. It's a Beast. - YouTube (youtube.com)

GPT-5.5 Just Beat Claude Opus 4.7 at Engineering Image 7 Every Every 37.1K subscribers Subscribe Subscribed 528 Share Save Download Download 16,312 views 7 hours ago 16,312 views • Apr 23, 2026 OpenAI just dropped GPT-5.5—and after three weeks of hands-on testing at Every, the headline is its coding ability. On Every's Senior Engineer Benchmark, GPT-5.5 scored 62.5 out of 100. That’s about a 30-point leap over Claude Opus 4.7.…...more ...more How this was made Auto-dubbed Audio tracks for some languages were automatically generated. Learn more ## Chapters View all Image 8 #### It's Model Re…

- [35] GPT-5.5 benchmark results have been released : r/singularity - Redditreddit.com

GPT-5.5 benchmark results have been released · It got 73.1% on Expert SWE though!

- [36] Moonshot AI Kimi K2.6 now available on Workers AIdevelopers.cloudflare.com

Moonshot AI Kimi K2.6 now available on Workers AI. Apr 20, 2026. Workers AI.

`@cf/moonshotai/kimi-k2.6` is now available on Workers AI, in partnership with Moonshot AI for Day 0 support. Kimi K2.6 is a native multimodal agentic model from Moonshot AI that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration. Built on a Mixture-of-Experts architecture with 1T total parameters and 32B active per token, Kimi K2.6 delivers frontier-scale intelligence with efficient i…

- [37] [AINews] Moonshot Kimi K2.6: the world's leading Open Model ...latent.space

Two benchmark-adjacent research items stood out. Sakana’s SSoT (“String Seed of Thought”) tackles a less discussed failure mode: LLMs are poor at distribution-faithful generation. In the announcement, they show that adding a prompt step where the model internally generates and manipulates a random string improves coin-flip calibration and output diversity without external RNGs. And Skill-RAG, summarized by @omarsar0, uses hidden-state probing to detect impending knowledge failures and only then invoke the right retrieval strategy—moving RAG from unconditional retrieval to failure-aware retrie…

- [38] Kimi K2.6 - Intelligence, Performance & Price Analysisartificialanalysis.ai

Kimi K2.6 scores 54 on the Artificial Analysis Intelligence Index, placing it well above average among comparable models (averaging 28). When evaluating the Intelligence Index, it generated 160M tokens, which is very verbose in comparison to the average of 41M. Pricing for Kimi K2.6 is $0.00 per 1M input tokens (competitively priced, average: $0.55) and $0.00 per 1M output tokens (competitively priced, average: $1.58). In total, it cost $0.00 to evaluate Kimi K2.6 on the Intelligence Index. ### Technical specifications [...] ### How many parameters does Kimi K2.6 have? Kimi K2.6 has 1 trillio…

- [39] Kimi K2.6 Has Arrived: An Open-Weight Powerhouse for Agentic Workblog.kilo.ai

Ready to put these impressive stats to the test in your own workspace? Kimi K2.6 is available to use now in the Kilo Gateway. That means you can use it wherever you use Kilo: in the Kilo CLI, our VS Code and JetBrains extensions, Hermes, KiloClaw (our hosted OpenClaw), and more. Experience the next evolution of open-source agentic intelligence today. Read more about the official model release and dive into the full technical benchmarks from Moonshot AI here: Kimi K2.6 Announcement.

- [40] Kimi K2.6 on GMI Cloud: Architecture, Benchmarks & API Accessgmicloud.ai

Kimi K2.6: Architecture, Benchmarks, and What It Means for Production AI. April 22, 2026. Moonshot AI just open-sourced Kimi K2.6, and the results speak for themselves. It tops SWE-Bench Pro, runs 300 parallel sub-agents, and fits on 4x H100s in INT4. Built for autonomous coding, agent orchestration, and full-stack design. What Kimi K2.6 Is: Kimi K2.6 is an open-source, native multimodal agentic model released by Moonshot AI on April 20, 2026, under a Modified MIT License. It is built for three things: long-horizon autonomous coding, coding-driven UI and full-stack design, and agent s…

- [41] Kimi K2.6 Review: Better Reasoning, 100-Agent Swarms (2026) | eesel AIeesel.ai

If you’re doing high-volume, multi-file refactors, the efficiency gains from the Muon optimizer (which Moonshot claims provides 2x computational efficiency over standard AdamW optimizers) make Kimi a very cost-effective choice for 2026. This Muon technical guide and the Muon research paper explain how this matrix-aware optimization cuts training costs by roughly 50%. Conclusion: Integrating Kimi into a Multi-Agent World. The release of Kimi K2.6 on April 13, 2026, marks a turning point for Moonshot AI. They are no longer just a "fast follower" to western labs; with the Agent Swarm and Muon-op…

- [42] Kimi K2.6: The new leading open weights model - Artificial Analysisartificialanalysis.ai

➤ Multimodality: Kimi K2.6 supports Image and Video input and text output natively. The model’s max context length remains 256k. Kimi K2.6 has significantly higher token usage than Kimi K2.5. Kimi K2.5 scores 6 on the AA-Omniscience Index, primarily driven by low hallucination rate. See Artificial Analysis for further details and benchmarks of Kimi K2.6.

- [43] MoonshotAI: Kimi K2.6 – Benchmarks - OpenRouteropenrouter.ai

MoonshotAI: Kimi K2.6 (moonshotai/kimi-k2.6). Released Apr 20, 2026 · 256,000 context · $0.7448/M input tokens · $4.655/M output tokens. Kimi K2.6 is Moonshot AI's next-generation multimodal model, designed for long-horizon coding, coding-driven UI/UX generation, and multi-agent orchestration. It handles complex end-to-end coding tasks across Python, Rust, and Go, and can convert prompts and visual inputs into production-ready interfaces. Its agent swarm architecture scales to hundreds of parallel sub-agents for autonomous task decomposition - delivering documents, websites, and spreadsheets in a si…

- [44] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

Kimi K2.6: Pricing, Benchmarks & Performance. Moonshot AI · Apr 2026 · Modified MIT License. Kimi K2.6 is Moonshot AI's open-source, native multimodal agentic model focused on state-of-the-art coding, long-horizon execution, and agent swarm capabilities. It scales horizontally to 300 sub-agents…

- [45] Moonshot AI Releases Kimi K2.6 with Long-Horizon Coding, Agent ...marktechpost.com

The Long-Horizon Coding Headline Numbers The metric that will likely get the most attention from dev teams is SWE-Bench Pro — a benchmark testing whether a model can resolve real-world GitHub issues in professional software repositories. Kimi K2.6 scores 58.6 on SWE-Bench Pro, compared to 57.7 for GPT-5.4 (xhigh), 53.4 for Claude Opus 4.6 (max effort), 54.2 for Gemini 3.1 Pro (thinking high), and 50.7 for Kimi K2.5. On SWE-Bench Verified it scores 80.2, sitting within a tight band of top-tier models. On Terminal-Bench 2.0 using the Terminus-2 agent framework, K2.6 achieves 66.7, compared…

- [46] MoonshotAI: Kimi K2.6 Reviewdesignforonline.com

Performance Indices (source: Artificial Analysis). This model was released recently. Independent benchmark evaluations are typically completed within days of release; these figures are preliminary and are likely to be updated as testing is finalised. Benchmark data from Artificial Analysis and Hugging Face. Model Information: OpenRouter ID: moonshotai/kimi-k2.6; Provider: moonshotai; Release Date: April 20, 2026; …

- [47] Matt Silverlock 🐀 on X: "very excited to see Kimi K2.6 land on Workers AI. benchmarks are benchmarks, but competitive open models drive all models forward. https://t.co/OnUmdLJyit" / Xx.com

Matt Silverlock 🐀 (@elithrar) on X: "very excited to see Kimi K2.6 land on Workers AI. benchmarks are benchmarks, but competitive open models drive all models forward." Quoted post from michelle (@michellechen), Apr 20: partnering with @Kimi_Moonshot to bring kimi k2.6 to @CloudflareDev w…

- [48] Moonshot AI releases Kimi K2.6 with long-horizon coding and agent ...facebook.com

Artificial Intelligence & Deep Learning | Moonshot AI Releases Kimi K2.6 with Long-Horizon Coding, Agent Swarm Scaling to 300 Sub-Agents and 4,000 Coordinated Steps. Summarized by AI from the post: An open, heterogeneous multi-agent ecosystem where humans and agents from any device, running any model, collaborate in a shared operational space, with K2.6 as the adaptive coordinator. Benchmarks: 54.0 on HLE-Full with tools (leads GPT-5.4, Claude Opus 4.6, G…

- [49] Moonshot AI Unveils Kimi K2.6, an Open-Weight Model Built for ...linkedin.com

According to Moonshot AI, K2.6 posts leading results across several major evaluations, placing it alongside GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. Reported scores include 54.0 on HLE with Tools, 58.6 on SWE-Bench Pro, and 83.2 on BrowseComp. Beyond raw scores, Moonshot AI is framing K2.6 as a system built for sustained, tool-heavy workflows: it can chain more than 4,000 tool calls and operate continuously for over twelve hours in languages such as Rust, Go, and Python. [...] Published Apr 20, 2026. Moonshot AI has released Kimi K2.6 as an open-weight model, p…

- [50] Better Kimi K2.6 benchmark score chart : r/ArtificialInteligence - Redditreddit.com

Artificial Analysis has released a more in-depth benchmark breakdown of Kimi K2 Thinking (2nd image) · r/LocalLLaMA - Artificial Analysis has

- [51] My Hands-On Review of Kimi K2 Thinking: The Open- ...reddit.com

For coding, it achieved high scores like 71.3% on SWE-Bench Verified, and I saw it generate functional games and fix bugs seamlessly. It's

- [52] Kimi K2.6 is Surprisingly GOOD! (Real World Tests and ...youtube.com

We tested the new Kimi K2.6 model extensively by building 6 real-world projects from single prompts — 3D building animations, space shooter

- [53] Last night, Moonshot AI dropped Kimi K2.6. - Saurabh khansaurabhkhan.medium.com

Moonshot built their own design benchmark, Kimi Design Bench, and tested it against Gemini 3. The K2.6 agent was noticeably ahead. It even

- [54] Kimi K2.6: Tested Open-Source Model – Full Review!youtube.com

Kimi K2.6: Tested Open-Source Model – Full Review! In this video, I put Kimi K2.6, the latest open-source AI model, to the test!

- [55] Reading the Traces: What Two Charts Tell Us About Kimi K2 ...aashi-dutt3.medium.com

Moonshot AI's Kimi K2.6 launch blog is full of the usual things: benchmark tables, partner testimonials, a license file.

- [56] Add community evaluation results for GPQA, GSM8K, HLE, MMLU ...huggingface.co

deepseek-ai/DeepSeek-V4-Pro · Add community evaluation results for GPQA, GSM8K, HLE, MMLU-PRO, SWE-BENCH_PRO, SWE-BENCH_VERIFIED, TERMINAL-BENCH-2.0. Model page tags: Text Generation, Transformers, Safetensors, deepseek_v4, Eval Results, 8-bit precision, fp8. License: mit. 2.12k likes.

- [57] China’s DeepSeek releases new AI model it claims beats all open-source competitorsau.finance.yahoo.com

The model is available as DeepSeek V4-Pro and DeepSeek V4-Flash. The latter version, the company says, is a “more efficient and economical choice”. “In world knowledge benchmarks, DeepSeek V4-Pro significantly leads other open-source models and is only slightly outperformed by the top-tier closed-source model Gemini-Pro-3.1,” the company noted, referring to Google's generative AI. DeepSeek V4-Pro comes with a “maximum reasoning effort mode”, which, the Chinese startup claims, “significantly advances the knowledge capabilities of open-source models, firmly establishing itself as the best open-…

- [58] China's DeepSeek releases preview of long-awaited V4 model as AI ...cnbc.com

DeepSeek also said that V4 has been optimized for use with popular agent tools such as Anthropic’s Claude Code and OpenClaw. According to Counterpoint’s principal AI analyst, Wei Sun, V4’s benchmark profile suggests it could offer “excellent agent capability at significantly lower cost.” [...] Similar to DeepSeek’s previous model releases, the latest upgrade is open-source, allowing developers to download the code, run it locally and modify it in most cases. The model is available in both a “pro” and a “flash” version, depending on size, with DeepSeek claiming that V4 achieves strong performa…

- [59] DeepSeek V4 (2026): 1T Parameters, 81% SWE-bench ... - NxCodenxcode.io

NxCode Team · 2026-03-12 · 12 min read. DeepSeek V4 is a ~1 trillion parameter Mixture-of-Experts model with only ~37B active parameters per token, a 1M-token context window powered by Engram conditional memory, and native multimodal generation (text, image, video). Leaked benchmarks claim 90% HumanEval and 80%+ SWE-bench Verified, which would match Claude Opus 4.6. Based on DeepSeek's current pricing, V4 is expected to remain dramatically cheaper than closed-source competitors, with weights planned for Apache 2.0 release. [...] The claimed results: | Metric | Standard Attention | Engram (DeepS…

- [60] DeepSeek V4 Guide: Engram Memory, Training Data Strategy ...kili-technology.com

DeepSeek V3's benchmark performance established that an open-weight model, trained at an economical cost, could match or exceed leading closed-source models. On coding benchmarks, it matched GPT-4 Turbo on HumanEval. On mathematical reasoning, it competed with Claude 3.5 Sonnet. Comprehensive evaluations across MMLU, ARC, and HellaSwag showed it within points of the best proprietary models. The lesson: training efficiency and model performance are not in tension. You don't need $100 million — you need to spend wisely on data composition, training stability, and post-training quality. ## How D…

- [61] DeepSeek V4 Preview Releaseapi-docs.deepseek.com

DeepSeek-V4 Preview Release, 2026/04/24. 🔹 Peak Efficiency: World-leading lo…

- [62] DeepSeek V4 Pro (Max) - Intelligence, Performance & Price Analysisartificialanalysis.ai

Artificial Analysis Intelligence Index v4.0 includes: GDPval-AA, 𝜏²-Bench Telecom, Terminal-Bench Hard, SciCode, AA-LCR, AA-Omniscience, IFBench, Humanity's Last Exam, GPQA Diamond, CritPt. See Intelligence Index methodology for further details, including a breakdown of each evaluation and how we run them. Price-Quality Variance: While higher intelligence models are typically more expensive, they do not all follow the same price-…

- [63] DeepSeek V4: Features, Benchmarks, and Comparisons - DataCampdatacamp.com

DeepSeek V4 Benchmarks: According to DeepSeek’s internal results, DeepSeek V4 demonstrates impressive performance, particularly when pushed to its maximum reasoning limits (DeepSeek-V4-Pro-Max). According to the official release notes, here is how the model stacks up against the wider industry. Knowledge and reasoning: Pro-Max easily outperforms other open-source models and beats older frontier models like GPT-5.2. It scores a highly competitive 87.5% on MMLU-Pro and 90.1% on GPQA Diamond, alongside a massive 92.6% on GSM8K for math. While it still trails the absolute bleeding edge (GPT-…

- [64] deepseek-ai/DeepSeek-V4-Pro - Hugging Facehuggingface.co

Evaluation results: Diamond on Idavidrein/gpqa: 90.1; Gsm8k on openai/gsm8k: 92.6; Hle on cais/hle: 37.7; Mmlu Pro on TIGER-Lab/MMLU-Pro: 87.5; SWE Bench Pro on ScaleAI/SWE-bench_Pro: 55.4; Swe Bench Resolved on SWE-bench/SWE-bench_Verified: 80.6; Terminalbench 2 on harborframework/terminal-bench-2.0: 67.9.

- [65] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performancellm-stats.com

BrowseComp: BrowseComp is a benchmark comprising 1,266 questions that challenge AI agents to persistently navigate the internet in search of hard-to-find, entangled information. The benchmark measures agents' ability to exercise persistence in information gathering, demonstrate creativity in web navigation, and find concise, verifiable answers. Despite the difficulty of the questions, BrowseComp is simple and easy-to-use, as predicted answers are short and easily verifiable against reference answers. Tags: agents, reasoning, search. MCP Atlas: MCP Atlas is a…

- [66] DeepSeek V4 - Vals AIvals.ai

Release date and model: 4/23/2026 DeepSeek DeepSeek V4; 4/23/2026 OpenAI GPT 5.5; 4/20/2026 Moonshot AI Kimi K2.6; 4/16/2026 Anthropic Claude Opus 4.7; 4/8/2026 Meta Muse Spark; 4/2/2026 Google Gemma 4 31B IT; 4/2/2026 Alibaba Qwen 3.6 Plus; 4/1/2026 zAI GLM 5.1; 4/1/2026 Arcee AI Trinity Large Thinking; 3/17/2026 OpenAI GPT 5.4 Mini; 3/17/2026 OpenAI GPT 5.4 Nano; 3/17/2026 MiniMax MiniMax-M2.7; 3/9/2026 xAI Grok 4.20 (Reasoning); 3/5/2026 OpenAI GPT 5.4; 3/3/2026 Google Gemini 3.1 Flash…

- [67] DeepSeek V4 Review: Professional Assessment of the Best ...bridgers.agency

This is the question businesses ask every time: "Which one is best?" The honest answer is that it depends entirely on the context of use. Here is our analysis framework. For Code and Software Development: Leaked benchmarks suggest a 90% score on HumanEval and above 80% on SWE-bench for DeepSeek V4. If these numbers hold, V4 would position itself at the same level as, or above, GPT-5.4 and Claude Opus 4 on programming tasks. Our experience with V3 confirms that DeepSeek particularly excels at Python, JavaScript, and system-level languages. However, performance on less represented languages…

- [68] DeepSeek-V4-Flash-Max: Pricing, Benchmarks & Performancellm-stats.com

- [69] DeepSeek V4 API: Pricing, Benchmarks & 1M Context - EvoLink.AIevolink.ai

DeepSeek V4 Flash API: DeepSeek V4 Flash is the fast general-purpose tier of the V4 series. 1M-token context, optional thinking mode, and an order of magnitude lower cost than Claude Sonnet; callable via OpenAI or Anthropic endpoints on EvoLink. Model Type: DeepSeek V4 Flash (selected), DeepSeek V4 Pro. Price: $0.147 (~10 credits) per 1M input tokens; $0.294 (~20 credits) per 1M output tokens; $0.029 (~2 credits) per 1M cache read tokens. Highest stability with guaranteed 99.9% uptime. Recommended for production environments. Use the same API endpoint for all versions. O…

- [70] DeepSeek V4: 1T Parameter AI Model Guidedeepseek.ai

| Benchmark | DeepSeek V4 | GPT-5.4 | Claude Opus 4.5 | DeepSeek V3 |
| --- | --- | --- | --- | --- |
| SWE-bench Verified | >80% | ~80% | 80.9% | ~49% |
| HumanEval | ~90% | ~92% | ~92% | ~85% |
| Context Window | 1M tokens | 256K | 200K | 128K |
| Parameters (Total) | ~1T | Unknown | Unknown | 671B |

⚠️ Note: These impressive numbers are currently based on leaked internal data and are awaiting independent third-party verification upon release.

API Pricing: The Most Cost-Effective Frontier AI. Western models like GPT-5.4 and Claude Opus are powerful but expensive. For example, GPT-5.2/5.4 costs around $1.75 to $1…

- [71] DeepSeek V4: Architecture, Benchmarks, and API Guide (2026)morphllm.com

March 3, 2026 · 1 min read. TL;DR: DeepSeek V4 launches this week. It is a 1-trillion-parameter MoE model with a 1M-token context window, three new architectural techniques, and native multimodal support. Pre-release claims put it at 80-85% SWE-bench Verified and 90% HumanEval. API pricing is expected around $0.14/M input tokens, roughly 20-50x cheaper than Western frontier models. The numbers are from internal DeepSeek benchmarks only. In…

- [72] DeepSeek-V4 Technical Report [pdf] - Hacker Newsnews.ycombinator.com

…itself as a highly cost-effective architecture for complex reasoning tasks. • Agent: On public benchmarks, DeepSeek-V4-Pro-Max is on par with leading open-source models, such as Kimi-K2.6 and GLM-5.1, but slightly worse than frontier closed models. In our internal evaluation, DeepSeek-V4-Pro-Max outperforms Claude Sonnet 4.5 and approaches the level of Opus 4.5. While they're some months behind closed SOTA (though benchmarks put them close), I wonder if Deepseek 4's longer context capabilities and kv-cache advantage will make up for this…

- [73] DeepSeek V4 Benchmarks! : r/singularity - Redditreddit.com

V4 flash seems like the real winner though, deepseek v4 flash (high) scores about the same as gemini 3 flash on artificial analaysis, but costs ... 1 day ago

- [74] Deepseek v4 models are out and here are benchmarks !( 4 versions)reddit.com

[ Removed by moderator ] : r/LocalLLaMA. "Ollama is using llama.cpp under the hood, but adds some quality of life improvements on top of it". Use server wrappers for multi-model hosting and auto-load/unload. "The ollama convenience features can be replicated in llama.cpp now". Test with quantized GGUF models to reduce memory and still get good perf. "With 24 GB of RAM... I'd definitely recommend a Q4_K_S (ideally iMatrix) GGUF". Context, scaling, and performance: Context size and token limits matter; larger m…

- [75] Deepseek v4: Best Opensource Model Ever? (Fully Tested) - YouTubeyoutube.com

Chapters: Benchmark Maxxing (0:53); Cost Efficiency (1:29); Benchmarks (2:09); MacOS Demo (2:23); DeepSeek Lying... (3:16); Pricing (4:25); How To Use (4:48). Channel: WorldofAI, 214K subscribers.

- [76] DeepSeek V4 Released - Best AI Model Ever - YouTubeyoutube.com

DeepSeek V4 Pro and Flash are out, and this video breaks down why the release matters. It covers the model size, benchmark competitiveness

- [77] The Coding Assistant Breakdown: More Tokens Please - SemiAnalysisnewsletter.semianalysis.com

The Coding Assistant Breakdown: More Tokens Please. Hands On With GPT 5.5, Opus 4.7, DeepSeek V4, Why Benchmarks Are Bad, and Who's Going To Win. 16 hours ago

- [78] China's DeepSeek unveils latest V4 model with 1M context and ...globaltimes.cn

In evaluations for Mathematics, STEM, and competitive-level coding, DeepSeek-V4-Pro has surpassed all currently documented open-source models, ... 1 day ago

- [79] DeepSeek-V4: a million-token context that agents can actually usehuggingface.co

DeepSeek released V4 today. Two MoE checkpoints are on the Hub: DeepSeek-V4-Pro at 1.6T total parameters with 49B active, ... 1 day ago

- [80] DeepSeek v4 | Hacker Newsnews.ycombinator.com

DeepSeek V4-Pro Max shines in competitive coding benchmarks. However, it trails both Opus models on software engineering. Kimi K2.6 is ... 10 hours ago

- [81] Deep|DeepSeek V4 vs Claude vs GPT-5.4: A 38-Task ... - FundaAIfundaai.substack.com

DeepSeek V4 Pro (Thinking) has the highest completed-task multi-step score at 8.90, ahead of Opus 4.7 at 8.87, but with partial coverage: 29/38 ... 1 day ago

- [82] DeepSeek v4 just dropped. At first glance it does not appear to be ...x.com

v4 does appear impressive on coding benchmarks (93.5% on LiveCodeBench), but its best results are on benchmarks with known contamination risk ... 1 day ago

- [83] Breaking: DeepSeek-V4 just released. This is not just another AI ...facebook.com

DeepSeek-R1's performance is supported by benchmark results: ✓ Reasoning Benchmarks: AIME 2024: 79.8% pass@1, surpassing OpenAI's o1-mini. 1 day ago

- [84] moonshotai/Kimi-K2.6 - Hugging Facehuggingface.co

Evaluation Results: Terminal-Bench 2.0 (Terminus-2), 66.7, 65.4*; SWE-Bench Pro, 58.6, 57.7; SWE-Bench Multilingual, 76.7, -; SWE-Bench ... 5 days ago

- [85] moonshotai/Kimi-K2.6 · Add evaluation results for HLE, GPQA, AIME ...huggingface.co

Hugging Face's logo Hugging Face · Models · Datasets · Spaces ... Add evaluation results for HLE, GPQA, AIME, HMMT, SWE-Bench, and Terminal-Bench. 5 days ago

- [86] moonshotai/Kimi-K2-Instruct · Add SWE-Bench Pro evaluation resultshuggingface.co

This PR ensures your model shows up at https://huggingface.co/datasets/ScaleAI/SWE-bench_Pro. This is based on the new evaluation results ... 2 days ago

- [87] Commits · moonshotai/Kimi-K2.6 - Hugging Facehuggingface.co

Kimi-K2.6 / .eval_results ... Commit History. Add evaluation results for HLE, GPQA, AIME, HMMT, SWE-Bench, and Terminal-Bench (#4). 5 days ago

- [88] .eval_results/swe_bench_verified.yaml · moonshotai/Kimi-K2.6 at mainhuggingface.co

- [89] SWE-bench Leaderboardsswebench.com

SWE-bench Official Leaderboards: Kimi K2.5 (high reasoning): 70.80; DeepSeek V3.2 (high reasoning): 70.00; Gemini 3 Pro: 69.60; Claude 4.5 ...

- [90] I Tested Kimi K2.6 vs GLM-5.1 on 15 Real Coding Tasks - Towards AIpub.towardsai.net

Two Chinese open-weight models now sit at the top of the global SWE-Bench Pro leaderboard: Kimi K2.6 at 58.6% and GLM-5.1 at 58.4%. 3 days ago

- [91] Updates to official SWE-bench leaderboard: Kimi K2 Thinking top of ...reddit.com

All your tests on the leaderboard are against different versions and probably different benchmark tasks. Why aren't you retesting? Dec 11, 2025

- [92] Is Kimi K2.6 Actually Benchmaxxing? - YouTubeyoutube.com

Kimi K2.6 dropped yesterday from Moonshot AI. The benchmarks look incredible on paper. SWE-Bench Pro ahead of Opus 4.6. Terminal-Bench 2.0 ... 4 days ago