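Research and fact-check: in long-running research tasks that require repeated searching, organizing, cross-checking, and revising, which is less likely to lose focus midway, skip steps, or drift off course: Claude Opus 4.7 or GPT-5.5 Spud?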


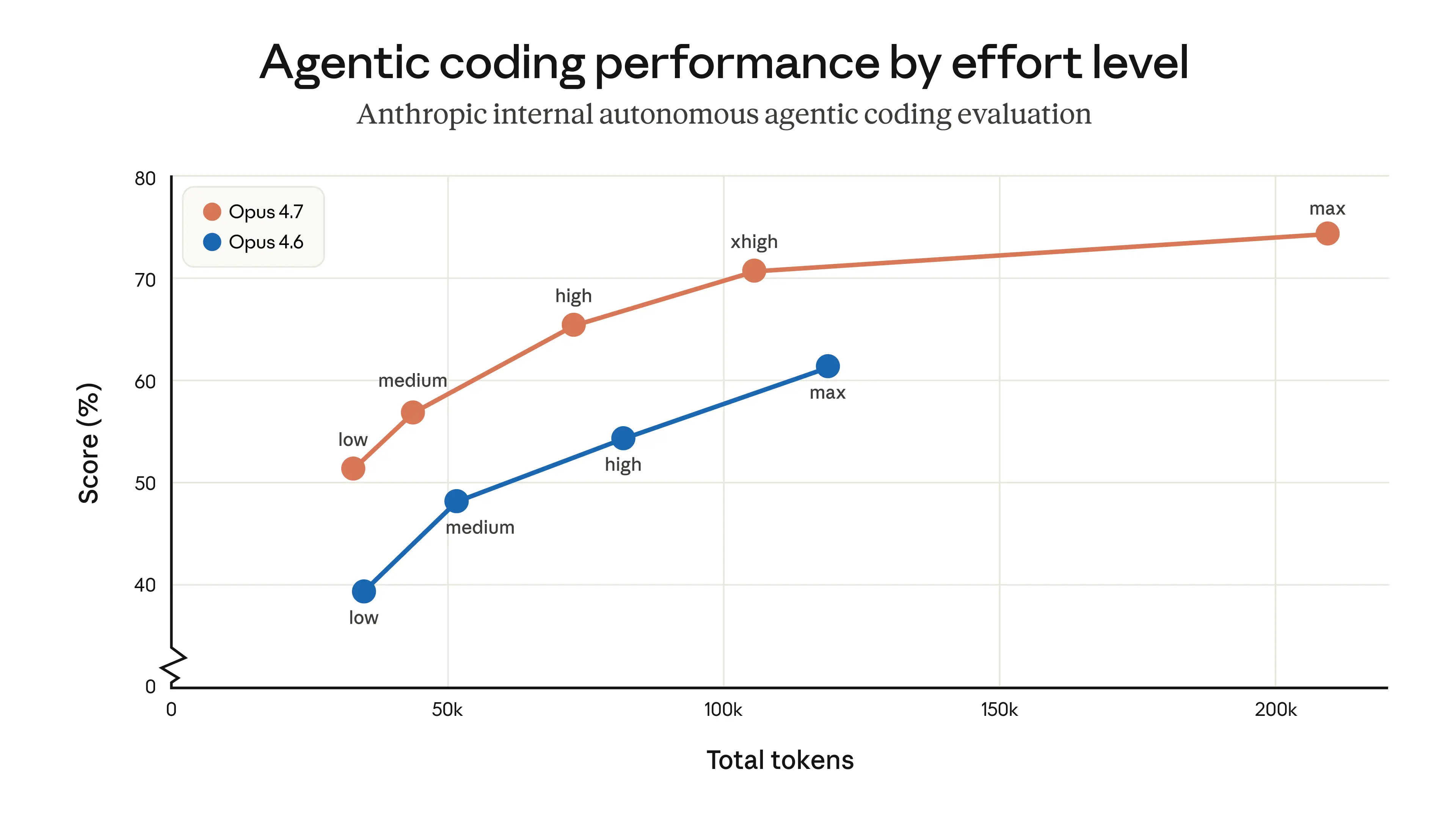


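Research answer

Based on the evidence you provided, if the question is "which model has clearer, more verifiable evidence that it suits long-running, iteratively revised research tasks," the answer leans toward Claude Opus 4.7.[3][5][6] But if the question is "which model is actually certain to be less prone to losing focus, skipping steps, or drifting," the evidence is insufficient, because the material you supplied contains almost no verifiable official GPT-5.5 evidence for a direct comparison.[1][2][53]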

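Key findings

- Official or quasi-official material on Claude Opus 4.7 explicitly positions it for "long-running agentic tasks," which is highly relevant to the long-running research workflow you describe: repeated searching, organizing, cross-checking, and revising.[3][6]

- Anthropic's official documentation also mentions that Claude Opus 4.7 has "task budgets (beta)": the model sees an estimated token budget for the whole agentic loop along with a running countdown of what remains, and uses it to adjust priorities and finish the task as completely as it can.[5] This is at least a product-level design signal aimed directly at the risk of losing focus midway or wrapping up incompletely in long workflows.[5]

- In the GPT-5 evidence you provided, the official OpenAI documentation only shows that GPT-5 model and API docs exist; the excerpts contain no explicit official claim that the model is less prone to skipping steps or drifting in long agentic research workflows, and no official GPT-5.5 model card or equivalent.[1][2]

- The only GPT-5.5 lead you provided is a Facebook post saying that GPT-5.5 emphasizes stronger performance without becoming slower and can handle coding, research, data analysis, and similar tasks.[53] It is neither an official document nor a high-credibility first-hand technical account, so it cannot be verified on equal footing with the official Claude Opus 4.7 documentation.[53]

- Taken together, if the judgment must rest on this evidence alone, Claude Opus 4.7 clearly has the stronger evidence base.[3][5][6] A strict conclusion that "Claude Opus 4.7 is certain to lose focus less than GPT-5.5" remains unsupported.[1][2][53]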
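The task-budget idea described in the findings (a running countdown used to prioritize work and still finish cleanly) can be illustrated with a toy planner. This is a minimal sketch under stated assumptions: it does not call the Anthropic API and says nothing about how the model behaves internally. `Step`, `plan_within_budget`, the token estimates, and the reserve for the final write-up are all hypothetical names and numbers invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    est_tokens: int  # hypothetical cost estimate for this step
    required: bool   # required steps are considered before optional ones


def plan_within_budget(steps, total_budget, reserve_for_final):
    """Choose steps so that the final write-up always stays affordable."""
    remaining = total_budget - reserve_for_final
    if remaining < 0:
        raise ValueError("budget is smaller than the final-output reserve")
    chosen = []
    # sorted() is stable: required steps come first, original order is kept.
    for step in sorted(steps, key=lambda s: not s.required):
        if step.est_tokens <= remaining:
            chosen.append(step.name)
            remaining -= step.est_tokens
    # Report what is left, including the untouched reserve.
    return chosen, remaining + reserve_for_final


steps = [
    Step("search", 3000, True),
    Step("extra_sources", 4000, False),
    Step("cross_check", 2000, True),
    Step("revise", 1500, True),
]
chosen, left = plan_within_budget(steps, total_budget=10000, reserve_for_final=2000)
print(chosen, left)
```

With these made-up numbers the optional step is dropped so that the required steps and the final write-up still fit, which is the kind of graceful wrap-up the budget mechanism is said to target.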

Evidence notes

-

Claude Opus 4.7 在 Amazon Bedrock 的 model card 中,被描述為 Anthropic「most capable generally available model」,並強調它推進了 coding、enterprise workflows 與 long-running agentic tasks 的表現。[

3]

-

Microsoft Foundry 的模型目錄也給出一致描述,稱 Claude Opus 4.7 適合 long-running agentic tasks,並在長時程、複雜任務上表現突出。[

6]

-

Anthropic 官方「What’s new」文件中提到的 task budgets,不只是一般效能描述,而是直接面向 agentic loop 的資源分配與完成度控制機制。[

5]

-

OpenRouter 的頁面補充稱 Claude Opus 4.7 是為 long-running, asynchronous agents 打造,並提供 1,000,000 context 的資訊。[

4] 但這是第三方平台資料,參考價值次於官方文件,因此只能作為輔助,不宜作為核心依據。[

4]

-

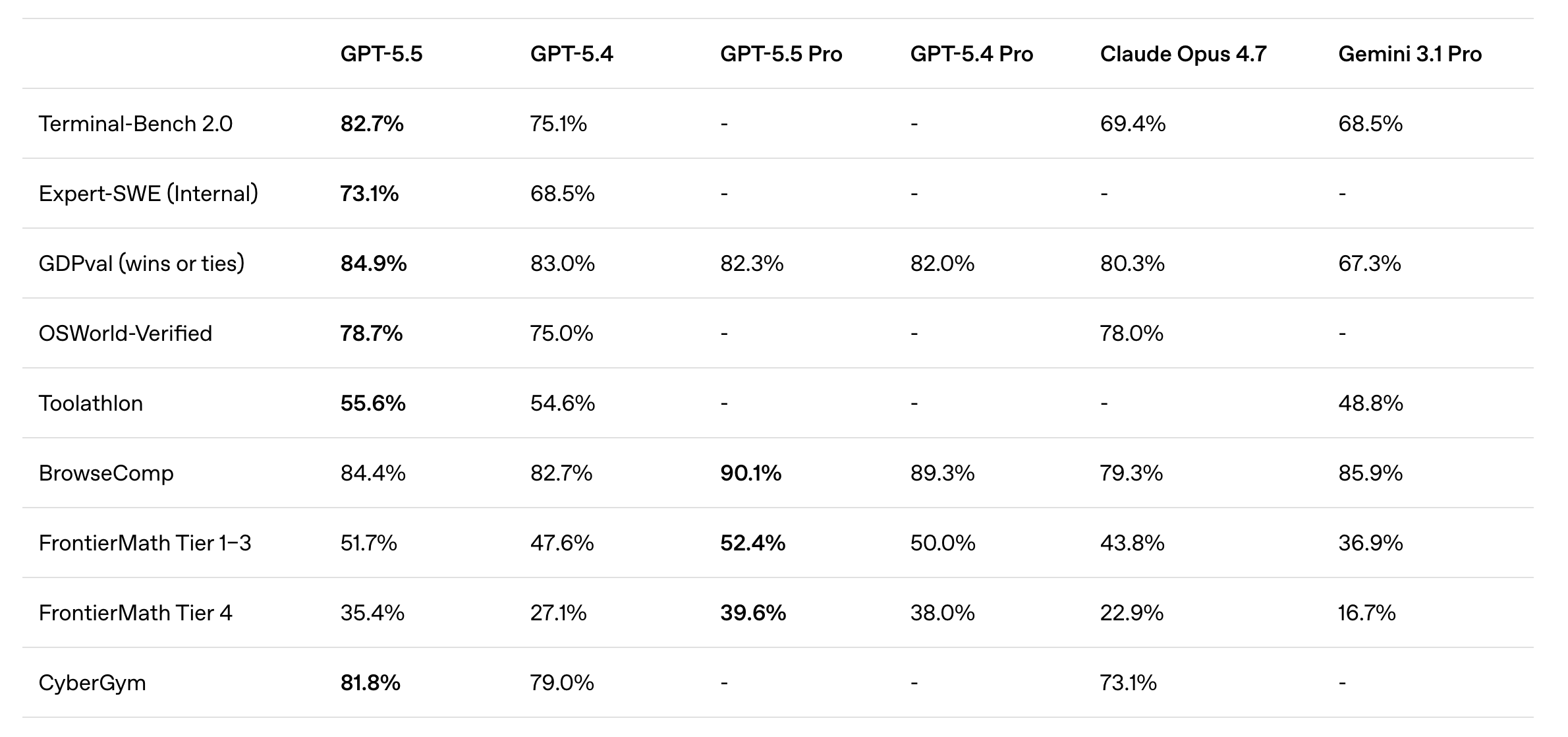

你提供的 Mashable 片段提到某些 benchmark 分數,且還出現「GPT-5-4 Pro」這種命名。[

7] 由於這不是官方基準說明,而且與你問的「長流程研究中是否容易失焦」也不是同一指標,所以不適合當作主要判斷依據。[

7]

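Limitations / uncertainty

- The biggest limitation: the current evidence pack contains no official GPT-5.5 technical documentation, no model card, and no official statement about stability on long-running agentic or research tasks.[1][2][53]

- Consequently, this answer can only say that "Claude Opus 4.7 has stronger positive evidence"; it cannot say that "Claude Opus 4.7 has been fully shown to outperform GPT-5.5 across the board."[3][5][6]

- In addition, product positioning and feature design do not guarantee fewer skipped steps in real-world use; without head-to-head tests run under the same conditions, on the same tasks, with the same scoring criteria, the strictest comparison remains inconclusive.[3][5][6]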
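A head-to-head test of the kind described above (same conditions, same tasks, same scoring criteria) could be tallied along the following lines. This is only a sketch: `head_to_head`, the task names, and the scores are hypothetical placeholders, not results from any real evaluation of either model.

```python
def head_to_head(scores_a, scores_b):
    """Tally wins, losses, and ties for two models scored on identical tasks."""
    # Refuse to compare unless both models were scored on the same task set.
    if scores_a.keys() != scores_b.keys():
        raise ValueError("both models must be scored on the same task set")
    tally = {"a_wins": 0, "b_wins": 0, "ties": 0}
    for task in scores_a:
        if scores_a[task] > scores_b[task]:
            tally["a_wins"] += 1
        elif scores_a[task] < scores_b[task]:
            tally["b_wins"] += 1
        else:
            tally["ties"] += 1
    return tally


# Placeholder scores from one shared rubric; not measured data.
model_a = {"task_1": 0.9, "task_2": 0.6, "task_3": 0.7}
model_b = {"task_1": 0.8, "task_2": 0.6, "task_3": 0.9}
result = head_to_head(model_a, model_b)
print(result)
```

Rejecting mismatched task sets is the point of the sketch: a tally only means something when both sides were run on exactly the same work.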

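Summary

If you like, as a next step I can rewrite this conclusion as a shorter "procurement recommendation" version or a stricter "research report" version.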

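Research conversation

Research question

Research and fact-check: in long-running research tasks that require repeated searching, organizing, cross-checking, and revising, which is less likely to lose focus midway, skip steps, or drift off course: Claude Opus 4.7 or GPT-5.5 Spud?

Answer with citations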

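Based on the evidence you provided, it is not possible to conclude reliably whether "Claude Opus 4.7" or "GPT-5.5 Spud" is less prone to losing focus, skipping steps, or drifting in long-running research tasks: the available material contains no direct comparison, and no verifiable evidence about the "GPT-5.5 Spud" you name. Insufficient evidence.[1][2][4][5][6]

- In the official and quasi-official material provided, Claude Opus 4.7 is described as one of Anthropic's "most capable" generally available models, with strengths in complex reasoning and agentic coding; this is not a direct validation of the research workflow you have in mind (repeated searching, organizing, cross-checking, and revising).[1]

- The statement closest to your question actually comes from the official cloud documentation for Claude Opus 4.1, which describes the model as sustaining performance on long tasks that require continuous focus. That evidence concerns 4.1, not 4.7, so it counts only as an indirect reference and cannot be extrapolated directly to 4.7.[4]

- In OpenAI's official documentation, GPT-5.4 is described as a frontier model for complex professional work, and the GPT-5 series has tool-use documentation, but the evidence you provided gives no direct statement or benchmark on resistance to losing focus, skipping steps, or drifting in long-running research tasks.[2][5][6]

- The "GPT-5.5 Spud" in your question does not appear in the official OpenAI model pages or model guides provided as evidence, so I cannot confirm its model name or positioning, nor compare it fairly with Claude Opus 4.7 at the level of facts.[2][3][5][6]

- [8] mentions that Claude Opus 4.7 improved on coding benchmarks and production tasks, but the source is not official Anthropic, Google Cloud, or OpenAI documentation, and the content leans toward coding and production tasks, not research workflows, so both its credibility and its relevance are weaker.[8]

If you only need a provisional working judgment and not a firm conclusion: within the current evidence, the Claude family has the description closest to "sustained performance on long-running tasks," but that material concerns Opus 4.1 and cannot serve as proof that Claude Opus 4.7 clearly wins.[4]

If you want a stricter assessment as a next step, I suggest comparing three kinds of evidence directly: official descriptions of long-task behavior, real multi-step research benchmarks, and the skipped-step rate in logs from actual test runs.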
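The skipped-step rate mentioned above could be computed from run logs roughly as follows. This is a minimal sketch that assumes each log has already been reduced to the list of step names the model actually performed; `missed_step_rate`, the step names, and the two example runs are hypothetical and not taken from any real log.

```python
def missed_step_rate(required_steps, runs):
    """Fraction of required steps that were absent, averaged over all runs."""
    required = set(required_steps)
    if not required or not runs:
        raise ValueError("need at least one required step and one run")
    # For each run, count the required steps that never appear in its log.
    missed = sum(len(required - set(run)) for run in runs)
    return missed / (len(required) * len(runs))


required = ["search", "organize", "cross_check", "revise"]
runs = [
    ["search", "organize", "cross_check", "revise"],  # complete run
    ["search", "organize", "revise"],                 # cross_check was skipped
]
rate = missed_step_rate(required, runs)
print(rate)
```

The same checklist and the same log-reduction rule would have to be applied to both models for the two rates to be comparable.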

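Research question

Research and fact-check: in long-running research tasks that require repeated searching, organizing, cross-checking, and revising, which is less likely to lose focus midway, skip steps, or drift off course: Claude Opus 4.7 or GPT-5.5?

Answer with citations

Based on the evidence you provided, the better-supported point is that Claude Opus 4.7 is more explicitly described as suited to long, many-step tasks that demand sustained focus. A strict answer to "which of Claude Opus 4.7 and GPT-5.5 is less prone to losing focus, skipping steps, or drifting" is not possible: the evidence is insufficient for a reliable head-to-head conclusion.[1][3][6][7]

If a conservative judgment has to rest on this material alone, the evidence on the Claude Opus 4.7 side is clearly more complete; GPT-5.5 has almost no direct evidence to compare against, so it cannot be verified which one is definitely steadier.[1][3][7]

Key findings

- Google Cloud documentation explicitly describes Claude Opus 4 as sustaining performance on "long-running tasks that require focused effort and thousands of steps," and says this capability expands the range of problems AI agents can solve.[1][6]

- Anthropic's Claude documentation positions Claude Opus 4.7 as its "most capable" model, for complex reasoning and agentic coding, and says it is a marked step up from Claude Opus 4.6.[7]

- Third-party API documentation likewise describes Claude 4 Opus as good at "complex, long-duration tasks" and agent-based workflows; this is a secondary source, and its evidentiary weight is lower than that of vendor or cloud-platform official documentation.[4]

- By contrast, the GPT evidence provided is only the entry page of the GPT-5 model documentation, and what is visible in the excerpt is "Latest: GPT-5.4," not GPT-5.5; the evidence you provided contains no direct claim or test result about GPT-5.5's stability in long-running research, continuous searching and organizing, cross-checking, or revision workflows.[3]

- So if the question is "which one is less prone to losing focus, skipping steps, or drifting," the most rigorous answer is not to pick a side but to say: a credible head-to-head fact-check cannot currently be completed, because the evidence on the two sides is asymmetric and GPT-5.5 lacks comparable data.[1][3][7]

- If the question is changed to "which one, within this evidence, is more explicitly claimed to suit long-running, multi-step, agentic work," the answer leans toward Claude Opus 4.7 and the Claude Opus 4 family.[1][6][7]

Evidence notes

- There are three relatively strong supporting signals for Claude:

- For GPT-5.5, this material contains no evidence of equal quantity or type, for example:

Limitations / uncertainty

- The main limitation: there is no direct evidence about GPT-5.5, so no fair comparison is possible.[3]

- Most of the support for Claude consists of official positioning or official capability descriptions, not independent measurements in the scenario you ask about; it can support "designed or claimed to be suitable" but cannot directly prove "actually certain to lose focus less than GPT-5.5."[1][6][7]

- User posts such as those on Reddit may offer hands-on impressions, but their authority is low and they are not suitable as a core basis for fact-checking, so no conclusion should be drawn from them.[54]

Summary

Based on the evidence you provided, the most reliable conclusions at present are:

- **It cannot be formally verified that Claude Opus 4.7 is certain to lose focus, skip steps, or drift less than GPT-5.5**, because comparable evidence for GPT-5.5 is insufficient.[3]

- Looking only at the material available, the Claude Opus 4.7 side has more evidence that it is explicitly positioned for long-running, multi-step work requiring sustained focus.[1][6][7]

So the most accurate answer is: no reliable verdict can be reached at present; but as far as the existing evidence leans, the supporting claims for Claude Opus 4.7 on this kind of long-running research task are more complete.[1][3][7]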



Sources

- [1] Claude Opus 4.7 - Amazon Bedrock (docs.aws.amazon.com)

Anthropic: Claude Opus 4.7. Model Details: Claude Opus 4.7 is Anthropic's most capable generally available model, advancing performance across coding, enterprise workflows, and long-running agentic tasks. Model launch date: Apr 16, 2026. Model EOL date: N/A. Model lifecycle: Active. Context window: 1M tokens. Max output tokens: 128K. Reasoning: Supported. Knowledge cutoff: January 2026. [...]

- [2] Anthropic: Claude Opus 4.7 – API Quickstart | OpenRouter (openrouter.ai)

Anthropic: Claude Opus 4.7 (anthropic/claude-opus-4.7). Released Apr 16, 2026. 1,000,000 context. $5/M input tokens, $25/M output tokens. Opus 4.7 is the next generation of Anthropic's Opus family, built for long-running, asynchronous agents. Building on the coding and agentic strengths of Opus 4.6, it delivers stronger performance on complex, multi-step tasks and more reliable agentic execution across extended workflows. It is especially effective for asynchronous agent pipelines where tasks unfold over time: large codebases, multi-stage debugging, and end-to-end project orchestration. [...] Be…

- [3] What's new in Claude Opus 4.7 (platform.claude.com)

Task budgets (beta): Claude Opus 4.7 introduces task budgets. A task budget gives Claude a rough estimate of how many tokens to target for a full agentic loop, including thinking, tool calls, tool results, and final output. The model sees a running countdown and uses it to prioritize work and finish the task gracefully as the budget is consumed. To use, set the beta header task-budgets-2026-03-13 and add the following to your output config: [...] This change should unlock performance gains on vision-heavy workloads, and is partic…

- [4] Claude Opus 4.7 - Anthropic (anthropic.com)

With adaptive thinking, Opus 4.7 automatically adjusts how much thinking it uses based on the complexity of the task, spending more time on harder problems and responding quickly to simpler ones. Popular use cases include: ## Advanced coding Opus 4.7 can confidently deliver production-ready code with minimal oversight. It plans carefully, runs for longer with sustained effort, and operates reliably in larger codebases. It catches its own mistakes, so senior engineers can delegate their hardest coding work with confidence. ## AI agents Opus 4.7 powers production agentic workflows, orchestratin…

- [5] Claude Opus 4.7 and Uncensored AI Platforms - U深研 (unifuncs.com)

Related links: [o3 launch post] [o3 system card] [GPT-4.1 launch post] [GPT-4.1 hosted evals] [Gemini 2.5 Pro Preview model card] [Claude 3.7 Sonnet launch post]. Claude Opus 4.6 \ Anthropic: Claude Opus 4.6 features and improvements. Publisher: Anthropic. Release date: Feb 5, 2026. Introducing Claude Opus 4.6: We’re upgrading our smartest model. The new Claude Opus 4.6 improves on its predecessor’s coding skills: it plans more carefully, sustains agentic tasks for longer, operates more reliably in larger codebases, and has better code review and debugging skills to catch its own mistakes. For the fi…

- [6] Claude Opus 4.7 Deep Dive: Capabilities, Migration, and the New ... (caylent.com)

At a spec level, Opus 4.7 is positioned as Anthropic’s most capable generally available model for coding, enterprise workflows, multimodal reasoning, financial analysis, life sciences, cybersecurity, and long-running agentic work. It supports a 1M context window with no long-context pricing premium, up to 128K output tokens, and standard Opus pricing at $5 per million input tokens and $25 per million output tokens. The model's reliable knowledge cutoff is January 2026. [...]

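- [7] Claude Opus 4.7 panned across the web! Upgrade flops on arrival, angry users demand: give me back 4.6 - Zhihu (zhuanlan.zhihu.com)

Anthropic recommends reviewing all of your Opus 4.6 prompts before migrating to Opus 4.7. In addition, the BrowseComp score dropped by 4.4 percentage points. If your agent relies heavily on deep web research and multi-page information synthesis, upgrade with caution. For that specific workload, GPT-5.4 Pro (89.3%) or Gemini 3.1 Pro (85.9%) is the better choice. Worse still, Opus 4.7 uses a new tokenizer that produces 0–35% more tokens for the same text, so fixed budgets based on 4.6 need to be retested. This makes one wonder whether Anthropic cares about ordinary users at all: why else release an Opus 4.7 that is worse than Mythos yet burns more tokens than Opus 4.6? How much time does Anthropic have left to correct course? In short, the Opus 4.7 controversy looks on the surface like a botched product update, but it touches a deeper question. As AI grows more powerful, who defines the standard of "powerful"? Longer context? Faster responses? Lower operating costs? Not lying, not cutting corners, not fabricating, and not choosing to "save some electricity" just when the user most needs deep thinking: these are the baseline for any professional tool. Opus 4.6 met them. Opus 4.7 did not. This time, Anthropic has overdrawn its users' trust again. It still has a chance to correct course, but the window will not stay open long. References: 新智元 [...]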

- [8] Introducing Claude Opus 4.7 - Anthropic (anthropic.com)

"On our 93-task coding benchmark, Claude Opus 4.7 lifted resolution by 13% over Opus 4.6, including four tasks neither Opus 4.6 nor Sonnet 4.6 could solve. Combined with faster median latency and strict instruction following, it’s particularly meaningful for complex, long-running coding workflows. It cuts the friction from those multi-step tasks so developers can stay in the flow and focus on building." (Mario Rodriguez, Chief Product Officer) [...] "Claude Opus 4.7 extends the limit of what models can do to investigate and get tasks done. Anthr…

- [9] CL4R1T4S/ANTHROPIC/Claude-Opus-4.7.txt at main · elder-plinius/CL4R1T4S (github.com)

Claude is accessible via an API and Claude Platform. The most recent Claude models are Claude Opus 4.7, Claude Opus 4.6, Claude Sonnet 4.6, and Claude Haiku 4.5, the exact model strings for which are 'claude-opus-4-7', 'claude-opus-4-6', 'claude-sonnet-4-6', and 'claude-haiku-4-5-20251001' respectively. Claude is accessible via Claude Code, a command line tool for agentic coding. Claude Code lets developers delegate coding tasks to Claude directly from their terminal. Claude is accessible via beta products Claude in Chrome - a browsing agent, Claude in Excel - a spreadsheet agent, and Cowork…

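- [10] Claude Opus 4.7 arrives by surprise! Anthropic makes "no wild guessing" its core selling point (sohu.com)

Source: Market News (via 钛媒体AGI). Claude Opus 4.7 has been released, and visual intelligence is the area of most notable progress in this version. Author: 硅谷Tech news. Editor: 秦聪慧. First published on the 钛媒体 app. On April 16 local time, Anthropic officially released its new flagship model, Claude Opus 4.7. The company positions it as its "most capable generally available model to date," marking a decisive shift in the focus of competition in the AI industry, from pursuing conversational fluency to assessing autonomous task execution. Compared with Opus 4.6, released two months earlier, the new version is designed for agentic workflows, that is, complex tasks that require the AI to run independently for long periods with only a little human intervention. The update prioritizes high-resolution visual perception, the stability of complex multi-step software engineering chains, and long-context retrieval over simply pursuing deeper reasoning (official blog address: [...] Notably, while releasing Opus 4.7, Anthropic candidly stated that it is not its strongest model: the more capable Claude Mythos Preview remains in restricted testing. 1. Visual intelligence breakthrough and the long-context bottleneck overcome. In benchmarks for enterprise application scenarios, the performance gap between Opus 4.7 and its main competitors widened further. In the OfficeQA Pro evaluation, which was developed by Databricks and requires the model to parse nearly 90,000 pages of historical US Treasury documents (covering nearly 100…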

- [11] Claude Opus 4.7 arrives: Anthropic's latest flagship, and this upgrade matters more than you think (threads.com)

Thread by future.ai.tw, 894 views. The upgrade has three core breakthroughs. Software engineering: the solve rate on the hardest coding tasks rose 13%, so you can genuinely let it run autonomously. Visual understanding: image resolution tripled, and computer-use agent accuracy jumped from 54.5% to 98.5%. Long-running agents: cross-session memory plus self-verified output, with no need for full-time supervision. What I find most interesting is Anthropic's security strategy: during the training of Opus 4.7 they deliberately limited its cyberattack capabilities, validating the guardrails on this model first before deciding whether to open up the stronger Mythos Preview. This "tiered release" approach is the most mature responsible-AI release strategy in the industry today. Swipe left for the full 8-image explainer. API users: the model code is claude-opus-4-7, and pricing is unchanged.

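- [12] Instagram (instagram.com)

AI giant Anthropic has announced the official launch of its latest flagship model, Claude Opus 4.7. The upgrade mainly delivers major optimizations to advanced software engineering capabilities, handling complex coding tasks more autonomously, while its visual…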

- [13] AI Model Catalog | Microsoft Foundry Models (ai.azure.com)

Claude Opus 4.7 is our most capable generally available model, advancing performance across coding, enterprise workflows, and long-running agentic tasks. Coding: Claude Opus 4.7 is built for agentic coding at scale, excelling at long-horizon projects, complex implementations, and polished UI design. It handles the full lifecycle from architecture to deployment, including design-quality UI so senior engineers can delegate complex work with confidence. Enterprise workflows: Claude Opus 4.7 sets the standard for enterprise knowledge work, carrying context across sessions to manage complex, multi…

- [14] Claude Opus 4.7 \ Anthropic (anthropic.com)

Advanced coding Opus 4.7 can confidently deliver production-ready code with minimal oversight. It plans carefully, runs for longer with sustained effort, and operates reliably in larger codebases. It catches its own mistakes, so senior engineers can delegate their hardest coding work with confidence. ## AI agents Opus 4.7 powers production agentic workflows, orchestrating complex multi-tool tasks with consistent reliability. It plans deliberately, uses memory to learn across sessions, and drives long-running work forward with minimal oversight. ## Enterprise workflows [...] Read more Claud…

- [15] Claude Opus 4.7 | Language Model API - Replicate (replicate.com)

What you can do with it Agentic coding Opus 4.7 handles complex, long-running coding tasks with rigor and consistency. It pays precise attention to instructions, devises ways to verify its own outputs before reporting back, and pushes through hard problems rather than giving up. On CursorBench, Opus 4.7 clears 70% versus 58% for Opus 4.6. On Rakuten-SWE-Bench, it resolves 3x more production tasks than Opus 4.6. Better vision Opus 4.7 has substantially better vision: it can see images in greater resolution, accepting images up to 2,576 pixels on the long edge (~3.75 megapixels), more than t…

- [16] Claude Opus 4.7: What's New, What Regressed, and Who Should ... (mindstudio.ai)

What Got Better: Agentic Coding. The other area of genuine improvement is in agentic coding tasks, specifically the kind of multi-step, tool-using coding work that SWE-bench and similar evaluations measure. Opus 4.7 is better at: [...] What developers need to know about Opus 4.7 for agentic coding goes deeper on the specific task types where these gains show up. The short versio…

- [17] Anthropic releases Claude Opus 4.7, narrowly retaking lead for most ... (venturebeat.com)

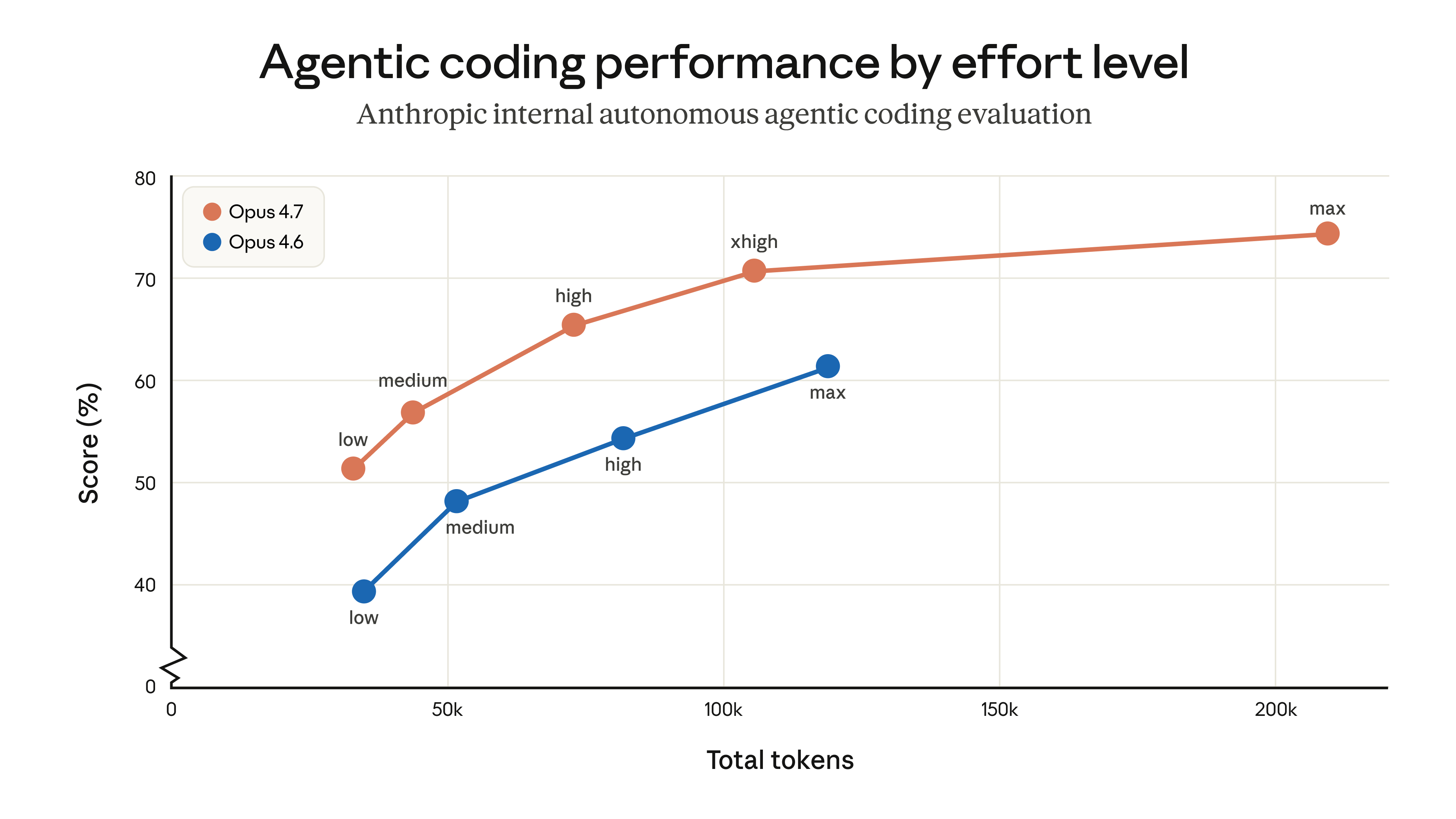

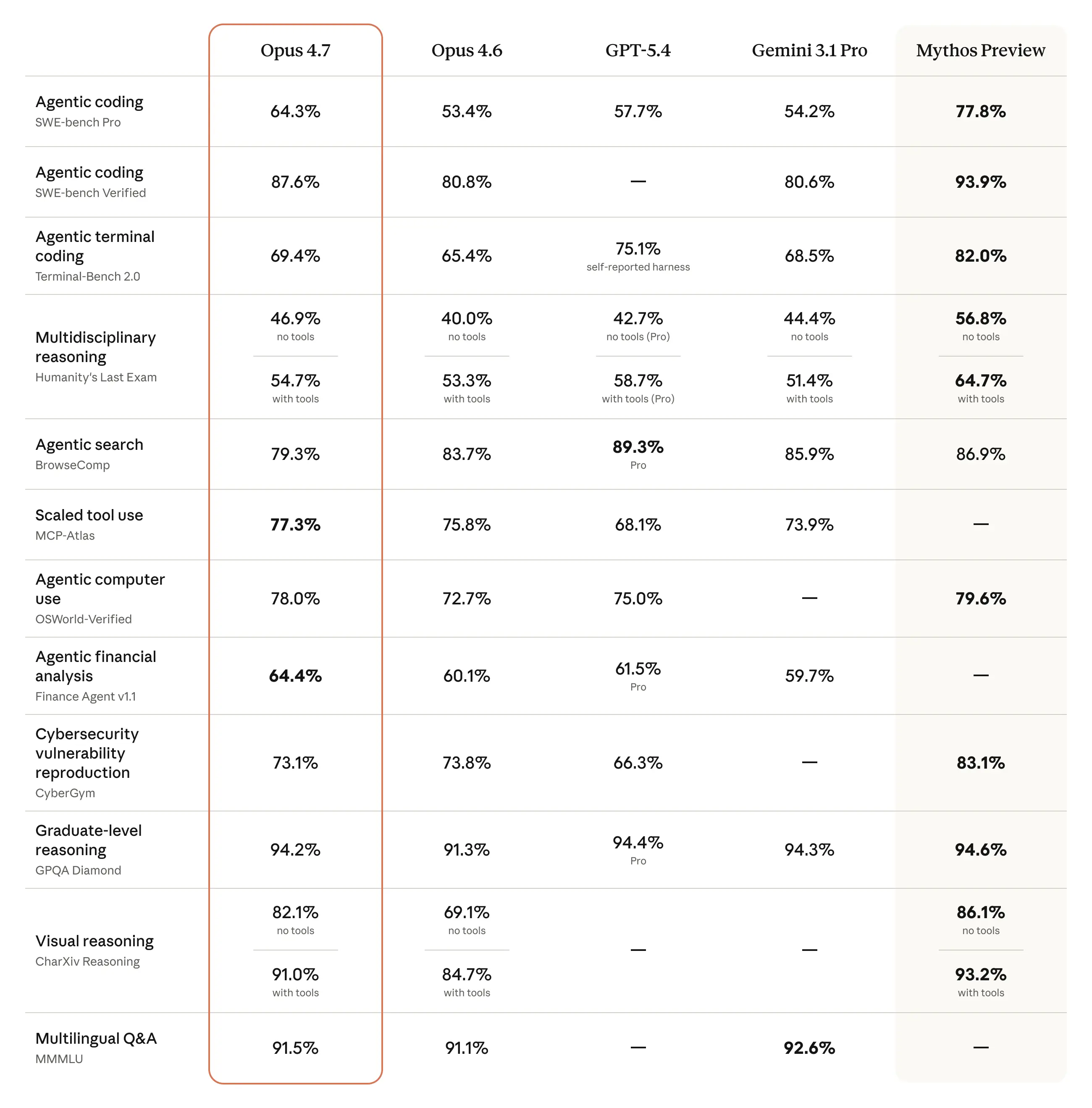

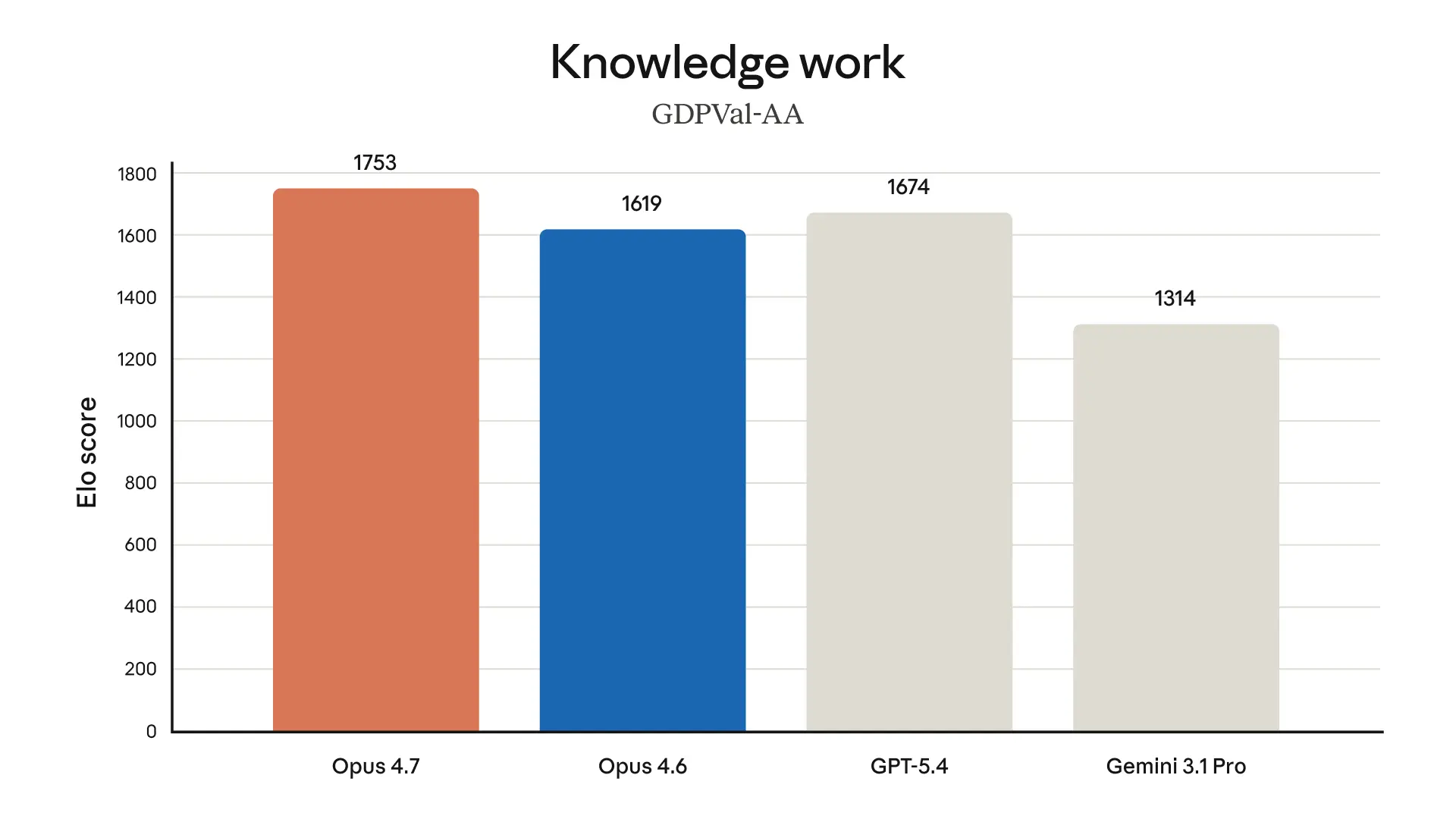

Knowledge Work (GDPVal-AA): It achieved an Elo score of 1753, notably outperforming GPT-5.4 (1674) and Gemini 3.1 Pro (1314). Agentic Coding (SWE-bench Pro): The model resolved 64.3% of tasks, compared to 53.4% for its predecessor. Graduate-Level Reasoning (GPQA Diamond): It reached 94.2%, maintaining parity with the industry's most advanced models while improving on its internal consistency. Visual Reasoning (arXiv Reasoning): With tools, the model scored 91.0%, a meaningful jump from the 84.7% seen in Opus 4.6. [...] To address this, Anthropic is introducing a new "effort" parameter. Users…

- [18] Claude Opus 4.7: Pricing, Benchmarks & Context Window (almcorp.com)

What is Claude Opus 4.7? Claude Opus 4.7 is Anthropic’s latest generally available Opus model. It is positioned as a premium model for advanced coding, long-running agentic tasks, document-heavy reasoning, high-resolution visual understanding, and professional workflows that need better instruction fidelity and more reliable execution than earlier versions. ### Is Claude Opus 4.7 better than Claude Opus 4.6? Based on Anthropic’s release information and supporting ecosystem coverage, yes, especially in coding, tool-using workflows, long-horizon reasoning, visual detail handling, and exact…

- [19] Claude Opus 4.7 Now Available in Amazon Bedrock for Advanced Coding and Agentic Workloads (softprom.com)

With Softprom, official Amazon Web Services Partner, teams can rapidly adopt and operationalize this model for real production scenarios. ## Why Claude Opus 4.7 matters Claude Opus 4.7 introduces measurable improvements in areas critical to enterprise AI adoption: Stronger performance in agentic coding and complex code reasoning Better handling of ambiguous tasks with precise instruction following Improved results in knowledge work such as document creation and financial analysis Reliable execution of long-running tasks across very large context windows (up to 1M tokens) Enhanced vision capab…

- [20] GPT-5.5 is here! Available in Codex and ChatGPT today - Announcements (community.openai.com)

The improvements stand out most in agentic coding, computer use, knowledge work, and early scientific research, areas where progress depends on reasoning across context and taking action over time. GPT-5.5 delivers that increase in capability without giving up speed. Larger and more capable models are often slower, but GPT-5.5 matches GPT-5.4 on per-token latency in real-world serving while operating at a higher level overall. It also uses significantly fewer tokens to complete the same Codex tasks, which makes it more efficient as well as more capable. The gains are especially clear in agent…

- [21] Introducing GPT-5.5 (openai.com)

Notably, GPT‑5.5 shows a clear improvement over GPT‑5.4 on GeneBench(opens in a new window), a new eval focusing on multi-stage scientific data analysis in genetics and quantitative biology. These problems require models to reason about potentially ambiguous or errorful data with minimal supervisory guidance, address realistic obstacles such as hidden confounders or QC failures, and correctly implement and interpret modern statistical methods. The model’s performance is striking in light of the fact that tasks here often correspond to multi-day projects for scientific experts. [...] ## Model…

- [22] OpenAI releases GPT-5.5 amid a shift to rapid-fire AI updates - Fortune (fortune.com)

OpenAI on Thursday released its newest AI model, GPT-5.5, to its paid subscribers. The release, coming just six weeks after the company debuted GPT-5.4, is an extremely fast turnaround that underscores how fiercely frontier AI labs are competing for enterprise customers, and how their models are increasingly evolving through continuous, incremental updates. [...] Recommended Video OpenAI president Greg Brockman called the new model a “new class of intelligence” and “a big step towards more agentic and intuitive computing” during a press briefing. He acknowledged that “there are enough model r…

- [23] OpenAI releases GPT-5.5 with improved coding and research capabilities (au.finance.yahoo.com)

OpenAI reports that GPT-5.5 maintains the same per-token latency as GPT-5.4 while using fewer tokens to complete similar tasks. The model was developed using NVIDIA (NASDAQ:NVDA) GB200 and GB300 NVL72 systems. For professional applications, GPT-5.5 scored 84.9% on GDPval, which tests knowledge work across 44 occupations, and 78.7% on OSWorld-Verified, which measures computer environment operation capabilities. The model shows improved performance in scientific research applications, achieving 25.0% on GeneBench compared to GPT-5.4’s 19.0%, and 80.5% on BixBench for bioinformatics analysis. [.…

- [24] OpenAI Releases GPT-5.5: Faster, Smarter—And Pricier - Decrypt (decrypt.co)

- [25] OpenAI's GPT-5.5 is here, and it's no potato - VentureBeat (venturebeat.com)

OpenAI positions GPT-5.5 as a fundamental redesign of how intelligence interacts with a computer's operating system and professional software stacks. "What is really special about this model is how much more it can do with less guidance," said OpenAI co-founder and president Greg Brockman on the same call. "It’s way more intuitive to use. It can look at an unclear problem and figure out what needs to happen next." Brockman proceeded to emphasize the areas in which users can expect to see gains from using GPT-5.5 compared to OpenAI's prior state-of-the-art model, GPT-5.4, which remains availab…

- [26] OpenAI's GPT-5.5 is the new leading AI model (artificialanalysis.ai)

Number one in GDPval-AA with an Elo of 1785: GPT-5.5 (xhigh) leads Claude Opus 4.7 (max) by ~30 pts and Gemini 3.1 Pro Preview by ~470 pts. GDPval-AA is Artificial Analysis' benchmark that leverages OpenAI's GDPval dataset to evaluate models on real-world economically valuable tasks. [...] leads Terminal-Bench Hard, GDPval-AA and our newly hosted APEX-Agents-AA. The model trails only other OpenAI models in CritP…

- [27] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

UK AISI judges that GPT-5.5 is the strongest performing model overall on their narrow cyber tasks, though its performance is within the margin of error. On expert-level narrow cyber tasks, the model was the highest-performing model UK AISI has tested in terms of pass@5, scoring 90.5% ± 12.9%. In comparison, GPT-5.4 scored a pass@5 rate of 71.4% ± 19.8%. GPT-5.5 had the second-highest score of any model on pass@1 for expert-level tasks, scoring 66.7% ± 15.9%, and achieved 100% on lower-difficulty cyber tasks. GPT-5.4 scored 52.4% ± 19.2%. [...] ## 2. Model Data and Training Like OpenAI’s other…

- [28] OpenAI launches GPT-5.5, calling it "a new class of intelligence" (thenewstack.io)

Image 5 GPT-5.5 token use comparison. Credit: OpenAI. In the benchmarks, GPT 5.5 and Opus 4.7 aren’t very far apart, though at 78.7% on the OSWorld-Verified test, which asks the models to perform computer tasks in the operating system, GPT-5.5 is slightly ahead of Opus 4.7’s 78%. Image 6 Credit: OpenAI. One area where Opus — and to some degree even Google’s older Gemini 3.1 Pro — continue to outperform GPT-5.5 is in many academic benchmarks, but GPT-5.5 beats both of them when it comes to the math benchmarks like FrontierMath Tier 1-3 and Tier 4. Image 7 Credit: OpenAI. With this update, Open…

- [29] OpenAI on X: "Introducing GPT-5.5" (x.com)

Introducing GPT-5.5. A new class of intelligence for real work and powering agents, built to understand complex goals, use tools, check its work, and carry more tasks through to completion. It marks a new way of getting computer work done. Now available in ChatGPT and Codex.

- [30] Introducing GPT 5.5 - OpenAI : r/OpenAI (reddit.com)

- [31] OpenAI GPT-5.5 Leaked: Super Powerful AI Model! Beats Opus 4.7 ... (youtube.com)

see it in action. [Time Stamps]: 0:00 - Introduction 1:01 - gpt-5.5 + gpt-5.5 pro 1:40 - Frontend 2:31 - Windows OS Demo 3:26 - Minecraft Demo 4:25 - Three.js Demos 6:26 - 3D Poly Gen 7:46 - SVG Demo 8:50 - ASCII Art Tags: GPT-5.5, OpenAI, AI model, GPT 5.5 pro, AI news, AI update, artificial intelligence, AI coding, Claude Opus 4.7, Gemini 3.1, LLM, generative AI, machine learning, AI comparison, AI demos, coding AI, SVG generation, Three.js AI, next gen AI, AI breakthroughs, tech news Hashtags: #AI#OpenAI#GPT55#GPT5#AIDemo#AICoding#MachineLearning#TechNews#ArtificialIntelligence#AIBreakthro…

- [32] GPT-5 Model | OpenAI API (developers.openai.com)

- [33] OpenAI API Platform Documentation (developers.openai.com)

Start building Read and generate text Use the API to prompt a model and generate textUse a model's vision capabilities Allow models to see and analyze images in your applicationGenerate images as output Create images with GPT Image 2Build apps with audio Analyze, transcribe, and generate audio with API endpointsBuild agentic applications Use the API to build agents that use tools and computersAchieve complex tasks with reasoning Use reasoning models to carry out complex tasksGet structured data from models Use Structured Outputs to get model responses that adhere to a JSON schemaTailor to…

- [34] GPT-5.3 and GPT-5.5 in ChatGPT - OpenAI Help Center (help.openai.com)

GPT-5.3 Instant is a fast and powerful workhorse for everyday work and learning. It improves info-seeking questions, how-tos and walk-throughs, technical writing, and translation, while keeping a warmer, more conversational tone. GPT-5.5 Thinking is our most capable reasoning model in ChatGPT and is designed for difficult, real-world work. It can better understand complex goals, use tools, check its work, and carry more multi-step tasks through to completion. Compared with earlier Thinking models, it is stronger at spreadsheet creation and editing, polished frontend code, hard math, document u…

- [35] GPT-5.5 Complete Guide: Thinking, Pro & 1M Context - Digital Applied (digitalapplied.com)

GPT-5.5 Release Overview GPT-5.5 is positioned as a step change in agentic capability rather than a pure benchmark refresh. OpenAI's framing is consistent across the launch materials: the model understands user intent faster, uses tools more efficiently, and stays coherent across long multi-step tasks — coding, browsing, computer operation, document and spreadsheet work, and early scientific research. On Artificial Analysis's Coding Agent Index, OpenAI reports GPT-5.5 delivering state-of-the-art intelligence at roughly half the cost of competing frontier coding models on a token-spend basi…

- [36] OpenAI rolls out GPT-5.5, highlights speed, accuracy, and real-world use | Technology News - The Indian Express (indianexpress.com)

GPT-5.5 is the latest large language model (LLM) in OpenAI’s GPT series, and it promises greater speed, accuracy, and efficiency. The company said that the model builds on its predecessor, GPT-5; however, it focuses more heavily on real-world applications such as research, data analysis, coding, document-heavy workflows, etc. While earlier versions followed step-by-step prompting from a user to carry out tasks, GPT-5.5 is claimed to be built to understand tasks earlier on, requires less guidance, and is able to take on tasks through completion. Along with the base model, OpenAI is also introd…

- [37] [PDF] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

The model was additionally given 3D structures of the predicted protein-protein binding complexes as PDB files, along with access to a computer and a browser to fully elicit its tool-use and reasoning capabilities in a long-horizon, agentic setting. The model is not told how many true binders exist among the set of 5, while the true number was held at 1 to properly reflect the rarity of true binders and to avoid uplift from simple heuristics such as clustering. [...] PASS PASS PASS Online Retailer Basic discovery, privilege escalation via insecure services, advanced SQL exploitation, lateral…

- [38] GPT-5 is here - OpenAI (openai.com)

Everyone can be a power user: ChatGPT thinks harder on complex tasks and asks relevant follow-up questions to keep work moving. Every employee can get expert-level results without switching models. Smarter with your company context: GPT-5 produces higher-quality responses by using your company’s files and connected apps like Google Drive, SharePoint, and more, while respecting existing permissions.

- [39] OpenAI GPT 5.5 Guide What Is New And Why It Matters - LYFE AI (lyfeai.com.au)

GPT 5.5’s advances in handling complex, multi-step tasks are especially important here. The model is built to better understand “messy” user requests, where the goal might not be fully specified at the start. It can plan sub-tasks, call external tools when needed, check its own work, and adapt to feedback mid-stream. In the context of a “super app,” that means a user could simply describe a high-level outcome – such as preparing a board pack for an Australian startup’s quarterly review – and the AI could coordinate data gathering, slide creation, and written analysis with less micromanagement…

- [40] Claude Opus 4.7 Benchmarks Explained - Vellum (vellum.ai)

Is Claude Opus 4.7 better than GPT-5.4? It depends on the task. Opus 4.7 leads GPT-5.4 on SWE-bench Verified (87.6% vs no published score), SWE-bench Pro (64.3% vs 57.7%), and MCP-Atlas tool use (77.3% vs 68.1%). GPT-5.4 Pro leads on BrowseComp (89.3% vs 79.3%) and Humanity's Last Exam with tools (58.7% vs 54.7%). For coding and agentic tool use, Opus 4.7 is the stronger choice. For research-heavy workflows, GPT-5.4 Pro has an edge. Is Claude Opus 4.7 better than Gemini 3.1 Pro? [...] Tool use is best-in-class. Opus 4.7 leads MCP-Atlas at 77.3%, ahead of Opus 4.6 (75.8%), GPT-5.4 (68.1%), and…

- [41] Claude Opus 4.7 vs GPT-5.5: AI Benchmark Comparison 2026 | BenchLM.ai (benchlm.ai)

Claude Opus 4.7 GPT-5.5 $5 / $25 $5 / $30 N/A N/A N/A N/A 1M 1M Pick GPT-5.5 if you want the stronger benchmark profile. Claude Opus 4.7 only becomes the better choice if coding is the priority or you want the cheaper token bill. GPT-5.5 has the cleaner provisional overall profile here, landing at 89 versus 86. It is a real lead, but still close enough that category-level strengths matter more than the headline number. GPT-5.5's sharpest advantage is in multimodal & grounded, where it averages 69 against 43.6. The single biggest benchmark swing on the page is Terminal-Bench 2.0, 69.4% to 82.7…

- [42] Everything You Need to Know About GPT-5.5 (techflowpost.com)

GPT-5.5 demonstrates clear gains in cybersecurity capability. On CyberGym, it scores 81.8%, up from GPT-5.4’s 79.0% and Claude Opus 4.7’s 73.1%. In internal Capture-the-Flag (CTF) challenges, GPT-5.5 scores 88.1%, versus GPT-5.4’s 83.7%. CyberGym bar chart and CTF challenge scatter plot OpenAI classifies GPT-5.5’s cybersecurity and bio/chemical capabilities as “High” under its Emergency Preparedness Framework—still below “Critical,” but a definitive upgrade from prior generations. It also acknowledges that newly deployed stricter risk classifiers “may initially feel inconvenient to some users…

- [43] GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding ... (lushbinary.com)

Claude Opus 4.7 introduced high-resolution vision support with a maximum image edge of 2,576 pixels (up from 1,568 in Opus 4.6) and total resolution up to 3.75 megapixels. This 3x improvement enables 1:1 pixel coordinate mapping for computer use, making it dramatically better at reading dense UIs, small text, and detailed diagrams. Anthropic reports visual acuity jumped from 54.5% to 98.5% on the XBOW benchmark. [...]

| Use Case | Best Model | Why |
| --- | --- | --- |
| Complex multi-file bug fixes | Opus 4.7 | 64.3% SWE-bench Pro, self-verification |
| Autonomous DevOps workflows | GPT-5.5 | 82.7% Terminal… |

- [44] OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark ... (interestingengineering.com)

AI and Robotics. GPT-5.5 crushes Claude Opus 4.7 in agentic coding with 82.7% terminal-bench score. GPT-5.5 introduces smarter task handling, reduced token usage, and broader adoption across enterprise workflows. By Aamir Khollam, Apr 23, 2026 02:32 PM EST.

- [45] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwrite (appwrite.io)

Knowledge work and reasoning

| Eval | GPT-5.5 | GPT-5.4 | Claude Opus 4.7 | Gemini 3.1 Pro |
| --- | --- | --- | --- | --- |
| Tau2-Bench Telecom (original prompts) | 98.0% | 92.8% | — | — |
| BrowseComp | 84.4% | 82.7% | 79.3% | 85.9% |
| FrontierMath Tier 1–3 | 51.7% | 47.6% | 43.8% | 36.9% |
| FrontierMath Tier 4 | 35.4% | 27.1% | 22.9% | 16.7% |
| GPQA Diamond | 93.6% | 92.8% | 94.2% | 94.3% |
| Humanity's Last Exam (no tools) | 41.4% | 39.8% | 46.9% | 44.4% |
| Humanity's Last Exam (with tools) | 52.2% | 52.1% | 54.7% | 51.4% |
| OfficeQA Pro | 54.1% | 53.2% | 43.6% | 18.1% |

GPT-5.5 clears all…

- [46] OpenAI's GPT-5.5: A Liquidity Flow Analysis Against Claude Opus 4.7 (ainvest.com)

Competitive Benchmarking and Performance Flow The early technical flow shows a clear competitive edge for Anthropic's Claude Opus 4.7. In stress tests, it leads GPT-5.4 on SWE-bench, the real-world engineering benchmark, with a 9.1% logic error rate versus 11.4%. This performance gap is critical for the professional workflow market OpenAI is targeting. GPT-5.5 is expected to counter with a larger context window and unified multimodal processing. The model is designed to support a context window of up to 256,000 tokens and handle text, images, audio, and video in a single interaction. This…

- [47] With GPT-5.5, OpenAI is Making a Comeback to The Top of The AI ... (trendingtopics.eu)

| Benchmark | GPT-5.5 | Claude Opus 4.7 | Gemini 3.1 Pro |
| --- | --- | --- | --- |
| Terminal-Bench 2.0 (Coding) | 82.7% | 69.4% | 68.5% |
| SWE-Bench Pro (Coding) | 58.6% | 64.3% | 54.2% |
| GDPval (Knowledge Work) | 84.9% | 80.3% | 67.3% |
| OSWorld-Verified (Computer Use) | 78.7% | 78.0% | – |
| BrowseComp (Tool Use) | 84.4% | 79.3% | 85.9% |
| MCP Atlas (Tool Use) | 75.3% | 79.1% | 78.2% |
| FrontierMath Tier 1–3 | 51.7% | 43.8% | 36.9% |
| FrontierMath Tier 4 | 35.4% | 22.9% | 16.7% |
| GPQA Diamond | 93.6% | 94.2% | 94.3% |
| Humanity’s Last Exam (with Tools) | 52.2% | 54.7% | 51.4% |
| CyberGym | 81… |

- [48] GPT-5.5 just raised the bar OpenAI introduced GPT-5.5 as its latest ... (facebook.com)

Chatgptricks · 4h · GPT-5.5 just raised the bar. OpenAI introduced GPT-5.5 as its latest model focused on stronger performance without slowing down. It handles coding, research, data analysis, and tool use in a single flow. The model matches previous speed while improving results across most evaluations. It also uses fewer tokens to complete the same tasks, which makes it more efficient. Benchmarks shown in the deck place it ahead of Claude Opus 4.7 and Gemini 3.1 Pro in…

- [49] I Tested GPT 5.5 vs Opus 4.7: What You Need to Know | Nate Herkelman (linkedin.com)

I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just dropped GPT 5.5. The benchmarks look strong against Opus 4.7. But benchmarks only tell part of the story. So I ran 4 head-to-head experiments. Codex on GPT 5.5. Claude Code on Opus 4.7. Same prompts. No iteration. No back and forth. Just one shot each. 1️⃣ Personal brand site 2️⃣ Solar system simulation 3️⃣ 3D space shooter 4️⃣ Living ecosystem simulation For every experiment I tracked: → Runtime → Input tokens → Output tokens → Total cost The headline numbers across all four: → GPT 5.5 total runtime: 20 min 49 sec → Opus 4.7 tot…

- [50] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safety (mashable.com)

Claude Mythos scored 56.8 percent on HLE; Claude Opus 4.7 scored 46.9 percent; Gemini 3.1 Pro scored 44.4 percent; GPT-5-4 Pro scored 42.7 percent; Claude Opus 4.6 scored 40.0 percent. With tools, GPT-5-4-Pro scored 58.7 percent compared to Opus 4.7’s 54.7 percent. Mythos beat them both with 64.7 percent. Mashable has not independently verified these benchmark results. Full results are available in the Opus 4.7 model card. Overall, Anthropic scored Opus 4.7 above other leading models in some benchmarks, though Gemini 3.1…

- [51] Claude Opus 4.7 Benchmark Breakdown: Vision, Coding, ... (mindstudio.ai)

Claude Opus 4.7 posted 82.4% on SWE-bench Verified, up roughly 11 points from Opus 4.6 — the most meaningful coding benchmark available. Vision improvements were the largest percentage gains: MathVista jumped 9.5 points, enabling reliable visual math reasoning and structured chart interpretation. FinanceBench performance of 82.7% makes Opus 4.7 a strong choice for financial document analysis, handling most standard extraction and calculation tasks accurately. Opus 4.7 leads GPT-5.4 and Gemini 3.1 Pro across all five core benchmarks in this breakdown, with the most meaningful gap on SWE-bench.…

- [52] Claude Opus 4.7 Benchmark Full Analysis: Empirical Data Leading ... (help.apiyi.com)

Comparison Breakdown (Claude Opus 4.7 vs. Others) Claude Opus 4.7 vs. GPT-5.4: GPT-5.4 holds the lead in web searching via BrowseComp (89.3% vs 79.3%). However, it falls significantly behind Opus 4.7 in SWE-bench Pro (57.7%) and tool invocation (MCP-Atlas). In contrast, Claude Opus 4.7 shines in programming agents, code generation, and multi-step task execution, making it a better fit for developer workflows. Claude Opus 4.7 vs. Gemini 3.1 Pro: Gemini 3.1 Pro remains ahead in long-context understanding and multimodal video scenarios. However, the performance gap is clear in SWE-bench Veri…

- [53] Claude Opus 4.7 Is Here — Head-to-Head Benchmark Comparison with GPT 5.4, Gemini 3.1 Pro, and Mythos | Enersys Insights (enersys.co.th)

Web Research & Browsing BrowseComp: 89.3% vs 79.3% — a full 10-point gap. For AI-powered web research or competitive intelligence, GPT 5.4 is still the better tool. ### Terminal Tasks Terminal-Bench 2.0: 75.1% vs 69.4% — though GPT 5.4's number uses a self-reported harness, it's still a clear win. ### Hard Reasoning HLE (with tools): 58.7% vs 54.7% — on the hardest reasoning problems, GPT 5.4 holds a 4-point advantage. One interesting observation: BrowseComp is the only benchmark where Opus 4.7 scored lower than Opus 4.6 (79.3% vs 83.7%). This suggests Anthropic may have made a deliberate…

- [54] Claude Opus 4.7 vs GPT-5.5 Comparison (llm-stats.com)

Model Comparison: Claude Opus 4.7 vs GPT-5.5. Claude Opus 4.7 has a slight edge in benchmark performance. Claude Opus 4.7 is 1.1x cheaper per token. Performance Benchmarks (comparative analysis across standard metrics): Claude Opus 4.7 outperforms in 5 benchmarks (Finance Agent, GPQA, Humanity's Last Exam, MCP Atlas, SWE-Bench Pro), while GPT-5.5 is better at 4 benchmarks (BrowseComp, CyberGym, OSWorld-Verified, Terminal-Bench 2.0). Arena Performance: Human prefe…

- [55] Claude Opus 4.7 vs. GPT-5.4: Which Frontier Model Should You Use? (datacamp.com)

| Benchmark | Claude Opus 4.7 | GPT-5.4 | Notes |
| --- | --- | --- | --- |
| OSWorld-Verified | 78.0% | 75.0% | Desktop computer use; both above human expert baseline of 72.4% |
| BrowseComp | 79.3% | 89.3% (Pro) | Web research with multi-hop reasoning; GPT-5.4 leads |
| MCP-Atlas | 77.3% | 68.1% | Scaled tool use across many connected services |
| WebArena-Verified | Not published | 67.3% | Autonomous web navigation tasks |
| Toolathlon | Not published | 54.6% | Multi-step tool orchestration; up from 46.3% on GPT-5.2 |
| Finance Agent v1.1 | 64.4% | 61.5% (Pro) | Long-context financial research agent |
| GDPval-AA | 1753 Elo | 1674 Elo | Professional knowledge work; Opus 4.7 leads by 79 Elo points |
| BigLaw Bench | 90… |

- [56] Claude Opus 4.7: Benchmarks, Capabilities & What Changed from Opus 4.6 (chatlyai.app)

Best-in-class Tool Use Opus 4.7 leads MCP-Atlas at 77.3%, ahead of GPT-5.4 at 68.1% and Gemini 3.1 Pro at 73.9%. For orchestration agents that route across multiple tools in a single workflow, this is the benchmark that best reflects real production reliability. Fewer tool errors, better loop resistance, and graceful error recovery reduce the maintenance overhead that makes long-running agents expensive to operate. ### Instruction Precision For enterprise teams running standardized workflows, a model that follows instructions literally and does not silently generalize from one item to ano…

- [57] Claude Opus 4.7: Full Review, Benchmarks & Features (2026) (buildfastwithai.com)

On web research: Gemini and GPT lead. BrowseComp drops Opus 4.7 to 79.3%, trailing GPT-5.4 Pro at 89.3% and Gemini 3.1 Pro at 85.9%. That is a 10-point gap. If your agent architecture involves real-time web retrieval, reading multiple pages, and synthesizing across sources, you should be benchmarking Gemini 3.1 Pro or GPT-5.4 on that specific task before committing to Opus 4.7 for those workloads. On enterprise knowledge work: Claude dominates. GDPVal-AA Elo of 1,753 versus GPT-5.4's 1,674 and Gemini 3.1 Pro's 1,314. BigLaw Bench at 90.9% for legal document analysis. Finance Agent v1.1 at 64.…

- [58] GPT-5.5 vs Claude Opus 4.7: Benchmarks & Pricing (digitalapplied.com)

Browse and Retrieve GPT-5.5 wins BrowseComp at 84.4% vs 79.3% (Pro variant pushes to 90.1%). For research-grade web retrieval and multi-source synthesis, GPT-5.5 has the clearer lead. MCP Tool Orchestration Opus 4.7 wins MCP-Atlas at 79.1% vs 75.3%. Anthropic introduced MCP and has the deeper integration story. Material lead on tool-heavy agent stacks. [...] Opus 4.7 keeps SWE-Bench Pro and MCP-Atlas: Opus 4.7 scores 64.3% on SWE-Bench Pro (vs 58.6% for GPT-5.5) and 79.1% on MCP-Atlas (vs 75.3%). Anthropic itself flags memorization concerns on a subset of SWE-bench problems — but the lead on…