Research and show me a benchmark comparison of GPT-5.5 and Claude Opus 4.7.


重點整理

- **整體基準測試結論:Insufficient evidence.** 可用資料中沒有官方 OpenAI 與 Anthropic 對 GPT-5.5、Claude Opus 4.7 的同場完整 benchmark 對照,也沒有足夠的逐項分數可驗證結論。

- **第三方 LLM Stats 的初步比較**稱 Claude Opus 4.7 在 benchmark performance 上有「slight edge」,並稱 Claude Opus 4.7 每 token 成本約便宜 1.1 倍。

- **BenchLM 的比較頁面更保守**,明確表示 Claude Opus 4.7 與 GPT-5.5 的 benchmark data「coming soon」,且目前只有 partial data,因此不支持強結論。

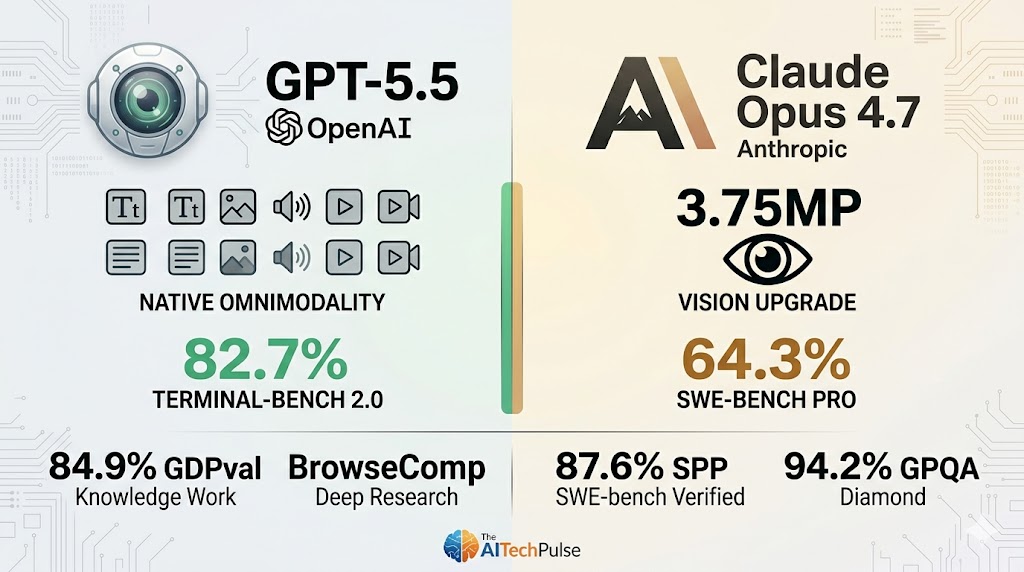

- **價格與規格方面**,OpenAI API 文件列出 GPT-5.5 的 Model ID 為 `gpt-5.5`,輸入價格為 $5 / MTok,輸出價格為 $30 / MTok,context window 為 1M,最大輸出為 128K tokens,並標示 latency 為 Fast。


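Research answer

The available evidence is not sufficient for an authoritative, complete benchmark verdict on GPT-5.5 versus Claude Opus 4.7. Limited third-party data suggests Claude Opus 4.7 may hold a slight overall edge, but BenchLM also notes that full benchmark data for both models is not yet available, so this should be read as a preliminary, insufficiently verified comparison rather than a final result.[5][6]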

Key findings

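- Overall benchmark conclusion: insufficient evidence. The available material contains no official, same-conditions, full benchmark comparison of GPT-5.5 and Claude Opus 4.7 from OpenAI or Anthropic, and not enough per-benchmark scores to verify a conclusion.[1][2][6]

- LLM Stats' preliminary third-party comparison says Claude Opus 4.7 has a "slight edge" in benchmark performance and is about 1.1x cheaper per token.[5]

- BenchLM's comparison page is more conservative: it states that benchmark data for Claude Opus 4.7 and GPT-5.5 is "coming soon" and that only partial data exists so far, so it does not support a strong conclusion.[6]

- On price and specs, the OpenAI API documentation lists GPT-5.5 with Model ID `gpt-5.5`, an input price of $5 / MTok, an output price of $30 / MTok, a 1M context window, a maximum output of 128K tokens, and latency marked as Fast.[2]

- BenchLM lists prices of about $5 / $25 for Claude Opus 4.7 and about $5 / $30 for GPT-5.5 (input / output per 1M tokens), a 1M context window for both, and N/A for both speed and latency / TTFT.[6]

- OpenAI officially positions GPT-5.5 as a new class of model for "coding and professional work", with support for tools including functions, web search, file search, and computer use.[2]

- For tool-heavy or long-running workflows, the OpenAI documentation recommends benchmarking against other models on accuracy, token consumption, and end-to-end latency rather than relying on static scores alone.[1]

- There is a negative signal for part of Claude Opus 4.7's performance: an AI developer daily digest reports that Claude Opus 4.7 (high reasoning) scored below Opus 4.6 (high reasoning) on the Thematic Generalization Benchmark, falling from 80.6 to 72.8. This is not a direct GPT-5.5 versus Claude Opus 4.7 comparison.[4]

- A Reddit post claims GPT-5.5 beats Claude Opus 4.7 on hallucination rate and AA IQ, but this is a community source whose evidential weight is clearly lower than official documentation or a reproducible benchmark, so it should not be the main basis for any conclusion.[38]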

Comparison table

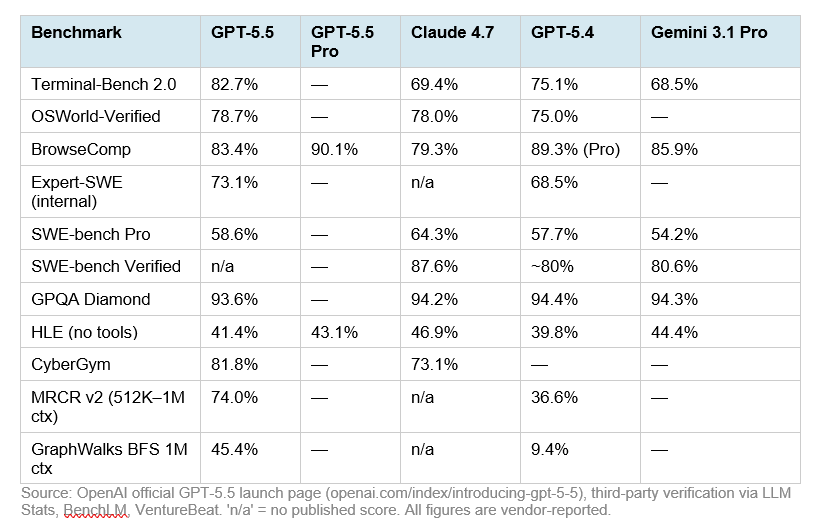

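| Aspect | GPT-5.5 | Claude Opus 4.7 | Supported conclusion |
|---|---|---|---|
| Overall benchmark | Insufficient official comparison data | Third party reports a slight lead | LLM Stats says Claude Opus 4.7 has a slight benchmark edge, but BenchLM says complete data is not yet available. |
| Input price | $5 / MTok | $5 / MTok | The two input prices are identical in the BenchLM excerpt. |
| Output price | $30 / MTok | $25 / MTok | The BenchLM excerpt shows cheaper output for Claude Opus 4.7; LLM Stats also says Claude Opus 4.7 is about 1.1x cheaper per token. |
| Context window | 1M | 1M | The BenchLM excerpt shows a 1M context window for both; the OpenAI documentation also lists 1M for GPT-5.5. |
| Latency / speed | Marked Fast by OpenAI | N/A | The OpenAI models page marks GPT-5.5 latency as Fast; BenchLM lists latency / TTFT as N/A for both. |
| Tool support | Functions, Web search, File search, Computer use | Insufficient data | GPT-5.5's tool support can be confirmed; the available evidence gives no equivalent official tool spec for Claude Opus 4.7. |
| Reliability conclusion | Requires your own testing | Requires your own testing | The OpenAI documentation recommends real benchmarking on accuracy, token consumption, and end-to-end latency. |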
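
The price rows above can be turned into a rough per-request cost estimate. The following is a minimal Python sketch, assuming the list prices from the BenchLM excerpt; the function names `request_cost` and `cost_ratio`, the dictionary key for Claude Opus 4.7, and the example token counts are all hypothetical and chosen only for illustration. It suggests that the cost ratio between the two models depends on the input/output token mix, so a single "1.1x cheaper" figure is best read as an approximation. How LLM Stats derived its figure is not stated in the available excerpts.

```python
# Prices in USD per million tokens (MTok), as listed in the BenchLM excerpt.
PRICES = {
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    # Illustrative key only, not an official model ID.
    "claude-opus-4.7": {"input": 5.00, "output": 25.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at list price."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def cost_ratio(input_tokens: int, output_tokens: int) -> float:
    """GPT-5.5 cost divided by Claude Opus 4.7 cost for identical token counts."""
    return request_cost("gpt-5.5", input_tokens, output_tokens) / request_cost(
        "claude-opus-4.7", input_tokens, output_tokens
    )


if __name__ == "__main__":
    # Hypothetical workloads as (input tokens, output tokens).
    for mix in [(10_000, 1_000), (3_000, 1_000), (1_000, 1_000), (0, 1_000)]:
        print(mix, round(cost_ratio(*mix), 3))
```

The sketch assumes both models consume the same number of tokens for the same task, which real workloads are unlikely to satisfy; measured token counts should replace the placeholders before drawing any cost conclusion.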

Evidence notes

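- The strongest evidence for GPT-5.5's specs comes from the OpenAI API documentation, which covers the model ID, pricing, context window, maximum output, latency, and tool support.[2]

- The most direct two-model comparisons come from LLM Stats and BenchLM, but both are third-party sources. BenchLM explicitly says its benchmark data is incomplete, which weakens rather than strengthens confidence that a settled ranking exists.[5][6]

- The reported Thematic Generalization Benchmark regression supports only the limited observation that Claude Opus 4.7 may have regressed relative to Opus 4.6 on one test; it does not imply that GPT-5.5 is necessarily stronger.[4]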
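
To put the reported regression in proportion, the two scores can be converted into an absolute and a relative change. This is a small Python sketch using only the two figures quoted in the excerpt (80.6 and 72.8); the helper name `score_change` is hypothetical.

```python
def score_change(before: float, after: float) -> tuple[float, float]:
    """Return (absolute change in points, relative change in percent)."""
    absolute = after - before
    relative = absolute / before * 100
    # Round to one decimal place, matching the precision of the reported scores.
    return round(absolute, 1), round(relative, 1)


if __name__ == "__main__":
    # Scores reported for Opus 4.6 (high) and Opus 4.7 (high) in the excerpt.
    print(score_change(80.6, 72.8))
```

The calculation describes only the size of the drop between the two Claude versions on this one benchmark; it carries no information about GPT-5.5.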

Limitations / uncertainty

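- Insufficient evidence. No official same-conditions benchmark table, full benchmark-suite scores, test configurations, sample sizes, temperature settings, or reasoning-level controls are available, so it is not possible to determine rigorously whether GPT-5.5 or Claude Opus 4.7 is stronger overall.[1][2][6]

- The third-party sources differ in tone: LLM Stats judges Claude Opus 4.7 to be slightly ahead, while BenchLM says the data is incomplete. The more conservative reading is that Claude may be slightly better, but the evidence is insufficient.[5][6]

- The Reddit source can serve as a community signal, but it is not suitable as the main basis for a benchmark comparison.[38]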

Summary

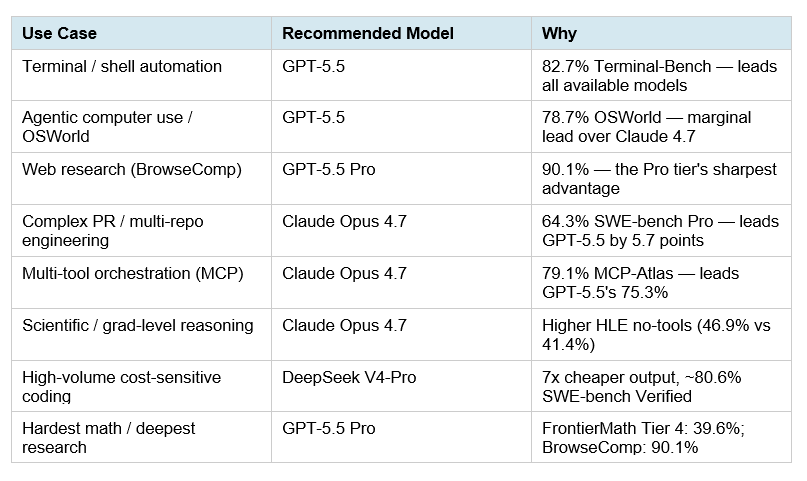

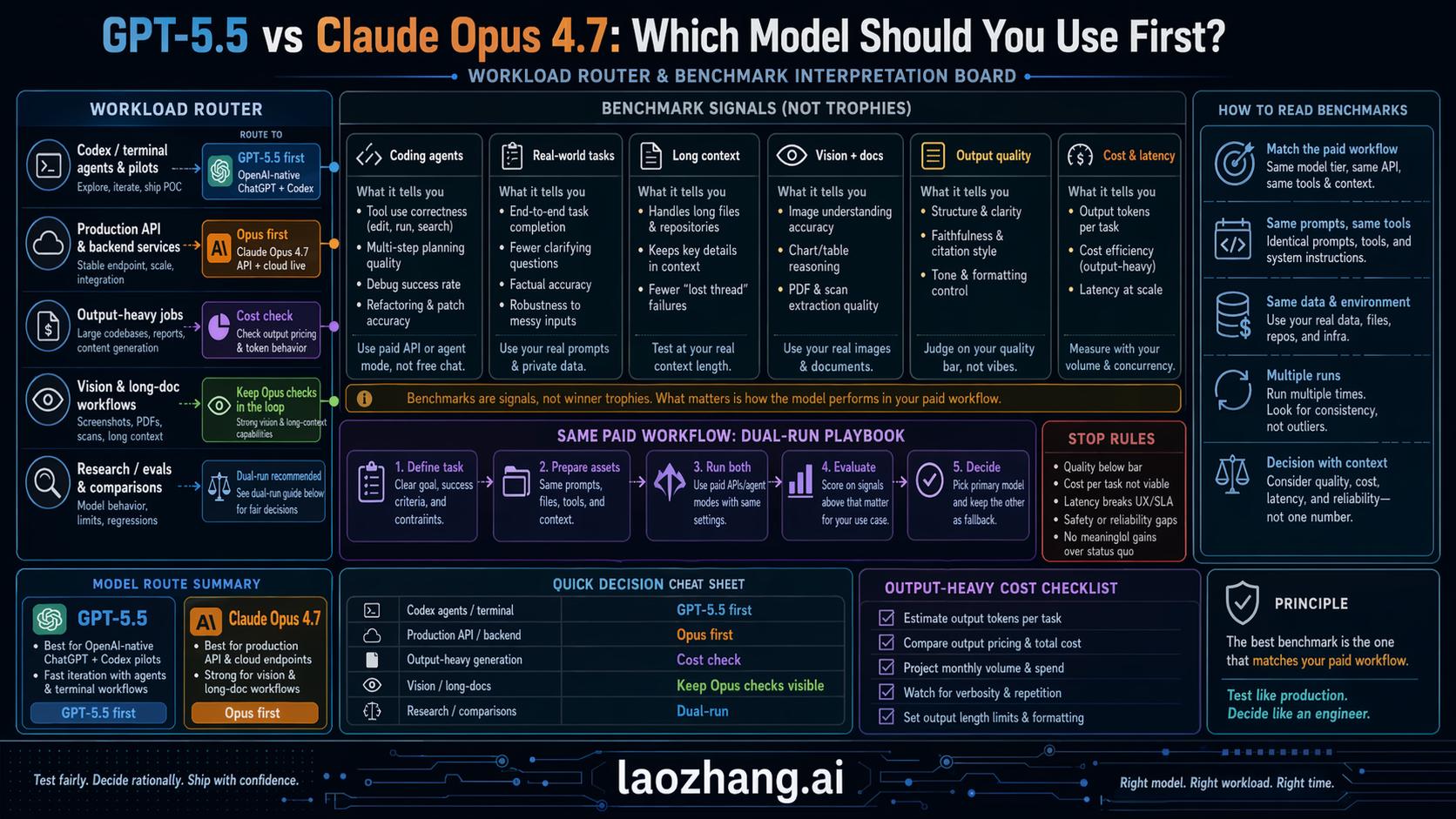

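On the available evidence, the most reasonable conclusion is that Claude Opus 4.7 may slightly lead GPT-5.5 in some third-party benchmark aggregates and may have a lower output-token price, while GPT-5.5 has an officially confirmed 1M context window, 128K maximum output, a Fast latency label, and multi-tool support.[2][5][6]

For procurement or model selection, these excerpts are not enough on their own. Test both models on your actual tasks with the same questions, the same prompts, and the same reasoning settings, measuring accuracy, cost, token consumption, and end-to-end latency.[1]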
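
The same-task comparison recommended above could be organised roughly as follows. This is a minimal Python sketch, not a definitive harness: the `ModelFn` signature, the `Result` fields, the exact-match scoring rule, and `fake_model` are all assumptions made for illustration, and no real OpenAI or Anthropic API call is shown. It records the metrics named in the text (accuracy, token consumption, end-to-end latency) for one model over a shared task list.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable

# A model call takes a prompt and returns (answer text, total tokens used).
# This signature is a hypothetical stand-in for a real API client.
ModelFn = Callable[[str], tuple[str, int]]


@dataclass
class Result:
    accuracy: float        # fraction of exact-match answers
    mean_tokens: float     # average tokens consumed per task
    mean_latency_s: float  # average wall-clock seconds per task


def evaluate(model: ModelFn, tasks: list[tuple[str, str]]) -> Result:
    """Run every (prompt, expected answer) pair through one model."""
    correct = 0
    tokens: list[int] = []
    latencies: list[float] = []
    for prompt, expected in tasks:
        start = time.perf_counter()
        answer, used = model(prompt)
        latencies.append(time.perf_counter() - start)
        tokens.append(used)
        correct += answer.strip() == expected  # bool counts as 0 or 1
    return Result(correct / len(tasks), mean(tokens), mean(latencies))


def fake_model(prompt: str) -> tuple[str, int]:
    """Placeholder model so the sketch runs offline; replace with real calls."""
    answer = "4" if prompt == "2 + 2 = ?" else "unknown"
    return answer, len(prompt.split()) + 1


if __name__ == "__main__":
    tasks = [("2 + 2 = ?", "4"), ("Capital of France?", "Paris")]
    # Use the same tasks, prompts, and reasoning settings for every model compared.
    for name, fn in {"model-a": fake_model, "model-b": fake_model}.items():
        print(name, evaluate(fn, tasks))
```

Exact-match scoring is the simplest possible choice and will undercount correct free-form answers; a task-specific grader would normally replace it, and cost can be derived afterwards from the recorded token counts and each provider's list prices.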

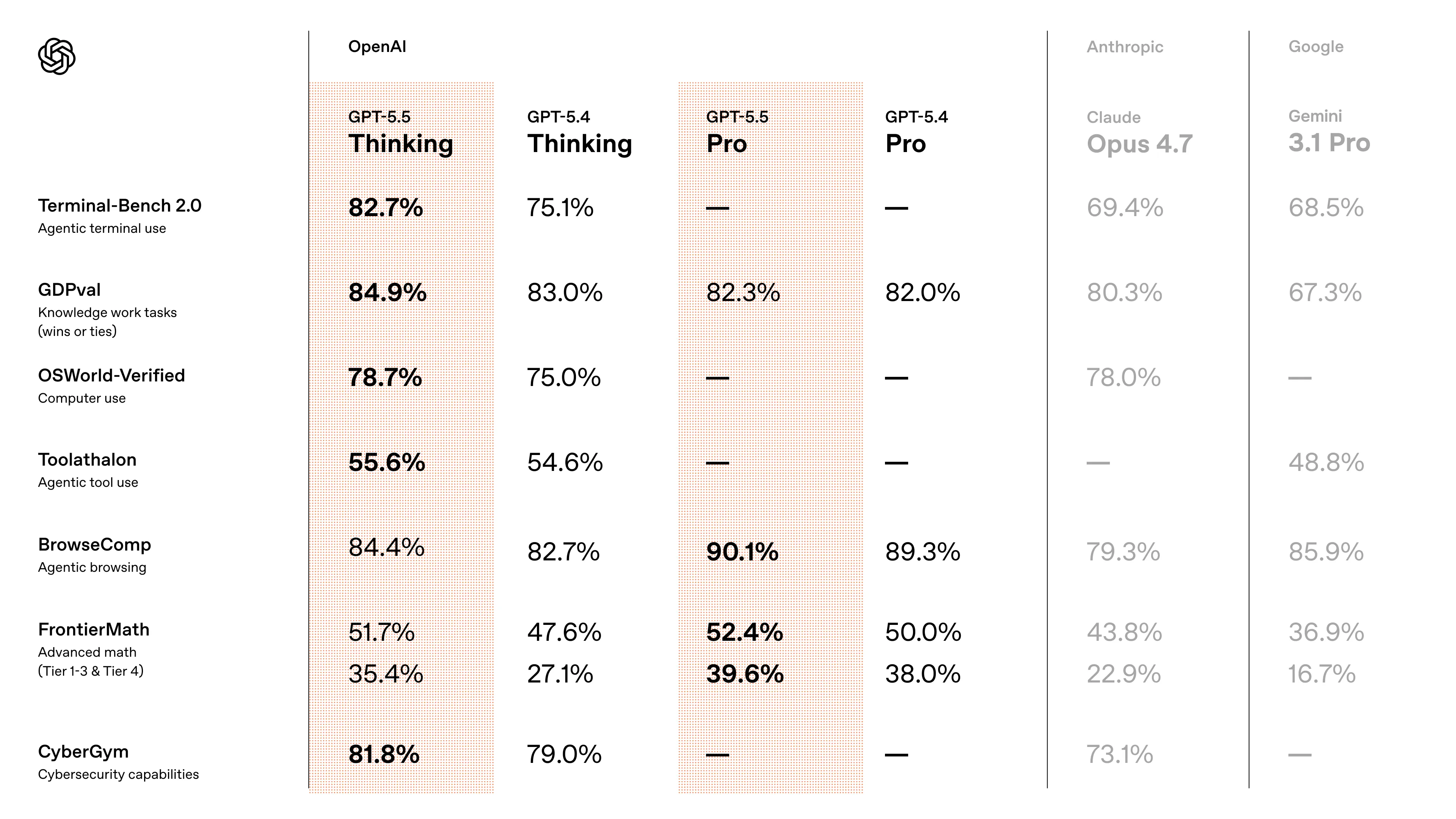




Sources

- [1] Claude Opus 4.7 vs GPT-5.5: AI Benchmark Comparison 2026 (benchlm.ai)

Claude Opus 4.7 vs GPT-5.5: $5 / $25 vs $5 / $30. Speed: N/A vs N/A. Latency (TTFT): N/A vs N/A. Context Window: 1M vs 1M. Quick Verdict: Benchmark data for Claude Opus 4.7 and GPT-5.5 is coming soon on BenchLM. BenchLM has partial data for these models, but not enough overlapping benchmark coverage to produce a fair score-level comparison yet. GPT-5.5 is priced at $5.00 input / $30.00 output per 1M tokens, versus $5.00 input / $25.00 output per 1M tokens for Claude Opus 4.7. ## Benchmark Deep Dive Agentic 7 benchmar…

- [2] GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding ... (lushbinary.com)

| Benchmark | GPT-5.5 | Opus 4.7 | Gemini 3.1 Pro | --- --- | | SWE-bench Pro | 58.6% | 64.3% | 54.2% | | SWE-bench Verified | ~85% | 87.6% | ~80% | | Terminal-Bench 2.0 | 82.7% | ~72% | ~68% | | GDPval (Knowledge Work) | 84.9% | ~78% | ~75% | | OSWorld-Verified (Computer Use) | 78.7% | ~65% | ~60% | | GPQA Diamond | ~93% | 94.2% | ~91% | | CursorBench | ~65% | 70% | ~58% | | Tau2-bench Telecom | 98.0% | ~90% | ~85% | Key Takeaway Opus 4.7 wins the coding benchmarks (SWE-bench Pro, SWE-bench Verified, CursorBench, GPQA Diamond). GPT-5.5 wins the agentic and knowledge-work benchmarks (Terminal…

- [3] GPT-5.5 vs Claude Opus 4.7: Pricing, Speed, Benchmarks (llm-stats.com)

The Verdict On the 10 benchmarks both providers report, Opus 4.7 leads on 6 and GPT-5.5 leads on 4. The leads cluster by category, not by overall quality: Opus 4.7 is ahead on the reasoning-heavy and review-grade tests (GPQA Diamond, HLE with and without tools, SWE-Bench Pro, MCP Atlas, FinanceAgent v1.1). GPT-5.5 is ahead on the long-running tool-use tests (Terminal-Bench 2.0, BrowseComp, OSWorld-Verified, CyberGym). Margins are mostly between 2 and 13 percentage points, and every score is self-reported at each provider's high reasoning tier — comparable in shape, not in methodology. [...…

- [4] GPT-5.5 vs Claude Opus 4.7: quién gana en código, terminal y agentes (webreactiva.com)

Los benchmarks principales frente a Opus 4.7 ¶ GPT-5.5 lidera con claridad en Terminal-Bench 2.0 (82.7% vs 69.4% de Opus 4.7), OSWorld-Verified (78.7% vs 78.0%) y BrowseComp (84.4% vs 79.3%), pero Opus 4.7 mantiene ventaja en SWE-Bench Pro (64.3% vs 58.6%), MCP Atlas (79.1% vs 75.3%) y razonamiento sin herramientas. Los datos cruzan los anuncios oficiales de OpenAI del 23 de abril con los de Anthropic del 16 de abril. Estos son los números que importan, benchmark por benchmark: [...] Fuente: anuncios oficiales de OpenAI y Anthropic, cruzados con el análisis publicado por Decrypt el 23 de a…

- [5] OpenAI’s GPT-5.5 vs Claude Opus 4.7: Which is better? | Mashable (mashable.com)

GPT-5.5 and Opus 4.7: Leaderboards GPT-5.5 isn't yet ranked on all AI leaderboards yet, but it should be very competitive with Claude Opus 4.7. On the leaderboards of verified benchmark tests such as Arc Prize"), GPT-5.5 beats Opus 4.7 (more on this below). On the popular Arena leaderboard"), which is based on user testing, Claude Opus 4.7 Thinking has the top overall spot. Interestingly, Opus 4.7 is currently ranked below Opus 4.6, though that will likely change in time. Currently, new Anthropic models hold the top four overall spots. What's more, Anthropic's unreleased Claude Mythos isn'…

- [6] Claude Opus 4.7 Benchmarks Explained (vellum.ai)

Coding is the clear headline. SWE-bench Verified jumps from 80.8% to 87.6%, a nearly 7-point gain that puts Opus 4.7 ahead of Gemini 3.1 Pro (80.6%). On SWE-bench Pro, the harder multi-language variant, Opus 4.7 goes from 53.4% to 64.3%, leapfrogging both GPT-5.4 (57.7%) and Gemini (54.2%). Computer use takes a meaningful step. OSWorld-Verified climbs from 72.7% to 78.0%, ahead of GPT-5.4 (75.0%) and within 1.6 points of Mythos Preview (79.6%). Pair that with the 3x vision resolution upgrade and you have a model that's genuinely more capable at real UI interaction. Tool use is best-in-class.…

- [7] GPT 5.5 vs Opus 4.7: Which AI Model Is Best for Product Design? (uxplanet.org)

When it comes to comparing GPT-5.5 with Opus 4.7, I think that despite all the upgrades that OpenAI did for GPT-5.5, Opus 4.7 still works better

- [8] GPT 5.5 beats Claude Opus 4.7 : r/ArtificialInteligence - Reddit (reddit.com)

Lower hallucination and a significant lead in AA IQ.

- [9] GPT 5.5 is out. Here are the benchmarks. | Facebook (facebook.com)

1d 4 Image 3 View all 3 replies []( Robert Eaton Opus 4.7 has been terrible. Getting everything wrong, making same mistake over and over. I went back to 4.6 and everything was fine 1d 4 Image 4Image 5 View all 2 replies []( Patrick Healy Codex $100a month package while building then go to $20 a month package & you run a local model hybrid is the best set up for a business. Personal use just go full local 1d 3 Image 6 View 1 reply View more comments 3 of 20 See more on Facebook See more on Facebook Email or phone number Password Log In Forgot password? or Create new account [...] # OpenClaw Co…

- [10] GPT-5.5 Is Here — I Tested It (And It Beats Claude Opus 4.7) (medium.com)

OpenAI calls GPT 5.5 “a new class of intelligence for real work.”

- [11] I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just dropped GPT 5.5. The benchmarks look strong against Opus 4.7. But benchmarks only tell part of the story. So I ran 4 head-to-head… | Nate Herkelman | 39 comments (linkedin.com)

in Codex, it's in chat TBT. I'm sure by the time you're watching this video, the API will probably be out. But I saw the announcement and I just jumped on it and wanted to play around. So a quick quote from the president of Opening Eye. It's faster, sharper, and it uses fewer tokens compared to something like GT 5.4. Now let's see where it pulls ahead a little bit. So the terminal bench 2.0, it scored a 82.7, whereas GT 5.4 scored a 75. 21 and Opus 4.7 scored a 69.4 so it is beating Opus here on the terminal bench and it's also beating the previous model. They also ran an expert sweep bench i…

- [12] We Tested GPT-5.5 for 3 Weeks. It's a Beast. - YouTube (youtube.com)

GPT-5.5 Just Beat Claude Opus 4.7 at Engineering Image 7 Every Every 37.1K subscribers Subscribe Subscribed 528 Share Save Download Download 16,312 views 7 hours ago 16,312 views • Apr 23, 2026 OpenAI just dropped GPT-5.5—and after three weeks of hands-on testing at Every, the headline is its coding ability. On Every's Senior Engineer Benchmark, GPT-5.5 scored 62.5 out of 100. That’s about a 30-point leap over Claude Opus 4.7.…...more ...more How this was made Auto-dubbed Audio tracks for some languages were automatically generated. Learn more ## Chapters View all Image 8 #### It's Model Re…

- [13] AI 开发者日报 2026-04-20 (ainews.liduos.com)

Claude Opus 4.7 (high) unexpectedly performs significantly worse than Opus 4.6 (high) on the Thematic Generalization Benchmark: 80.6 → 72.8. (Activity: 610): The image is a bar chart illustrating the performance of various models on the Thematic Generalization Benchmark, highlighting that Claude Opus 4.7 (high reasoning) scored 72.8, which is notably lower than Claude Opus 4.6 (high reasoning) at 80.6. This benchmark evaluates a model’s ability to infer latent themes from examples and distinguish them from close distractors using anti-examples. The performance drop in Opus 4.7 is attribut…

- [14] Claude Opus 4.7 vs GPT-5.5 Comparison - LLM Stats (llm-stats.com)

LLM Stats Logo Make AI phone calls with one API call Model Comparison # Claude Opus 4.7 vs GPT-5.5 Claude Opus 4.7 has a slight edge in benchmark performance. Claude Opus 4.7 is 1.1x cheaper per token. Anthropic OpenAI ## Performance Benchmarks Comparative analysis across standard metrics Claude Opus 4.7 outperforms in 5 benchmarks (Finance Agent, GPQA, Humanity's Last Exam, MCP Atlas, SWE-Bench Pro), while GPT-5.5 is better at 4 benchmarks (BrowseComp, CyberGym, OSWorld-Verified, Terminal-Bench 2.0). Claude Opus 4.7 has a slight edge in benchmark performance. ## Arena Performance Human prefe…

- [15] GPT-5.5 (xhigh) vs Claude Opus 4.7 (Adaptive Reasoning, Max Effort): Model Comparison (artificialanalysis.ai)

| Metric | OpenAI logoGPT-5.5 (xhigh) | Anthropic logoClaude Opus 4.7 (Adaptive Reasoning, Max Effort) | Analysis | --- --- | | Creator | OpenAI | Anthropic | | | Context Window | 922k tokens (~1383 A4 pages of size 12 Arial font) | 1000k tokens (~1500 A4 pages of size 12 Arial font) | GPT-5.5 (xhigh) is smaller than Claude Opus 4.7 (Adaptive Reasoning, Max Effort) | | Release Date | April, 2026 | April, 2026 | GPT-5.5 (xhigh) has a more recent release date than Claude Opus 4.7 (Adaptive Reasoning, Max Effort) | | Image Input Support | Yes | Yes | Both GPT-5.5 (xhigh) and Claude Opus 4.7 (Ada…

- [16] GPT-5.5 vs Claude Opus 4.7: Which Model Wins for Agentic Work? (beam.ai)

GPT-5.5 is the better pick for long-context coding tasks, complex mathematical reasoning, cybersecurity work, and workflows that require processing large documents in a single pass. Claude Opus 4.7 is the better pick for real-world code resolution, tool-heavy agent workflows, financial analysis, and tasks where instruction-following consistency matters more than raw intelligence scores. The most capable production AI agent deployments already route different tasks to different models based on the specific requirements. A coding agent might use GPT-5.5 for architecture-level reasoning and Clau…

- [17] GPT-5.5来了!全榜第一碾压Opus 4.7,OpenAI今夜雪耻 - 知乎 (zhuanlan.zhihu.com)

OpenAI内部的Expert-SWE评测,专门测那些人类预估中位完成时间20小时的长周期编程任务,GPT-5.5拿到73.1%,同样高于GPT-5.4的68.5%。 在业界公认最能反映真实GitHub问题解决能力的评测SWE-Bench Pro中,GPT-5.5得分58.6%,略逊色于Claude Opus 4.7(64.3%)。 不过,OpenAI在这个数据旁边标了一个星号,写着「Anthropic报告称在部分问题子集上存在过拟合(记忆)迹象」。 换句话说就是,Opus 4.7虽然考试成绩好,但我怀疑你背过答案。 Codex研究员直言:SWE-Bench早已不能衡量顶尖编程能力了 最关键是,在这三项的评估中,GPT-5.5使用了更少的token,但仍全面赶超GPT-5.4。 这一能力在Codex中,体现得更为明显。 它可以完成「端到端」的编程任务,从实现、重构到调试、测试和验证等流程。 举个栗子,让GPT-5.5做一个阿尔忒弥斯II太空任务可视化应用。 首先把一张任务的截图扔给GPT-5.5,然后要求用WebGL和Vite实现一个可交互的3D轨道模拟器,轨迹数据必须来自NASA/JPL Horizons的真实矢量数据,并且还要有逼真的轨道力学。 只见,GPT-5.5从零搭完,鼠标拖拽能转,猎户座飞船、月球、太阳的相对位置都对得上。 早期测试的大佬直言, GPT‑5.5拥有更强…

- [18] Introducing Claude Opus 4.7 (anthropic.com)

Image 7: logo > Based on our internal research-agent benchmark, Claude Opus 4.7 has the strongest efficiency baseline we’ve seen for multi-step work. It tied for the top overall score across our six modules at 0.715 and delivered the most consistent long-context performance of any model we tested. On General Finance—our largest module—it improved meaningfully on Opus 4.6, scoring 0.813 versus 0.767, while also showing the best disclosure and data discipline in the group. And on deductive logic, an area where Opus 4.6 struggled, Opus 4.7 is solid. > > Michal Mucha > > Lead AI Engineer, Applied…

- [19] GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance Compared (mindstudio.ai)

GPT-5.5 uses 72% fewer output tokens than Claude Opus 4.7 on the same coding tasks — a structural difference, not a minor gap. On raw benchmark quality, both models are competitive. Neither dominates on every task type. For high-volume agentic coding pipelines, GPT-5.5 is significantly cheaper and faster to run. For complex, reasoning-heavy tasks across large codebases, Opus 4.7’s thoroughness can justify the cost. The best production setups often use both models via routing — GPT-5.5 for standard tasks, Opus 4.7 for the hard ones. Token efficiency compounds in agentic loops: every step adds…

- [20] How OpenAI's recently released GPT-5.5 stacks up with Anthropic's ... (rdworldonline.com)

Research & Development World # How OpenAI’s recently released GPT-5.5 stacks up with Anthropic’s gated Claude Mythos By Brian Buntz | [Adobe Stock] TL;DR: Claude Mythos Preview appears to lead cleanly on six of nine overlapping rows, especially SWE-bench Pro and Humanity’s Last Exam (HLE), but benchmark comparisons between Mythos and GPT-5.5 are imprecise. The released Opus 4.7 and GPT-5.5 are neck and neck. Opus 4.7 leads on SWE-bench Pro (64.3 vs 58.6), HLE no tools (46.9 vs 41.4) and HLE with tools (54.7 vs 52.2). [...] Opus 4.7 leads GPT-5.5 base on: SWE-bench Pro, HLE with tools. Ties on…

- [21] BridgeMind on X: "GPT 5.5 JUST DROPPED. Claude Opus 4.7 is no longer the best model in the world. 82.7% vs 69.4% on Terminal-Bench 2.0. 84.9% vs 80.3% on GDPval. 81.8% vs 73.1% on CyberGym. Not even close. Sam said it would be a leap. He wasn't lying. OpenAI just took the crown back https://t.co/dZb… (x.com)

6:04 PM · Apr 23, 2026

- [22] Introducing GPT-5 - OpenAI (openai.com)

GPT‑5 not only outperforms previous models on benchmarks and answers questions more quickly, but—most importantly—is more useful for real-world ... Aug 7, 2025

- [23] Semantic Alignment Before Acceleration - Prompting (community.openai.com)

April 24, 2026. Feedback on GPT-5 Model Performance for Translation Tasks · Feedback. 19, 2756, August 19, 2025. Chatgpt API isn't good as it's ... 3 hours ago

- [24] Making ChatGPT better for clinicians - OpenAI (openai.com)

Introducing GPT-5.5. Product Apr 23, 2026. OAI Blog Agents Hero 1x1. Introducing workspace agents in ChatGPT. Product Apr 22, 2026. Images 2.0 ... 3 days ago

- [25] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

We report here on our Production Benchmarks, an evaluation set with conversations representative of challenging examples from production data. 2 days ago

- [26] [PDF] GeneBench: Assessing AI Agents for Multi-Stage Inference ... - OpenAI (cdn.openai.com)

We introduce GeneBench, a benchmark for AI agents on realistic multi-stage scientific data analysis in genetics and quantitative biology. 2 days ago

- [27] Introducing GPT-5.5 - OpenAI (openai.com)

Update on April 24, 2026: GPT‑5.5 and GPT‑5.5 Pro are now available in the API. The system card has also been updated to describe the ... 2 days ago

- [28] GPT-5.5 is here! Available in the API, Codex and ChatGPT today (community.openai.com)

Introducing GPT-5.5 A new class of intelligence for real work and powering agents, built to understand complex goals, use tools, ... 2 days ago

- [29] GPT-5.5 System Card - OpenAI (openai.com)

GPT‑5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing information, ... 2 days ago

- [30] Introducing GPT-Rosalind for life sciences research - OpenAI (openai.com)

OpenAI introduces GPT-Rosalind, a frontier reasoning model built to accelerate drug discovery, genomics analysis, protein reasoning, ...

- [31] OpenAI Deployment Safety Hub: System cards & other updates (deploymentsafety.openai.com)

Apr 23, 2026. GPT-5.5 System Card. GPT-5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing… Apr 21, ...

- [32] Using GPT-5.5 | OpenAI API (developers.openai.com)

For tool-heavy or long-running workflows, verify that your application handles phase, preambles, and assistant-item replay correctly. Benchmark against other models on accuracy, token consumption, and end-to-end latency. [...] More efficient reasoning: GPT-5.5 reaches strong results with fewer reasoning tokens than prior models, even at the same reasoning effort. This is especially useful in complex, tool-heavy, or multi-step workflows where token savings compound. Stronger task execution with outcome-first prompts: GPT-5.5 is better at working from a clear goal, preserving constraints, and…

- [33] Models | OpenAI API (developers.openai.com)

GPT-5.5 New A new class of intelligence for coding and professional work. Model ID gpt-5.5 [Reasoning none low medium high xhigh Input price $5 / Input MTok Output price $30 / Output MTok Latency Fast Max output 128K tokens Context window 1M Tools Functions, Web search, File search, Computer use Knowledge cutoff Dec 1, 2025]( A more affordable model for coding and professional work. Model ID gpt-5.4 [Reasoning none low medium high xhigh Input price $2.50 / Input MTok Output price $15 / Output MTok Latency Fast Max output 128K tokens Context window 1M Tools Functions, Web search, File search,…

- [34] GPT-5.3 Instant: Smoother, more useful everyday conversations (openai.com)

Image 3: OAI Blog Agents Hero 1x1 Introducing workspace agents in ChatGPT Product Apr 22, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Business Enterprise Education Pricing(opens in a new window) Download(opens in a new window) Sora Sora Overview Features Pricing Sora log in(opens in a new window) API Platform Platform Overview Pricing API log in(opens in a new window) Document…

- [35] gpt-oss-120b & gpt-oss-20b 模型卡 - OpenAI (openai.com)

Image 3: Child safety blueprint > card image 儿童安全蓝图正式发布 安全 2026年4月8日 我们的研究 研究索引 研究概览 研究驻留 经济研究 最新进展 GPT-5.3 Instant GPT-5.3-Codex GPT-5 Codex 安全 安全措施 安全与隐私 信任与透明度 ChatGPT 探索 ChatGPT(在新窗口中打开) Business 版 Enterprise 版 Education 版 定价(在新窗口中打开) 下载(在新窗口中打开) Sora Sora 概览 功能 定价 Sora 登录(在新窗口中打开) API 平台 平台概览 定价 API 登录(在新窗口中打开) 文档(在新窗口中打开) 开发者论坛(在新窗口中打开) 商业应用 商业应用概览 解决方案 联系销售团队 公司 关于我们 我们的宪章 基金会(在新窗口中打开) 工作机会 品牌 支持 帮助中心(在新窗口中打开) 更多 新闻 客户案例 Academy 直播 播客 RSS 条款与政策 使用条款 隐私政策 其他政策 (在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开) OpenAI © 2015–2026 管理 Cookie 中文 中国 Image 4 [...] _对抗性攻击者是否可以通过微调…

- [36] How accurate is the math or benchmarks of ai through chatgpt 4o? - Use cases and examples - OpenAI Developer Community (community.openai.com)

in terms of low processing power - how could i use a near 0 usage in working ai models who are designed for high computations and information aquisition ? ### Related topics | Topic | | Replies | Views | Activity | --- --- | I want to share and discuss a few questions about GPT-4 API | 0 | 796 | April 28, 2023 | | Model selection problem. Mainly used to solve mathematics and physics problems API | 5 | 5525 | February 22, 2026 | | Physics and Math GPT Prompt Engineering Improvements? Prompting gpt-4 | 3 | 1634 | December 20, 2023 | | Comparison of Output Completeness Between ChatGPT and Our AI…

- [37] Introducing GPT-5.2 - OpenAI (openai.com)

Models were run with maximum available reasoning effort in our API (xhigh for GPT‑5.2 Thinking & Pro, and high for GPT‑5.1 Thinking), except for the professional evals, where GPT‑5.2 Thinking was run with reasoning effort heavy, the maximum available in ChatGPT Pro. Benchmarks were conducted in a research environment, which may provide slightly different output from production ChatGPT in some cases. _ For SWE-Lancer, we omit 40/237 problems that did not run on our infrastructure._ 2025 ## Author OpenAI ## Keep reading View all Image 6: Hero Art Card SEO 1x1 Introducing GPT-5.5 Product Apr 2…

- [38] Introducing GPT-5.4 - OpenAI (openai.com)

Evals without reasoning EvalGPT‑5.4 (none)GPT‑5.2 (none)GPT-4.1 OmniDocBench (normalized edit distance)0.109 0.140— Tau2-bench Telecom 64.3%57.2%43.6% Evals were run with reasoning effort set to xhigh, except where specified otherwise. Benchmarks were conducted in a research environment, which may provide slightly different output from production ChatGPT in some cases. 2026 ## Author OpenAI ## Footnotes 1 Human performance reported in OSWorld: Benchmarking Multimodal Agents for Open-Ended Tasks in Real Computer Environments(opens in a new window). ## Keep reading View all Image 2: Hero…

- [39] Introducing GPT-5.4 mini and nano - OpenAI (openai.com)

1 The highest reasoning_effort available for GPT‑5 mini is 'high'. 2 Overall Edit Distance. OmniDocBench was run with reasoning_effort set to 'none' to reflect low-cost, low-latency performance. 2026 ## Author OpenAI ## Keep reading View all Image 1: Hero Art Card SEO 1x1 Introducing GPT-5.5 Product Apr 23, 2026 Image 2: Making ChatGPT free for clinicians Making ChatGPT better for clinicians Product Apr 22, 2026 Image 3: OAI Blog Agents Hero 1x1 Introducing workspace agents in ChatGPT Product Apr 22, 2026 Our Research Research Index Research Overview Research Residency Economic Research Lates…

- [40] Introducing GPT-5.3-Codex-Spark - OpenAI (openai.com)

Keep reading View all Image 1: Codex Landing Page SEO Introducing the Codex app Product Feb 2, 2026 Image 2: GPT-5.3-Codex Artcard 1x1 Introducing GPT-5.3-Codex Product Feb 5, 2026 Image 3: System Card Art GPT-5.3-Codex System Card Publication Feb 5, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Business Enterprise Education Pricing(opens in a new window) Download(opens in a…

- [41] Testing New GPT-4o vs Top 5 AI - Community - OpenAI Developer Community (community.openai.com)

Related topics | Topic | | Replies | Views | Activity | --- --- | List of fresh gpt-4o benchmarks, please add Community gpt-4o | 1 | 3710 | May 16, 2024 | | Gpt4 comparison to anthropic Opus on benchmarks Community gpt-4 , api | 9 | 43886 | June 8, 2024 | | GPT-4-Turbo and GPT-4-O benchmarks released! They do well compared to the marketplace Community gpt-4 | 7 | 27637 | May 17, 2024 | | GPT-4o vs. gpt-4-turbo-2024-04-09, gpt-4o loses API gpt-4 | 38 | 15533 | June 11, 2024 | | Gpt-4o and o1-pro > other models? API models | 8 | 669 | April 28, 2025 | Powered by Discourse, best viewed with…

- [42] 信任与透明度 | OpenAI (openai.com)

我们的研究 研究索引 研究概览 研究驻留 OpenAI for Science 经济研究 最新进展 GPT-5.3 Instant GPT-5.3-Codex GPT-5 Codex 安全 安全措施 安全与隐私 信任与透明度 ChatGPT 探索 ChatGPT(在新窗口中打开) Business 版 Enterprise 版 Education 版 定价(在新窗口中打开) 下载(在新窗口中打开) Sora Sora 概览 功能 定价 Sora 登录(在新窗口中打开) API 平台 平台概览 定价 API 登录(在新窗口中打开) 文档(在新窗口中打开) 开发者论坛(在新窗口中打开) 商业应用 商业应用概览 解决方案 联系销售团队 公司 关于我们 我们的宪章 基金会(在新窗口中打开) 工作机会 品牌 支持 帮助中心(在新窗口中打开) 更多 新闻 客户案例 直播 播客 RSS 条款与政策 使用条款 隐私政策 其他政策 (在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开)(在新窗口中打开) OpenAI © 2015–2026 管理 Cookie 中文 中国 [...] # 信任与透明度 | OpenAI 跳至主要内容 报告 2025 年 1 月–6 月 74,559 向 NCMEC 报告的内容总数 2025 年 1 月–6 月…

- [43] Research - OpenAI (openai.com)

GPT OpenAI’s GPT series models are fast, versatile, and cost-efficient AI systems designed to understand context, generate content, and reason across text, images, and more. Image 2: Hero Art Card SEO 1x1 A new class of intelligence for real work Release Apr 23, 2026 12 min read Image 3: 5.4 Thinking Art Card Our most capable and efficient frontier model for professional work Release Mar 5, 2026 16 min read Image 4: 5.3 Instant Art Card Smoother, more useful everyday conversations Release Mar 3, 2026 5 min read ### o series OpenAI’s o series models are advanced reasoning AI systems that u…