Research the benchmarks for Claude Opus 4.7, GPT-5.5, DeepSeek V4, and Kimi K2.6, and compare them as comprehensively as possible.

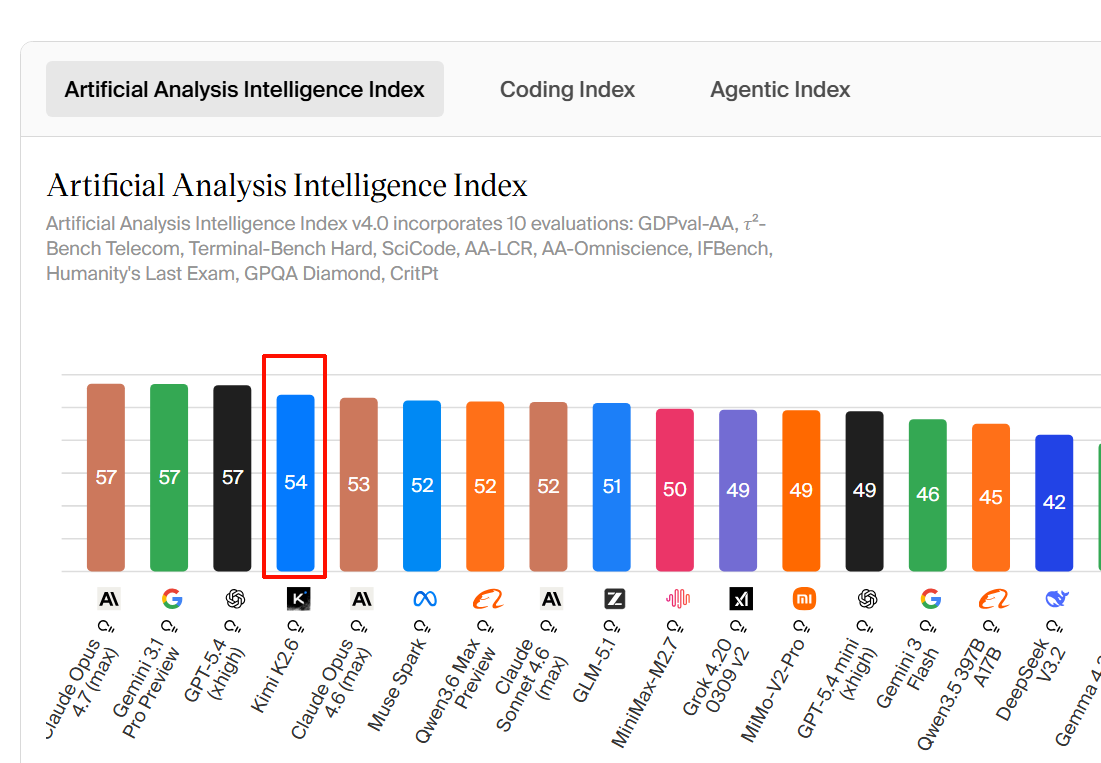

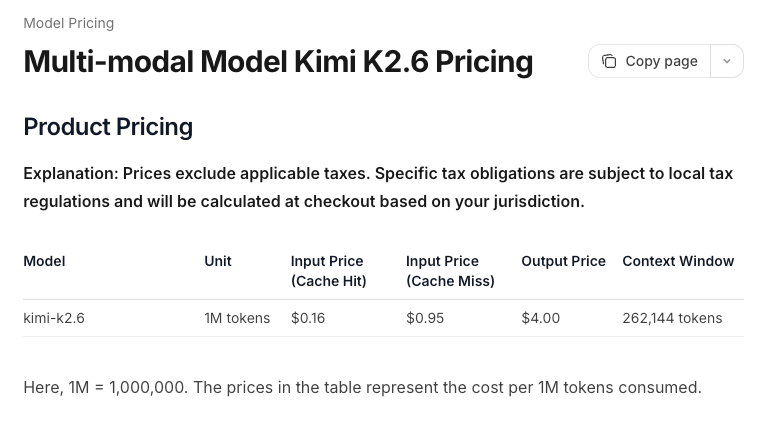

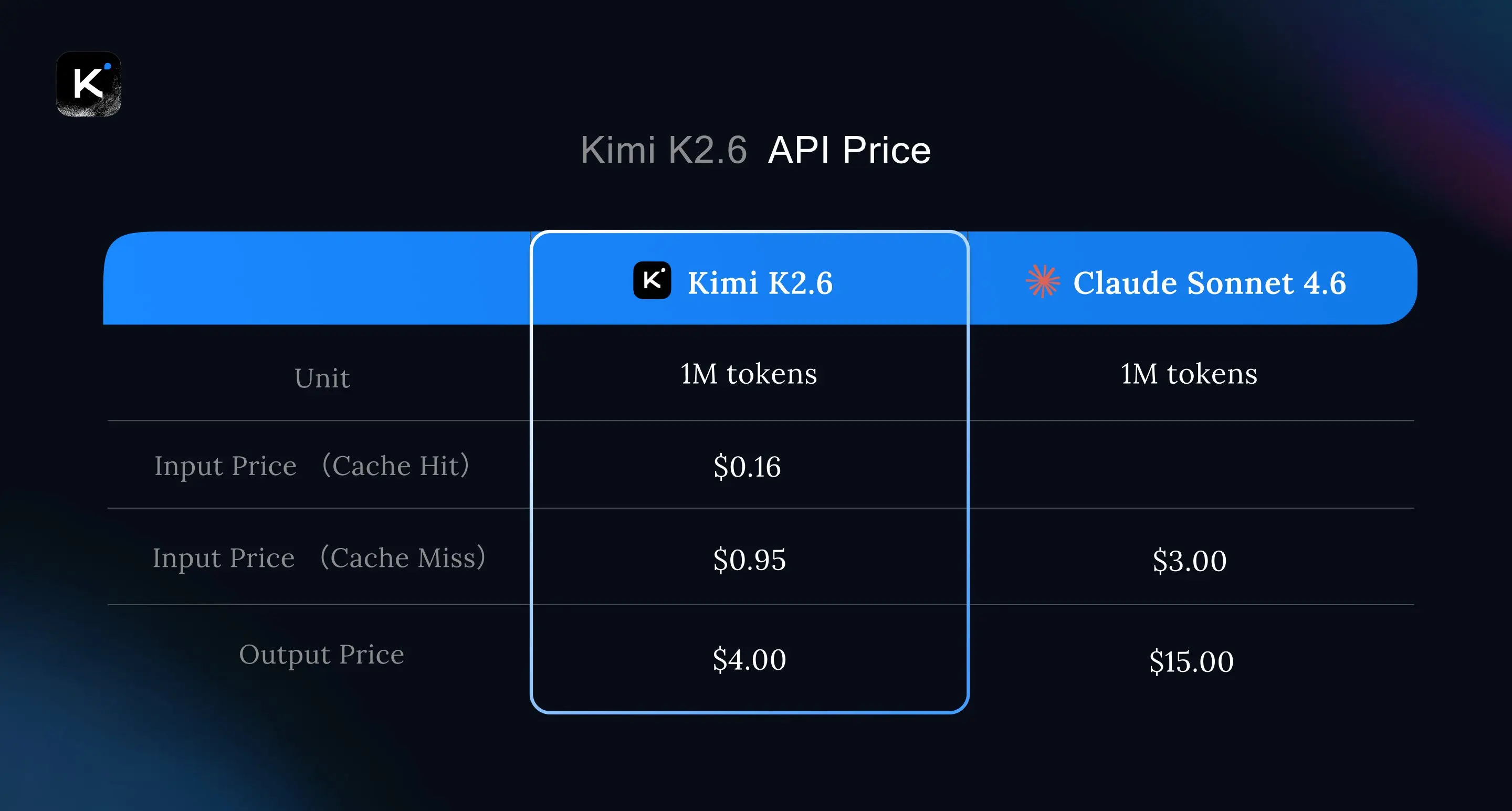

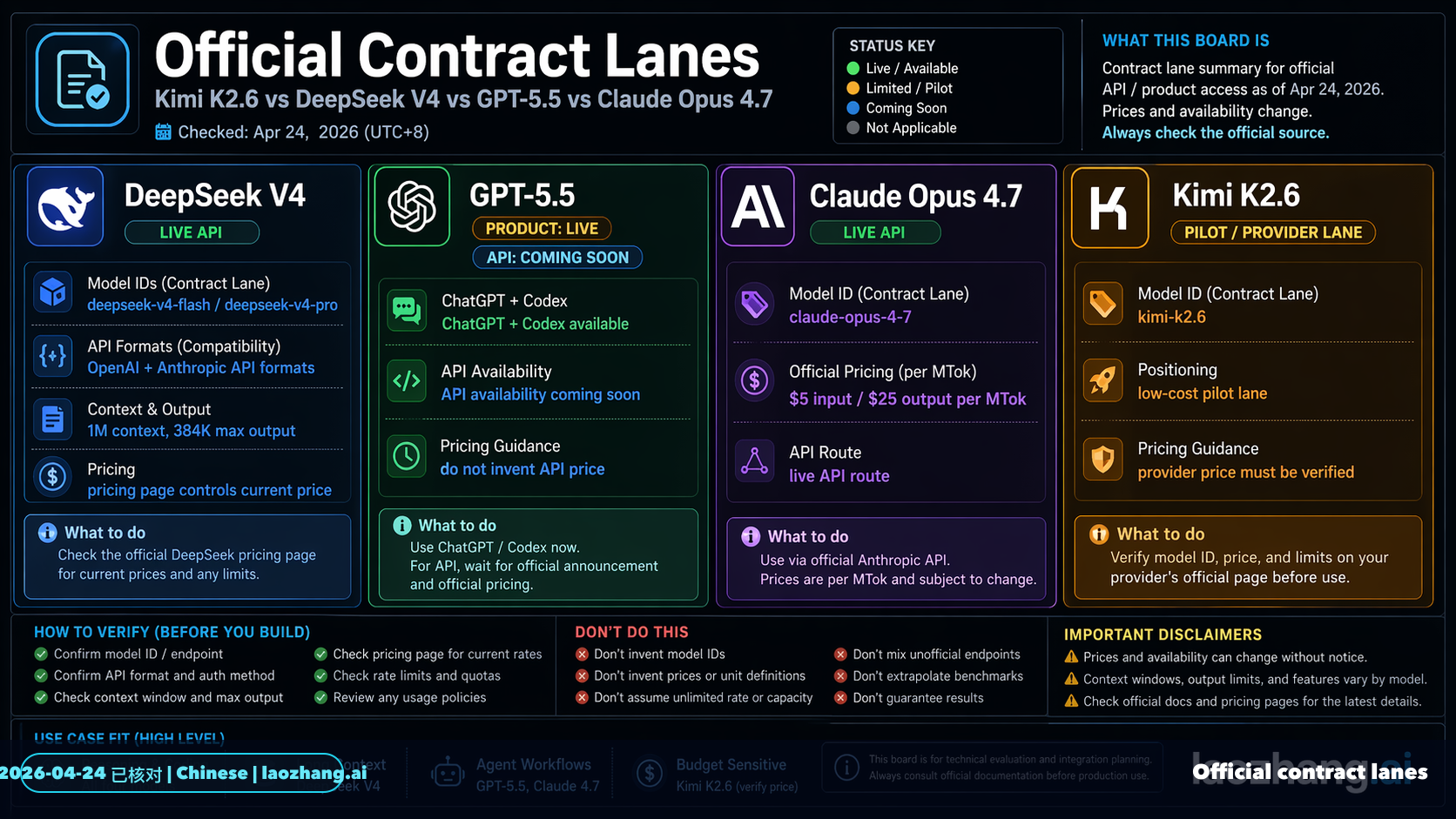

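Research answer

Based on the evidence provided, the following can be concluded: GPT-5.5 leads in the visible overall intelligence ranking and on BrowseComp; DeepSeek V4 / DeepSeek-V4-Pro-Max shows strong value for money across BrowseComp, Terminal-Bench 2.0, and pricing; Claude Opus 4.7 remains a top-tier model; and Kimi K2.6 has the least benchmark data available, so it cannot be ranked on equal footing. The evidence is limited and consists mostly of summary excerpts, so the comparison below should be read as a partial comparison based on the available evidence, not a complete evaluation.

Key findings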

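- **GPT-5.5 leads the overall intelligence ranking.** In the top five of the Intelligence Index listed in the Artificial Analysis excerpt, GPT-5.5 xhigh scores 60 and GPT-5.5 high scores 59, while Claude Opus 4.7 (Adaptive Reasoning, Max Effort) scores 57, tied with Gemini 3.1 Pro Preview and GPT-5.4 xhigh. The excerpt gives no specific Intelligence Index score for DeepSeek V4 or Kimi K2.6. [4]

- **On BrowseComp, GPT-5.5 is slightly ahead of DeepSeek-V4-Pro-Max, and Claude Opus 4.7 trails.** The VentureBeat excerpt reports BrowseComp scores of 83.4% for DeepSeek-V4-Pro-Max, 84.4% for GPT-5.5, and 79.3% for Claude Opus 4.7. [5]

- **On Terminal-Bench 2.0, DeepSeek V4 has a visible score, but details for the other models are missing.** The VentureBeat excerpt says DeepSeek scores 67.9% on Terminal-Bench 2.0 and describes this as close to Claude Opus 4.7, but it gives no complete figure for Claude Opus 4.7 and no Terminal-Bench 2.0 score for GPT-5.5 or Kimi K2.6. [5]

- **On cost, DeepSeek V4 is markedly cheaper than GPT-5.5; the Claude Opus 4.7 input price is visible, but its output price is incomplete.** The Mashable excerpt lists DeepSeek V4 API pricing at $1.74 per 1 million input tokens and $3.48 per 1 million output tokens, with a 1 million token context window; the same excerpt lists GPT-5.5 at $5 per 1 million input tokens and $30 per 1 million output tokens, also with a 1 million token context window. [3] The Mashable excerpt also shows a Claude Opus 4.7 input price of $5 per 1 million tokens, but the output price is cut off in the provided excerpt. [3]

- **DeepSeek V4's value-for-money claim is strong but needs verification against the full article.** The VentureBeat headline says DeepSeek-V4 reaches near state-of-the-art intelligence at roughly one-sixth the cost of Opus 4.7 / GPT-5.5, but the available evidence offers only partial benchmark and pricing excerpts and no complete calculation method. [5]

- **Benchmark evidence for Kimi K2.6 is insufficient.** The available evidence includes a SourceForge comparison page for Claude Opus 4.7 and Kimi K2.6 and the title of an Artificial Analysis comparison page for DeepSeek V4 Pro and Kimi K2.6, but the excerpts give no specific scores, pricing, context window, or task performance for Kimi K2.6. [2][4]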
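
The pricing finding above involves simple arithmetic that is easy to check. The following minimal Python sketch uses only the per-million-token prices quoted in the Mashable excerpt and computes how many times more GPT-5.5 costs than DeepSeek V4 under a few workload mixes. The mixes, the `PRICES` layout, and the function names are illustrative assumptions, not taken from any source; Claude Opus 4.7 and Kimi K2.6 are left out because their full pricing is not in the excerpts.

```python
# Per-million-token API prices in USD, as quoted in the Mashable excerpt.
PRICES = {
    "DeepSeek V4": {"input": 1.74, "output": 3.48},
    "GPT-5.5": {"input": 5.00, "output": 30.00},
}


def workload_cost(model: str, input_mtok: float, output_mtok: float) -> float:
    """Return the USD cost of a workload measured in millions of tokens."""
    price = PRICES[model]
    return price["input"] * input_mtok + price["output"] * output_mtok


def cost_ratio(input_mtok: float, output_mtok: float) -> float:
    """Return how many times more GPT-5.5 costs than DeepSeek V4 for one workload."""
    return workload_cost("GPT-5.5", input_mtok, output_mtok) / workload_cost(
        "DeepSeek V4", input_mtok, output_mtok
    )


# Hypothetical workload mixes (assumptions for illustration only).
MIXES = {
    "input only": (1, 0),
    "3:1 input:output": (3, 1),
    "1:1 input:output": (1, 1),
    "output only": (0, 1),
}

for label, (input_mtok, output_mtok) in MIXES.items():
    ratio = cost_ratio(input_mtok, output_mtok)
    print(f"{label}: GPT-5.5 costs {ratio:.2f}x DeepSeek V4")
```

Under these assumptions the multiple ranges from about 2.9x (input tokens only) to about 8.6x (output tokens only), so whether a headline figure such as "one-sixth the cost" holds depends on the input-to-output mix, which the available excerpts do not specify.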

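Benchmark and pricing comparison

| Dimension | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4 / V4-Pro-Max | Kimi K2.6 |
|---|---|---|---|---|
| Intelligence Index | xhigh 60; high 59 | Adaptive Reasoning, Max Effort: 57 | No score in the available excerpts | No score in the available excerpts |
| BrowseComp | 84.4% | 79.3% | DeepSeek-V4-Pro-Max: 83.4% | No score available |
| Terminal-Bench 2.0 | No score available | Excerpt says DeepSeek is close to Claude but gives no complete Claude score | 67.9% | No score available |
| API pricing (USD) | $5 per 1M input tokens; $30 per 1M output tokens; 1M-token context | Visible excerpt shows $5 per 1M input tokens; output price is cut off | $1.74 per 1M input tokens; $3.48 per 1M output tokens; 1M-token context | No pricing available |
| Evidence sufficiency | Medium: an official system card exists, plus third-party ranking and pricing excerpts | Medium-low: third-party ranking plus partial pricing and benchmark data | Medium: BrowseComp, Terminal-Bench, and pricing excerpts | Low: only comparison pages exist; no specific benchmark figures |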
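
One way to keep the gaps in this comparison explicit is to store missing values as `None` and rank only the models that actually have a score. The sketch below does this for the BrowseComp row, using only the figures quoted in the VentureBeat excerpt; the dictionary layout and the `rank_available` helper are hypothetical names chosen for illustration.

```python
from typing import Optional

# BrowseComp scores (%) as quoted in the VentureBeat excerpt.
# None marks a model for which the available excerpts give no score.
BROWSECOMP: dict[str, Optional[float]] = {
    "GPT-5.5": 84.4,
    "DeepSeek-V4-Pro-Max": 83.4,
    "Claude Opus 4.7": 79.3,
    "Kimi K2.6": None,
}


def rank_available(scores: dict[str, Optional[float]]):
    """Split models into a ranked list (highest score first) and an unranked list."""
    ranked = sorted(
        ((model, score) for model, score in scores.items() if score is not None),
        key=lambda pair: pair[1],
        reverse=True,
    )
    unranked = [model for model, score in scores.items() if score is None]
    return ranked, unranked


ranked, unranked = rank_available(BROWSECOMP)
leader_score = ranked[0][1]

for model, score in ranked:
    gap = leader_score - score
    print(f"{model}: {score:.1f}% (gap to leader: {gap:.1f} points)")

print("Not rankable (no score in the excerpts):", ", ".join(unranked))
```

Keeping unscored models in a separate list, instead of assigning them a placeholder value, avoids implying a position for Kimi K2.6 that the evidence does not support.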

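Evidence notes

- **GPT-5.5 has stronger official backing, but the official benchmark excerpt is thin.** OpenAI's GPT-5.5 System Card was published on April 23, 2026, but the provided excerpt lists no specific benchmark results. [7]

- **Third-party benchmark sources are better suited to ranking than individual tests.** Artificial Analysis provides the Intelligence Index ranking excerpt and VentureBeat provides partial BrowseComp and Terminal-Bench 2.0 figures, so these two kinds of evidence are a better basis for quantitative comparison than an individual's tests posted on LinkedIn. [4][5][31]

- **Individual hands-on tests are supplementary only and should not drive a ranking.** The LinkedIn excerpt describes a user who ran 4 one-shot head-to-head experiments with Codex on GPT-5.5 and Claude Code on Opus 4.7 and notes that benchmarks tell only part of the story; the excerpt provides no verifiable score table or complete task results. [31]

- **The SourceForge comparison of Claude Opus 4.7 and Kimi K2.6 is mainly product and company information, not benchmark data.** The excerpt shows company information for Anthropic and Moonshot AI and confirms that a Claude Opus 4.7 vs. Kimi K2.6 comparison page exists, but it provides no model capability scores. [2]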

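Limitations / uncertainty

- **Insufficient evidence.** The current evidence does not support a "comprehensive" benchmark ranking of the four models, because Kimi K2.6 lacks specific benchmark figures and DeepSeek V4 has no visible Intelligence Index score. [2][4]

- **Different sources may use different model configurations.** Artificial Analysis lists GPT-5.5 at xhigh and high and Claude Opus 4.7 at Adaptive Reasoning, Max Effort, while VentureBeat refers to DeepSeek-V4-Pro-Max; these configurations do not necessarily reflect the default mode of the standard API. [4][5]

- **Some pricing and benchmark information is cut off.** The Mashable excerpt gives complete input and output prices for DeepSeek V4 and GPT-5.5, but the Claude Opus 4.7 output price does not appear in the available excerpt. [3]

- **BrowseComp and Terminal-Bench 2.0 do not represent all tasks.** BrowseComp leans toward agentic web browsing and Terminal-Bench 2.0 toward terminal and development-environment tasks; neither directly reflects performance in writing, long-context understanding, multilingual use, math, vision, or enterprise security. [5]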

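Summary

- On the visible overall intelligence ranking alone: GPT-5.5 is first and Claude Opus 4.7 is in the top tier; DeepSeek V4 and Kimi K2.6 cannot be placed because they have no scores in the same table. [4]

- On BrowseComp: GPT-5.5 is highest at 84.4%, DeepSeek-V4-Pro-Max is very close at 83.4%, and Claude Opus 4.7 is at 79.3%; Kimi K2.6 has no score available. [5]

- On value for money: DeepSeek V4's visible API pricing is markedly lower than GPT-5.5's, and its BrowseComp score is close to GPT-5.5's; a complete cost conclusion still needs full pricing for Claude and Kimi and scores on more tasks. [3][5]

- The most reliable conclusion: GPT-5.5 is the strongest performer in the current evidence, DeepSeek V4 looks most like a high-value challenger, Claude Opus 4.7 remains in the top tier of models, and Kimi K2.6 currently has too little evidence to be ranked fairly. [4][5][2]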

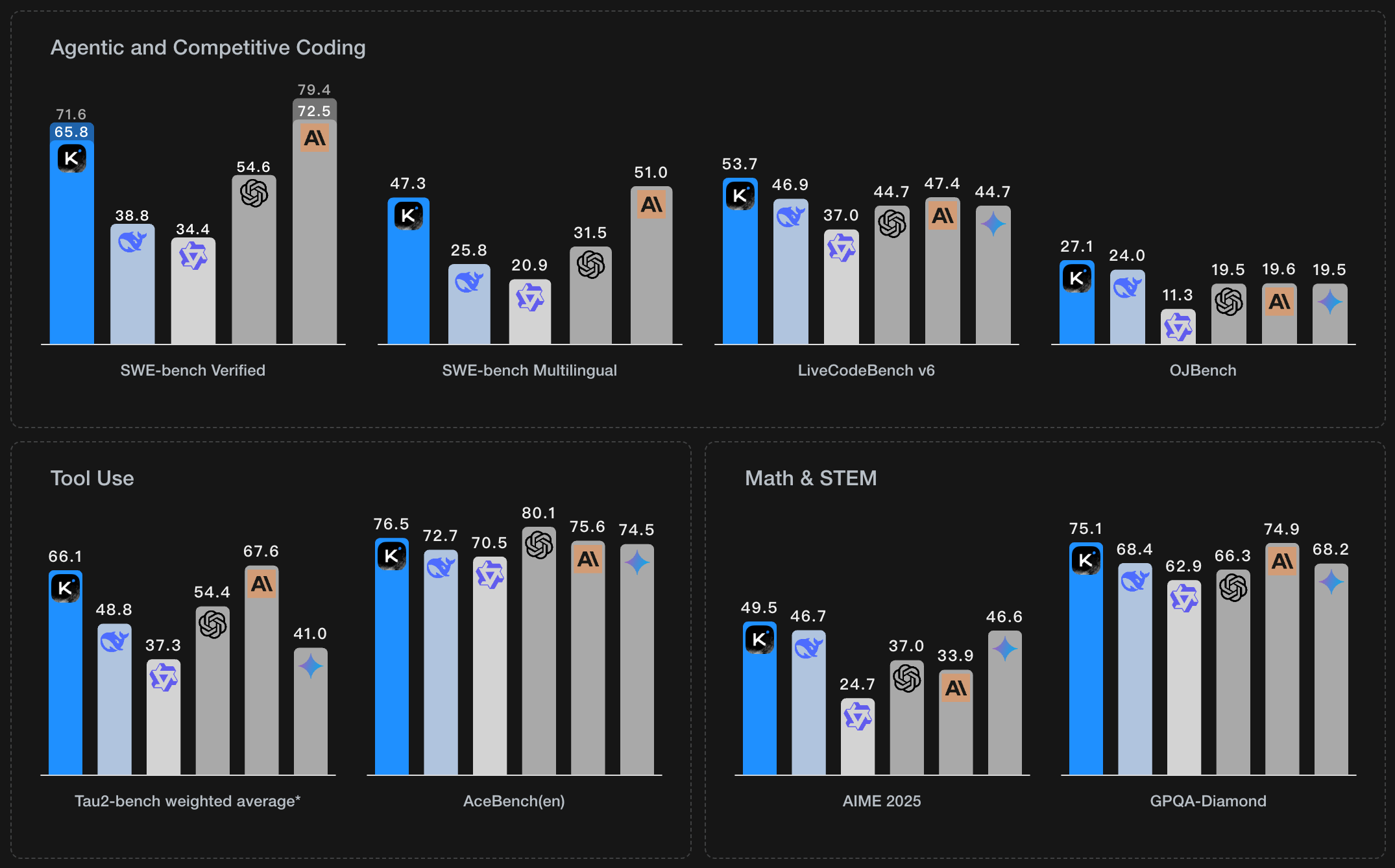

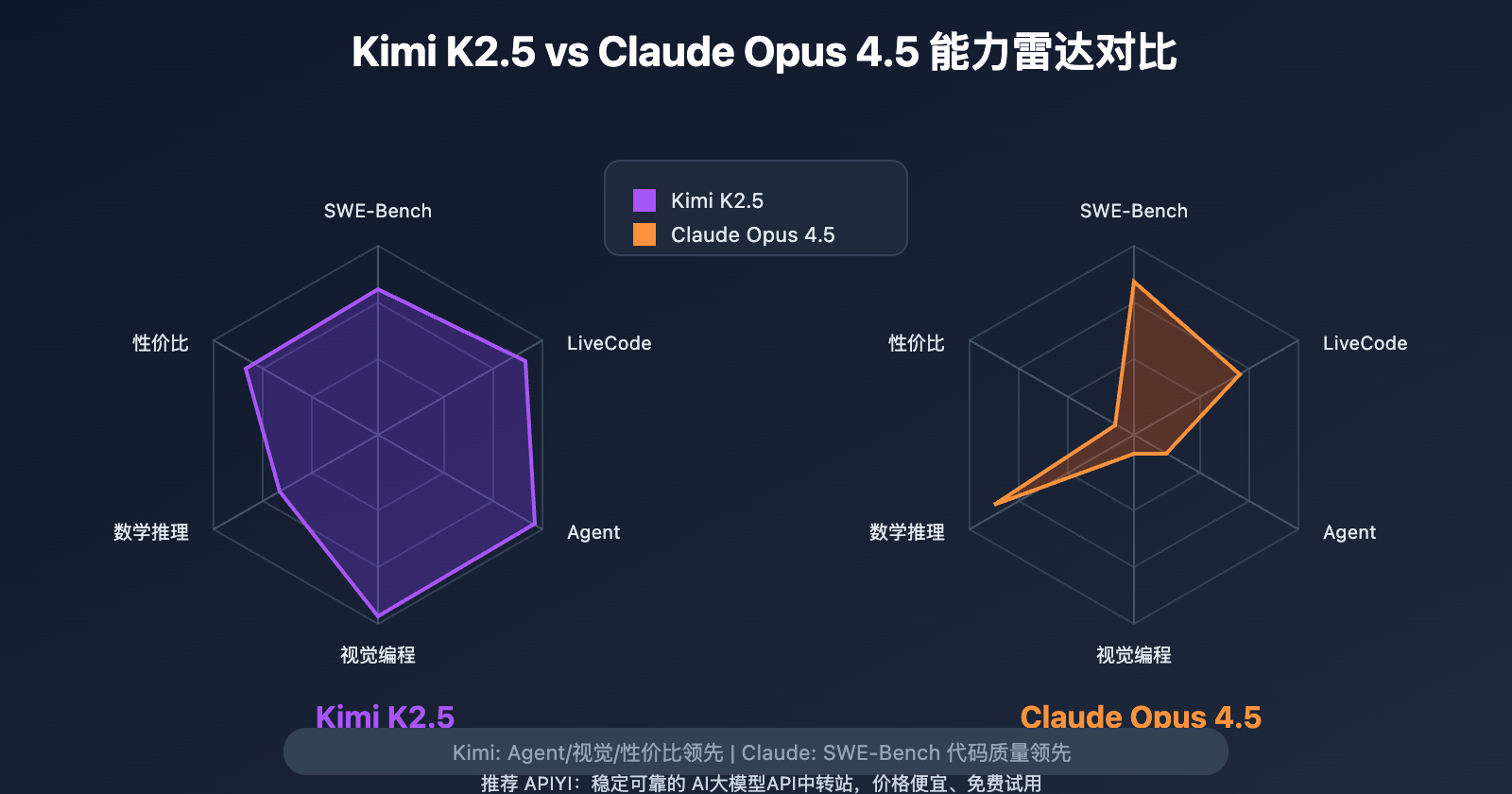

Sources

- [1] DeepSeek V4 is here: How it compares to ChatGPT, Claude, Gemini (mashable.com)

Here's how the API pricing compares: DeepSeek V4 costs $1.74 per 1 million input tokens and $3.48 per 1 million output tokens (1 million context window) GPT-5.5 costs at $5 per 1 million input tokens and $30 per 1 million output tokens (1 million context wi...

- [2] DeepSeek V4 Pro (Reasoning, High Effort) vs Kimi K2.6 (artificialanalysis.ai)

What are the top AI models? The top AI models by Intelligence Index are: 1. GPT-5.5 (xhigh) (60), 2. GPT-5.5 (high) (59), 3. Claude Opus 4.7 (Adaptive Reasoning, Max Effort) (57), 4. Gemini 3.1 Pro Preview (57), 5. GPT-5.4 (xhigh) (57). Which is the fastest...

- [3] GPT-5.5 vs Claude Opus 4.7: Pricing, Speed, Benchmarks - LLM Stats (llm-stats.com)

The Verdict On the 10 benchmarks both providers report, Opus 4.7 leads on 6 and GPT-5.5 leads on 4. The leads cluster by category, not by overall quality: Opus 4.7 is ahead on the reasoning-heavy and review-grade tests (GPQA Diamond, HLE with and without to...

- [4] Kimi K2.6 vs Claude Opus 4.6 vs GPT-5.4 - Verdent AI (verdent.ai)

Benchmark (K2.6 / Claude Opus 4.6 / GPT-5.4, with notes): SWE-Bench Pro 58.60% / 53.40% / 57.70% (Moonshot in-house harness; SEAL mini-swe-agent puts GPT-5.4 at 59.1%, Opus 4.6 at 51.9%); SWE-Bench Verified 80.20% / 80.80% / 80% (Tight cluster; Opus 4.7 now leads at 87.6%); T...

- [5] Kimi K2.6 vs DeepSeek-V4 Pro - DocsBot AI (docsbot.ai)

Kimi K2.6 is Moonshot AI's latest open-source native multimodal agentic model, advancing long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration. It keeps the Kimi K2.5 1T parameter MoE archite...

- [6] [AINews] Moonshot Kimi K2.6: the world's leading Open Model ... (latent.space)

Arena results continued to matter for multimodal models. @arena reported Claude Opus 4.7 taking 1 in Vision & Document Arena, with +4 points over Opus 4.6 in Document Arena and a large margin over the next non-Anthropic models. Subcategory wins included dia...

- [7] DeepSeek-V4 arrives with near state-of-the-art intelligence at 1/6th ... (venturebeat.com)

DeepSeek-V4-Pro-Max’s best showing is on BrowseComp, the benchmark measuring agentic AI web browsing prowess (especially highly containerized information), where it scores 83.4%, narrowly behind GPT-5.5 at 84.4% and ahead of Claude Opus 4.7 at 79.3%. On Term...

- [8] GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance ... (mindstudio.ai)

April 22, 2026. Claude vs GPT for Agentic Coding: Which Model Finishes the Job? Claude Opus 4.7 and GPT 5.4 were tested on a 465-file data migration. See which model stayed on task, caught errors, and produced trustworthy output. Claude GPT & OpenAI...

- [9] Underwhelming or underrated? DeepSeek V4 shows “impressive ... (scmp.com)

The company’s most advanced system, V4 Pro, ranked second among the world’s leading open-source models, behind Beijing-based Moonshot AI’s Kimi K2.6, benchmark firm Artificial Analysis said in a report on Friday. While V4 Pro marked a clear improvement on i...

- [10] I reviewed how DeepSeek V4-Pro, Kimi 2.6, Opus 4.6, and Opus 4.7 ... (news.ycombinator.com)

ozgune, on "DeepSeek v4": I reviewed how DeepSeek V4-Pro, Kimi 2.6, Opus 4.6, and Opus 4.7 across the same AI benchmarks. All results are for Max editions, except for Kimi. Summary: Opus 4.6 forms the baseline all three are tr...

- [11] DeepSeek V4 vs Claude vs GPT-5.4: A 38-Task Benchmark ... - FundaAI (fundaai.substack.com)

As of time of publication, GPT-5.5 has not yet officially released its API. Testing solely through Codex 5.5 may not fully reflect the complete performance of the API. We have currently only conducted urgent testing on DeepSeek V4, and will include GPT-5.5...

- [12] I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just ... (linkedin.com)

I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just dropped GPT 5.5. The benchmarks look strong against Opus 4.7. But benchmarks only tell part of the story. So I ran 4 head-to-head experiments. Codex on GPT 5.5. Claude Code on Opus 4.7. Same pr...

- [13] Claude Opus 4.7 vs. Kimi K2.6 Comparison (sourceforge.net)

[...] Company Information: Anthropic. Founded: 2021. United States. claude.ai. Company Information: Moonshot AI. Founded: 2023. China. www.kimi.com/blog/kimi-...

- [14] Introducing Claude Opus 4.7 - Anthropic (anthropic.com)

CyberGym: Opus 4.6’s score has been updated from the originally reported 66.6 to 73.8, as we updated our harness parameters to better elicit cyber capability. SWE-bench Multimodal: We used an internal implementation for both Opus 4.7 and Opus 4.6. Scores ar...

- [15] Kimi K2.6 vs Claude Opus 4.7 - AI Model Comparison | OpenRouter (openrouter.ai)

Beyond coding, Opus 4.7 brings improved knowledge work capabilities - from drafting documents and building presentations to analyzing data. It maintains coherence across very long outputs and extended sessions, making it a strong default for tasks that requ...

- [16] Kimi K2.6 vs DeepSeek V4 vs GPT-5.5 vs Claude Opus 4.7: Which Should You Test First? | LaoZhang AI Blogblog.laozhang.ai

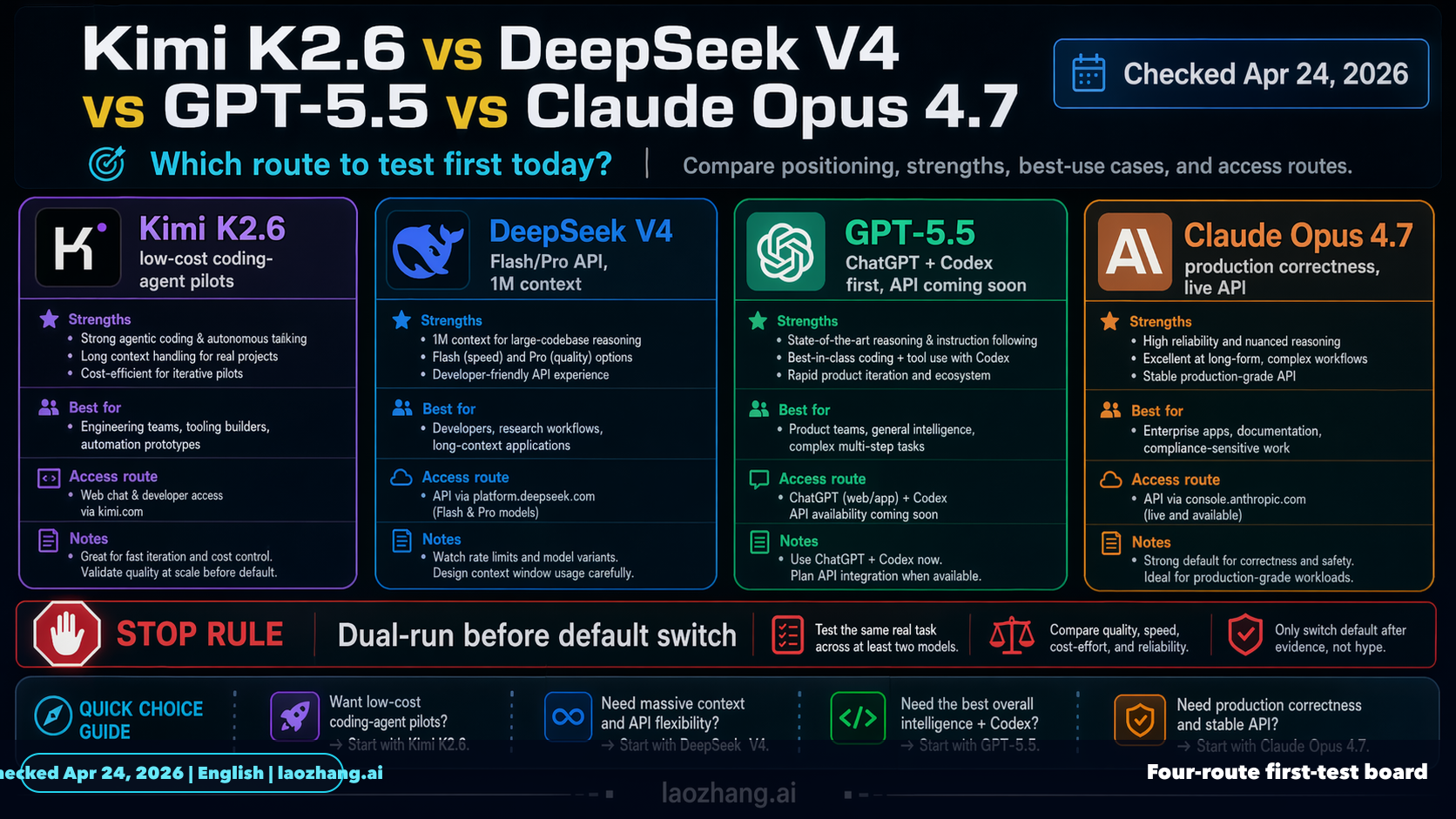

@laozhang\ cn Get $0.1 [...] As of Apr 24, 2026, this comparison should be built around DeepSeek V4, not an older DeepSeek label. Test Kimi K2.6 first when the job is low-cost coding-agent exploration, test DeepSeek V4 Flash or V4 Pro when you need a cheap...

- [17] DeepSeek V4: Features, Benchmarks, and Comparisons (datacamp.com)

DeepSeek V4 vs Competitors Over the last week, we’ve seen the release of OpenAI's GPT-5.5 and Anthropic's Claude Opus 4.7. While those models boast top-tier capabilities, especially in long-context reasoning and agentic coding, DeepSeek V4 competes heavily...

- [18] Bad Opus 4.7, Good Kimi K2.6, and Growing Codex (aicodingdaily.substack.com)

A 13-minute video. I generated a small demo for multi-language Laravel + Filament project, with Opus and Codex. Let me show the differences in the code - they were shockingly consistent all over codebase. My YouTube videos I Tried NEW Kimi K2.6: Same Level a...

- [19] GPT-5.5 Debuts, Sets New Benchmark Highs - eWeek (eweek.com)

Safety comes with a surcharge. OpenAI classifies the release as a high capability in cybersecurity and biological domains, gating deeper exploit ...

- [20] OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark ... (interestingengineering.com)

On SWE-Bench Pro, it reached 58.6%, solving more real-world GitHub issues in a single pass than earlier versions. The model also outperformed its predecessor in long-horizon engineering tasks measured by internal benchmarks. These tasks often take human dev...

- [21] GPT-5.5 Benchmarks Revealed: The 9 Numbers That ... - Kingy AI (kingy.ai)

A deep, source-checked breakdown of every benchmark, capability, price point, and caveat in OpenAI's April 23, 2026 launch of GPT-5.5 and ...

- [22] OpenAI Launches GPT-5.5: 82.7% Terminal-Bench, 58.6% SWE ... (stackfutures.com)

OpenAI released GPT-5.5 — codenamed Spud — on April 23, rolling it out to Plus, Pro, Business, and Enterprise tiers in ChatGPT and Codex.

- [23] Introducing GPT-5.5 - OpenAI (openai.com)

GPT‑5.5 reaches state-of-the-art performance across multiple benchmarks that reflect this kind of work. On GDPval, which tests agents’ abilities to produce well-specified knowledge work across 44 occupations, GPT‑5.5 scores 84.9%. On OSWorld-Verified, whic...

- [24] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwrite (appwrite.io)

GPT-5.5 is here: benchmarks, pricing, and what changes for developers. Apr 24, 202...

- [25] OpenAI launched GPT-5.5 on April 23, 2026. This new AI model is ... (facebook.com)

It handles complex jobs with less help, runs faster, and has a 1M token context. GPT-5.5 beats GPT-5.4 in benchmarks but costs 2x more. May be ...

- [26] OpenAI Releases GPT-5.5 With State-of-the-Art Scores on Coding, Science, and Computer Use (linkedin.com)

The week of April 14, 2026 produced three significant AI releases aimed at the same enterprise audience. Alibaba… Google's New Deep Research Max Agent Scores 93% on Benchmarks Google's new Deep Research Max agent scored 93.3% on DeepSearchQA and 85. From Cr...

- [27] GPT-5.5 benchmark results have been released : r/singularity - Reddit (reddit.com)

Mostly only a small jump. They didn't bother including SWE-Bench Pro where it went from 57.6% to 58.6% (Mythos got 77.8%).

- [28] GPT-5.5 - Wikipedia (en.wikipedia.org)

GPT-5.5 (Generative Pre-trained Trans...

- [29] GPT-5.5 System Card - OpenAI (openai.com)

OpenAI. April 23, 2026. Safety, Publication. GPT‑5.5...

- [30] GPT-5.5 System Card - OpenAI Deployment Safety Hub (deploymentsafety.openai.com)

We measure GPT-5.5’s controllability by running CoT-Control, an evaluation suite described in (Yueh-Han, 2026) that tracks the model’s ability to follow user instructions about their CoT. CoT-Control includes over 13,000 tasks built from established benchm...

- [31] OpenAI releases GPT-5.5 with improved coding and research capabilities (uk.finance.yahoo.com)

Louis Juricic 1 min read Investing.com -- OpenAI announced Thursday the release of GPT-5.5, its latest AI model now available to Plus, Pro, Business, and Enterprise users through ChatGPT and Codex platforms. The model achieved 82.7% accuracy on Terminal-Ben...

- [32] OpenAI's GPT-5.5: Benchmarks, Safety Classification, and Availability (datacamp.com)

OpenAI's GPT-5.5: Benchmarks, Safety Classification, and Availability OpenAI's latest release focuses on execution, research, and dramatically improved inference efficiency. Apr 23, 2026 · 5 min read OpenAI's latest model, GPT-5.5, matches GPT-5.4 in per-to...

- [33] OpenAI GPT-5.5 Benchmark (CodeRabbit) (coderabbit.ai)

What changed in OpenAI GPT-5.5: Better judgment, stronger coding, better signal by Juan Pablo Flores Abhilash Ha...

- [34] OpenAI releases GPT-5.5 amid a shift to rapid-fire AI updates - Fortune (fortune.com)

By Sharon Goldman, AI Reporter. April 23, 2026, 2:13 PM ET. [...] B...

- [35] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

that tracks the model’s ability to follow user instructions about their CoT. CoT-Control includes over 13,000 tasks built from established benchmarks: GPQA (Rein et al., 2023), MMLU-Pro (Hendrycks et al., 2020), HLE (Phan et al., 2025), BFCL (Patil et al.,...