Research the benchmarks for DeepSeek V4 and Kimi K2.6. Which one wins at coding, content creation, and translation?

DeepSeek V4와 Kimi K2.6의 승자를 현재 제공된 근거만으로 확정할 수는 없습니다. 코딩은 DeepSeek V4 쪽에 일부 우세 신호가 있지만, Kimi K2.6과 같은 벤치마크에서 직접 비교한 수치가 없어 “DeepSeek 승”이라고 단정하기에는 증거가 부족합니다. Key findings 코딩: DeepSeek V4 우세 가능성은 있으나 확정 불가 제공된 근거 중 Deep...

DeepSeek V4와 Kimi K2.6의 승자를 현재 제공된 근거만으로 확정할 수는 없습니다. 코딩은 DeepSeek V4 쪽에 일부 우세 신호가 있지만, Kimi K2.6과 같은 벤치마크에서 직접 비교한 수치가 없어 “DeepSeek 승”이라고 단정하기에는 증거가 부족합니다. Key findings 코딩: DeepSeek V4 우세 가능성은 있으나 확정 불가 제공된 근거 중 DeepSeek V4 Pro가 LiveCodeBench에서 앞선다는 제3자 자료가 있지만, 비교 대상이 Kimi K2.6인지 명확하지 않고 원자료도 공식 벤치마크가 아닙니다 [5]. Kimi

주요 시사점

- **코딩: DeepSeek V4 우세 가능성은 있으나 확정 불가**

- - 제공된 근거 중 DeepSeek V4-Pro가 LiveCodeBench에서 앞선다는 제3자 자료가 있지만, 비교 대상이 Kimi K2.6인지 명확하지 않고 원자료도 공식 벤치마크가 아닙니다.

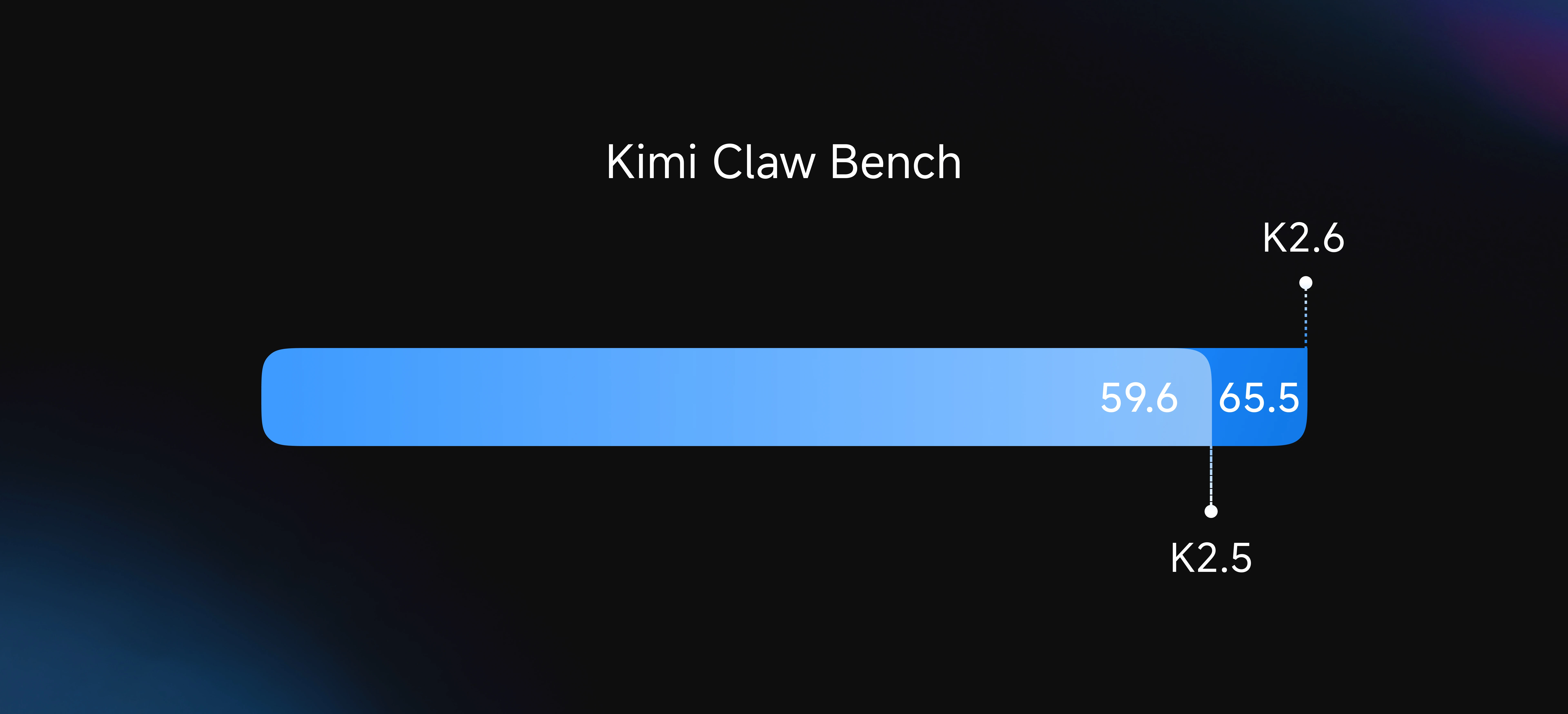

- - Kimi K2.6은 “native multimodal agentic model”로 소개되며 실용적 능력을 발전시킨 모델이라고 설명되지만, 제공된 근거에는 LiveCodeBench, SWE-bench, HumanEval 같은 코딩 벤치마크 수치가 없습니다.

- - 따라서 코딩에서는 **DeepSeek V4가 유리해 보일 수는 있지만, 직접 비교 근거가 없어 판정은 보류**가 맞습니다.

- DeepSeek V4와 Kimi K2.6의 승자를 현재 제공된 근거만으로 확정할 수는 없습니다. 코딩은 DeepSeek V4 쪽에 일부 우세 신호가 있지만, Kimi K2.6과 같은 벤치마크에서 직접 비교한 수치가 없어 “DeepSeek 승”이라고 단정하기에는 증거가 부족합니다.

- ## Key findings

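Research answer

The evidence provided so far does not settle a winner between DeepSeek V4 and Kimi K2.6. Coding shows some signals in DeepSeek V4's favor, but there are no figures that compare it directly with Kimi K2.6 on the same benchmark, so the evidence is too thin to call it a DeepSeek win.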

Key findings

- **Coding: DeepSeek V4 may have an edge, but this cannot be confirmed**
  - One third-party source in the provided evidence says DeepSeek V4-Pro leads on LiveCodeBench, but it is unclear whether the comparison target is Kimi K2.6, and the underlying data is not an official benchmark [15].
  - Kimi K2.6 is introduced as a "native multimodal agentic model" that advances practical capabilities, but the provided evidence contains no coding benchmark figures such as LiveCodeBench, SWE-bench, or HumanEval [1].
  - For coding, then, DeepSeek V4 may look favorable, but without a direct comparison the right call is to withhold judgment.

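- **Content creation: no winner can be determined**
  - The provided material contains no content-creation benchmarks for DeepSeek V4 or Kimi K2.6, whether for writing, summarization, creative work, marketing copy, or long-form drafting.
  - Kimi K2.6 is described as a multimodal, agentic model, but there is no quantitative evidence that it beats DeepSeek V4 on content quality [1].
  - Conclusion for content creation: insufficient evidence.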

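- **Translation: no winner can be determined**
  - The provided evidence contains no translation benchmarks such as WMT, FLORES, a multilingual MT-Bench, or Korean-English and Chinese-Korean translation evaluations.
  - There is no direct evidence for judging whether DeepSeek V4 or Kimi K2.6 is better at translation.
  - Conclusion for translation: insufficient evidence.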

- **Model status and evidence reliability**
  - There is evidence that Kimi K2.6 became available on Cloudflare Workers AI as `@cf/moonshotai/kimi-k2.6` on April 20, 2026 [1].
  - On the DeepSeek side, the official API docs carry a V4 Preview Release note. It states that the legacy `deepseek-chat` and `deepseek-reasoner` names become inaccessible after July 24, 2026 and currently route to `deepseek-v4-flash` [34].
  - Some third-party material, by contrast, says DeepSeek V4 had not been officially released as of mid-March 2026, which conflicts on timing [14]. For release status, the official API docs [34] are the stronger evidence.
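The retirement note for the legacy DeepSeek model names can be turned into a small pre-flight check. The following is a minimal Python sketch, not DeepSeek's client code: `resolve_model` and `LEGACY_ROUTES` are hypothetical names, the cutoff is the "Jul 24th, 2026, 15:59 (UTC Time)" quoted in the V4 Preview Release note [34], and pairing `deepseek-chat` with non-thinking and `deepseek-reasoner` with thinking is an assumption read off the order in which that note lists them.

```python
from datetime import datetime, timezone
from typing import Optional, Tuple

# Cutoff quoted in the V4 Preview Release note: Jul 24th, 2026, 15:59 UTC.
LEGACY_CUTOFF = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Assumed pairing: the note says the legacy names currently route to
# deepseek-v4-flash "non-thinking/thinking", listed in this order.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", "non-thinking"),
    "deepseek-reasoner": ("deepseek-v4-flash", "thinking"),
}


def resolve_model(name: str, now: datetime) -> Tuple[str, Optional[str]]:
    """Hypothetical helper: map a requested model name to (model, mode label)."""
    if name not in LEGACY_ROUTES:
        # Not a legacy alias: pass it through unchanged, with no mode label.
        return name, None
    if now > LEGACY_CUTOFF:
        # After the cutoff the legacy names are described as inaccessible.
        raise ValueError(f"{name} is retired after {LEGACY_CUTOFF.isoformat()}")
    return LEGACY_ROUTES[name]
```

Before the cutoff the helper returns the replacement target so callers can migrate early; after it, the helper fails loudly instead of letting a request go out under a retired name. How the mode label is passed to the API is outside the scope of this sketch.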

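Evidence notes

- The most relevant specific claim about DeepSeek V4's coding performance is the LiveCodeBench mention, but the provided snippet alone does not amount to a head-to-head with Kimi K2.6 [15].
- The more official evidence on Kimi K2.6 is closer to a description of its deployment and model character, and gives no coding, content, or translation scores [1].
- A Facebook group post mentions Kimi K2.6's long-horizon coding and agent swarm scaling, but it is too unreliable to count as official benchmark evidence [12].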
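The reliability ordering applied in these notes (official documentation over third-party write-ups over social posts) can be written down as a simple tie-break rule. This is a minimal Python sketch under stated assumptions: the `Claim` type, the three-level `tier` scale, and `strongest_claim` are illustrative names that appear in no cited source. March 15 is only a stand-in for the "mid-March 2026" wording in [14], and April 24, 2026 is the DeepSeek-V4 date shown in the DeepSeek change log [33].

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class Claim:
    source: str
    tier: int        # assumed scale: 0 = official docs, 1 = third-party article, 2 = social post
    as_of: date      # date the claim describes
    released: bool   # does the source say DeepSeek V4 is released?


def strongest_claim(claims: List[Claim]) -> Claim:
    """Prefer the most official tier; within a tier, prefer the most recent claim."""
    return min(claims, key=lambda c: (c.tier, -c.as_of.toordinal()))


claims = [
    # "As of mid-March 2026 ... not officially released" (day of month is a stand-in).
    Claim("third-party guide [14]", tier=1, as_of=date(2026, 3, 15), released=False),
    # V4 Preview Release in the official API docs.
    Claim("DeepSeek API docs [34]", tier=0, as_of=date(2026, 4, 24), released=True),
]

best = strongest_claim(claims)
print(best.source, best.released)
```

In this case the two orderings agree: the official source is also the later one, so the apparent conflict is mostly a matter of timing.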

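Limitations / uncertainty

- The provided evidence contains no standard benchmark that compares DeepSeek V4 and Kimi K2.6 under the same conditions.
- Coding shows some signals favoring DeepSeek V4, but with no matching Kimi K2.6 score a win/loss verdict is not possible.
- Content creation and translation have effectively no evaluation data, so the verdict is insufficient evidence.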
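The rule behind these limitations, that a winner is named only when both models have a score on the same benchmark, can be sketched in a few lines of Python. The function name `verdict` and the dictionaries are hypothetical. The one number used, 93.5 on LiveCodeBench for V4-Pro, is the third-party figure quoted in [15], and the Kimi K2.6 dictionary is left empty to mirror the evidence this answer relied on. The sketch also assumes that higher scores are better.

```python
from typing import Dict, Optional


def verdict(benchmark: str,
            scores_a: Dict[str, float],
            scores_b: Dict[str, float],
            name_a: str = "DeepSeek V4",
            name_b: str = "Kimi K2.6") -> str:
    """Name a winner only if both models report a score on `benchmark`."""
    a: Optional[float] = scores_a.get(benchmark)
    b: Optional[float] = scores_b.get(benchmark)
    if a is None or b is None:
        # One side has no score: no head-to-head comparison is possible.
        return "insufficient evidence"
    if a == b:
        return "tie"
    return name_a if a > b else name_b


deepseek_scores = {"LiveCodeBench": 93.5}  # third-party figure quoted in [15]
kimi_scores: Dict[str, float] = {}         # no scores in the evidence used above

results = {b: verdict(b, deepseek_scores, kimi_scores)
           for b in ("LiveCodeBench", "WMT", "FLORES")}
print(results)
```

A one-sided score, however strong, therefore never produces a winner; it only flags which benchmark would need a matching figure from the other model.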

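Summary

- Coding: DeepSeek V4 may lead, but direct comparison evidence is lacking.
- Content creation: no verdict possible.
- Translation: no verdict possible.
- On the current evidence, the most rigorous conclusion is **"DeepSeek V4 may be ahead in coding, but none of the three areas has a confirmed winner."**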

Sources

- [1] Moonshot AI Kimi K2.6 now available on Workers AI · Changelogdevelopers.cloudflare.com

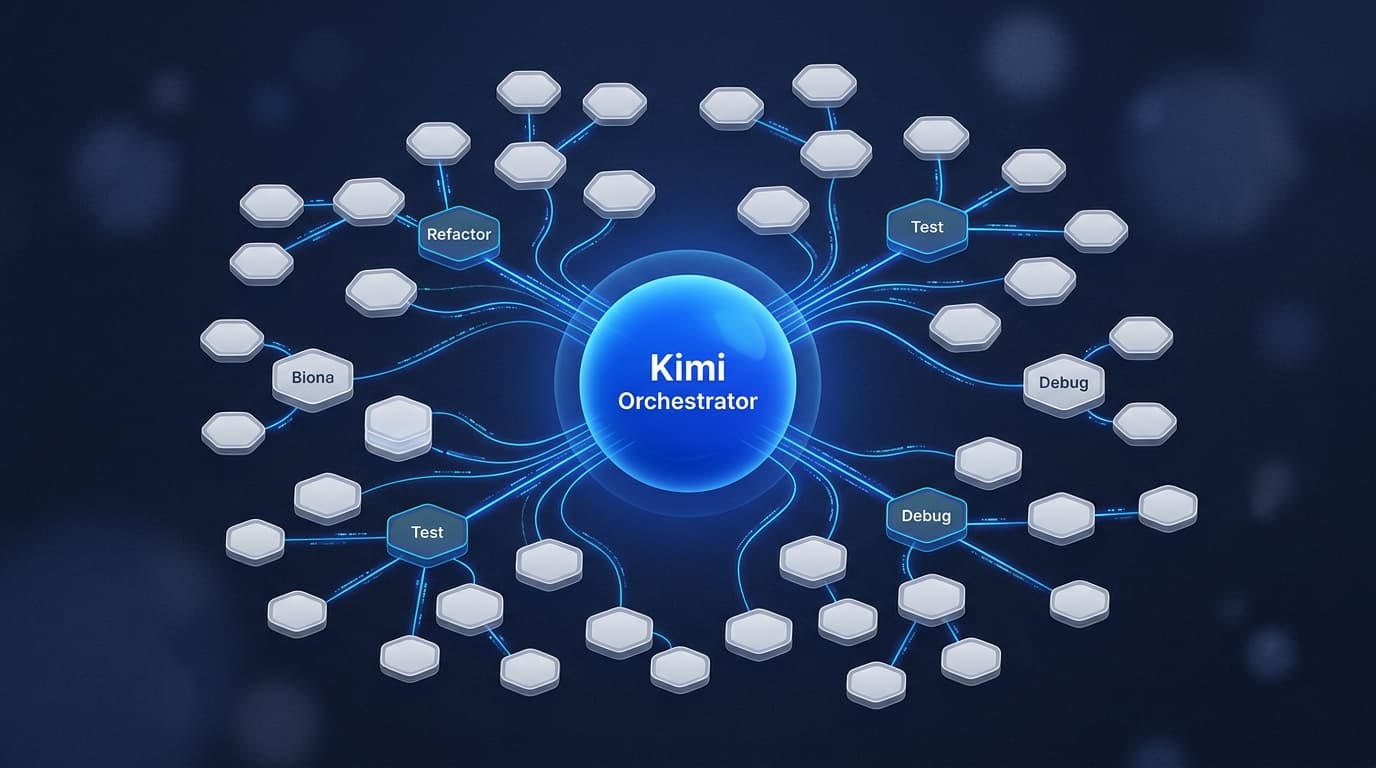

Image 2: hero image ← Back to all posts ## Moonshot AI Kimi K2.6 now available on Workers AI Apr 20, 2026 Workers AI

@cf/moonshotai/kimi-k2.6is now available on Workers AI, in partnership with Moonshot AI for Day 0 support. Kimi K2.6 is a native multimodal agentic model from Moonshot AI that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration. Built on a Mixture-of-Experts architecture with 1T total parameters and 32B active per token, Kimi K2.6 delivers frontier-scale intelligence with efficient i… - [2] How to Use Kimi K2.6: Complete Guide to Moonshot AI's New 1T ...tosea.ai

On April 20, 2026, Moonshot AI released Kimi K2.6 — a 1-trillion-parameter open-source Mixture-of-Experts model positioned directly at the agentic-coding segment that Claude Opus 4.7 and GPT-5.4 have dominated through early 2026. The numbers on paper are striking: SWE-Bench Pro at 58.6% (ahead of both Opus 4.6 and GPT-5.4), Humanity's Last Exam with tools at 54.0% (ahead of both), and a 185% throughput lift over K2.5 in a real 13-hour optimization run against the exchange-core benchmark. For a weights-available Chinese model to lead US frontier labs on commercially relevant agentic benchmar…

- [3] Kimi 2.6 Benchmarks 2026: Scores, Rankings & Performance (benchlm.ai)

Only benchmark rows with an attached exact-source record are shown here. Source-unverified manual rows and generated rows are hidden from model pages. [...] How does Kimi 2.6 perform overall in AI benchmarks? Kimi 2.6 currently ranks #12 out of 115 models on BenchLM's provisional leaderboard with an overall score of 85. It also ranks #6 out of…

- [4] Kimi API Platform - Agentic AI Directory (agentic.ai)

Pricing. Usage-based: K2.6 is listed at $0.16/MTok cache hit, $0.95/MTok input, and $4.00/MTok output. K2.5 is listed at $0.10/MTok cache hit, $0.60/MTok input, and $3.00/MTok output. K2 0905 is listed at $0.15/MTok cache hit, $0.60/MTok input, and $2.50/MTok output. Free: No free tier was clearly shown in the captured content. Enterprise: Not publicly detailed in the captured content. Details platform.moon…

- [5] Kimi K2.6 Model Specs, Costs & Benchmarks (April 2026) | Galaxy.ai (blog.galaxy.ai)

Kimi K2.6, developed by MoonshotAI, features a context window of 262.1K tokens. The model costs $0.80 per million tokens for input and $3.50 per million tokens for output. It was released on April 20, 2026, and has achieved impressive scores in various benchmarks. [...] See how Kimi K2.6 compares with other top models. Programming: Best models for coding and dev…

- [6] Kimi K2.6 Review: Better Reasoning, 100-Agent Swarms (2026) (eesel.ai)

Written by Stevia Putri. Last edited April 21, 2026. Beta testers spent a week inside the K2.6-code-preview and kept noticing something odd: the console still said K2.5, but the model was clearly better. Deeper reasoning traces, cleaner agent planning, and faster multi-step tool calls. On April 13, 2026, Moonshot AI confirmed the rumors with a single email and rolled Kimi K2.6 out to all Kimi Code s…

- [7] Kimi K2.6 Tech Blog: Advancing Open-Source Coding (kimi.com)

| | | | | | |
| --- | --- | --- | --- | --- | --- |
| APEX-Agents | 27.9 | 33.3 | 33.0 | 32.0 | 11.5 |
| OSWorld-Verified | 73.1 | 75.0 | 72.7 | — | 63.3 |
| **Coding** | | | | | |
| Terminal-Bench 2.0 (Terminus-2) | 66.7 | 65.4 | 65.4 | 68.5 | 50.8 |
| SWE-Bench Pro | 58.6 | 57.7 | 53.4 | 54.2 | 50.7 |
| SWE-Bench Multilingual | 76.7 | — | 77.8 | 76.9 | 73.0 |
| SWE-Bench Verified | 80.2 | — | 80.8 | 80.6 | 76.8 |
| SciCode | 52.2 | 56.6 | 51.9 | 58.9 | 48.7 |
| OJBench (python) | 60.6 | — | 60.3 | 70.7 | 54.7 |
| LiveCodeBench (v6) | 89.6 | — | 88.8 | 91.7 | 85.0 |
| **Reasoning & Knowledge** | | | | | |
| HLE-Full | 34.7 | 39.8 | 40.0 | 44.4 | 30.1 |

AIME 2026 |…

- [8] Kimi K2.6: Open-Weight Agent Model - Verdent AI (verdent.ai)

Hanks, Engineer. April 21, 2026. Moonshot AI released Kimi K2.6 on April 20, 2026: 1 trillion parameters, 32B active, open-weight, native multimodal, four variants from quick chat to 300-agent parallel swarms. If you run multi-step coding agents or are evaluating open-weight alternatives to Claude and GPT-5.4, this one is worth understanding. If you don't, it isn't urgent. What follows covers the architecture, the four variants, what actually changed from K2.5, the li…

- [9] moonshotai/Kimi-K2.6 - Hugging Face (huggingface.co)

| | | | | | |
| --- | --- | --- | --- | --- | --- |
| OSWorld-Verified | 73.1 | 75.0 | 72.7 | — | 63.3 |
| **Coding** | | | | | |
| Terminal-Bench 2.0 (Terminus-2) | 66.7 | 65.4 | 65.4 | 68.5 | 50.8 |
| SWE-Bench Pro | 58.6 | 57.7 | 53.4 | 54.2 | 50.7 |
| SWE-Bench Multilingual | 76.7 | — | 77.8 | 76.9 | 73.0 |
| SWE-Bench Verified | 80.2 | — | 80.8 | 80.6 | 76.8 |
| SciCode | 52.2 | 56.6 | 51.9 | 58.9 | 48.7 |
| OJBench (python) | 60.6 | — | 60.3 | 70.7 | 54.7 |
| LiveCodeBench (v6) | 89.6 | — | 88.8 | 91.7 | 85.0 |
| **Reasoning & Knowledge** | | | | | |
| HLE-Full | 34.7 | 39.8 | 40.0 | 44.4 | 30.1 |
| AIME 2026 | 96.4 | 99.2 | 96.7 | 98.3 | 95.8 |

HMMT 2026 (Feb) | 92.7 | 97.7 | 96.2 | 94.7 | 8…

- [10] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

Kimi K2.6. Moonshot AI · Apr 2026 · Modified MIT License. Kimi K2.6 is Moonshot AI's open-source, native multimodal agentic model focused on state-of-the-art coding, long-horizon execution, and agent swarm capabilities. It scales horizontally to 300 sub-agents…

- [11] Moonshot AI (moonshot.ai)

Moonshot AI: Seeking the optimal conversion from energy to intelligence. Latest Research: Our research team works toward AGI while sharing the latest research with the global open-source community. Kimi K2.6 (2026-04-20), Agent Swarm (2026-02-09), WorldVQA (2026-02-03). Complex problems, solved with ease: AI Models is capability. Code, analyze, sheets…

- [12] Moonshot AI releases Kimi K2.6 with long-horizon coding and agent ... (facebook.com)

Artificial Intelligence & Deep Learning | Moonshot AI Releases Kimi K2.6 with Long-Horizon Coding, Agent Swarm Scaling to 300 Sub-Agents and 4,000 Coordinated Steps. Summarized by AI from the post: An open, heterogeneous multi-agent ecosystem where humans and agents from any device, running any model, collaborate in a shared operational space, with K2.6 as the adaptive coordinator. Benchmarks: 54.0 on HLE-Full with tools (leads GPT-5.4, Claude Opus 4.6, G…

- [13] DeepSeek V4 Coding Performance: The Ultimate 2026 Guide (skywork.ai)

- [14] DeepSeek V4 Guide: Engram Memory, Training Data Strategy ... (kili-technology.com)

What's the Current Release Status? As of mid-March 2026, DeepSeek V4 has not been officially released. A "V4 Lite" appeared briefly on DeepSeek's platform on March 9, 2026, suggesting an incremental rollout strategy. Dataconomy, citing Chinese tech outlet Whale Lab, reports an April 2026 launch. The Financial Times previously reported a March release. Multiple earlier launch dates — including mid-February 2026 around Lunar New Year — have passed without a full release. What is publicly available are the foundational papers. The Engram paper was published with open-source code. The mHC pape…

- [15] DeepSeek V4 Preview: The Complete 2026 Guide - o-mega | AI (o-mega.ai)

On GPQA Diamond, Gemini 3.1 Pro leads at 94.3% versus V4-Pro's 90.1%. On ARC-AGI-2 (a test of genuine novel reasoning), Gemini 3.1 Pro leads at 77.1% versus DeepSeek's comparable scores. These gaps suggest that Google's model maintains an edge on deep scientific reasoning tasks. On LiveCodeBench, V4-Pro appears to lead with 93.5 versus Gemini's 91.7. On Codeforces, V4-Pro leads at 3206 versus Gemini's 3052. The coding benchmarks consistently favor DeepSeek - Google AI. [...] Competitive programming and algorithmic coding. V4-Pro's Codeforces rating of 3206 and LiveCodeBench score of 93.5 make…

- [16] DeepSeek V4 Release Date: February 2026 — Benchmarks, Features & What We Know (spectrumailab.com)

Information Verification Status

| Claim | Status | Source |
| --- | --- | --- |
| Release: Mid-February 2026 | REPORTED | The Information (insider sources) |
| Engram memory system | CONFIRMED | Official arXiv paper + GitHub |
| mHC architecture | CONFIRMED | Official arXiv paper |
| Engram/mHC in V4 | SPECULATED | Third-party analysis |
| Beats Claude/GPT at coding | UNVERIFIED | Internal testing claims |
| 1 trillion parameters | RUMORED | No official source |
| 1M token context | RUMORED | No official source |
| Open source (MIT) | EXPECTED | Based on V3 precedent |

[...] Artificial Intelligence # De…

- [17] DeepSeek V4: Complete Guide to the 1T AI Model - Emelia.io (emelia.io)

January 12, 2026: Publication of the Engram memory paper, considered a foundational building block of V4. January 2026: Code reference leaked under the name "MODEL1" on GitHub. February 11, 2026: Silent expansion of the context window to 1 million tokens on the existing API. February 17, 2026: Expected announcement date per community speculation. Nothing happened. March 3, 2026: Rumored launch date, coinciding with the Two Sessions (a major political event in China). Still nothing. March 5, 2026: OpenAI launches GPT-5.4. March 10, 2026: Still no official release. [...] DeepSeek V4 is the next…

- [18] deepseek-ai/DeepSeek-V4-Pro - Hugging Face (huggingface.co)

| | Opus-4.6 Max | GPT-5.4 xHigh | Gemini-3.1-Pro High | K2.6 Thinking | GLM-5.1 Thinking | DS-V4-Pro Max |
| --- | :---: | :---: | :---: | :---: | :---: | :---: |
| **Knowledge & Reasoning** | | | | | | |
| MMLU-Pro (EM) | 89.1 | 87.5 | 91.0 | 87.1 | 86.0 | 87.5 |
| SimpleQA-Verified (Pass@1) | 46.2 | 45.3 | 75.6 | 36.9 | 38.1 | 57.9 |
| Chinese-SimpleQA (Pass@1) | 76.4 | 76.8 | 85.9 | 75.9 | 75.0 | 84.4 |
| GPQA Diamond (Pass@1) | 91.3 | 93.0 | 94.3 | 90.5 | 86.2 | 90.1 |
| HLE (Pass@1) | 40.0 | 39.8 | 44.4 | 36.4 | 34.7 | 37.7 |
| LiveCodeBench (Pass@1) | 88.8 | - | 91.7 | 89.6 | - | 93.5 |
| Codeforces (Rating) | - | 3168 | 3052 | - | - | 3206 |

HMM…

- [19] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performance (llm-stats.com)

Output: $3.48/M. Throughput: 32 tok/s. Parameters: 1.6T. Arena Performance: #64 Websites. Leaderboard Rankings: #5 Math, #6 Healthcare, #7 Coding, #7 Search, #7 Tool Calling, #9 Reasoning, #9 Legal, #10 Finance, #20 Vision, #33 Long Context. [...] DeepSeek-V4-Pro-Max Performance Across Datasets: Scores sourced from the model's scorecard, paper, or official blog posts. llm-stats.com - Sat Apr 25…

- [20] How to Use DeepSeek V4: Complete Guide to the New 1T MoE ... (tosea.ai)

Benchmark Performance The leaked and now-confirmed benchmark numbers position V4 inside the frontier tier, with the strongest gains on coding and long-context tasks. The published comparison sets V4-Pro and V4-Flash against the other April 2026 frontier releases: Image 6: DeepSeek V4 benchmark comparison bar chart showing DeepSeek-V4-Pro-Max versus Claude-Opus-4.6-Max, GPT-5.4-xHigh, and Gemini-3.1-Pro-High across Knowledge & Reasoning and Agentic Capabilities categories The full benchmark table — including the smaller V4-Flash tier and the open-source comparison set — gives a more complet…

- [21] DeepSeek V4 Released: What's New in the Latest Model (2026) (sitepoint.com)

On Arena-Hard style evaluations, a benchmark format testing instruction following under adversarial conditions (see lmarena.ai), V4 would be expected to show gains over V3. The exact margin varies by task category, and without published scores, any specific number would be fabrication. ### Where V4 Might Excel (and Where It Might Not) V4's projected strengths cluster around coding tasks, multilingual generation, long-context information retrieval, and structured reasoning. Developers building coding assistants, RAG pipelines over large document sets, or agents requiring extended conversationa…

- [22] DeepSeek V4: Architecture, Benchmarks, and API Guide (2026) (morphllm.com)

| Benchmark | DeepSeek V4 (claimed) | Claude Opus 4.6 | GPT-5.3 Codex | DeepSeek V3.2 |
| --- | --- | --- | --- | --- |
| SWE-bench Verified | 80-85% | 80.8% | 77.3% | ~65% |
| HumanEval | ~90% | ~88% | ~85% | ~80% |
| Context window | 1M tokens | 1M tokens (beta) | 128K tokens | 128K tokens |
| Active params | 32B | N/A (dense) | N/A (dense) | 37B |
| Input price / 1M tokens | ~$0.14 (projected) | ~$15 | ~$15 | $0.27 |

The SWE-bench Verified claim is the one to watch. Claude Opus 4.5 was the first model to crack 80% in that benchmark. If V4 exceeds 80.8% at $0.14/M input tokens, it changes the cost structure for ag…

- [23] Deepseek v4 models are out and here are benchmarks !( 4 versions) (reddit.com)

- [24] Deepseek v4: Best Opensource Model Ever? (Fully Tested) - YouTube (youtube.com)

WorldofAI (214K subscribers). 19,407 views • Apr 24, 2026 • #DeepSeek #AI #LLM. DeepSeek is BACK with the V4 release… but is it actually the best open-source model ever? In this video, I put DeepSeek V4 Pro and DeepSeek V4 Flash to the test — from coding, agentic workflows, and long-contex…

- [25] Update benchmark figure · deepseek-ai/DeepSeek-V4-Flash at ... (huggingface.co)

DeepSeek-V4-Flash. like 317. Follow. DeepSeek 127k. Text Generation · Transformers ... Update benchmark figure. Browse files. Files changed (1) hide show. assets ...

- [26] DeepSeek_V4.pdf (huggingface.co)

Overall, DeepSeek-V4 series retain the Transformer (Vaswani et al., 2017) architecture and Multi-Token Prediction (MTP) modules (DeepSeek-AI, 2024; Gloeckle et al., 2024), while introducing several key upgrades over DeepSeek-V3: (1) firstly, we introduce the Manifold-Constrained Hyper-Connections (mHC) (Xie et al., 2026) to strengthen conventional residual connections; (2) secondly, we design a hybrid attention architecture, which greatly improves long-context efficiency through Compressed Sparse Attention and Heavily Compressed Attention. (3) thirdly, we employ Muon (Jordan et al., 2024; L…

- [27] deepseek-ai/DeepSeek-V4-Pro at main - Hugging Face (huggingface.co)

Update benchmark figure 2 days ago · encoding · Release DeepSeek-V4 2 days ago · inference · Update inference/config.json 2 days ago .gitattributes. Safe. 1.67 ...

- [28] deepseek-ai/DeepSeek-V4-Pro - Commits - Hugging Face (huggingface.co)

DeepSeek-V4-Pro. like 1.6k. Follow. DeepSeek 127k. Text Generation · Transformers ... Update benchmark figure. 833c42d. msr2000 commited on in about 3 hours ...

- [29] deepseek-ai/DeepSeek-V4-Flash at main - Hugging Face (huggingface.co)

DeepSeek-V4-Flash. like 378. Follow. DeepSeek 127k ... DeepSeek-V4-Flash / assets. 1 MB. Ctrl+K. Ctrl+ ... Update benchmark figure · 0cad8ee in about 3 hours.

- [30] Commits · deepseek-ai/DeepSeek-V4-Flash - Hugging Face (huggingface.co)

DeepSeek-V4-Flash. like 320. Follow. DeepSeek 127k ... DeepSeek-V4-Flash. Commit History. Update ... Update benchmark figure. 0cad8ee. msr2000 commited ...

- [31] Commits · deepseek-ai/DeepSeek-V4-Pro - Hugging Face (huggingface.co)

DeepSeek-V4-Pro / assets. Commit History. Update benchmark figure. 833c42d. msr2000 commited on in about 3 hours. Release DeepSeek-V4. 0e1a0e5. 2 days ago

- [32] deepseek-ai/DeepSeek-V4-Pro at main - Hugging Face (huggingface.co)

deepseek-ai. /. DeepSeek-V4-Pro. like 2.61k. Follow. DeepSeek 127k. Text Generation ... Update benchmark figure · 833c42d about 21 hours ago.

- [33] Change Log | DeepSeek API Docs (api-docs.deepseek.com)

  - 2026-04-24: DeepSeek-V4
  - 2025-12-01: DeepSeek-V3.2, DeepSeek-V3.2-Speciale
  - 2025-09-29: DeepSeek-V3.2-Exp
  - 2025-09-22: DeepSeek-V3.1-Terminus
  - 2025-08-21: DeepSeek-V3.1
  - 2025-05-28: deepseek-reasoner
  - 2025-03-24: deepseek-chat
  - 2025-01-20: deepseek-reasoner
  - 2024-12-26: deepseek-chat
  - 2024-12-10: deepseek-chat
  - 2024-09-05: deepseek-coder & deepseek-chat Upgraded to DeepSeek V2.5 Model
  - 2024-08-02: API Launches Context Caching on Disk Technology
  - 2024-07-25: New API Features
  - 2024-07-24: deepseek-coder
  - 2024-06-28: deep…

- [34] DeepSeek V4 Preview Release | DeepSeek API Docs (api-docs.deepseek.com)

⚠️ Note: deepseek-chat & deepseek-reasoner will be fully retired and inaccessible after Jul 24th, 2026, 15:59 (UTC Time). (Currently routing to deepseek-v4-flash non-thinking/thinking). 🔹 Amid recent attention, a quick reminder: please rely only on our official accounts for DeepSeek news. Statements from other channels do not reflect our views. 🔹 Thank you for your continued trust. We remain committed to longtermism, advancing steadily toward our ultimate goal of AGI. [...] DeepSeek-V4-Pro DeepSeek-V4-Flash Structural Innovation & Ultra-…

- [35] deepseek-ai/DeepSeek-V4-Pro-Base · Create README.md (huggingface.co)

DeepSeek-V4-Pro-Max vs Frontier Models

| Benchmark (Metric) | Opus-4.6 Max | GPT-5.4 xHigh | Gemini-3.1-Pro High | K2.6 Thinking | GLM-5.1 Thinking | DS-V4-Pro Max |
| --- | --- | --- | --- | --- | --- | --- |
| **Knowledge & Reasoning** | | | | | | |
| MMLU-Pro (EM) | 89.1 | 87.5 | 91.0 | 87.1 | 86.0 | 87.5 |
| SimpleQA-Verified (Pass@1) | 46.2 | 45.3 | 75.6 | 36.9 | 38.1 | 57.9 |
| Chinese-SimpleQA (Pass@1) | 76.4 | 76.8 | 85.9 | 75.9 | 75.0 | 84.4 |
| GPQA Diamond (Pass@1) | 91.3 | 93.0 | 94.3 | 90.5 | 86.2 | 90.1 |
| HLE (Pass@1) | 40.0 | 39.8 | 44.4 | 36.4 | 34.7 | 37.7 |
| LiveCodeBench (Pass@1) | 88.8 | - | 91.7 | 89.6 | - | 93.5 |
| Codeforces (Rating) | - | 3168 | 3052 | - | - | 3206 |
| HMMT 2026 Feb (Pass@1) | 96.2 | 97.7 | 94.7 | 92.7 | 89.4 | 95.2 |

…

- [36] DeepSeek-R1 Release | DeepSeek API Docs (api-docs.deepseek.com)

API Reference News DeepSeek-V4 Preview Release 2026/04/24 DeepSeek-V3.2 Release 2025/12/01 DeepSeek-V3.2-Exp Release 2025/09/29 DeepSeek V3.1 Update 2025/09/22 DeepSeek V3.1 Release 2025/08/21 DeepSeek-R1-0528 Release 2025/05/28 DeepSeek-V3-0324 Release 2025/03/25 DeepSeek-R1 Release 2025/01/20 DeepSeek APP 2025/01/15 Introducing DeepSeek-V3 2024/12/26 DeepSeek-V2.5-1210 Release 2024/12/10 DeepSeek-R1-Lite Release 2024/11/20 DeepSeek-V2.5 Release 2024/09/05 Context Caching is Available 2024/08/02 New API Features 2024/07/25 Other Resources FAQ Change Log 💰 $0.55 / million input tokens (cache…

- [37] DeepSeek-R1-0528 Release | DeepSeek API Docs (api-docs.deepseek.com)

DeepSeek-R1-0528 Release 🚀 DeepSeek-R1-0528 is here! 🔹 Improved benchmark performance 🔹 Enhanced front-end capabilities 🔹 Reduced hallucinations 🔹 Supports JSON output & function calling ✅ Try it now: 🔌 No change to API usage — docs here: 🔗 Open-source weights: Image 2 Image 3 Previous DeepSeek-V3.1 ReleaseNext DeepSeek-V3-0324 Release WeChat Official Account Image 4: WeChat QRcode Community Email Discord Twitter More GitHub Copyright © 2026 DeepSeek, Inc. [...] API Reference News DeepSeek-V4 Preview Release 2026/04/24 DeepSeek-V3.2 Release 2025/12/01 DeepSeek-V3.2-Exp Release 2025/0…

- [38] DeepSeek-V3.1-Terminus | DeepSeek API Docs (api-docs.deepseek.com)

API Reference News DeepSeek-V4 Preview Release 2026/04/24 DeepSeek-V3.2 Release 2025/12/01 DeepSeek-V3.2-Exp Release 2025/09/29 DeepSeek V3.1 Update 2025/09/22 DeepSeek V3.1 Release 2025/08/21 DeepSeek-R1-0528 Release 2025/05/28 DeepSeek-V3-0324 Release 2025/03/25 DeepSeek-R1 Release 2025/01/20 DeepSeek APP 2025/01/15 Introducing DeepSeek-V3 2024/12/26 DeepSeek-V2.5-1210 Release 2024/12/10 DeepSeek-R1-Lite Release 2024/11/20 DeepSeek-V2.5 Release 2024/09/05 Context Caching is Available 2024/08/02 New API Features 2024/07/25 Other Resources FAQ Change Log News DeepSeek V3.1 Update 2025/09/…

- [39] DeepSeek-V3.2 Release | DeepSeek API Docs (api-docs.deepseek.com)

API Reference News DeepSeek-V4 Preview Release 2026/04/24 DeepSeek-V3.2 Release 2025/12/01 DeepSeek-V3.2-Exp Release 2025/09/29 DeepSeek V3.1 Update 2025/09/22 DeepSeek V3.1 Release 2025/08/21 DeepSeek-R1-0528 Release 2025/05/28 DeepSeek-V3-0324 Release 2025/03/25 DeepSeek-R1 Release 2025/01/20 DeepSeek APP 2025/01/15 Introducing DeepSeek-V3 2024/12/26 DeepSeek-V2.5-1210 Release 2024/12/10 DeepSeek-R1-Lite Release 2024/11/20 DeepSeek-V2.5 Release 2024/09/05 Context Caching is Available 2024/08/02 New API Features 2024/07/25 Other Resources FAQ Change Log to support community evaluation & rese…

- [40] deepseek-ai (DeepSeek) (huggingface.co)

  - deepseek-ai/DeepSeek-V4-Pro: Text Generation • 862B • Updated 2 days ago • 78.9k • 2.73k
  - deepseek-ai/DeepSeek-V4-Flash: Text Generation • 158B • Updated 2 days ago • 25.4k • 699
  - deepseek-ai/DeepSeek-V4-Pro-Base: 1.6T • Updated 2 days ago • 804 • 213
  - deepseek-ai/DeepSeek-V4-Flash-Base: 292B • Updated 2 days ago • 1.87k • 159
  - deepseek-ai/DeepSeek-OCR-2: Image-Text-to-Text • 3B • Updated Feb 3 • 1.49M • 927
  - deepseek-ai/DeepSeek-V3.2-Speciale: Text Generation • Updated Dec 1, 2025 • 18.6k • 702
  - deepseek-ai/DeepSeek-V3.2: Text Generat…

- [41] DeepSeek | 深度求索 (deepseek.com)

🎉 The DeepSeek-V4 preview release is out, with world-class reasoning performance and greatly improved Agent capabilities. It is now live on the web, in the app, and through the API. [...] © 2026 杭州深度求索人工智能基础技术研究有限公司

- [42] Introducing DeepSeek-V3.2-Exp (api-docs.deepseek.com)

News. DeepSeek-V4 Preview Release 2026/04/24 · DeepSeek-V3.2 Release 2025/12/01 · DeepSeek-V3.2-Exp Release 2025/09/29 · DeepSeek V3.1 Update