Research and compare the benchmarks of GPT-5.5, Claude Opus 4.7, DeepSeek V4, and Kimi K2.6.

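Research answer

The provided evidence is insufficient for a rigorous side-by-side comparison of GPT-5.5, Claude Opus 4.7, DeepSeek V4, and Kimi K2.6 on a common benchmark. Within what can be confirmed, GPT-5.5 is described as the "new leading model", while Claude Opus 4.7 is also suggested to hold a top position in an Artificial Analysis context, and Kimi K2.6 has confirmed figures only for a few public model-comparison metrics. [3][7][34]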

Key findings

| モデル | 確認できたベンチマーク・性能情報 | 比較上の解釈 |

|---|---|---|

| GPT-5.5 | Artificial Analysis関連記事のスニペットでは、2026年4月24日時点で「新たな主要/首位AIモデル」とされている。[ | 総合性能で非常に強い可能性はあるが、提供証拠には具体的なスコア、評価項目、競合平均との差がないため、定量順位は確定できない。[ |

| Claude Opus 4.7 | Mashableスニペットでは、AnthropicがClaude Opus 4.7について「92%のhonesty rate」と「より少ないsycophancy」を主張している。[ | 安全性・誠実性系の指標では具体値があるが、GPT-5.5やKimi K2.6と同じ総合ベンチマークでの数値比較はできない。[ |

| DeepSeek V4 | Artificial Analysisの公開モデル比較スニペットに「DeepSeek V4 Pro」の記載はあるが、スコアや速度、価格などの数値は提示されていない。[ | Insufficient evidence. 提供証拠だけではDeepSeek V4のベンチマーク順位・強み・弱みを判断できない。[ |

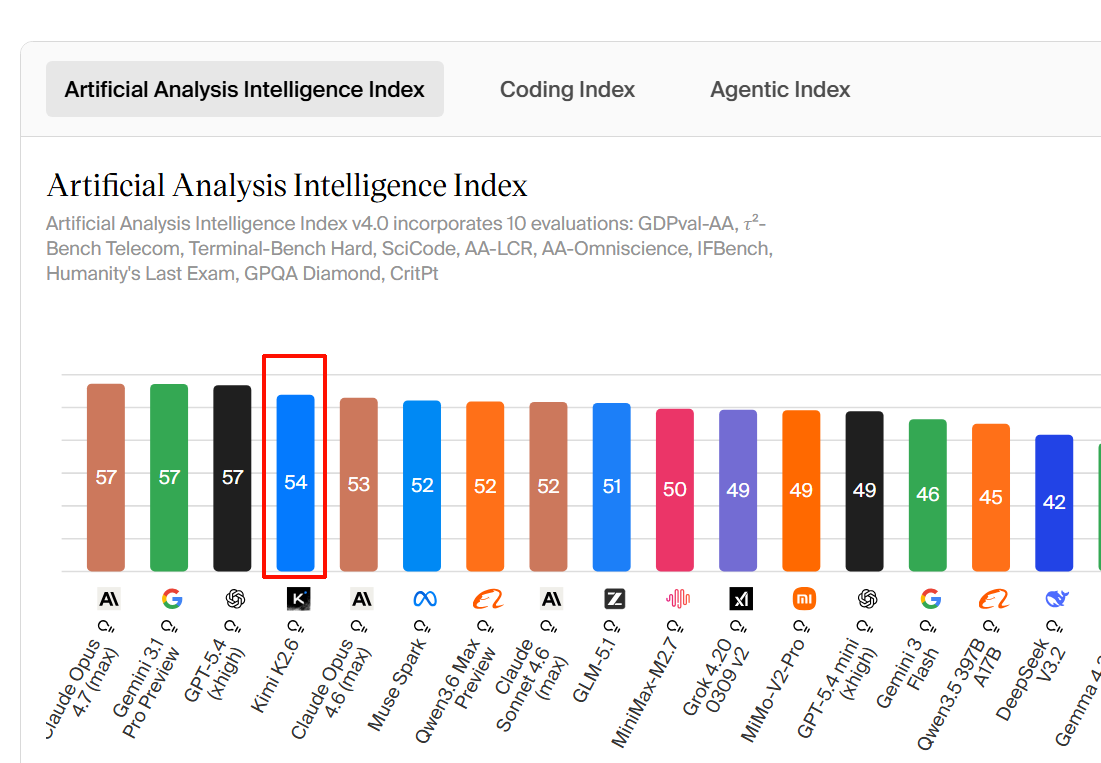

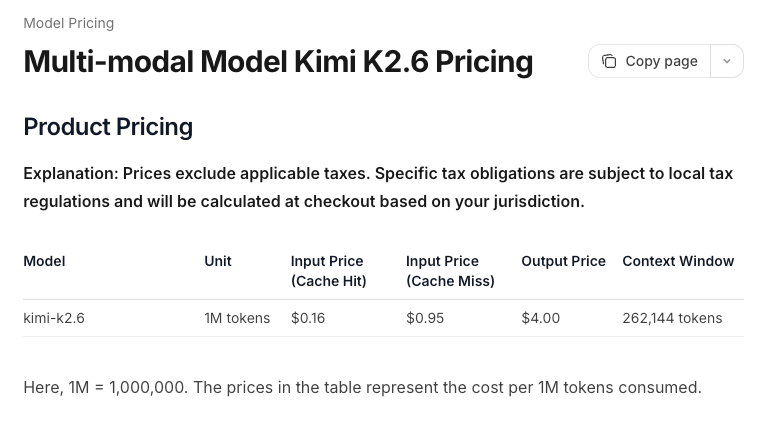

| Kimi K2.6 | Artificial Analysisの公開モデル比較スニペットでは、Kimi K2.6のIntelligenceが54、コンテキスト長が256k、価格が$1.7、出力速度が112 tokens/sと示されている。[ | 4モデル中で最も具体的な公開比較数値が確認できるが、GPT-5.5やClaude Opus 4.7と同一指標で直接比較できる証拠はない。[ |

Evidence notes

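- Stanford HAI's 2026 AI Index reports that, as of February 2026, the top models on SWE-bench Verified were clustered in the low-to-mid 70% range, with Claude 4.5 Opus (high reasoning) leading at approximately 76.8% and Kimi K2.5, GPT-5.2, and Gemini 3 Flash (high reasoning) falling between 70% and 76%. [2]
- However, this Stanford HAI description does not cover Claude Opus 4.7, GPT-5.5, Kimi K2.6, or DeepSeek V4 themselves; it is background information on the preceding generation or on other models. [2]
- The provided SciCode Bench snippet lists Claude Sonnet 4 high, Gemini 2.5 Pro, GPT-5-1-codex, Grok 4, and others, but no direct scores can be confirmed for the models under comparison: GPT-5.5, Claude Opus 4.7, DeepSeek V4, and Kimi K2.6. [1]
- GPT-5.5 is described as the "new leader", yet another snippet reads as though Claude Opus 4.7 also sits at the top in the Artificial Analysis Intelligence context, so there may be an inconsistency caused by differences in timing, evaluation sets, or snippet context. [3][34]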

Limitations / uncertainty

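- Insufficient evidence. The provided evidence contains no table or score that compares all four models on the same benchmark, on the same date, and with the same inference settings. [1][3][5][7][34]
- DeepSeek V4 is particularly under-documented: the provided evidence yields no quantitative information beyond the appearance of the model name "DeepSeek V4 Pro". [7]
- Claude Opus 4.7's "92% honesty rate" is a safety and honesty metric and cannot be treated on par with coding, math, agentic performance, or overall intelligence scores. [4]
- Kimi K2.6's Intelligence 54, 256k context, $1.7, and 112 tokens/s are useful comparison material, but because the same columns are not provided for GPT-5.5 or Claude Opus 4.7, no relative ranking can be derived. [7]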
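The comparability argument in these limitations can be checked mechanically. The following is a minimal sketch, not part of the original research output: it records only the figures quoted in the Key findings table, marks everything else as missing, and asks which metrics are reported for all four models. The field names (`intelligence`, `context_k`, `price_usd`, `tokens_per_s`, `honesty_rate`) and the helper functions are hypothetical labels chosen for illustration.

```python
from typing import Optional

# Figures transcribed from the Key findings table. None marks a value that
# the provided evidence does not report. Field names are hypothetical.
REPORTED: dict[str, dict[str, Optional[float]]] = {
    "GPT-5.5": {"intelligence": None, "context_k": None,
                "price_usd": None, "tokens_per_s": None},
    "Claude Opus 4.7": {"intelligence": None, "context_k": None,
                        "price_usd": None, "tokens_per_s": None,
                        "honesty_rate": 0.92},
    "DeepSeek V4": {"intelligence": None, "context_k": None,
                    "price_usd": None, "tokens_per_s": None},
    "Kimi K2.6": {"intelligence": 54, "context_k": 256,
                  "price_usd": 1.7, "tokens_per_s": 112},
}


def comparable_metrics(reported: dict[str, dict[str, Optional[float]]]) -> list[str]:
    """Return the metrics for which every model has a reported value."""
    all_metrics: set[str] = set()
    for values in reported.values():
        all_metrics.update(values)
    return sorted(
        metric
        for metric in all_metrics
        if all(values.get(metric) is not None for values in reported.values())
    )


def coverage(reported: dict[str, dict[str, Optional[float]]]) -> dict[str, int]:
    """Count how many metrics each model has a reported value for."""
    return {
        model: sum(value is not None for value in values.values())
        for model, values in reported.items()
    }


if __name__ == "__main__":
    print("Metrics reported for all four models:", comparable_metrics(REPORTED))
    print("Reported values per model:", coverage(REPORTED))
```

Under these assumptions the list of shared metrics comes out empty, which is the same conclusion the limitations state in prose: a ranking would require at least one metric reported for every model.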




Sources

- [1] Claude Opus 4.7 vs. Kimi K2.6 Comparison - SourceForge (sourceforge.net)

| About Claude Opus 4.7 is the latest Anthropic AI model release designed to significantly improve performance in advanced software engineering and complex problem-solving tasks. It builds upon the previous Opus 4.6 model by delivering stronger results on difficult coding challenges and long-running workflows. The model is known for its ability to follow instructions precisely and verify its own outputs for greater reliability. It also introduces enhanced multimodal capabilities, particularly in processing high-resolution images with improved accuracy. Opus 4.7 supports more detailed visual t…

- [2] DeepSeek is back among the leading open weights models with V4 Pro ... (artificialanalysis.ai)

Lower cost than frontier models, but high token usage keeps costs above most open weights peers: DeepSeek V4 Pro costs $1,071 to run the Artificial Analysis Intelligence Index, more than 4x cheaper than Claude Opus 4.7 ($4,811) but above several open weights models, including Kimi K2.6 ($948), GLM-5.1 ($544), DeepSeek V3.2 ($71), and gpt-oss-120B ($67). This is partly driven by high output token usage: 190M tokens for V4 Pro and 240M for V4 Flash, despite Flash being much cheaper overall at $113. [...] ➤ Cheaper than frontier models but more expensive than other open weights models and a larg…

- [3] DeepSeek V4 is here: How it compares to ChatGPT, Claude, Gemini (mashable.com)

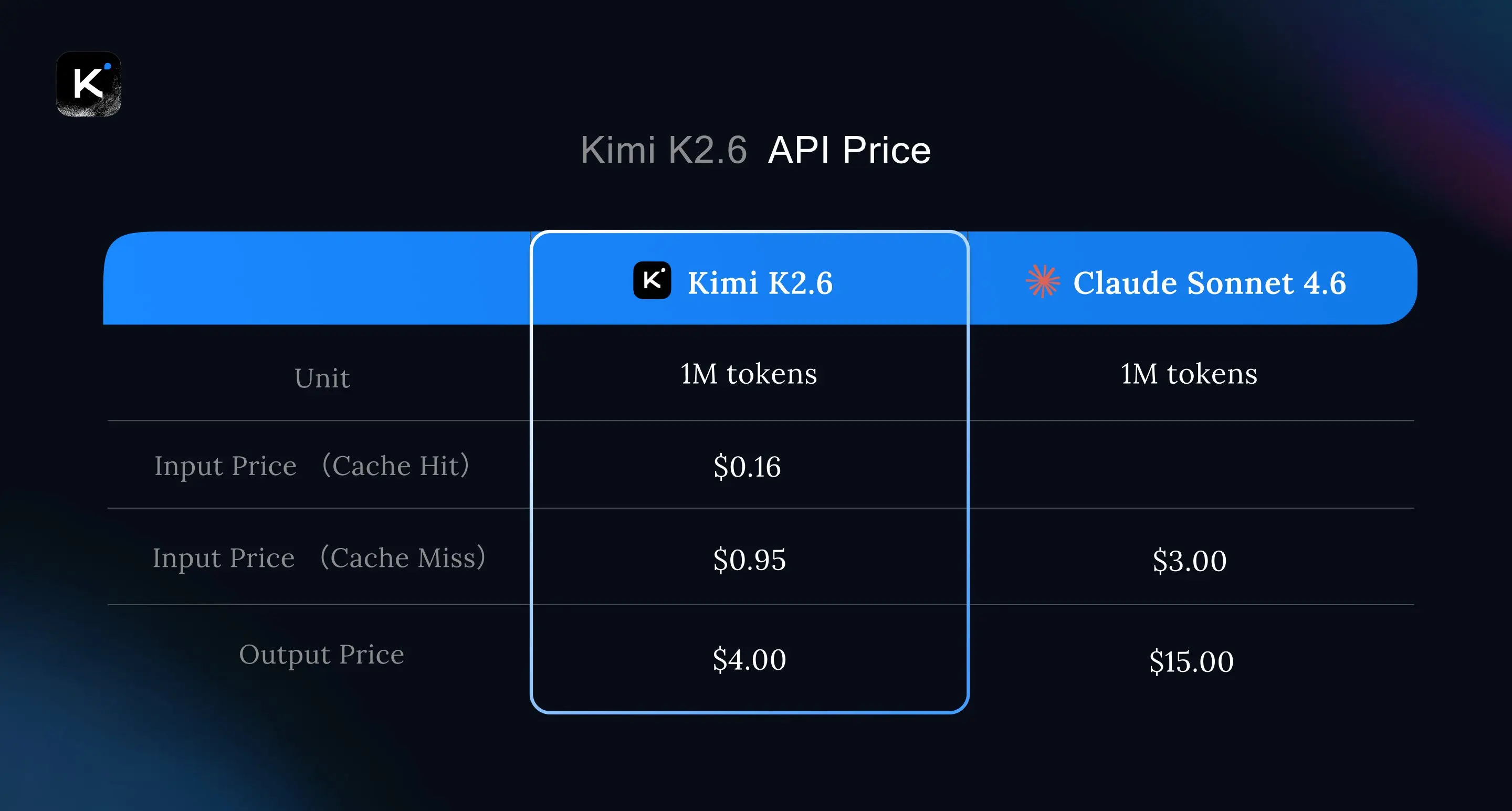

Here's how the API pricing compares: DeepSeek V4 costs $1.74 per 1 million input tokens and $3.48 per 1 million output tokens (1 million context window) GPT-5.5 costs at $5 per 1 million input tokens and $30 per 1 million output tokens (1 million context window) Claude Opus 4.7 costs at $5 per 1 million input tokens and $25 per 1 million output tokens (1 million context window) Google Gemini 3.1 Pro costs $2 per 1 million input tokens and $12 per 1 million output tokens As you can see, DeepSeek is about one-sixth the cost of the latest U.S. models, which is a huge edge. Even the more affordab…

- [4] GPT-5.5 vs Claude Opus 4.7: Pricing, Speed, Benchmarks (llm-stats.com)

The Verdict On the 10 benchmarks both providers report, Opus 4.7 leads on 6 and GPT-5.5 leads on 4. The leads cluster by category, not by overall quality: Opus 4.7 is ahead on the reasoning-heavy and review-grade tests (GPQA Diamond, HLE with and without tools, SWE-Bench Pro, MCP Atlas, FinanceAgent v1.1). GPT-5.5 is ahead on the long-running tool-use tests (Terminal-Bench 2.0, BrowseComp, OSWorld-Verified, CyberGym). Margins are mostly between 2 and 13 percentage points, and every score is self-reported at each provider's high reasoning tier — comparable in shape, not in methodology. [...…

- [5] GPT‑5 vs Kimi K2.6 - Detailed Performance & Feature Comparison (docsbot.ai)

GPT‑5 vs Claude Opus 4.5 GPT‑5 vs Claude Opus 4.6 GPT‑5 vs Claude Opus 4.7 GPT‑5 vs Claude Sonnet 4.6 GPT‑5 vs Claude Haiku 4.5 GPT‑5 vs Claude 4.5 Sonnet GPT‑5 vs Amazon Nova Micro GPT‑5 vs Amazon Nova Lite GPT‑5 vs Amazon Nova Pro GPT‑5 vs Command A GPT‑5 vs Gemma 4 31B GPT‑5 vs Gemini 2.5 Pro GPT‑5 vs Gemini 3 Pro GPT‑5 vs Gemini 3.1 Pro GPT‑5 vs Gemini 3.1 Flash-Lite GPT‑5 vs Gemini 3 Flash GPT‑5 vs Gemini 2.5 Flash GPT‑5 vs Gemini 2.5 Flash Lite GPT‑5 vs Llama 4 Scout GPT‑5 vs Llama 4 Maverick GPT‑5 vs Llama 4 Behemoth GPT‑5 vs Mistral Large 2 GPT‑5 vs GPT-4.1 GPT‑5 vs GPT-4.1 Mini GPT‑5…

- [6] Kimi K2.6 vs Claude Opus 4.6 vs GPT-5.4 - Verdent AI (verdent.ai)

| Benchmark | K2.6 | Claude Opus 4.6 | GPT-5.4 | Notes | --- --- | SWE-Bench Pro | 58.60% | 53.40% | 57.70% | Moonshot in-house harness; SEAL mini-swe-agent puts GPT-5.4 at 59.1%, Opus 4.6 at 51.9% | | SWE-Bench Verified | 80.20% | 80.80% | ~80% | Tight cluster; Opus 4.7 now leads at 87.6% | | Terminal-Bench 2.0 | 66.70% | 65.40% | 65.40% | See note below | | HLE with tools | 54.00% | 53.00% | 52.10% | All three within 2 points | | LiveCodeBench v6 | 89.60% | 88.80% | — | v6 as of April 2026 | Source for all K2.6 numbers: Moonshot AI official model card, April 20, 2026. Claude Opus 4.6 number…

- [7] Kimi K2.6 vs Claude Opus 4.7 - AI Model Comparison | OpenRouter (openrouter.ai)

Beyond coding, Opus 4.7 brings improved knowledge work capabilities - from drafting documents and building presentations to analyzing data. It maintains coherence across very long outputs and extended sessions, making it a strong default for tasks that require persistence, judgment, and follow-through. For users upgrading from earlier Opus versions, see our official migration guide here ### Activity Prompt 120B Completion 927M Reasoning 22.7M ### Provider Image 8: Favicon for Anthropic Anthropic1.77s Throughput (p50)48.0 tok/s Visualize Performance Ready Output will appear here... ### Pricing…

- [8] Kimi K2.6 vs DeepSeek V2 vs GPT-5.5 vs Claude Opus 4.7: Which Should You Test First? | LaoZhang AI Blog (blog.laozhang.ai)

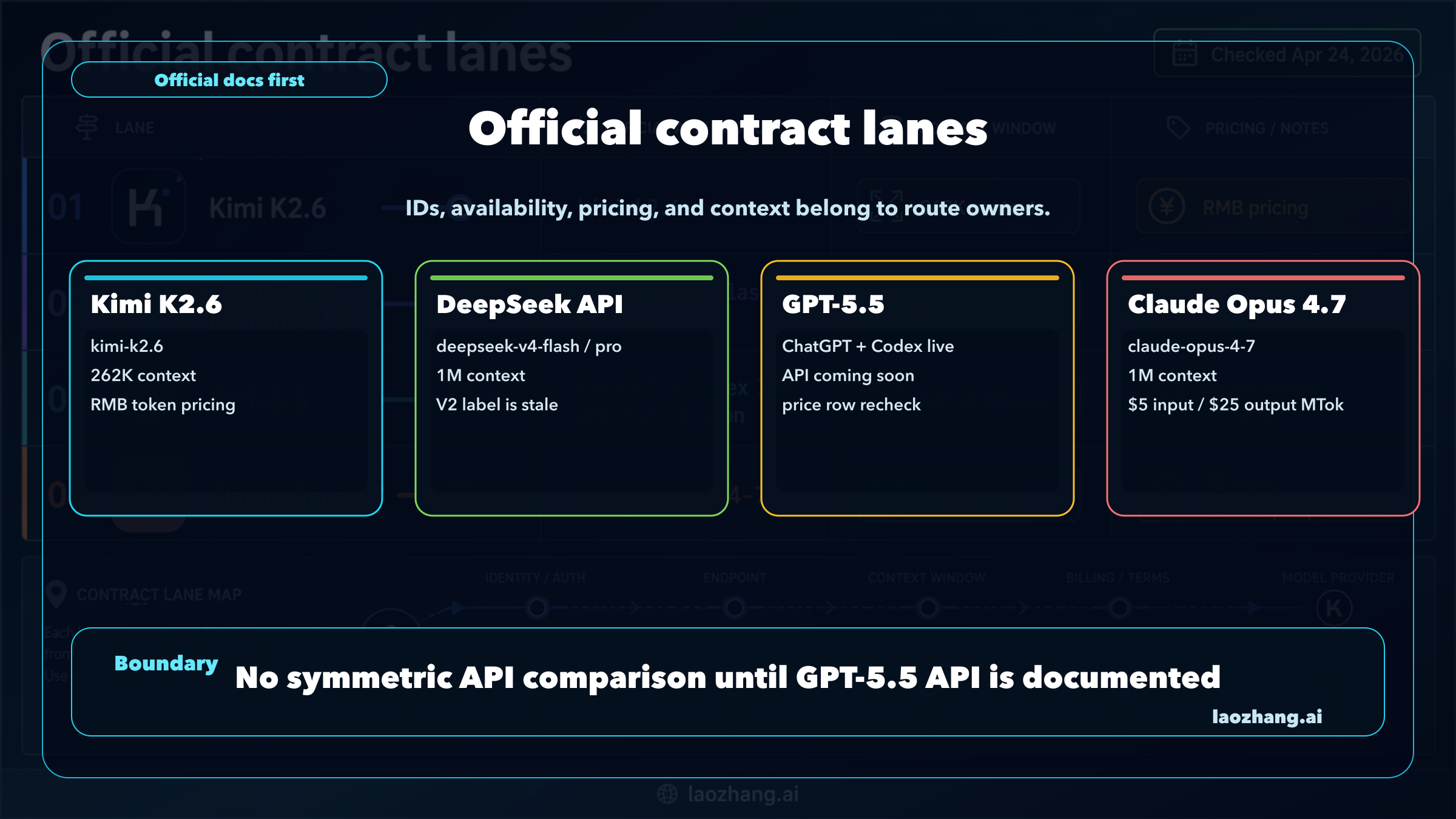

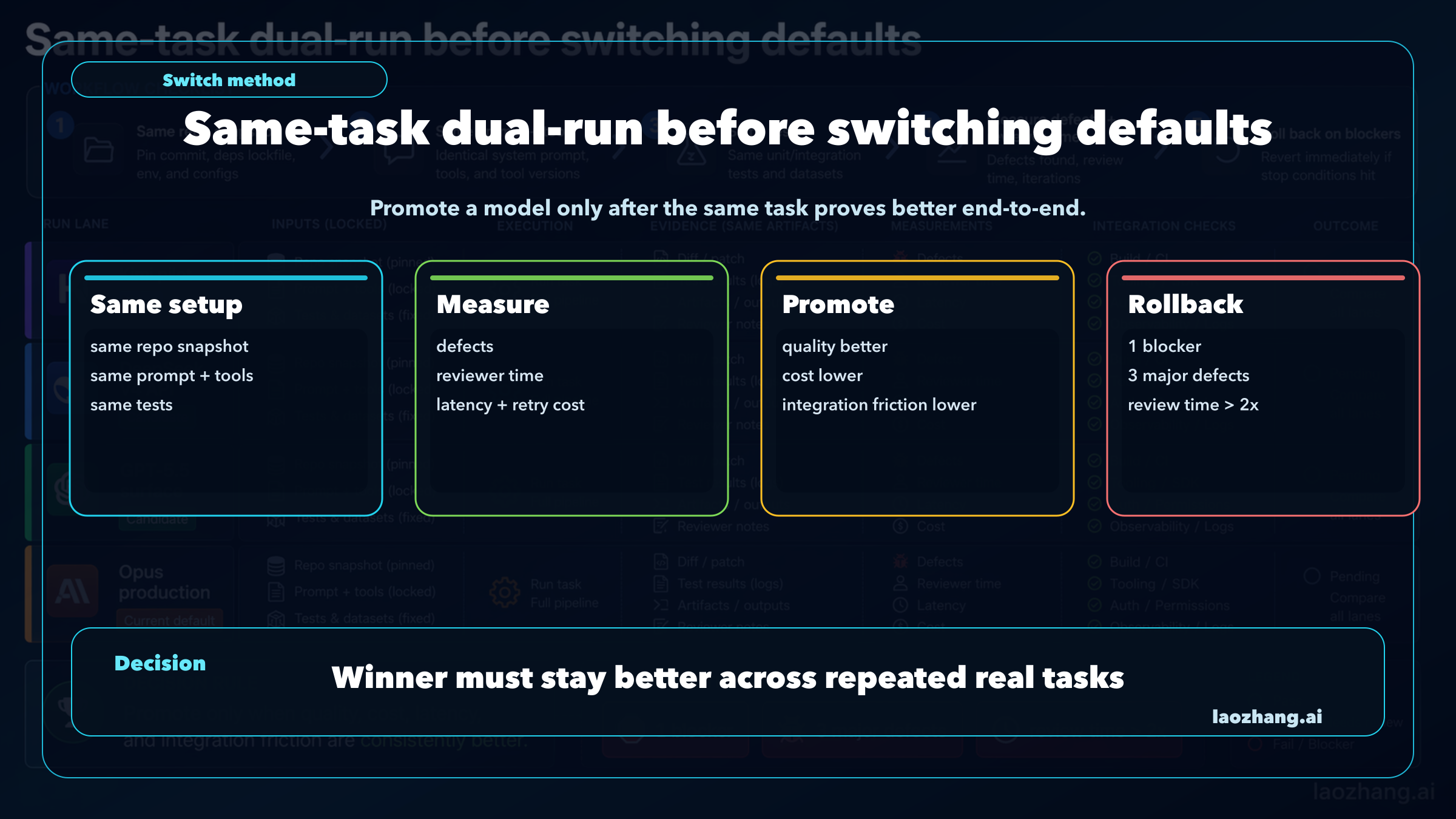

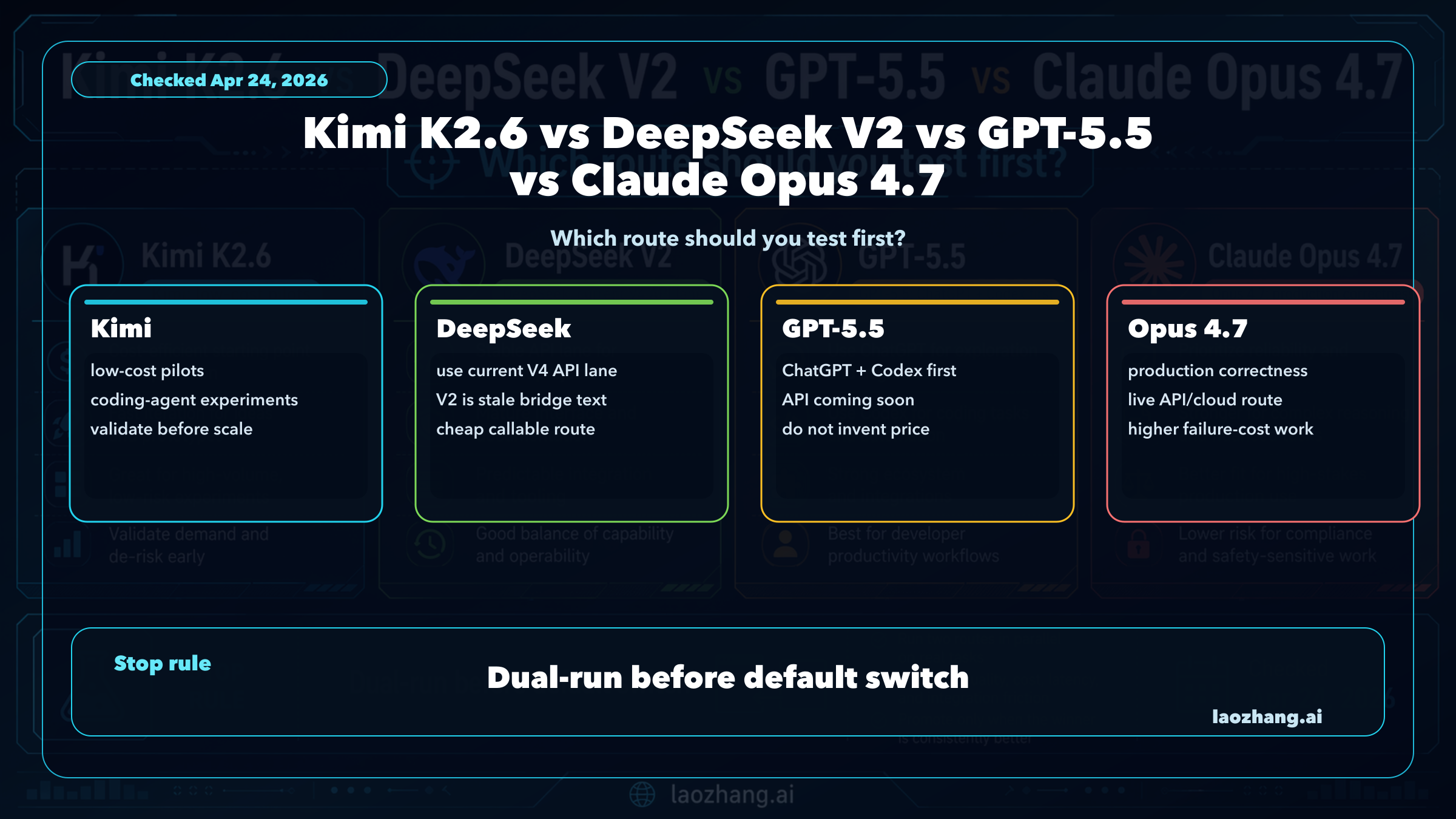

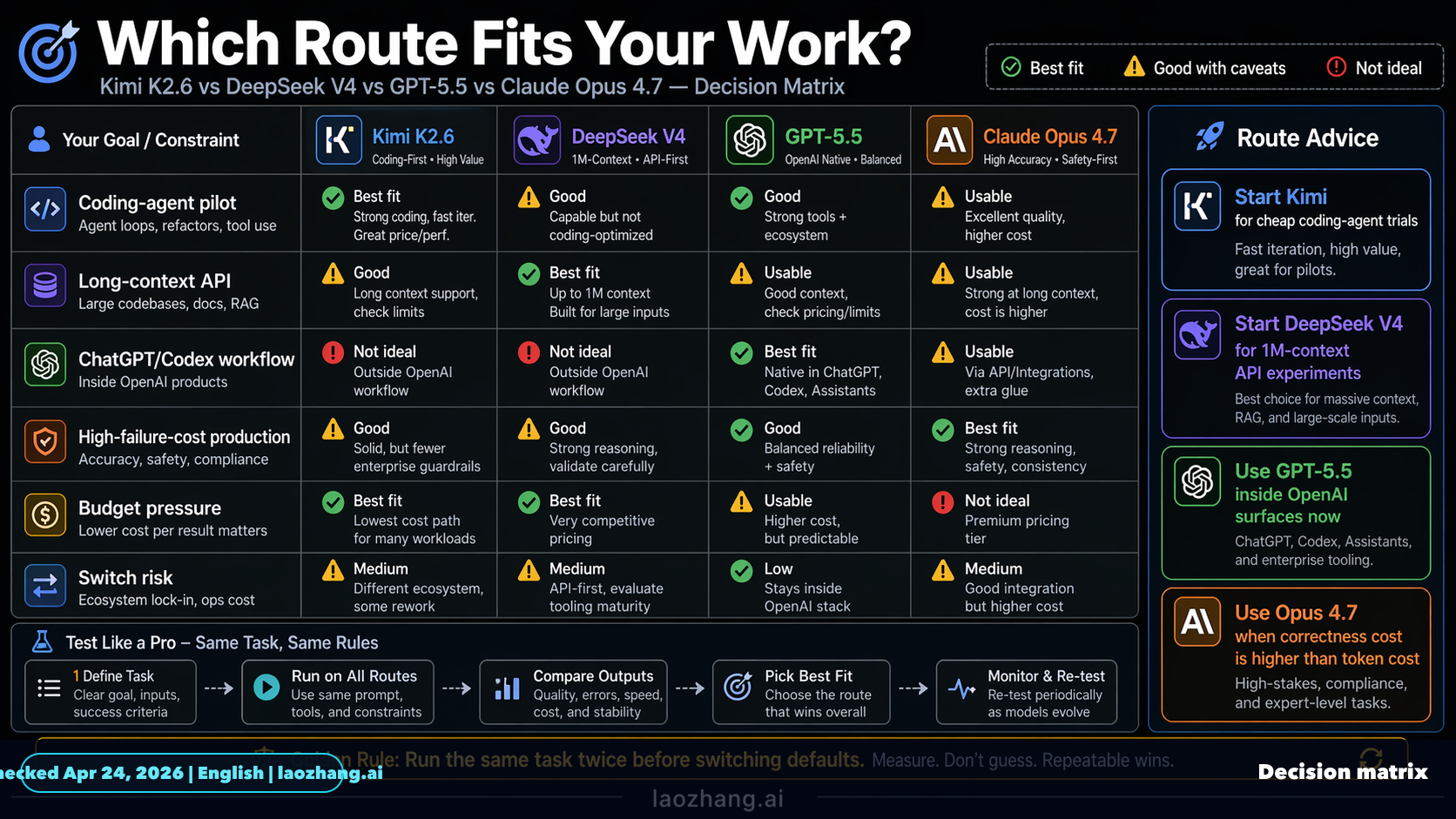

Blog Logo # Kimi K2.6 vs DeepSeek V2 vs GPT-5.5 vs Claude Opus 4.7: Which Should You Test First? Test Kimi for cheap pilots, DeepSeek V4 for current low-cost API work, GPT-5.5 inside OpenAI surfaces, and Opus 4.7 for production correctness. Kimi K2.6 vs DeepSeek V2 vs GPT-5.5 vs Claude Opus 4.7: Which Should You Test First? As of Apr 24, 2026, this is not a symmetric four-model API contest. Test Kimi K2.6 first when you need low-cost coding-agent pilots, use the current DeepSeek V4 Flash or V4 Pro API lane when the job is cheap callable DeepSeek work, keep GPT-5.5 inside ChatGPT or Codex unti…

- [9] OpenAI’s GPT-5.5 vs Claude Opus 4.7: Which is better? | Mashable (mashable.com)

SWE-Bench Pro: GPT-5.5 scored 58.6; Opus 4.7 scored 64.3 percent Terminal-Bench 2.0: GPT-5.5 scored 82.7 percent; Opus 4.7 scored 69.4 percent Humanity's Last Exam: GPT-5.5 scored 40.6 percent; Opus 4.7 scored 31.2 percent Humanity's Last Exam (with tools): GPT-5.5 scored 52.2 percent; Opus 4.7 scored 54.7 percent BrowseComp: GPT-5.5 scored 84.4 percent; Opus 4.7 scored 79.3 percent GPQA Diamond: GPT-5.5 scored 93.6 percent; Opus 4.7 scored 94.2 percent ARC-AGI-1 (Verified): GPT-5.5 (High) scored 94.5 percent; Claude 4.7 (High) scored 92 percent ARC-AGI-2 (Verified):…

- [10] We Gave Claude Opus 4.7 and Kimi K2.6 the Same Workflow ... (blog.kilo.ai)

Claude Opus 4.7 lost points on the reproduced recovery bug, the bounded claim scan, and the SSE cursor fallback. Kimi K2.6 lost points on the six confirmed issues above. The biggest hits are in recovery, scheduling, and streaming, which is exactly where the spec’s hardest requirements live. ## Cost vs Quality Kimi K2.6 is about 4x cheaper per point. The missing 23 points are in step-leasing, scheduling, and event streaming, which is where the hardest spec requirements live. Those are the parts that separate “the endpoints exist” from “the system behaves correctly under load.” ## Where Open-We…

- [11] I reviewed how DeepSeek V4-Pro, Kimi 2.6, Opus 4.6, and Opus 4.7 across the same... | Hacker News (news.ycombinator.com)

| | | | ozgune 1 day ago | parent | context | favorite | on: DeepSeek v4 I reviewed how DeepSeek V4-Pro, Kimi 2.6, Opus 4.6, and Opus 4.7 across the same AI benchmarks. All results are for Max editions, except for Kimi. Summary: Opus 4.6 forms the baseline all three are trying to beat. DeepSeek V4-Pro roughly matches it across the board, Kimi K2.6 edges it on agentic/coding benchmarks, and Opus 4.7 surpasses it on nearly everything except web search. DeepSeek V4-Pro Max shines in competitive coding benchmarks. However, it trails both Opus models on software engineering. Kimi K2.6 is remarkabl…

- [12] Deep|DeepSeek V4 vs Claude vs GPT-5.4: A 38-Task ... - FundaAI (fundaai.substack.com)

DeepSeek V4 Flash averages 165s per task — faster than all three Claude Opus models (Opus 4.5: 138s, Opus 4.7: 227s, Opus 4.6: 267s). Pro is slower at 256s — comparable to Opus 4.7. Both models produce dense, token-efficient output: Pro averages ~4,200 output tokens and Flash ~3,800, compared to Claude’s ~7,500-8,800 characters. DeepSeek shows competitive quality per token — the content-to-padding ratio is notably higher, with fewer boilerplate disclaimers and more substantive tables, data points, and analysis per word.On cost, DeepSeek V4 shows a notable advantage in this benchmark. Flash at…

- [13] SciCode Bench (cborg.lbl.gov)

| claude-sonnet-4-high | 13.8% | $10.49 | Sonnet 4.0 reasoning_budget=8192 | 2025-08-22 | | gemini-2.5-pro | 13.8% | $25.33 | Gemini 2.5 Pro | 2025-08-22 | | gpt-5-1-codex | 13.8% | >$40 | 😢 | OpenAI gpt-5-1-codex (unable to complete) | 2025-12-02 | | grok-4-0709 | 13.8% | $7.13 | 😢 | xAI Grok 4 0709 (unable to complete) | 2025-08-24 | | gpt-5-nano-high | 12.3% | $1.09 | OpenAI gpt-5-nano reasoning_effort=“high” | 2025-09-26 | | qwen-3-coder | 12.3% | $1.50 | ⚠️ | qwen3-coder-480b-a35b-instruct | 2025-08-22 | | kimi-k2-thinking | 12.3% | $4.85 | ⚠️ | qwen3-coder-480b-a35b-instruct | 2025-…

- [14] [PDF] Technical Performance - Stanford HAI (hai.stanford.edu)

On SWE-bench Verified, top models are tightly clustered in the low-to-mid 70s (Figure 2.5.1). As of February 2026, Claude 4.5 Opus (high reasoning) led at approximately 76.8%, with several others including KimiK2.5, GPT-5.2, and Gemini 3 Flash (high reasoning) grouped between 70% and 76%. This is a pattern seen across several benchmarks in this chapter, where high-performing models score within a few percentage points of each other. SWE-bench 101 2.5 PERFORMANCE IN SPECIFIC DOMAINS | TECHNICAL PERFORMANCE | AI INDEX REPORT 2026 44.00% 46.00% 47.00% 48.00% 48.33% 49.67% 56.67% 58.33% 60.00% 60…

- [15] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safety (mashable.com)

Tim studied print journalism at the University of Southern California. He currently splits his time between Brooklyn, NY and Charleston, SC. He's currently working on his second novel, a science-fiction book. Recommended For You Anthropic says Claude Opus 4.7 has a 92% honesty rate, less sycophancy Anthropic says Claude Opus 4.7 is less likely to hallucinate or engage in sycophany than other models. By Timothy Beck Werth OpenAI’s GPT-5.5 vs Claude Opus 4.7: Which is better? How do the latest models from these AI heavy hitters compare? We take a look at benchmarks, leaderboards, and overall fe…

- [16] DeepSeek V4: Features, Benchmarks, and Comparisons - DataCamp (datacamp.com)

How large are the DeepSeek V4 models? DeepSeek uses a Mixture of Experts (MoE) architecture. The Pro model contains 1.6 trillion total parameters (49 billion active) and requires an 865GB download. The Flash model contains 284 billion parameters (13 billion active) and requires a 160GB download. ### Does DeepSeek V4 beat GPT-5.5 and Claude Opus 4.7? In pure capability, no. DeepSeek's self-reported data suggests the V4-Pro model trails state-of-the-art closed models by about 3 to 6 months on the hardest coding and reasoning benchmarks. However, it delivers near-frontier performance at roug…

- [17] Introducing Claude Opus 4.7 (anthropic.com)

Image 7: logo > Based on our internal research-agent benchmark, Claude Opus 4.7 has the strongest efficiency baseline we’ve seen for multi-step work. It tied for the top overall score across our six modules at 0.715 and delivered the most consistent long-context performance of any model we tested. On General Finance—our largest module—it improved meaningfully on Opus 4.6, scoring 0.813 versus 0.767, while also showing the best disclosure and data discipline in the group. And on deductive logic, an area where Opus 4.6 struggled, Opus 4.7 is solid. > > Michal Mucha > > Lead AI Engineer, Applied…

- [18] moonshotai/Kimi-K2.6 - Hugging Face (huggingface.co)

Footnotes 1. General Testing Details We report results for Kimi K2.6 and Kimi K2.5 with thinking mode enabled, Claude Opus 4.6 with max effort, GPT-5.4 with xhigh reasoning effort, and Gemini 3.1 Pro with a high thinking level. Unless otherwise specified, all Kimi K2.6 experiments were conducted with temperature = 1.0, top-p = 1.0, and a context length of 262,144 tokens. Benchmarks without publicly available scores were re-evaluated under the same conditions used for Kimi K2.6 and are marked with an asterisk (``). Except where noted with an asterisk, all other results are cited from official…

- [19] GPT 5.5 - Vals AI (vals.ai)

Benchmarks Models Comparison Model Guide App Reports News About Benchmarks Models Comparison Model Guide App Reports About Release date Models 4/23/2026 OpenAI GPT 5.5 4/20/2026 Moonshot AI Kimi K2.6 4/16/2026 Anthropic Claude Opus 4.7 4/8/2026 Meta Muse Spark 4/2/2026 Google Gemma 4 31B IT 4/2/2026 Alibaba Qwen 3.6 Plus 4/1/2026 zAI GLM 5.1 4/1/2026 Arcee AI Trinity Large Thinking 3/17/2026 OpenAI GPT 5.4 Mini 3/17/2026 OpenAI GPT 5.4 Nano 3/17/2026 MiniMax MiniMax-M2.7 3/9/2026 xAI Grok 4.20 (Reasoning) 3/5/2026 OpenAI GPT 5.4 3/3/2026 Google Gemini 3.1 Flash Lite Preview 2/24/2026 OpenAI G…

- [20] Open-weight Kimi K2.6 takes on GPT-5.4 and Claude Opus 4.6 with agent swarms (the-decoder.com)

The Decoder # Open-weight Kimi K2.6 takes on GPT-5.4 and Claude Opus 4.6 with agent swarms Matthias Bastian Image description Moonshot AI has released Kimi K2.6 as an open-weight model. It's built to match GPT-5.4 and Claude Opus 4.6 on coding benchmarks, and it can run up to 300 agents in parallel. Moonshot AI says K2.6 puts up top scores across several benchmarks, landing on par with GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro. The numbers include 54.0 on HLE with Tools, 58.6 on SWE-Bench Pro, and 83.2 on BrowseComp. The model can chain together more than 4,000 tool calls and run conti…

- [21] GPT 5.5 is out. Here are the benchmarks. | Facebook (facebook.com)

1d 4 Image 3 View all 3 replies []( Robert Eaton Opus 4.7 has been terrible. Getting everything wrong, making same mistake over and over. I went back to 4.6 and everything was fine 1d 4 Image 4Image 5 View all 2 replies []( Patrick Healy Codex $100a month package while building then go to $20 a month package & you run a local model hybrid is the best set up for a business. Personal use just go full local 1d 3 Image 6 View 1 reply View more comments 3 of 20 See more on Facebook See more on Facebook Email or phone number Password Log In Forgot password? or Create new account [...] # OpenClaw Co…

- [22] Artificial Analysis Intelligence Index | Artificial Analysis (artificialanalysis.ai)

Related links Image 1: Artificial Analysis Openness IndexGDPval-AAImage 2: 𝜏²-Bench Telecom Benchmark Leaderboard𝜏²-Bench TelecomImage 3: Terminal-Bench Hard Benchmark LeaderboardTerminal-Bench HardImage 4: SciCode Benchmark LeaderboardSciCodeImage 5: Artificial Analysis Openness IndexAA-LCRImage 6: Artificial Analysis Openness IndexAA-OmniscienceImage 7: IFBench Benchmark LeaderboardIFBenchImage 8: Humanity's Last Exam Benchmark LeaderboardHumanity's Last ExamGPQA DiamondImage 9: CritPt Benchmark LeaderboardCritPt #### Artificial Analysis Intelligence Index Claude Opus 4.7 (Adaptive Re…

- [23] Comparison of Open Source AI Models across Intelligence, Performance, Price, Context Window, and more | Artificial Analysis (artificialanalysis.ai)

| Model Name | Intelligence | Parameters | Context Window | Price | Output Speed (t/s) | Weights | Providers | Provider Benchmarks | --- --- --- --- | Kimi logo Kimi K2.6 Kimi | 54 | 1.0KB (32B active at inference time) | 256k | $1.7 | 112 | 🤗 | Novita Kimi SiliconFlow +6 more | View | | DeepSeek logo DeepSeek V4 Pro (Reasoning, Max Effort) DeepSeek | 52 | 1.6KB (49B active at inference time) | 1.00M | $2.2 | 36 | 🤗 | View | | Z AI logo GLM-5.1 (Reasoning) Z AI | 51 | 744B (40B active at inference time) | 200k | $2.1 | 49 | 🤗 | Novita Parasail SiliconFlow +6 more | View | | DeepSeek logo D…

- [24] Comparison of over 100 AI models from OpenAI, Google, DeepSeek ... (artificialanalysis.ai)

| GPT-5.5 (medium) | 922k | OpenAIOpenAI | 57 -- -- | Model Providers | | Kimi K2.6 | 256k | KimiKimi | 54 | $1.71 | 134 | 1.04 | 38.01 | Model Providers | | MiMo-V2.5-Pro | 1M | XiaomiXiaomi | 54 | $1.50 | 59 | 2.80 | 44.98 | Model Providers | | GPT-5.3 Codex (xhigh) | 400k | OpenAIOpenAI | 54 | $4.81 | 68 | 87.92 | 95.31 | Model Providers | | Muse Spark | 262k | MetaMeta | 52 -- -- | Model Providers | | Claude Opus 4.7 (Non-reasoning, high) | 1M | AnthropicAnthropic | 52 | $10.00 | 38 | 1.95 | 15.14 | Model Providers | | Qwen3.6 Max Preview | 256k | AlibabaAlibaba | 52 | $2.92 | 37 | 3.38 |…

- [25] Kimi K2.6 - Intelligence, Performance & Price Analysis (artificialanalysis.ai)

Kimi K2.6 scores 54 on the Artificial Analysis Intelligence Index, placing it well above average among comparable models (averaging 28). When evaluating the Intelligence Index, it generated 160M tokens, which is very verbose in comparison to the average of 41M. Pricing for Kimi K2.6 is $0.00 per 1M input tokens (competitively priced, average: $0.55) and $0.00 per 1M output tokens (competitively priced, average: $1.58). In total, it cost $0.00 to evaluate Kimi K2.6 on the Intelligence Index. ### Technical specifications [...] ### Who created Kimi K2.6? Kimi K2.6 was created by Kimi. ### How in…

- [26] OpenAI's GPT-5.5 is the new leading AI model - Artificial Analysis (artificialanalysis.ai)

➤ Number one in GDPval-AA with an Elo of 1785: GPT-5.5 (xhigh) leads Claude Opus 4.7 (max) by ~30 pts and Gemini 3.1 Pro Preview by ~470 pts. GDPval-AA is Artificial Analysis' benchmark that leverages OpenAI's GDPval dataset to evaluate models on real-world economically valuable tasks. [...] ➤ Top AA-Omniscience accuracy, but trailing the frontier on hallucination: Our private AA-Omniscience benchmark rewards factual knowledge across diverse topics, but punishes hallucination. GPT-5.5 (xhigh) has the highest accuracy at 57% - meaning the model can recall facts in the Omniscience corpus more e…

- [27] GPT-5.5 (high) - Intelligence, Performance & Price Analysis (artificialanalysis.ai)

No, GPT-5.5 (high) is proprietary. The model weights are not publicly available. GPT-5.5 (high) is a proprietary model and OpenAI has not disclosed the model size or parameter count. GPT-5.5 (high) achieves a score of 59 on the Artificial Analysis Intelligence Index. This composite benchmark evaluates models across reasoning, knowledge, mathematics, and coding. Yes, GPT-5.5 (high) is available via API through 1 provider.Compare API providers GPT-5.5 (high) is available through 1 API provider.Compare providers [...] GPT-5.5 (high)scores 59on the Artificial Analysis Intelligence Index, placing…

- [28] GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance Compared (mindstudio.ai)

GPT-5.5 uses 72% fewer output tokens than Claude Opus 4.7 on the same coding tasks — a structural difference, not a minor gap. On raw benchmark quality, both models are competitive. Neither dominates on every task type. For high-volume agentic coding pipelines, GPT-5.5 is significantly cheaper and faster to run. For complex, reasoning-heavy tasks across large codebases, Opus 4.7’s thoroughness can justify the cost. The best production setups often use both models via routing — GPT-5.5 for standard tasks, Opus 4.7 for the hard ones. Token efficiency compounds in agentic loops: every step adds…

- [29] Artificial Analysis: AI Model & API Providers Analysis (artificialanalysis.ai)

Artificial Analysis # Independent analysis of AI Understand the AI landscape to choose the best model and provider for your use case Announcement A new look for Artificial Analysis Same mission, new lookNow Live Real agent workloads, real hardware benchmarking Artificial Analysis Intelligence Index; Higher is better Intelligence: Artificial Analysis Intelligence Index; Higher is better 1. Claude Opus 4.7 (max): 57 2. Gemini 3.1 Pro Preview: 57 3. GPT-5.4 (xhigh): 57 4. Kimi K2.6: 54 5. Muse Spark: 52 6. GLM-5.1: 51 7. Grok 4.20 0309 v2: 49 8. Gemini 3 Flash: 46 9. DeepSeek V3.2: 42 10. NVIDIA…

- [30] Comparison of AI Models across Intelligence, Performance, and Price (artificialanalysis.ai)

Which is the most intelligent AI model? Claude Opus 4.7 (Adaptive Reasoning, Max Effort) currently leads the Artificial Analysis Intelligence Index with a score of 57, out of 347 models evaluated. ### What are the top AI models? The top AI models by Intelligence Index are: 1. Claude Opus 4.7 (Adaptive Reasoning, Max Effort) (57), 2. Gemini 3.1 Pro Preview (57), 3. GPT-5.4 (xhigh) (57), 4. Kimi K2.6 (54), 5. GPT-5.3 Codex (xhigh) (54). ### Which is the fastest AI model? Mercury 2 is the fastest at 661.1 tokens per second, followed by Granite 4.0 H Small (426.0 t/s) and Granite 3.3 8B (Non-…

- [31] Claude Opus 4.5 Benchmarks and Analysis (artificialanalysis.ai)

... Artificial Analysis Intelligence Index. April 24, 2026. OpenAI's GPT-5.5 is the new leading AI model. Benchmarks and Analysis of GPT-5.5. April ... Nov 25, 2025

- [32] GPT-5.5 vs Claude Opus 4.7: quién gana en código, terminal y ... (webreactiva.com)

GPT-5.5 lidera el Artificial Analysis Intelligence Index con 60 ... OpenAI's GPT-5.5 is the new leading AI model — Artificial Analysis ... 2 days ago

- [33] GPT-5.5 Hallucinates 86% of the Time. Here's How to Use It Anyway. (findskill.ai)

It also tops the Artificial Analysis Intelligence Index, beats ... OpenAI's GPT-5.5 is the new leading AI model — Artificial Analysis ... 1 day ago

- [34] The Ultimate Guide to GPT-5.5 Software Engineering - Skywork (skywork.ai)

OpenAI's GPT-5.5 is the new leading AI model. Mollick, E. (2026-04-23 11:00). Sign of the future: GPT-5.5. One Useful Thing. 1 day ago

- [35] Changelog | Artificial Analysis (artificialanalysis.ai)

OpenAI's GPT-5.5 is the new leading AI model. Benchmarks and Analysis of GPT ... Intelligence Index unchanged: This does not affect the overall Intelligence Index ...

- [36] We're launching a new frontier physics eval on Artificial Analysis ... (linkedin.com)

OpenAI's GPT-5.5 is the new leading AI model. Artificial Analysis 22h ... Claude Opus 4.7 sits at the top of the Artificial Analysis Intelligence ... Nov 21, 2025

- [37] Announcing Artificial Analysis Video Arena - LinkedIn (linkedin.com)

OpenAI's GPT-5.5 is the new leading AI model. Artificial Analysis 8h · Claude Opus 4.7 sits at the top of the Artificial Analysis Intelligence ... Oct 21, 2024

- [38] Artificial Analysis' Post - LinkedIn (linkedin.com)

OpenAI's GPT-5.5 is the new leading AI model. Artificial Analysis 10h. Claude Opus 4.7 sits at the top of the Artificial Analysis Intelligence ... Mar 26, 2025