Deep research & compare GPT-5.5, Claude Opus 4.7, Kimi K2.6, DeepSeek V4


Research answer

Based on the evidence I found, the safest ranking is: GPT-5.5 for likely strongest closed-model general capability, Claude Opus 4.7 for the most clearly documented long-context/agentic production use, DeepSeek V4 for potentially high value but with documentation uncertainty, and Kimi K2.6 as not reliably comparable from the high-authority evidence I found. I would not treat this as a clean benchmark leaderboard: GPT-5.5 and Claude Opus 4.7 have stronger primary-source support, DeepSeek V4 has mixed release/methodology evidence, and Kimi K2.6 lacks a direct high-trust source in the retained evidence.

Key findings

| Model | Best use case | Evidence confidence | Main caution |
|---|---|---|---|
| GPT-5.5 | General frontier reasoning, research, coding, multimodal/productivity workflows | Medium-high | Official docs were found, but the retained snippets did not provide enough benchmark/pricing detail for a rigorous numeric comparison. |
| Claude Opus 4.7 | Long-context knowledge work, coding agents, asynchronous agentic workflows | High | Strong documentation for context/pricing, but third-party benchmark rankings should still be independently validated. |
| DeepSeek V4 | Cost-sensitive coding/agent experiments, especially if open or semi-open deployment matters | Low-medium | A recent source said no official V4 training-methodology documentation existed at that point, so benchmark claims need verification. |
| Kimi K2.6 | Possible open-weight / agentic experimentation | Low | I found high-trust academic evidence for Kimi K2.5, not Kimi K2.6, so K2.6-specific claims are insufficiently verified. |

My practical pick:

- Choose GPT-5.5 if you want the strongest general closed-model assistant and can tolerate higher cost and vendor lock-in.

- Choose Claude Opus 4.7 if your work involves very long documents, coding agents, legal/finance/enterprise analysis, or long-running tool use.

- Choose DeepSeek V4 only after running your own benchmark, because its value story may be strong but the evidence base is less mature.

- Do not choose Kimi K2.6 on public benchmark claims alone unless you can verify the model card, license, pricing, serving stack, and task performance yourself.

Confirmed facts

GPT-5.5 appears in an OpenAI API model documentation page, which is the strongest evidence in the retained source set that it is an official OpenAI API model [2]. The New York Times also reported that OpenAI unveiled a more powerful GPT-5.5 model, giving independent launch-context support beyond OpenAI’s own documentation [3].

Claude Opus 4.7 has much clearer primary-source documentation than the other non-OpenAI models in this comparison. Anthropic’s Claude documentation says Claude Opus 4.7 provides a 1M-token context window at standard API pricing with no long-context premium [4]. Anthropic’s pricing documentation also says Claude Opus 4.7, Opus 4.6, Sonnet 4.6, and Claude Mythos Preview include the full 1M-token context window at standard pricing [

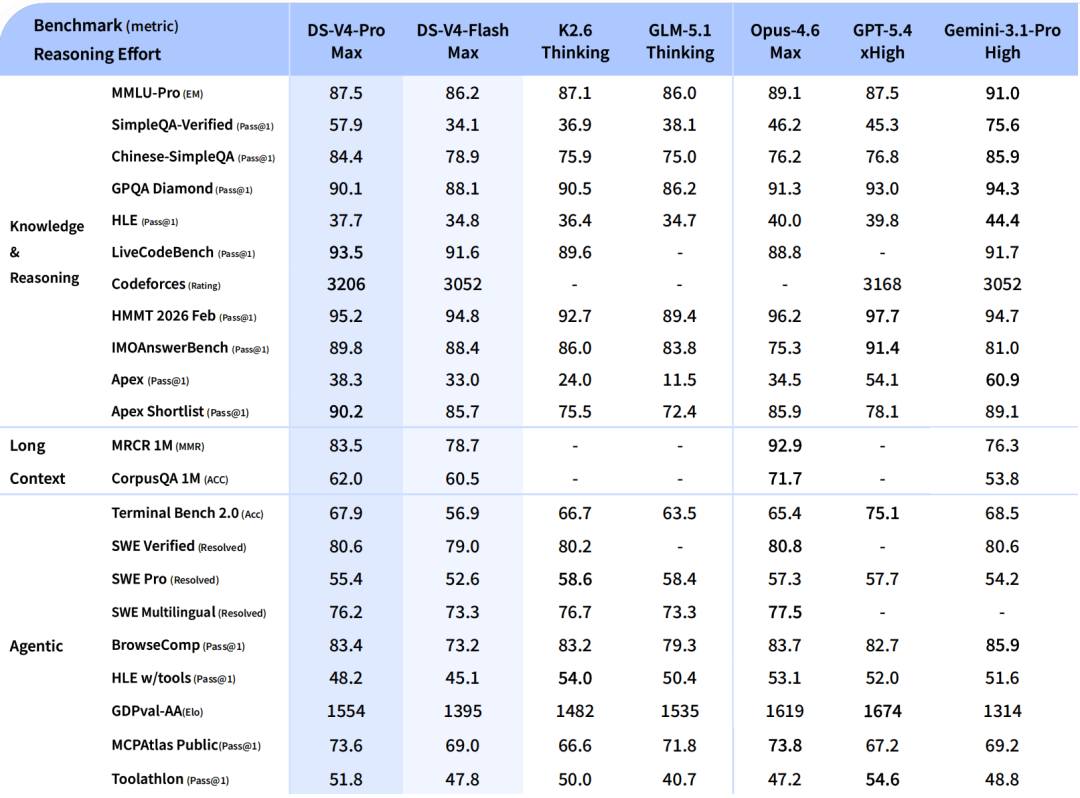

5].

Anthropic describes Claude Opus 4.7 as a hybrid reasoning model focused on frontier coding and AI agents, with a 1M-token context window [8]. A third-party API aggregator lists Claude Opus 4.7 as released on April 16, 2026, with 1,000,000-token context, $5 per million input tokens, and $25 per million output tokens [7].
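As a rough illustration of what those listed rates imply, the sketch below estimates the cost of a single request. The $5 and $25 per-million-token rates are the aggregator figures quoted above; the function name and the example token counts are assumptions made for illustration, and the vendor pricing page should be treated as authoritative.

```python
from decimal import Decimal

# Rates as listed by the third-party aggregator [7]; confirm against the vendor pricing page.
INPUT_USD_PER_MTOK = Decimal("5")
OUTPUT_USD_PER_MTOK = Decimal("25")
MTOK = Decimal(1_000_000)


def request_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate one request's cost at flat per-token rates (no long-context premium)."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    return (
        Decimal(input_tokens) * INPUT_USD_PER_MTOK
        + Decimal(output_tokens) * OUTPUT_USD_PER_MTOK
    ) / MTOK


# Hypothetical workloads: a short prompt and a near-full-context prompt.
small = request_cost_usd(9_000, 2_000)
large = request_cost_usd(900_000, 2_000)
print(f"9k-token prompt:   ${small:.4f}")
print(f"900k-token prompt: ${large:.4f}")
```

Because the per-token rate is flat, the cost scales linearly with prompt size, so a long-document workload is driven almost entirely by input tokens.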

For Kimi, the strongest retained academic result concerns Kimi K2.5, not Kimi K2.6. That paper describes Kimi K2.5 as an open-weight model released by Moonshot AI and notes that its technical report lacked an assessment for one evaluation-awareness benchmark [1]. This does not validate Kimi K2.6, but it does show that recent Kimi-family models have attracted independent safety evaluation [1].

For DeepSeek V4, the retained evidence is more conflicted and less complete. One recent source stated that no official V4 training-methodology documentation existed at the time of writing, which makes architecture, safety, and benchmark claims harder to audit [6].

What remains inference

A direct “which is smartest?” ranking remains partly inference because the retained evidence does not include a single independent benchmark suite that tested GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same prompts, sampling settings, tools, latency constraints, and cost accounting.

The likely capability ordering for general closed-model tasks is GPT-5.5 and Claude Opus 4.7 at the top, because both have stronger primary-source or reputable-source confirmation than Kimi K2.6 and DeepSeek V4 [2][3][4][8]. Between GPT-5.5 and Claude Opus 4.7, I would not declare a universal winner without task-specific tests, because Claude’s documentation is unusually strong for long-context and agentic workflows while GPT-5.5’s retained evidence is broader but less detailed [2][4][8].

The likely value ordering may favor DeepSeek V4 or Kimi K2.6 if their low-cost/open-weight claims are verified, but the retained high-trust evidence is not strong enough to rank them confidently. For Kimi K2.6 specifically, the evidence is insufficient.

What the evidence suggests

Claude Opus 4.7 is the most defensible production pick from the evidence set if your workload depends on large context windows. Anthropic’s docs explicitly support 1M context at standard pricing and no long-context premium [4][5]. That matters because long-context pricing, not headline benchmark scores, often dominates real enterprise costs.

GPT-5.5 is likely the strongest default choice for broad general-purpose work if your priority is frontier capability and ecosystem maturity. The retained sources show both an official OpenAI API model page and independent news coverage of the launch [2][3]. However, because the retained snippets do not give enough benchmark detail, I would avoid claiming GPT-5.5 “wins” every category.

DeepSeek V4 may be attractive for cost-sensitive engineering teams, but it needs a stricter validation pass before adoption. The key problem is not that DeepSeek V4 is weak; it is that the retained evidence leaves methodology gaps, including a report that no official V4 training-methodology documentation existed at that point [6].

Kimi K2.6 should be treated as unverified in this comparison. The strongest retained Kimi-related academic source is about Kimi K2.5, not Kimi K2.6 [1]. If Kimi K2.6 is important to your decision, the next step should be to collect its official model card, license, benchmark table, serving requirements, and API pricing before comparing it to GPT-5.5 or Claude Opus 4.7.

Conflicting evidence or uncertainty

The biggest uncertainty is source quality asymmetry. GPT-5.5 and Claude Opus 4.7 have stronger official or near-primary documentation in the retained evidence [2][4][5][8]. Kimi K2.6 and DeepSeek V4 have weaker retained evidence for direct model-card-level comparison [1][6].

Claude Opus 4.7’s context and pricing claims are relatively well-supported because they appear in Anthropic documentation and are echoed by an API aggregator [4][5][7]. Still, aggregator pricing should be treated as secondary because vendor pricing pages are the ground truth [5][7].

DeepSeek V4’s uncertainty is methodological. A source saying no official V4 training methodology documentation existed does not mean the model is poor; it means claims about architecture, benchmark reliability, and safety properties are harder to audit [6].

Kimi K2.6’s uncertainty is evidentiary. The retained academic result is for Kimi K2.5, so K2.6-specific benchmark or pricing claims remain insufficiently supported [1].

Open questions

- What are the official context window, max output, tool-use limits, and pricing for GPT-5.5 across ChatGPT, API, batch, and realtime usage?

- Does Kimi K2.6 have an official Moonshot model card, license, benchmark report, and reproducible evaluation setup?

- Does DeepSeek V4 have a final technical report or only a preview/release note?

- Which model performs best on your actual workload: coding, long-document analysis, tool calling, structured extraction, research, math, multimodal, or customer-support automation?

- How much do latency, privacy, deployment control, and data-residency requirements matter in your use case?

Sources worth trusting most

- OpenAI’s API documentation is the strongest retained source for confirming GPT-5.5’s official API presence [2].

- Anthropic’s Claude documentation and pricing pages are the strongest retained sources for Claude Opus 4.7 context and pricing claims [4][5].

- Anthropic’s own Claude Opus page is useful for understanding the model’s intended positioning around coding, agents, hybrid reasoning, and 1M context [8].

- The New York Times is useful as independent launch-context reporting for GPT-5.5, but not as a technical benchmark source [3].

- The Kimi K2.5 arXiv paper is useful for independent safety context around the Kimi model family, but it is not direct evidence for Kimi K2.6 [1].

- The DeepSeek V4 secondary source is useful mainly as a caution signal about missing methodology documentation, not as final proof of performance [6].

Recommended next step

Run a controlled benchmark on your own workload instead of relying on public leaderboards.

Use this test plan:

- Pick 20–50 real tasks:
  - 10 coding/debugging tasks
  - 10 long-document reasoning tasks
  - 5 structured extraction tasks
  - 5 tool-calling/agent tasks
  - 5 domain-specific tasks, such as finance, legal, science, or product analysis

- Test each model under the same settings:
  - Same prompt
  - Same context
  - Same temperature
  - Same tool permissions
  - Same timeout
  - Same scoring rubric

- Score on:
  - Accuracy
  - Faithfulness to source material
  - Tool-call reliability
  - Long-context retention
  - Latency
  - Cost per successful task
  - Failure recovery
  - Safety/compliance behavior

- Make the decision by workload:
  - If GPT-5.5 wins accuracy by a large margin, use it for highest-value reasoning tasks.
  - If Claude Opus 4.7 wins reliability and long-context handling, use it for production agents and document-heavy workflows.
  - If DeepSeek V4 or Kimi K2.6 are close enough at much lower cost, use them for batch coding, extraction, or self-hosted/open-weight experimentation.
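The scoring step of the plan above can be sketched as a small aggregation script. This is a minimal sketch, not a full harness: the `TaskResult` fields, the model names `model-a` and `model-b`, and the sample numbers are all placeholders, and the code that calls each model and applies the rubric is deliberately left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TaskResult:
    """One model's outcome on one task (all fields are illustrative)."""
    model: str
    category: str      # e.g. "coding", "long_document", "extraction"
    passed: bool       # judged against the shared scoring rubric
    latency_s: float
    cost_usd: float


def summarize(results: list[TaskResult]) -> dict[str, dict[str, float]]:
    """Aggregate accuracy, mean latency, and cost per successful task per model."""
    by_model: dict[str, list[TaskResult]] = defaultdict(list)
    for r in results:
        by_model[r.model].append(r)

    summary = {}
    for model, rows in by_model.items():
        successes = sum(r.passed for r in rows)
        total_cost = sum(r.cost_usd for r in rows)
        summary[model] = {
            "accuracy": successes / len(rows),
            "mean_latency_s": mean(r.latency_s for r in rows),
            # Failed attempts still cost money, so divide total spend by successes.
            "cost_per_success_usd": total_cost / successes if successes else float("inf"),
        }
    return summary


# Placeholder results; replace with measurements from your own runs.
results = [
    TaskResult("model-a", "coding", True, 12.0, 0.40),
    TaskResult("model-a", "coding", False, 15.0, 0.50),
    TaskResult("model-b", "coding", True, 8.0, 0.10),
    TaskResult("model-b", "coding", True, 9.0, 0.10),
]
for model, stats in summarize(results).items():
    print(model, stats)
```

Dividing total spend by the number of successes, rather than averaging cost over passed tasks only, penalizes a model that is cheap per call but fails often, which is the point of the "cost per successful task" criterion.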

Summary

GPT-5.5 is the likely premium generalist, but the retained evidence does not support a rigorous numeric benchmark comparison [2][3]. Claude Opus 4.7 is the best-documented choice for long-context, coding, and agentic workflows, with official support for a 1M-token context window at standard pricing [4][5][8]. DeepSeek V4 may be compelling on cost and openness, but methodology and release-documentation uncertainty make it a “verify before production” option [6]. Kimi K2.6 has insufficient high-trust direct evidence in the retained sources, so it should not be treated as benchmark-equivalent to GPT-5.5 or Claude Opus 4.7 without further validation [1].



Sources

- [1] What's new in Claude Opus 4.7platform.claude.com

We suggest updating your

max_tokensparameters to give additional headroom, including compaction triggers. Claude Opus 4.7 provides a 1M context window at standard API pricing with no long-context premium. ## Capability improvements ### Knowledge work Claude Opus 4.7 shows meaningful gains on knowledge-worker tasks, particularly where the model needs to visually verify its own outputs: .docx redlining and .pptx editing — improved at producing and self-checking tracked changes and slide layouts. Charts and figure analysis — improved at programmatic tool-calling with image-processing librarie… - [2] Pricing - Claude API Docsplatform.claude.com

For more information about batch processing, see the batch processing documentation. ### Long context pricing Claude Mythos Preview, Opus 4.7, Opus 4.6, and Sonnet 4.6 include the full 1M token context window at standard pricing. (A 900k-token request is billed at the same per-token rate as a 9k-token request.) Prompt caching and batch processing discounts apply at standard rates across the full context window. ### Tool use pricing Tool use requests are priced based on:

toolsClient-side tools are priced the same as any other Claude API request, while server-side tools may incur additional… - [3] Anthropic: Claude Opus 4.7 – Effective Pricing - OpenRouteropenrouter.ai

Anthropic: Claude Opus 4.7 ### anthropic/claude-opus-4.7 Released Apr 16, 20261,000,000 context$5/M input tokens$25/M output tokens Opus 4.7 is the next generation of Anthropic's Opus family, built for long-running, asynchronous agents. Building on the coding and agentic strengths of Opus 4.6, it delivers stronger performance on complex, multi-step tasks and more reliable agentic execution across extended workflows. It is especially effective for asynchronous agent pipelines where tasks unfold over time - large codebases, multi-stage debugging, and end-to-end project orchestration. [...] Be…

- [4] Claude Opus 4.7 - Anthropicanthropic.com

Skip to main contentSkip to footer []( Research Economic Futures Commitments Learn News Try Claude # Claude Opus 4.7 Image 1: Claude Opus 4.7 Image 2: Claude Opus 4.7 Hybrid reasoning model that pushes the frontier for coding and AI agents, featuring a 1M context window Try ClaudeGet API access ## Announcements NEW Claude Opus 4.7 Apr 16, 2026 Claude Opus 4.7 brings stronger performance across coding, vision, and complex multi-step tasks. It's more thorough and consistent on difficult work, with better results across professional knowledge work. Read more Claude Opus 4.6 [...] Pricing for Opu…

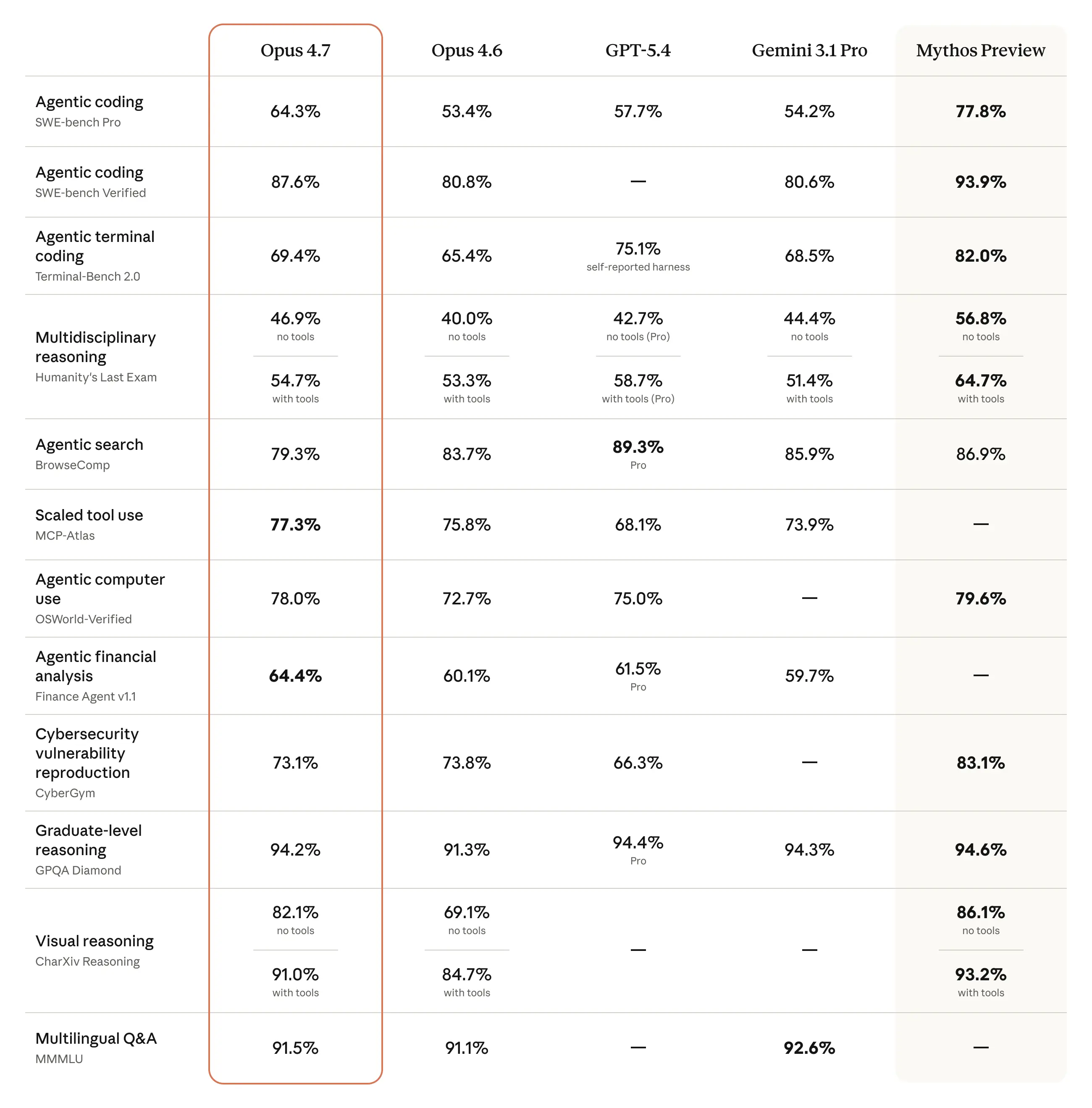

- [5] Claude Opus 4.7 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Claude Opus 4.7 According to BenchLM.ai, Claude Opus 4.7 ranks #2 out of 110 models on the provisional leaderboard with an overall score of 97/100. It also ranks #2 out of 14 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. Claude Opus 4.7 is a proprietary model with a 1M token context window. It processes queries without explicit chain-of-thought reasoning, offering faster response times and lower to…

- [6] Claude Opus 4.7 Benchmarks Explained - Vellumvellum.ai

Anthropic dropped Claude Opus 4.7 today, and the benchmark table tells a focused story. This is not a model that sweeps every leaderboard. Anthropic is explicit that Claude Mythos Preview remains more broadly capable. But for developers building production coding agents and long-running workflows, the improvements are real and well-targeted. Opus 4.7 is available now across the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Pricing stays the same as Opus 4.6: $5 per million input tokens and $25 per million output tokens. Here's what the numbers actually show. ## Ke…

- [7] Claude Opus 4.7 Pricing In 2026: What It Actually Costs - CloudZerocloudzero.com

How Much Does Claude Opus 4.7 Cost Per Million Tokens? Here’s the complete Anthropic pricing table for every current-generation model as of April 2026: | | | | | | --- --- | Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window | Max output | | Claude Opus 4.7 | $5.00 | $25.00 | 1M tokens | 128K tokens | | Claude Opus 4.6 | $5.00 | $25.00 | 1M tokens | 128K tokens | | Claude Sonnet 4.6 | $3.00 | $15.00 | 1M tokens | 128K tokens | | Claude Haiku 4.5 | $1.00 | $5.00 | 200K tokens | 64K tokens | [...] | | | | | --- --- | | Model | Input ($ / 1M tokens) | Output ($ / 1M token…

- [8] Claude Opus 4.7 Pricing: The Real Cost Story Behind the “Unchanged” Price Tagfinout.io

Claude Opus 4.7 Pricing: The Real Cost Story Behind the “Unchanged” Price Tag Press Option+1 for screen-reader mode, Option+0 to cancelAccessibility Screen-Reader Guide, Feedback, and Issue Reporting | New window | Output ($/1M) | Context | Best for | --- --- | Claude Opus 4.7 | $5 | $25 | 1M tokens | Frontier coding, agents, high-res vision | | Claude Opus 4.6 | $5 | $25 | 1M tokens | Still-capable coding, lower effective cost/request | | Claude Sonnet 4.6 | $3 | $15 | 1M tokens | Default for most production inference | | Claude Haiku 4.5 | $1 | $5 | 200K tokens | High-volume, low-latency,…

- [9] Claude Opus 4.7: Complete Guide to Features, Benchmarks ...nxcode.io

Claude Opus 4.7: Complete Guide to Features, Benchmarks & Pricing April 16, 2026 — Anthropic released Claude Opus 4.7 today, and the story is not about a new pricing tier or a dramatic architectural overhaul. It is about targeted, measurable improvements in the two areas that matter most for production use: coding and vision. The model scores 70% on CursorBench (up from 58%), achieves 98.5% visual-acuity (up from 54.5%), and solves 3x more production tasks than Opus 4.6 — all at the same $5/$25 per million token pricing. The API identifier is

claude-opus-4-7. It is generally available acr… - [10] Claude Opus 4.7: Pricing, Benchmarks & Context Windowalmcorp.com

What are the main benchmark improvements for Claude Opus 4.7? The most cited improvements include stronger results on SWE-bench Pro, SWE-bench Verified, Terminal-Bench 2.0, CursorBench, finance-oriented evaluations, document reasoning benchmarks, and legal analysis tasks. Anthropic also cites a 13% lift over Opus 4.6 on a 93-task coding benchmark and meaningful gains in several partner evaluations involving code review, legal review, and enterprise document analysis. ### What is the price of Claude Opus 4.7? Anthropic lists Claude Opus 4.7 at $5 per million input tokens and $25 per millio…

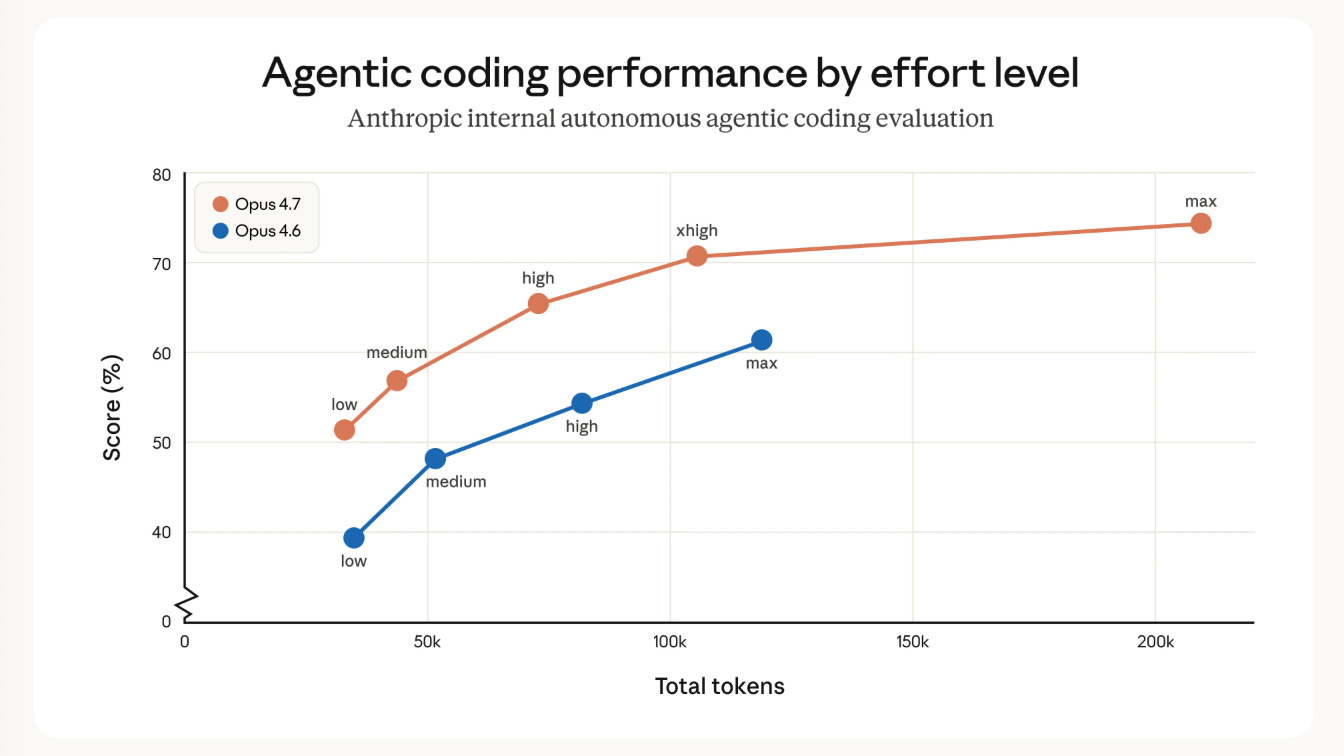

- [11] Claude Opus 4.7: What Changed for Coding Agents (April 2026)verdent.ai

Additionally, the model "thinks more at higher effort levels," generating more output tokens during reasoning. At

xhighormax, Anthropic recommends settingmax_tokensto at least 64K as a starting point. ## What's Unchanged Context window: 1M tokens, same as Opus 4.6, no long-context premium Output limit: 128K tokens per response Adaptive thinking and Agent Teams: both carry forward Prompt caching: up to 90% cost reduction on cached content Pricing structure: $5/$25 per million tokens across all platforms One notable removal: prefilling assistant messages now returns a 400 error on Opu… - [12] Introducing Claude Opus 4.7anthropic.com

> Based on our internal research-agent benchmark, Claude Opus 4.7 has the strongest efficiency baseline we’ve seen for multi-step work. It tied for the top overall score across our six modules at 0.715 and delivered the most consistent long-context performance of any model we tested. On General Finance, our largest module, it improved meaningfully on Opus 4.6, scoring 0.813 versus 0.767, while also showing the best disclosure and data discipline in the group. And on deductive logic, an area where Opus 4.6 struggled, Opus 4.7 is solid.
>
> Michal Mucha, Lead AI Engineer, Applied…

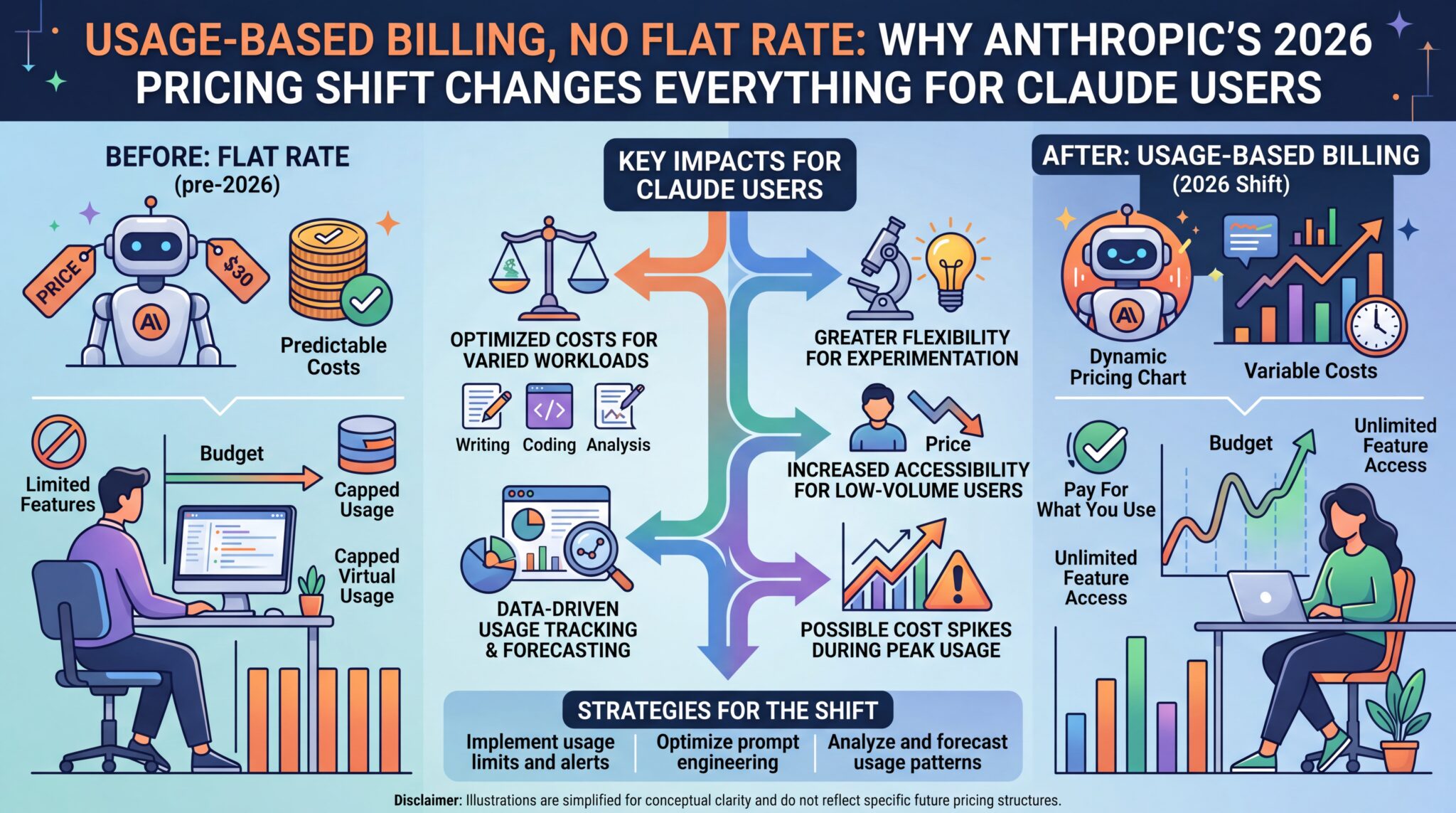

- [13] Usage-Based Billing, No Flat Rate: Why Anthropic's 2026 ... - Kingy AIkingy.ai

As of April 2026, the current-generation model rates are: Claude Opus 4.6 — $5.00 input / $25.00 output per MTok. The flagship. Exceptional for complex multi-step reasoning, agentic pipelines, and nuanced tasks where quality directly impacts revenue. Supports 1M token context at flat rates with no surcharge. Max output: 128K tokens synchronously, up to 300K on the Batch API. Claude Sonnet 4.6 — $3.00 input / $15.00 output per MTok. The recommended default for most production workloads. Delivers near-Opus quality at faster latency and 40% lower cost. Also supports 1M token context at flat rate…

- [14] Claude API Pricing: Haiku 4.5, Sonnet 4.6, and Opus 4.7 (April 2026)benchlm.ai

Anthropic just launched Claude Opus 4.7 on April 16, 2026 and kept Opus pricing unchanged at $5/$25 per million input/output tokens. That makes the pricing story simpler than many third-party tables suggest: Haiku 4.5 at $1/$5, Sonnet 4.6 at $3/$15, and now Opus 4.7 at $5/$25. This guide uses Anthropic's current public model pages for Haiku 4.5 and Sonnet 4.6, plus the official Claude Opus 4.7 launch announcement, combined with benchmark data from BenchLM.ai and Arena Elo scores, to help you decide whether Claude's pricing makes economic sense for your workload. ## Claude pricing at a glance…
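The per-million-token rates quoted in this snippet turn into a per-request cost by simple proportion. The following is a minimal sketch in Python, assuming the $1/$5, $3/$15, and $5/$25 rates quoted above. The function name `request_cost`, the dictionary keys, and the example token counts are illustrative labels introduced here, not API identifiers or figures from any source.

```python
# Rates in USD per million tokens as (input, output), taken from the snippet above.
# The dictionary keys are informal labels for this sketch, not API model identifiers.
CLAUDE_RATES = {
    "haiku-4.5": (1.00, 5.00),
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.7": (5.00, 25.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at flat per-token rates."""
    in_rate, out_rate = CLAUDE_RATES[model]
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200K input tokens and 10K output tokens per request.
    for name in CLAUDE_RATES:
        print(f"{name}: ${request_cost(name, 200_000, 10_000):.4f}")
```

The sketch deliberately ignores prompt caching and batch discounts, so it gives an upper bound on list-price cost rather than an expected bill.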

- [15] Claude Opus 4.7: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com


- [16] Claude Opus 4.7 and Every Anthropic Model Reviewed - Web Wallahwebwallah.in

One million tokens means Claude could now process several full-length novels, an entire codebase, or years of company emails in a single conversation. Norway’s $2.2 trillion sovereign wealth fund adopted Opus 4.6 to screen its portfolio for ESG risks. Claude Sonnet 4.6 Another historic moment: 70% of developers in evaluations preferred Sonnet 4.6 over Opus 4.5 – the previous generation’s flagship. Computer use accuracy hit 94% on insurance industry benchmarks. Microsoft integrated it into Microsoft 365 Copilot, bringing Claude to hundreds of millions of enterprise users. ## Claude Opus 4.7 –…

- [17] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safetymashable.com

Anthropic has been shipping products and making news at a blistering pace in 2026, and on Thursday, the AI company announced the launch of Claude Opus 4.7. Claude Opus 4.7 is Anthropic's most intelligent model available to the general public. Notably, Anthropic said in a press release that Opus 4.7 is not as powerful as Claude Mythos, which Anthropic deemed too dangerous for public release. Claude Opus is a family of hybrid reasoning models capable of multi-step reasoning and advanced coding. Until the announc…

- [18] 19 Claude Opus 4.7 Insights You Wouldn't Get From the Headlinesreddit.com

19 Claude Opus 4.7 Insights You Wouldn’t Get From the Headlines | AIExplained (posted in r/singularity)

- [19] Opus 4.7 is 50% more expensive with context regression?! - Redditreddit.com

Opus 4.7 (Max) and Opus 4.6 (64K) scores on the MRCR v2 (8-needle) context benchmark. 256K: Opus 4.6 91.9%, Opus 4.7 59.2%. 1M: Opus 4.6 78.3%, Opus 4.7 32.2%.

- [20] Claude Opus 4.7 benchmarks : r/singularity - Redditreddit.com

The model also has substantially better vision: it can see images in greater resolution. It's more tasteful and creative when completing

- [21] Comprehensive Guide to DeepSeek V4 API Pricing and Featuresskywork.ai


- [22] DeepSeek V4 AI Model Launch Guide 2026: What You Need to Knowvertu.com

Conclusion The impending launch of the DeepSeek V4 AI Model in 2026 marks a transformative moment, underscored by its groundbreaking memory architecture, expansive context window, and unparalleled coding prowess. This DeepSeek V4 AI Model Complete Guide 2026 is your essential roadmap to understanding and harnessing this revolutionary technology. Prepare for an AI that redefines efficiency and innovation. To capitalize on DeepSeek V4's capabilities, developers and businesses must proactively begin planning integration strategies now. Familiarize yourselves with its advanced features to unlo…

- [23] DeepSeek V4 API Pricing (March 2026): $0.30/$0.50 per 1M Tokensdevtk.ai

About DeepSeek V4 DeepSeek V4 is a large language model by DeepSeek. It features a 1.0M token context window with up to 16K tokens of output per request. The model supports 3 capabilities: text, function_calling, structured_output. At $0.3 per million input tokens and $0.5 per million output tokens, DeepSeek V4 is positioned as a cost-effective option in the DeepSeek lineup. Use our Token Counter to estimate how many tokens your prompts use, and our Pricing Calculator to compare costs across all models. ## DeepSeek V4 Key Details [...] Context Window 1.0M tokens ## Specifications | | | -…

- [24] DeepSeek V4 Guide: Engram Memory, Training Data Strategy ...kili-technology.com

What's the Current Release Status? As of mid-March 2026, DeepSeek V4 has not been officially released. A "V4 Lite" appeared briefly on DeepSeek's platform on March 9, 2026, suggesting an incremental rollout strategy. Dataconomy, citing Chinese tech outlet Whale Lab, reports an April 2026 launch. The Financial Times previously reported a March release. Multiple earlier launch dates — including mid-February 2026 around Lunar New Year — have passed without a full release. What is publicly available are the foundational papers. The Engram paper was published with open-source code. The mHC pape…

- [25] DeepSeek V4 Preview Release | DeepSeek API Docsapi-docs.deepseek.com

DeepSeek API Docs, News: DeepSeek-V4 Preview Release (2026/04/24); DeepSeek-V3.2 Release (2025/12/01); DeepSeek-V3.2-Exp Release (2025/09/29); DeepSeek V3.1 Update 2025/0…

- [26] DeepSeek V4 SWE-bench Score: The Ultimate Guide to the New AI ...skywork.ai

Page outline (partial): Future Innovations; 6. Implications for Users and AI Workers (The End of "Vibe Coding"; Economic Disruption); 7. Frequently Asked Questions (Q1: Is DeepSeek V4 officially released? Q2: Is the DeepSeek V4 SWE-bench score of 83.70% verified? Q3: How does the 1M context window actually perform? Q4: Can I run DeepSeek V4 locally?); References.

- [27] DeepSeek-V4 Preview: Million-Token Context & Agent ...atlascloud.ai

or `deepseek-v4-flash`. Both models support a maximum context length of 1M tokens and offer both non-thinking and thinking modes. In thinking mode, a `reasoning_effort` parameter can be set to `high` or `max`. For complex agentic workflows, thinking mode with `max` intensity is recommended. ⚠️ Deprecation notice: The legacy model names `deepseek-chat` and deeps…

- [28] DeepSeek-V4 Release: 1M Context Cost Plunges 73%, Inference Efficiency Transformed - BigGo Financefinance.biggo.com

| Benchmark | V4 Pro | V4 Flash | Opus 4.6 | GPT 5.4 xHigh | Gemini 3.1 Pro High | --- --- --- | | Codeforces Elo | 3206 | 3052 3168 | 3052 | | Apex Shortlist | 90.2 85.9 | 78.1 | 89.1 | | IMOAnswerBench | 89.8 - | 91.4 | SWE Verified | 80.6 80.8 - | | Toolathlon | 51.8 47.2 | 54.6 | MRCR 1M | 83.5 92.9 76.3 | | CorpusQA 1M | 62.0 71.7 - | | SimpleQA Verified | 57.9 - 75.6 | | HLE (Frontier Science Reasoning) | 37.7 - - | Math and competitive reasoning are V4 Pro's standout dimensions: Codeforces Elo 3206 is the highest among the four; Apex Shortlist 90.2 exceeds Opus 4.6's 85.9 and GPT 5.4's…

- [29] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performancellm-stats.com

Output: $3.48/M. Throughput: 32 tok/s. Parameters: 1.6T. Arena performance: #64 Websites. Leaderboard rankings: #5 Math, #6 Healthcare, #7 Coding, #7 Search, #7 Tool Calling, #9 Reasoning, #9 Legal, #10 Finance, #20 Vision, #33 Long Context. Quality Tracker: +0.06σ (8 votes; 7d +0.06σ, 30d +0.06σ); by category: Websites +0.18σ (5), 3D +0.00σ (1), playground-chat +0.00σ (1), music +0.00σ (1). DeepSeek-V4-Pro-Max Performance Across Datasets: scores sourced from the model's scorecard, paper, or official blog posts. llm-stats.com, Sat Apr 25…

- [30] Models & Pricing - DeepSeek API Docsapi-docs.deepseek.com

See Thinking Mode for how to switch. Context length: 1M. Max output: maximum 384K. Features, listed per model column: JSON Output ✓ / ✓; Tool Calls ✓ / ✓; Chat Prefix Completion (Beta) ✓ / ✓; FIM Completion (Beta) non-thinking mode only / non-thinking mode only. Pricing per 1M tokens, listed per model column: input (cache hit) $0.028 / $0.145; input (cache miss) $0.14 / $1.74; output $0.28 / $3.48. The model names `deepseek-chat` and `deepseek-reasoner` will be deprecated in the future. For compatibility, they correspond to the non-thinking mode and thinking mode of `deepseek-v4-flash`, respectively. Deduction Rules: The expense = number of tokens × price. The corr…

- [31] DeepSeek V4 Cost Per Token: Complete Pricing Guide & Analysisskywork.ai


- [32] DeepSeek-V4-Flash-Max: Pricing, Benchmarks & Performancellm-stats.com


- [33] DeepSeek V4 API: Pricing, Benchmarks & 1M Context - EvoLink.AIevolink.ai

DeepSeek V4 Flash API: DeepSeek V4 Flash is the fast general-purpose tier of the V4 series. 1M-token context, optional thinking mode, and an order of magnitude lower cost than Claude Sonnet, callable via OpenAI or Anthropic endpoints on EvoLink. Model types: DeepSeek V4 Flash (selected), DeepSeek V4 Pro. Price: $0.147 (~10 credits) per 1M input tokens; $0.294 (~20 credits) per 1M output tokens; $0.029 (~2 credits) per 1M cache read tokens. Highest stability with guaranteed 99.9% uptime. Recommended for production environments. Use the same API endpoint for all versions. O…

- [34] DeepSeek V4 Released: What's New in the Latest Model (2026)sitepoint.com

No official V4 training methodology documentation exists yet. Benchmark scores on reasoning-heavy evaluations would reflect these changes. Apr 17, 2026

- [35] DeepSeek V4: Architecture, Benchmarks, and API Guide (2026)morphllm.com

March 3, 2026. TL;DR: DeepSeek V4 launches this week. It is a 1-trillion-parameter MoE model with a 1M-token context window, three new architectural techniques, and native multimodal support. Pre-release claims put it at 80-85% SWE-bench Verified and 90% HumanEval. API pricing is expected around $0.14/M input tokens, roughly 20-50x cheaper than Western frontier models. The numbers are from internal DeepSeek benchmarks only. In…

- [36] DeepSeek rolls out V4 update with 1 million-token context and ...techxplore.com

DeepSeek says the new V4 open-source models, which include "pro" and "flash" versions, have big improvements in knowledge, reasoning and in

- [37] DeepSeek V4—almost on the frontier, a fraction of the pricesimonwillison.net

Simon Willison’s Weblog, 24th April 2026. Chinese AI lab DeepSeek’s last model release was V3.2 (and V3.2 Speciale) last December. They just dropped the first of their hotly anticipated V4 series in the shape of two preview models, DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both models are 1 million token context Mixture of Experts. Pro is 1.6T t…

- [38] DeepSeek V4 Benchmarks! : r/singularity - Redditreddit.com

V4 pro is impressive, and looks like it will be competitive on codings tasks for its price. V4 flash seems like the real winner though, deepseek ... 1 day ago

- [39] DeepSeek V4 vs GPT-4: Cut AI Costs with Long-Context Powerlinkedin.com

V4 is worth serious consideration if: You are hitting 128K context ceilings often, and you are tired of hacks to work around them. Your engineers spend time hand‑stitching partial model outputs for repo‑wide tasks. You are willing to build some custom tooling around V4 instead of waiting for turnkey IDE plugins. If all your high‑value coding use cases comfortably fit within 16–32K context, and cost is not yet a top‑three constraint, staying with GPT‑4 or Claude for now is defensible. V4’s edge is strongest where long context and cost meet. ### 3. When to self host DeepSeek V4 instead of using…

- [40] Deepseek v4 models are out and here are benchmarks !( 4 versions)reddit.com

Local hosting needs planning but pays off for privacy and removing token limits. Start by testing a compact quantized model on the target hardware, pick a backend that matches your team needs (easy UX vs deep control), and design predictable latency and model-loading behavior so users have a smooth experience.

- [41] "DeepSeek-V4: Towards Highly Efficient Million-Token Context ...reddit.com

DeepSeek's 3 Underrated Advantages: 1M Context (Already Live), New mHC Architecture Paper, and $0.28/M Tokens. 113 · 17 ; How does DeepSeek have

- [42] DeepSeek V4 Pricing: 20–50x Cheaper Than OpenAI (Cost ...medium.com

Check the source: the official DeepSeek API docs and pricing page. They're the canonical rates when you connect directly. If you route through a

- [43] DeepSeek V4 Pro underwhelms on Arena (crowdsourced user ...reddit.com

DeepSeek V4 performs better in long-context scenarios and costs much less. The Arena doesn't really capture these advantages. According to

- [44] V4 pricing... What are your thoughts!!! : r/DeepSeek - Redditreddit.com

Declared officially in its post in Chinese, the price would be lower when new HUAWEI Ascend NPU arrives around late 2026.

- [45] GPT-5.5 Model | OpenAI APIdevelopers.openai.com


- [46] OpenAI Unveils Its New, More Powerful GPT-5.5 Modelnytimes.com

The New York Times, Technology, Friday, April 24, 2026. OpenAI Unveils Its New, More Powerful Model. The maker of ChatGPT is taking a more open approach to cybersecurity than its chief…

- [47] Everything You Need to Know About GPT-5.5 - Vellumvellum.ai

- Cybersecurity capabilities are accelerating faster than safeguards. A 93% cyber range pass rate, combined with a universal jailbreak found in six hours of red-teaming, is the tension that defines this era of AI. 4. The pricing shift favors heavy users. The 2x per-token price increase is real, but the 40% token efficiency claim means high-volume Codex users may only see a ~20% cost increase. Light API users get hit harder. 5. The six-week release cadence is the real signal. GPT-5.4 shipped March 5. GPT-5.5 shipped April 23. OpenAI is not releasing models this fast to win benchmarks — they'r…
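The "~20% cost increase" figure in this snippet follows from multiplying the price change by the share of tokens still consumed: 2 × (1 − 0.40) = 1.2. A minimal sketch of that arithmetic, assuming "40% token efficiency" means 40% fewer billed tokens for the same task and that the saving applies uniformly to input and output. Both function names are hypothetical helpers introduced for illustration.

```python
def relative_cost(price_multiplier: float, token_reduction: float) -> float:
    """Cost of the same task relative to the previous model.

    price_multiplier: new per-token price divided by the old one (2.0 in the snippet).
    token_reduction: fraction of tokens saved on the same task (0.40 in the snippet).
    """
    return price_multiplier * (1.0 - token_reduction)


def break_even_reduction(price_multiplier: float) -> float:
    """Token reduction at which the bill stays flat despite the price change."""
    return 1.0 - 1.0 / price_multiplier


if __name__ == "__main__":
    heavy = relative_cost(2.0, 0.40)  # user who realises the full efficiency gain
    light = relative_cost(2.0, 0.0)   # user who sees no token savings at all
    print(f"heavy user: {heavy:.2f}x, light user: {light:.2f}x")
    print(f"break-even token reduction: {break_even_reduction(2.0):.0%}")
```

Under these assumptions a user who sees no token savings pays the full 2x, which is the sense in which light API users "get hit harder"; a doubled price is only cost-neutral at a 50% token reduction.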

- [48] GPT-5.5 - API Pricing & Providersopenrouter.ai

OpenAI: GPT-5.5 (`openai/gpt-5.5`). Released Apr 24, 2026. 1,050,000 context; $5/M input tokens; $30/M output tokens. GPT-5.5 is OpenAI’s frontier model designed for complex professional workloads, building on GPT-5.4 with stronger reasoning, higher reliability, and improved token efficiency on hard tasks. It features a 1M+ token context window (922K input, 128K output) with support for text and image inputs, enabling large-scale reasoning, cod…

- [49] GPT-5.5 (high) - Intelligence, Performance & Price Analysisartificialanalysis.ai

GPT-5.5 (high): proprietary model, released April 2026. Model summary: Intelligence (Artificial Analysis Intelligence Index), 4 out of 4 units; Speed (output tokens per second), unknown out of 4 units; Input Price (USD per 1M tokens), 4 out of 4 units; Output Price (USD per 1M tokens), 4 out of 4 units; Verbosity (output tokens from Intelligence Index), 3 out of 4 units. GPT-5.5 (high) is amongst the leading models in intelligence, but…

- [50] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwriteappwrite.io

Apr 24, 2026, 8 min, by Atharva Deosthale (Developer Advocate). OpenAI shipped GPT-5.5 on April 23, 2026. Here's a source-backed look at benchmarks, pricing versus GPT-5.4 and Claude Opus 4.7, the system card, and where the model still falls short.

- [51] GPT-5.5: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

GPT-5.5 (OpenAI, Apr 2026, proprietary). GPT-5.5 is OpenAI's smartest model yet, designed for real work across agentic coding, computer use, knowledge work, and early scientific research. It matches GPT-5.4 per-token latency in real-world serving while reaching a much higher…

- [52] OpenAI announces GPT-5.5, its latest artificial intelligence ...cnbc.com

By Ashley Capoot. Key points: OpenAI announced GPT-5.5, its latest AI model that is better at coding, using computers and pursuing deeper research capabilities. The launch comes just weeks after Anthropic unveiled Claude Mythos Preview, its new model with advanced cybersecurity capabilities. GPT-5.5 is rolling out to OpenAI's paid subscribers, including its Plus, Pro, Business and Enterprise users, in ChatGPT and Codex. OpenAI on Thursday announced its…

- [53] OpenAI launched GPT-5.5 on April 23, 2026, giving ChatGPT and ...threads.com

Posted by arabianbusiness (18h): OpenAI launched GPT-5.5 on April 23, 2026, giving ChatGPT and Codex users access to a new AI model built for coding, research, data analysis, document production and software operation. GPT-5.5 is rolling out to Plus, Pro, Business and Enterprise users in ChatGPT and Codex. GPT-5.5 Pro is available to Pro, Business and Enterprise users in ChatGPT.

- [54] OpenAI releases GPT-5.5 amid a shift to rapid-fire AI updatesfortune.com

By Sharon Goldman, AI Reporter. April 23, 2026, 2:13 PM ET. Photo: OpenAI CEO Sam Altman (Anna Moneymaker/Getty Images).

- [55] OpenAI releases GPT-5.5, bringing company one step ... - TechCrunchtechcrunch.com

Mark Chen, chief research officer at OpenAI, said that GPT-5.5 was better at navigating computer work than its predecessors, and also said that the model “shows meaningful gains on scientific and technical research workflows,” noting that the company feels it could really “help expert scientists make progress.” Chen also said it could assist with drug discovery, an area that has shown increased industry interest over the last few years. GPT 5.5 is widely available starting Thursday, according to OpenAI. The company says that the model is depl…

- [56] OpenAI's launch of GPT-5.5 is indicative of the ongoing frontier ...seekingalpha.com

Apr 24, 2026, 10:50 AM ET. By Chris Ciaccia, SA News Editor. This week's announcement by OpenAI (OPENAI) of its latest model, GPT-5.5, is indicative of the ongoing frontier model race, investment firm HSBC said. “LLMs are engaged in a race to del…

- [57] Introducing GPT-5.5 - OpenAIopenai.com

April 23, 2026, Product release. Introducing GPT‑5.5: A new class of intelligence for real work. Table of contents: Model capabilities; Next-generation inference efficiency; Advancing cybersecurity for everyone’s safety; Availability and pricing; Evaluations. Update on April 24, 2026: GPT‑5.5 and GPT‑5.5 Pro are now available in the API. The system card has also been…

- [58] OpenAI unveils GPT-5.5, claims a "new class of intelligence" at ...the-decoder.com

GPT-5.5 Thinking is now available for Plus, Pro, Business, and Enterprise users in ChatGPT. GPT-5.5 Pro is limited to Pro, Business, and Enterprise users. In Codex, GPT-5.5 is available for Plus, Pro, Business, Enterprise, Edu, and Go users with a 400K context window. A fast mode generates tokens 1.5 times faster at 2.5 times the cost. For the API, OpenAI is charging 5 dollars per million input tokens and 30 dollars per million output tokens, with a context window of one million tokens, exactly twice what GPT-5.4 costs at 2.50 and 15 dollars, respectively. GPT-5.5 Pro lands at 30 dollars per…

- [59] OpenAI launches GPT-5.5 as rivals race to build more ...techxplore.com

OpenAI president Greg Brockman says the new GPT-5.5 model can tend to more computer work without human supervision. OpenAI released a new model

- [60] OpenAI rolls out GPT-5.5 with improved contextual ...9to5google.com

Andrew Romero, Apr 23 2026, 12:22 pm PT. OpenAI just announced that ChatGPT is getting a model upgrade to GPT-5.5. The company says the model will bring better results because of changes to how it understands context. OpenAI released another lengthy press release detailing GPT-5.5. The update comes with a few changes over the previous model. It should perform significantly better across various familiar tasks, such as coding, compute…

- [61] Model Drop: GPT-5.5 - by Jake Handyhandyai.substack.com

OpenAI released GPT-5.5 on Thursday morning and pitched it as “a new class of intelligence for real work” plus the next step toward the long-teased Altman / Brockman “superapp.” The model rolls out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex, with GPT-5.5 Pro layered on top for the Pro / Business / Enterprise tiers. The API is not live at launch. OpenAI says it will follow “very soon” at $5 / $30 per million input / output tokens and a 1M context window, double GPT-5.4’s per-token price. Greg Brockman framed the release on the press call as “a real step forward towards t…

- [62] OpenAI launched GPT-5.5 on April 23, 2026, giving ChatGPT and ...facebook.com

ArabianBusiness (17h): OpenAI launched GPT-5.5 on April 23, 2026, giving ChatGPT and Codex users access to a new AI model built for coding, research, data analysis, document production and software operation. GPT-5.5 is rolling out to Plus, Pro, Business and Enterprise users in ChatGPT and Codex. GPT-5.5 Pro is available to Pro, Business and Enterprise users in ChatGPT.

- [63] Introducing GPT-5.5 : r/singularityreddit.com

r/singularity, 17h ago, posted by ShreckAndDonkey123: Introducing GPT-5.5 (link to openai.com).

- [64] OpenAI launched GPT-5.5 on April 23, 2026. This new AI model is ...facebook.com

Artificial Intelligence (19h): OpenAI launched GPT-5.5 on April 23, 2026. This new AI model is more intuitive and better for agents, coding, office tasks, and research. It handles complex jobs with less help, runs faster, and has a 1M token context. GPT-5.5 beats GPT-5.4 in benchmarks but costs 2x more.

- [65] GPT-5.5 Becomes OpenAI's IPO Pitch To Enterprise Customersyoutube.com

AIM Network (299K subscribers), 754 views, Apr 24, 2026. OpenAI's latest GPT 5.5 is poised for a major impact, showcasing 98% accuracy on telecom workflows without prompt engineering. This advancement in AI technology marks a significant shift towards practical enterprise automation, moving beyond b…

- [66] GPT-5.5 - The Most Expensive Model - YouTube (youtube.com)

GPT-5.5 benchmark performance vs open models · SWE-Bench Pro and Terminal-Bench comparisons · API pricing vs open source alternatives.

- [67] How to Use Kimi K2.6: Complete Guide to Moonshot AI's New 1T ... (tosea.ai)

Sources: Kimi K2.6 official launch blog (Moonshot AI, April 20, 2026); Moonshot AI releases Kimi-K2.6 model with 1T parameters, attention optimizations (SiliconANGLE); Moonshot AI Releases Kimi K2.6, Beats Top US Models On Some Benchmarks (OfficeChai); Kimi K2.6 Has Arrived: An Open-Weight Powerhouse for Agentic Work (Kilo.ai); Kimi Code K2.6 Preview: What Developers Need to Know (buildfastwithai); Kimi K2.6 Developer Guide: Benchmarks, API & Agent Swarm (Lushbinary); Kimi 2.6 Released: 256K Context, Native Video, Beats Claude Opus 4.6 (ofox.ai). [...] On April 20, 2026, Moonshot AI r…

- [68] Kimi 2.6 Benchmarks 2026: Scores, Rankings & Performance (benchlm.ai)

What is the context window size of Kimi 2.6? Kimi 2.6 has a context window of 256K, which determines how much text it can process in a single interaction. [...] Last updated: April 20, 2026. [...]

- [69] Kimi API Pricing Calculator & Cost Guide (Apr 2026) - CostGoat (costgoat.com)

Why is Kimi K2 so cheap? Kimi K2 uses a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters but only 32 billion activated per request. This means you get frontier-model quality while Moonshot AI pays for less compute. Plus, automatic context caching reduces costs by 75% on repeated content. The result: premium performance at $0.60/$2.50 per million tokens. ### Does Kimi API support caching? Yes, Kimi API has automatic context caching. Cached tokens are charged at just $0.15 per million tokens (75% savings on K2 models). The cache is automatically managed - no configurat…

- [70] Kimi K2.6 - Intelligence, Performance & Price Analysis (artificialanalysis.ai)

Open weights model, released April 2026. Model summary metrics: Intelligence (Artificial Analysis Intelligence Index), Speed (output tokens per second), Input Price (USD per 1M tokens), Output Price (USD per 1M tokens), Verbosity (output tokens from Intelligence Index). Comparison summary: Kimi K2.6 is amongst the leading models in intelligence and well priced when comparing to other open weight models of similar size. The model supports text, image, and video input, outputs text, and has a 256k tokens context window.…

- [71] Kimi K2.6 Has Arrived: An Open-Weight Powerhouse for Agentic Work (blog.kilo.ai)

Kilo Blog, by Ari, Apr 20, 2026. Subtitle: Moonshot's new model is optimized for OpenClaw and KiloClaw. Moonshot AI just dropped their latest model, Kimi K2.6, and it’s an absolute powerhouse for agentic workflows. Even better? It’s completely open-weight from release day. Moonshot AI is starting to feel like less of a “moonshot” and more of a sure thing. The lab’s previous big release, Kimi K2.5, was an immediate hit on Kilo. Our users praised its ability to reason through complex codebases, suggest refactoring strategies, and maintain…

- [72] Kimi K2.6 Model Specs, Costs & Benchmarks (April 2026) | Galaxy.ai (blog.galaxy.ai)

Kimi K2.6, developed by MoonshotAI, features a context window of 262.1K tokens. The model costs $0.80 per million tokens for input and $3.50 per million tokens for output. It was released on April 20, 2026, and has achieved impressive scores in various benchmarks. [...]

- [73] Kimi K2.6 on GMI Cloud: Architecture, Benchmarks & API Access (gmicloud.ai)

Kimi K2.6: Architecture, Benchmarks, and What It Means for Production AI (April 22, 2026). Moonshot AI just open-sourced Kimi K2.6, and the results speak for themselves. It tops SWE-Bench Pro, runs 300 parallel sub-agents, and fits on 4x H100s in INT4. Built for autonomous coding, agent orchestration, and full-stack design. What Kimi K2.6 is: Kimi K2.6 is an open-source, native multimodal agentic model released by Moonshot AI on April 20, 2026, under a Modified MIT License. It is built for three things: long-horizon autonomous coding, coding-driven UI and full-stack design, and agent s…

- [74] Kimi K2.6 pricing & specs - Moonshot AI (Kimi) | CloudPrice (cloudprice.net)

Kimi K2.6 is Moonshot AI (Kimi)'s language model with a 262K context window, available from 3 providers, starting at $0.600 / 1M input and $2.80 / 1M output. An open-source native multimodal agentic LLM specializing in long-horizon coding, coding-driven design, autonomous execution, and swarm-based task orchestration. Specs [...]: Intelligence Index 53.9 #4; Coding Index 47.1 #12; GPQA 0.9 #5; HLE 0.4 #8; IFBench 0.8 #12; Time to First Token 0.78s #276; SciCode 0.5 #6; LCR 0.7 #19; …

- [75] Kimi K2.6: The new leading open weights model - Artificial Analysis (artificialanalysis.ai)

➤ Multimodality: Kimi K2.6 supports Image and Video input and text output natively. The model’s max context length remains 256k. Kimi K2.6 has significantly higher token usage than Kimi K2.5. Kimi K2.5 scores 6 on the AA-Omniscience Index, primarily driven by low hallucination rate. [...]

- [76] Moonshot AI Models – Pricing & Specs | Requesty | Requesty (requesty.ai)

Moonshot AI: Chinese AI company focused on large language models.

| Model | Context | Max Output | Input/1M | Output/1M | Capabilities |
| --- | --- | --- | --- | --- | --- |
| kimi-k2.6 | 262K | 262K | $0.95 | $4.00 | 👁🧠🔧⚡ |
| kimi-k2.5 | 262K | 262K | $0.60 | $3.00 | 👁🧠🔧⚡ |
| kimi-k2-thinking-turbo | 131K | — | $0.60 | $2.50 | 🔧 |
| kimi-k2-0905-preview | 131K | — | $0.60 | $2.50 | 🔧 |
| kimi-k2-thinking | 131K | — | $0.60 | $2.50 | 🔧 |
| kimi-k2-turbo-preview | 131K | — | $1.20 | $5.00 | 🔧 |
| kimi-k2-0711-preview | 131K | — | $0.60 | $2.50 | 🔧 |

- [77] MoonshotAI: Kimi K2.6 – Effective Pricing | OpenRouter (openrouter.ai)

moonshotai/kimi-k2.6: released Apr 20, 2026; 262,144 context; $0.60/M input tokens; $2.80/M output tokens. Kimi K2.6 is Moonshot AI's next-generation multimodal model, designed for long-horizon coding, coding-driven UI/UX generation, and multi-agent orchestration. It handles complex end-to-end coding tasks across Python, Rust, and Go, and can convert prompts and visual inputs into production-ready interfaces. Its agent swarm architecture scales to hundreds of parallel sub-agents for autonomous task decomposition - delivering documents, websites, and spreadsheets in a singl…

- [78] moonshotai/Kimi-K2.6 - Hugging Face (huggingface.co)

| OSWorld-Verified | 73.1 | 75.0 | 72.7 63.3 | | Coding | | Terminal-Bench 2.0 (Terminus-2) | 66.7 | 65.4 | 65.4 | 68.5 | 50.8 | | SWE-Bench Pro | 58.6 | 57.7 | 53.4 | 54.2 | 50.7 | | SWE-Bench Multilingual | 76.7 77.8 | 76.9 | 73.0 | | SWE-Bench Verified | 80.2 80.8 | 80.6 | 76.8 | | SciCode | 52.2 | 56.6 | 51.9 | 58.9 | 48.7 | | OJBench (python) | 60.6 60.3 | 70.7 | 54.7 | | LiveCodeBench (v6) | 89.6 88.8 | 91.7 | 85.0 | | Reasoning & Knowledge | | HLE-Full | 34.7 | 39.8 | 40.0 | 44.4 | 30.1 | | AIME 2026 | 96.4 | 99.2 | 96.7 | 98.3 | 95.8 | | HMMT 2026 (Feb) | 92.7 | 97.7 | 96.2 | 94.7 | 8…

- [79] Kimi AI: Complete Guide to Features, Pricing & How It ... (nxcode.io)

Kimi AI: Complete Guide to Features, Pricing & How It Compares (2026) March 2026 -- Kimi has gone from a niche Chinese AI chatbot to a globally recognized platform in under two years. With the release of Kimi K2.5 in January 2026, Moonshot AI delivered an open-weight model that matches or exceeds frontier competitors on key benchmarks -- at a fraction of the cost. Cursor recently confirmed that its Composer 2 coding model was built on top of Kimi K2.5, validating the model's quality at the highest level of developer tooling. This guide covers everything you need to know: what Kimi is, what…

- [80] Kimi K2.6 API by MOONSHOTAI - Competitive Pricing - Atlas Cloud (atlascloud.ai)

Model listings: Kimi-K2-Thinking (context 262.14K, input $0.6/M tokens, output $2.5/M tokens, max output 262.14K); Kimi-K2-Instruct-0905 (context 262.14K, input $0.6/M tokens, output $2.5/M tokens, max output 8.19K); Kimi-K2-Instruct (131.1K…

- [81] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

Kimi K2.6 (Moonshot AI · Apr 2026 · Modified MIT License). Kimi K2.6 is Moonshot AI's open-source, native multimodal agentic model focused on state-of-the-art coding, long-horizon execution, and agent swarm capabilities. It scales horizontally to 300 sub-agents…

- [82] MoonshotAI: Kimi K2.6 Review (designforonline.com)

Performance indices (source: Artificial Analysis). This model was released recently. Independent benchmark evaluations are typically completed within days of release; these figures are preliminary and are likely to be updated as testing is finalised. [...] Benchmark data from Artificial Analysis and Hugging Face. [...] Model information: OpenRouter ID moonshotai/kimi-k2.6; Provider moonshotai; Release Date April 20, 2026; …

- [83] Kimi AI Pricing 2026: Plans, Membership Cost & API Token Rates (kimik2ai.com)

Most LLM billing has two meters: input tokens (your prompt, system instructions, conversation history, retrieved documents) and output tokens (the model’s generated response). Output is often priced higher because generating tokens is compute-heavy and can’t be cached as easily. A real-world reference point (OpenRouter listing): the OpenRouter listing for Kimi K2.5 shows the created date (helpful for version context), the context window, and the input/output price per million tokens. Even if you don’t use OpenRouter, these listings help you sanity-check the market. Another market snapshot (ArtificialAnalysis)…

- [84] Kimi K2.6 by Moonshot AI - AI SDK (ai-sdk.dev)

Context: 262,000 tokens; Input Pricing: $0.95 / million tokens; Output Pricing: $4.00 / million tokens.

- [85] I Tested Kimi K2.6 vs GLM-5.1 on 15 Real Coding Tasks - Medium (medium.com)

I Tested Kimi K2.6 vs GLM-5.1 on 15 Real Coding Tasks: The 0.2-Point SWE-Bench Gap Hides a 43% Price Gap (and the "Winner" Codes 11 Points Better), by Chew Loong Nian - AI ENGINEER, Apr 2026, 11 min read. [...] dropped GLM-5.1 under MIT, a 754B-parameter model trained on 100,000 Huawei Ascend 910B chips with zero NVIDIA GPUs. Both claimed the #1 spot on SWE-Bench Pro inside the same two-week window. [...]

- [86] Moonshot AI Unveils Kimi K2.6, an Open-Weight Model Built for ... (linkedin.com)

Published Apr 20, 2026. Moonshot AI has released Kimi K2.6 as an open-weight model, positioning it directly against GPT-5.4 and Claude Opus 4.6 on coding benchmarks while emphasizing large-scale agent orchestration as its main differentiator. The model is designed not just for strong benchmark performance, but for extended autonomous execution, including the ability to run up to 300 agents in parallel. [...] Kimi K2.6 is now availabl…

- [87] Kimi K2.6: What Moonshot AI's New Open Source Model Means for ... (reddit.com)