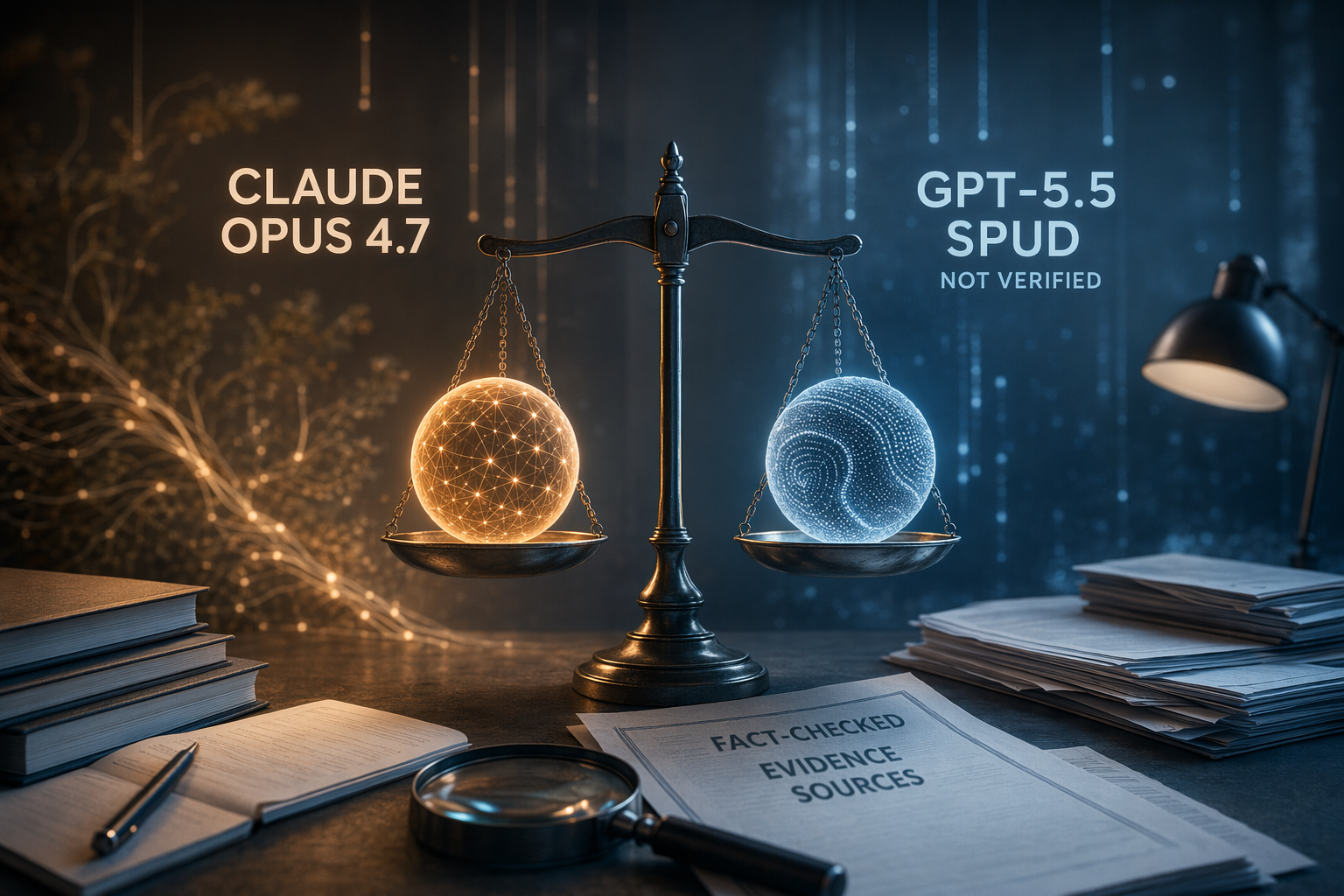

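The short answer first: on the surface, the question is whether Claude or Spud hallucinates less, but the evidence forces an earlier question: can both model names be verified at all?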

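Anthropic's documentation lists Claude Opus 4.7 along with the API identifier claude-opus-4-7. By contrast, the official OpenAI material available for checking here covers only GPT-5, GPT-5 mini, GPT-5.2-Codex, and GPT-5.4 prompt guidance; none of it shows a public model called GPT-5.5 Spud [12][16][23][25][26][29][45]. So the responsible answer is neither "Claude wins" nor "Spud wins". It is this: Claude Opus 4.7 can be evaluated, but GPT-5.5 Spud should not, for now, be treated as a verified OpenAI model name that can be benchmarked, unless an official release, model page, API documentation, or equivalent evidence supports it.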

What the evidence supports

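| Question | Evidence-supported answer |
|---|---|
| Is Claude Opus 4.7 verified? | Yes. Anthropic's documentation lists Claude Opus 4.7, and the announcement states that developers can use claude-opus-4-7 through the Claude API. |
| Is GPT-5.5 Spud verified as an official OpenAI model? | Not by the official OpenAI sources provided here. The relevant official material documents GPT-5, GPT-5 mini, GPT-5.2-Codex, and GPT-5.4 prompt guidance. |
| Where does the name Spud appear in the sources? | Mainly in Reddit posts and a feature-request discussion on the OpenAI Developer Community, not in formal release notes or API model documentation. |
| Is there a hallucination benchmark comparing Claude Opus 4.7 with GPT-5.5 Spud? | The sources provide no head-to-head comparison using the same questions and the same scoring method; a fair test should score abstention separately from factual errors. |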

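This judgment does not rule out a Spud-related model appearing in the future or existing in a private test environment. It only means that the cited material is not enough to treat GPT-5.5 Spud as an official OpenAI model, let alone to declare a winner on hallucination control.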

How far does the evidence for Claude Opus 4.7 go?

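Anthropic's strongest evidence is product and developer documentation, not a cross-vendor hallucination leaderboard. Anthropic's announcement states that developers can use claude-opus-4-7 through the Claude API [16], and the documentation also says Claude Opus 4.7 introduces task budgets [12].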

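However, task budgets are a product control feature, not a public, calibrated uncertainty benchmark. In other words, they can affect how much resource the model spends on a task, but that alone does not show how accurately the model admits "I don't know" when it is unsure of a fact.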

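More directly relevant to honesty is Mashable's report citing Anthropic's Opus 4.7 system card: Claude Opus 4.7 has a 91.7% honesty rate on MASK, and it hallucinates or panders to users less often than earlier Anthropic models and other frontier AI models [14]. This is useful for assessing honesty, but it still cannot answer whether Claude or Spud is better, because it is not a same-setting, same-scoring comparison against a verified GPT-5.5 Spud model.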

What do the OpenAI sources actually verify?

The official OpenAI material provided here verifies GPT-5 series items such as GPT-5, GPT-5 mini, GPT-5.2-Codex, and GPT-5.4 prompt guidance [23][25][26][29][45]. Spud, in this set of sources, comes mainly from Reddit posts and a feature-request thread on the OpenAI Developer Community [7][8][10][28].

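Community posts can be leads on early rumours or user demand, but they are not equivalent to an official model page, model card, API identifier, or formal release announcement. For developers, buyers, or teams running a PoC, the distinction matters: an unverified name should not go straight into a model comparison table.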

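OpenAI's own account of hallucination is more useful for the question of how to design the evaluation. OpenAI notes that common training and evaluation procedures reward models for guessing rather than for acknowledging uncertainty; it also says models should indicate uncertainty or ask for clarification rather than confidently provide incorrect information [3].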

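OpenAI's SimpleQA example also shows that accuracy alone can be very misleading. The example lists gpt-5-thinking-mini at 52% abstention, 22% accuracy, and 26% errors; o4-mini is at 1% abstention, 24% accuracy, and 75% errors [3]. The former answers less often but makes far fewer errors in this example [3]. For high-risk products, or products that need trustworthy output, this trade-off of knowing when not to guess often matters more than answering every question confidently.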
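To make the trade-off concrete, the quoted SimpleQA figures can be re-expressed as accuracy over attempted questions only. This is a minimal sketch: the `Rates` type, the `accuracy_when_attempted` helper, and the derived metric itself are illustrative assumptions, not something the OpenAI source reports. Only the six percentages come from the example cited above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Percentages of all questions, as quoted in the SimpleQA example [3]."""
    abstained: float
    correct: float
    wrong: float


def accuracy_when_attempted(r: Rates) -> float:
    """Share of attempted (non-abstained) questions that were answered correctly.

    Hypothetical derived metric, used here only for illustration.
    """
    attempted = r.correct + r.wrong
    if attempted == 0:
        raise ValueError("model attempted no questions")
    return r.correct / attempted


RATES = {
    "gpt-5-thinking-mini": Rates(abstained=52, correct=22, wrong=26),
    "o4-mini": Rates(abstained=1, correct=24, wrong=75),
}

if __name__ == "__main__":
    for name, r in RATES.items():
        print(
            f"{name}: accuracy {r.correct}%, error rate {r.wrong}%, "
            f"accuracy when attempted {accuracy_when_attempted(r):.1%}"
        )
```

Read this way, the two models sit within two points of each other on raw accuracy, while the share of attempted answers that are correct differs widely, which is why accuracy should not be reported on its own.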

What really needs comparing is calibrated uncertainty

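An AI hallucination, put simply, is when a model sounds convincing but the content is wrong, unfounded, or goes beyond the evidence. Controlling hallucination does not mean telling the model to refuse everything. A useful model should do three things: answer when the evidence is sufficient; ask a follow-up question when the request is unclear; and abstain when an answer cannot be supported. That is what calibrated uncertainty means in practice.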

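Research points in the same direction, with limits worth noting. A 2024 study reports that uncertainty-based abstention can improve correctness, hallucination, and safety outcomes in question-answering settings [1][4]. I-CALM defines epistemic abstention as abstaining on factual questions that have verifiable answers, and notes that current LLMs still fail to abstain when they should [54]. Research on behaviourally calibrated reinforcement learning likewise explores how to encourage models to admit they do not know and abstain when uncertain [61].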

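Broader surveys treat uncertainty quantification as a hallucination detection tool, and describe calibrated uncertainty as helping users judge when to trust a model's answer, hand it off to a human, or verify it again [53][55]. The key word is "calibrated": a model that constantly says it does not know may be safe but impractical; a model that never abstains may seem helpful but carries more risk.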
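The answer, ask, or abstain behaviour described in this section can be pictured as a simple decision rule. The sketch below is purely illustrative: the `confidence` score, the `question_is_clear` flag, and the `0.75` default threshold are all hypothetical inputs, and none of the cited sources specifies how a model or a product should obtain them.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "answer"
    ASK = "ask_clarifying_question"
    ABSTAIN = "abstain"


def choose_action(
    confidence: float,
    question_is_clear: bool,
    threshold: float = 0.75,  # hypothetical cut-off, not taken from any source
) -> Action:
    """Pick one of the three behaviours from an assumed confidence estimate."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if not question_is_clear:
        # Unclear request: ask before committing to an answer.
        return Action.ASK
    if confidence >= threshold:
        # Evidence judged sufficient: answer.
        return Action.ANSWER
    # Clear question, but the answer cannot be supported: abstain.
    return Action.ABSTAIN


if __name__ == "__main__":
    print(choose_action(0.9, question_is_clear=True))
    print(choose_action(0.9, question_is_clear=False))
    print(choose_action(0.4, question_is_clear=True))
```

In this toy rule, raising `threshold` produces the "safe but impractical" model that abstains constantly, and lowering it to zero produces the model that never abstains. Calibration is the question of whether the confidence number matches how often the answers are actually right.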

If you really want a fair comparison of Claude and OpenAI models, how should you do it?

- Use official model IDs. On the Claude side, test claude-opus-4-7; on the OpenAI side, use a documented model such as GPT-5 or GPT-5 mini, not the unverified Spud label [16][23][25][29].

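- Mix the question set. Do not include only questions with clear answers; include answerable questions, questions with insufficient information, ambiguous questions, and unanswerable questions. Abstention research is concerned with exactly this: whether a model knows to decline to guess when uncertainty is high or a safe answer is not possible [1][4].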

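- Score abstention separately. Count correct answers, wrong answers, correct abstentions, and wrong abstentions. The abstention survey lists separate metrics such as abstention accuracy, precision, and recall [68].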

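- Separate factual uncertainty from safety refusals. Refusing harmful content and saying the evidence for a factual answer is insufficient are two different behaviours; I-CALM focuses on epistemic abstention on factual questions with verifiable answers [54].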

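- Report accuracy, error rate, and abstention rate together. OpenAI's SimpleQA example shows that a high-abstention model can have similar accuracy but a much lower error rate [3].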

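- Keep the test environment consistent. Retrieval, browsing, tool use, context length, system prompt, and prompts all affect results. If one model has extra information and the other does not, you are measuring the whole setup, not just model capability.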
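The scoring rules in the list above can be sketched as a small tally over graded results. This is a sketch under stated assumptions, not the method of any cited paper: the `Result` record and `score` function are hypothetical, and abstention precision and recall are computed here with the ordinary classification definitions, treating "abstain" as the positive class, which may differ in detail from the survey's definitions [68]. Answering a question that should have been declined is counted as a wrong answer.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass(frozen=True)
class Result:
    should_abstain: bool                   # gold label: unanswerable or underspecified
    abstained: bool                        # the model declined to answer
    answer_correct: Optional[bool] = None  # graded only for answered, answerable items


def score(results: Iterable[Result]) -> Dict[str, float]:
    """Tally the four outcomes and derive the rates that should be reported together."""
    items = list(results)
    n = len(items)
    if n == 0:
        raise ValueError("no results to score")

    correct_answers = sum(
        1 for r in items
        if not r.abstained and not r.should_abstain and r.answer_correct is True
    )
    correct_abstentions = sum(1 for r in items if r.abstained and r.should_abstain)
    wrong_abstentions = sum(1 for r in items if r.abstained and not r.should_abstain)
    # Everything else: incorrect answers, plus answers given when abstaining was right.
    wrong_answers = n - correct_answers - correct_abstentions - wrong_abstentions

    abstained_total = correct_abstentions + wrong_abstentions
    should_abstain_total = sum(1 for r in items if r.should_abstain)

    return {
        "accuracy": correct_answers / n,
        "error_rate": wrong_answers / n,
        "abstention_rate": abstained_total / n,
        "abstention_precision": (
            correct_abstentions / abstained_total if abstained_total else 0.0
        ),
        "abstention_recall": (
            correct_abstentions / should_abstain_total if should_abstain_total else 0.0
        ),
    }


if __name__ == "__main__":
    sample = [
        Result(should_abstain=False, abstained=False, answer_correct=True),
        Result(should_abstain=False, abstained=False, answer_correct=False),
        Result(should_abstain=True, abstained=True),
        Result(should_abstain=False, abstained=True),
        Result(should_abstain=True, abstained=False),
    ]
    for metric, value in score(sample).items():
        print(f"{metric}: {value:.2f}")
```

Running the same `score` function over each model's results, collected with identical prompts, tools, and context settings, keeps the comparison about the models and not the setup. Safety refusals would need their own label and are deliberately left out of this tally.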

FAQ

Does GPT-5.5 Spud really exist?

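On the evidence provided here, it cannot be confirmed as an official OpenAI model. The official OpenAI sources document GPT-5, GPT-5 mini, GPT-5.2-Codex, and GPT-5.4 prompt guidance; Spud appears in Reddit posts and a community feature-request discussion [7][8][10][23][25][26][28][29][45].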

Does Claude Opus 4.7 hallucinate less than GPT-5.5 Spud?

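The available sources are not enough for a rigorous answer. Claude Opus 4.7 is backed by official documentation [12][16], and a secondary report mentions a 91.7% MASK honesty rate [14]; but there is no verified GPT-5.5 Spud target here, and no shared benchmark run on both [7][8][10][28][68].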

What should buyers or developers compare?

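Compare Claude Opus 4.7 with a documented OpenAI model under the same tasks, tools, prompts, and scoring rules. The metrics should not be accuracy alone; look at error rate and abstention behaviour as well [3][68].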

Bottom line

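Do not draw a "Claude wins" or "Spud wins" hallucination conclusion from the current evidence. Only three conclusions are supported: Claude Opus 4.7 has official documentation; GPT-5.5 Spud is not verified in the cited official OpenAI material; and an evaluation of hallucination control should reward calibrated uncertainty above all, including correctly abstaining when an answer cannot be supported [3][12][16][23][25][29][45][68].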