我想研究并比较 GPT-5.5、Claude Opus 4.7、DeepSeek V4 和 Kimi K2.6 的基准测试表现。

可以,但要分开看。就我目前核实到的公开数字,四家里能直接横向对齐的共享基准其实很少;最清楚的一项是 Terminal Bench 2.0:GPT 5.5 为 82.7%,Claude Opus 4.7 为 69.4%,DeepSeek V4 Pro Max 为 67.9%,Kimi K2.6 Thinking 为 66.7%。[5][12] 这些数字主要来自 OpenAI 的 GPT 5.5 发布页和 DeepSeek V4 Pr...

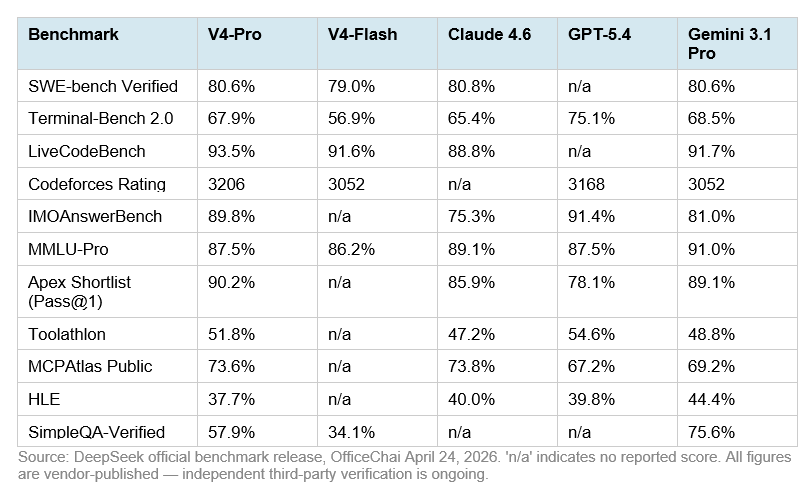

可以,但要分开看。就我目前核实到的公开数字,四家里能直接横向对齐的共享基准其实很少;最清楚的一项是 Terminal Bench 2.0:GPT 5.5 为 82.7%,Claude Opus 4.7 为 69.4%,DeepSeek V4 Pro Max 为 67.9%,Kimi K2.6 Thinking 为 66.7%。[5][12] 这些数字主要来自 OpenAI 的 GPT 5.5 发布页和 DeepSeek V4 Pro 模型卡中的厂商对比表,所以可以做“有保留的比较”,但不足以稳妥给出一个统一总排名。[5][12] Key findings | 可直接对齐的共享基准 | GPT 5.5 | Claude Opus 4.

要点

- | 可直接对齐的共享基准 | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4-Pro Max | Kimi K2.6 Thinking |

- |---|---:|---:|---:|---:|

- | Terminal-Bench 2.0 | 82.7% | 69.4% | 67.9% | 66.7% |

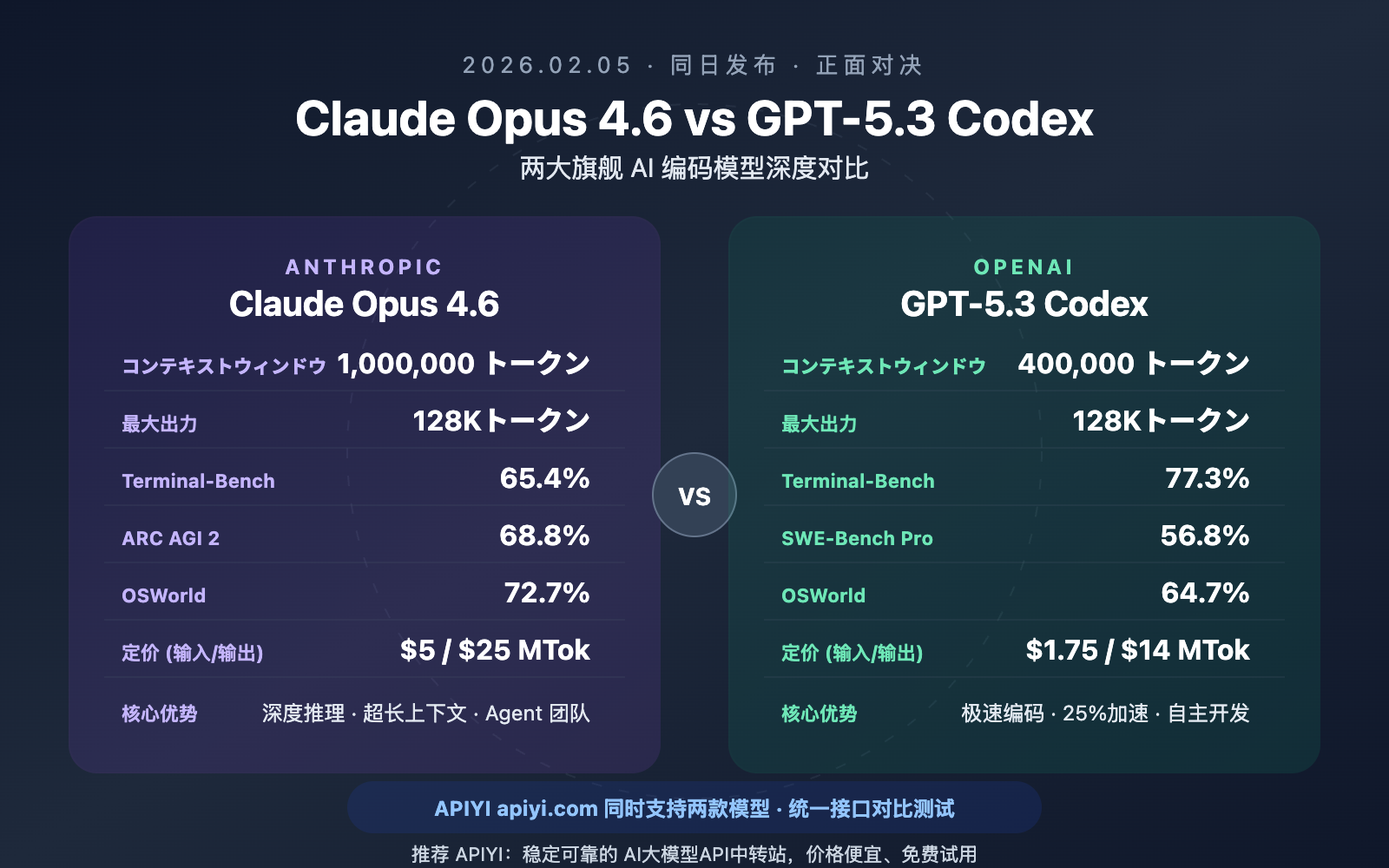

- 在 OpenAI 官方对比表中,凡是同时给出 GPT-5.5 和 Claude Opus 4.7 分数的项目,GPT-5.5 都更高,包括 Terminal-Bench 2.0(82.7% vs 69.4%)、GDPval wins or ties(84.9% vs 80.3%)、BrowseComp(84.4% vs 79.3%)、FrontierMath Tier 1–3(51.7% vs 43.8%)、FrontierMath Tier 4(35.4% vs 22.9%)和 CyberGym(81.8% vs 73.1%)。

- 可以,但要分开看。就我目前核实到的公开数字,四家里能直接横向对齐的共享基准其实很少;最清楚的一项是 Terminal-Bench 2.0:GPT-5.5 为 82.7%,Claude Opus 4.7 为 69.4%,DeepSeek V4-Pro Max 为 67.9%,Kimi K2.6 Thinking 为 66.7%。[5][12] 这些数字主要来自 OpenAI 的 GPT-5.5 发布页和 DeepSeek V4-Pro 模型卡中的厂商对比表,所以可以做“有保留的比较”,但不足以稳妥给出一个统一总排名。[5][12]

- ## Key findings

研究答案

可以,但要分开看。就我目前核实到的公开数字,四家里能直接横向对齐的共享基准其实很少;最清楚的一项是 Terminal-Bench 2.0:GPT-5.5 为 82.7%,Claude Opus 4.7 为 69.4%,DeepSeek V4-Pro Max 为 67.9%,Kimi K2.6 Thinking 为 66.7%。[5][

12] 这些数字主要来自 OpenAI 的 GPT-5.5 发布页和 DeepSeek V4-Pro 模型卡中的厂商对比表,所以可以做“有保留的比较”,但不足以稳妥给出一个统一总排名。[

5][

12]

Key findings

| 可直接对齐的共享基准 | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4-Pro Max | Kimi K2.6 Thinking |

|---|---|---|---|---|

| Terminal-Bench 2.0 [ | 82.7% | 69.4% | 67.9% | 66.7% |

-

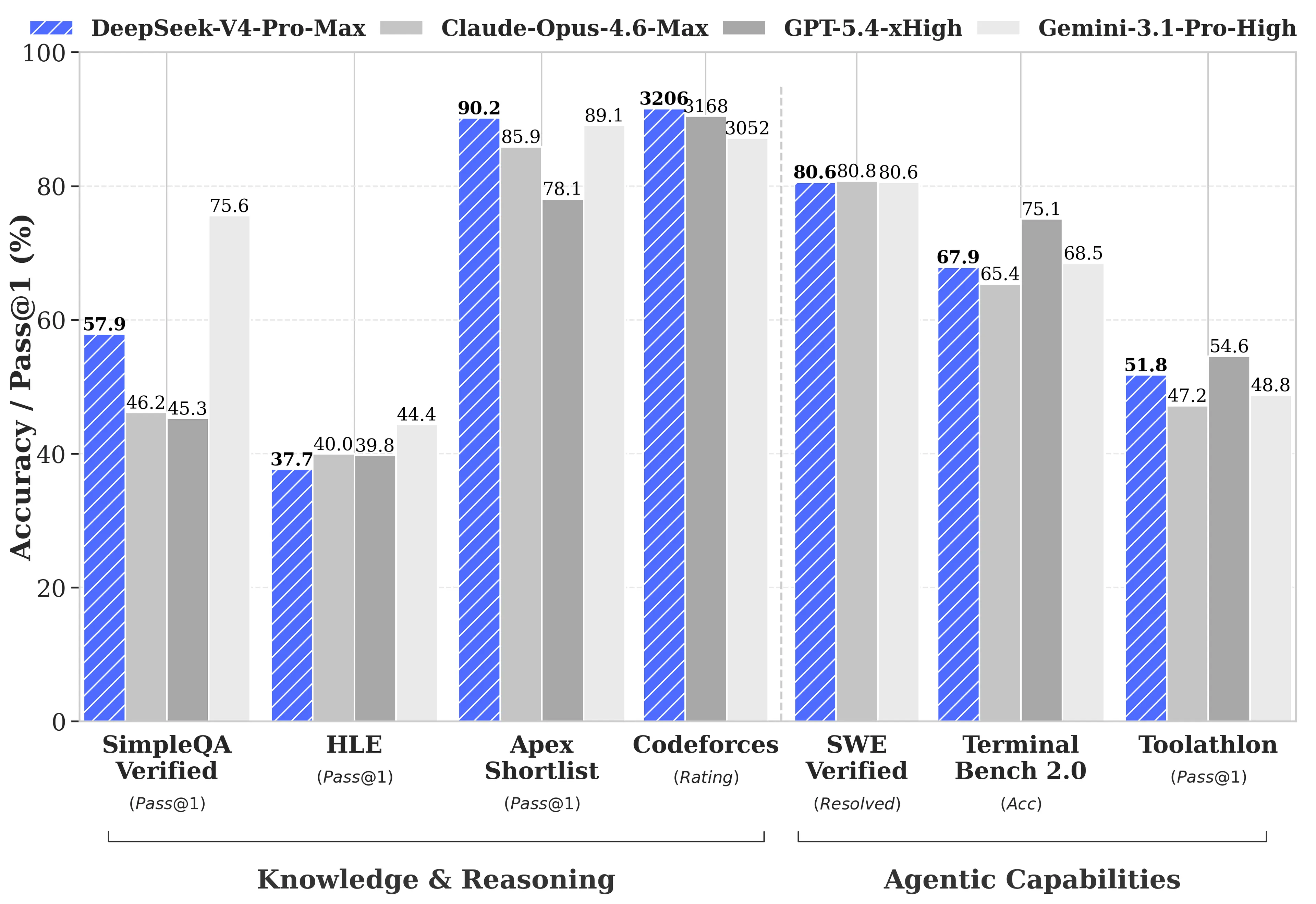

在 OpenAI 官方对比表中,凡是同时给出 GPT-5.5 和 Claude Opus 4.7 分数的项目,GPT-5.5 都更高,包括 Terminal-Bench 2.0(82.7% vs 69.4%)、GDPval wins or ties(84.9% vs 80.3%)、BrowseComp(84.4% vs 79.3%)、FrontierMath Tier 1–3(51.7% vs 43.8%)、FrontierMath Tier 4(35.4% vs 22.9%)和 CyberGym(81.8% vs 73.1%)。[

5]

-

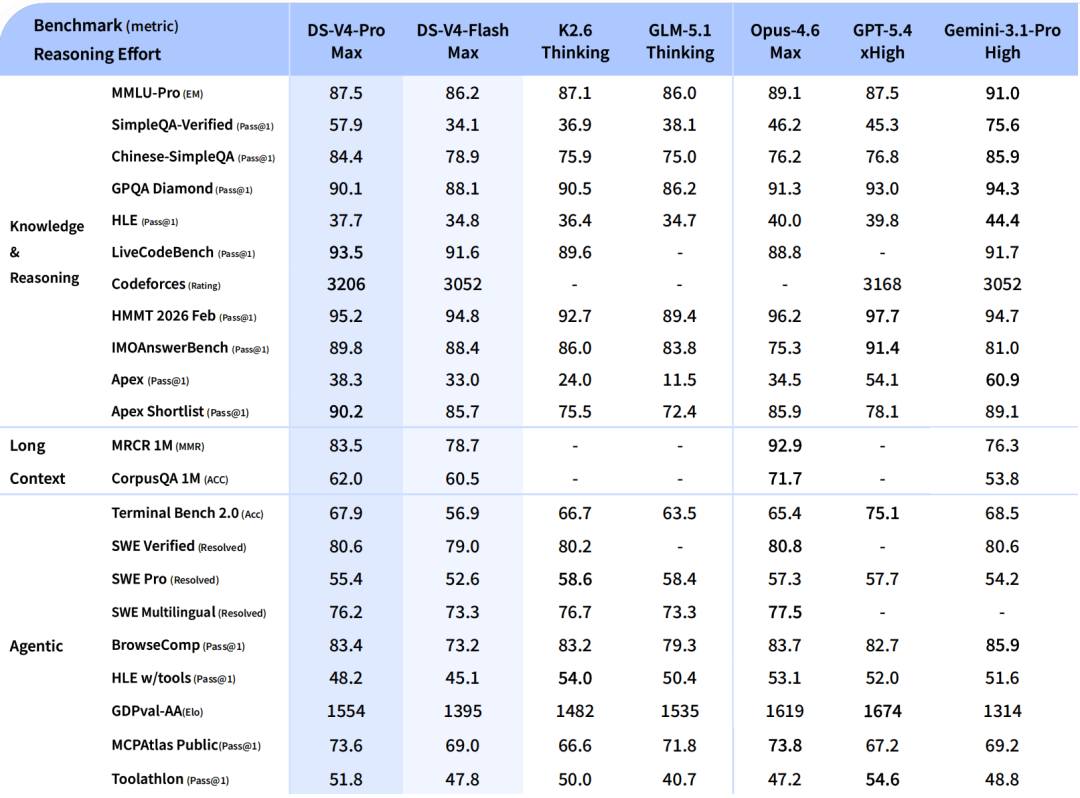

在 DeepSeek 官方模型卡的对比表中,DeepSeek V4-Pro Max 对 Kimi K2.6 Thinking 在多数已列项目上更高,包括 MMLU-Pro(87.5 vs 87.1)、SimpleQA-Verified(57.9 vs 36.9)、LiveCodeBench(93.5 vs 89.6)、HMMT 2026 Feb(95.2 vs 92.7)、IMOAnswerBench(89.8 vs 86.0)、Apex Shortlist(90.2 vs 75.5)以及 Terminal-Bench 2.0(67.9 vs 66.7)。[

12]

-

同一张 DeepSeek 对比表里,Kimi K2.6 Thinking 也有少数领先项,例如 GPQA Diamond(90.5 vs 90.1)和 SWE Pro(58.6 vs 55.4)。[

12]

-

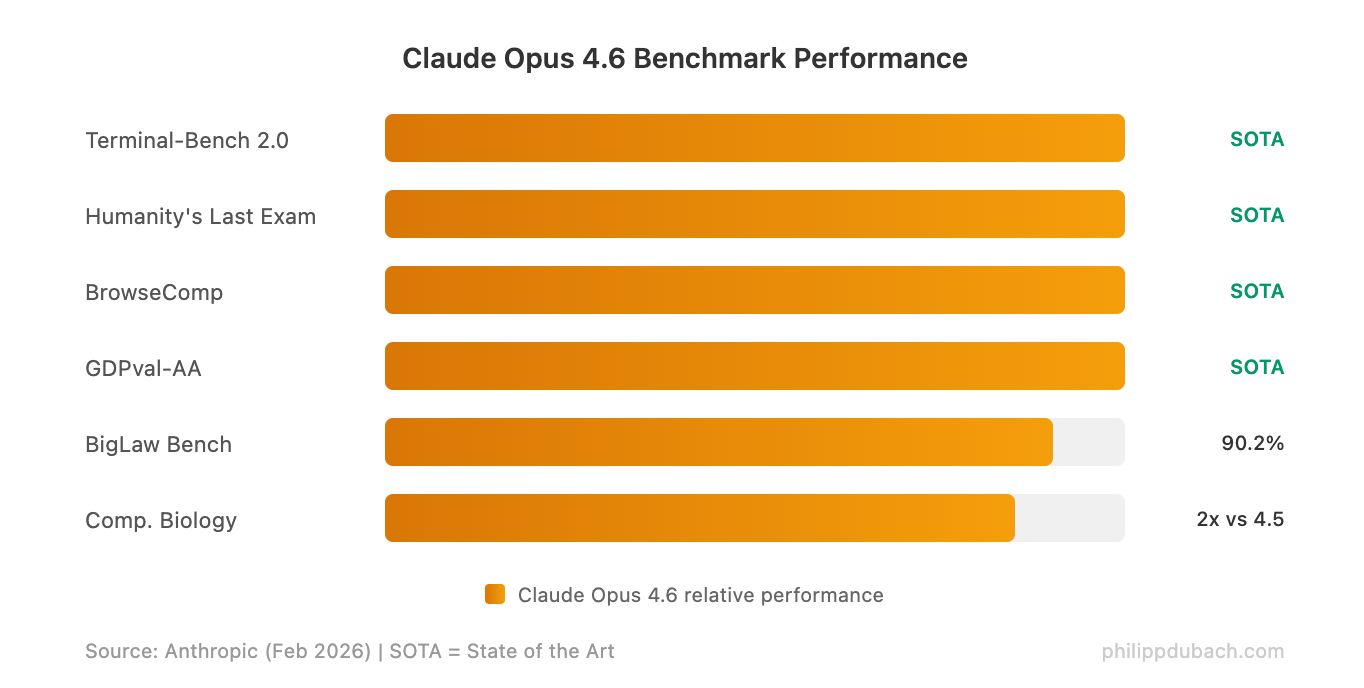

Anthropic 我目前能直接核实到的官方 Opus 4.7 页面,重点是功能和规格更新:Claude Opus 4.7 被描述为其“最强的通用可用模型”,支持 1M token 上下文、128k 最大输出,以及自适应 thinking 和 task budgets 等能力。[

1] 但在我抓取到的官方页面内容里,没有像 OpenAI 或 DeepSeek 那样展开一张完整、可直接引用的 benchmark 总表。[

1]

Evidence notes

-

这次比较最好拆成两层:

-

OpenAI 的表比较的是 GPT-5.5 与 Claude Opus 4.7、Gemini 3.1 Pro 等模型。[

5]

DeepSeek 的表比较的是 DeepSeek V4-Pro Max 与 Kimi K2.6 Thinking、GPT-5.4 xHigh、Claude Opus 4.6 Max、Gemini 3.1 Pro High 等模型。[12]

-

因此,除了像 Terminal-Bench 2.0 这种真正共享的基准外,其他分数不适合被简单拼成一个“总分榜”。这是方法上的判断,不是某一家模型一定更强的确定性结论。

Limitations / uncertainty

-

我目前核实到的高权威公开数字,主要集中在 OpenAI 的 GPT-5.5 发布页、Anthropic 的 Opus 4.7 功能页,以及 DeepSeek 的 V4-Pro 模型卡。[

1][

5][

12]

-

最大限制是:这些表并不是同一第三方、同一 harness、同一工具权限、同一 reasoning effort 下的完整四方对测。[

5][

12]

-

尤其是 GPT-5.5 / Claude Opus 4.7 的官方表,与 DeepSeek V4 / Kimi K2.6 的官方表,不共用同一组对手版本;前者是 GPT-5.5 对 Opus 4.7,后者是 DeepSeek V4-Pro Max 对 Kimi K2.6 Thinking、GPT-5.4 xHigh、Opus 4.6 Max。[

5][

12]

-

所以如果问题是“谁是四者里的绝对 benchmark 第一”,目前公开证据仍然是:Insufficient evidence.

Summary

-

如果你只看当前可直接对齐的共享公开基准,GPT-5.5 在这四个里最强,至少在 Terminal-Bench 2.0 上明显领先。[

5][

12]

-

如果你只看 DeepSeek V4-Pro Max vs Kimi K2.6 Thinking,DeepSeek 在其官方表列出的多数项目上占优,但 Kimi 在 GPQA Diamond 和 SWE Pro 上有亮点。[

12]

-

对 Claude Opus 4.7,目前能较有把握地说:在 OpenAI 官方表里,它落后于 GPT-5.5;但缺少与 DeepSeek V4 和 Kimi K2.6 的同版、同条件、完整第三方对照,因此不宜直接下四方统一总排名。[

5]

如果你愿意,我下一步可以直接帮你整理成一张更实用的对比表,按 Coding / Agent / Reasoning / Vision 四个维度分开排,并把“共享 benchmark”和“厂商自报 benchmark”明确分栏。

支持视觉效果

研究对话

研究问题

我想研究并比较 GPT-5.5、Claude Opus 4.7、DeepSeek V4 和 Kimi K2.6 的基准测试表现。

引用的答案

可以,但要分开看。就我目前核实到的公开数字,四家里能直接横向对齐的共享基准其实很少;最清楚的一项是 Terminal-Bench 2.0:GPT-5.5 为 82.7%,Claude Opus 4.7 为 69.4%,DeepSeek V4-Pro Max 为 67.9%,Kimi K2.6 Thinking 为 66.7%。[5][

12] 这些数字主要来自 OpenAI 的 GPT-5.5 发布页和 DeepSeek V4-Pro 模型卡中的厂商对比表,所以可以做“有保留的比较”,但不足以稳妥给出一个统一总排名。[

5][

12]

Key findings

| 可直接对齐的共享基准 | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4-Pro Max | Kimi K2.6 Thinking |

|---|---|---|---|---|

| Terminal-Bench 2.0 [ | 82.7% | 69.4% | 67.9% | 66.7% |

-

在 OpenAI 官方对比表中,凡是同时给出 GPT-5.5 和 Claude Opus 4.7 分数的项目,GPT-5.5 都更高,包括 Terminal-Bench 2.0(82.7% vs 69.4%)、GDPval wins or ties(84.9% vs 80.3%)、BrowseComp(84.4% vs 79.3%)、FrontierMath Tier 1–3(51.7% vs 43.8%)、FrontierMath Tier 4(35.4% vs 22.9%)和 CyberGym(81.8% vs 73.1%)。[

5]

-

在 DeepSeek 官方模型卡的对比表中,DeepSeek V4-Pro Max 对 Kimi K2.6 Thinking 在多数已列项目上更高,包括 MMLU-Pro(87.5 vs 87.1)、SimpleQA-Verified(57.9 vs 36.9)、LiveCodeBench(93.5 vs 89.6)、HMMT 2026 Feb(95.2 vs 92.7)、IMOAnswerBench(89.8 vs 86.0)、Apex Shortlist(90.2 vs 75.5)以及 Terminal-Bench 2.0(67.9 vs 66.7)。[

12]

-

同一张 DeepSeek 对比表里,Kimi K2.6 Thinking 也有少数领先项,例如 GPQA Diamond(90.5 vs 90.1)和 SWE Pro(58.6 vs 55.4)。[

12]

-

Anthropic 我目前能直接核实到的官方 Opus 4.7 页面,重点是功能和规格更新:Claude Opus 4.7 被描述为其“最强的通用可用模型”,支持 1M token 上下文、128k 最大输出,以及自适应 thinking 和 task budgets 等能力。[

1] 但在我抓取到的官方页面内容里,没有像 OpenAI 或 DeepSeek 那样展开一张完整、可直接引用的 benchmark 总表。[

1]

Evidence notes

-

这次比较最好拆成两层:

-

OpenAI 的表比较的是 GPT-5.5 与 Claude Opus 4.7、Gemini 3.1 Pro 等模型。[

5]

DeepSeek 的表比较的是 DeepSeek V4-Pro Max 与 Kimi K2.6 Thinking、GPT-5.4 xHigh、Claude Opus 4.6 Max、Gemini 3.1 Pro High 等模型。[12]

-

因此,除了像 Terminal-Bench 2.0 这种真正共享的基准外,其他分数不适合被简单拼成一个“总分榜”。这是方法上的判断,不是某一家模型一定更强的确定性结论。

Limitations / uncertainty

-

我目前核实到的高权威公开数字,主要集中在 OpenAI 的 GPT-5.5 发布页、Anthropic 的 Opus 4.7 功能页,以及 DeepSeek 的 V4-Pro 模型卡。[

1][

5][

12]

-

最大限制是:这些表并不是同一第三方、同一 harness、同一工具权限、同一 reasoning effort 下的完整四方对测。[

5][

12]

-

尤其是 GPT-5.5 / Claude Opus 4.7 的官方表,与 DeepSeek V4 / Kimi K2.6 的官方表,不共用同一组对手版本;前者是 GPT-5.5 对 Opus 4.7,后者是 DeepSeek V4-Pro Max 对 Kimi K2.6 Thinking、GPT-5.4 xHigh、Opus 4.6 Max。[

5][

12]

-

所以如果问题是“谁是四者里的绝对 benchmark 第一”,目前公开证据仍然是:Insufficient evidence.

Summary

-

如果你只看当前可直接对齐的共享公开基准,GPT-5.5 在这四个里最强,至少在 Terminal-Bench 2.0 上明显领先。[

5][

12]

-

如果你只看 DeepSeek V4-Pro Max vs Kimi K2.6 Thinking,DeepSeek 在其官方表列出的多数项目上占优,但 Kimi 在 GPQA Diamond 和 SWE Pro 上有亮点。[

12]

-

对 Claude Opus 4.7,目前能较有把握地说:在 OpenAI 官方表里,它落后于 GPT-5.5;但缺少与 DeepSeek V4 和 Kimi K2.6 的同版、同条件、完整第三方对照,因此不宜直接下四方统一总排名。[

5]

如果你愿意,我下一步可以直接帮你整理成一张更实用的对比表,按 Coding / Agent / Reasoning / Vision 四个维度分开排,并把“共享 benchmark”和“厂商自报 benchmark”明确分栏。

人们还问

“我想研究并比较 GPT-5.5、Claude Opus 4.7、DeepSeek V4 和 Kimi K2.6 的基准测试表现。”的简短答案是什么?

| 可直接对齐的共享基准 | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4-Pro Max | Kimi K2.6 Thinking |

首先要验证的关键点是什么?

| 可直接对齐的共享基准 | GPT-5.5 | Claude Opus 4.7 | DeepSeek V4-Pro Max | Kimi K2.6 Thinking | |---|---:|---:|---:|---:|

接下来在实践中我应该做什么?

| Terminal-Bench 2.0 | 82.7% | 69.4% | 67.9% | 66.7% |

接下来我应该探索哪个相关主题?

继续“我想研究 GPT-5.5 与 Claude Opus 4.7 的基准测试表现,看看哪个模型更占优势。”以获得另一个角度和额外的引用。

打开相关页面我应该将其与什么进行比较?

对照“对克劳德·米索斯进行深入研究?”交叉检查此答案。

打开相关页面继续你的研究

来源

- [1] DeepSeek V4 AI Model Launch Guide 2026: What You Need to Knowvertu.com

10. What You Need to Know for 2026 As DeepSeek V4 launches in 2026, understanding its capabilities is crucial. Developers, businesses, and researchers must grasp its architecture, features, and competitive edge. This knowledge is key to navigating the evolving AI landscape effectively. ## Understanding the DeepSeek V4 Advantage The DeepSeek V4 AI Model Complete Guide 2026 highlights a paradigm shift in AI capabilities. Expected mid-February 2026, DeepSeek V4 aims to redefine performance benchmarks. Its advanced architecture and features position it as a significant contender in the evolvi…

- [2] DeepSeek V4 AI Model Launch Guide 2026: What You Need to Knowvertu.com

10. What You Need to Know for 2026 As DeepSeek V4 launches in 2026, understanding its capabilities is crucial. Developers, businesses, and researchers must grasp its architecture, features, and competitive edge. This knowledge is key to navigating the evolving AI landscape effectively. ## Understanding the DeepSeek V4 Advantage The DeepSeek V4 AI Model Complete Guide 2026 highlights a paradigm shift in AI capabilities. Expected mid-February 2026, DeepSeek V4 aims to redefine performance benchmarks. Its advanced architecture and features position it as a significant contender in the evolvi…

- [3] DeepSeek V4 Launched: Features, Benchmarks, and Comparisonsmagicshot.ai

Contents: DeepSeek dropped V4 on April 24, 2026, and the AI community has been picking through every benchmark since. The release comes with two model variants, a 1 million token context window baked in as the new default, and technical claims that put it squarely in competition with the best proprietary models on the market right now. Here is a straight breakdown of what DeepSeek V4 actually is, what it can do, how it performs, and how it compares to GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro. ## Two Models, Two Use Cases DeepSeek V4 ships as two separate variants built for different needs…

- [4] DeepSeek V4 Release Date (April 2026 Update) - EvoLink.AIevolink.ai

April 3: Reuters, citing The Information, reported that DeepSeek's V4 model will likely launch in the "next few weeks" and will run on Huawei's newest chips. The report also said DeepSeek has spent the past few months working with Huawei and Cambricon to rewrite parts of the model stack and run testing, while developing two additional V4 variants optimized for different capabilities. Reuters Officially verifiable on April 6: DeepSeek's public API documentation still does not show a V4 model ID, pricing page, or release announcement. The docs explicitly state that

deepseek-chatand `deepseek… - [5] DeepSeek V4 Targets Coding Dominance with Mid-February Launchintrol.com

TL;DR DeepSeek plans to release V4 around February 17, 2026, coinciding with Lunar New Year. The model integrates Engram conditional memory technology published January 13, enabling efficient retrieval from contexts exceeding one million tokens. Internal benchmarks reportedly show V4 outperforming Claude and GPT series in long-context code generation. The anticipated open-source release would make V4 one of the most capable freely available coding models, continuing DeepSeek's pattern of matching proprietary performance at dramatically lower inference costs. ## Release Timeline and Strateg…

- [6] deepseek-ai/DeepSeek-V4-Pro - Hugging Facehuggingface.co

| Opus-4.6 Max | GPT-5.4 xHigh | Gemini-3.1-Pro High | K2.6 Thinking | GLM-5.1 Thinking | DS-V4-Pro Max | :---: :---: :---: | Knowledge & Reasoning | | | | | | | | MMLU-Pro (EM) | 89.1 | 87.5 | 91.0 | 87.1 | 86.0 | 87.5 | | SimpleQA-Verified (Pass@1) | 46.2 | 45.3 | 75.6 | 36.9 | 38.1 | 57.9 | | Chinese-SimpleQA (Pass@1) | 76.4 | 76.8 | 85.9 | 75.9 | 75.0 | 84.4 | | GPQA Diamond (Pass@1) | 91.3 | 93.0 | 94.3 | 90.5 | 86.2 | 90.1 | | HLE (Pass@1) | 40.0 | 39.8 | 44.4 | 36.4 | 34.7 | 37.7 | | LiveCodeBench (Pass@1) | 88.8 91.7 | 89.6 93.5 | | Codeforces (Rating) 3168 | 3052 - | 3206 | | HMM…

- [7] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performancellm-stats.com

Output$3.48/M Throughput 32 tok/s Parameters 1.6T Benchmarks Examples Playground API ## Benchmarks ### Arena Performance #64 Websites ### Leaderboard Rankings #5 Math #6 Healthcare #7 Coding #7 Search #7 Tool Calling #9 Reasoning #9 Legal #10 Finance #20 Vision #33 Long Context ### Quality Tracker +0.06σ— 8 votes 7d+0.06σ 30d+0.06σ Image 3: LLM Stats Logo Websites+0.18σ(5)3D+0.00σ(1)playground-chat+0.00σ(1)music+0.00σ(1) ### DeepSeek-V4-Pro-Max Performance Across Datasets Scores sourced from the model's scorecard, paper, or official blog posts Image 4: LLM Stats Logollm-stats.com - Sat Apr 25…

- [8] DeepSeek V4 Released: What's New in the Latest Model (2026)sitepoint.com

On Arena-Hard style evaluations, a benchmark format testing instruction following under adversarial conditions (see lmarena.ai), V4 would be expected to show gains over V3. The exact margin varies by task category, and without published scores, any specific number would be fabrication. ### Where V4 Might Excel (and Where It Might Not) V4's projected strengths cluster around coding tasks, multilingual generation, long-context information retrieval, and structured reasoning. Developers building coding assistants, RAG pipelines over large document sets, or agents requiring extended conversationa…

- [9] DeepSeek V4: Architecture, Benchmarks, and API Guide (2026)morphllm.com

| Benchmark | DeepSeek V4 (claimed) | Claude Opus 4.6 | GPT-5.3 Codex | DeepSeek V3.2 | --- --- | SWE-bench Verified | 80-85% | 80.8% | 77.3% | ~65% | | HumanEval | ~90% | ~88% | ~85% | ~80% | | Context window | 1M tokens | 1M tokens (beta) | 128K tokens | 128K tokens | | Active params | 32B | N/A (dense) | N/A (dense) | 37B | | Input price / 1M tokens | ~$0.14 (projected) | ~$15 | ~$15 | $0.27 | The SWE-bench Verified claim is the one to watch. Claude Opus 4.5 was the first model to crack 80% in that benchmark. If V4 exceeds 80.8% at $0.14/M input tokens, it changes the cost structure for ag…

- [10] DeepSeek V4: 1T Parameter AI Model Guidedeepseek.ai

DeepSeek V4: 1T Parameter AI Model Guide | Independent DeepSeek Resource Hub Deepseek.ai is an independent website and is not affiliated with, sponsored by, or endorsed by Hangzhou DeepSeek Artificial Intelligence Co., Ltd. Machine Learning & Artificial Intelligence DeepSeek AI HomeProducts Compare ResourcesPricing Create AI Agents Focus Keyword: DeepSeek V4 April 2026 15 min read # DeepSeek V4: Everything You Need to Know About the New 1 Trillion Parameter AI Model Discover everything about DeepSeek V4, the groundbreaking 1 trillion parameter AI model. Read about the release date, benchmar…

- [11] Deepseek v4 models are out and here are benchmarks !( 4 versions)reddit.com

Local hosting needs planning but pays off for privacy and removing token limits. Start by testing a compact quantized model on the target hardware, pick a backend that matches your team needs (easy UX vs deep control), and design predictable latency and model-loading behavior so users have a smooth experience. ### LLaMA Hosting Communities See Answer Top tools for optimizing AI model performance How to fine-tune LLaMA for specific tasks Common challenges in local AI deployment Innovative applications of LLaMA in business Image 2: Llama Image 3: Llama Public Anyone can view, post, and comment…

- [12] DeepSeek V4 PRO & Flash: The WHALE is Back with ... - YouTubeyoutube.com

DeepSeek V4 PRO & Flash: The WHALE is Back with Two OpenSource Models! Image 7 Universe of AI Universe of AI 22.8K subscribers Subscribe Subscribed 51 Share Save Download Download 1,444 views 8 hours ago#deepseek#opensource#ainews 1,444 views • Apr 24, 2026 • #deepseek #opensource #ainews eepSeek is back with their V4 series: V4 Pro and V4 Flash, both open source under MIT license. V4 Pro is a 1.6 trillion parameter MoE with 49B active, making it the largest open weights model ever released. V4 Flash comes in at 284B total with 13B active. Both ship with a 1M token context window, hybrid at…

- [13] What's new in Claude Opus 4.7 - Claude API Docsplatform.claude.com

Task budgets (beta) Claude Opus 4.7 introduces task budgets. A task budget gives Claude a rough estimate of how many tokens to target for a full agentic loop, including thinking, tool calls, tool results, and final output. The model sees a running countdown and uses it to prioritize work and finish the task gracefully as the budget is consumed. To use, set the beta header

task-budgets-2026-03-13and add the following to your output config: Python [...] Python ``` response = client.beta.messages.create( model="claude-opus-4-7", max_tokens=128000, output_config={ "effort": "high", "task_b… - [14] Claude Opus 4.7 Benchmark Breakdown: Vision, Coding, and ...mindstudio.ai

Claude Opus 4.7 brings major coding and vision improvements over 4.6, but costs more tokens. Here's what changed and whether the upgrade is worth it. Claude Comparisons AI Development ### Claude Sonnet 4 and Opus 4 Deprecation: What You Need to Do Before June 15 Anthropic is retiring Claude Sonnet 4 and Opus 4 on June 15, 2026. Here's what's changing, which models to migrate to, and how to update your API calls. Claude LLMs & Models How-To Get weekly AI insights from MindStudio [...] Claude Opus 4.7 posted 82.4% on SWE-bench Verified, up roughly 11 points from Opus 4.6 — the most meaningful c…

- [15] Claude Opus 4.7 Benchmark Full Analysis: Empirical Data Leading ...help.apiyi.com

Q1: What is Claude Opus 4.7? Claude Opus 4.7 is the flagship Large Language Model released by Anthropic on April 16, 2026. It leads in multiple benchmarks, including coding (SWE-bench Verified 87.6%), Agent tool invocation, and scientific reasoning (GPQA Diamond 94.2%), outperforming GPT-5.4 and Gemini 3.1 Pro. Compared to Opus 4.6, it introduces the new "xhigh effort" deep reasoning mode, all while maintaining the same official pricing. Q2: Which is better, Claude Opus 4.7 or GPT-5.4? [...] Anthropic officially released Claude Opus 4.7 on April 16, 2026, taking the lead in 7 out of 10 core b…

- [16] Claude Opus 4.7 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Claude Opus 4.7 According to BenchLM.ai, Claude Opus 4.7 ranks #2 out of 110 models on the provisional leaderboard with an overall score of 97/100. It also ranks #2 out of 14 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. Claude Opus 4.7 is a proprietary model with a 1M token context window. It processes queries without explicit chain-of-thought reasoning, offering faster response times and lower to…

- [17] Claude Opus 4.7 Benchmarks Explainedvellum.ai

Apr 16, 2026•16 min•ByNicolas Zeeb Guides CONTENTS Key observations of reported benchmarks Coding capabilities SWE-bench Verified SWE-bench Pro Terminal-Bench 2.0 Agentic capabilities MCP-Atlas (Scaled tool use) Finance Agent v1.1 OSWorld-Verified (Computer use) BrowseComp (Agentic search) Reasoning capabilities GPQA Diamond (Graduate-level science) Humanity's Last Exam Multimodal and vision capabilities CharXiv Reasoning (Visual reasoning) Multilingual Q&A (MMMLU) Safety and alignment What these benchmarks really mean for your agents When to use Opus 4.6 vs Opus 4.7 Use Opus 4.7 with your Ve…

- [18] Claude Opus 4.7: What Changed for Coding Agents (April 2026)verdent.ai

What is Claude Mythos Preview and how does it compare? Claude Mythos Preview is Anthropic's most capable model, announced April 7, 2026 under Project Glasswing. Anthropic benchmarks show it leading Opus 4.7 across essentially every evaluation category — SWE-bench, reasoning, vision, and agentic tasks. However, it is not generally available. Access is restricted to a limited set of platform partners as part of Anthropic's Project Glasswing initiative. Anthropic explicitly states it does not plan to make Mythos Preview generally available in the near term, though the lessons from its deploy…

- [19] Opus 4.7 Review: Features, Performance & How to Use It (2026 ...aimlapi.com

| Benchmark | Opus 4.7 | Opus 4.6 | Notes | --- --- | | CursorBench (Cursor) | 70% | 58% | +12 pp improvement | | Linear coding benchmark (93 tasks) | +13% resolution | Baseline | 4 tasks neither 4.6 nor Sonnet 4.6 could solve | | Visual acuity (XBOW) | 98.5% | 54.5% | Near-complete elimination of prior pain point | | BigLaw Bench (Harvey, high effort) | 90.9% | Not reported | Best-in-class legal accuracy | | Rakuten-SWE-Bench | 3× more tasks | Baseline | Also double-digit gains in code & test quality | | Databricks OfficeQA Pro | 21% fewer errors | Baseline | Best Claude model for enterprise…

- [20] Claude Opus 4.7: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

LLM Stats Logo ### Join our newsletter and stay up to date with everything AI There's too much noise in AI, let's filter it for you. Get a curated digest of models, benchmarks, and the analysis that matters, right in your inbox once a week. No spam, unsubscribe anytime LLM Stats Logo The AI Benchmarking Hub. ### Leaderboards ### Arenas ### Benchmarks ### Models ### Resources © 2026 llm-stats

- [21] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safetymashable.com

Claude Opus 4.7 is available now. Credit: Samuel Boivin/NurPhoto via Getty Images Anthropic has been shipping products and making news at a blistering pace in 2026, and on Thursday, the AI company announced the launch of Claude Opus 4.7. Claude Opus 4.7 is Anthropic's most intelligent model available to the general public. Notably, Anthropic said in a press release") that Opus 4.7 is not as powerful as Claude Mythos, which Anthropic deemed too dangerous for public release. Claude Opus is a family of hybrid reasoning models capable of multi-step reasoning and advanced coding. Until the announc…

- [22] Anthropic's Claude Opus 4.7 Surpasses GPT-4 with 64.3% on SWE-bench Pro | O-mega posted on the topic | LinkedInlinkedin.com

Claude Opus 4.7: The Complete Guide (2026) | Articles | o-mega To view or add a comment, sign in ## More Relevant Posts View profile for Benjamin Johnson Particle41•7K followers [...] Your AI pilot is still a pilot. A better model won't change that. 👇 Anthropologic just shipped Claude Opus 4.7. Everyone's talking about the benchmarks. Nobody's talking about the part that actually matters. Here's the uncomfortable truth: 62% of enterprise AI programs never leave the pilot stage. Not because the model wasn't smart enough. Because the deployment was never built for production. Opus 4.7 is genui…

- [23] Claude Opus 4.7 is Out — Weekly AI Newsletter (April 20th 2026)medium.com

TRACER: Trace-Based Adaptive Cost-Efficient Routing for LLM Classification. TRACER uses lightweight execution traces to route classification requests across model tiers, cutting cost while preserving accuracy. The method adapts routing decisions to observed difficulty patterns rather than static rules, outperforming fixed-threshold baselines in deployment-scale LLM classification workloads. [...] Attention Sink in Transformers: A Survey on Utilization, Interpretation, and Mitigation. The survey consolidates research on the attention sink phenomenon, where transformers route disproportionate a…

- [24] Claude Opus 4.7 - A New Frontier, in Performance … and Dramayoutube.com

Claude Opus 4.7 - A New Frontier, in Performance … and Drama Image 7 AI Explained AI Explained 418K subscribers Subscribe Subscribed 2.6K Share Save Download Download 82K views 6 days agoGPT 5 82,889 views • Apr 17, 2026 • GPT 5 Claude Opus 4.7 just dropped, but behind every headline lies a deeper story. From a bonanza of benchmarks, to seeing the fruits of one of the biggest mega-projects in US history, to sneaky Mythos disclaimers, to Anthropic admitting compute restraints and, forcing lower capability of Opus 4.7. Where the new model falls behind Gemini but ahead of GPT 5.4, plus why som…

- [25] GPT-5.5 Debuts, Sets New Benchmark Highseweek.com

| | | | | | | | | | | | | --- --- --- --- --- | | | | | | | | | | | | --- --- --- --- --- | | | | | --- | | | | | Apr 24, 2026 | | | | | | --- | | | | | | | | | | | | --- | | | | Read in Browser | | | | | | | | | | | | | | | | --- --- --- | | | | | | | | --- --- --- | | | | | --- | | | | | Apr 24, 2026 | | | | | | --- | | | | | Read in Browser | | | | | | | | | --- --- | | | | | | --- | | | | --- | | | | | Coffee's on, Tech Insiders. GPT-5.5 is taking meetings, Workspace Agents are emptying your inbox, and even Apple's patch is caffeinating notification privacy. Sip fast... these updates brew…

- [26] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwriteappwrite.io

Star on GitHub 55.8KGo to Console Start building for free Sign upGo to Console Start building for free Products Docs Pricing Customers Blog Changelog Star on GitHub 55.8K Blog/GPT-5.5 is here: benchmarks, pricing, and what changes for developers Apr 24, 2026•8 min # GPT-5.5 is here: benchmarks, pricing, and what changes for developers OpenAI shipped GPT-5.5 on April 23, 2026. Here's a source-backed look at benchmarks, pricing versus GPT-5.4 and Claude Opus 4.7, the system card, and where the model still falls short. Image 13: Atharva Deosthale #### Atharva Deosthale Developer Advocate SHARE 7…

- [27] GPT-5.5 System Card - OpenAIopenai.com

GPT-5.5 System Card | OpenAI Skip to main content Log inTry ChatGPT(opens in a new window) Research Products Business Developers Company Foundation(opens in a new window) Try ChatGPT(opens in a new window)Login OpenAI April 23, 2026 SafetyPublication # GPT‑5.5 System Card Read the System Card(opens in a new window) Share ## 1. Introduction GPT‑5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing information, creating documents and spreadsheets, and moving across tools to get things done. Relative to earlier models, GPT‑5.5 understan…

- [28] GPT-5.5: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

GPT-5.5: Pricing, Benchmarks & Performance Image 1: LLM Stats LogoLLM Stats Leaderboards Benchmarks Compare Playground Arenas Gateway Services Search⌘K Sign in Toggle theme NEW•NEW•NEW•NEW• Make AI phone calls with one API call CallingBox Start for free 1. Organizations 2. OpenAI 3. GPT-5.5 Compare Image 2: OpenAI logo # GPT-5.5 OpenAI·Apr 2026·Proprietary GPT-5.5 is OpenAI's smartest model yet, designed for real work across agentic coding, computer use, knowledge work, and early scientific research. It matches GPT-5.4 per-token latency in real-world serving while reaching a much higher...m…

- [29] Introducing GPT-5.5openai.com

Introducing GPT-5.5 | OpenAI Skip to main content Log inTry ChatGPT(opens in a new window) Research Products Business Developers Company Foundation(opens in a new window) Introducing GPT-5.5 | OpenAI Table of contents Model capabilities Next-generation inference efficiency Advancing cybersecurity for everyone’s safety Availability and pricing Evaluations April 23, 2026 ProductRelease # Introducing GPT‑5.5 A new class of intelligence for real work 00:00 01:28 Listen to article Share _Update on April 24, 2026: GPT‑5.5 and GPT‑5.5 Pro are now available in the API.__The system card__has also be…

- [30] OpenAI Launches GPT-5.5 as Its Most Advanced AI Model Yet - MLQ.aimlq.ai

Key Points OpenAI launched GPT-5.5 on April 24, 2026, highlighting improvements in agentic coding, computer use, knowledge tasks and scientific research. The model scores 82.7% on Terminal-Bench 2.0 and 73.1% on Expert-SWE benchmarks14. Available now to Plus, Pro, Business and Enterprise users via ChatGPT and API, with a 1 million token context window. API pricing set at $5 per million input tokens and $30 per million output tokens3. Model maintains prior latency while using fewer tokens for efficiency. Image 2: OpenAI Launches GPT-5.5 as Its Most Advanced AI Model Yet Image 3: Source: O…

- [31] OpenAI releases GPT-5.5, bringing company one step ... - TechCrunchtechcrunch.com

San Francisco, CA|October 13-15, 2026 REGISTER NOW Mark Chen, chief research officer at OpenAI, said that GPT-5.5 was better at navigating computer work than its predecessors, and also said that the model “shows meaningful gains on scientific and technical research workflows,” noting that the company feels it could really “help expert scientists make progress.” Chen also said it could assist with drug discovery, an area that has shown increased industry interest over the last few years. GPT 5.5 is widely available starting Thursday, according to OpenAI. The company says that the model is depl…

- [32] OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark ...interestingengineering.com

— OpenAI (@OpenAI) April 23, 2026 OpenAI said the improvements go beyond benchmarks. Early testers reported that GPT-5.5 better understands system architecture and failure points. It can identify where fixes belong and predict downstream impacts across a codebase. The company emphasized efficiency alongside capability. GPT-5.5 matches GPT-5.4’s per-token latency despite higher intelligence. It also uses fewer tokens to complete the same tasks, lowering computational cost. “GPT-5.5 delivers this step up in intelligence without compromising on speed,” OpenAI noted. It added that the model perfo…

- [33] Unveiling the GPT-5.5 Benchmark Results: A Deep Dive into Agentic AIskywork.ai

Outline ## 1. What are the GPT-5.5 Benchmark Results? ## 2. Top Products Integrating GPT-5.5 Capabilities ## 3. Comparative Analysis of Product Integrations ## 4. Practical Usage Guide and Real-World Applications ## 5. Development History and Future Trends ## 6. Implications for Users and AI Workers ## 7. Frequently Asked Questions (FAQ) ### References # Unveiling the GPT-5.5 Benchmark Results: A Deep Dive into Agentic AI logo logo 分享图标 赞图标 不图标 ## Featured Picks The Ultimate 2026 Guide to Mastering Your AI Chat Assistant The Ultimate 2026 Guide to Mastering Your AI Chat Assistant Unlockin…

- [34] GPT-5.5 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # GPT-5.5 According to BenchLM.ai, GPT-5.5 ranks #5 out of 112 models on the provisional leaderboard with an overall score of 89/100. It also ranks #2 out of 16 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. GPT-5.5 is a proprietary model with a 1M token context window. It uses explicit chain-of-thought reasoning, which typically improves performance on math and complex reasoning tasks at the cost of…

- [35] OpenAI rolls out GPT-5.5 with improved contextual ...9to5google.com

Go to the 9to5Google home page ChatGPT OpenAI # OpenAI rolls out GPT-5.5 with improved contextual understanding, Plus and up Avatar for Andrew Romero Andrew Romero | Apr 23 2026 - 12:22 pm PT 0 Comments OpenAI just announced that ChatGPT is getting a model upgrade to GPT-5.5. The company says the model will bring better results because of changes to how it understands context. OpenAI released another lengthy press release detailing GPT-5.5. The update comes with a few changes over the previous model. It should perform significantly better across various familiar tasks, such as coding, compute…

- [36] OpenAI Releases GPT-5.5 With State-of-the-Art Scores on Coding, Science, and Computer Uselinkedin.com

The week of April 14, 2026 produced three significant AI releases aimed at the same enterprise audience. Alibaba… ### Google's New Deep Research Max Agent Scores 93% on Benchmarks Google's new Deep Research Max agent scored 93.3% on DeepSearchQA and 85. ### From Creative Toy to Commercial Tool: OpenAI Launches ChatGPT Images 2.0 For years, the easiest way to spot an AI-generated image was to look at the text. Signs with garbled letters. ### How AI Coding Agents Collapsed the Research Lab's Last Moat Google's TurboQuant paper, accepted at ICLR 2026 (arXiv 2504.19874), describes a two-stage app…

- [37] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

All 30 problems from the 2026 American Invitational Mathematics Examination (AIME I and AIME II), testing olympiad-level mathematical reasoning ... 5 days ago

- [38] moonshotai/Kimi-K2.6 - Hugging Facehuggingface.co

Proactive & Open Orchestration: For autonomous tasks, K2.6 demonstrates strong performance in powering persistent, 24/7 background agents that ... 5 days ago

- [39] Kimi K2.6: The new leading open weights model - Artificial Analysisartificialanalysis.ai

➤ Increase in performance on agentic tasks: Kimi K2.6 achieves an Elo of 1520 on our GDPval-AA evaluation, which is a marked improvement over ... 4 days ago

- [40] Kimi K2.6 on GMI Cloud: Architecture, Benchmarks & API Accessgmicloud.ai

Kimi K2.6 tops SWE-Bench Pro and runs 300 parallel sub-agents on 4x H100S. Learn the full architecture, benchmark results, and how to run it ... 2 days ago

- [41] How to Use Kimi K2.6: Complete Guide to Moonshot AI's New 1T ...tosea.ai

A practical walkthrough of Kimi K2.6 — Moonshot AI's April 2026 open-source MoE release with 1T parameters, 256K context, 300-agent swarms, ... 6 days ago

- [42] Kimi K2.6 - Vals AIvals.ai

Kimi K2.6. Release Date: 4/20/2026. Vals Index. Accuracy (Vals Index). 63.94% ± 1.97. Latency (Vals Index). 373.57s. Cost/Test (Vals Index). $0.21.

- [43] Kimi 2.6 has been released : r/singularity - Redditreddit.com

Despite the engine already operating near its performance limits, Kimi K2.6 extracted a 185% medium throughput leap (from 0.43 to 1.24 MT/s) and ... 5 days ago

- [44] Kimi K2.6 is great! : r/kimi - Redditreddit.com

Harness: Opencode Skill: Frontend-Design (by Anthropic) Prompt: Design and create a very creative, elaborate, and detailed voxel art scene ... 1 day ago

- [45] Kimi K2.6 is now online. : r/kimi - Redditreddit.com

From the iPhone app, v2.6.7 from 2 days ago, sent the same prompt - got k2.6 in the response. Although still showing k2.5-thinking… strange. 6 days ago

- [46] Kimi K2.6 is Here: Full Demo and Deep Dive for Everyone - YouTubeyoutube.com

This video thoroughly tests Kimi K2.6, an open-source, native multimodal agentic model. Get 50% Discount on any A6000 or A5000 GPU rental, ... 5 days ago