I want to study the benchmark performance of GPT-5.5 and Claude Opus 4.7 to see which model has the edge.

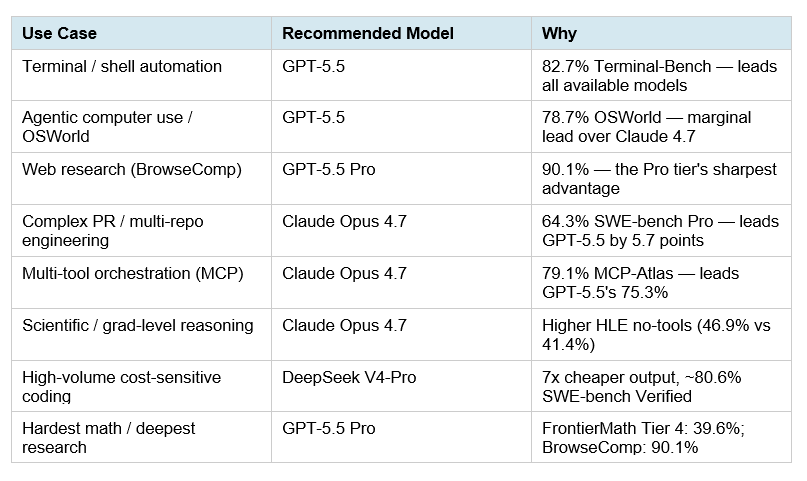

我建议先把结论定为:没有绝对赢家;按公开可见的基准摘要看,Claude Opus 4.7 更偏强于真实软件工程修复类任务,GPT 5.5 更偏强于终端/代理执行和部分商业任务基准。若你的核心场景是代码库级修 Bug,Claude Opus 4.7 更占优;若是自动化任务、工具调用、终端操作或通用专业工作流,GPT 5.5 更值得优先测试。 Key findings GPT 5.5 是 OpenAI 当前面向复杂专业工作的前沿模型,...

我建议先把结论定为:没有绝对赢家;按公开可见的基准摘要看,Claude Opus 4.7 更偏强于真实软件工程修复类任务,GPT 5.5 更偏强于终端/代理执行和部分商业任务基准。若你的核心场景是代码库级修 Bug,Claude Opus 4.7 更占优;若是自动化任务、工具调用、终端操作或通用专业工作流,GPT 5.5 更值得优先测试。 Key findings GPT 5.5 是 OpenAI 当前面向复杂专业工作的前沿模型,OpenAI API 文档将其描述为“newest frontier model for the most complex professional work”,并支持多档 reasoning effort

要点

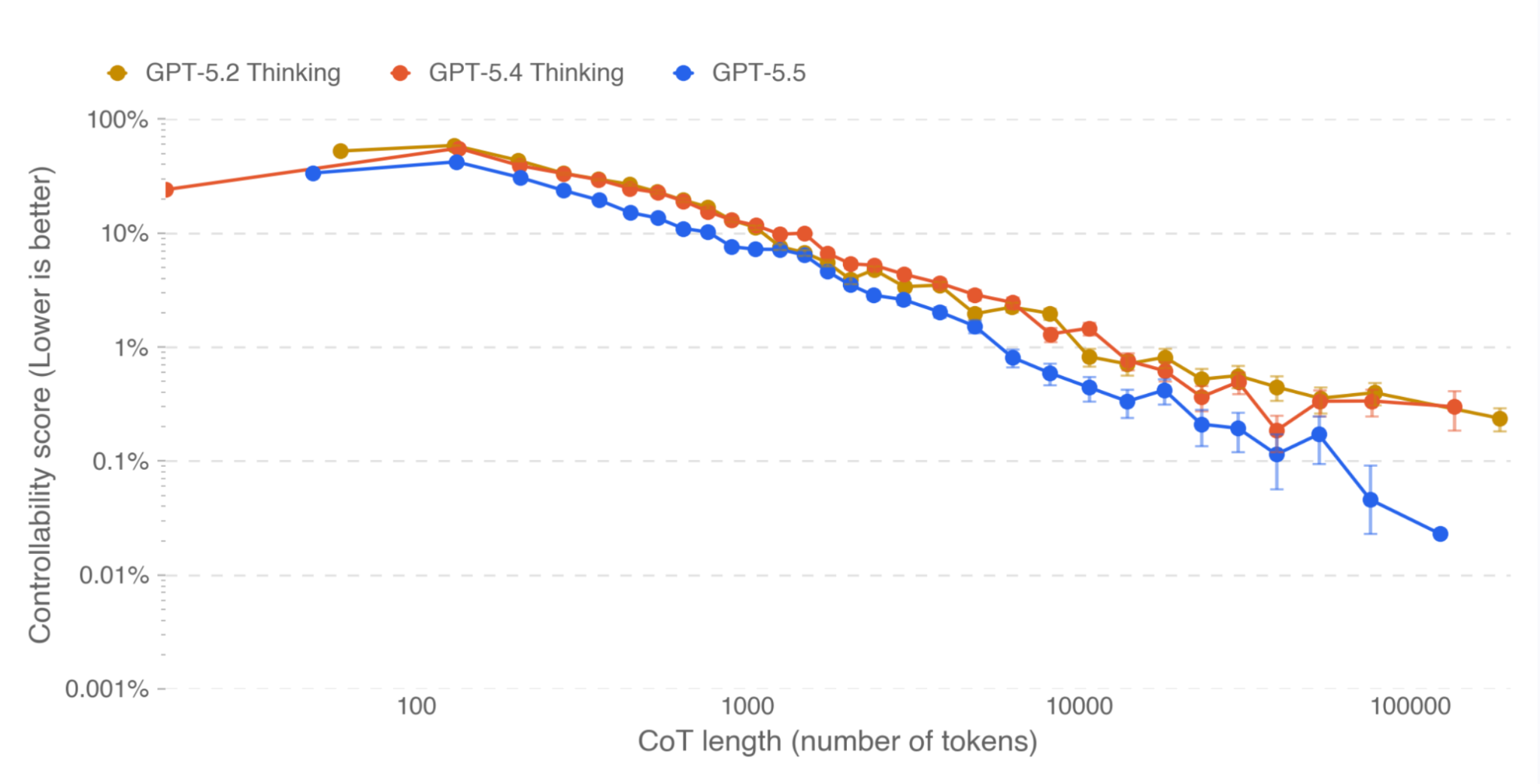

- GPT-5.5 是 OpenAI 当前面向复杂专业工作的前沿模型,OpenAI API 文档将其描述为“newest frontier model for the most complex professional work”,并支持多档 reasoning effort 设置。

- Claude Opus 4.7 有 Anthropic 官方发布页,第三方发布与评测摘要普遍将其定位为 Anthropic 的新旗舰/高端模型。

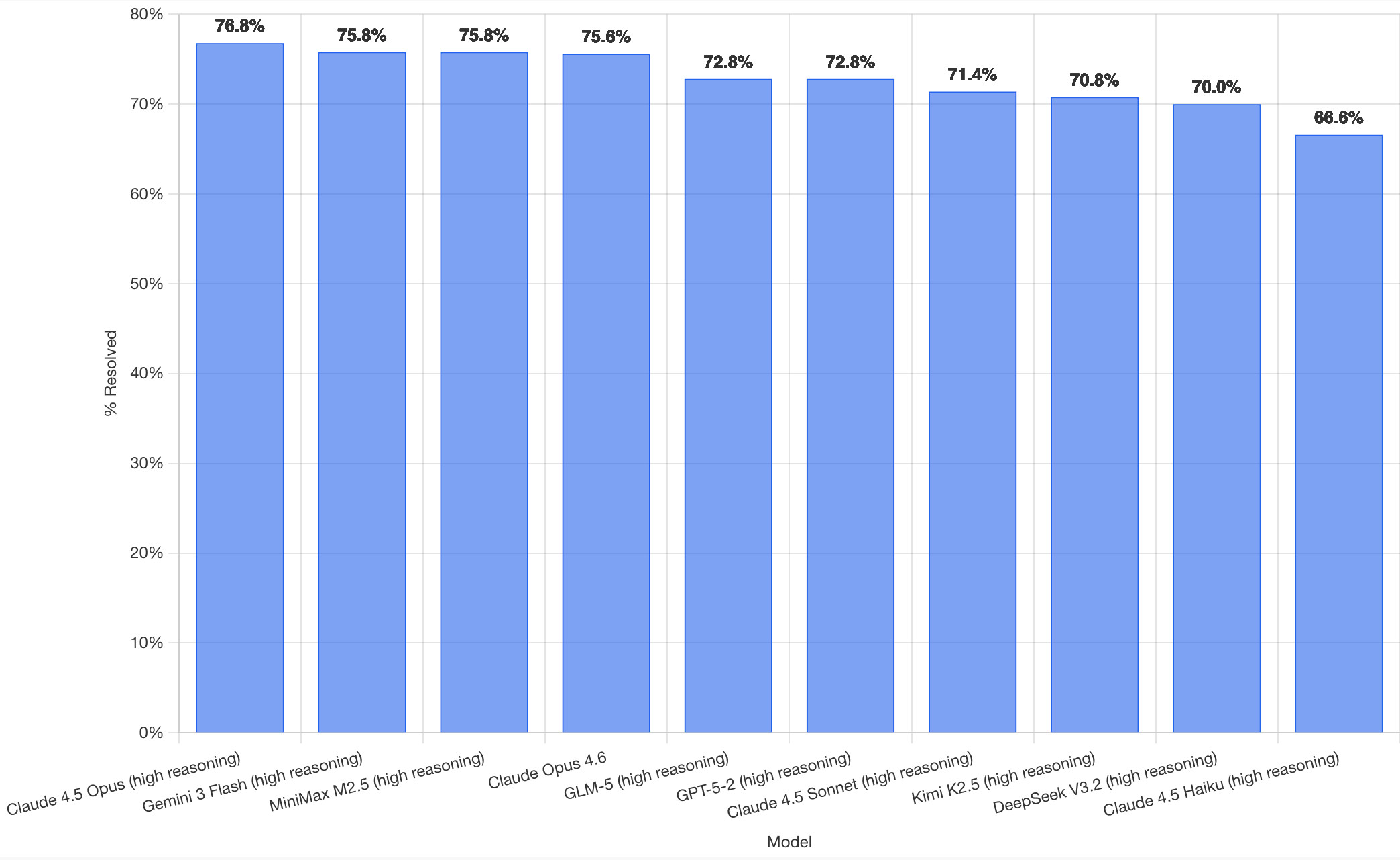

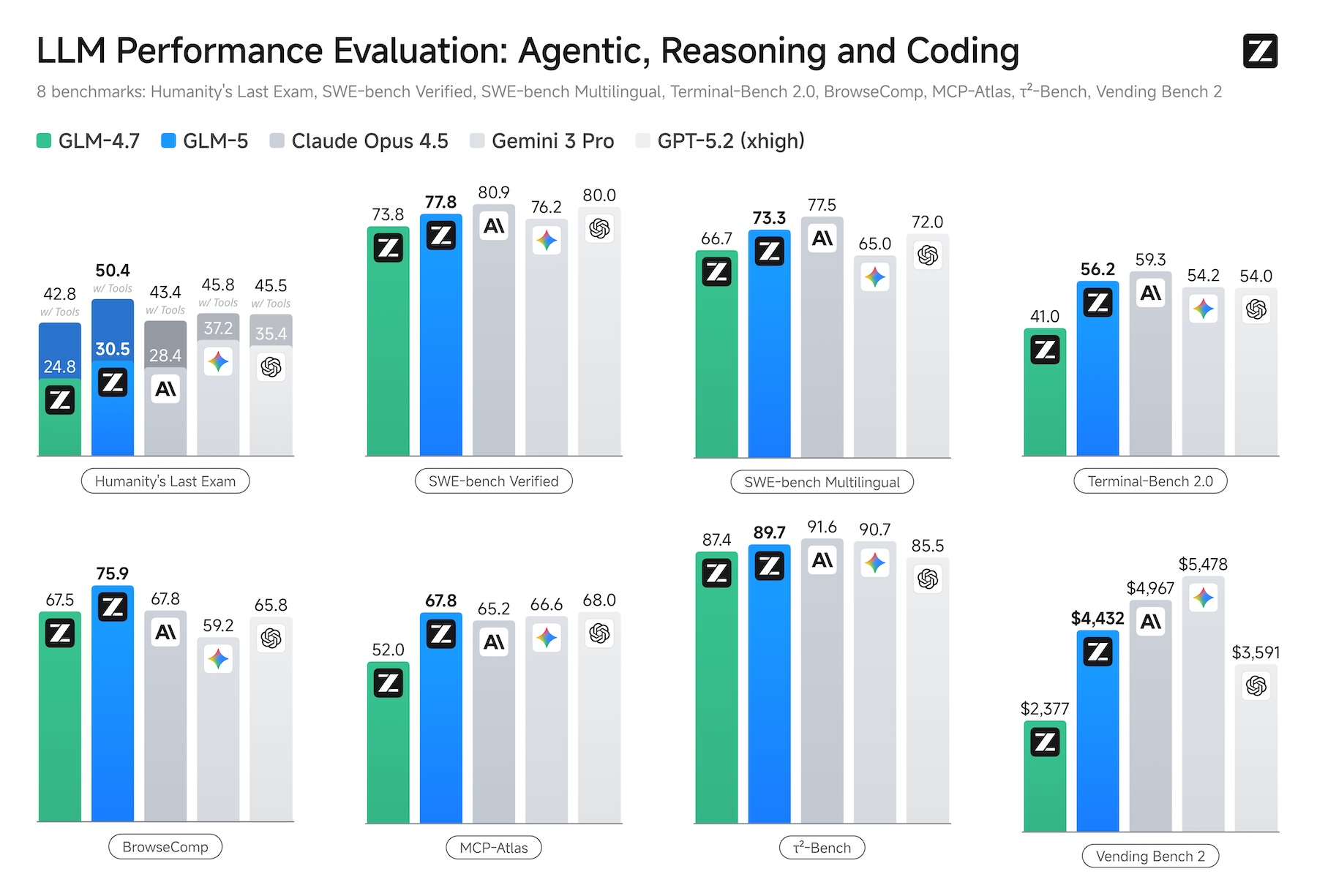

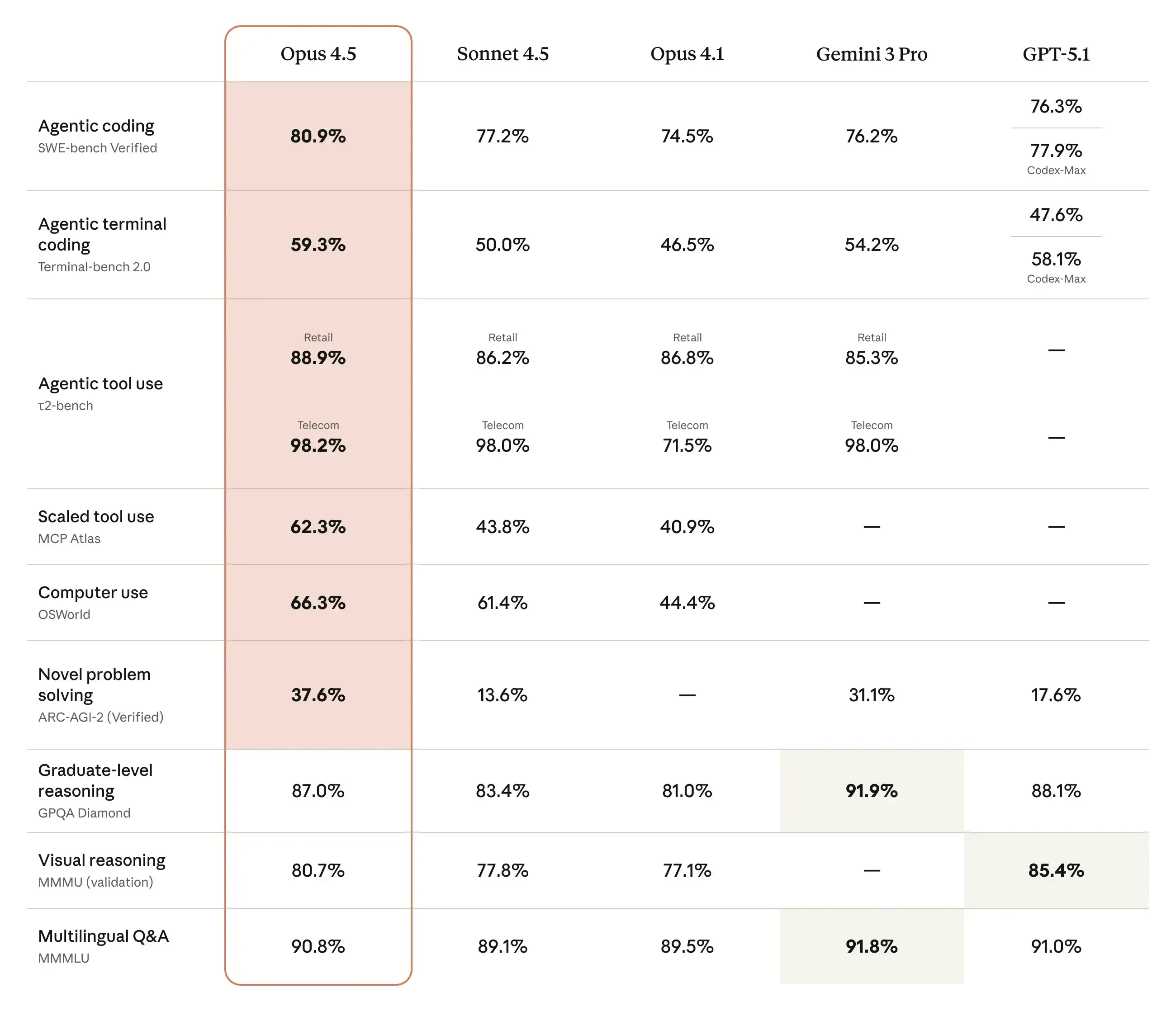

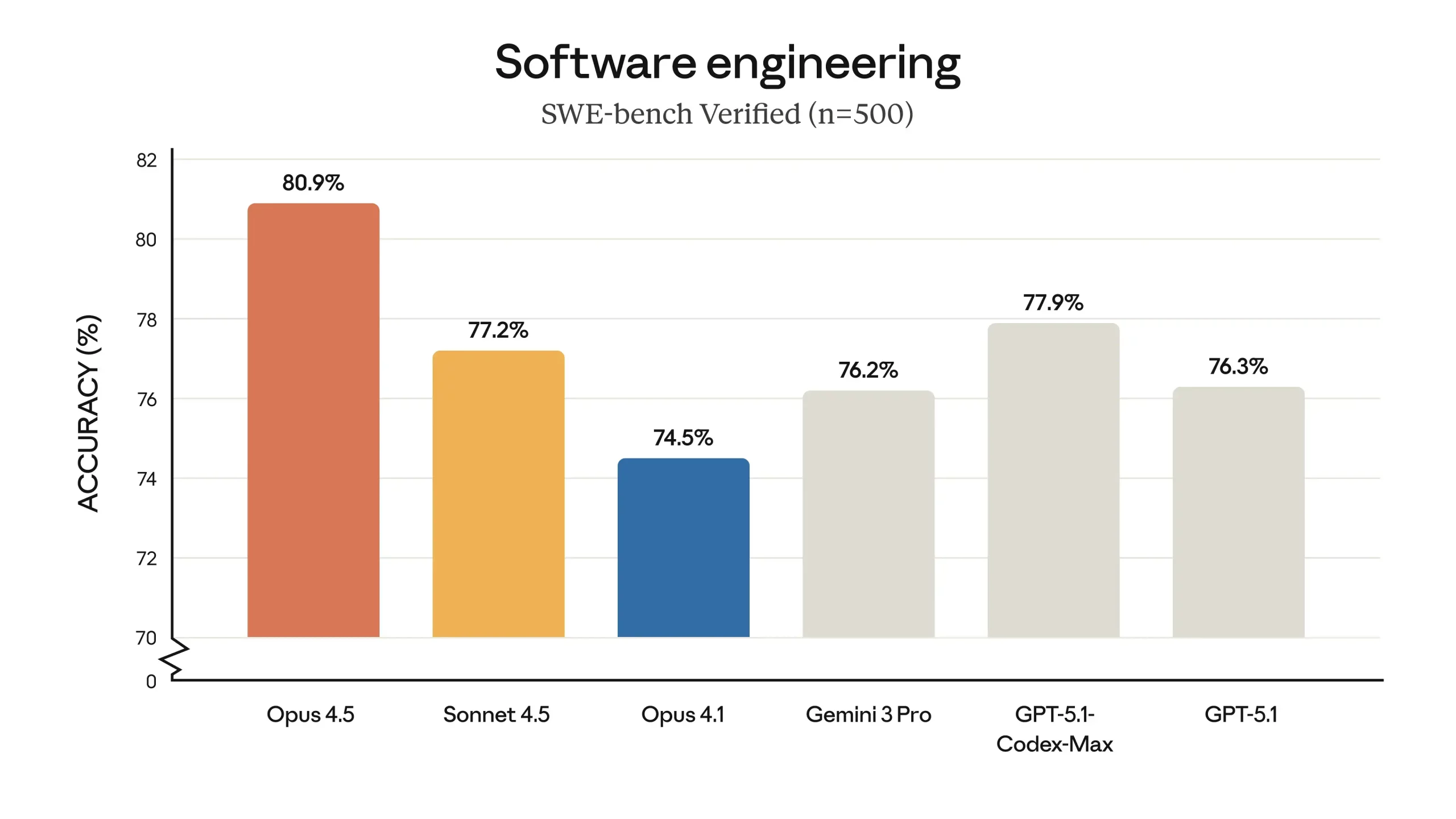

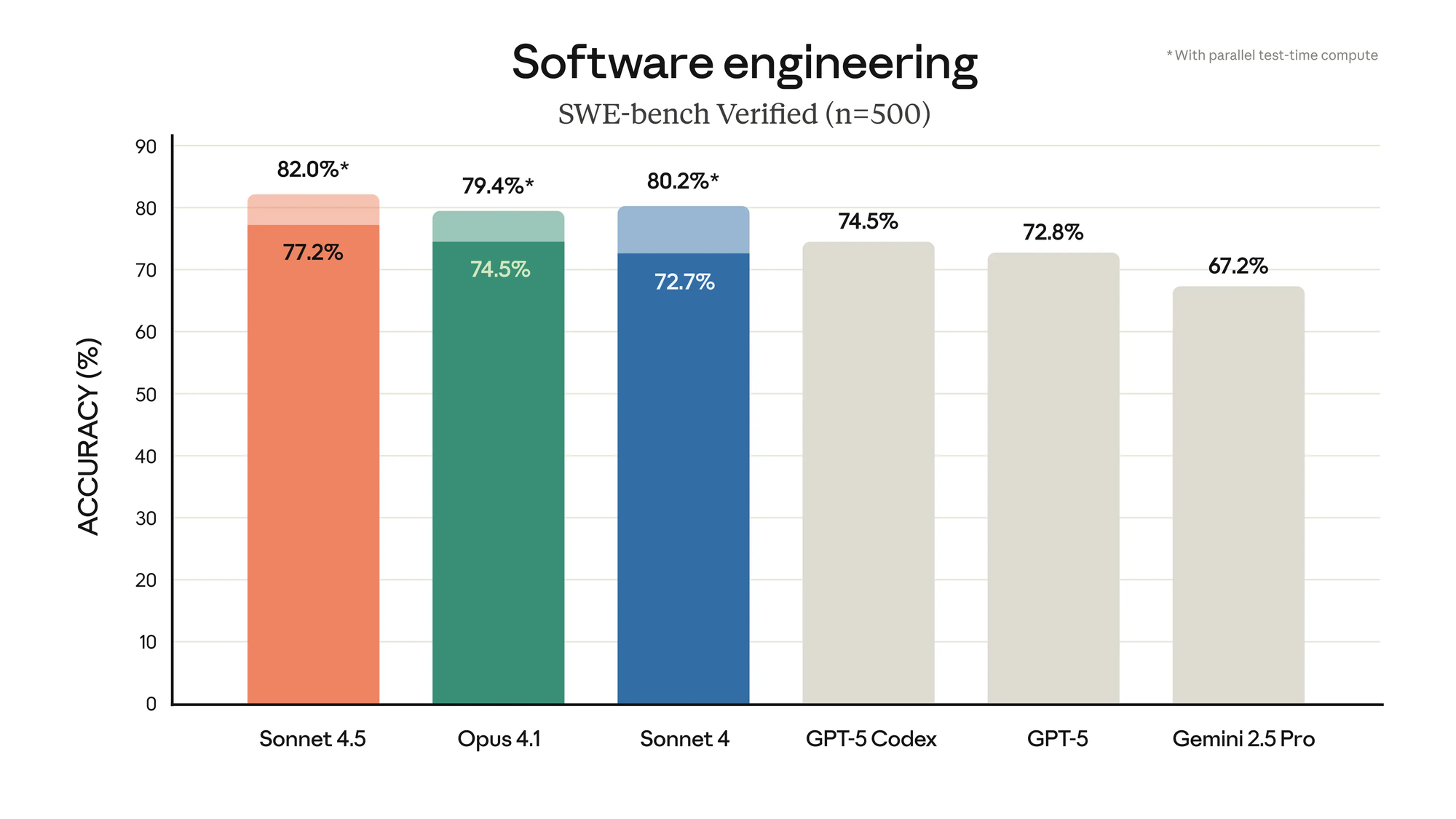

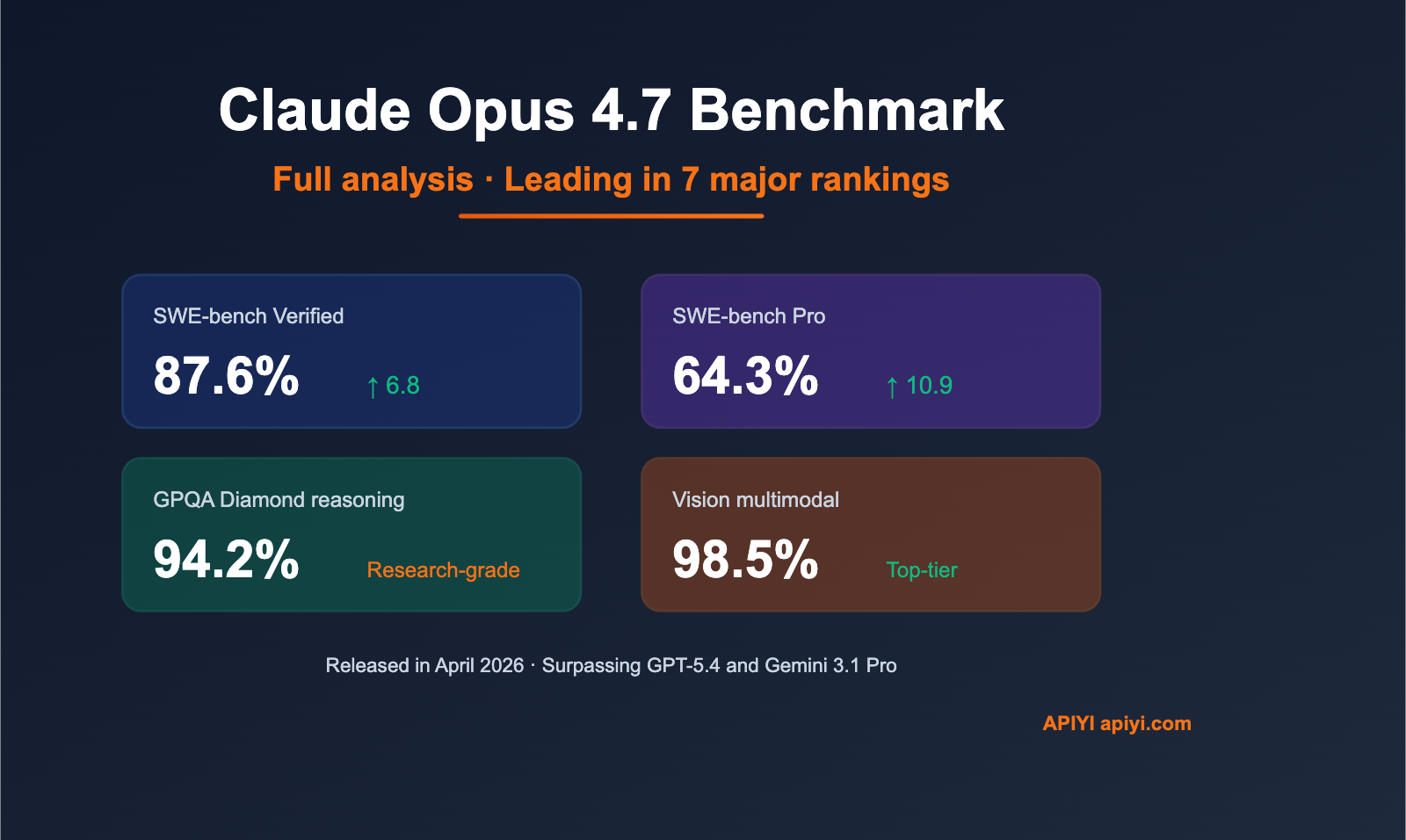

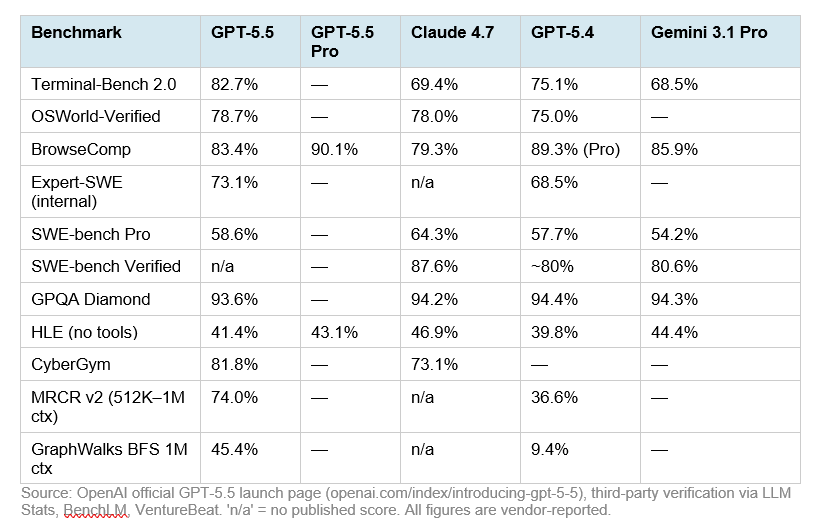

- 在 SWE-Bench Pro 上,公开侧边比较显示 GPT-5.5 为 58.6%,Claude Opus 4.7 为 64.3%,Claude Opus 4.7 领先约 5.7 个百分点。

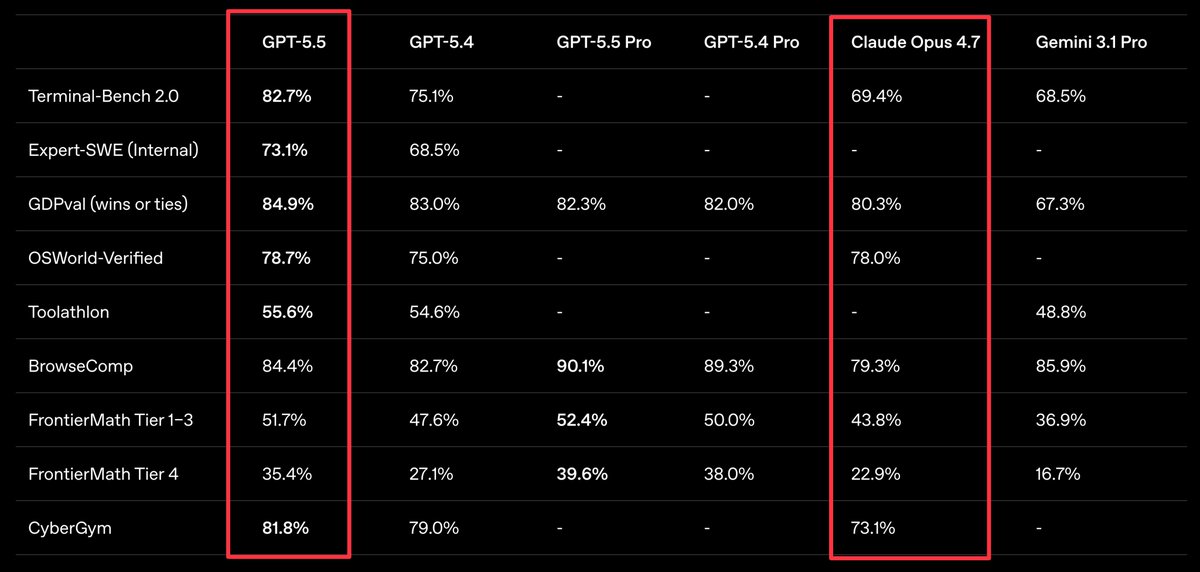

- 在 Terminal-Bench 2.0 上,公开比较显示 GPT-5.5 为 82.7%,Claude Opus 4.7 为 69.4%,GPT-5.5 领先约 13.3 个百分点。

- 我建议先把结论定为:没有绝对赢家;按公开可见的基准摘要看,Claude Opus 4.7 更偏强于真实软件工程修复类任务,GPT-5.5 更偏强于终端/代理执行和部分商业任务基准。若你的核心场景是代码库级修 Bug,Claude Opus 4.7 更占优;若是自动化任务、工具调用、终端操作或通用专业工作流,GPT-5.5 更值得优先测试。

- ## Key findings

Research answer

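My recommended working conclusion: there is no outright winner. Based on publicly available benchmark summaries, Claude Opus 4.7 is stronger on real-world software engineering repair tasks, while GPT-5.5 is stronger on terminal and agent execution and on some business-task benchmarks. If your core scenario is fixing bugs at the codebase level, Claude Opus 4.7 has the edge; if it is automation, tool calling, terminal operation, or general professional workflows, GPT-5.5 is the better first candidate to test.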

Key findings

-

GPT-5.5 是 OpenAI 当前面向复杂专业工作的前沿模型,OpenAI API 文档将其描述为“newest frontier model for the most complex professional work”,并支持多档 reasoning effort 设置。[

1]

-

Claude Opus 4.7 有 Anthropic 官方发布页,第三方发布与评测摘要普遍将其定位为 Anthropic 的新旗舰/高端模型。[

6][

9]

-

在 SWE-Bench Pro 上,公开侧边比较显示 GPT-5.5 为 58.6%,Claude Opus 4.7 为 64.3%,Claude Opus 4.7 领先约 5.7 个百分点。[

12][

13]

-

在 Terminal-Bench 2.0 上,公开比较显示 GPT-5.5 为 82.7%,Claude Opus 4.7 为 69.4%,GPT-5.5 领先约 13.3 个百分点。[

12]

-

在 GDPval 上,公开比较显示 GPT-5.5 为 84.9%,Claude Opus 4.7 为 80.3%,GPT-5.5 领先约 4.6 个百分点。[

12]

-

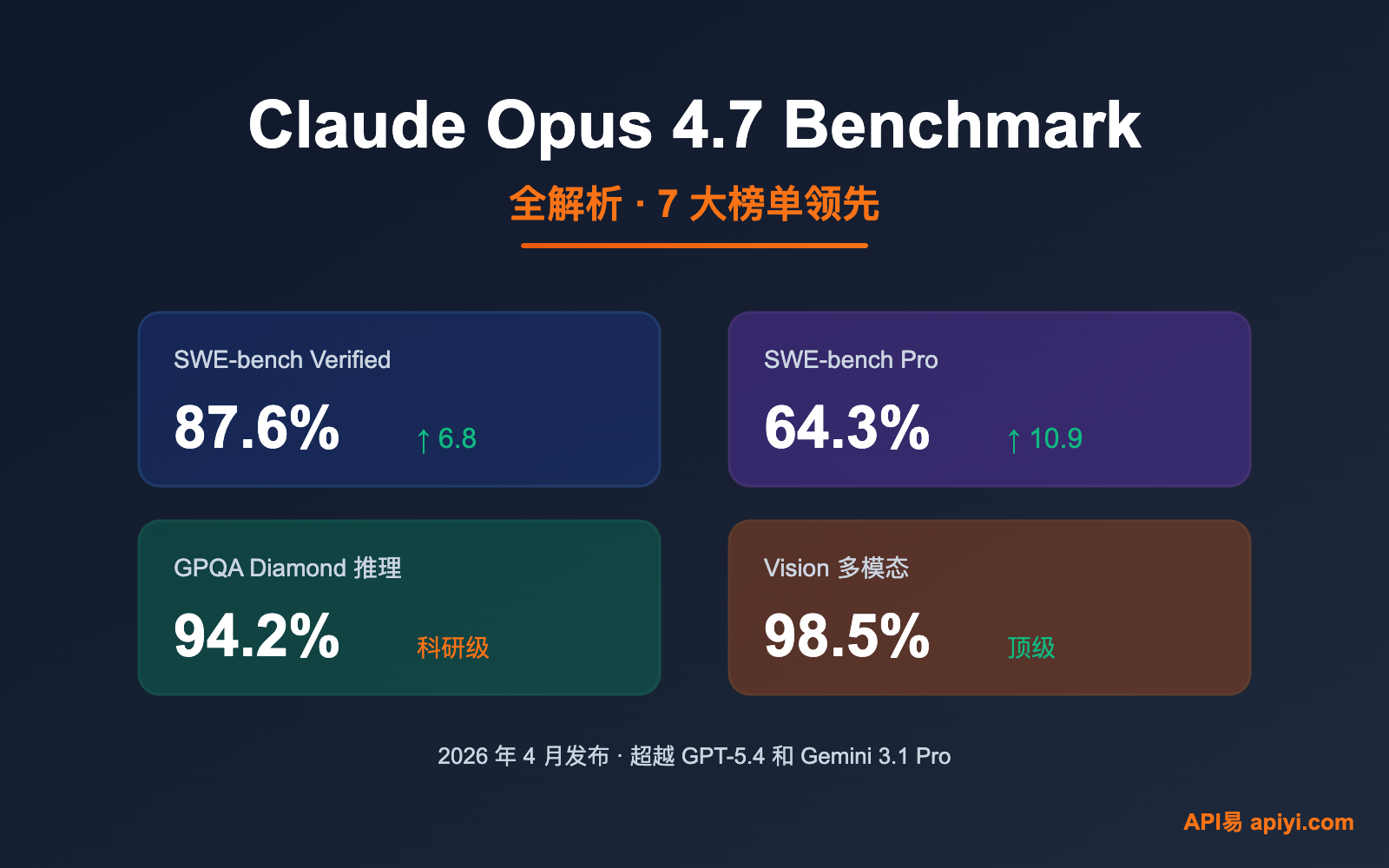

Claude Opus 4.7 的单模型发布摘要显示其在 SWE-bench Verified 为 87.6%、GPQA 为 94.2%,这支持“Claude 在代码修复与高难推理基准上很强”的判断,但该摘要没有同时给出 GPT-5.5 的同口径分数。[

10]

-

BenchLM 的 Claude Opus 4.7 页面称其在 provisional leaderboard 中排名第 2、总分 97/100,但这不是与 GPT-5.5 的直接同表对照,因此只能作为“Claude 处于顶级梯队”的辅助证据。[

6]

Evidence notes

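| Benchmark | GPT-5.5 | Claude Opus 4.7 | Current leader |
|---|---|---|---|
| SWE-Bench Pro | 58.6% | 64.3% | Claude Opus 4.7 |
| Terminal-Bench 2.0 | 82.7% | 69.4% | GPT-5.5 |
| GDPval | 84.9% | 80.3% | GPT-5.5 |
| SWE-bench Verified | Not confirmed in the current side-by-side summaries | 87.6% | Insufficient evidence for a like-for-like comparison |
| GPQA | Not confirmed in the current side-by-side summaries | 94.2% | Insufficient evidence for a like-for-like comparison |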
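
The percentage-point gaps quoted in the key findings can be re-derived from the three rows where both models have a score. The following is a minimal sketch: the numbers are copied from the table, while the helper names `lead` and `summarize` are illustrative and do not come from any of the cited sources.

```python
# Scores (percent) as quoted in the comparison table; only rows with both
# models present are included.
SCORES = {
    "SWE-Bench Pro": {"GPT-5.5": 58.6, "Claude Opus 4.7": 64.3},
    "Terminal-Bench 2.0": {"GPT-5.5": 82.7, "Claude Opus 4.7": 69.4},
    "GDPval": {"GPT-5.5": 84.9, "Claude Opus 4.7": 80.3},
}


def lead(row):
    """Return (leader, gap in percentage points) for one benchmark row."""
    leader = max(row, key=row.get)
    trailer = min(row, key=row.get)
    # Round to one decimal to hide floating-point noise in the subtraction.
    return leader, round(row[leader] - row[trailer], 1)


def summarize(table):
    """Map each benchmark name to its (leader, gap) pair."""
    return {bench: lead(row) for bench, row in table.items()}


if __name__ == "__main__":
    for bench, (leader, gap) in summarize(SCORES).items():
        print(f"{bench}: {leader} leads by {gap} pp")
```

The gaps are absolute differences in percentage points, not relative improvements, which is how the key findings state them.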

Limitations / uncertainty

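- Insufficient evidence: in the currently available results, I did not find a full, like-for-like benchmark table for GPT-5.5 vs Claude Opus 4.7 that is confirmed by both OpenAI and Anthropic.

- The most useful side-by-side numbers currently come mainly from third-party roundups or review articles, not from a unified evaluation report in complete official model cards. [12][13]

- Benchmark results are sensitive to prompts, tool permissions, context length, sampling parameters, whether agent loops are allowed, and whether a dedicated harness is used. These scores are therefore better suited to initial screening than to replacing an evaluation on your own task set.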
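
One way to keep those factors visible is to record the evaluation protocol next to every score and refuse to compare scores whose protocols differ. The sketch below is only an illustration under assumptions: `EvalProtocol`, its field names, and `protocol_mismatches` are hypothetical and are not taken from any published harness.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EvalProtocol:
    """Hypothetical record of the settings that can shift a benchmark score."""
    prompt_template: str       # identifier of the prompt actually used
    tools_allowed: tuple       # tool permissions granted to the model
    max_context_tokens: int    # context length limit
    temperature: float         # one of the sampling parameters
    agent_loop: bool           # whether multi-step agent loops were allowed
    harness: str               # name of the evaluation harness


def protocol_mismatches(a, b):
    """List the field names on which two protocols differ."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


if __name__ == "__main__":
    # Placeholder values only; they describe no real published run.
    run_a = EvalProtocol("prompt-v1", ("shell",), 200_000, 0.0, True, "harness-a")
    run_b = EvalProtocol("prompt-v1", ("shell",), 200_000, 0.0, False, "harness-b")
    print(protocol_mismatches(run_a, run_b))
```

An empty list means the two scores were produced under the same recorded settings; any non-empty list names the dimensions on which the comparison is not like-for-like.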

Summary

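Going only by the wins and losses in public summaries, GPT-5.5 has the edge on Terminal-Bench 2.0 and GDPval, and Claude Opus 4.7 has the edge on SWE-Bench Pro. [12][13]

My recommendation: try Claude Opus 4.7 first for codebase repair, complex PRs, and real GitHub issue tasks; try GPT-5.5 first for terminal operation, tool calling, automation agents, and business workflows. The safest approach is to run a small blind eval on 20–50 of your own real tasks, then choose your primary model with cost, latency, and failure modes also taken into account.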
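
A small blind eval of this kind can be sketched as follows. This is a minimal illustration under assumptions, not a prescribed method: the labels "A" and "B" stand for the two models, verdicts are assumed to come from a human grader who sees only "left" and "right", and the function names are hypothetical. The sign test at the end is one simple way to check whether a win margin on 20–50 tasks is distinguishable from chance.

```python
import random
from math import comb


def blind_pairs(tasks, outputs_a, outputs_b, seed=0):
    """Randomize which model's output is shown on the left for each task."""
    rng = random.Random(seed)
    pairs = []
    for task, a, b in zip(tasks, outputs_a, outputs_b):
        if rng.random() < 0.5:
            pairs.append({"task": task, "left": a, "right": b, "left_is": "A"})
        else:
            pairs.append({"task": task, "left": b, "right": a, "left_is": "B"})
    return pairs


def unblind(pairs, verdicts):
    """Turn per-pair verdicts ('left', 'right', 'tie') into win counts."""
    wins = {"A": 0, "B": 0, "tie": 0}
    for pair, verdict in zip(pairs, verdicts):
        if verdict == "tie":
            wins["tie"] += 1
        elif verdict == "left":
            wins[pair["left_is"]] += 1
        else:
            wins["B" if pair["left_is"] == "A" else "A"] += 1
    return wins


def sign_test_p(wins_a, wins_b):
    """Exact two-sided sign test p-value; ties are excluded beforehand."""
    n = wins_a + wins_b
    if n == 0:
        return 1.0
    k = min(wins_a, wins_b)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


if __name__ == "__main__":
    # Example: 30 decided tasks split 19 to 11.
    print(round(sign_test_p(19, 11), 3))
```

The grader should only ever see the `task`, `left`, and `right` fields; `left_is` is kept aside until all verdicts are in. With a few dozen tasks, a modest win margin often yields a large p-value, which is a reason to weigh cost, latency, and failure modes alongside the raw win count.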



Sources

- [1] AI Benchmarks 2026: Monthly Leaderboards & Rankings | MangoMind (mangomindbd.com)

5. Monthly Updates AI moves fast. We re-test all models monthly and publish updated rankings to reflect the latest releases. ## 🎯 Quick Recommendations ### Best AI for Coding (April 2026) 1. •Claude Opus 4.6 - 93.2% SWE-bench 2. •GPT-5.4 Pro - 91.1% SWE-bench 3. •DeepSeek V3.2 - 89.2% SWE-bench ### Best AI for Reasoning 1. •GPT-5.4 Pro - 94.5% GPQA Diamond 2. •Claude Opus 4.6 - 93.1% GPQA Diamond 3. •Gemini 3.1 Pro - 92.4% GPQA Diamond ### Best AI for Creative Writing 1. •GPT-5.4 Pro - Highest creativity scores 2. •Claude Opus 4.6 - Best narrative coherence 3. •Gemini 3.1 Pro - Most dive…

- [2] Claude Opus 4.7 (Adaptive) Benchmarks 2026: Scores, Rankings & Performance | BenchLM.ai (benchlm.ai)

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Claude Opus 4.7 (Adaptive) According to BenchLM.ai, Claude Opus 4.7 (Adaptive) ranks #5 out of 115 models on the provisional leaderboard with an overall score of 90/100. It also ranks #5 out of 23 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. Claude Opus 4.7 (Adaptive) is a proprietary model with a 1M token context window. It uses explicit chain-of-thought reasoning, which typically improves perfor…

- [3] Claude Opus 4.7 Benchmarks 2026: Scores, Rankings & Performance (benchlm.ai)

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Claude Opus 4.7 According to BenchLM.ai, Claude Opus 4.7 ranks #2 out of 110 models on the provisional leaderboard with an overall score of 97/100. It also ranks #2 out of 14 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. Claude Opus 4.7 is a proprietary model with a 1M token context window. It processes queries without explicit chain-of-thought reasoning, offering faster response times and lower to…

- [4] Claude Opus 4.7 Benchmarks Explained (vellum.ai)

Apr 16, 2026•16 min•ByNicolas Zeeb Guides CONTENTS Key observations of reported benchmarks Coding capabilities SWE-bench Verified SWE-bench Pro Terminal-Bench 2.0 Agentic capabilities MCP-Atlas (Scaled tool use) Finance Agent v1.1 OSWorld-Verified (Computer use) BrowseComp (Agentic search) Reasoning capabilities GPQA Diamond (Graduate-level science) Humanity's Last Exam Multimodal and vision capabilities CharXiv Reasoning (Visual reasoning) Multilingual Q&A (MMMLU) Safety and alignment What these benchmarks really mean for your agents When to use Opus 4.6 vs Opus 4.7 Use Opus 4.7 with your Ve…

- [5] GPT-5.5 vs Claude Opus 4.7: Pricing, Speed, Benchmarks (llm-stats.com)

| Spec | GPT-5.5 | Claude Opus 4.7 | --- | Provider | OpenAI | Anthropic | | Release date | Apr 23, 2026 | Apr 16, 2026 | | Model ID | gpt-5.5 | claude-opus-4-7 | | Input / output (≤200K) | $5 / $30 per 1M | $5 / $25 per 1M | | Input / output (>200K) | $5 / $30 per 1M (flat) | $10 / $37.50 per 1M | | Context window (input / output) | 1M / 128K | 1M / 128K | | Modalities | Text + image, text out | Text + image (~3.75 MP), text out | | Reasoning controls | xhigh effort tier | low / medium / high / xhigh / max | | Batch / Flex tier | 0.5× standard | 0.5× standard | | Self-verification on age…

- [6] GPT‑5.5 vs. Claude Opus 4.7: A Benchmark-by-Benchmark Field Guide to the New Frontier - Kingy AI (kingy.ai)

| Benchmark | GPT‑5.5 | Claude Opus 4.7 | Edge | --- --- | | SWE‑Bench Pro | 58.6% | 64.3% | Opus 4.7 | | Terminal‑Bench 2.0 | 82.7% | 69.4% | GPT‑5.5 | | GDPval | 84.9% | 80.3% | GPT‑5.5 | | FinanceAgent v1.1 | 60.0% | 64.4% | Opus 4.7 | | OfficeQA Pro | 54.1% | 43.6% | GPT‑5.5 | | OSWorld‑Verified | 78.7% | 78.0% | GPT‑5.5 | | BrowseComp | 84.4% | 79.3% | GPT‑5.5 | | MCP Atlas | 75.3% | 79.1% | Opus 4.7 | | GPQA Diamond | 93.6% | 94.2% | Opus 4.7 | | Humanity’s Last Exam (no tools) | 41.4% | 46.9% | Opus 4.7 | | Humanity’s Last Exam (with tools) | 52.2% | 54.7% | Opus 4.7 | | FrontierMath T…

- [7] Opus 4.7 Review: Features, Performance & How to Use It (2026 ... (aimlapi.com)

| Benchmark | Opus 4.7 | Opus 4.6 | Notes | --- --- | | CursorBench (Cursor) | 70% | 58% | +12 pp improvement | | Linear coding benchmark (93 tasks) | +13% resolution | Baseline | 4 tasks neither 4.6 nor Sonnet 4.6 could solve | | Visual acuity (XBOW) | 98.5% | 54.5% | Near-complete elimination of prior pain point | | BigLaw Bench (Harvey, high effort) | 90.9% | Not reported | Best-in-class legal accuracy | | Rakuten-SWE-Bench | 3× more tasks | Baseline | Also double-digit gains in code & test quality | | Databricks OfficeQA Pro | 21% fewer errors | Baseline | Best Claude model for enterprise…

- [8] SWE-bench February 2026 leaderboard update (simonwillison.net)

Update: If you look at the transcript Claude claims to have switched to Playwright, which is confusing because I didn't think I had that configured. Posted 19th February 2026 at 4:48 am ## Recent articles Changes in the system prompt between Claude Opus 4.6 and 4.7 - 18th April 2026 Join us at PyCon US 2026 in Long Beach - we have new AI and security tracks this year - 17th April 2026 Qwen3.6-35B-A3B on my laptop drew me a better pelican than Claude Opus 4.7 - 16th April 2026 This is a link post by Simon Willison, posted on 19th February 2026. benchmarks 14django 588ai 1969openai 406generativ…

- [9] SWE-bench Verified Benchmark 2026: 35 LLM scores | BenchLM.ai (benchlm.ai)

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Software Engineering Benchmark Verified (SWE-bench Verified) A curated, human-verified subset of SWE-bench that tests models on resolving real GitHub issues from popular open-source Python repositories like Django, Flask, and scikit-learn. ## Top models on SWE-bench Verified — April 24, 2026 As of April 24, 2026, Claude Mythos Preview leads the SWE-bench Verified leaderboard with 93.9% , followed by Claude Opus 4.7 (Adaptive) (87.6%) and GPT-5.3 Codex (85%). Claude Mythos Preview Anthropic Claude Opus 4.7 (Adapt…

- [10] Claude Opus 4.7 vs 4.6 vs Mythos: Which Model Should ... (nxcode.io)

Quick Comparison: All Models Side by Side | Opus 4.7 | Opus 4.6 | Mythos Preview | Sonnet 4.6 | --- --- | | Release | April 16, 2026 | February 17, 2026 | April 7, 2026 | February 17, 2026 | | Availability | Generally available | Generally available | Project Glasswing only | Generally available | | API model ID | claude-opus-4-7 | claude-opus-4-6 | Not public | claude-sonnet-4-6 | | Pricing (input/output) | $5 / $25 MTok | $5 / $25 MTok | N/A | $3 / $15 MTok | | Context window | 1M tokens | 1M tokens | Unknown | 1M tokens | | SWE-bench Verified 80.8% | 93.9% | 79.6% | | CursorBench…

- [11] GPT-5.5: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

9Image 42GPT-5 mini 0.22 10Image 43o3 0.16 GPQAView → #4 of 10 Image 44: LLM Stats Logo A challenging dataset of 448 multiple-choice questions written by domain experts in biology, physics, and chemistry. Questions are Google-proof and extremely difficult, with PhD experts reaching 65% accuracy. More 1Image 45Claude Mythos Preview 0.95 2Image 46Gemini 3.1 Pro 0.94 3Image 47Claude Opus 4.7 0.94 4Image 48GPT-5.5 0.94 5Image 49GPT-5.2 Pro 0.93 6Image 50GPT-5.4 0.93 7Image 51GPT-5.2 0.92 8Image 52Gemini 3 Pro 0.92 9Image 53Claude Opus 4.6 0.91 10Image 54Kimi K2.6 0.91 Show 18 more Notice missing…

- [12] LLM Leaderboard 2026 — Compare Top AI Models - Vellum (vellum.ai)

93.6% GPT-5.5 92.4% GPT 5.2 91.9% Gemini 3 Pro Best in Reasoning (GPQA Diamond)| Model | Score | --- | | Claude 3 Opus | 95.4% | | Claude Opus 4.7 | 94.2% | | GPT-5.5 | 93.6% | | GPT 5.2 | 92.4% | | Gemini 3 Pro | 91.9% | ### Best in High School Math (AIME 2025) 100%96%93%89%86% 100% Gemini 3 Pro 100% GPT 5.2 99.8% Claude Opus 4.6 99.1% Kimi K2 Thinking 98.7% GPT oss 20b Best in High School Math (AIME 2025)| Model | Score | --- | | Gemini 3 Pro | 100% | | GPT 5.2 | 100% | | Claude Opus 4.6 | 99.8% | | Kimi K2 Thinking | 99.1% | | GPT oss 20b | 98.7% | ### Best in Agentic Coding (SWE Bench) 90…

- [13] Claude Opus 4.7: Benchmarks, Pricing, Context & What's New (llm-stats.com)

LLM Stats Logo Make AI phone calls with one API call # Claude Opus 4.7: Benchmarks, Pricing, Context & What's New Claude Opus 4.7 scores 87.6% on SWE-bench Verified, 94.2% on GPQA, 1M token context, 3.3x higher-resolution vision, new xhigh effort level. $5/$25 pricing. Jonathan Chavez The Takeaway Claude Opus 4.7 is a direct upgrade to Opus 4.6 at the same price ($5/$25 per million tokens), with 87.6% on SWE-bench Verified (+6.8pp), a new xhigh effort level, 3.3x higher-resolution vision, and self-verification on long-running agentic tasks. Claude Opus 4.7: Benchmarks, Pricing, Context & What…

- [14] Everything You Need to Know About GPT-5.5 - Vellum (vellum.ai)

| Benchmark | GPT-5.5 | GPT-5.5 Pro | GPT-5.4 | Claude Opus 4.7 | Gemini 3.1 Pro | --- --- --- | | Terminal-Bench 2.0 | 82.7% | — | 75.1% | 69.4% | 68.5% | | SWE-Bench Pro | 58.6% | — | 57.7% | 64.3% | 54.2% | | Expert-SWE (Internal) | 73.1% | — | 68.5% | — | — | | GDPval | 84.9% | 82.3% | 83.0% | 80.3% | 67.3% | | OSWorld-Verified | 78.7% | — | 75.0% | 78.0% | — | | BrowseComp | 84.4% | 90.1% | 82.7% | 79.3% | 85.9% | | MCP Atlas | 75.3% | — | 70.6% | 79.1% | 78.2% | | GPQA Diamond | 93.6% | — | 92.8% | 94.2% | 94.3% | | FrontierMath T1–3 | 51.7% | 52.4% | 47.6% | 43.8% | 36.9% | | FrontierM…

- [15] Fact Check: Does GPT-5.5 underperform Claude Opus 4.7 on the ... (factcheckradar.com)

Key checks GPT-5.5 SWE-Bench Pro Performance: In its April 23, 2026, release announcement, OpenAI stated that GPT-5.5 reaches 58.6% on SWE-Bench Pro, which evaluates the resolution of real-world GitHub issues. Claude Opus 4.7 SWE-Bench Pro Performance: Anthropic's Claude Opus 4.7, released on April 16, 2026, scores 64.3% on SWE-Bench Pro, which is 5.7 percentage points higher than GPT-5.5. OpenAI's Use of Internal Benchmarks: OpenAI's official announcement table prominently features 'Expert-SWE (Internal)', where GPT-5.5 scores 73.1%. It does not list the SWE-Bench Pro score in that specif…

- [16] GPT-5.5 Is Here — Reading OpenAI's Own Benchmark Table Honestly Shows Where Opus 4.7 and Gemini 3.1 Pro Still Win (ton-technotes.com)

April 2026 is the month every major AI platform shipped either a specialized or a new-tier model/agent. GPT-5.5 is the last piece. It’s the general-purpose side of OpenAI’s two-track strategy (general GPT-5.x + specialized Codex/Rosalind) stepping up. → OpenAI GPT-Rosalind and the Start of AI’s “Specialized Era” — A Life Sciences-Only Model Signals the Shift From General-Purpose LLMs to Domain-Specific AI ### Rankings Are Over. Read the Map Instead. GPT-5.5 doesn’t win everything. Wins and losses split by task type. The right read for April 2026 is not “which model is #1” but “which model for…

- [17] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwrite (appwrite.io)

Star on GitHub 55.8KGo to Console Start building for free Sign upGo to Console Start building for free Products Docs Pricing Customers Blog Changelog Star on GitHub 55.8K Blog/GPT-5.5 is here: benchmarks, pricing, and what changes for developers Apr 24, 2026•8 min # GPT-5.5 is here: benchmarks, pricing, and what changes for developers OpenAI shipped GPT-5.5 on April 23, 2026. Here's a source-backed look at benchmarks, pricing versus GPT-5.4 and Claude Opus 4.7, the system card, and where the model still falls short. Image 13: Atharva Deosthale #### Atharva Deosthale Developer Advocate SHARE 7…

- [18] GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance Compared (mindstudio.ai)

SWE-Bench and Coding Tasks On SWE-Bench Verified — the standard benchmark for evaluating real GitHub issue resolution — both models score competitively at the top of the 2026 leaderboard. GPT-5.5 holds a slight edge on problems requiring precise tool use and file navigation. Opus 4.7 performs better on tasks requiring broad architectural reasoning across large codebases. Neither model dominates outright. The gap is narrow enough that benchmark scores alone shouldn’t drive your decision. ### Where Opus 4.7 Pulls Ahead Multi-file reasoning across large repos (10k+ lines) Tasks requiring sig…

- [19] OpenAI’s GPT-5.5 vs Claude Opus 4.7: Which is better? | Mashable (mashable.com)

Thanks for signing up! SWE-Bench Pro: GPT-5.5 scored 58.6; Opus 4.7 scored 64.3 percent Terminal-Bench 2.0: GPT-5.5 scored 82.7 percent; Opus 4.7 scored 69.4 percent Humanity's Last Exam: GPT-5.5 scored 40.6 percent; Opus 4.7 scored 31.2 percent\ Humanity's Last Exam (with tools): GPT-5.5 scored 52.2 percent; Opus 4.7 scored 54.7 percent BrowseComp: GPT-5.5 scored 84.4 percent; Opus 4.7 scored 79.3 percent GPQA Diamond: GPT-5.5 scored 93.6 percent; Opus 4.7 scored 94.2 percent ARC-AGI-1 (Verified): GPT-5.5 (High) scored 94.5 percent; Claude 4.7 (High) scored 92 percent\ ARC-AGI-2 (Verified):…

- [20] SWE-bench - Vals AI (vals.ai)

Benchmarks Models Comparison Model Guide App Reports News About Benchmarks Models Comparison Model Guide App Reports About Coding SWE-bench # SWE-bench Updated: 4/23/2026 Solving production software engineering tasks ## Key Takeaways Claude Opus 4.7 leads with a performance of 82.00%, achieving the best accuracy on SWE-bench. Gemini 3.1 Pro Preview (02/26) follows with 78.80%, with Claude Opus 4.6 (Thinking) and GPT 5.4 tied at 78.20%, and GPT 5.3 Codex at 78.00%. ## Background SWE-bench, introduced by Jimenez et al. in their seminal paper “Can Language Models Resolve Real-World GitHub Issues…

- [21] SWE-Bench 2026: Claude Opus 4.7 Wins 87.6% vs GPT-5.3 85.0% - TokenMix Blog (tokenmix.ai)

SWE-Bench Verified Leaderboard: Full Results April 2026 | Rank | Model | Score | Released | Owner | --- --- | 1 | Claude Opus 4.7 | 87.6% | Apr 16, 2026 | Anthropic | | 2 | GPT-5.3-Codex | 85.0% | Mar 2026 | OpenAI | | 3 | Claude Opus 4.5 | 80.9% | Q4 2025 | Anthropic | | 4 | Claude Opus 4.6 | 80.8% | Q1 2026 | Anthropic | | 5 | Gemini 3.1 Pro | 80.6% | Feb 2026 | Google DeepMind | | 6 | Qwen3.6 Plus | 78.8% | Apr 2026 | Alibaba | | 7 | Muse Spark | 77.4% | Mar 2026 | Meta | Opus 4.7 is the first model to cross 87% on Verified, shipping with 1M context and tightened training for multi-file…

- [22] Model Drop: GPT-5.5 - by Jake Handy (handyai.substack.com)

Headline benchmarks: Terminal-Bench 2.0 at 82.7% (Opus 4.7: 69.4%, Gemini 3.1 Pro: 68.5%). SWE-Bench Pro at 58.6% (Opus 4.7 still leads at 64.3%). OpenAI’s internal Expert-SWE eval, where tasks have a 20-hour median human completion time, at 73.1% (up from GPT-5.4’s 68.5%). GDPval wins-or-ties at 84.9% (Opus 4.7: 80.3%, Gemini 3.1 Pro: 67.3%). OSWorld-Verified at 78.7% (narrowly edges Opus 4.7’s 78.0%). FrontierMath Tier 4 at 35.4% (Opus 4.7: 22.9%, Gemini 3.1 Pro: 16.7%). CyberGym at 81.8% (Opus 4.7: 73.1%, Anthropic’s Claude Mythos: 83.1%). Tau2-Bench Telecom at 98.0% without prompt tuning.…

- [23] GPT-5.5 Model | OpenAI API (developers.openai.com)

GPT-5.5 is our newest frontier model for the most complex professional work. Learn more in our latest model guide. Reasoning.effort supports: none, low, ... 3 hours ago

- [24] GPT-5.5 pro Model | OpenAI API (developers.openai.com)

GPT-5.5 pro uses more compute to think harder and provide consistently better answers. GPT-5.5 pro is available for Responses API requests, ... 4 hours ago

- [25] Introducing GPT-5 - OpenAI (openai.com)

In our evaluations, GPT‑5 (with thinking) performs better than OpenAI o3 with 50-80% less output tokens across capabilities, including visual ... Aug 7, 2025

- [26] GPT-5.5 System Card - OpenAI (openai.com)

... GPT‑5.5 and GPT‑5.5 Pro in the API. 2026 · System Cards. Author. OpenAI. Keep reading. View all. GPT-5.5 Bio Bug Bounty > art card. GPT-5.5 Bio ... 2 days ago

- [27] GPT-5.5 is here! Available in the API, Codex and ChatGPT today (community.openai.com)

OpenAI Developer Community · GPT-5.5 is here! Available in the API, Codex and ChatGPT today · Announcements · models · merefield April 24, 2026, ... 18 hours ago

- [28] GPT-5.5 is here! Available in the API, Codex and ChatGPT today (community.openai.com)

OpenAI Developers ... GPT-5.5 is now available in the API. The model brings higher intelligence and stronger token efficiency to complex work, ... 19 hours ago

- [29] Introducing GPT-5.5 - OpenAI (openai.com)

“It's incredibly energizing to use OpenAI's new GPT-5.5 model in our harness, have it reason over massive biochemical datasets to predict human ... 2 days ago

- [30] GPT-5.5 is here! Available in the API, Codex and ChatGPT today (community.openai.com)

OpenAI Developers · GPT-5.4 (xhigh): 120M tokens · GPT-5.5 (xhigh): 75M tokens · GPT-5.5 (high): 45M tokens · GPT-5.5 (medium): 22M tokens. 2 days ago

- [31] GPT-5.5 System Card - OpenAI Deployment Safety Hub (deploymentsafety.openai.com)

Like OpenAI's other models, GPT-5.5 was trained on diverse datasets ... We report here on our Production Benchmarks, an evaluation set ... 2 days ago

- [32] GPT-5.5 Bio Bug Bounty - OpenAI (openai.com)

Explore the GPT-5.5 Bio Bug Bounty: a red-teaming challenge to find universal jailbreaks for bio safety risks, with rewards up to $25000. 2 days ago

- [33] Claude Opus 4.7 leads on SWE-bench and agentic reasoning ... (thenextweb.com)

Claude Opus 4.7 leads on SWE-bench and agentic reasoning, beating GPT-5.4 and Gemini 3.1 Pro Skip to content Toggle Navigation , multi-agent coordination for hours-long workflows, 3x higher image resolution, and a 14% improvement in multi-step agentic reasoning with a third of the tool errors. Priced at $5/$25 per million tokens, it is available across Claude plans and through Amazon Bedrock, Vertex AI, and Microsoft Foundry._ Anthropic has released Claude Opus 4.7, its most capable generally available model to date, with benchmark-leading performance in software engineering and agentic rea…

- [34] Claude Opus 4.7: Anthropic's New Best (Available) Model - DataCamp (datacamp.com)

Claude Opus 4.7: Anthropic’s New Best (Available) Model Explore what's new in Anthropic's latest flagship: stronger agentic coding, sharper vision, and better memory across sessions. Compare the benchmarks against GPT-5.4, Gemini 3.1 Pro, and the locked-away Mythos Preview. Apr 16, 2026 · 9 min read Anthropic has released Claude Opus 4.7, the latest iteration of its flagship model tier. The headline improvement is autonomy: Anthropic is pitching this as the model you can hand your hardest, longest-running coding and agent work to without close supervision. [...] ## Claude Opus 4.7 Benchmark…

- [35] Claude Opus 4.7: What Changed for Coding Agents (April 2026) (verdent.ai)

| Benchmark | Opus 4.6 | Opus 4.7 | GPT-5.4 | Notes | --- --- | SWE-bench Verified | 80.80% | 87.60% | — | Anthropic-conducted; memorization screens applied | | SWE-bench Pro | 53.50% | 64.30% | 57.70% | Multi-language real-world tasks | | CursorBench | 58% | 70% | — | Source: Cursor CEO Michael Truell (partner eval) | | Terminal-Bench 2.0 | — | 69.40% | 75.10% | GPT-5.4 leads; Terminus-2 harness, thinking disabled | | BrowseComp | 83.70% | 79.30% | 89.30% | Regression vs Opus 4.6 | | XBOW Visual Acuity | 54.50% | 98.50% | — | Computer use / screenshot tasks | | GPQA Diamond | 91.30% | 94.20%…

- [36] Introducing Claude Opus 4.7 - Anthropic (anthropic.com)

Image 15: logo > In our evals, we saw a double-digit jump in accuracy of tool calls and planning in our core orchestrator agents. As users leverage Hebbia to plan and execute on use cases like retrieval, slide creation, or document generation, Claude Opus 4.7 shows the potential to improve agent decision-making in these workflows. > > Adithya Ramanathan > > Head of Applied Research Image 16: logo > On Rakuten-SWE-Bench, Claude Opus 4.7 resolves 3x more production tasks than Opus 4.6, with double-digit gains in Code Quality and Test Quality. This is a meaningful lift and a clear upgrade for th…

- [37] Claude Opus 4.7 Benchmark Full Analysis: Empirical Data Leading ... (help.apiyi.com)

Q1: What is Claude Opus 4.7? Claude Opus 4.7 is the flagship Large Language Model released by Anthropic on April 16, 2026. It leads in multiple benchmarks, including coding (SWE-bench Verified 87.6%), Agent tool invocation, and scientific reasoning (GPQA Diamond 94.2%), outperforming GPT-5.4 and Gemini 3.1 Pro. Compared to Opus 4.6, it introduces the new "xhigh effort" deep reasoning mode, all while maintaining the same official pricing. Q2: Which is better, Claude Opus 4.7 or GPT-5.4? [...] ### Core Takeaways from Claude Opus 4.7 Benchmark Testing Anthropic released Claude Opus 4.7 on April…

- [38] Anthropic Promised Claude Opus 4.7 Would Change ... - Towards AI (pub.towardsai.net)

Anthropic Promised Claude Opus 4.7 Would Change Everything. Here’s What Actually Happened. | by Adi Insights and Innovations | Apr, 2026 | Towards AI Sitemap Open in app Sign up Sign in []( Get app Write Search Sign up Sign in Image 1 ## Towards AI · Follow publication Image 2: Towards AI We build Enterprise AI. We teach what we learn. Join 100K+ AI practitioners on Towards AI Academy. Free: 6-day Agentic AI Engineering Email Guide: Follow publication Member-only story # Anthropic Promised Claude Opus 4.7 Would Change Everything. Here’s What Actually Happened. ## _The benchmarks say it’s th…

- [39] Anthropic releases Claude Opus 4.7: How to try it, benchmarks, safety (mashable.com)

Claude Opus 4.7 is available now. Credit: Samuel Boivin/NurPhoto via Getty Images Anthropic has been shipping products and making news at a blistering pace in 2026, and on Thursday, the AI company announced the launch of Claude Opus 4.7. Claude Opus 4.7 is Anthropic's most intelligent model available to the general public. Notably, Anthropic said in a press release") that Opus 4.7 is not as powerful as Claude Mythos, which Anthropic deemed too dangerous for public release. Claude Opus is a family of hybrid reasoning models capable of multi-step reasoning and advanced coding. Until the announc…

- [40] Anthropic Launches Claude Opus 4.7 with Improved Performance | Zain Zia posted on the topic | LinkedIn (linkedin.com)

View profile for Zain Zia 🚀 Anthropic has Launched Claude Opus 4.7 Anthropic released Claude Opus 4.7 on April 16, 2026. Key Specs: SWE-bench Pro: 64.3% (GPT-5.4: 57.7%, Gemini 3.1 Pro: 54.2%) OfficeChai SWE-bench Verified: 87.6% LLM Leaderboard GPQA Diamond: 94.2% LLM Leaderboard 1M context window 3x higher image resolution vs 4.6 Pricing: $5/$25 per million tokens BenchLM ranks it #4 overall with a score of 93/100 BenchLM What's Different: The model verifies its own outputs before reporting back, cutting error rates on long-running tasks LLM Leaderboard Available via Claude API, AWS Bedroc…

- [41] Opus 4.7 Benchmarks Released | Facebook (facebook.com)

AI & You | Agents | Evolution Unleashed: Your Gateway To The AI Era | Opus 4.7 Benchmarks Released | Facebook Log In Log In Forgot Account? Image 1 Opus 4.7 benchmarks show significant improvement Summarized by AI from the post below []( ## AI & You | Agents | Evolution Unleashed: Your Gateway To The AI Era · Join Stu Jordan · Admin · 5d · ### Opus 4.7 Benchmarks Released Anthropic just released the benchmarks for Opus 4.7 which launched today. They show quite a jump from 4.6 - especially in coding and reasoning. Search got a little nerfed but that’s because they’ve added some cyber securit…

- [42] While we wait for Mythos, Anthropic just dropped a pretty massive .1 ... (x.com)

See new posts # Conversation Image 1 Chris @chatgpt21 While we wait for Mythos, Anthropic just dropped a pretty massive .1 upgrade with Opus 4.7. Opus 4.6 -> 4.7 SWE Bench Pro: 53.4% -> 64.3% SWE Bench Verified: 80.8% -> 87.6% Terminal Bench: 65.4% -> 69.4% HLE no tools: 40.0% -> 46.9% GPQA: 91.3% -> 94.2% CharXiv no tools: 69.1% -> 82.1% CharXiv with tools: 84.7% -> 91.0% Not everything improved. BrowseComp: 83.7% -> 79.3% CyberGym: 73.8% -> 73.1% Still a very real jump overall, especially on coding, reasoning, and visual reasoning. Quote Image 2 Claude Image 3 @claudeai · Apr 16 [...] Quote…