Research & compare Claude Opus 4.7, GPT-5.5, DeepSeek V4, Kimi K2.6 as comprehensively as possible

Research answer

Based only on the provided evidence, Claude Opus 4.7 is the only model with enough sourced detail to compare meaningfully. GPT-5.5 is confirmed as an OpenAI API model, Kimi K2.6 has only a limited third-party snippet, and DeepSeek V4 has no supporting evidence in the provided material, so any detailed ranking across all four would be unsupported.

Key findings

- Claude Opus 4.7 is described by Anthropic as a “hybrid reasoning model” focused on coding and AI agents, with a 1M context window [3].

- Anthropic’s documentation says Claude Opus 4.7 provides a 1M context window at standard API pricing with no long-context premium [1].

- Anthropic’s documentation also says Claude Opus 4.7 shows “meaningful gains” on knowledge-worker tasks [1].

- A third-party technical writeup describes Claude Opus 4.7 as Anthropic’s most capable generally available model for coding, enterprise workflows, multimodal reasoning, financial analysis, life sciences, cybersecurity, and long-running agentic work [5].

- GPT-5.5 is listed in OpenAI’s API documentation as `gpt-5.5`, with a dated model version, `gpt-5.5-2026-04-23` [13].

- The provided GPT-5.5 evidence only confirms the API model listing and mentions rate limits. It does not provide benchmark results, modality details, context length, pricing, or capability claims [13].

- Kimi K2.6 is described in one LinkedIn article snippet as an open-weight model from Moonshot AI, positioned against GPT-5.4 and Claude Opus 4.6 on coding benchmarks [45].

- The provided evidence contains no source for DeepSeek V4. Insufficient evidence.

- There is insufficient evidence to make a defensible overall ranking among Claude Opus 4.7, GPT-5.5, DeepSeek V4, and Kimi K2.6.

Comparison table

| Category | Claude Opus 4.7 | GPT-5.5 | DeepSeek V4 | Kimi K2.6 |
|---|---|---|---|---|
| Evidence strength | Strongest among the four, with official Anthropic sources plus third-party analysis [1][3][5] | Limited official OpenAI API evidence [13] | No provided evidence | Very limited third-party evidence [45] |
| Provider | Anthropic [3] | OpenAI [13] | Insufficient evidence | Moonshot AI, according to the provided LinkedIn snippet [45] |
| Model status | Public Claude product and API access is referenced by Anthropic [1][3] | Listed in OpenAI API docs as `gpt-5.5` and `gpt-5.5-2026-04-23` [13] | Insufficient evidence | Described as released in the provided LinkedIn snippet [45] |
| Context window | 1M tokens [1][3] | Insufficient evidence | Insufficient evidence | Insufficient evidence |
| Pricing evidence | 1M context at standard API pricing with no long-context premium [1] | Insufficient evidence beyond a rate-limit reference [13] | Insufficient evidence | Insufficient evidence |
| Output limit | Up to 128K output tokens, according to a third-party source | Insufficient evidence | Insufficient evidence | Insufficient evidence |
| Coding | Anthropic positions it as frontier-level for coding [3], and a third-party source describes it as strong for coding [5] | Insufficient evidence | Insufficient evidence | Positioned against GPT-5.4 and Claude Opus 4.6 on coding benchmarks, according to one LinkedIn snippet [45] |
| Agents / tool use | Anthropic says it pushes the frontier for AI agents [3] | Insufficient evidence | Insufficient evidence | Insufficient evidence |
| Knowledge work | Anthropic reports meaningful gains on knowledge-worker tasks [1] | Insufficient evidence | Insufficient evidence | Insufficient evidence |
| Multimodal reasoning | A third-party source lists multimodal reasoning as a target capability area [5] | Insufficient evidence | Insufficient evidence | Insufficient evidence |
| Open weights | No evidence that Claude Opus 4.7 is open-weight | No evidence that GPT-5.5 is open-weight | Insufficient evidence | Described as open-weight in one LinkedIn snippet [45] |
| Benchmarks | A Vellum article discusses Claude Opus 4.7 benchmarks in coding, agentic, finance, reasoning, and search-related categories, but the provided snippet includes no specific scores [4] | Insufficient evidence | Insufficient evidence | Only a broad claim about positioning on coding benchmarks [45] |

Model-by-model assessment

Claude Opus 4.7

Claude Opus 4.7 has the clearest evidence base in the provided material. Anthropic describes it as a hybrid reasoning model that advances coding and AI-agent use cases and includes a 1M context window [3].
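The following minimal budgeting sketch shows what a 1M-token window means for request planning. It treats "1M" as exactly 1,000,000 tokens and uses the third-party 128K output figure from the comparison table as an output cap. Both values are assumptions. So is the conservative rule that the prompt plus the reserved output budget must fit inside the window. Token counts are inputs you would obtain from a real tokenizer or token-counting endpoint, and this sketch does not call one.

```python
# Budgeting sketch. Both constants are assumptions, not documented limits:
# "1M" is taken as exactly 1,000,000 tokens, and the 128K output cap is the
# unverified third-party figure from the comparison table.
CONTEXT_WINDOW = 1_000_000
MAX_OUTPUT = 128_000


def fits_in_context(input_tokens: int, max_output_tokens: int,
                    context_window: int = CONTEXT_WINDOW,
                    max_output: int = MAX_OUTPUT) -> bool:
    """Return True if the prompt plus the reserved output budget fits.

    Uses the conservative assumption that input tokens and the requested
    output budget must both fit inside the context window.
    """
    if input_tokens < 0 or max_output_tokens <= 0:
        raise ValueError("need input_tokens >= 0 and max_output_tokens > 0")
    if max_output_tokens > max_output:
        return False  # asks for more output than the assumed cap allows
    return input_tokens + max_output_tokens <= context_window


def max_input_for(max_output_tokens: int,
                  context_window: int = CONTEXT_WINDOW) -> int:
    """Largest prompt that still leaves room for the requested output."""
    return context_window - max_output_tokens


if __name__ == "__main__":
    print(fits_in_context(850_000, 128_000))  # True: 978,000 <= 1,000,000
    print(fits_in_context(900_000, 128_000))  # False: 1,028,000 > 1,000,000
    print(max_input_for(128_000))             # 872000
```

Reserving the full output budget up front trades some usable prompt space for predictability. Under these assumptions, an 850K-token prompt with a 128K output reservation fits, while a 900K-token prompt with the same reservation does not.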

The most concrete differentiator is long context. Anthropic says Claude Opus 4.7 provides a 1M context window at standard API pricing with no long-context premium [1]. That makes it the only model in the provided evidence with a clearly documented context-window advantage [1][3].
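In practice, "no long-context premium" means cost stays linear in token count all the way up to the 1M window. The sketch below contrasts that flat scheme with a hypothetical tiered scheme, in which the whole request is billed at a higher rate once the prompt crosses a threshold. The per-million rates, the 200K threshold and the 2x multiplier are placeholders chosen for easy arithmetic. They are not prices taken from the cited sources.

```python
def flat_cost(input_tokens: int, output_tokens: int,
              input_rate: float, output_rate: float) -> float:
    """Flat pricing: rates are per million tokens and never change with size."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


def tiered_cost(input_tokens: int, output_tokens: int,
                input_rate: float, output_rate: float,
                threshold: int = 200_000, multiplier: float = 2.0) -> float:
    """Hypothetical long-context premium: once the prompt exceeds `threshold`
    tokens, the whole request is billed at `multiplier` times the base rates."""
    base = flat_cost(input_tokens, output_tokens, input_rate, output_rate)
    return base * multiplier if input_tokens > threshold else base


if __name__ == "__main__":
    # Placeholder rates (per 1M tokens): 1.0 input, 4.0 output.
    print(round(flat_cost(800_000, 10_000, 1.0, 4.0), 2))    # 0.84
    print(round(tiered_cost(800_000, 10_000, 1.0, 4.0), 2))  # 1.68
```

Take an 800K-token prompt with a 10K-token answer at these placeholder rates. Flat pricing gives (800,000 × 1.0 + 10,000 × 4.0) / 1,000,000 = 0.84. The hypothetical tiered scheme would double that to 1.68.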

Claude Opus 4.7 also has the broadest capability claims in the supplied sources. Anthropic says it shows meaningful gains on knowledge-worker tasks [1]. A third-party source positions it for coding, enterprise workflows, multimodal reasoning, financial analysis, life sciences, cybersecurity, and long-running agentic work [5].

However, the provided benchmark evidence is incomplete. A Vellum article is listed as covering Claude Opus 4.7 benchmarks such as SWE-bench Verified, SWE-bench Pro, Terminal-Bench 2.0, MCP-Atlas, Finance Agent v1.1, OSWorld-Verified, BrowseComp, and GPQA. The provided snippet, however, includes no actual scores or rankings [4].

GPT-5.5

GPT-5.5 is confirmed in the provided evidence as an OpenAI API model. The OpenAI API documentation snippet lists `gpt-5.5` and a dated version, `gpt-5.5-2026-04-23` [13].
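OpenAI lists both an alias and a dated snapshot. For reproducible evaluations, it can help to record and pin the dated ID, because an alias may later point to a different snapshot. The sketch below splits a model ID into its alias and snapshot date. It assumes that the `-YYYY-MM-DD` suffix pattern seen in `gpt-5.5-2026-04-23` applies generally. That naming rule is inferred from this single listing, and `split_snapshot` is a hypothetical helper, not part of any OpenAI SDK.

```python
import re
from datetime import date
from typing import Optional, Tuple

# Assumption: a dated snapshot is the alias plus a "-YYYY-MM-DD" suffix, as in
# "gpt-5.5-2026-04-23". This is inferred from one listing, not an official rule.
_SNAPSHOT_RE = re.compile(r"^(?P<base>.+)-(?P<y>\d{4})-(?P<m>\d{2})-(?P<d>\d{2})$")


def split_snapshot(model_id: str) -> Tuple[str, Optional[date]]:
    """Hypothetical helper: return (alias, snapshot date), or (model_id, None)
    when the ID carries no date suffix."""
    match = _SNAPSHOT_RE.match(model_id)
    if match is None:
        return model_id, None
    snapshot = date(int(match["y"]), int(match["m"]), int(match["d"]))
    return match["base"], snapshot


if __name__ == "__main__":
    print(split_snapshot("gpt-5.5-2026-04-23"))  # ('gpt-5.5', datetime.date(2026, 4, 23))
    print(split_snapshot("gpt-5.5"))             # ('gpt-5.5', None)
```

An evaluation harness could log both parts for every run. Results can then be tied to one snapshot even if the bare alias is repointed later.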

The same OpenAI snippet mentions rate limits, but only in general terms. Rate limits cap requests or tokens used within a given time period, and the caps depend on the account's usage tier [13]. The snippet does not provide GPT-5.5’s context window, pricing, benchmark results, modalities, training cutoff, tool-use features, or coding performance [13].
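The snippet describes rate limits only generically. The sketch below therefore shows a common client-side pattern rather than anything specific to GPT-5.5: retry a rate-limited call with capped exponential backoff and random jitter. `RateLimitError` and the `send` callable are hypothetical stand-ins for whatever error and request function a real client uses. The delay constants are arbitrary.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for the rate-limit (HTTP 429) error a client raises."""


def call_with_backoff(send: Callable[[], T], max_retries: int = 5,
                      base_delay: float = 1.0, max_delay: float = 30.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `send`, retrying on RateLimitError with capped exponential backoff.

    Before retry n (0-based) it sleeps a random time in
    [0, min(max_delay, base_delay * 2**n)] ("full jitter").
    """
    if max_retries < 0:
        raise ValueError("max_retries must be >= 0")
    for attempt in range(max_retries + 1):
        try:
            return send()
        except RateLimitError:
            if attempt == max_retries:
                raise  # retries exhausted: let the caller see the error
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable")  # loop always returns or raises
```

Jitter spreads retries from many clients so they do not hit the limit again in lockstep. Injecting `sleep` makes the function testable without waiting.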

Because the provided GPT-5.5 evidence is minimal, it cannot be fairly compared against Claude Opus 4.7 on capability, cost, latency, benchmarks, or agentic performance.

DeepSeek V4

No evidence for DeepSeek V4 is included in the provided source list. Insufficient evidence.

That means there is no sourced basis here to describe DeepSeek V4’s release status, context window, pricing, weights, architecture, benchmarks, coding performance, reasoning performance, or API availability.

Kimi K2.6

The only provided evidence for Kimi K2.6 is a LinkedIn article snippet saying Moonshot AI released Kimi K2.6 as an open-weight model [45]. The same snippet says it is positioned against GPT-5.4 and Claude Opus 4.6 on coding benchmarks [45].

That evidence is too thin for a robust evaluation. The snippet does not provide benchmark names, scores, methodology, context length, license terms, API availability, hardware requirements, pricing, safety evaluations, or comparisons against Claude Opus 4.7 or GPT-5.5 [45].

Evidence notes

- Official vendor sources support the strongest claims for Claude Opus 4.7 and GPT-5.5. Anthropic sources support Claude’s 1M context and positioning [1][3], and OpenAI’s API documentation supports the existence of GPT-5.5 as an API model [13].

- Third-party sources add useful but weaker evidence for Claude Opus 4.7’s broader enterprise and domain positioning [5].

- The Kimi K2.6 evidence is especially limited because it comes only from a LinkedIn article snippet, not an official Moonshot AI source or benchmark report [45].

- No evidence for DeepSeek V4 is provided. Insufficient evidence.

Limitations / uncertainty

- There are no direct benchmark scores in the provided evidence for GPT-5.5, DeepSeek V4, or Kimi K2.6.

- There are no apples-to-apples benchmark numbers across all four models.

- There is no supplied evidence for latency, cost per token, availability by region, safety evaluations, tool-use reliability, coding benchmark scores, or real-world deployment performance across the four models.

- Claims about Claude Opus 4.7 are much better supported than claims about the other three models because the evidence includes official Anthropic documentation [1][3].

- Any definitive ranking such as “best overall,” “best coder,” “best reasoning model,” or “best value” would be unsupported by the provided evidence.
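The apples-to-apples limitation can be stated precisely: a like-for-like ranking needs benchmarks on which every model has a score. The sketch below computes that shared set and ranks models by mean score over it. It returns `None` when the set is empty. The input dictionary is hypothetical, and no scores from this report are used because none are available. Averaging raw scores across benchmarks with different scales is a simplifying assumption, not a recommended methodology.

```python
from typing import Dict, List, Optional, Set, Tuple

# model name -> {benchmark name: score}; higher scores are assumed to be better.
Scores = Dict[str, Dict[str, float]]


def shared_benchmarks(scores: Scores) -> Set[str]:
    """Benchmarks for which every model in `scores` has a result."""
    if not scores:
        return set()
    return set.intersection(*(set(per_model) for per_model in scores.values()))


def rank_on_shared(scores: Scores) -> Optional[List[Tuple[str, float]]]:
    """Rank models by mean score over the shared benchmarks, best first.

    Returns None when no benchmark is common to all models, i.e. when an
    apples-to-apples ranking is not possible.
    """
    common = shared_benchmarks(scores)
    if not common:
        return None
    means = {model: sum(per_model[b] for b in common) / len(common)
             for model, per_model in scores.items()}
    return sorted(means.items(), key=lambda item: item[1], reverse=True)
```

With the evidence in this report, each model's score map would be empty. `rank_on_shared` therefore returns `None`, which matches the conclusion that no ranking is supported.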

Summary

Claude Opus 4.7 appears strongest on the available evidence. Official documentation covers its 1M context window, the absence of a long-context premium, its coding and agentic positioning, and its knowledge-work improvements [1][3]. GPT-5.5 is confirmed as an OpenAI API model, but the provided evidence does not describe its capabilities beyond the model listing and rate-limit context [13]. Kimi K2.6 is only lightly supported, by a third-party snippet describing it as open-weight and positioned against rivals on coding benchmarks [45]. DeepSeek V4 cannot be assessed from the provided evidence.

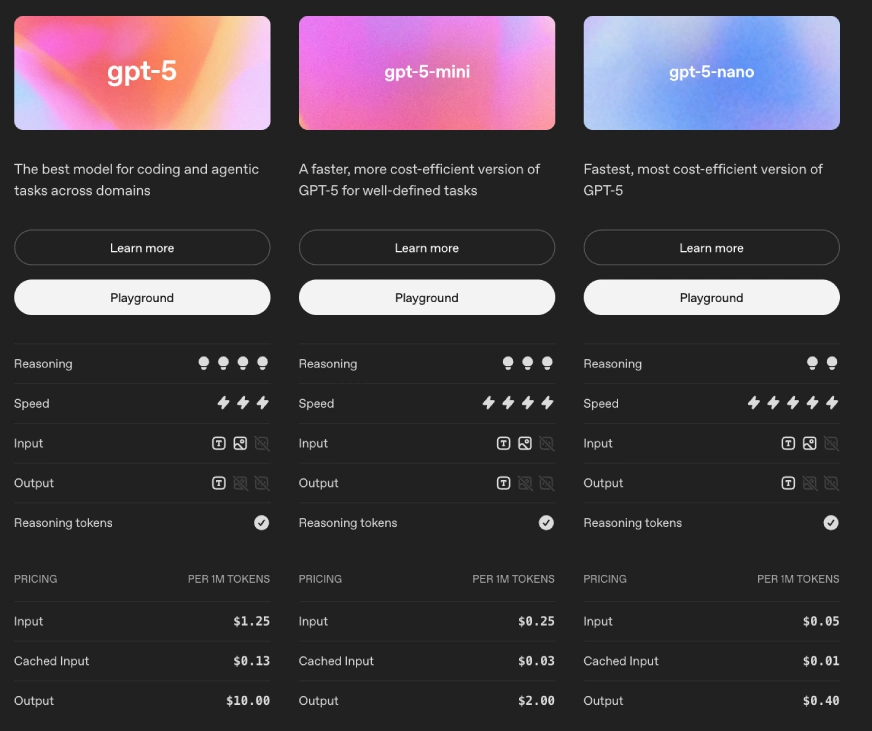

Sources

- [1] What's new in Claude Opus 4.7 (platform.claude.com)

We suggest updating your max tokens parameters to give additional headroom, including compaction triggers. Claude Opus 4.7 provides a 1M context window at standard API pricing with no long-context premium. Capability improvements Knowledge work Claude Opus...

- [2] Anthropic Launches Claude Opus 4.7, Resolving Market Timeline Uncertainty | KuCoin (kucoin.com)

CoinDesk reports: Anthropic released Claude Design based on Claude Opus 4.7 on April 17, 2026. The contract for the Claude 4.7 release signed by Polymarket expires on May 31. 100% Yes. The April 17 release directly addressed multiple market inquiries regardin...

- [3] Claude Opus 4.7 - Anthropic (anthropic.com)

Hybrid reasoning model that pushes the frontier for coding and AI agents, featuring a 1M con...

- [4] Claude Opus 4.7 Benchmarks Explained - Vellum (vellum.ai)

Apr 16, 2026, 16 min, by Nicolas Zeeb. Contents: Key observations of reported benchmarks; Coding capabilities: SWE-bench Verified, SWE-bench Pro, Terminal-Bench 2.0; Agentic capabilities: MCP-Atlas (Scaled tool use), Finance Agent v1.1, OSWorld-Verified (Computer...

- [5] Claude Opus 4.7 Deep Dive: Capabilities, Migration, and the ... (caylent.com)

At a spec level, Opus 4.7 is positioned as Anthropic’s most capable generally available model for coding, enterprise workflows, multimodal reasoning, financial analysis, life sciences, cybersecurity, and long-running agentic work. It supports a 1M context w...

- [6] Claude Opus 4.7 launched: Anthropic's best programming AI to date in 2026 - AICC - AI.cc (ai.cc)

03 Comparison (and a glimpse of Mythos): Anthropic is transparent. Opus 4.7 is its most capable publicly available model, but it trails its internal Claude Mythos Preview model in several respects (especially in cybersecurity, which le...

- [7] Claude Opus 4.7 Unleashed: Anthropic's Latest AI Powerhouse ... (alphamatch.ai)

For organizations choosing an LLM in 2026, Opus 4.7 offers benchmark-leading coding and agentic performance under a vendor that has repeatedly emphasized safety alongside innovation, a combination many buyers will weigh carefully.

- [8] Claude Opus 4.7: release, pricing, context window, and API changes (datastudios.org)

Claude Opus 4.7 pricing looks stable at first, but real usage costs can still move. List prices stayed familiar, yet effective cost can change once tokenization and image handling are measured in production. Claude Opus 4.7 keeps the premium Anthropic...

- [9] Claude API Pricing: Haiku 4.5, Sonnet 4.6, and Opus 4.7 (April 2026) (benchlm.ai)

Anthropic just launched Claude Opus 4.7 on April 16, 2026 and kept Opus pricing unchanged at $5/$25 per million input/output tokens. That makes the pricing story simpler than many third-party tables suggest: Haiku 4.5 at $1/$5, Sonnet 4.6 at $3/$15, and now...

- [10] Claude Opus 4.7 (max): API Provider Performance Benchmarking & Price Analysis | Artificial Analysis (artificialanalysis.ai)

Proprietary model, released April 2026. Analysis of API providers for Claude Opus 4.7 (Adaptive Reasoning, Max Effort)...

- [11] Claude Opus 4.7: Pricing, Benchmarks & Context Window - ALM Corp (almcorp.com)

Claude Opus 4.7 Explained: Benchmarks, Pricing, Context Window, and What Changed f...

- [12] Claude Opus 4.7 Just Dropped - Here's What's New (2026) (youtube.com)

Anthropic just released Claude Opus 4.7, their latest AI model. It's a major upgrade for coding and software engineering, but it's still not as powerful as the mysterious Mythos model they're keeping behind closed doors. What's new in Opus 4.7: Major improv...

- [13] GPT-5.5 Model | OpenAI API (developers.openai.com)

Model IDs: gpt-5.5, gpt-5.5-2026-04-23. Rate limits: Rate limits ensure fair and reliable access to the API by placing specific caps on requests or tokens used within a given time period. Your usage tier determines how high these limit...

- [14] GPT-5.5 (high) Review | Pricing, Benchmarks & Capabilities (2026) (designforonline.com)

Pricing: input $5.00 per 1M tokens ($0.005 per 1K); output $30.00 per 1M tokens ($0.03 per 1K). Data sourced from OpenRouter API, Artificial Analysis and Hugging Face Open...

- [15] GPT-5.5 (low): API Provider Performance Benchmarking & Price Analysis | Artificial Analysis (artificialanalysis.ai)

Proprietary model, released April 2026. Analysis of API providers for GPT-5.5 (low) across performance metrics including latency (time to first token), output speed (output tokens per secon...

- [16] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwrite (appwrite.io)

Contents: What shipped; Pricing against GPT-5.4 and Claude Opus 4.7; Benchmarks; Agentic coding and computer use; Knowledge work and reasoning; Science; Agentic coding in practice; The card problem still exists; Safety and the system card; What this means fo...

- [17] OpenAI Releases GPT-5.5: Agentic Model with 1M Context (datanorth.ai)

The competitive picture in April 2026 is that no single model dominates all categories. GPT-5.5 is strongest when a task requires autonomous tool use and multi-step execution. Claude Opus 4.7 excels at code generation and knowledge-intensive reasoning. Gemi...

- [18] What Is GPT-5.5 for Builders in 2026? | WaveSpeedAI Blog (wavespeed.ai)

Release date April 23, 2026: confirmed, OpenAI official. Live in ChatGPT (Plus/Pro/Business/Enterprise): confirmed, OpenAI official. Live in Codex (Plus/Pro/Business/Enterprise/Edu/Go): confirmed, OpenAI official. 400K context in Codex: confirm...

- [19] GPT-5.5 (high) API Provider Benchmarking & Analysis (artificialanalysis.ai)

Proprietary model, released April 2026. Analysis of API providers for GPT-5.5 (high) across performance metrics including latency (time to first token), output speed (output tokens per se...

- [20] GPT-5.5 vs GPT-5.4: Pricing, Speed, Context, Benchmarks - LLM Stats (llm-stats.com)

GPT-5.4 vs GPT-5.5: release date Mar 5, 2026 vs Apr 23, 2026; model ID gpt-5.4 vs gpt-5.5; standard input/output price $2.50/$15.00 vs $5.00/$30.00 per 1M tokens; Batch & Flex pricing 0.5× standard for both; Priority pricing 2.5× standard for both; A...

- [21] GPT-5.5: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

Thinking: true. Modalities: input text, image; output text.

- [22] Introducing GPT-5.5 - OpenAI (openai.com)

Contents: Model capabilities, Next...

- [23] OpenAI releases GPT-5.5 amid a shift to rapid-fire AI updates - Fortune (fortune.com)

By Sharon Goldman, AI Reporter. April 23, 2026, 2:13 PM ET. [...] B...

- [24] Model Drop: GPT-5.5 (handyai.substack.com)

Available on: ChatGPT for Plus, Pro, Business, and Enterprise users (GPT-5.5 Thinking for all paid tiers, GPT-5.5 Pro limited to Pro / Business / Enterprise). Codex in the CLI, IDE extensions, and the web product, across Plus, Pro, Business, Enterprise, Edu...

- [25] DeepSeek API Pricing Calculator & Cost Guide (Apr 2026) - CostGoat (costgoat.com)

Pricing TL;DR: 5 million free tokens for new users (no credit card required); pay-per-token: cache hit ($0.028), cache miss ($0.28), output ($0.42) per million tokens; automatic context caching; 128K context...

- [26] DeepSeek V4 API Pricing (March 2026): $0.30/$0.50 per 1M Tokens (devtk.ai)

- [27] DeepSeek V4 API Review 2026: Flash vs Pro Guide - EvoLink.AI (evolink.ai)

As of April 24, 2026, DeepSeek's official API docs now list deepseek-v4-flash and deepseek-v4-pro, publish official pricing for both, and document 1M context plus 384K max output. Reuters separately reported on the same date that V4 launched in preview, wh...

- [28] DeepSeek V4 Preview: The Complete 2026 Guide - o-mega | AI (o-mega.ai)

Second, the pricing spread is extraordinary. DeepSeek V4-Flash's output pricing of $0.28/MTok is 107x cheaper than GPT-5.5's $30/MTok. Even V4-Pro at $3.48/MTok is roughly 8.6x cheaper than GPT-5.5 on output. The question every developer must answer is whet...

- [29] DeepSeek-V4-Pro-Max: Pricing, Benchmarks & Performance (llm-stats.com)

Output $3.48/M; throughput 32 tok/s; parameters 1.6T. Leaderboard rankings: 5 Math, 6 Healthcare, 7 Coding, 7 Search, 7 Tool Calling, 9 Reasoning, 9 Legal, 10 Finance, 20 Vision, 33 Long Context...

- [30] Models & Pricing - DeepSeek API Docs (api-docs.deepseek.com)

See Thinking Mode for how to switch. Context length: 1M. Max output: 384K (maximum). Features: JSON Output ✓ / ✓; Tool Calls ✓ / ✓; Chat Prefix Completion (Beta) ✓ / ✓; FIM Completion (Beta): non-thinking mode only / non-thinking mode only. Pricing, 1M input tokens (cache hit): $0.028 / $0.03...

- [31] DeepSeek V4 Pro - API Pricing & Providers (openrouter.ai)

DeepSeek: DeepSeek V4 Pro (deepseek/deepseek-v4-pro). Released Apr 24, 2026; 1,048,576 context; $0.435/...

- [32] DeepSeek-V4: a million-token context that agents can actually use (huggingface.co)

DeepSeek released V4 today. Two MoE checkpoints are on the Hub: DeepSeek-V4-Pro at 1.6T total parameters with 49B active, and DeepSeek-V4-Flash at 284B total with 13B active. Both have a 1M-token context window. The benchmark numbers are competitive, but no...

- [33] DeepSeek V4 Released: What's New in the Latest Model (2026) (sitepoint.com)

Coding (HumanEval, SWE-bench) | HumanEval pass@1 73.8% (per technical report) | Expected further improvement; official scores pending. API Pricing (per 1M tokens) | $0.27 input / $1.10 output (cache miss; check platform.deepseek.com/pricing for current rates) | Not...

- [34] DeepSeek V4: Architecture, Benchmarks, and API Guide (2026) (morphllm.com)

March 3, 2026, 1 min read. Contents: TL;DR; Key Specs; Architecture: Three Innovations; Benchmark Performance; For Coding: How Good Is It?; What Changed from V3; API Pricing; Community Reaction; Limitations; FAQ. TL;DR: DeepSeek V4 launches this week. It is a 1-trillion-parameter MoE mode...

- [35] DeepSeek V4 - almost on the frontier, a fraction of the price (simonwillison.net)

- [36] DeepSeek V4: 1T Parameter AI Model Guide (deepseek.ai)

Independent DeepSeek resource hub. Deepseek.ai is an independent website and is not affiliated with, sponsored by, or endorsed by Hangzhou DeepSeek Artificial Intelligence Co., Ltd.

- [37] Kimi K2.6 Tech Blog: Advancing Open-Source Coding (kimi.com)

To reproduce official Kimi-K2.6 benchmark results, we recommend using the official API. For third-party providers, refer to Kimi Vendor Verifier (KVV) to ...

- [38] Kimi K2.6 API by MOONSHOTAI - Competitive Pricing - Atlas Cloud (atlascloud.ai)

Kimi K2.6 API - competitive pricing, transparent rates. Starting from $0.95/1M tokens. Unified API access, OpenAI-compatible endpoints, real-time inference.

- [39] How to Use Kimi K2.6: Complete Guide to Moonshot AI's New 1T ... (tosea.ai)

A practical walkthrough of Kimi K2.6: Moonshot AI's April 2026 open-source MoE release with 1T parameters, 256K context, 300-agent swarms, ...

- [40] MoonshotAI: Kimi K2.6 – Effective Pricing | OpenRouter (openrouter.ai)

Built on Kimi K2 with continued pretraining over approximately 15T mixed visual and text tokens, it delivers strong performance in general ...

- [41] MoonshotAI: Kimi K2.6 Review (designforonline.com)

MoonshotAI: Kimi K2.6 by MoonshotAI. 262K context, from $0.7500/1M tokens, vision, tool use, function calling. See benchmarks, comparisons ...

- [42] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Stats (llm-stats.com)

Kimi K2.6 has a context window of 262,144 tokens for input and can generate up to 262,144 tokens of output. The best provider for maximum ...

- [43] Moonshot AI (moonshot.ai)

K2.6 is a natively multimodal model, powerful coding capabilities, and Agent performance — multiple modes, your choice. Explore Features. Discover Kimi ...

- [44] Kimi K2.6 Is the Open Model Release OpenClaw Users Were ... (trilogyai.substack.com)

Kimi K2.6 is a 1T-parameter mixture-of-experts model with 32B active parameters, a 262K context window in Moonshot's published runs, and native ...

- [45] Moonshot AI Unveils Kimi K2.6, an Open-Weight Model Built for ... (linkedin.com)

Moonshot AI has released Kimi K2.6 as an open-weight model, positioning it directly against GPT-5.4 and Claude Opus 4.6 on coding benchmarks ...

- [46] Kimi K2.6: What Moonshot AI's New Open Source Model Means for ... (reddit.com)

Kimi K2.6: Advancing Open-Source Coding. I've spent some time testing Kimi K2.6 and also gathered feedback from a few real users, ...