Search and fact-check: Where does Kimi K2.6 actually rank among Chinese open-source models? How does it compare with models like DeepSeek?

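Research answer

Based solely on the evidence you provided, it is not possible to say reliably where Kimi K2.6 ranks among Chinese open-source models. The available material shows only that Moonshot Kimi falls within the comparison scope of a Chinese-model benchmark ranking; no specific rank for Kimi K2.6 is visible. [4] As for whether it is stronger than DeepSeek, the current evidence does not support a firm conclusion either. That said, in public open-source model articles and in leaderboard visibility, DeepSeek and Qwen are more clearly positioned than Kimi. [1][4]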

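What can be confirmed

- BenchLM's 2026 "Chinese models" leaderboard compares DeepSeek, Qwen, GLM, and Moonshot Kimi within the same benchmark framework, but the excerpt you provided does not show Kimi K2.6's actual rank. [4]

- This also means that, at least on this evidence, the claim about where Kimi K2.6 ranks among Chinese open-source models cannot be verified. [4]

- In addition, the excerpt places Kimi on a "Chinese models" leaderboard rather than on a clearly visible "Chinese open-source models" sub-leaderboard, so asserting a specific "open-source rank" rests on an incomplete premise. [4]

- An article on the "best open-source LLMs," updated in 2025, names Qwen 3 and DeepSeek R1 directly in its title, which indicates that these two series are front-runners in open-source model discussions. [1]

- Regarding Kimi K2.6, an April 2026 round-up says only that the author has tested Kimi K2.6, while DeepSeek v4 was still at the rumors/leaks stage at the time; a direct comparison on the same workload would come only after its release. This evidence therefore does not support the claim that Kimi has clearly beaten DeepSeek v4. [3]

- Another 2026 review describes DeepSeek as a "budget flagship" that can compete with ChatGPT and Claude on response quality, but this is a commentary-type source, not a complete public benchmark ranking. [7]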

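Fact-check conclusions

- "Where does Kimi K2.6 actually rank among Chinese open-source models?": insufficient evidence; no rank can be assigned. [4]

- "Which is stronger, Kimi K2.6 or DeepSeek?": insufficient evidence; no conclusion can be drawn. [3][4]

- Judging only by the visibility of the currently available public material and the known ranking frameworks, the evidence for DeepSeek and Qwen is stronger than for Kimi, and Kimi K2.6 lacks sufficient public benchmark evidence to show that it has overtaken DeepSeek across the board. [1][3][4]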

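If you like, in my next reply I can organize this evidence into a very short "Kimi vs DeepSeek vs Qwen" comparison table that lists only items supported by these sources.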

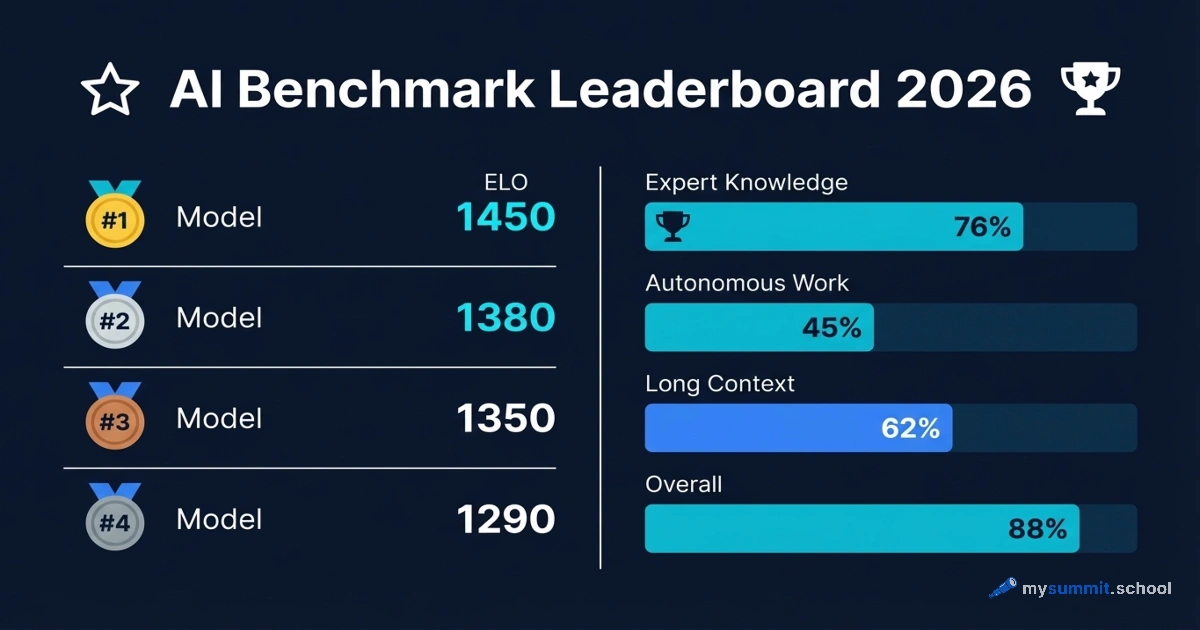

Supporting visual

Sources

- [1] AI Model Roundup April 2026: Kimi K2.6, Spud, Grok 4.3 (mejba.me)

My tested breakdown of the April 2026 AI model roundup — Kimi K2.6, GPT-5.5 Spud, Grok 4.3, DeepSeek v4 rumors, Qwen 3.6 Max, Codex Chronicle. If DeepSeek v4 ships this week — which is what some of the leaks imply — I'll run the same Laravel audit job I ran on Kimi K2.6 and publish the real numbers. I moved my long-horizon agent workload from Opus to Kimi K2.6 running locally. Not all of it — the short-form creative writing and reasoning-heavy client work still runs on Opus 4.7. The best AI model in April 2026 depends on your workload: Kimi K2.6 for agentic, long-horizon, cost-sensitive t…

- [2] Best Open Source LLMs in 2026: We Reviewed 7 Models (fireworks.ai)

- [3] DeepSeek in 2026: A Review of the Budget Flagship Among AI Models | mysummit.school - AI for Managers Blog (mysummit.school)

By February 2026, DeepSeek proved it can compete with ChatGPT and Claude in response quality while costing 10–30 times less. * DeepSeek-V3.2 – the flagship model (December 2025). * DeepSeek-R1 – a model for “deep thinking.” It reasons step by step before answering, verifying its own logic. * DeepSeek-Janus Pro – a multimodal model. * Stress-testing decision logic (R1)…

- [4] Kimi 2.6 Benchmarks 2026: Scores, Rankings & Performance (benchlm.ai)

According to BenchLM.ai, Kimi 2.6 ranks #13 out of 110 models on the provisional leaderboard with an overall score of 83/100. ### How does Kimi 2.6 perform overall in AI benchmarks? Kimi 2.6 currently ranks #13 out of 110 models on BenchLM's provisional leaderboard with an overall score of 83. Kimi 2.6 has visible benchmark coverage in knowledge and understanding, but BenchLM does not currently assign it a global category rank there. Kimi 2.6 ranks #6 out of 110 models in coding and programming benchmarks with an average score of 89.8. Kimi 2.6 has visible benchmark coverage i…

- [5] Kimi K2 is the large language model series developed by Moonshot ... (github.com)

- [6] Kimi K2.6: Pricing, Benchmarks & Performance (llm-stats.com)

- [7] Moonshot AI releases Kimi-K2.6 model with 1T parameters, attention optimizations - SiliconANGLE (siliconangle.com)

Moonshot AI releases Kimi-K2.6 model with 1T parameters, attention optimizations. Moonshot AI today released Kimi-K2.6, the latest addition to its popular Kimi series of open-source large language models. Kimi-K2.6’s neurons are organized into 384 so-called experts, miniature neural networks that are each optimized for a different set of tasks. Kimi-K2.6’s neural networks use a technology called MLA, or multi-head latent attention, to identify the most important part of a prompt. Kimi-K2.6’s neural networks are supported by a vision encoder with 400 million parameters. According to Moonsh…

- [8] moonshotai/Kimi-K2.6 - Hugging Face (huggingface.co)

Kimi-K2.6. 1. Model Introduction. 2. Model Summary. 3. Evaluation Results. 5. Deployment. 6. Model Usage. * Chat Completion with visual content…

- [9] [AINews] Moonshot Kimi K2.6: the world's leading Open Model refreshes to catch up to Opus 4.6 (ahead of DeepSeek v4?) (latent.space)

DeepSeek V4 rumors are back, and we learned our lesson not to get too excited, but in their deafening silence since v3.2, Moonshot has owned the crown of leading Chinese open model lab for all of 2026 to date, and K2.6 refreshes the lead that K2.5 established in January, with (presumably) more continued…

- [10] Moonshot AI’s Kimi K2.6 launch challenges Anthropic’s AI dominance (cryptobriefing.com)

- [11] 10 Best Open-Source LLM Models (2025 Updated): Llama 4, Qwen ... (huggingface.co)

10 Best Open-Source LLM Models (2025 Updated): Llama 4, Qwen 3 and DeepSeek R1. 1. Qwen3 (235B-A22B). 2. Mixtral 8x22B. 3. Llama 4 (Scout / Maverick). DeepSeek-V3 (R1-distilled capable)…

- [12] deepseek-ai (DeepSeek) (huggingface.co)

DeepSeek. Papers. DualPath: Breaking the Storage Bandwidth Bottleneck in Agentic LLM Inference. DeepSeek-OCR 2: Visual Causal Flow.

- [13] deepseek-ai/DeepSeek-V3.2 - Hugging Face (huggingface.co)

DeepSeek-V3.2: Efficient Reasoning & Agentic AI. We introduce DeepSeek-V3.2, a model that harmonizes high computational efficiency with superior reasoning and agent performance. DeepSeek-V3.2 introduces significant updates to its chat template compared to prior versions. To assist the community in understanding and adapting to this new template, we have provided a dedicated `encoding` folder, which contains Python scripts and test cases demonstrating how to encode messages in OpenAI-compatible format into input strings for t…

- [14] deepseek-ai/DeepSeek-V3.2 · Discussions (huggingface.co)

Add YC-Bench benchmark result (avg $125,263). Create test. Install & run deepseek-ai/DeepSeek-V3.2 easily using llmpm. Add SWE-Bench Pro evaluation results. Add GPQA evaluation results. Add MMLU Pro evaluation results. Add HLE evaluation results. Add SWE-Bench Verified evaluation results. Add MathArena evaluation result for hmmt/hmmt_feb_2026. Add Terminal-Bench evaluation result (39.6%). Add MathArena evaluation result for aime/aime_2026. Add EvasionBench evaluation r…

- [15] One Year Since the “DeepSeek Moment” - Hugging Face (huggingface.co)

The Seeds of China’s Organic Open Source AI Ecosystem. The second covers architectural and hardware choices largely by Chinese companies made in the wake of a growing open ecosystem, available here. The third analyzes prominent organizations’ trajectories and the future of the global open source ecosystem, available [he…

- [16] deepseek-ai (DeepSeek) - Hugging Face (huggingface.co)

deepseek-ai/DeepSeek-OCR Image-Text-to-Text • 3B • Updated Nov 4, 2025 • 2.08M • 3.22k. deepseek-ai/DeepSeek-OCR-2 Image-Text…

- [17] Spaces - Hugging Face (huggingface.co)

Deepseek Ai DeepSeek R1 Distill Llama 70B. Deepseek Ai DeepSeek R1. Answer questions with detailed explanations. Deepseek Ai DeepSeek R1. Deepseek Ai DeepSeek R1. Answer questions with detailed explanations. Deepseek Ai DeepSeek R1. Deepseek Ai DeepSeek R1. Generate text based on prompts. Deepseek Ai DeepSeek R1. Generate video content based on text prompts. Deepseek Ai DeepSeek V3. Generate text based on a given prompt. Deepseek Ai DeepSeek R1 Distill Qwen 32B. Answer questions using advanced AI. Deepseek Ai DeepSeek R1. Generate answers to y…

- [18] nvidia/DeepSeek-V3.2-NVFP4 · Hugging Face (huggingface.co)

The NVIDIA DeepSeek-V3.2-NVFP4 model is a quantized version of DeepSeek AI's DeepSeek-V3.2 model, an autoregressive language model that uses an

- [19] deepseek-ai/DeepSeek-V3.2 · Add YC-Bench benchmark result (avg $125,263) (huggingface.co)

Adding YC-Bench evaluation result for DeepSeek-V3.2. Score: $125,263 (average final funds across seeds 1, 2, 3). Benchmark: collinear-ai/yc-

- [20] lovedheart/DeepSeek-V3.2-REAP-345B-A37B-GGUF-Experimental · Hugging Face (huggingface.co)

The game ends when a player makes a move that results in a number > 2026, and that player loses (i.e, the first player who writes a number >

- [21] DavidAU/gemma-3-12b-it-vl-Deepseek-v3.1-Heretic-Uncensored-Thinking · Hugging Face (huggingface.co)

Output generation; Image processing; Benchmarks. Model Features: 128k context; Temp range .1 to 2.5. Reasoning is temp stable.

- [22] salttechno/LLM-Model-Comparison-2026 · Datasets at Hugging Face (huggingface.co)

Benchmark Scores ; Claude Opus 4.5, 89.5, 91.0 ; Claude Sonnet 4.5, 89.0, 93.0 ; DeepSeek V3, 88.5, 82.6 ; o3, 87.5, 95.2

- [23] Best performance on DeepSeek-R1 for H200 reproduce failed · Issue #3486 · NVIDIA/TensorRT-LLM · GitHub (github.com)

PYTORCH BACKEND. Model: deepseek-ai/DeepSeek-R1. Model Path: None. TensorRT-LLM Version: 0.19.0.dev2025041500. Dtype: bfloat16. KV Cache Dtype: None. Quantization: FP8_BLOCK_SCALES. REQUEST DETAILS. Number of requests: 128. Number of concurrent requests: 68.0876. Average Input Length (tokens): 1024.0000. Average Output Length (tokens): 2048.0000…

- [24] DeepSeek-R1/DeepSeek_R1.pdf at main · deepseek-ai/DeepSeek-R1 · GitHub (github.com)

- [25] GitHub - deepseek-ai/DeepSeek-V3 · GitHub (github.com)

- [26] GitHub - paradite/deepseek-r1-speed-benchmark: Code for benchmarking the speed of DeepSeek R1 from different providers' APIs. · GitHub (github.com)

Code for benchmarking the speed of DeepSeek R1 from different providers' APIs. Read the full report: DeepSeek R1: Comparing Pricing and Speed Across Providers.

- [27] ai-tech-daily/articles/deepseek-2026-04-07.md at main - GitHub (github.com)

DeepSeek made headlines in January 2025 when their R1 model matched GPT-4 on key benchmarks at a fraction of the training cost, sending shockwaves through the

- [28] GitHub - deepseek-ai/DeepSeek-R1 · GitHub (github.com)

DeepSeek-R1 achieves performance comparable to OpenAI-o1 across math, code, and reasoning tasks. To support the research community, we have open-sourced

- [29] dynamo/benchmarks/llm/perf.sh at main - GitHub (github.com)

... 2025-2026 NVIDIA CORPORATION & AFFILIATES. All rights reserved. # SPDX ... # Default Values model="neuralmagic/DeepSeek-R1-Distill-Llama-70B-FP8

- [30] ktransformers/doc/en/DeepseekR1_V3_tutorial.md at main · kvcache-ai/ktransformers · GitHub (github.com)

Feb 10, 2025: Support DeepseekR1 and V3 on single (24GB VRAM)/multi gpu and 382G DRAM, up to 3~28x speedup. Hi, we're the KTransformers team (

- [31] Research: Benchmarking DeepSeek-R1 IQ1_S 1.58bit · Issue #11474 · ggml-org/llama.cpp · GitHub (github.com)

On more analysis - I can see via Open Router https://openrouter.ai/deepseek/deepseek-r1 the API tokens / s is around 3 or 4 tokens / s for R1.

- [32] Benchmark request: DeepSeek-R1-Distill-Llama-70B · Issue #3167 · Aider-AI/aider · GitHub (github.com)

I would like to request to add a benchmark for DeepSeek-R1-Distill-Llama-70B . There are many providers out there, and some like Together.ai

- [33] Deepseek R1 and V3, FP4 quant, output quality issues at batch size > 2 · Issue #4037 · NVIDIA/TensorRT-LLM · GitHub (github.com)

Then run a high concurrency long context benchmark script against localhost:8000. We see a lot of newlines, simple chinese characters and

- [34] DeepSeek · GitHub (github.com)

A high-performance distributed file system designed to address the challenges of AI training and inference workloads.

- [35] AI Model Leaderboard 2026 | Rankings & Benchmarks (swfte.com)

| 72 | Qwen: Qwen3.5-9BOSS Alibaba Cloud · Open-source | 82 | — | — | $0.05 / $0.15 | 256K | 820.0 | Mar 2026 |. | 74 | Qwen: Qwen3.5-27BOSS Alibaba Cloud · Open-source | 82 | — | — | $0.195 / $1.56 | 262K | 93.4 | Feb 2026 |. | 101 | Qwen: Qwen3 8BOSS Alibaba Cloud · Open-source | 82 | — | — | $0.05 / $0.4 | 41K | 364.4 | Apr 2025 |. | 112 | Qwen2.5 72B InstructOSS Alibaba Cloud · Ope…

- [36] Best Chinese AI Models (2026) — Ranked by Benchmark Data | BenchLM.ai (benchlm.ai)

Best Chinese AI Models in 2026. Top AI models from Chinese labs — DeepSeek, Alibaba Qwen, Zhipu GLM, Moonshot Kimi, and more — ranked by benchmark performance. Chinese AI labs have produced some of the strongest models on our leaderboard, especially in math, reasoning, and agentic workflows. Bottom line: Chinese AI labs produce some of the strongest models — GLM-5 (Reasoning) scores within striking distance of top proprietary APIs. DeepSeek and Qwen are strong open-weight alternatives. Open-weight models are highly competitive in this category — self-hosting is a viable alternative to propr…

- [37] Open Source LLM Leaderboard 2026: Rankings, Benchmarks & the ... (vertu.com)

Open Source LLM Leaderboard 2026: Rankings, Benchmarks & the Best Models Right Now. Choosing the right open-source large language model in 2026 has never been harder — or more exciting. This article breaks down the definitive open-source LLM leaderboard for 2026 — pulling from benchmark scores across MMLU, MMLU-Pro, HumanEval, SWE-bench Verified, LiveCodeBench, AIME 2025, GPQA Diamond, MATH-500, Chatbot Arena, and IFEval — so you can make an informed decision for your specific use case. The leaderboard organizes open-source models into fou…

- [38] The best Chinese open-weight models — and the strongest US rivals (understandingai.org)

Chinese companies rushed to use the model in their products. OpenAI released open-weight models in August. * Qwen3 235B A22B 2507:1. * Nathan Lambert [noted](https://www.interconnects.ai/p/kimi-k2-thinking-what-it-means#:~:text=This%20is%20one%20of%20the%20first%20open%20model%20to%20have%20this%20ability%20of%20many%2C%20many%20tool%20calls%2C1%20…

- [39] The Open-Source LLM Revolution 2026: How Chinese Models Are Redefining AI Supremacy (alphamatch.ai)

The Open-Source LLM Revolution 2026: How Chinese Models Are Redefining AI Supremacy. According to the latest benchmarks, open-source LLMs are now achieving performance comparable to leading closed-source models, with some models outperforming established proprietary systems. * Performance: Outperforms other open-source models and rivals leading closed-source models. Alibaba's Qwen series continues to excel with the latest Qwen3 generation, which demonstrates performance comparable to leading models such as GPT-5.2-Thinking, Claude-Opus-4.5, and Gemini 3. GLM-5 leads open-weight models a…

- [40] Top Chinese AI Models in 2026: Capabilities, Use Cases, and Performance - ZenMux (zenmux.ai)

As we move further into 2026, Chinese AI models have solidified their place as global competitors, not just in terms of cost-efficiency but also in performance. The rapid advancements in natural language processing (NLP), multimodal capabilities, and coding abilities have set models like DeepSeek-V3, Qwen 3, Doubao 1.5 Pro, Kimi k2, and WuDao 3.0 apart from their Western coun…

- [41] AI Leaderboard 2026 - Compare Top AI Models & Rankings (llm-stats.com)

| 3 | GPT-5.4 OpenAI | 1,665 | 1,146 | 92.8% | — | 1.0M | $2.50 | $15.00 | Proprietary |. | 5 | GLM-5 Zhipu AI | 1,585 | 1,158 | — | 77.8% | 200K | $1.00 | $3.20 | Open Source |. | 8 | [GPT-5.2](https://llm-stats.com/models/gpt-5.2-…

- [42] February 2026 Text Arena Leaderboard Update: Top 3 Remains Tight | Arena posted on the topic | LinkedIn (linkedin.com)

- [43] LMSYS Chatbot Arena), the top three open labs are now separated ... (facebook.com)

AI Hub for YOU | The open model race just got tighter. | Facebook.

- [44] SWE-rebench Jan 2026: GLM-5, MiniMax M2.5, Qwen3-Coder-Next ... (reddit.com)

SWE-rebench Jan 2026: GLM-5, MiniMax M2.5, Qwen3-Coder-Next, Opus 4.6, Codex Performance : r/LocalLLaMA.