Compare the performance of GPT-5.5 and Claude Opus 4.7.


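Research answer

Based only on the evidence provided, there are signs that GPT-5.5 leads Claude Opus 4.7 on overall benchmarks, but the evidence is too limited to call this a definitive advantage.[32] Conversely, some assessments rate Claude Opus 4.7 as stronger for UI-centric output and in certain usage contexts, so which model comes out ahead depends on the type of task.[6]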

Comparison based on the current evidence

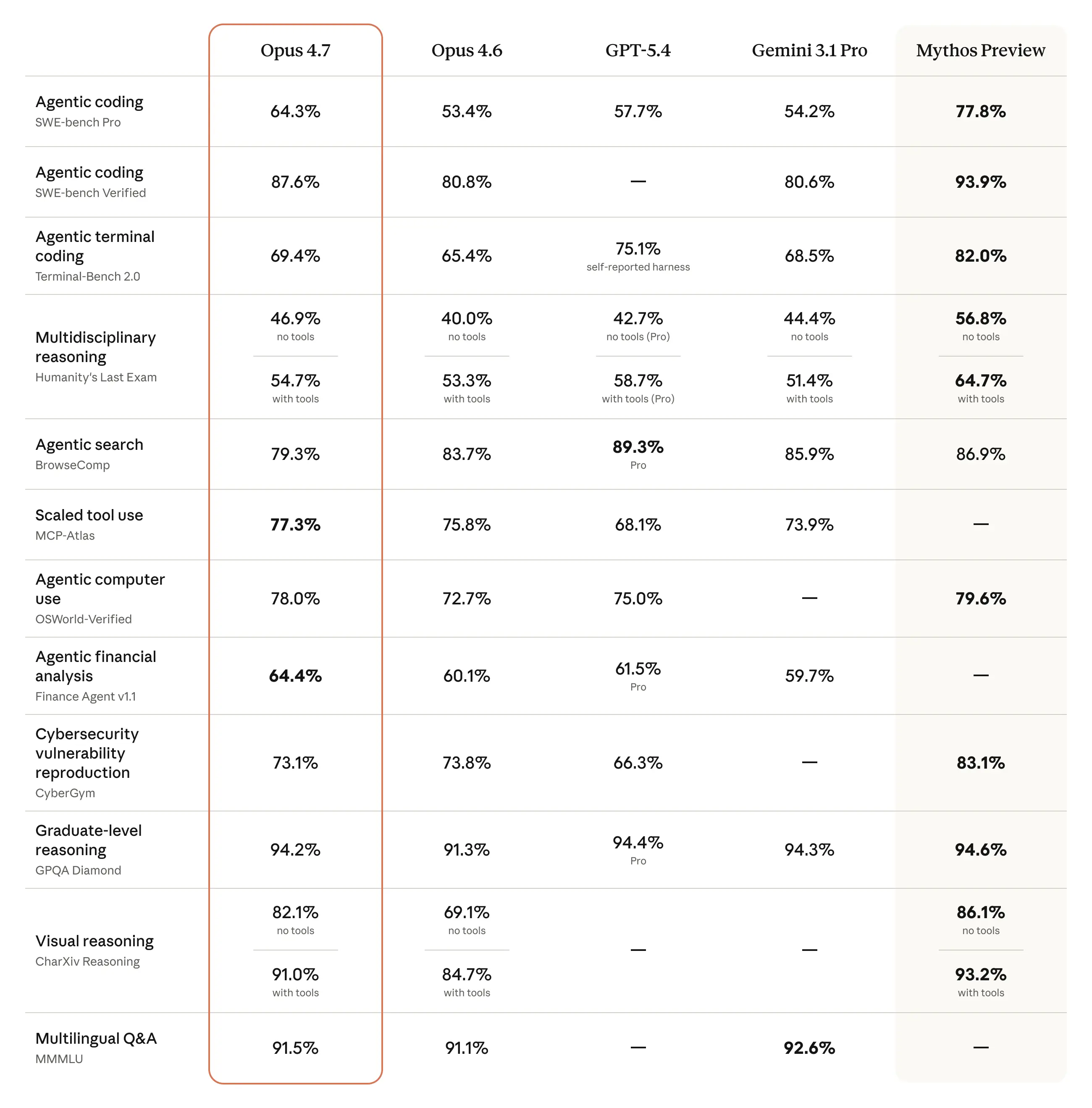

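- According to a ChatGPT Instagram post, GPT-5.5 leads Claude Opus 4.7 and Gemini 3.1 Pro in most evaluation categories, with only a slight gap in browsing tasks.[32]

- The same post claims that GPT-5.5 matches the previous speed while improving results, and that it is more efficient because it completes the same tasks with fewer tokens.[32]

- Anthropic describes Claude Opus 4.7 as its most powerful generally available model for complex reasoning and agentic coding.[2]

- A third-party analysis from Appwrite judges that Claude Opus 4.7 is still the stronger model for UI-first work, with better layout hierarchy and visual structure.[6]

- Anthropic said that, because of a system prompt issue before April 20, Opus 4.6 and 4.7 each dropped 3% on one evaluation, and that it has since reverted the prompt.[4]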

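Practical interpretation

- Going by general public evaluations alone, GPT-5.5 appears to lead on overall scores in the material provided.[32]

- For work where the structure of the visual output matters, such as generating front-end mockups or UI expressiveness, Claude Opus 4.7 may be the better choice.[6]

- There are signs that GPT-5.5 may trail slightly on browsing performance.[32]

- Complex reasoning and agentic coding are the areas Anthropic itself highlights as core strengths of Opus 4.7.[2]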
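The task-by-task reading above can be written down as a small lookup table. The following is only a minimal sketch, not a recommendation engine: the task labels (`overall_benchmarks`, `ui_first`, `browsing`, `agentic_coding`), the `Suggestion` type, and the `suggest` helper are hypothetical names introduced for illustration, and each entry carries the weak evidence it rests on so the uncertainty stays visible.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    model: str  # display name only, not an API model identifier
    basis: str  # the evidence the suggestion rests on


# Fallback for any task the cited material says nothing about.
UNDECIDED = Suggestion("undecided", "no clear signal in the cited material")

# Hypothetical task labels mapped to the tentative reading of the sources.
SUGGESTIONS = {
    "overall_benchmarks": Suggestion(
        "GPT-5.5", "ChatGPT Instagram post summarizing vendor benchmarks [32]"
    ),
    "ui_first": Suggestion(
        "Claude Opus 4.7", "third-party Appwrite review [6]"
    ),
    "browsing": Suggestion(
        "undecided", "GPT-5.5 may trail slightly, but no leader is named [32]"
    ),
    "agentic_coding": Suggestion(
        "Claude Opus 4.7",
        "Anthropic's own positioning, not a head-to-head result [2]",
    ),
}


def suggest(task: str) -> Suggestion:
    """Return the tentative suggestion for a task label, or UNDECIDED."""
    return SUGGESTIONS.get(task, UNDECIDED)


if __name__ == "__main__":
    for task in ("overall_benchmarks", "ui_first", "browsing", "translation"):
        s = suggest(task)
        print(f"{task}: {s.model} ({s.basis})")
```

Keeping the basis next to each suggestion makes it easy to revise an entry once a same-conditions benchmark becomes available.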

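Limitations and uncertainty

- The evidence is insufficient.

- The material provided contains no official head-to-head benchmark table for GPT-5.5 and Claude Opus 4.7 under identical conditions, and no information on test methodology, sample sizes, or statistical significance.

- In the current material, the signal favoring GPT-5.5 rests mainly on the ChatGPT Instagram post.[32]

- In the current material, the signal favoring Claude rests mainly on a third-party blog review.[6]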

If you like, the next step could cover coding, writing, browsing, UI generation, cost efficiency…

시각적 지원

연구 대화

연구문제

GPT 5.5와 Claude Opus 4.7의 성능을 비교해 보세요.

인용 답변

제공된 근거만 보면, GPT-5.5는 전반 벤치마크에서 Claude Opus 4.7보다 앞선다는 신호가 있지만, 근거가 제한적이어서 확정적 우위로 단정하긴 어렵습니다.[32] 반대로 UI 중심 결과물과 일부 사용 맥락에서는 Claude Opus 4.7이 더 강하다는 평가도 있어, 작업 유형에 따라 우열이 갈리는 모습입니다.[

6]

현재 근거로 보이는 비교

- ChatGPT 인스타그램 게시물에 따르면, GPT-5.5는 대부분의 평가 항목에서 Claude Opus 4.7과 Gemini 3.1 Pro보다 앞서며, 브라우징 과제에서만 약간의 격차가 있다고 합니다.[

32]

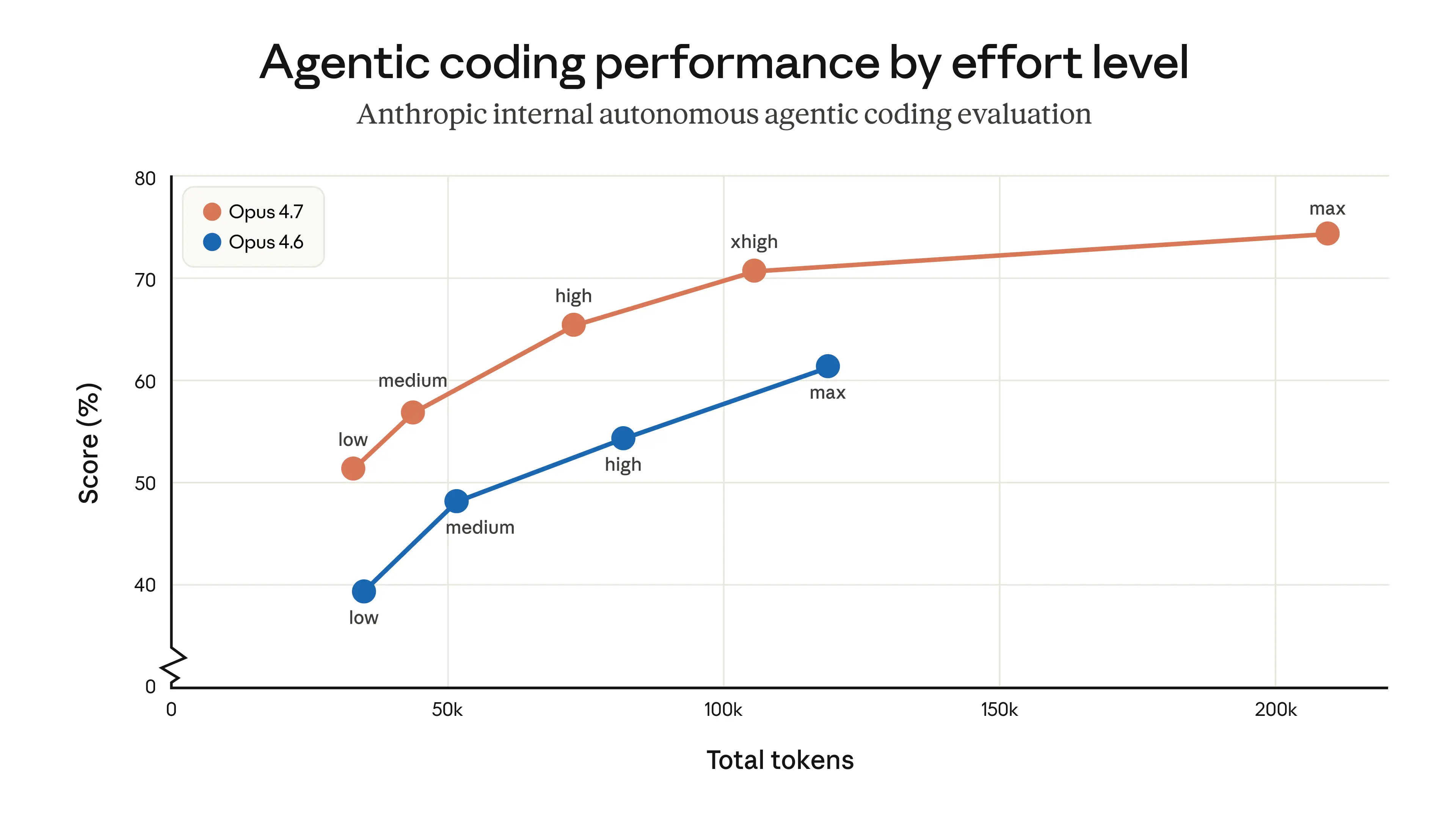

- 같은 게시물은 GPT-5.5가 이전 속도를 유지하면서 결과를 개선했고, 같은 작업을 더 적은 토큰으로 끝내 더 효율적이라고 주장합니다.[

32]

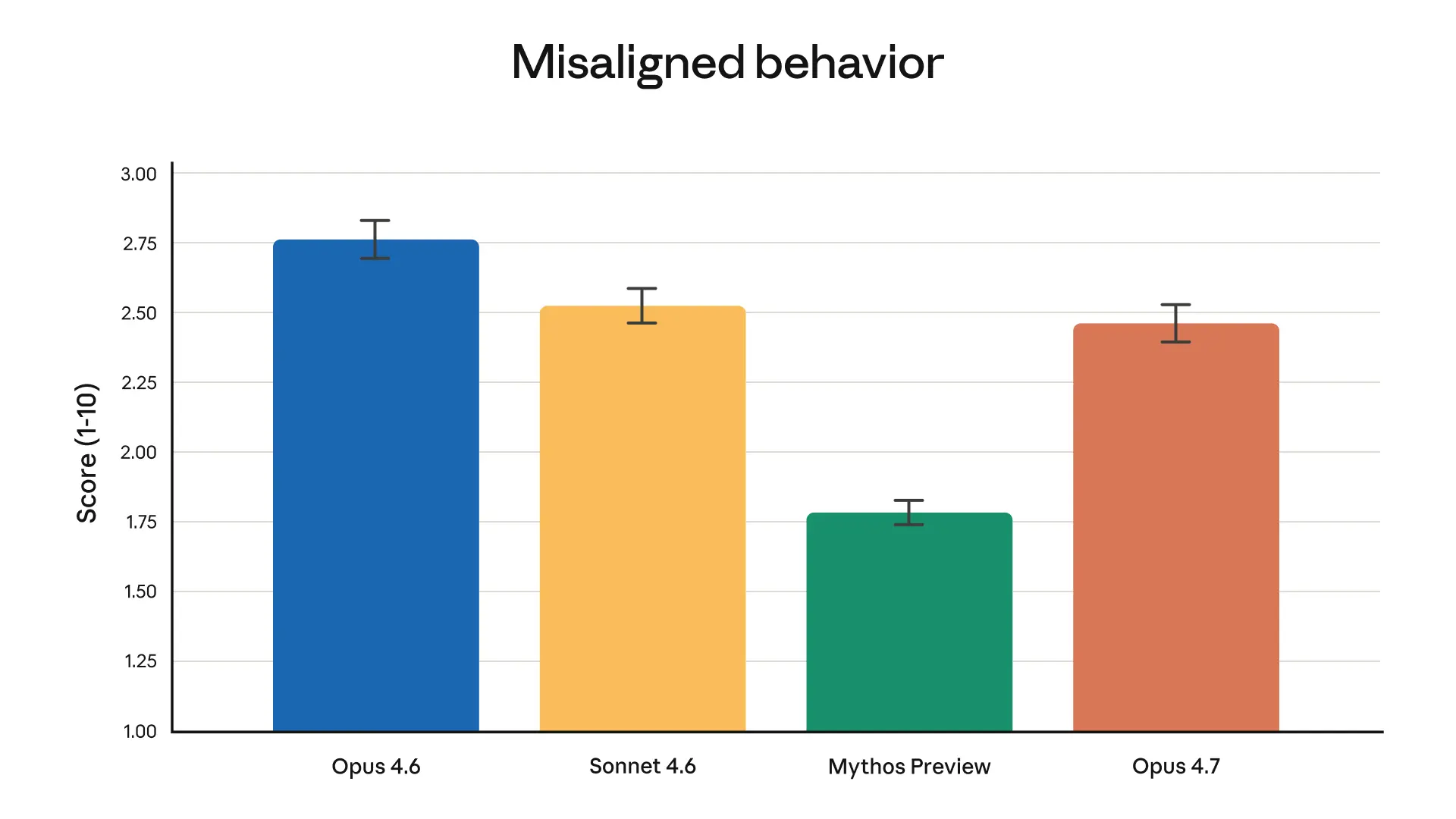

- Anthropic는 Claude Opus 4.7을 복잡한 추론과 에이전트형 코딩에서 자사의 가장 강력한 일반 공개 모델이라고 소개했습니다.[

2]

- 제3자 분석인 Appwrite 글은 UI-first 작업에서는 Claude Opus 4.7이 여전히 더 강하며, 레이아웃 계층과 시각적 구조가 더 좋다고 평가했습니다.[

6]

- Anthropic는 4월 20일 이전 시스템 프롬프트 이슈로 Opus 4.6과 4.7이 한 평가에서 각각 3% 하락했지만, 이후 되돌렸다고 밝혔습니다.[

4]

실무적으로 해석하면

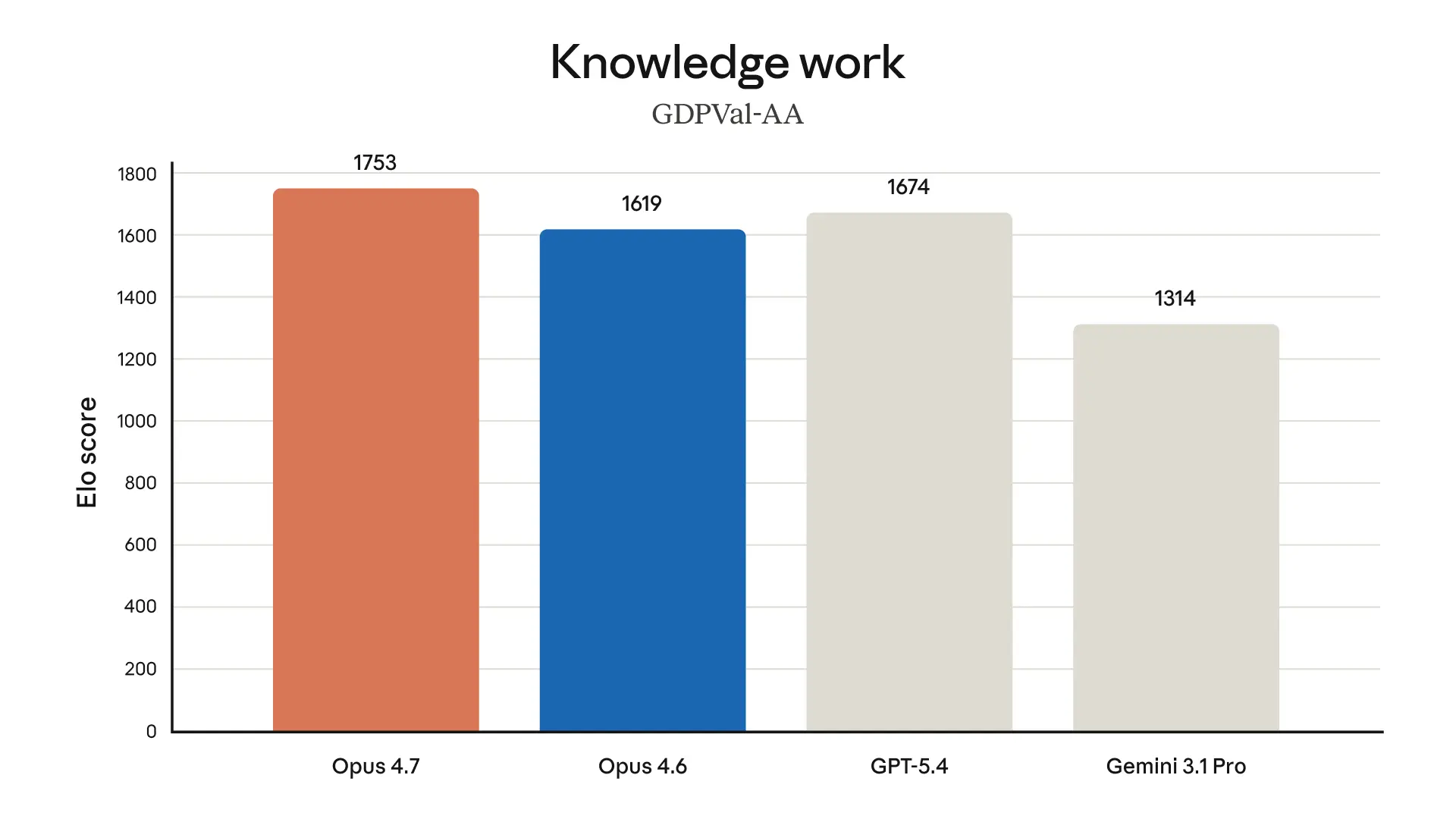

- 일반적인 공개 평가 기준만 따르면, 현재 제공된 자료에서는 GPT-5.5가 전체 점수 측면에서 우세해 보입니다.[

32]

- 프런트엔드 시안 생성이나 UI 표현력처럼 시각적 결과물의 구조가 중요한 작업이라면, Claude Opus 4.7이 더 나을 가능성이 있습니다.[

6]

- 브라우징 성능은 GPT-5.5가 약간 뒤처질 수 있다는 신호가 있습니다.[

32]

- 복잡한 추론과 에이전트형 코딩은 Anthropic가 Opus 4.7의 핵심 강점으로 직접 강조한 영역입니다.[

2]

한계와 불확실성

- 근거가 충분하지 않습니다.

- 제공된 자료에는 GPT-5.5와 Claude Opus 4.7의 동일 조건 head-to-head 공식 벤치마크 표, 테스트 방법, 표본 수, 통계적 유의성 정보가 없습니다.

- GPT-5.5 우세 신호는 현재 자료상 주로 ChatGPT 인스타그램 게시물에 의존합니다.[

32]

- Claude 우세 신호는 현재 자료상 주로 제3자 블로그 평가에 의존합니다.[

6]

원하시면 다음 단계로는 코딩, 글쓰기, 브라우징, UI 생성비용 효율


Sources

- [1] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwrite (appwrite.io)

If you want something more opinionated (a proper storefront with counter rush, seasonal cues, a bento shop layout) you still have to prompt for it explicitly, and even then the fallback is a card grid. For UI-first work, Claude Opus 4.7 is still the stronger model. It produces layouts with clearer hierarchy, tighter typography, and fewer reflexive card grids out of the box. This matters for anyone using Codex or ChatGPT to ship a UI from scratch. A model that is 82.7% on Terminal-Bench 2.0 but still generates the same card layout for a bakery, a SaaS dashboard, and a news site will save you t…

- [2] GPT-5.5 vs Claude Opus 4.7: Benchmarks & Pricing - Digital Applied (digitalapplied.com)

Two frontier flagships shipped seven days apart in April 2026. Anthropic released Claude Opus 4.7 on April 16. OpenAI released GPT-5.5 on April 23. Both arrive with 1M-token context windows, both lean on thinking-style reasoning, and both are explicitly positioned as the labs' best models for agentic coding — the highest-stakes commercial AI workload of the year. This guide is a head-to-head, benchmark-by-benchmark comparison: where each model wins, where each model loses, and how to route workloads between them in a production stack. [...] GPT-5.5 (OpenAI) Released April 23, 2026. Default fr…

- [3] Introducing Claude Opus 4.7 - Anthropic (anthropic.com)

Based on our internal research-agent benchmark, Claude Opus 4.7 has the strongest efficiency baseline we’ve seen for multi-step work. It tied for the top overall score across our six modules at 0.715 and delivered the most consistent long-context performance of any model we tested. On General Finance—our largest module—it improved meaningfully on Opus 4.6, scoring 0.813 versus 0.767, while also showing the best disclosure and data discipline in the group. And on deductive logic, an area where Opus 4.6 struggled, Opus 4.7 is solid. Michal Mucha, Lead AI Engineer, Applied…

- [4] OpenAI Releases GPT-5.5: Faster, Smarter—And Pricier - Yahoo Tech (tech.yahoo.com)

It’s also a pretty good coder, as expected. On Expert-SWE, an internal benchmark for long-horizon coding tasks with a median estimated human completion time of 20 hours, GPT-5.5 outperforms GPT-5.4. On SWE-Bench Pro, which grades real-world GitHub issue resolution, it reaches 58.6%. Claude Opus 4.7 scores higher at 64.3%, but OpenAI claims it may be because “Anthropic reported signs of memorization on a subset of problems” This launch lands in a market that is moving rapidly since the boom of agentic AI. GPT-5.4 arrived just two days after GPT-5.3, while Xiaomi we…

- [5] OpenAI says its new GPT-5.5 model is more efficient and better at coding (theverge.com)

The new model is the latest step in an increasingly heated battle between OpenAI and Anthropic as the companies race to potentially go public later this year. Anthropic recently released Claude Opus 4.7 and also announced Mythos Preview, a non-public model it says is uniquely advanced in cybersecurity. OpenAI quickly followed with GPT-5.4-Cyber, its own model trained to flag cybersecurity vulnerabilities. And both companies are competing to corner the market on AI coding and enterprise tools, with OpenAI recently slashing so-called “side quests” in favor of chasing bigger revenue drivers. [..…

- [6] OpenAI's GPT-5.5 is here, and it's no potato - VentureBeat (venturebeat.com)

The market for leading U.S.-made frontier models has become an increasingly tight race between OpenAI, Anthropic, and Google. Literally a week ago to the date, OpenAI rival Anthropic released Opus 4.7, its most powerful generally available model, to the public, taking over the leaderboard in terms of the number of third-party benchmark tests in which it has the lead. Yet today, GPT-5.5 has surpassed it and even Anthropic's heavily restricted, more powerful model Claude Mythos Preview, albeit only on one benchmark, Terminal-Bench 2.0, which tests "a model's ability to navigate and complete tas…

- [7] GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding ... (lushbinary.com)

1. Release Context & What Changed. The timing tells the story. Anthropic released Claude Opus 4.7 on April 16, 2026 — a focused upgrade that pushed SWE-bench Pro from 53.4% (Opus 4.6) to 64.3%, added high-resolution vision up to 3.75 megapixels, and introduced the `xhigh` effort level. All at the same $5/$25 per million token pricing as its predecessor. Exactly one week later, on April 23, OpenAI launched GPT-5.5 — codenamed "Spud." Unlike the incremental GPT-5.1 through 5.4 releases, GPT-5.5 is the first fully retrained base model since GPT-4.5. It's natively omnimodal (text, images, audio,…

- [8] How OpenAI's recently released GPT-5.5 stacks up with Anthropic's ... (rdworldonline.com)

The overlapping benchmarks stack up like this: | Benchmark | Mythos (gated) | GPT-5.5 | GPT-5.5 Pro | Opus 4.7† | Notes | --- --- --- | | SWE-bench Pro | 77.8% | 58.6% | — | 64.3% | Memorization concern¹ | | Terminal-Bench 2.0 | 82% / 92.1%² | 82.7% | — | 69.4% | Different harnesses² | | GPQA Diamond | 94.5% | 93.6% | — | 94.2% | At saturation | | HLE, no tools | 56.8% | 41.4% | 43.1% | 46.9% | Largest clean Mythos lead | | HLE, with tools | 64.7% | 52.2% | 57.2% | 54.7% | Different tool stacks | | BrowseComp | 86.9% | 84.4% | 90.1% | 79.3% | Contamination flagged³ | | CyberGym | 83% | 81.8%…

- [9] ChatGPT on Instagram: "GPT-5.5 just raised the bar @openai ... (instagram.com)

The model matches previous speed while improving results across most evaluations. It also uses fewer tokens to complete the same tasks, which makes it more efficient. Benchmarks shown in the deck place it ahead of Claude Opus 4.7 and Gemini 3.1 Pro in most categories, with only a slight gap in browsing tasks. This signals a clear shift toward faster, more capable, and more efficient AI systems. Are these improvements enough to change how you work daily? Source: @openai Image 6: power.ai's profile picture power.ai20m Improving results while using fewer tokens is a big deal for cost and scale L…

- [10] GPT‑5.5 vs Opus 4.7: The Only Numbers That Actually Matter (chetanpujari.substack.com)

UX / Design GPT‑5.5 (Codeex) + Clean, on‑brand experience. + Dynamic background, nice drop shadows, modern layout. + Feels very “OpenAI official”. Opus 4.7 (Claude Code) + Different, more Anthropic‑y vibe. + Scrolling banner, animated token visual, clever explanation of attention weights and million‑token memory. + A couple of UX weirdnesses: clicking some links just sends you back to the top, some font sizing/spacing feels off. Both are honestly impressive for a single prompt. From a pure branding perspective, you could ship either with minimal edits. ## Speed & Cost This is where GPT‑5.5…

- [11] I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just ... (linkedin.com)

in Codex, it's in chat TBT. I'm sure by the time you're watching this video, the API will probably be out. But I saw the announcement and I just jumped on it and wanted to play around. So a quick quote from the president of Opening Eye. It's faster, sharper, and it uses fewer tokens compared to something like GT 5.4. Now let's see where it pulls ahead a little bit. So the terminal bench 2.0, it scored a 82.7, whereas GT 5.4 scored a 75. 21 and Opus 4.7 scored a 69.4 so it is beating Opus here on the terminal bench and it's also beating the previous model. They also ran an expert sweep bench i…

- [12] Model Drop: GPT-5.5 (handyai.substack.com)

Headline benchmarks: Terminal-Bench 2.0 at 82.7% (Opus 4.7: 69.4%, Gemini 3.1 Pro: 68.5%). SWE-Bench Pro at 58.6% (Opus 4.7 still leads at 64.3%). OpenAI’s internal Expert-SWE eval, where tasks have a 20-hour median human completion time, at 73.1% (up from GPT-5.4’s 68.5%). GDPval wins-or-ties at 84.9% (Opus 4.7: 80.3%, Gemini 3.1 Pro: 67.3%). OSWorld-Verified at 78.7% (narrowly edges Opus 4.7’s 78.0%). FrontierMath Tier 4 at 35.4% (Opus 4.7: 22.9%, Gemini 3.1 Pro: 16.7%). CyberGym at 81.8% (Opus 4.7: 73.1%, Anthropic’s Claude Mythos: 83.1%). Tau2-Bench Telecom at 98.0% without prompt tuning.…

- [13] API Pricing - OpenAI (openai.com)

OpenAI API Pricing | OpenAI Skip to main content Log inTry ChatGPT(opens in a new window) Research Products Business Developers Company Foundation(opens in a new window) OpenAI API Pricing | OpenAI # API Pricing Contact sales ## Flagship models Our frontier models are designed to spend more time thinking before producing a response, making them ideal for complex, multi-step problems. Choose your processing mode Standard Batch -50%Data residency +10% ## GPT-5.5 (coming soon) A new class of intelligence for coding and professional work. ### Price Input: $5.00 / 1M tokens Cached input: $0.50 /…

- [14] GPT-5.5 is here! Available in Codex and ChatGPT today (community.openai.com)

GPT-5.5 is here! Available in Codex and ChatGPT today - Announcements - OpenAI Developer Community Skip to last replySkip to top Skip to main content Image 1: OpenAI Developer Community Docs API Support Sign Up Log In Topics More Resources Documentation API reference Help center Categories Announcements API Prompting Documentation Plugins / Actions builders All categories Tags chatgpt gpt-4 lost-user api assistants-api All tags Light mode Welcome to the OpenAI Developer Community, a forum for developers to meet and chat with other developers while building with OpenAI’s APIs and devel…

- [15] GPT-5.5 is here! Available in Codex and ChatGPT today - #7 by _j (community.openai.com)

GPT-5.5 is here! Available in Codex and ChatGPT today A straight-up price-doubling on top of a price-doubling between gpt-5.1 to gpt-5.4, on top of a price doubling to use faster yet cheaper to operate inference, reserved for service_tier:priority. For what they directly say is the same “latency” aka compute time. And with pricing tied to API, that’s ChatGPT-purchased Codex credits going half as far, on a platform where they give 0-day model shutoffs. (“Remember, we don’t have enough thinking time for both you and the US Department of War”) ### Related topics [...] ### Related topics | Top…

- [16] GPT-5.5 System Card (openai.com)

GPT-5.5 System Card | OpenAI Skip to main content Log inTry ChatGPT(opens in a new window) Research Products Business Developers Company Foundation(opens in a new window) GPT-5.5 System Card | OpenAI April 23, 2026 SafetyPublication # GPT‑5.5 System Card Read the System Card(opens in a new window) Share ## 1. Introduction GPT‑5.5 is a new model designed for complex, real-world work, including writing code, researching online, analyzing information, creating documents and spreadsheets, and moving across tools to get things done. Relative to earlier models, GPT‑5.5 understands the task earlie…

- [17] Introducing GPT-5 - OpenAI (openai.com)

Keep reading View all Image 1: Hero Art Card SEO 1x1 Introducing GPT-5.5 Product Apr 23, 2026 Image 2: Making ChatGPT free for clinicians Making ChatGPT better for clinicians Product Apr 22, 2026 Image 3: OAI Blog Agents Hero 1x1 Introducing workspace agents in ChatGPT Product Apr 22, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Business Enterprise Education Pricing(opens in…

- [18] Introducing gpt-realtime and Realtime API updates for production ... (openai.com)

Livestream replay 2025 ## Author OpenAI ## Keep reading View all Image 2: Hero Art Card SEO 1x1 Introducing GPT-5.5 Product Apr 23, 2026 Image 3: Making ChatGPT free for clinicians Making ChatGPT better for clinicians Product Apr 22, 2026 Image 4: OAI Blog Agents Hero 1x1 Introducing workspace agents in ChatGPT Product Apr 22, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Bus…

- [19] Making ChatGPT better for clinicians - OpenAI (openai.com)

Keep reading View all Image 2: Hero Art Card SEO 1x1 Introducing GPT-5.5 Product Apr 23, 2026 Image 3: OAI Blog Agents Hero 1x1 Introducing workspace agents in ChatGPT Product Apr 22, 2026 Image 4: Images 2.0 blog art card Introducing ChatGPT Images 2.0 Product Apr 21, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Business Enterprise Education Pricing(opens in a new window) D…

- [20] Measuring the performance of our models on real-world tasks | OpenAI (openai.com)

Image 4: OAI GPT-Rosaling Art Card 1x1 Introducing GPT-Rosalind for life sciences research Research Apr 16, 2026 Our Research Research Index Research Overview Research Residency Economic Research Latest Advancements GPT-5.5 GPT-5.4 GPT-5.3 Instant GPT-5.3-Codex Safety Safety Approach Security & Privacy Trust & Transparency ChatGPT Explore ChatGPT(opens in a new window) Business Enterprise Education Pricing(opens in a new window) Download(opens in a new window) Sora Sora Overview Features Pricing Sora log in(opens in a new window) API Platform Platform Overview Pricing API log in(opens in a ne…

- [21] GPT-5.5 System Card - OpenAI Deployment Safety Hub (deploymentsafety.openai.com)

| evaluation | GPT-5 | GPT-5.1 | GPT-5.2 | GPT-5.4 | GPT-5.5 | | HealthBench length-adjusted | 57.7 (63.1, 2904) | 50.9 (64.2, 4222) | 56.8 (60.7, 2645) | 54.0 (55.7, 2275) | 56.5 (58.4, 2313) | | HealthBench Hard length-adjusted | 34.7 (41.6, 2880) | 25.4 (41.4, 4049) | 34.3 (38.9, 2585) | 29.1 (30.3, 2161) | 31.5 (33.8, 2289) | | HealthBench Consensus length-adjusted | 95.6 (96.0, 2880) | 95.0 (95.8, 4171) | 94.4 (94.7, 2615) | 96.3 (96.4, 2238) | 95.6 (95.7, 2259) | | HealthBench Professional length-adjusted | 46.2 (51.0, 3616) | 39.6 (48.0, 4863) | 45.9 (50.0, 3400) | 48.1 (51.9, 3308) |…

- [22] GPT-5.5 System Card - OpenAI Deployment Safety Hub (deploymentsafety.openai.com)

| evaluation | GPT-5 | GPT-5.1 | GPT-5.2 | GPT-5.4 | GPT-5.5 | | HealthBench length-adjusted | 57.7 (63.1, 2904) | 50.9 (64.2, 4222) | 56.8 (60.7, 2645) | 54.0 (55.7, 2275) | 56.5 (58.4, 2313) | | HealthBench Hard length-adjusted | 34.7 (41.6, 2880) | 25.4 (41.4, 4049) | 34.3 (38.9, 2585) | 29.1 (30.3, 2161) | 31.5 (33.8, 2289) | | HealthBench Consensus length-adjusted | 95.6 (96.0, 2880) | 95.0 (95.8, 4171) | 94.4 (94.7, 2615) | 96.3 (96.4, 2238) | 95.6 (95.7, 2259) | | HealthBench Professional length-adjusted | 46.2 (51.0, 3616) | 39.6 (48.0, 4863) | 45.9 (50.0, 3400) | 48.1 (51.9, 3308) |…

- [23] Introducing GPT-5.5 | OpenAI (openai.com)

For API developers, gpt-5.5 will soon be available in the Responses and Chat Completions APIs at $5 per 1M input tokens and $30 per 1M output tokens, with a 1M context window. Batch and Flex pricing are available at half the standard API rate, while Priority processing is available at 2.5x the standard rate. We will also release gpt-5.5-pro in the API for even higher accuracy, priced at $30 per 1M input tokens and $180 per 1M output tokens. See the pricing page for full details. While GPT‑5.5 is priced higher than GPT‑5.4, it is both more intelligent and much more token efficient. In Codex,…

- [24] GPT-5.5 System Card - Deployment Safety Hub - OpenAI (deploymentsafety.openai.com)

Table 1. Production Benchmarks with Challenging Prompts (higher is better) | Category | gpt-5.1-thinking | gpt-5.2-thinking | gpt-5.4-thinking | gpt-5.5 | --- --- | Violent Illicit behavior | 0.955 | 0.975 | 0.971 | 0.979 | | Nonviolent illicit behavior | 0.990 | 0.993 | 1.000 | 0.993 | | harassment | 0.706 | 0.810 | 0.790 | 0.822 | | extremism | 1.000 | 1.000 | 1.000 | 0.925 | | hate | 0.808 | 0.927 | 0.943 | 0.868 | | self-harm (standard) | 0.926 | 0.961 | 0.987 | 0.959 | | violence | 0.800 | 0.877 | 0.831 | 0.846 | | sexual | 0.933 | 0.940 | 0.933 | 0.925 | | sexual/minors | 0.916 | 0.948…

- [25] Pricing - Claude API Docs (docs.anthropic.com)

| Model | Batch input | Batch output | --- | Claude Opus 4.7 | $2.50 / MTok | $12.50 / MTok | | Claude Opus 4.6 | $2.50 / MTok | $12.50 / MTok | | Claude Opus 4.5 | $2.50 / MTok | $12.50 / MTok | | Claude Opus 4.1 | $7.50 / MTok | $37.50 / MTok | | Claude Opus 4 | $7.50 / MTok | $37.50 / MTok | | Claude Sonnet 4.6 | $1.50 / MTok | $7.50 / MTok | | Claude Sonnet 4.5 | $1.50 / MTok | $7.50 / MTok | | Claude Sonnet 4 | $1.50 / MTok | $7.50 / MTok | | Claude Sonnet 3.7 (deprecated) | $1.50 / MTok | $7.50 / MTok | | Claude Haiku 4.5 | $0.50 / MTok | $2.50 / MTok | | Claude Haiku 3.5 | $0.40 / MTok…

- [26] Claude Platform - Claude API Docs (docs.anthropic.com)

April 16, 2026 We've launched Claude Opus 4.7, our most capable generally available model for complex reasoning and agentic coding, at the same $5 / $25 per MTok pricing as Opus 4.6. See What's new in Claude Opus 4.7 for capability improvements, new features, and the updated tokenizer. Opus 4.7 includes API breaking changes versus Opus 4.6; see Migrating to Claude Opus 4.7 before upgrading. Claude in Amazon Bedrock is now open to all Amazon Bedrock customers. Claude Opus 4.7 and Claude Haiku 4.5 are available self-serve from the Bedrock console through the Messages API endpoint at `/anthr…

- [27] An update on recent Claude Code quality reports - Anthropic (anthropic.com)

As part of this investigation, we ran more ablations (removing lines from the system prompt to understand the impact of each line) using a broader set of evaluations. One of these evaluations showed a 3% drop for both Opus 4.6 and 4.7. We immediately reverted the prompt as part of the April 20 release. ## Going forward We are going to do several things differently to avoid these issues: we’ll ensure that a larger share of internal staff use the exact public build of Claude Code (as opposed to the version we use to test new features); and we'll make improvements to our Code Review tool that we…

- [28] Claude Opus 4.7 - Anthropic (anthropic.com)

Pricing for Opus 4.7 starts at $5 per million input tokens and $25 per million output tokens, with up to 90% cost savings with prompt caching and 50% savings with batch processing. To learn more, check out our pricing page. To get started, use `claude-opus-4-7` via the Claude API. For workloads that need to run in the US, US-only inference is available at 1.1x pricing for input and output tokens. Learn more. ## Use cases Opus 4.7 is a premium model that works best for tasks no prior model could handle and where performance matters most. It’s built for professional software engineering, comple…

- [29] Introducing Claude Design by Anthropic Labs (anthropic.com)

For Enterprise organizations, Claude Design is off by default. Admins can enable it in Organization settings. Start designing at claude.ai/design. []( ## Related content ### Anthropic and NEC collaborate to build Japan’s largest AI engineering workforce Read more ### Introducing Claude Opus 4.7 Our latest Opus model brings stronger performance across coding, agents, vision, and multi-step tasks, with greater thoroughness and consistency on the work that matters most. Read more ### Anthropic’s Long-Term Benefit Trust appoints Vas Narasimhan to Board of Directors Read more []( ### Products [...…

- [30] Introducing Claude Opus 4.6 - Anthropic (anthropic.com)

Read more ### Introducing Claude Opus 4.7 Our latest Opus model brings stronger performance across coding, agents, vision, and multi-step tasks, with greater thoroughness and consistency on the work that matters most. Read more []( ### Products Claude Claude Code Claude Code Enterprise Claude Code Security Claude Cowork Claude for Chrome Claude for Slack Claude for Excel Claude for PowerPoint Claude for Word Skills Max plan Team plan Enterprise plan Download app Pricing Log in to Claude ### Models Mythos preview Opus Sonnet Haiku ### Solutions AI agents Code modernization Coding Customer supp…

- [31] Introducing Claude Opus 4.5 - Anthropic (anthropic.com)

Read more ### Introducing Claude Opus 4.7 Our latest Opus model brings stronger performance across coding, agents, vision, and multi-step tasks, with greater thoroughness and consistency on the work that matters most. Read more ### Anthropic’s Long-Term Benefit Trust appoints Vas Narasimhan to Board of Directors Read more []( ### Products Claude Claude Code Claude Code Enterprise Claude Code Security Claude Cowork Claude for Chrome Claude for Slack Claude for Excel Claude for PowerPoint Claude for Word Skills Max plan Team plan Enterprise plan Download app Pricing Log in to Claude ### Models…

- [32] Anthropic's Transparency Hub (anthropic.com)

| | | --- | | Model description | Claude Opus 4 and Claude Sonnet 4 are two new hybrid reasoning large language models from Anthropic. They have advanced capabilities in reasoning, visual analysis, computer use, and tool use. They are particularly adept at complex computer coding tasks, which they can productively perform autonomously for sustained periods of time. In general, the capabilities of Claude Opus 4 are stronger than those of Claude Sonnet 4. | | Benchmarked Capabilities | See our Claude 4 Announcement | | Acceptable Uses | See our Usage Policy | | Release date | May 2025 | | Acces…

- [33] Home \ Anthropic (anthropic.com)

Models Opus Sonnet Haiku Log in Claude.ai Claude Console EN This is some text inside of a div block. Log in to ClaudeLog in to Claude Log in to Claude Download appDownload app Download app # AI research and products that put safety at the frontier AIresearchandproductsthat put safety at the frontier AI will have a vast impact on the world. Anthropic is a public benefit corporation dedicated to securing its benefits and mitigating its risks. ## Project Glasswing Securing critical software for the AI era Continue reading Image 1 Read the storyRead the story ## Latest releases ### Claude Opus 4.…

- [34] Newsroom - Anthropic (anthropic.com)

Skip to main contentSkip to footer []( Research Economic Futures Commitments Learn News Try Claude # Newsroom Press inquirespress@anthropic.com Non-media inquiriessupport@anthropic.com Media assetsDownload press kit Image 1: Introducing Claude Opus 4.7 ## Introducing Claude Opus 4.7 Product Apr 16, 2026 Our latest Opus model brings stronger performance across coding, agents, vision, and multi-step tasks, with greater thoroughness and consistency on the work that matters most. [...] Apr 24, 2026 Announcements Anthropic and NEC collaborate to build Japan’s largest AI engineering workforce Apr 1…

- [35] Plans & Pricing | Claude by Anthropic (anthropic.com)

Trials #### Non-standard terms #### Customer success support at certain spend thresholds #### MSA ### Models and usage #### Opus #### Sonnet #### Haiku #### Extra usage #### Priority access at high traffic times #### User and organizational level spend controls #### Model training Latest models ### Opus 4.7 ### Sonnet 4.6 ### Haiku 4.5 For workloads that need to run in the US, US-only inference is available at 1.1x pricing for input and output tokens. Learn more. Prompt caching pricing reflects 5-minute TTL. Learn about extended prompt caching. Pricing for Claude Platform features Get mo…