I want to know which is better for coding: Claude Opus 4.7 or GPT-5.5.

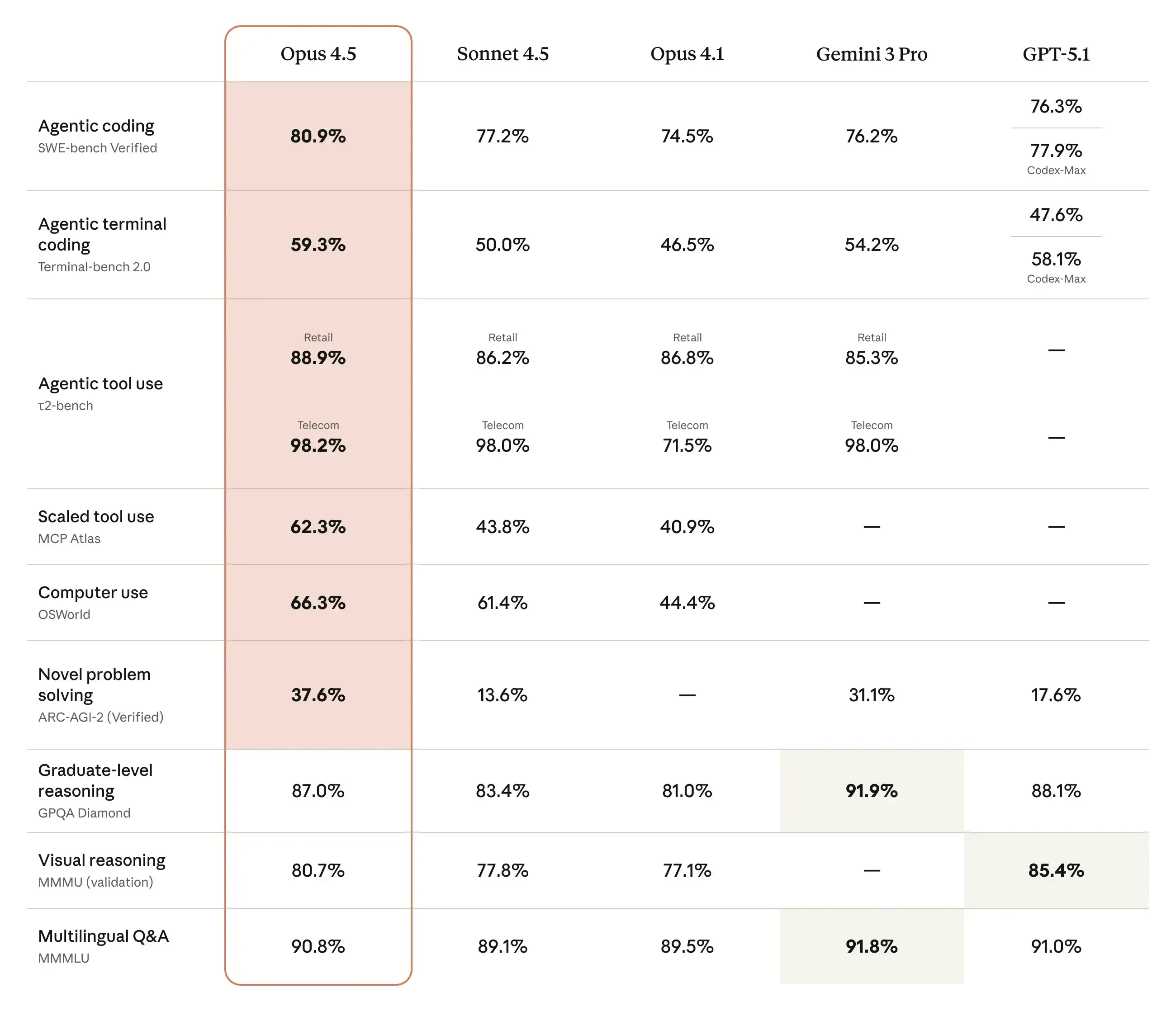

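For coding alone, the answer depends on what you are doing. For bug fixes, pull requests, and refactoring in a real repository, Claude Opus 4.7 has the edge; for terminal-heavy, agentic automation centered on tool execution, GPT-5.5 appears to have the edge [3]. Claude Opus 4.7 is the better choice for work that is closer to "software engineering," such as resolving real GitHub issues, understanding an existing codebase, and making PR-style fixes. On SWE-Bench Pro, Opus 4.7 is reported at 64.3% and GPT-5.5 at 58.6%, which puts Opus 4.7 ahead [3][4]. Complex code review, …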

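Research answer

For coding alone, the answer depends on what you are doing. For bug fixes, pull requests, and refactoring in a real repository, Claude Opus 4.7 has the edge; for terminal-heavy, agentic automation centered on tool execution, GPT-5.5 appears to have the edge [3].

When Claude Opus 4.7 is the better choice

- Work that is closer to "software engineering," such as resolving real GitHub issues, understanding an existing codebase, and making PR-style fixes. On SWE-Bench Pro, Opus 4.7 is reported at 64.3% and GPT-5.5 at 58.6% [3][4].

When GPT-5.5 is the better choice

- Terminal-heavy, agentic automation in which the model runs tools itself. Source [3] reports GPT-5.5 ahead on Terminal-Bench 2.0 (82.7% vs. 69.4%).

My recommendation

- If you are an individual developer who mostly does "writing code + debugging + explanation": start with Claude Opus 4.7.

- If the model executes commands directly, as with Cursor, Codex, or a terminal agent: start with GPT-5.5.

- For important work, the safest setup is to put both models on the same issue and use one for implementation and the other for code review.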
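The rule of thumb in these recommendations can be sketched as a small lookup over the reported scores. This is only an illustration under stated assumptions: the figures are copied from sources [3] and [6], while the task labels, the task-to-benchmark mapping, and the `pick_default_model` helper are hypothetical names made up for this sketch and do not come from any vendor tooling.

```python
# Reported scores (percent) as quoted in sources [3] and [6]; not re-measured here.
REPORTED_SCORES = {
    "SWE-Bench Pro": {"Claude Opus 4.7": 64.3, "GPT-5.5": 58.6},
    "Terminal-Bench 2.0": {"Claude Opus 4.7": 69.4, "GPT-5.5": 82.7},
}

# Hypothetical mapping (an assumption of this sketch) from the kind of work
# to the benchmark that most resembles it.
TASK_TO_BENCHMARK = {
    "repo_patch": "SWE-Bench Pro",           # bug fixes, PRs, refactoring in a real repo
    "terminal_agent": "Terminal-Bench 2.0",  # the model runs shell commands and tools itself
}


def pick_default_model(task_kind: str) -> tuple[str, float]:
    """Return the model that leads on the matching benchmark and its lead in points."""
    scores = REPORTED_SCORES[TASK_TO_BENCHMARK[task_kind]]
    # Sort the two models from highest to lowest reported score.
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (leader, top), (_, runner_up) = ranked
    # Round to one decimal to hide floating-point noise in the subtraction.
    return leader, round(top - runner_up, 1)


if __name__ == "__main__":
    for kind in TASK_TO_BENCHMARK:
        model, gap = pick_default_model(kind)
        print(f"{kind}: start with {model} (+{gap} percentage points)")
```

Each gap is a difference in percentage points on a single benchmark, so the result is best read as a starting default rather than a verdict. Running both models on the same issue, as suggested above, remains the more direct check.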

Caveats

Sources

- [1] Claude Opus 4.7 Benchmarks Explained - Vellum (vellum.ai)

Apr 16, 2026 · 16 min · By Nicolas Zeeb. Guides. Contents: Key observations of reported benchmarks; Coding capabilities; SWE-bench Verified; SWE-bench Pro; Terminal-Bench 2.0; Agentic capabilities; MCP-Atlas (Scaled tool use); Finance Agent v1.1; OSWorld-Verified (Computer use); BrowseComp (Agentic search); Reasoning capabilities; GPQA Diamond (Graduate-level science); Humanity's Last Exam; Multimodal and vision capabilities; CharXiv Reasoning (Visual reasoning); Multilingual Q&A (MMMLU); Safety and alignment; What these benchmarks really mean for your agents; When to use Opus 4.6 vs Opus 4.7; Use Opus 4.7 with your Ve…

- [2] Claude Opus 4.7 vs GPT-5.5 Comparison - LLM Stats (llm-stats.com)

They are both capable of processing various types of data, offering versatility in application. License: both models are licensed under proprietary, closed-source licenses, with usage restrictions defined by their respective organizations. Release timeline: Claude Opus 4.7 was released on 2026-04-16, while GPT-5.5 was released on 2026-04-23. Knowledge C…

- [3] GPT-5.5 vs Claude Opus 4.7: Pricing, Speed, Benchmarks - LLM Stats (llm-stats.com)

Which model is better for coding agents in 2026? Depends on the deployment shape. For unattended terminal and shell workflows, GPT-5.5 leads on Terminal-Bench 2.0 (82.7% vs 69.4%). For real-repo PR-style software engineering, Opus 4.7 leads on SWE-Bench Pro (64.3% vs 58.6%). I default to GPT-5.5 when the model is going to drive the loop end-to-end, and to Opus 4.7 when the output is a single careful patch a human is going to review. [...] Within seven days, I had two new frontier models to compare against the workloads I run for LLM Stats: Claude Opus 4.7 shipped on April 16, 2026, and GPT-5.5 o…

- [4] GPT-5.5 vs Claude Opus 4.7: Real-World Coding Performance ... (mindstudio.ai)

SWE-Bench and Coding Tasks: On SWE-Bench Verified, the standard benchmark for evaluating real GitHub issue resolution, both models score competitively at the top of the 2026 leaderboard. GPT-5.5 holds a slight edge on problems requiring precise tool use and file navigation. Opus 4.7 performs better on tasks requiring broad architectural reasoning across large codebases. Neither model dominates outright. The gap is narrow enough that benchmark scores alone shouldn't drive your decision. Where Opus 4.7 Pulls Ahead: multi-file reasoning across large repos (10k+ lines); tasks requiring sig…

- [5] OpenAI's GPT-5.5 masters agentic coding with 82.7% benchmark ... (interestingengineering.com)

GPT-5.5 crushes Claude Opus 4.7 in agentic coding with 82.7% terminal-bench score. GPT-5.5 introduces smarter task handling, reduced token usage, and broader adoption across enterprise workflows. By Aamir Khollam. OpenAI has introduced GPT-5.5, positi…

- [6] OpenAI's GPT-5.5 vs Claude Opus 4.7: Which is better? | Mashable (mashable.com)

SWE-Bench Pro: GPT-5.5 scored 58.6 percent; Opus 4.7 scored 64.3 percent. Terminal-Bench 2.0: GPT-5.5 scored 82.7 percent; Opus 4.7 scored 69.4 percent. Humanity's Last Exam: GPT-5.5 scored 40.6 percent; Opus 4.7 scored 31.2 percent. Humanity's Last Exam (with tools): GPT-5.5 scored 52.2 percent; Opus 4.7 scored 54.7 percent. BrowseComp: GPT-5.5 scored 84.4 percent; Opus 4.7 scored 79.3 percent. GPQA Diamond: GPT-5.5 scored 93.6 percent; Opus 4.7 scored 94.2 percent. ARC-AGI-1 (Verified): GPT-5.5 (High) scored 94.5 percent; Claude 4.7 (High) scored 92 percent. ARC-AGI-2 (Verified):…

- [7] GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding ... (lushbinary.com)

1. Release Context & What Changed. The timing tells the story. Anthropic released Claude Opus 4.7 on April 16, 2026, a focused upgrade that pushed SWE-bench Pro from 53.4% (Opus 4.6) to 64.3%, added high-resolution vision up to 3.75 megapixels, and introduced the xhigh effort level. All at the same $5/$25 per million token pricing as its predecessor. Exactly one week later, on April 23, OpenAI launched GPT-5.5, codenamed "Spud." Unlike the incremental GPT-5.1 through 5.4 releases, GPT-5.5 is the first fully retrained base model since GPT-4.5. It's natively omnimodal (text, images, audio,…

- [8] GPT 5.5 beats Claude Opus 4.7 : r/ArtificialInteligence - Reddit (reddit.com)

- [9] GPT 5.5 is out. Here are the benchmarks. - Facebook (facebook.com)

Comment by Robert Eaton: "Opus 4.7 has been terrible. Getting everything wrong, making same mistake over and over. I went back to 4.6 and everything was fine." Comment by Patrick Healy: "Codex $100 a month package while building then go to $20 a month package & you run a local model hybrid is the best set up for a business. Personal use just go full local." [...] OpenClaw Co…

- [10] GPT-5.5 Is Here — I Tested It (And It Beats Claude Opus 4.7) (medium.com)

By Joe Njenga, AI Software Engineer · Apr 2026 · 9 min read. The GPT 5.5 release has come seven weeks after GPT 5.4. OpenAI is shipping at a crazy speed, possibly trying to keep up with Anthropic, as we have seen in recent days.…

- [11] I Tested GPT 5.5 vs Opus 4.7: What You Need to Know OpenAI just ... (linkedin.com)

OpenAI just dropped GPT 5.5. The benchmarks look strong against Opus 4.7. But benchmarks only tell part of the story. So I ran 4 head-to-head experiments. Codex on GPT 5.5. Claude Code on Opus 4.7. Same prompts. No iteration. No back and forth. Just one shot each. (1) Personal brand site; (2) Solar system simulation; (3) 3D space shooter; (4) Living ecosystem simulation. For every experiment I tracked runtime, input tokens, output tokens, and total cost. The headline numbers across all four: GPT 5.5 total runtime: 20 min 49 sec; Opus 4.7 tot…

- [12] GPT 5.5 Vs Claude Opus 4.7 Proves Benchmarks Need Context (reddit.com)

Frequently Asked Questions About GPT 5.5 Vs Claude Opus 4.7 1. Which is better, GPT 5.5 or Claude Opus 4.7? GPT 5.5 is better for long workflows, large context, computer use, and agentic execution, while Claude Opus 4.7 is better for review, polish, vision, and careful coding. 2. Is GPT 5.5 better than Claude Opus 4.7 for automation? GPT 5.5 looks stronger for automation when the task needs multi-step execution, tool use, and long workflow handling. 3. Is Claude Opus 4.7 better for coding? Claude Opus 4.7 can be better for cleaner code, refactoring, instruction following, and review, while…