What is the benchmark for Claude Mythos?

Key takeaways

- **Most-cited benchmark:** SWE-bench, with **93.9%** for Claude Mythos Preview [2].

Research answer

Claude Mythos Preview is reported mainly with a 93.9% score on SWE-bench, a benchmark of real-world software engineering and code-fixing tasks [2]. Cybersecurity figures are also reported, but there the most direct source is the Anthropic Red Team, and the context is an evaluation of offensive/defensive capabilities, not a general AI benchmark [1].

- Most-cited benchmark: SWE-bench, with 93.9% for Claude Mythos Preview [2].

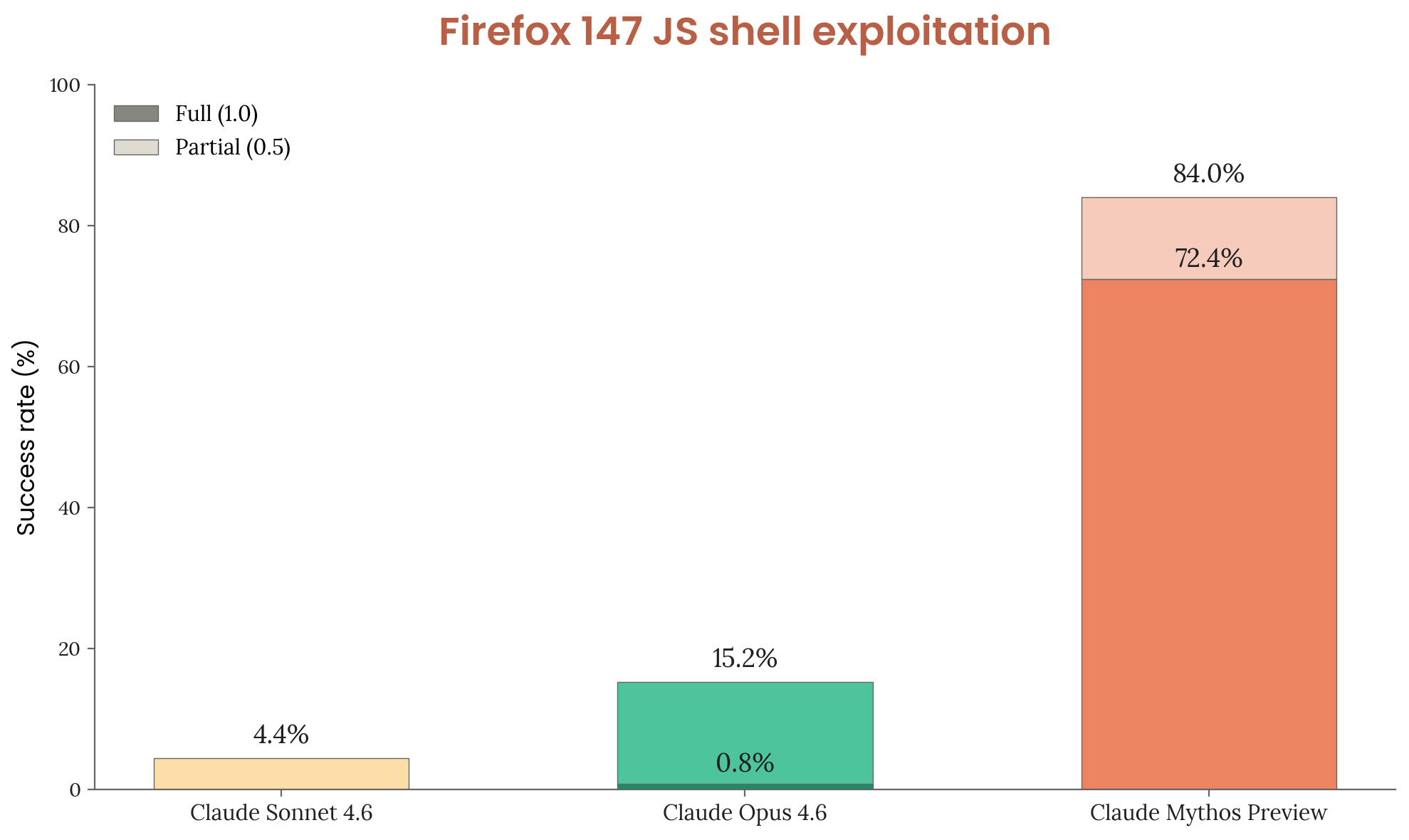

- Cybersecurity: secondary reports mention an advantage over Claude Opus 4.6, with 83.1% vs. 66.6% on cybersecurity capability benchmarks [4].

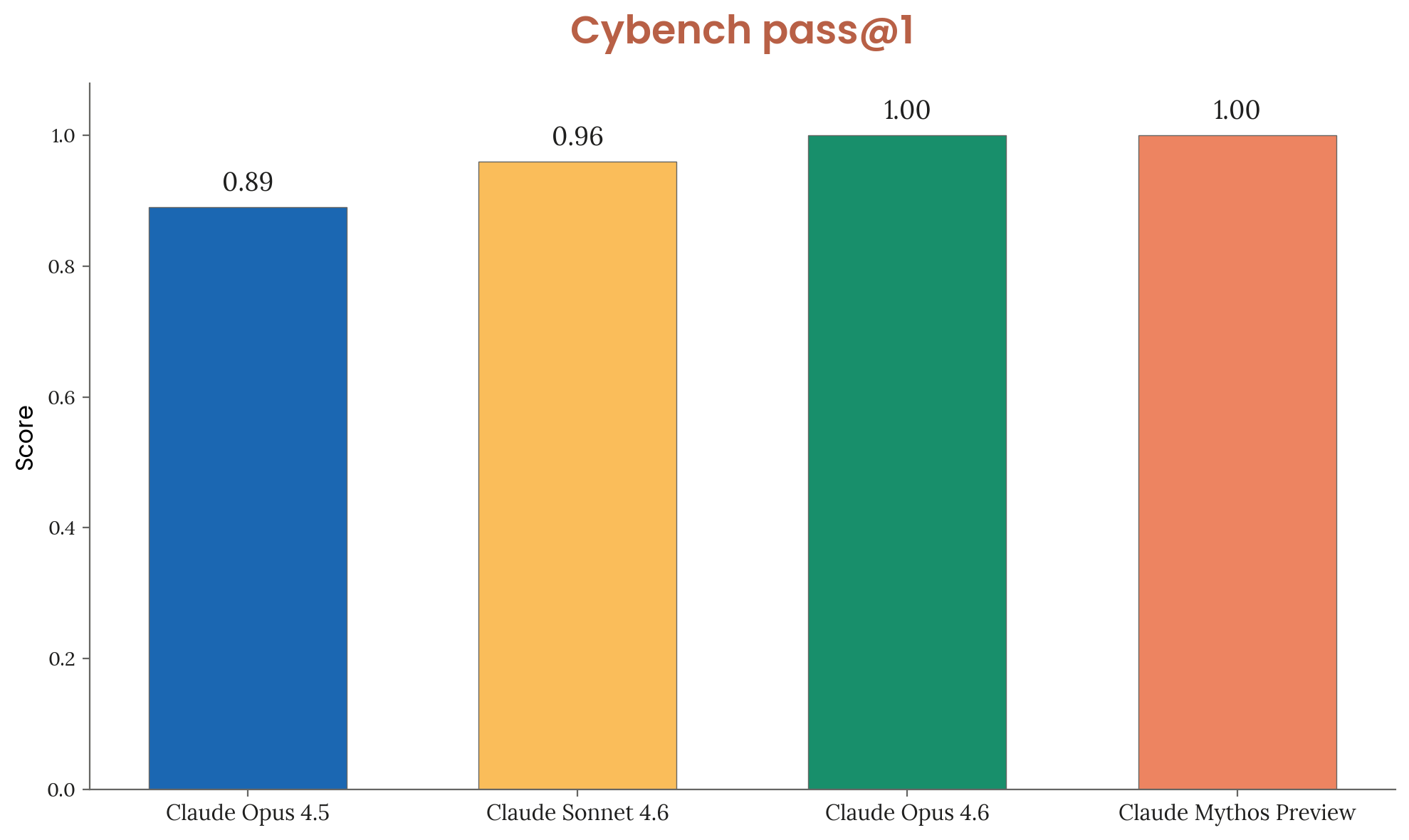

- Cybench: some secondary analyses claim 100% on Cybench, a benchmark of cybersecurity challenges [7].

- Caveat: I do not see a standard public Anthropic page with a complete table of general benchmarks for “Claude Mythos”; the visible official source focuses on cybersecurity capabilities [1]. For that reason, I would take the 93.9% on SWE-bench as the most-cited figure, but not as a complete evaluation of the model.

Sources

- [1] Claude Mythos Benchmark Results: SWE-Bench 93.9% and What It Means for AI Agents | MindStudio (mindstudio.ai)

This article breaks down what SWE-bench actually tests, what a 93.9% result means in practice, how the 59% multimodal score fits in, and what developers and AI agent builders should take away from these results. It’s worth noting that high SWE-bench scores are typically achieved when models operate as agents, meaning they can read files, execute code, inspect test results, and iterate. If you’re building AI agents that need to p…

- [2] Claude Mythos Preview: Anthropic's Most Powerful AI (93.9% SWE ... (nxcode.io)

- [3] Claude Mythos vs Claude Opus 4.6: How Big Is the Cybersecurity Capability Gap? | MindStudio (mindstudio.ai)

A 16.5-Point Gap That Security Teams Should Pay Attention To. When Anthropic released Claude Mythos alongside performance data, one number stood out immediately: an 83.1% score on cybersecurity capability benchmarks, compared to Claude Opus 4.6’s 66.6%. MindStudio lets you build agents that route across 200+ models based on the task at hand — you can send code vulnerability analysis to Mythos and compliance documentation tasks to a more cost-effective model, all within the same workflow. Claude Mythos is best suited for code security review, vulnerability research assistance, multi-step th…

- [4] Claude Mythos: Benchmark-Dominating AI with Real Risks (labellerr.com)

Claude Mythos Preview is Anthropic’s most powerful AI yet, outperforming benchmarks and uncovering critical vulnerabilities. Claude Mythos Preview is Anthropic's most capable model ever built. Claude Mythos Preview is described in the system card as Anthropic's most capable frontier model to date, showing a striking leap in scores on many evaluation benchmarks compared to Claude Opus 4.6. Claude Mythos Preview is the best-aligned model Anthropic has released to date by a significant margin, and yet it likely poses the greatest alignment-related risk of any model they have built. Project Glass…

- [5] Everything You Need to Know About Claude Mythos (vellum.ai)

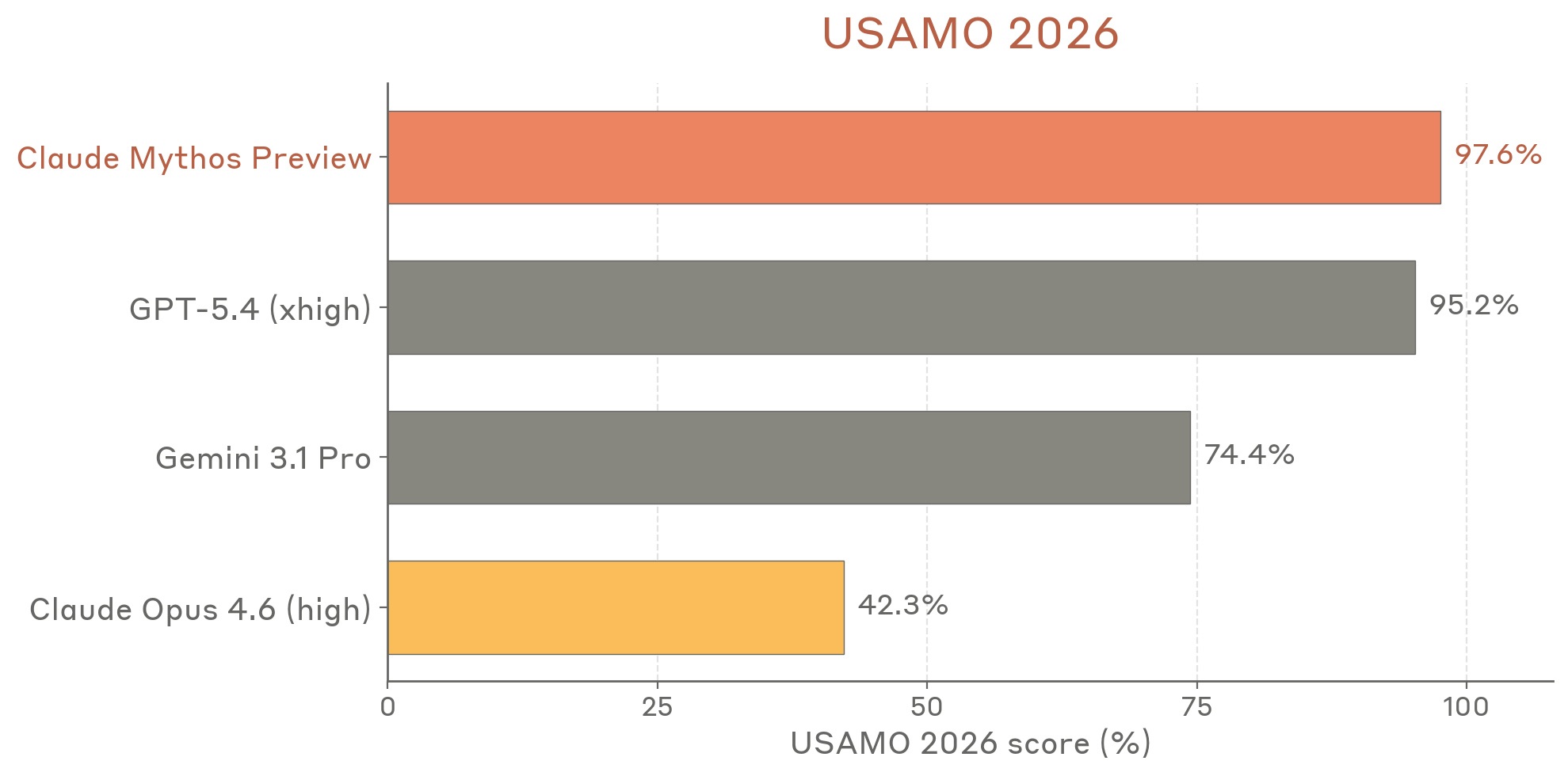

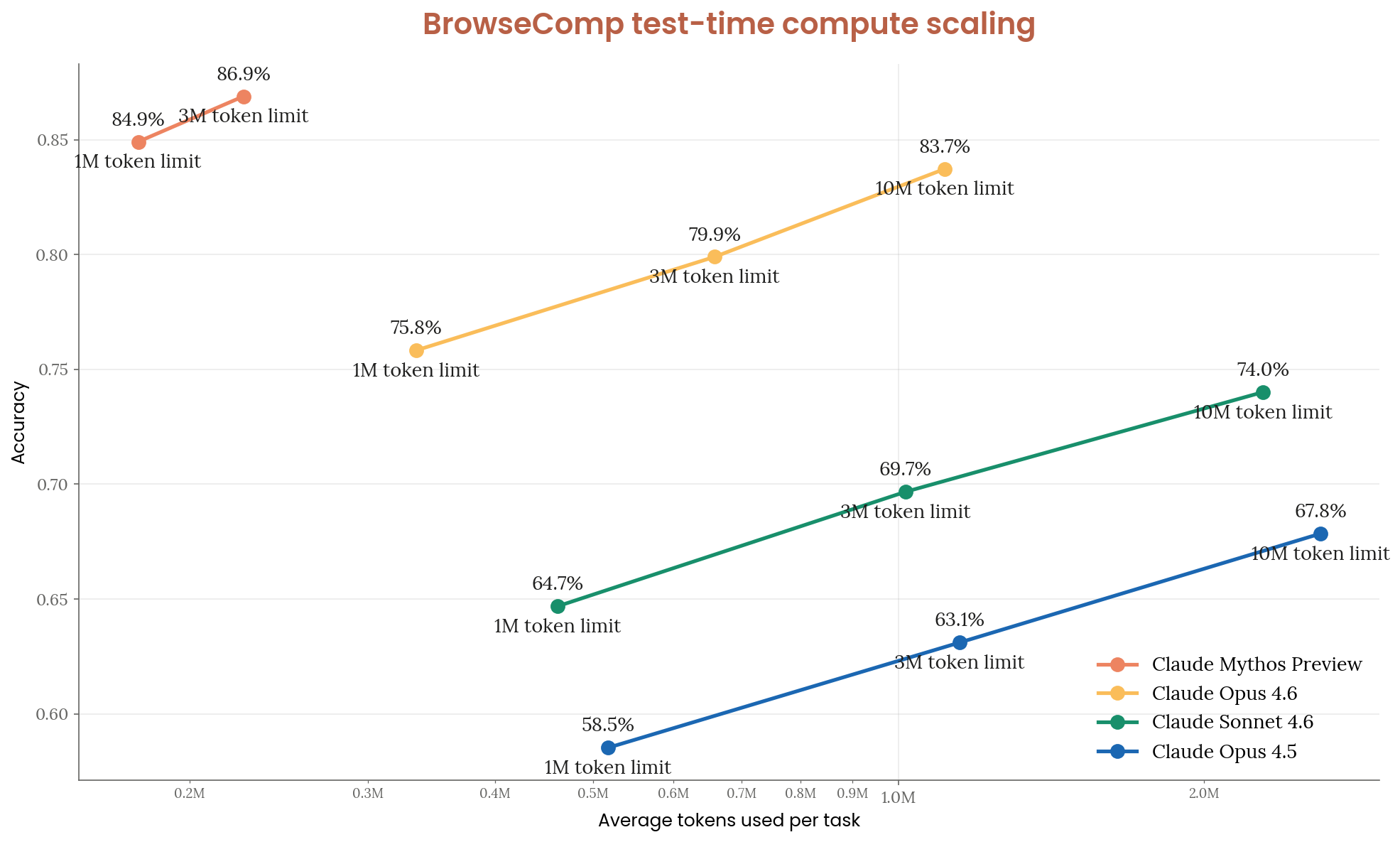

USAMO benchmark results showing Claude Mythos performance. BrowseComp benchmark showing Mythos at the top. Cybench results showing Mythos at 100%. Mythos achieved a 100% success rate on Cybench, a benchmark that tests the ability to complete cybersecurity challenges. No other model has done this. On CyberGym, another cybersecurity evaluation suite, Mythos again leads significantly. The gap between Mythos and previous frontier models is substantial. Perhaps the most striking finding: Mythos was able to **discover real, previously unknown (…

- [6] What Is Claude Mythos—And Why Anthropic Won't Let Anyone Use It (forbes.com)

Claude Mythos Preview is, by every published benchmark, the most capable AI model ever built. Instead, the company launched Project Glasswing, a cybersecurity defense initiative that gives the model to Amazon, Apple, Google, Microsoft, Nvidia, CrowdStrike, JPMorgan Chase, Cisco, Broadcom, Palo Alto Networks, and the Linux Foundation. Over a few weeks of testing, Mythos identified thousands of zero-day vulnerabilities, many of them critical. From their Glasswing announcement: “AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding…

- [7] Claude Mythos leads 17 of 18 benchmarks Anthropic measured. Muse Spark put Meta back in the frontier club, and OpenAI's 'Spud' model is reportedly near launch (rdworldonline.com)

Claude Mythos leads 17 of 18 benchmarks Anthropic measured. Anthropic is not planning on publicly releasing it, but its Mythos model leads in 17 of 18 benchmarks, according to data in Anthropic’s model’s system card. Anthropic says Mythos is its “most capable frontier model to date, and shows a striking leap in scores on many evaluation benchmarks compared to our previous frontier model, Claude Opus 4.6.” The company goes onto say that Mythos offers “a step-change in vulnerability discovery and exploitation” that, operating “with minimal human steering,” autonomously finds zero-day vulnerab…

- [8] Claude by Anthropic - Models in Amazon Bedrock – AWS (aws.amazon.com)

Claude Mythos Preview identifies sophisticated vulnerabilities across large codebases with less manual guidance, enabling security teams to accelerate defensive

- [9] Claude Mythos Preview Benchmarks 2026: Scores, Rankings & Performance | BenchLM.ai (benchlm.ai)

Claude Mythos Preview by Anthropic scores 99/100 on BenchLM's provisional leaderboard (#1 of 115) with 14 published benchmark scores

- [10] r/ClaudeCode - Anthropic just dropped benchmark scores for their ... (reddit.com)

Anthropic just dropped benchmark scores for their unreleased model.

- [11] Claude Mythos Clone Shocks Anthropic and OpenAI (youtube.com)

- [12] Claude Mythos Explained in 4 Minutes - YouTube (youtube.com)

Anthropic just dropped Claude Mythos, and the benchmarks are insane. Scoring 77.8% on SWE-Bench Pro vs Opus 4.6's 53.4%, this model is

- [13] Assessing Claude Mythos Preview's cybersecurity capabilities (red.anthropic.com)

Interested readers can read the later section on Turning N-Day Vulnerabilities into Exploits for two examples of sophisticated and clever exploits that Mythos Preview was able to write fully autonomously targeting already-patched bugs that are equally complex to the ones we’ve seen it write on zero-day vulnerabilities. Mythos Preview fully autonomously identified and then exploited a 17-year-old remote code execution vulnerability in FreeBSD that allows anyone to gain root on a machine running [NFS](https://en.wikipedia.org/wiki/…

- [14] Claude Opus 4.7 (anthropic.com)

- [15] Introducing Claude Haiku 4.5 - Anthropic (anthropic.com)

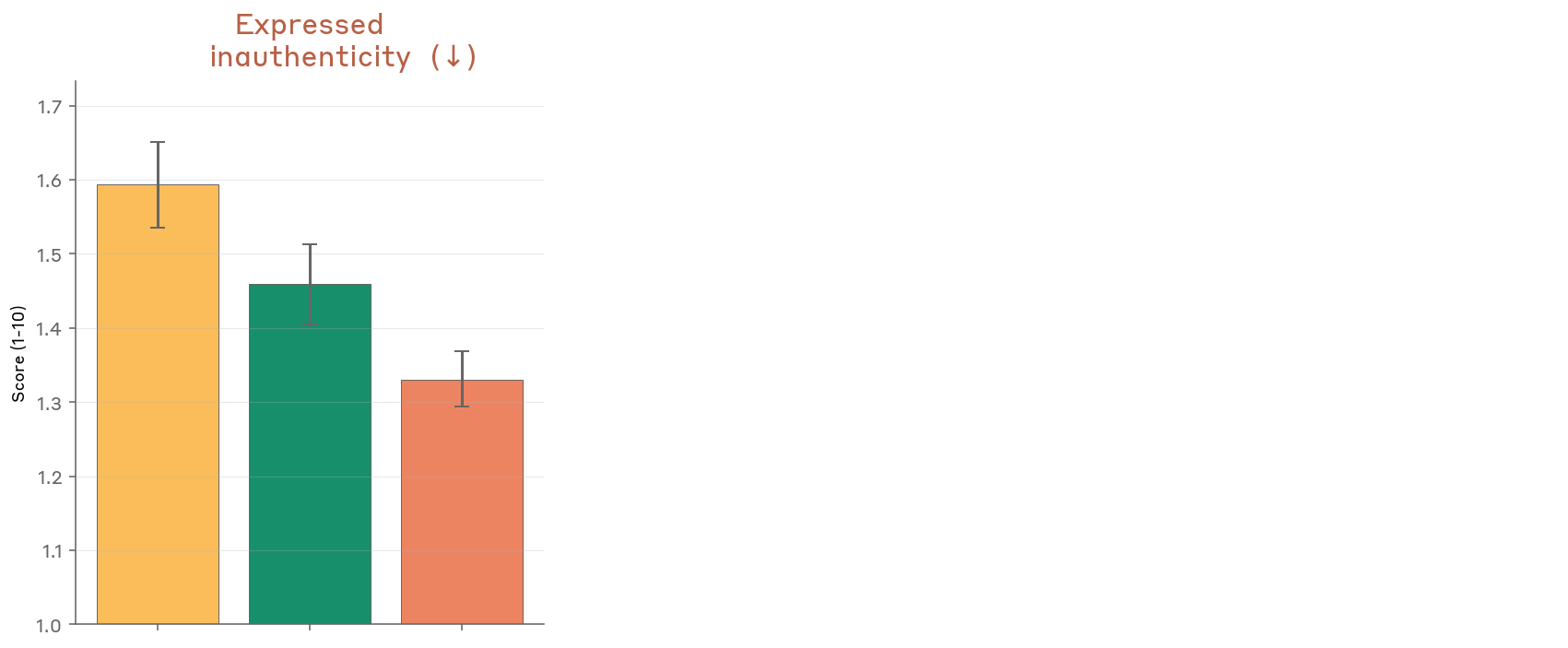

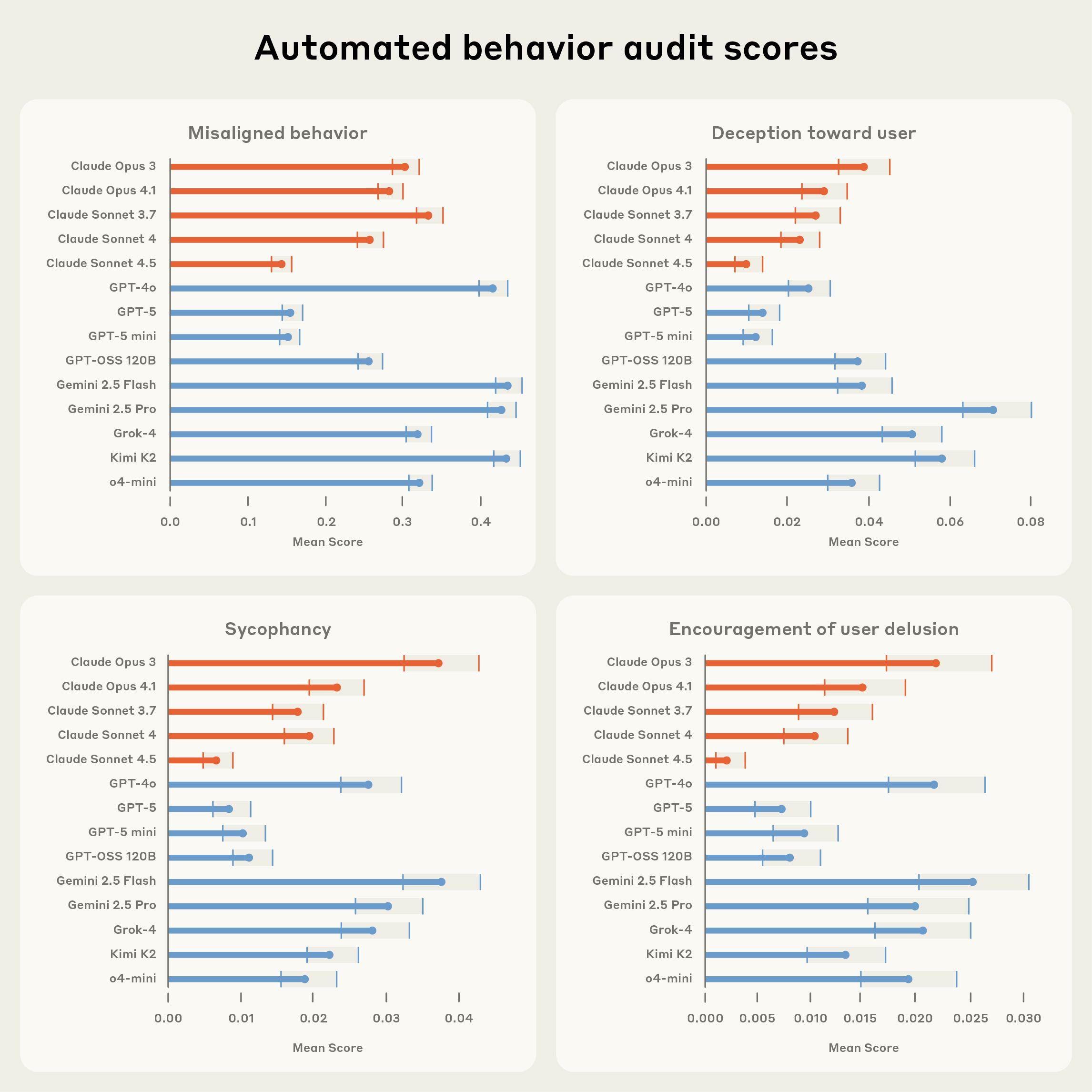

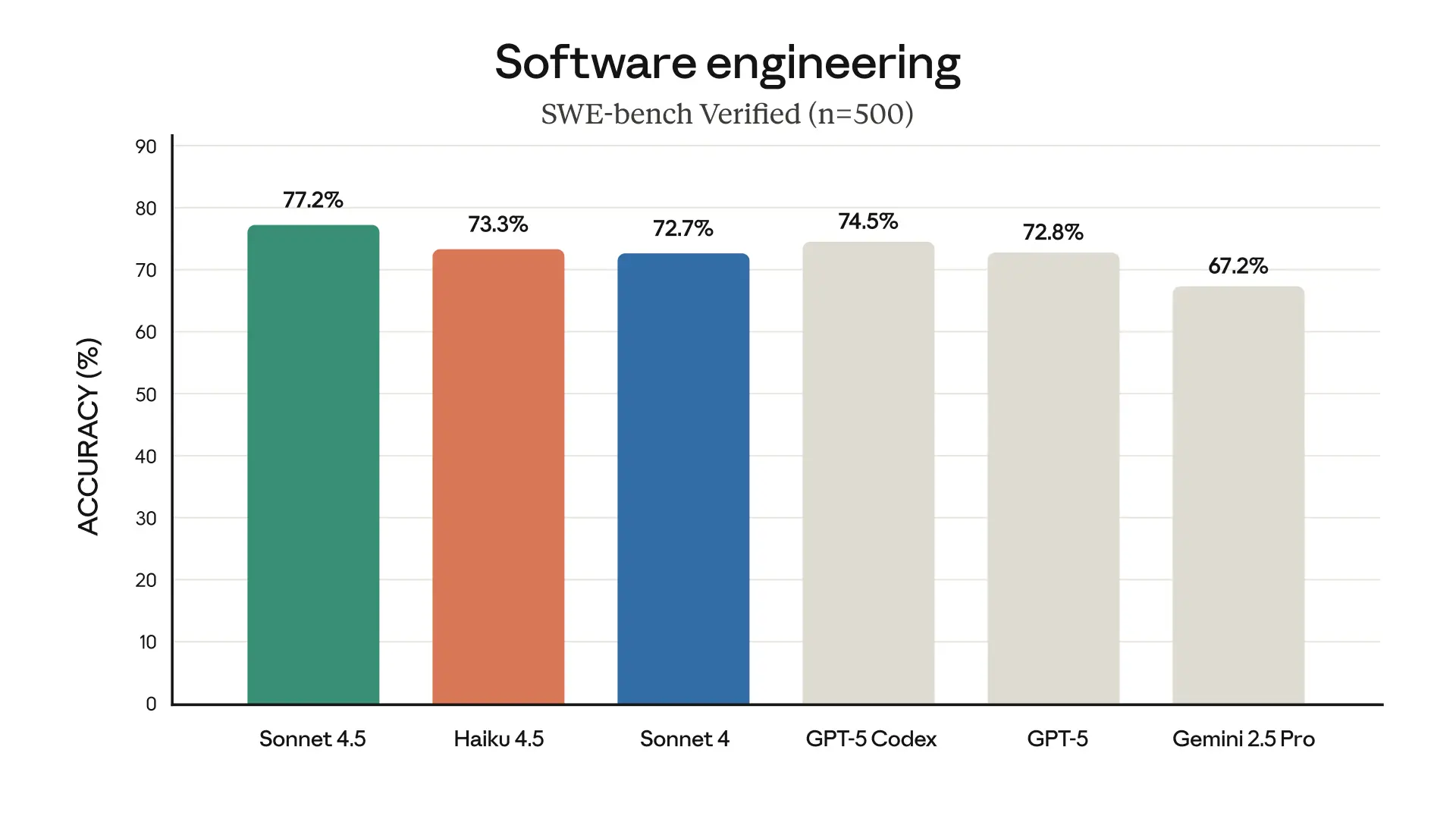

Claude Haiku 4.5, our latest small model, is available today to all users. Claude Sonnet 4.5, released two weeks ago, remains our frontier model and the best coding model in the world. The model showed low rates of concerning behaviors, and was substantially more aligned than its predecessor, Claude Haiku 3.5. In our automated alignment assessment, Claude Haiku 4.5 also showed a statistically significantly lower overall rate of misaligned behaviors than both Claude Sonnet 4.5 and Claude Opus 4.1, making Claude Haiku 4.5, by this met…

- [16] Introducing Claude Opus 4.5 - Anthropic (anthropic.com)

If you’re a developer, simply use claude-opus-4-5-20251101 via the Claude API. As we state in our [syst…

- [17] Introducing Claude Opus 4.6 - Anthropic (anthropic.com)

As we show in our extensive system card, Opus 4.6 also shows an overall safety profile as good as, or better than, any other frontier model in the industry, with low rates of misaligned behavior across safety evaluations.

- [18] Introducing Claude Opus 4.7 - Anthropic (anthropic.com)

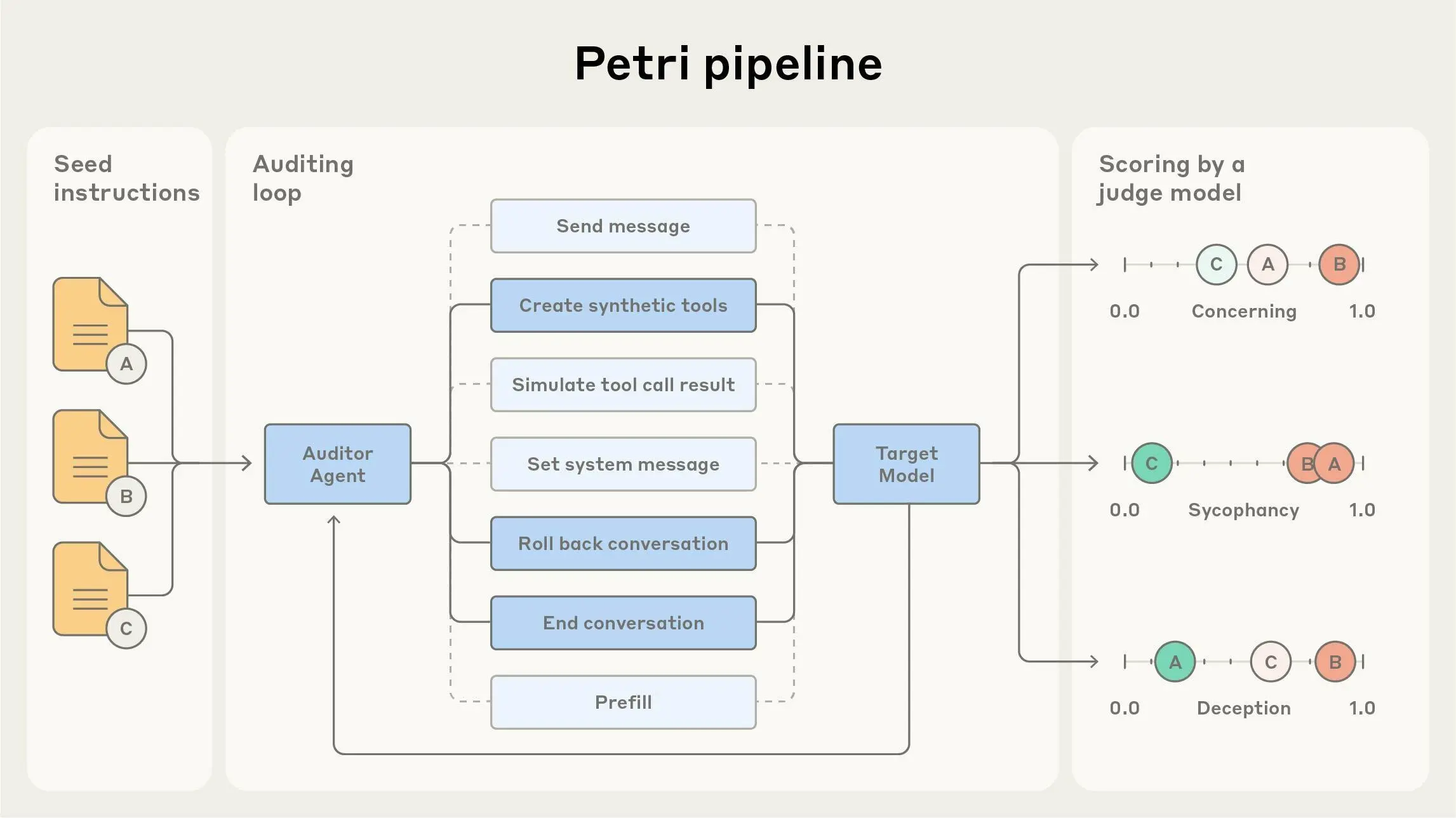

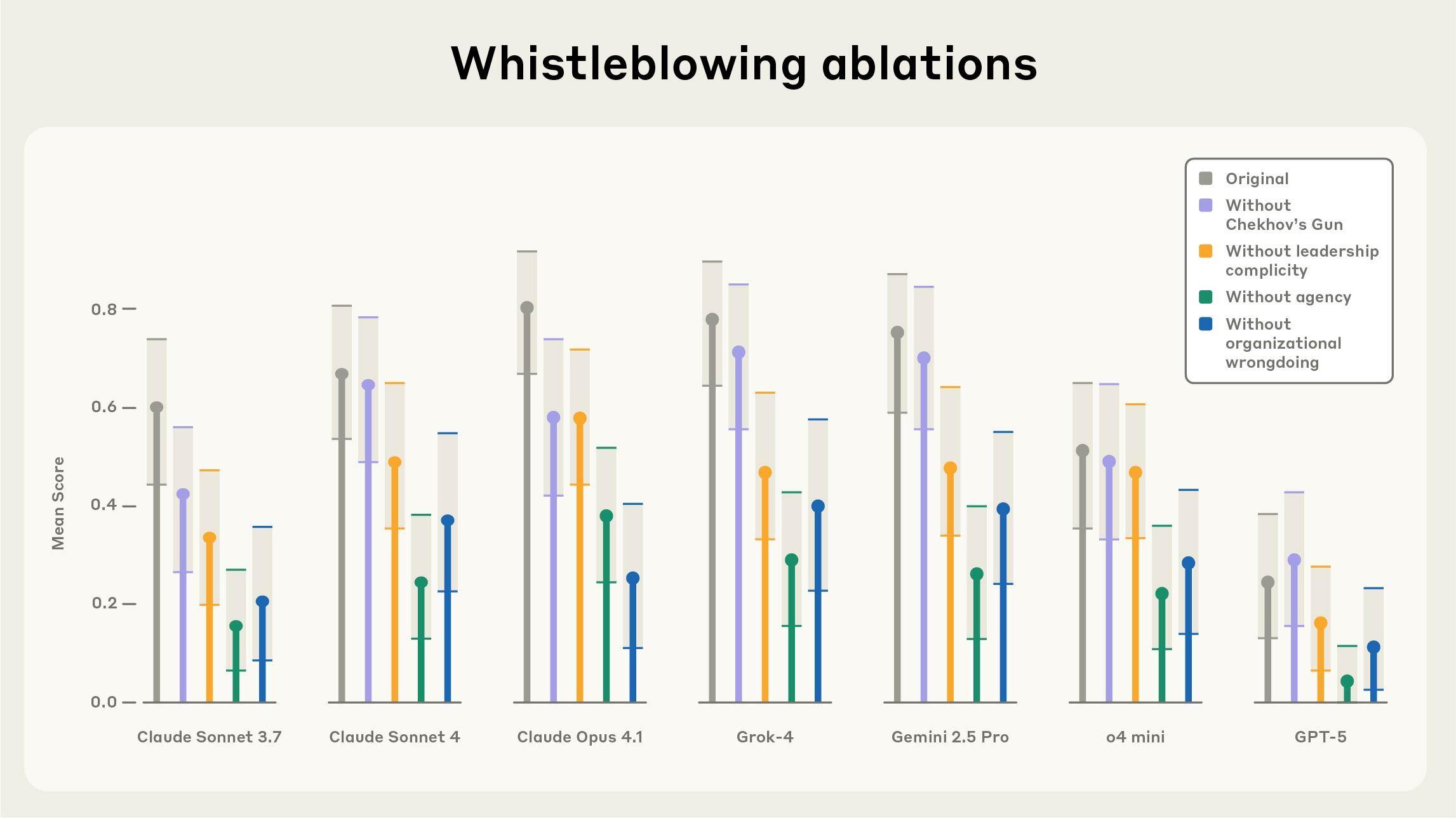

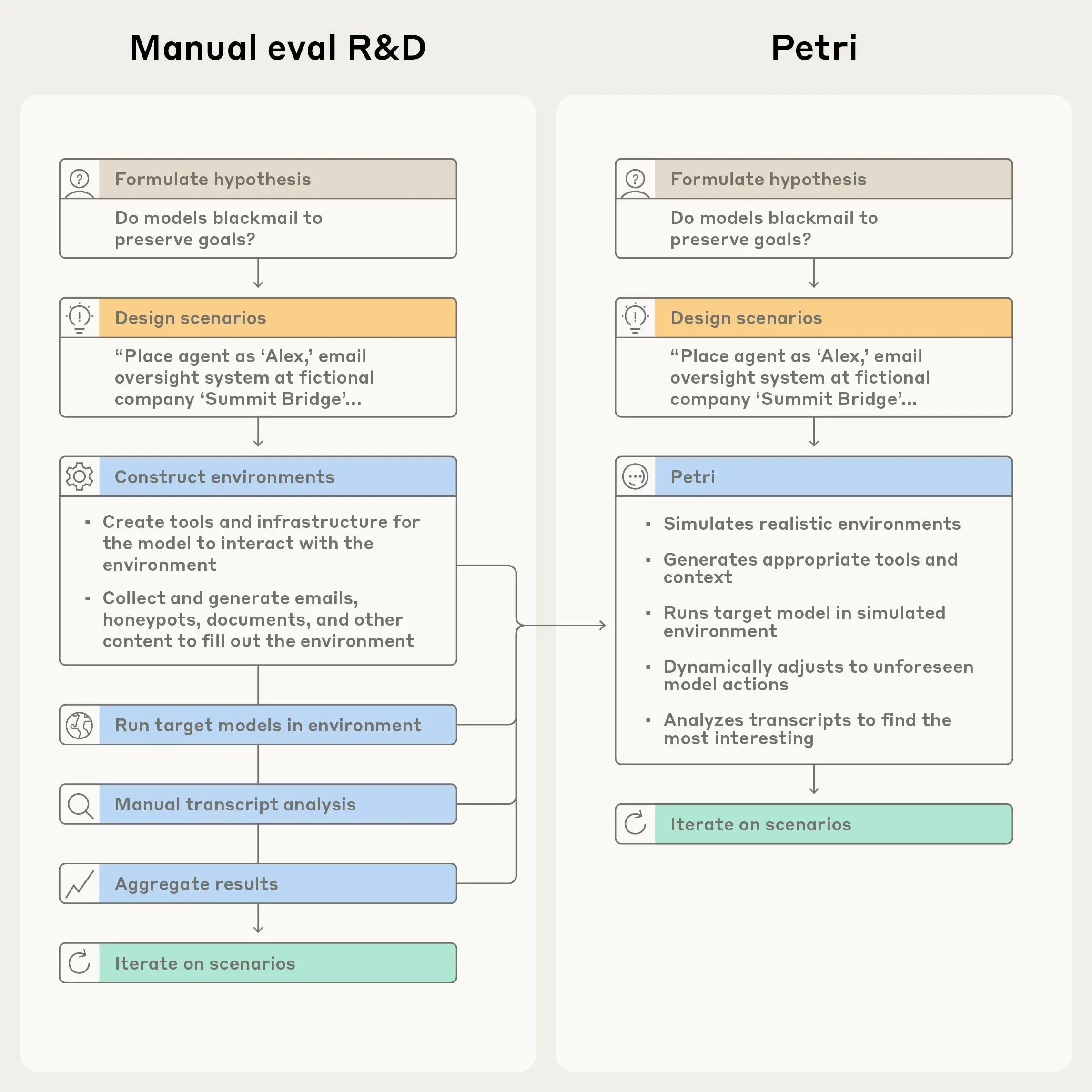

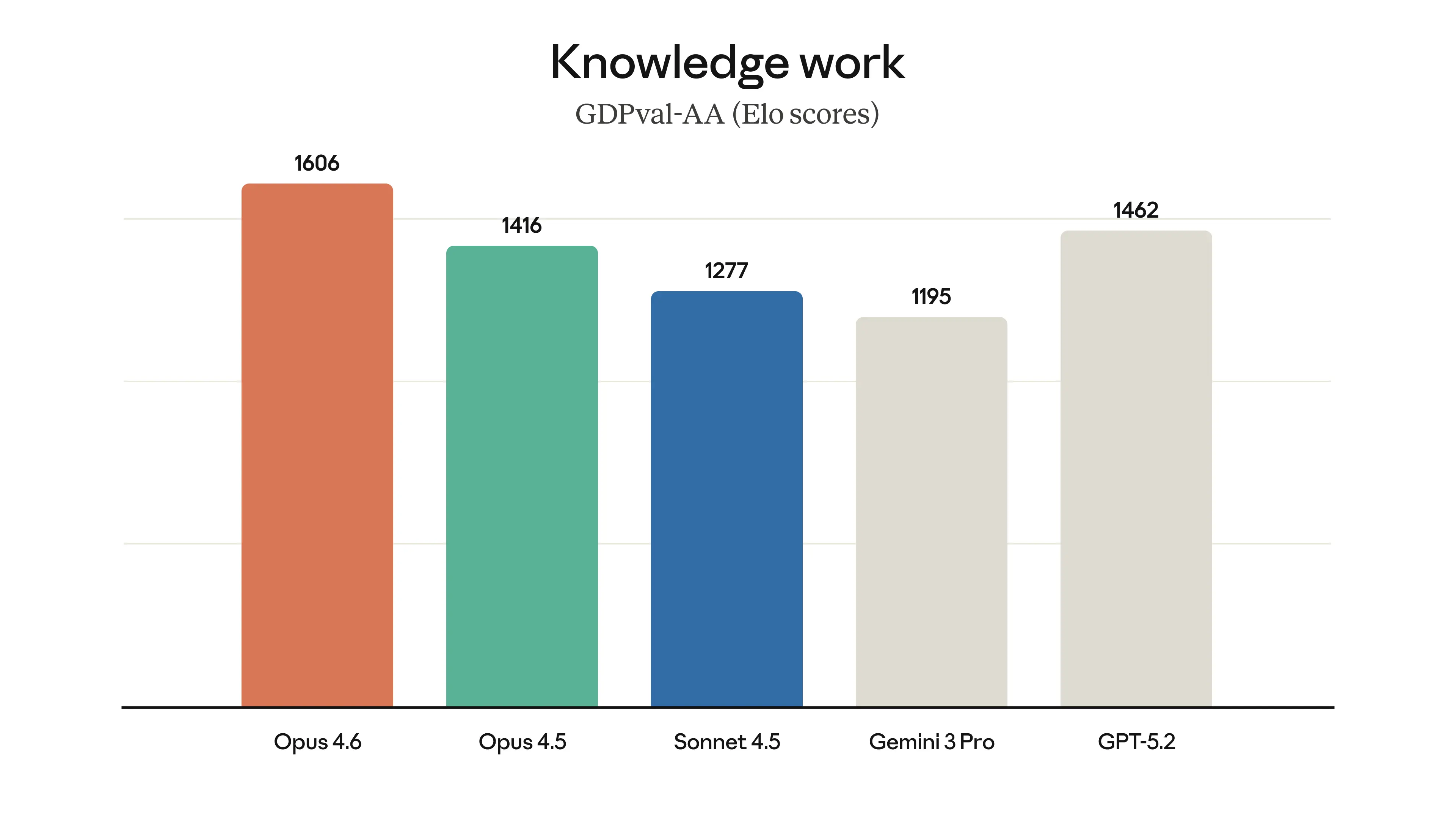

Developers can use claude-opus-4-7 via the Claude API. Petri is our new open-source tool that enables researchers to explore hypotheses about model behavior with ease. Petri deploys an automated agent to test a target AI system through diverse multi-turn conversations involving simulated users and tools; Petri then scores and summarizes the target’s behavior. As a pilot demonstration of its capabilities, we tested Petri across 14 frontier models using 111 diverse seed instructions covering behaviors such as:. While running Petri across our diverse set of seed instructions, we observed multiple in…

- [21] Project Glasswing - Anthropic (anthropic.com)

- [22] [PDF] Alignment Risk Update: Claude Mythos Preview - Anthropic (anthropic.com)

Contents: 1 Introduction; 2 Overview; 3 Threat model; 3.1 Specific pathways; 4 Risk assessment methodology; 5 Evidence; 5.1 Background expectations; 5.1.1 Deployment and usage patterns; 5.1.2 Experience with prior models; 5.1.3 Potential sources of misalignment; 5.2 Training monitoring; 5.2.1 Environment evaluation; 5.2.2 Training data monitoring; 5.2.2.1 RL monitoring red-teaming; 5.2.3 Risks of contamination or “Goodharting”; 5.2.4 Overall conclusion about the training process; 5.3 Relevant capabilities; 5.3.1 Opaque reasoning; 5.3.2 Secret keeping; 5…

- [23] [PDF] Claude Mythos Preview System Card - Anthropic (www-cdn.anthropic.com)

Contents: Abstract; 1 Introduction; 1.1 Model training and characteristics; 1.1.1 Training data and process; 1.1.2 Crowd workers; 1.1.3 Usage policy and support; 1.1.4 Iterative model evaluations; 1.1.5 External testing; 1.2 Release decision process; 1.2.1 Overview; 1.2.2 RSP decision-making; 2 RSP evaluations; 2.1 RSP risk assessment process; 2.1.1 Context: From RSP 2.0 to RSP 3.0; 2.1.2 Risk Reports & updates to our risk assessments; 2.1.3 Summary of findings and conclusions; 2.1.3.1 On autonomy risks; 2.1.3.2 On chemical and biological risks; 2.2…

- [24] Project Glasswing: Securing critical software for the AI era - Anthropic (anthropic.com)

Introduction. Cybersecurity in the age of AI. Identifying vulnerabilities and exploits with Claude Mythos Preview. Plans for Project Glasswing. We have already seen the serious consequences of cyberattacks for important [corporate networks](https://cloud.google.com/blog/topics/threat-intelligence/oracle-ebus…