Search for the benchmarks of GPT-5.5, Claude Opus 4.7, Kimi K2.6, DeepSeek V4. Compare them to help me understand more about them.

I found public benchmark data for all four, but not one fully independent, apples to apples leaderboard covering GPT 5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same harness. The practical read is: GPT...

I found public benchmark data for all four, but not one fully independent, apples to apples leaderboard covering GPT 5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same harness. The practical read is: GPT 5.5 looks strongest for broad agentic coding/computer use tasks, Claude Opus 4.7 is extremely strong on

Key takeaways

- GPT-5.5’s official benchmarks emphasize agentic coding and computer-use: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, 78.7% on OSWorld-Verified, 84.4% on BrowseComp, and 81.8% on CyberGym.

- GPT-5.5 Pro appears stronger on some harder research/search/math tasks, including 90.1% on BrowseComp, 52.4% on FrontierMath Tier 1–3, and 39.6% on FrontierMath Tier 4.

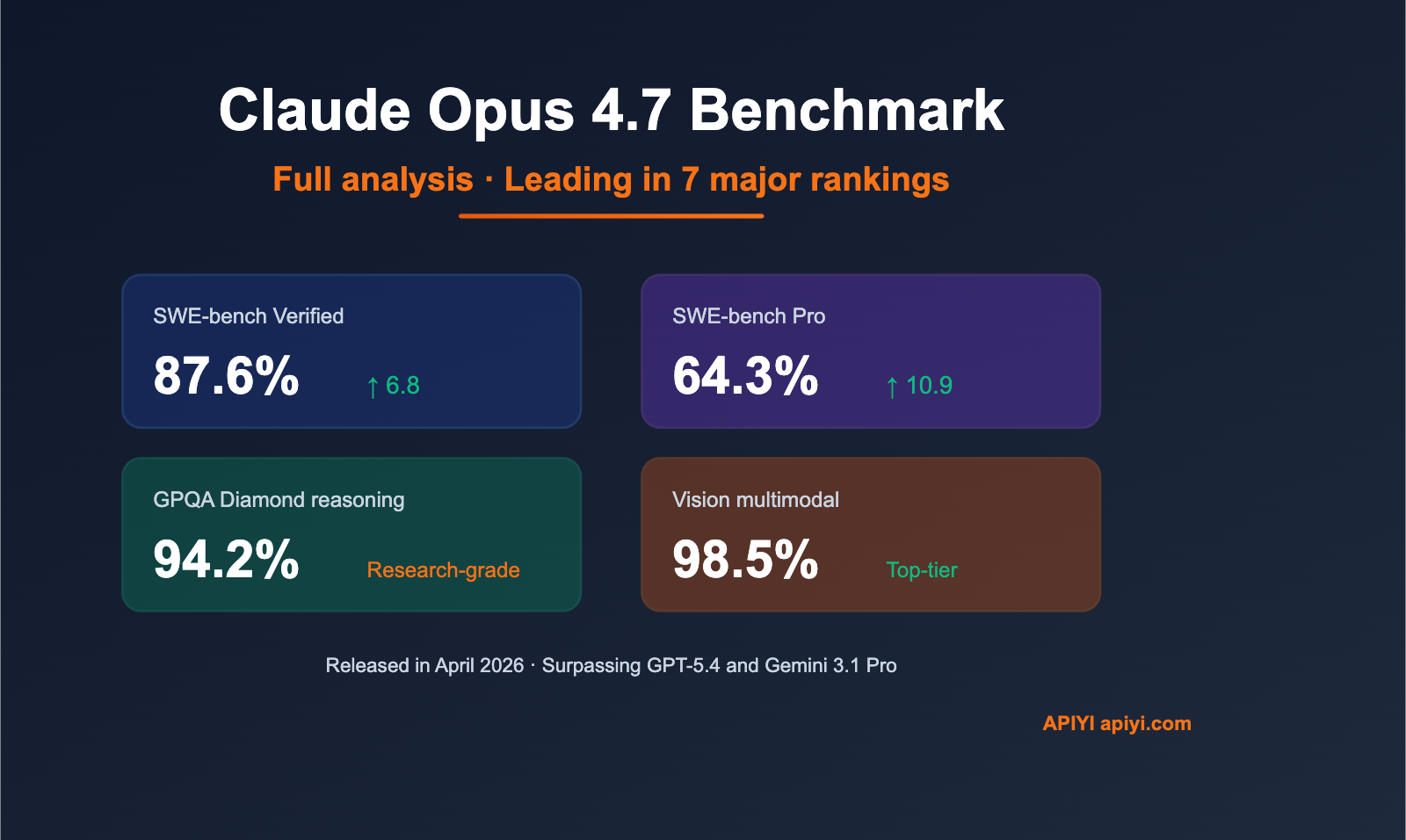

- Claude Opus 4.7 is positioned by Anthropic as a premium hybrid reasoning model for coding and agents, with a 1M-token context window and API pricing of $5 per million input tokens and $25 per million output tokens. Third-party benchmark reporting lists Claude Opus 4.7 at 87.6% on

- Kimi K2.6 is an open-weight multimodal MoE model with 1T total parameters, 32B active parameters, and a 256K-token context window. Its model card reports 80.2% on SWE-Bench Verified, 58.6% on SWE-Bench Pro, 66.7% on Terminal-Bench 2.0, 90.5% on GPQA Diamond, and 54.0% on HLE-Full

- I found public benchmark data for all four, but not one fully independent, apples-to-apples leaderboard covering GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same harness. The practical read is: GPT-5.5 looks strongest for broad agentic coding/computer-use tasks

- ## Key findings

Research answer

I found public benchmark data for all four, but not one fully independent, apples-to-apples leaderboard covering GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same harness. The practical read is: GPT-5.5 looks strongest for broad agentic coding/computer-use tasks, Claude Opus 4.7 is extremely strong on coding and hard reasoning, Kimi K2.6 is the strongest open-weight option in this group, and DeepSeek V4 looks like the best price/performance play if its reported results hold up across independent testing [4][

5][

6][

13].

Key findings

-

GPT-5.5’s official benchmarks emphasize agentic coding and computer-use: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, 78.7% on OSWorld-Verified, 84.4% on BrowseComp, and 81.8% on CyberGym [

4].

-

GPT-5.5 Pro appears stronger on some harder research/search/math tasks, including 90.1% on BrowseComp, 52.4% on FrontierMath Tier 1–3, and 39.6% on FrontierMath Tier 4 [

4].

-

Claude Opus 4.7 is positioned by Anthropic as a premium hybrid reasoning model for coding and agents, with a 1M-token context window and API pricing of $5 per million input tokens and $25 per million output tokens [

2]. Third-party benchmark reporting lists Claude Opus 4.7 at 87.6% on SWE-bench Verified and 94.2% on GPQA Diamond [

5].

-

Kimi K2.6 is an open-weight multimodal MoE model with 1T total parameters, 32B active parameters, and a 256K-token context window [

13]. Its model card reports 80.2% on SWE-Bench Verified, 58.6% on SWE-Bench Pro, 66.7% on Terminal-Bench 2.0, 90.5% on GPQA Diamond, and 54.0% on HLE-Full with tools [

13].

-

DeepSeek V4-Pro-Max is reported as a 1.6T-parameter open model with much lower API pricing than GPT-5.5 and Claude Opus 4.7 [

6]. Reported comparison data puts DeepSeek V4-Pro-Max at 90.1% on GPQA Diamond, 37.7% on HLE without tools, 48.2% on HLE with tools, and 67.9% on Terminal-Bench 2.0 [

6].

Comparison

| Model | Best fit | Notable reported benchmarks | Main caveat |

|---|---|---|---|

| GPT-5.5 | Best general pick for agentic coding, computer use, research workflows, and tool-heavy tasks | Terminal-Bench 2.0: 82.7%; SWE-Bench Pro: 58.6%; OSWorld-Verified: 78.7%; BrowseComp: 84.4%; CyberGym: 81.8% [ | OpenAI’s strongest numbers include internal or vendor-run evals, so independent confirmation matters [ |

| GPT-5.5 Pro | Harder reasoning/search/math where cost is less important | BrowseComp: 90.1%; FrontierMath Tier 1–3: 52.4%; FrontierMath Tier 4: 39.6% [ | Higher-tier “Pro” results are not directly comparable to base GPT-5.5 or open models unless the same effort/budget is used [ |

| Claude Opus 4.7 | Premium coding, long-context enterprise workflows, careful reasoning, and high-reliability agents | SWE-bench Verified: 87.6%; GPQA Diamond: 94.2%; 1M context window [ | Some Anthropic benchmark results are presented in release materials or partner/internal evals, and not every benchmark is directly comparable to public leaderboard runs [ |

| Kimi K2.6 | Best open-weight option if you want strong coding/agentic performance and self-hostability | SWE-Bench Verified: 80.2%; SWE-Bench Pro: 58.6%; Terminal-Bench 2.0: 66.7%; GPQA Diamond: 90.5%; HLE-Full with tools: 54.0% [ | Kimi’s own model card notes different settings and re-evaluations for some comparison scores, so treat cross-model deltas cautiously [ |

| DeepSeek V4-Pro-Max | Best value candidate; strong open-model performance with much lower reported API cost | GPQA Diamond: 90.1%; HLE no tools: 37.7%; HLE with tools: 48.2%; Terminal-Bench 2.0: 67.9% [ | Evidence is newer and less independently settled; I would wait for more third-party validation before treating it as equal to closed frontier models [ |

How to interpret the numbers

-

For software engineering, Claude Opus 4.7’s 87.6% SWE-bench Verified score is the standout among the reported figures I found, while GPT-5.5’s 82.7% Terminal-Bench 2.0 score is the strongest reported command-line/agentic coding number in this set [

4][

5].

-

For broad tool-using agent tasks, GPT-5.5 looks especially strong because its official release reports high scores across OSWorld-Verified, BrowseComp, Toolathlon, Terminal-Bench 2.0, and CyberGym rather than just one narrow benchmark [

4].

-

For open-weight deployment, Kimi K2.6 and DeepSeek V4 are the two to watch: Kimi K2.6 has a strong official benchmark table across coding, reasoning, vision, and agentic tasks, while DeepSeek V4 emphasizes frontier-like performance at much lower cost [

6][

13].

-

For hard academic/scientific reasoning, Claude Opus 4.7’s reported 94.2% GPQA Diamond leads the figures I found, while GPT-5.5 and DeepSeek V4 are reported lower on GPQA Diamond in the comparison data I found [

5][

6].

-

For price/performance, DeepSeek V4 appears meaningfully cheaper than GPT-5.5 and Claude Opus 4.7 in reported API pricing, but cheaper inference only matters if its quality is sufficient for your workload [

6].

Evidence notes

-

The strongest source for GPT-5.5 is OpenAI’s official GPT-5.5 release page, which includes the benchmark table for Terminal-Bench 2.0, SWE-Bench Pro, OSWorld-Verified, BrowseComp, FrontierMath, Toolathlon, and CyberGym [

4].

-

The strongest source for Claude Opus 4.7 positioning, context window, availability, and pricing is Anthropic’s Opus 4.7 product/release material [

2][

8]. The cleanest text benchmark figures I found for Opus 4.7’s SWE-bench Verified and GPQA Diamond scores came from LLM Stats reporting [

5].

-

The strongest source for Kimi K2.6 is Moonshot AI’s Hugging Face model card, which includes architecture details, context length, and the evaluation table [

13].

-

The strongest text source I found for DeepSeek V4’s direct comparison against GPT-5.5 and Claude Opus 4.7 was VentureBeat’s launch/benchmark report [

6].

Limitations / uncertainty

-

Insufficient evidence for one single, independent, fully apples-to-apples benchmark suite that evaluates all four models with the same prompts, tools, budgets, reasoning effort, and scoring harness.

-

Some numbers are vendor-reported, some are third-party summaries, and some benchmarks use different tool access or reasoning-effort settings, so small score differences should not be overinterpreted [

4][

8][

13].

-

For real buying/deployment decisions, I would run your own 20–50 task eval set with your actual prompts, files, tools, latency targets, and cost constraints.

Summary

If you want the safest high-end general choice, start with GPT-5.5. If your priority is top-tier coding reliability and long-context enterprise work, test Claude Opus 4.7. If you need open weights and strong frontier-adjacent performance, Kimi K2.6 is the most compelling open option. If cost is the constraint and you can tolerate some validation risk, DeepSeek V4 is the one to benchmark first.

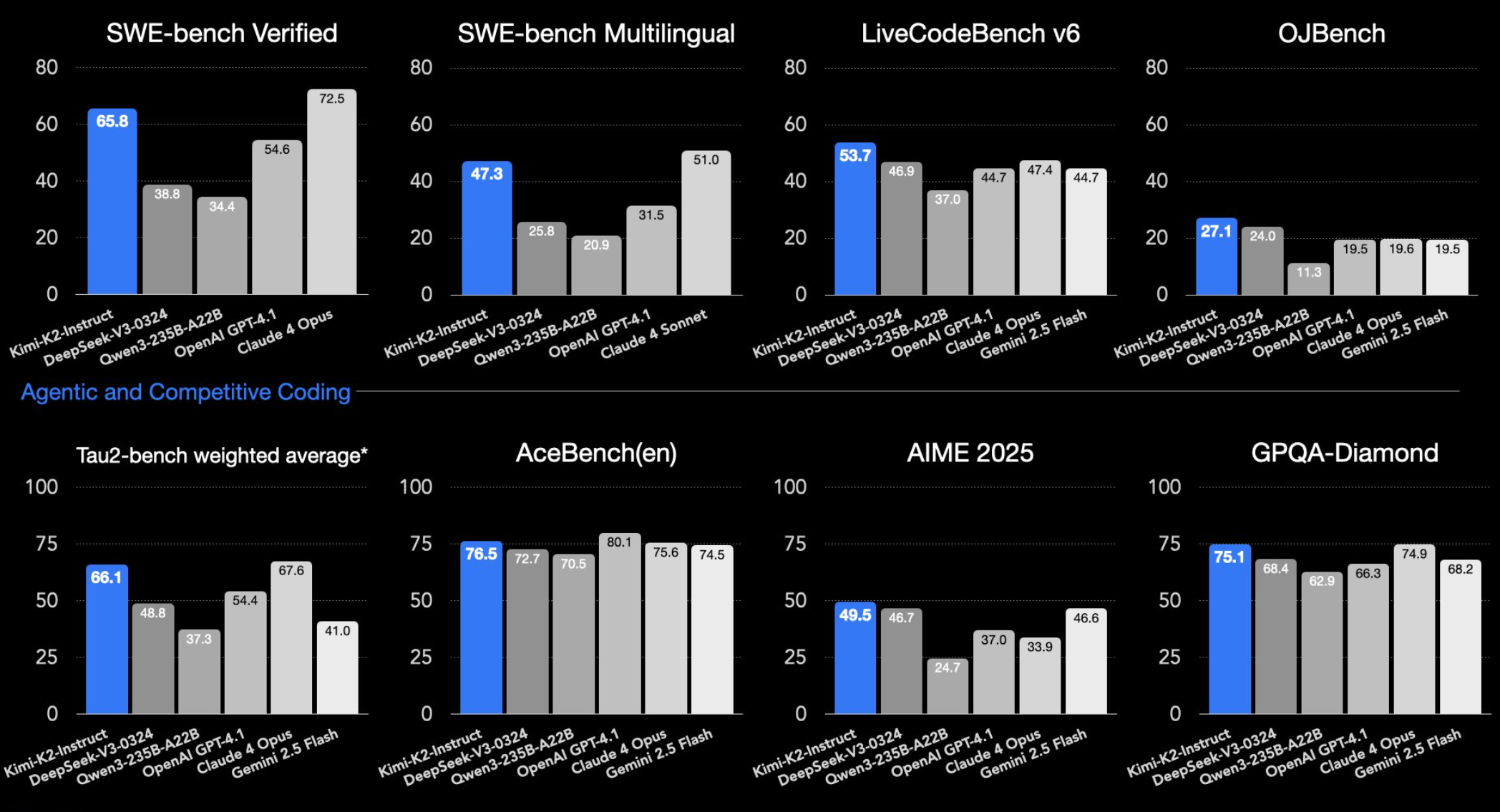

Supporting visuals

Research conversation

Research question

Search for the benchmarks of GPT-5.5, Claude Opus 4.7, Kimi K2.6, DeepSeek V4. Compare them to help me understand more about them.

Cited answer

I found public benchmark data for all four, but not one fully independent, apples-to-apples leaderboard covering GPT-5.5, Claude Opus 4.7, Kimi K2.6, and DeepSeek V4 under the same harness. The practical read is: GPT-5.5 looks strongest for broad agentic coding/computer-use tasks, Claude Opus 4.7 is extremely strong on coding and hard reasoning, Kimi K2.6 is the strongest open-weight option in this group, and DeepSeek V4 looks like the best price/performance play if its reported results hold up across independent testing [4][

5][

6][

13].

Key findings

-

GPT-5.5’s official benchmarks emphasize agentic coding and computer-use: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, 78.7% on OSWorld-Verified, 84.4% on BrowseComp, and 81.8% on CyberGym [

4].

-

GPT-5.5 Pro appears stronger on some harder research/search/math tasks, including 90.1% on BrowseComp, 52.4% on FrontierMath Tier 1–3, and 39.6% on FrontierMath Tier 4 [

4].

-

Claude Opus 4.7 is positioned by Anthropic as a premium hybrid reasoning model for coding and agents, with a 1M-token context window and API pricing of $5 per million input tokens and $25 per million output tokens [

2]. Third-party benchmark reporting lists Claude Opus 4.7 at 87.6% on SWE-bench Verified and 94.2% on GPQA Diamond [

5].

-

Kimi K2.6 is an open-weight multimodal MoE model with 1T total parameters, 32B active parameters, and a 256K-token context window [

13]. Its model card reports 80.2% on SWE-Bench Verified, 58.6% on SWE-Bench Pro, 66.7% on Terminal-Bench 2.0, 90.5% on GPQA Diamond, and 54.0% on HLE-Full with tools [

13].

-

DeepSeek V4-Pro-Max is reported as a 1.6T-parameter open model with much lower API pricing than GPT-5.5 and Claude Opus 4.7 [

6]. Reported comparison data puts DeepSeek V4-Pro-Max at 90.1% on GPQA Diamond, 37.7% on HLE without tools, 48.2% on HLE with tools, and 67.9% on Terminal-Bench 2.0 [

6].

Comparison

| Model | Best fit | Notable reported benchmarks | Main caveat |

|---|---|---|---|

| GPT-5.5 | Best general pick for agentic coding, computer use, research workflows, and tool-heavy tasks | Terminal-Bench 2.0: 82.7%; SWE-Bench Pro: 58.6%; OSWorld-Verified: 78.7%; BrowseComp: 84.4%; CyberGym: 81.8% [ | OpenAI’s strongest numbers include internal or vendor-run evals, so independent confirmation matters [ |

| GPT-5.5 Pro | Harder reasoning/search/math where cost is less important | BrowseComp: 90.1%; FrontierMath Tier 1–3: 52.4%; FrontierMath Tier 4: 39.6% [ | Higher-tier “Pro” results are not directly comparable to base GPT-5.5 or open models unless the same effort/budget is used [ |

| Claude Opus 4.7 | Premium coding, long-context enterprise workflows, careful reasoning, and high-reliability agents | SWE-bench Verified: 87.6%; GPQA Diamond: 94.2%; 1M context window [ | Some Anthropic benchmark results are presented in release materials or partner/internal evals, and not every benchmark is directly comparable to public leaderboard runs [ |

| Kimi K2.6 | Best open-weight option if you want strong coding/agentic performance and self-hostability | SWE-Bench Verified: 80.2%; SWE-Bench Pro: 58.6%; Terminal-Bench 2.0: 66.7%; GPQA Diamond: 90.5%; HLE-Full with tools: 54.0% [ | Kimi’s own model card notes different settings and re-evaluations for some comparison scores, so treat cross-model deltas cautiously [ |

| DeepSeek V4-Pro-Max | Best value candidate; strong open-model performance with much lower reported API cost | GPQA Diamond: 90.1%; HLE no tools: 37.7%; HLE with tools: 48.2%; Terminal-Bench 2.0: 67.9% [ | Evidence is newer and less independently settled; I would wait for more third-party validation before treating it as equal to closed frontier models [ |

How to interpret the numbers

-

For software engineering, Claude Opus 4.7’s 87.6% SWE-bench Verified score is the standout among the reported figures I found, while GPT-5.5’s 82.7% Terminal-Bench 2.0 score is the strongest reported command-line/agentic coding number in this set [

4][

5].

-

For broad tool-using agent tasks, GPT-5.5 looks especially strong because its official release reports high scores across OSWorld-Verified, BrowseComp, Toolathlon, Terminal-Bench 2.0, and CyberGym rather than just one narrow benchmark [

4].

-

For open-weight deployment, Kimi K2.6 and DeepSeek V4 are the two to watch: Kimi K2.6 has a strong official benchmark table across coding, reasoning, vision, and agentic tasks, while DeepSeek V4 emphasizes frontier-like performance at much lower cost [

6][

13].

-

For hard academic/scientific reasoning, Claude Opus 4.7’s reported 94.2% GPQA Diamond leads the figures I found, while GPT-5.5 and DeepSeek V4 are reported lower on GPQA Diamond in the comparison data I found [

5][

6].

-

For price/performance, DeepSeek V4 appears meaningfully cheaper than GPT-5.5 and Claude Opus 4.7 in reported API pricing, but cheaper inference only matters if its quality is sufficient for your workload [

6].

Evidence notes

-

The strongest source for GPT-5.5 is OpenAI’s official GPT-5.5 release page, which includes the benchmark table for Terminal-Bench 2.0, SWE-Bench Pro, OSWorld-Verified, BrowseComp, FrontierMath, Toolathlon, and CyberGym [

4].

-

The strongest source for Claude Opus 4.7 positioning, context window, availability, and pricing is Anthropic’s Opus 4.7 product/release material [

2][

8]. The cleanest text benchmark figures I found for Opus 4.7’s SWE-bench Verified and GPQA Diamond scores came from LLM Stats reporting [

5].

-

The strongest source for Kimi K2.6 is Moonshot AI’s Hugging Face model card, which includes architecture details, context length, and the evaluation table [

13].

-

The strongest text source I found for DeepSeek V4’s direct comparison against GPT-5.5 and Claude Opus 4.7 was VentureBeat’s launch/benchmark report [

6].

Limitations / uncertainty

-

Insufficient evidence for one single, independent, fully apples-to-apples benchmark suite that evaluates all four models with the same prompts, tools, budgets, reasoning effort, and scoring harness.

-

Some numbers are vendor-reported, some are third-party summaries, and some benchmarks use different tool access or reasoning-effort settings, so small score differences should not be overinterpreted [

4][

8][

13].

-

For real buying/deployment decisions, I would run your own 20–50 task eval set with your actual prompts, files, tools, latency targets, and cost constraints.

Summary

If you want the safest high-end general choice, start with GPT-5.5. If your priority is top-tier coding reliability and long-context enterprise work, test Claude Opus 4.7. If you need open weights and strong frontier-adjacent performance, Kimi K2.6 is the most compelling open option. If cost is the constraint and you can tolerate some validation risk, DeepSeek V4 is the one to benchmark first.

People also ask

What is the short answer to "Search for the benchmarks of GPT-5.5, Claude Opus 4.7, Kimi K2.6, DeepSeek V4. Compare them to help me understand more about them."?

GPT-5.5’s official benchmarks emphasize agentic coding and computer-use: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, 78.7% on OSWorld-Verified, 84.4% on BrowseComp, and 81.8% on CyberGym.

What are the key points to validate first?

GPT-5.5’s official benchmarks emphasize agentic coding and computer-use: 82.7% on Terminal-Bench 2.0, 58.6% on SWE-Bench Pro, 78.7% on OSWorld-Verified, 84.4% on BrowseComp, and 81.8% on CyberGym. GPT-5.5 Pro appears stronger on some harder research/search/math tasks, including 90.1% on BrowseComp, 52.4% on FrontierMath Tier 1–3, and 39.6% on FrontierMath Tier 4.

What should I do next in practice?

Claude Opus 4.7 is positioned by Anthropic as a premium hybrid reasoning model for coding and agents, with a 1M-token context window and API pricing of $5 per million input tokens and $25 per million output tokens. Third-party benchmark reporting lists Claude Opus 4.7 at 87.6% on

Which related topic should I explore next?

Continue with "Deep research & compare GPT-5.5, Claude Opus 4.7, Kimi K2.6, DeepSeek V4" for another angle and extra citations.

Open related pageWhat should I compare this against?

Cross-check this answer against "Research and fact-check: Claude Opus 4.7 vs GPT-5.5 Spud, Evidence provenance in research workflows: citations, scratchpads, and traceabilit".

Open related pageContinue your research

Sources

- [1] [AINews] Moonshot Kimi K2.6: the world's leading Open Model ...latent.space

Moonshot’s Kimi K2.6 was the clear release of the day: an open-weight 1T-parameter MoE with 32B active, 384 experts (8 routed + 1 shared), MLA attention, 256K context, native multimodality, and INT4 quantization, with day-0 support in vLLM, OpenRouter, Cloudflare Workers AI, Baseten, MLX, Hermes Agent, and OpenCode. Moonshot claims open-source SOTA on HLE w/ tools 54.0, SWE-Bench Pro 58.6, SWE-bench Multilingual 76.7, BrowseComp 83.2, Toolathlon 50.0, CharXiv w/ python 86.7, and Math Vision w/ python 93.2 in the launch thread. The more novel systems claims are around long-horizon execution—4,…

- [2] Kimi 2.6 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Kimi 2.6 According to BenchLM.ai, Kimi 2.6 ranks #13 out of 110 models on the provisional leaderboard with an overall score of 83/100. It also ranks #5 out of 14 on the verified leaderboard. This places it in the upper tier of AI models, with competitive scores across most benchmark categories. Kimi 2.6 is a open weight model with a 256K token context window. It uses explicit chain-of-thought reasoning, which typically improves performance on math and complex reasoning tasks at the cost of higher latency and tok…

- [3] Kimi K2.6 - Vals AIvals.ai

Benchmarks Models Comparison Model Guide App Reports News About Benchmarks Models Comparison Model Guide App Reports About Release date Models 4/20/2026 Moonshot AI Kimi K2.6 4/16/2026 Anthropic Claude Opus 4.7 4/8/2026 Meta Muse Spark 4/2/2026 Google Gemma 4 31B IT 4/2/2026 Alibaba Qwen 3.6 Plus 4/1/2026 zAI GLM 5.1 4/1/2026 Arcee AI Trinity Large Thinking 3/17/2026 OpenAI GPT 5.4 Mini 3/17/2026 OpenAI GPT 5.4 Nano 3/17/2026 MiniMax MiniMax-M2.7 3/9/2026 xAI Grok 4.20 (Reasoning) 3/5/2026 OpenAI GPT 5.4 3/3/2026 Google Gemini 3.1 Flash Lite Preview 2/24/2026 OpenAI GPT 5.3 Codex 2/23/2026 Al…

- [4] Kimi K2.6 released beating closed models on multiple benchmarks ...threads.com

curl -sfL get.k3s.io | sh - Image 7: May be a Twitter screenshot of screen and text that says 'README More K3s- Lightweight ල Kubernetes license scan passing Nightly Install passing Build Status Integration Test Coverage Unit Test Coverage passing passing openssf best practices passing openssf scorecard 7.2 downloads 8.5M CLOMonitor Report Lightweight Kubernetes. Production ready, easy to install, half the memory, all in a binary less than 100 MB.' 2 1 1 Image 8: aiorqalipulishla's profile picture aiorqalipulishla AI Threads 1d Kimi K2.6 just dropped. And it crushed Claude Opus 4.6 on SWE-Ben…

- [5] Kimi K2.6: The new leading open weights model - Artificial Analysisartificialanalysis.ai

➤ Multimodality: Kimi K2.6 supports Image and Video input and text output natively. The model’s max context length remains 256k. Kimi K2.6 has significantly higher token usage than Kimi K2.5. Kimi K2.5 scores 6 on the AA-Omniscience Index, primarily driven by low hallucination rate. Here’s the full suite of Kimi K2.6 evaluation results: See Artificial Analysis for further details and benchmarks of Kimi K2.6: Want to dive deeper? Discuss this model with our Discord community: ## Read the latest ### Opus 4.7: Everything you need to know Benchmarks and Analysis of Opus 4.7 April 17, 2026 ### Sub…

- [6] moonshotai/Kimi-K2.6 - Hugging Facehuggingface.co

| OSWorld-Verified | 73.1 | 75.0 | 72.7 63.3 | | Coding | | Terminal-Bench 2.0 (Terminus-2) | 66.7 | 65.4 | 65.4 | 68.5 | 50.8 | | SWE-Bench Pro | 58.6 | 57.7 | 53.4 | 54.2 | 50.7 | | SWE-Bench Multilingual | 76.7 77.8 | 76.9 | 73.0 | | SWE-Bench Verified | 80.2 80.8 | 80.6 | 76.8 | | SciCode | 52.2 | 56.6 | 51.9 | 58.9 | 48.7 | | OJBench (python) | 60.6 60.3 | 70.7 | 54.7 | | LiveCodeBench (v6) | 89.6 88.8 | 91.7 | 85.0 | | Reasoning & Knowledge | | HLE-Full | 34.7 | 39.8 | 40.0 | 44.4 | 30.1 | | AIME 2026 | 96.4 | 99.2 | 96.7 | 98.3 | 95.8 | | HMMT 2026 (Feb) | 92.7 | 97.7 | 96.2 | 94.7 | 8…

- [7] SWE-bench Verified* Benchmark 2026: 5 model averagesbenchlm.ai

75.6% 2 MiniMax M2.7 MiniMax Open 75.4% 3 GLM-5 Z.AI Open 72.8% 4 Kimi K2.5 Moonshot AI Open 70.8% 5 Trinity-Large-Thinking Arcee AI Open 63.2% ## FAQ ### What does SWE-bench Verified measure? A display-only SWE-bench Verified reference from Arcee AI's Trinity-Large-Thinking comparison chart. ### Which model scores highest on SWE-bench Verified? Claude Opus 4.6 by Anthropic currently leads with a score of 75.6% on SWE-bench Verified. ### How many models are evaluated on SWE-bench Verified? 5 AI models have been evaluated on SWE-bench Verified on BenchLM. ## Compare Top Models on SWE-bench Ver…

- [8] Kimi K2.5 vs Kimi K2.6 Comparison - LLM Statsllm-stats.com

LLM Stats Logo Model Comparison # Kimi K2.5 vs Kimi K2.6 Kimi K2.6 significantly outperforms across most benchmarks. Kimi K2.5 is 1.4x cheaper per token. Moonshot AI Moonshot AI ## Performance Benchmarks Comparative analysis across standard metrics Kimi K2.5 outperforms in 1 benchmarks (Humanity's Last Exam), while Kimi K2.6 is better at 14 benchmarks (BrowseComp, CharXiv-R, DeepSearchQA, GPQA, IMO-AnswerBench, LiveCodeBench v6, MathVision, MMMU-Pro, SciCode, SWE-bench Multilingual, SWE-Bench Pro, SWE-Bench Verified, Terminal-Bench 2.0, WideSearch). Kimi K2.6 significantly outperforms across…

- [9] Kimi K2.6: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

Kimi K2.6: Pricing, Benchmarks & Performance Image 1: LLM Stats LogoLLM Stats Leaderboards Benchmarks Compare Playground Arenas Gateway Services Search⌘K Sign in Toggle theme NEW•NEW•NEW•NEW• What if your agent could call anyone? CallingBox Start for free 1. Organizations 2. Moonshot AI 3. Kimi K2.6 Compare Chat Image 2: Moonshot AI logo # Kimi K2.6 Moonshot AI·Apr 2026·Modified MIT License Kimi K2.6 is Moonshot AI's open-source, native multimodal agentic model focused on state-of-the-art coding, long-horizon execution, and agent swarm capabilities. It scales horizontally to 300 sub-agents…

- [10] MoonshotAI: Kimi K2.6 Reviewdesignforonline.com

Performance Indices Source: Artificial Analysis This model was released recently. Independent benchmark evaluations are typically completed within days of release — these figures are preliminary and are likely to be updated as testing is finalised. ## Benchmark Scores ### Intelligence ### Technical ### Content Benchmark data from Artificial Analysis and Hugging Face How does MoonshotAI: Kimi K2.6 stack up? Compare side-by-side with other similar models. ## Model Information | | | --- | | OpenRouter ID |

moonshotai/kimi-k2.6| | Provider | moonshotai | | Release Date | April 20, 2026 | |… - [11] AI Leaderboard 2026 - Compare Top AI Models & Rankingsllm-stats.com

| 19 | Image 20: Moonshot AI Kimi K2.6NEW Moonshot AI | 1,157 | — | 90.5% | 80.2% | 262K | $0.95 | $4.00 | Open Source | | 20 | Image 21: OpenAI GPT-5.2 Codex OpenAI | 1,148 | 812 | — | — | 400K | $1.75 | $14.00 | Proprietary | [...] | 6 | Image 7: Anthropic Claude Opus 4.5 Anthropic | 1,614 | 1,342 | 87.0% | 80.9% | 200K | $5.00 | $25.00 | Proprietary | | 7 | Image 8: Google Gemini 3 Pro Google | 1,579 | 1,045 | 91.9% | 76.2% | — | — | — | Proprietary | | 8 | Image 9: Zhipu AI GLM-5 Zhipu AI | 1,576 | 1,158 | — | 77.8% | 200K | $1.00 | $3.20 | Open Source | | 9 | Image 10: OpenAI GPT-5.2 Ope…

- [12] LLM Benchmarks 2026: 30+ Models Ranked — MMLU, SWE-bench, Arena Elo & Pricingiternal.ai

| Kimi K2.5 | Moonshot AI | 1T MoE | Open-weight | Coding, agentic (Agent Swarm up to 100 agents), vision | SWE-bench 76.8%; HumanEval 99.0%; GPQA 87.6%; HLE 51.8% (tools) | | MiniMax M2.7 | MiniMax | ~230B MoE | Proprietary | Self-evolving agent, office productivity, coding | SWE-bench 78%; GDPval-AA 1495 Elo; released March 18, 2026 | | Step-3.5-Flash | StepFun | 196B (11B active MoE) | Open-weight | Ultra-fast reasoning, competitive coding | AIME 99.8%; SWE-bench 74.4%; 100-350 tok/s; 256K context | | DeepSeek R1 | DeepSeek | ~670B MoE | MIT | Deep reasoning, math, chain-of-thought | MATH-…

- [13] Claude Opus 4.7anthropic.com

Image 15: logo > In our evals, we saw a double digit jump in accuracy of tool calls and planning in our core orchestrator agents. As users leverage Hebbia to plan and execute on use cases like retrieval, slide creation, or document generation, Claude Opus 4.7 shows the potential to improve agent decision making in these workflows. > > Adithya Ramanathan > > Head of Applied Research Image 16: logo > On Rakuten-SWE-Bench, Claude Opus 4.7 resolves 3x more production tasks than Opus 4.6, with double-digit gains in Code Quality and Test Quality. This is a meaningful lift and a clear upgrade for th…

- [14] Claude Opus 4.7 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # Claude Opus 4.7 According to BenchLM.ai, Claude Opus 4.7 ranks #2 out of 110 models on the provisional leaderboard with an overall score of 97/100. It also ranks #2 out of 14 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. Claude Opus 4.7 is a proprietary model with a 1M token context window. It processes queries without explicit chain-of-thought reasoning, offering faster response times and lower to…

- [15] Claude Opus 4.7 leads on SWE-bench and agentic reasoning ...thenextweb.com

On graduate-level reasoning, measured by GPQA Diamond, the field has converged. Opus 4.7 scores 94.2%, GPT-5.4 Pro scores 94.4%, and Gemini 3.1 Pro scores 94.3%. The differences are within noise. The frontier models have effectively saturated this benchmark, which means the competitive differentiation is shifting away from raw reasoning scores and toward applied performance on complex, multi-step tasks. ## The agentic step [...] # Claude Opus 4.7 leads on SWE-bench and agentic reasoning, beating GPT-5.4 and Gemini 3.1 Pro Skip to content Toggle Navigation , multi-agent coordination for hours-…

- [16] Claude Opus 4.7: Benchmarks, Pricing, Context & What's Newllm-stats.com

LLM Stats Logo Make AI phone calls with one API call # Claude Opus 4.7: Benchmarks, Pricing, Context & What's New Claude Opus 4.7 scores 87.6% on SWE-bench Verified, 94.2% on GPQA, 1M token context, 3.3x higher-resolution vision, new xhigh effort level. $5/$25 pricing. Jonathan Chavez The Takeaway Claude Opus 4.7 is a direct upgrade to Opus 4.6 at the same price ($5/$25 per million tokens), with 87.6% on SWE-bench Verified (+6.8pp), a new xhigh effort level, 3.3x higher-resolution vision, and self-verification on long-running agentic tasks. Claude Opus 4.7: Benchmarks, Pricing, Context & What…

- [17] Introducing Claude Opus 4.7 - Anthropicanthropic.com

CyberGym: Opus 4.6’s score has been updated from the originally reported 66.6 to 73.8, as we updated our harness parameters to better elicit cyber capability. SWE-bench Multimodal: We used an internal implementation for both Opus 4.7 and Opus 4.6. Scores are not directly comparable to public leaderboard scores. [...] Image 7: logo > Based on our internal research-agent benchmark, Claude Opus 4.7 has the strongest efficiency baseline we’ve seen for multi-step work. It tied for the top overall score across our six modules at 0.715 and delivered the most consistent long-context performance of an…

- [18] SWE-Bench Verified Leaderboard - LLM Statsllm-stats.com

| # | Model | Score | Size | Context | Cost | License | --- --- --- | 1 | Anthropic Claude Mythos Preview Anthropic | 0.939 | — | — | $25.00 / $125.00 | | | 2 | Anthropic Claude Opus 4.7 Anthropic | 0.876 | — | 1.0M | $5.00 / $25.00 | | | 3 | Anthropic Claude Opus 4.5 Anthropic | 0.809 | — | 200K | $5.00 / $25.00 | | | 4 | Anthropic Claude Opus 4.6 Anthropic | 0.808 | — | 1.0M | $5.00 / $25.00 | | | 5 | Google Gemini 3.1 Pro Google | 0.806 | — | 1.0M | $2.50 / $15.00 | | | 5 | DeepSeek DeepSeek-V4-Pro-MaxNew DeepSeek | 0.806 | 1.6T | 1.0M | $1.74 / $3.48 | | | 7 | MiniMax MiniMax M2.5 MiniMax…

- [19] Claude Opus 4.7 Benchmark Full Analysis: Empirical Data Leading ...help.apiyi.com

Q1: What is Claude Opus 4.7? Claude Opus 4.7 is the flagship Large Language Model released by Anthropic on April 16, 2026. It leads in multiple benchmarks, including coding (SWE-bench Verified 87.6%), Agent tool invocation, and scientific reasoning (GPQA Diamond 94.2%), outperforming GPT-5.4 and Gemini 3.1 Pro. Compared to Opus 4.6, it introduces the new "xhigh effort" deep reasoning mode, all while maintaining the same official pricing. Q2: Which is better, Claude Opus 4.7 or GPT-5.4? [...] ### Core Takeaways from Claude Opus 4.7 Benchmark Testing Anthropic released Claude Opus 4.7 on April…

- [20] Claude Opus 4.7 Benchmarks Explainedvellum.ai

Coding is the clear headline. SWE-bench Verified jumps from 80.8% to 87.6%, a nearly 7-point gain that puts Opus 4.7 ahead of Gemini 3.1 Pro (80.6%). On SWE-bench Pro, the harder multi-language variant, Opus 4.7 goes from 53.4% to 64.3%, leapfrogging both GPT-5.4 (57.7%) and Gemini (54.2%). Computer use takes a meaningful step. OSWorld-Verified climbs from 72.7% to 78.0%, ahead of GPT-5.4 (75.0%) and within 1.6 points of Mythos Preview (79.6%). Pair that with the 3x vision resolution upgrade and you have a model that's genuinely more capable at real UI interaction. Tool use is best-in-class.…

- [21] Claude Opus 4.7 and Every Anthropic Model Reviewed - Web Wallahwebwallah.in

Claude Opus 4.7 is the best generally available model Anthropic has ever built. But the more interesting story sits just above it – a model so capable that Anthropic has chosen, deliberately and publicly, not to release it to the world. That decision will define Anthropic more than any benchmark score. Read: ChatGPT 5.2 Features and Breakthroughs ## Frequently Asked Questions ### What is Claude Opus 4.7? Claude Opus 4.7 is Anthropic’s current flagship AI model, released April 16, 2026. It scores 87.6% on SWE-bench Verified, supports a 1M token context window, introduces 3.75-megapixel vision,…

- [22] Claude Opus 4.7 results: early benchmarks, real-world feedback ...boringbot.substack.com

The Claude Opus 4.7 benchmarks on software engineering tasks show the clearest improvement. On SWE-Bench, the industry-standard benchmark for evaluating autonomous code repair across real GitHub issues, Opus 4.7 shows a meaningful step up from Opus 4.6, with early reported scores suggesting improvements in the range of 8–12 percentage points depending on task category (Source: community-reported testing via r/ClaudeAI and independent evaluations). On HumanEval, which tests functional code generation, Opus 4.7 continues to perform competitively. [...] # The Production Gap # Claude Opus 4.7 res…

- [23] Opus 4.7 benchmarks released with improvements - Facebookfacebook.com

Image 2: May be an image of musical instrument, blueprint, floor plan and text that says 'Opus 4.7 Agentlccoding Opus 4.6 64.3% GPT-5.4 Agentic coding Landv Gemini 53.4% Pro 57.7% 87.6% MythusPrevicw Preview Agentlcterminal cading 54.2% 80.8% 77.8% 69.4% 80.6% 65.4% Multidisciplinary 46.9% 75.1% 93.9% 40.0% 68.5% 42.7% 54.7% .นะม่พล 82.0% Agentic seBrch KoHaKocTp 53.3% นำส'ว 44.4% โลตัล 58.7% Uiboa 56.8% Loor Scaled 83.7% 51.4% W-wcb 89.3% 77.3% computer 85.9% 75.8% 0SWorl ህስተና 86.9% 68.1% 78.0% analyals 73.9% 72.7% 75.0% 64.4% Cybersecurity 60.1% 79.6% 61.5% Oyber3m 73.1% 59.7% Graduate-leve…

- [24] DeepSeek-V4 arrives with near state-of-the-art intelligence at 1/6th ...venturebeat.com

Benchmark DeepSeek-V4-Pro-Max GPT-5.5 GPT-5.5 Pro, where shown Claude Opus 4.7 Best result among these GPQA Diamond 90.1% 93.6% — 94.2% Claude Opus 4.7 Humanity’s Last Exam, no tools 37.7% 41.4% 43.1% 46.9% Claude Opus 4.7 Humanity’s Last Exam, with tools 48.2% 52.2% 57.2% 54.7% GPT-5.5 Pro Terminal-Bench 2.0 67.9% 82.7% — 69.4% GPT-5.5 SWE-Bench Pro / SWE Pro 55.4% 58.6% — 64.3% Claude Opus 4.7 BrowseComp 83.4% 84.4% 90.1% 79.3% GPT-5.5 Pro MCP Atlas / MCPAtlas Public 73.6% 75.3% — 79.1% Claude Opus 4.7 The shared academic-reasoning results favor the closed models: On GPQA Diamond, DeepSeek-…

- [25] GPT-5.5 is here: benchmarks, pricing, and what changes ... - Appwriteappwrite.io

Star on GitHub 55.8KGo to Console Start building for free Sign upGo to Console Start building for free Products Docs Pricing Customers Blog Changelog Star on GitHub 55.8K Blog/GPT-5.5 is here: benchmarks, pricing, and what changes for developers Apr 24, 2026•8 min # GPT-5.5 is here: benchmarks, pricing, and what changes for developers OpenAI shipped GPT-5.5 on April 23, 2026. Here's a source-backed look at benchmarks, pricing versus GPT-5.4 and Claude Opus 4.7, the system card, and where the model still falls short. Image 13: Atharva Deosthale #### Atharva Deosthale Developer Advocate SHARE 7…

- [26] GPQA Leaderboard 2026 - Compare AI Model Scorespricepertoken.com

| Z Z AI | GLM 4.7 | $0.390 | $1.750 | 66.4 | Try | | AL Alibaba | Qwen3 30B A3B Instruct 2507 | $0.090 | $0.300 | 65.9 | Try | | Image 89: Anthropic Anthropic | Claude 3.7 Sonnet | $3.000 | $15.000 | 65.6 | Try | | X Xiaomi | MiMo-V2-Flash | $0.090 | $0.290 | 65.6 | Try | | Image 90: ByteDance Seed ByteDance Seed | Seed 2.0 Lite | $0.250 | $2.000 | 65.6 | Try | | Image 91: DeepSeek DeepSeek | DeepSeek V3 0324 | $0.200 | $0.770 | 65.5 | Try | | Image 92: Google Google | Gemini 2.5 Flash Lite Preview 09-2025 | $0.100 | $0.400 | 65.1 | Try | | Image 93: Anthropic Anthropic | Claude Haiku 4.5 |…

- [27] GPT-5.5 Benchmarks 2026: Scores, Rankings & Performancebenchlm.ai

Core Rankings Specialized Use Cases Dashboards Directories Guides & Lists Tools # GPT-5.5 According to BenchLM.ai, GPT-5.5 ranks #5 out of 112 models on the provisional leaderboard with an overall score of 89/100. It also ranks #2 out of 16 on the verified leaderboard. This places it among the top tier of AI models available in 2026, competing directly with the strongest models from leading AI labs. GPT-5.5 is a proprietary model with a 1M token context window. It uses explicit chain-of-thought reasoning, which typically improves performance on math and complex reasoning tasks at the cost of…

- [28] GPT-5.5: Pricing, Benchmarks & Performance - LLM Statsllm-stats.com

9Image 42GPT-5 mini 0.22 10Image 43o3 0.16 GPQAView → #4 of 10 Image 44: LLM Stats Logo A challenging dataset of 448 multiple-choice questions written by domain experts in biology, physics, and chemistry. Questions are Google-proof and extremely difficult, with PhD experts reaching 65% accuracy. More 1Image 45Claude Mythos Preview 0.95 2Image 46Gemini 3.1 Pro 0.94 3Image 47Claude Opus 4.7 0.94 4Image 48GPT-5.5 0.94 5Image 49GPT-5.2 Pro 0.93 6Image 50GPT-5.4 0.93 7Image 51GPT-5.2 0.92 8Image 52Gemini 3 Pro 0.92 9Image 53Claude Opus 4.6 0.91 10Image 54Kimi K2.6 0.91 Show 18 more Notice missing…

- [29] SWE-bench February 2026 leaderboard updatesimonwillison.net

Here's how the top ten models performed: Image 1: Bar chart showing "% Resolved" by "Model". Bars in descending order: Claude 4.5 Opus (high reasoning) 76.8%, Gemini 3 Flash (high reasoning) 75.8%, MiniMax M2.5 (high reasoning) 75.8%, Claude Opus 4.6 75.6%, GLM-5 (high reasoning) 72.8%, GPT-5.2 (high reasoning) 72.8%, Claude 4.5 Sonnet (high reasoning) 72.8%, Kimi K2.5 (high reasoning) 71.4%, DeepSeek V3.2 (high reasoning) 70.8%, Claude 4.5 Haiku (high reasoning) 70.0%, and a partially visible final bar at 66.6%. [...] Correction: _The Bash only benchmark runs against SWE-bench Verified, not…

- [30] AI Model Benchmarks Apr 2026 | Compare GPT-5, Claude ...lmcouncil.ai

METR Time Horizons | | Model | Minutes | --- | 1 | Claude Opus 4.6 (unknown thinking) | 718.8 ±1815.2 | | 2 | GPT-5.2 (high) | 352.2 ±335.5 | | 3 | GPT-5.3 Codex | 349.5 ±333.1 | | 4 | Claude Opus 4.5 (no thinking) | 293.0 ±239.0 | | 5 | Claude Opus 4.5 (16k thinking) | 288.9 ±558.2 | ### SWE-bench Verified | | Model | Score | --- | 1 | Claude Opus 4.7 (max) | 83.5% ±1.7 | | 2 | Claude Opus 4.6 (high) | 78.7% ±1.9 | | 3 | GPT-5.4 (high) | 76.9% ±1.9 | | 4 | Claude Opus 4.5 (no thinking) | 76.7% ±1.9 | | 5 | Gemini 3.1 Pro Preview | 75.6% ±2.0 | ### GPQA Diamond [...] # AI Model Benchmarks…

- [31] GPT 5.5vals.ai

2/17/2026 Anthropic Claude Sonnet 4.6 2/16/2026 Alibaba Qwen 3.5 Plus 2/12/2026 MiniMax MiniMax-M2.5 2/12/2026 MiniMax MiniMax-M2.5 2/11/2026 zAI GLM 5 2/5/2026 Anthropic Claude Opus 4.6 (Nonthinking) 2/5/2026 Anthropic Claude Opus 4.6 (Thinking) 1/26/2026 Moonshot AI Kimi K2.5 1/23/2026 Alibaba Qwen 3 Max Thinking 12/23/2025 MiniMax MiniMax-M2.1 12/22/2025 zAI GLM 4.7 12/17/2025 Google Gemini 3 Flash (12/25) 12/17/2025 Xiaomi MiMo V2 Flash openai/gpt-5.5 Release Date: 4/23/2026 Accuracy (Vals Index) 67.76% ± 1.79 Latency (Vals Index) 409.09s Cost/Test (Vals Index) Context Window 1M Max Outpu…

- [32] LLM Benchmarks 2026: MMLU, GPQA Diamond, HLE ... - CodeSOTAcodesota.com

| # | Model | Vendor | pass@1 | --- --- | | 01 | Gemini 3 Pro Preview | 91.7% | | 02 | Gemini 3 Flash | Google | 90.8% | | 03 | GPT-5 | OpenAI | 85% | | 04 | Grok 4 | xAI | 79% | | 05 | Gemini 2.5 Pro | Google | 75.6% | | 06 | DeepSeek-R1-0528 | DeepSeek | 73.3% | | 07 | o4-mini | OpenAI | 72.8% | | 08 | Qwen3-235B-A22B | Alibaba | 70.7% | | 09 | o3-mini | OpenAI | 66.9% | | 10 | DeepSeek R1 | DeepSeek | 65.9% | | 11 | o3 | OpenAI | 65.3% | | 12 | DeepSeek-R1-Distill-Llama-70B | DeepSeek | 65.2% | | 13 | Gemini 2.5 Flash | Google | 63.9% | | 14 | Kimi k1.5 | Moonshot AI | 62.5% | | 15 | DeepS…

- [33] LLM Leaderboard 2026 — Compare Top AI Models - Vellumvellum.ai

93.6% GPT-5.5 92.4% GPT 5.2 91.9% Gemini 3 Pro Best in Reasoning (GPQA Diamond)| Model | Score | --- | | Claude 3 Opus | 95.4% | | Claude Opus 4.7 | 94.2% | | GPT-5.5 | 93.6% | | GPT 5.2 | 92.4% | | Gemini 3 Pro | 91.9% | ### Best in High School Math (AIME 2025) 100%96%93%89%86% 100% Gemini 3 Pro 100% GPT 5.2 99.8% Claude Opus 4.6 99.1% Kimi K2 Thinking 98.7% GPT oss 20b Best in High School Math (AIME 2025)| Model | Score | --- | | Gemini 3 Pro | 100% | | GPT 5.2 | 100% | | Claude Opus 4.6 | 99.8% | | Kimi K2 Thinking | 99.1% | | GPT oss 20b | 98.7% | ### Best in Agentic Coding (SWE Bench) 90…

- [34] OpenAI Releases GPT-5.5 With State-of-the-Art Scores on Coding, Science, and Computer Uselinkedin.com

The Coding Case The strongest benchmark improvements show up in agentic coding. On Expert-SWE, an internal evaluation covering long-horizon coding tasks that OpenAI estimates take human engineers a median of 20 hours to complete, GPT-5.5 scores 73.1% against GPT-5.4's 68.5%. The gains hold on Terminal-Bench 2.0 and SWE-Bench Pro as well, and across all three, GPT-5.5 uses fewer tokens to get there. [...] The week of April 14, 2026 produced three significant AI releases aimed at the same enterprise audience. Alibaba… ### Google's New Deep Research Max Agent Scores 93% on Benchmarks Google'…

- [35] Perspective helps! GPT-5.5 underperforms Mythos on: - SWE-Bench ...x.com

leo 🐾 on X: "Perspective helps! GPT-5.5 underperforms Mythos on: - SWE-Bench Pro - HLE It is basically on-par on: - GPQA Diamond - BrowseComp - OSWorld-Verified It is better on: - Terminal-Bench 2.0 All while being more token efficient, smaller and cheaper than Mythos (and actually" / X Don’t miss what’s happening People on X are the first to know. Log in Sign up # Quote Image 3 leo Image 4: 🐾 @synthwavedd · Apr 23 GPT-5.5 benchmarks are out Benchmark results are more incremental, but in real world use it feels like a larger jump, especially for 5.5 Pro in my experience. Sort of similar t…

- [36] GPT-5.5 Doubles the Price, Google Goes Full Agent, DeepSeek V4 ...thecreatorsai.com

GPT-5.5 is out — $5 per million input, $30 per million output. That's exactly double GPT-5.4 and 20% more than Claude Opus 4.7. OpenAI released ... 21 hours ago

- [37] OpenAI's GPT-5.5 is the new leading AI model - Artificial Analysisartificialanalysis.ai

➤ Effort a clear ladder for balancing intelligence and cost: GPT-5.5 (medium) scores the same as Claude Opus 4.7 (max) on our Intelligence Index ... 2 days ago

- [38] Introducing GPT-5.5 - OpenAIopenai.com

Introducing GPT-5.5, our smartest model yet—faster, more capable, and built for complex tasks like coding, research, and data analysis ... 2 days ago

- [39] DeepSeek V4 is here: How it compares to ChatGPT, Claude, Geminimashable.com

DeepSeek V4 Preview costs about 85 percent less than GPT-5.5. See how the new open-source model compares to its U.S. rivals.

- [40] GPT 5.5 is out. Here are the benchmarks. - Facebookfacebook.com

Anthropic published a benchmark chart showing Opus 4.7 beating GPT-5.4 on almost every category. But they also included their unreleased ... 2 days ago

- [41] GPT 5.5 beats Claude Opus 4.7 : r/ArtificialInteligence - Redditreddit.com

My whole job is to evaluate these models for different tasks. Since sonnet 3.5 no GPT model has ever outperformed a Claude model. 2 days ago

- [42] GPT-5.5 VS Deepseek V4 Pro VS Opus 4.7: I tested THEM ... - Redditreddit.com

4.5K subscribers in the PostAI community. Welcome to PostAI, a dedicated community for all things artificial intelligence. 15 hours ago

- [43] GPT-5.5 VS Deepseek V4 Pro VS Opus 4.7 - YouTubeyoutube.com

In this video, I'll be comparing GPT 5.5, Deepseek V4, and Opus 4.7 across a series of coding and frontend benchmarks to see which model ... 1 day ago