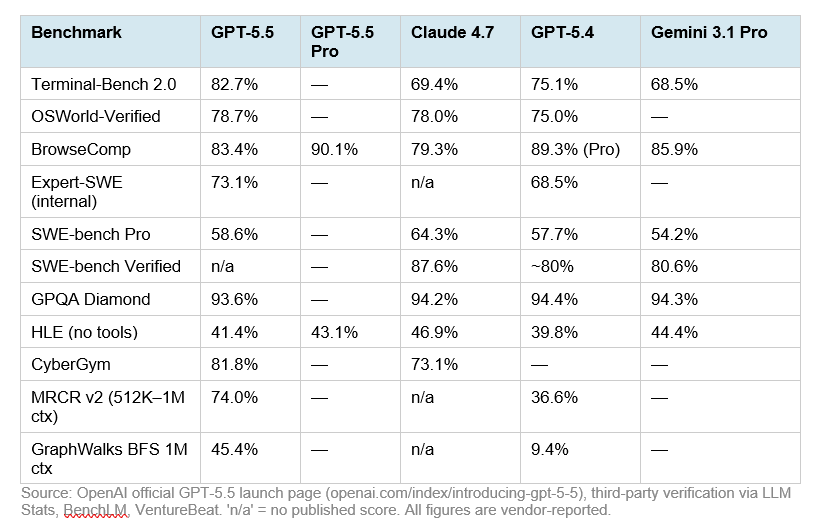

Benchmark tables make these four models look easy to rank, but the evidence points to different winners for different jobs. The strongest shared table has Claude Opus 4.7 ahead on GPQA Diamond and Humanity’s Last Exam without tools, GPT-5.5 ahead on Terminal-Bench 2.0, and GPT-5.5 Pro ahead on Humanity’s Last Exam with tools and BrowseComp [4]. Separate reporting shows GPT-5.5 leading Claude on OSWorld-Verified and FrontierMath, while Claude Opus 4.7 is reported #1 in Vision & Document Arena [5][1].

The practical takeaway: use the benchmark category that matches your workload, not a single overall leaderboard.

Quick verdict

| Workload | Best-supported leader | Evidence |
|---|---|---|
| Science reasoning | Claude Opus 4.7 | 94.2% on GPQA Diamond, ahead of GPT-5.5 at 93.6% and DeepSeek-V4-Pro-Max at 90.1% |
| No-tools exam reasoning | Claude Opus 4.7 | 46.9% on Humanity’s Last Exam without tools |
| Tool-augmented exam reasoning | GPT-5.5 Pro | 57.2% on Humanity’s Last Exam with tools |
| Terminal and agentic computing | GPT-5.5 | 82.7% on Terminal-Bench 2.0 |
| OS operation | GPT-5.5 | 78.7% on OSWorld-Verified versus Claude Opus 4.7 at 78.0% |
| Frontier math | GPT-5.5 | 51.7% on FrontierMath Tiers 1–3 versus Claude Opus 4.7 at 43.8% |
| Software engineering in the shared table | Claude Opus 4.7 | 64.3% on SWE-Bench Pro / SWE Pro |
| Browsing | GPT-5.5 Pro | 90.1% on BrowseComp |
| Public tool workflow / MCP | Claude Opus 4.7 | 79.1% on MCP Atlas / MCPAtlas Public |
| Vision and document work | Claude Opus 4.7 | Reported #1 in Vision & Document Arena |
| Cost-performance claim | DeepSeek V4 | Reported near state-of-the-art at about one-sixth the cost of Opus 4.7 and GPT-5.5, but the excerpt does not expose full pricing methodology |
| Most under-comparable model | Kimi K2.6 | Useful reported scores, but fewer clean four-way comparisons against GPT-5.5, Claude Opus 4.7 and DeepSeek V4 |

Benchmark comparison table

| Benchmark / capability | GPT-5.5 | GPT-5.5 Pro | Claude Opus 4.7 | DeepSeek V4 | Kimi K2.6 | Best-supported read |
|---|---|---|---|---|---|---|
| GPQA Diamond | 93.6% | Not provided | 94.2% | 90.1% for DeepSeek-V4-Pro-Max | Not provided | Claude Opus 4.7 leads |
| Humanity’s Last Exam, no tools | 41.4% | 43.1% | 46.9% | 37.7% for DeepSeek-V4-Pro-Max | Not provided | Claude Opus 4.7 leads |
| Humanity’s Last Exam, with tools | 52.2% | 57.2% | 54.7% | 48.2% for DeepSeek-V4-Pro-Max | 54.0% in a separate Kimi comparison | GPT-5.5 Pro leads the shared table |
| Terminal-Bench 2.0 | 82.7% | Not provided | 69.4% | 67.9% for DeepSeek-V4-Pro-Max | 66.7% in a separate Kimi comparison | GPT-5.5 leads |
| SWE-Bench Pro / SWE Pro | 58.6% | Not provided | 64.3% | 55.4% for DeepSeek-V4-Pro-Max | 58.6% in a separate Kimi comparison | Claude Opus 4.7 leads the shared table |
| BrowseComp | 84.4% | 90.1% | 79.3% | 83.4% for DeepSeek-V4-Pro-Max | 83.2% in a Kimi vs DeepSeek comparison | GPT-5.5 Pro leads the shared table |
| MCP Atlas / MCPAtlas Public | 75.3% | Not provided | 79.1% | 73.6% for DeepSeek-V4-Pro-Max | Not provided | Claude Opus 4.7 leads |
| OSWorld-Verified | 78.7% | Not provided | 78.0% | Not provided | Not provided | GPT-5.5 leads Claude in the cited comparison |
| FrontierMath Tiers 1–3 | 51.7% | Not provided | 43.8% | Not provided | Not provided | GPT-5.5 leads Claude in the cited comparison |
| Vision & Document Arena | Not provided | Not provided | Reported #1 overall | Not provided | Not provided | Claude Opus 4.7 has the only cited result |
| AIME 2026 | Not provided | Not provided | Not provided | Not available in the cited Kimi vs DeepSeek table | 96.4% in Thinking mode | Useful Kimi data, not a four-way ranking |
| APEX Agents | Not provided | Not provided | Not provided | Not available in the cited Kimi vs DeepSeek table | 27.9% in Thinking mode | Useful Kimi data, not a four-way ranking |
| Context window | Not provided | Not provided | 1,000k tokens in one Artificial Analysis comparison | 1,000k tokens for DeepSeek V4 Pro in the same comparison | Not provided | Claude Opus 4.7 and DeepSeek V4 Pro match in that comparison |
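
The "best-supported read" for the shared-table rows can be recomputed from the figures themselves. The following is a minimal Python sketch rather than anything published by the cited sources: `SCORES` and `leader_and_margin` are names introduced here for illustration, and the Kimi K2.6 figures are deliberately left out because they come from separate comparisons.

```python
# Percent scores copied from the shared-table rows above.
# Kimi K2.6 is omitted because its figures come from separate comparisons.
SCORES = {
    "GPQA Diamond": {
        "GPT-5.5": 93.6, "Claude Opus 4.7": 94.2, "DeepSeek-V4-Pro-Max": 90.1,
    },
    "HLE, no tools": {
        "GPT-5.5": 41.4, "GPT-5.5 Pro": 43.1,
        "Claude Opus 4.7": 46.9, "DeepSeek-V4-Pro-Max": 37.7,
    },
    "HLE, with tools": {
        "GPT-5.5": 52.2, "GPT-5.5 Pro": 57.2,
        "Claude Opus 4.7": 54.7, "DeepSeek-V4-Pro-Max": 48.2,
    },
    "Terminal-Bench 2.0": {
        "GPT-5.5": 82.7, "Claude Opus 4.7": 69.4, "DeepSeek-V4-Pro-Max": 67.9,
    },
    "SWE-Bench Pro": {
        "GPT-5.5": 58.6, "Claude Opus 4.7": 64.3, "DeepSeek-V4-Pro-Max": 55.4,
    },
    "BrowseComp": {
        "GPT-5.5": 84.4, "GPT-5.5 Pro": 90.1,
        "Claude Opus 4.7": 79.3, "DeepSeek-V4-Pro-Max": 83.4,
    },
    "MCP Atlas": {
        "GPT-5.5": 75.3, "Claude Opus 4.7": 79.1, "DeepSeek-V4-Pro-Max": 73.6,
    },
}


def leader_and_margin(row):
    """Return (leading model, lead over the runner-up in percentage points)."""
    ranked = sorted(row.items(), key=lambda item: item[1], reverse=True)
    (top_model, top_score), (_, second_score) = ranked[0], ranked[1]
    return top_model, round(top_score - second_score, 1)


for benchmark, row in SCORES.items():
    model, margin = leader_and_margin(row)
    print(f"{benchmark}: {model} leads by {margin} points")
```

Printing the margin alongside the leader makes it easier to see which rows are near-ties and which are wide leads.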

GPT-5.5 and GPT-5.5 Pro: strongest on terminal, OS, math and tool use

GPT-5.5’s clearest win in the provided evidence is Terminal-Bench 2.0, where it scores 82.7% versus Claude Opus 4.7 at 69.4% and DeepSeek-V4-Pro-Max at 67.9% [4][5]. It also edges Claude Opus 4.7 on OSWorld-Verified, 78.7% to 78.0%, and leads more clearly on FrontierMath Tiers 1–3, 51.7% to 43.8% [5].

GPT-5.5 does not lead every reasoning benchmark. In the shared VentureBeat table, Claude Opus 4.7 narrowly leads GPQA Diamond at 94.2%, while GPT-5.5 scores 93.6% and DeepSeek-V4-Pro-Max scores 90.1% [4].

GPT-5.5 Pro changes the picture when tools are allowed. It leads Humanity’s Last Exam with tools at 57.2%, ahead of Claude Opus 4.7 at 54.7%, GPT-5.5 at 52.2%, and DeepSeek-V4-Pro-Max at 48.2% [4]. It also leads BrowseComp at 90.1%, ahead of GPT-5.5 at 84.4%, DeepSeek-V4-Pro-Max at 83.4%, and Claude Opus 4.7 at 79.3% [4].

OpenAI’s own GPT-5.5 announcement reports GPT-5.5 at 95.0% on ARC-AGI-1 Verified and 85.0% on ARC-AGI-2 Verified, but it also notes that GPT evaluations were run with reasoning effort set to xhigh in a research environment that may differ from production ChatGPT [8]. Those ARC results are useful GPT-5.5 context, but the supplied excerpt does not include DeepSeek V4 or Kimi K2.6, so it cannot settle this four-way comparison [8].

Some GPT-5.5-only domain results are also promising: 91.7% on Harvey BigLaw Bench, 88.5% on an internal investment-banking benchmark, and 80.5% on BixBench bioinformatics [7]. Treat those as domain context rather than direct comparison evidence because the supplied excerpt does not report the same benchmarks for Claude Opus 4.7, DeepSeek V4 and Kimi K2.6 [7].

Claude Opus 4.7: strongest cited science, no-tools reasoning and documents

Claude Opus 4.7 has the best academic-reasoning profile in the main shared table. It leads GPQA Diamond at 94.2% and Humanity’s Last Exam without tools at 46.9% [4]. It also leads SWE-Bench Pro / SWE Pro at 64.3% and MCP Atlas / MCPAtlas Public at 79.1% in that table [4].

Claude’s weaker spot in the cited data is terminal and OS-style operation. GPT-5.5 leads Claude Opus 4.7 by more than 13 points on Terminal-Bench 2.0, 82.7% to 69.4%, and also leads Claude on OSWorld-Verified and FrontierMath Tiers 1–3 in the cited GPT-5.5 comparison [4][5].

Claude has the strongest multimodal and document signal in the supplied evidence. One source reports Claude Opus 4.7 taking #1 in Vision & Document Arena, improving by 4 points over Opus 4.6 in Document Arena, and winning diagram, homework and OCR subcategories [1]. The excerpt does not provide numeric Vision & Document Arena scores for GPT-5.5, DeepSeek V4 or Kimi K2.6, so this supports Claude’s document strength but not a complete four-way multimodal ranking [1].

DeepSeek V4: competitive, cheaper on the cited claim, but not the benchmark leader here

The supplied evidence uses more than one DeepSeek label: DeepSeek-V4-Pro-Max appears in the VentureBeat benchmark table, while DeepSeek V4 Pro appears in an Artificial Analysis context-window comparison [4][3]. That matters because benchmark conclusions should not be assumed to transfer perfectly across variants.

In the main shared table, DeepSeek-V4-Pro-Max is competitive but does not lead any row. It scores 90.1% on GPQA Diamond, 37.7% on Humanity’s Last Exam without tools, 48.2% on Humanity’s Last Exam with tools, 67.9% on Terminal-Bench 2.0, 55.4% on SWE-Bench Pro / SWE Pro, 83.4% on BrowseComp, and 73.6% on MCP Atlas / MCPAtlas Public [4].

DeepSeek’s most important product claim is cost-performance. VentureBeat describes DeepSeek V4 as delivering near state-of-the-art intelligence at about one-sixth the cost of Opus 4.7 and GPT-5.5 [4]. The excerpt does not include enough detail to verify the pricing basis, workload assumptions, latency tradeoffs or token normalization behind that comparison, so teams should validate cost and quality on their own tasks before treating the ratio as universal [4].
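
One way to run that validation is to compare cost per successfully completed task rather than raw price. The sketch below is illustrative only: `effective_cost` is a hypothetical helper, and the prices and pass rates in the example are made-up placeholders, not figures from any cited source. Substitute measurements from your own workload.

```python
def effective_cost(cost_per_task: float, pass_rate: float) -> float:
    """Expected spend per successful task, assuming failed attempts are retried or discarded."""
    if not 0 < pass_rate <= 1:
        raise ValueError("pass_rate must be in the interval (0, 1]")
    return cost_per_task / pass_rate


# Placeholder inputs (NOT measured data): a pricier model that passes 90% of tasks
# and a model at one-sixth the nominal price that passes 60% of tasks.
expensive = effective_cost(cost_per_task=6.0, pass_rate=0.9)
cheap = effective_cost(cost_per_task=1.0, pass_rate=0.6)

print(f"Nominal price ratio: {6.0 / 1.0:.1f}x")
print(f"Ratio per successful task: {expensive / cheap:.1f}x")
```

With these placeholder inputs the advantage per successful task is smaller than the nominal price ratio, which is why a headline cost multiple should be rechecked against quality on your own tasks.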

For long-context screening, Artificial Analysis lists both DeepSeek V4 Pro and Claude Opus 4.7 with 1,000k-token context windows in one comparison [3]. That supports parity for those two listed configurations, not a broader claim about every DeepSeek or Claude mode [3].

Kimi K2.6: promising scores, but the four-way evidence is thinner

Kimi K2.6 is the hardest model to rank cleanly against the other three because the supplied evidence is less complete as a four-way comparison. A Kimi-focused comparison reports Kimi K2.6 at 58.6% on SWE-Bench Pro, 80.2% on SWE-Bench Verified, 66.7% on Terminal-Bench 2.0, 54.0% on Humanity’s Last Exam with tools, and 89.6% on LiveCodeBench v6 [13]. The same source says those K2.6 numbers come from a Moonshot AI official model card, but its comparison set is mainly Claude Opus 4.6 and GPT-5.4 rather than GPT-5.5, Claude Opus 4.7 and DeepSeek V4 together [13].

A separate Kimi vs DeepSeek comparison reports Kimi K2.6 at 96.4% on AIME 2026 in Thinking mode, 27.9% on APEX Agents in Thinking mode, and 83.2% on BrowseComp with Thinking mode and context management [11]. In that same excerpt, DeepSeek-V4 Pro is listed at 83.4% on BrowseComp, while DeepSeek values are not available for AIME 2026 and APEX Agents [11].

There is also a Reddit snippet claiming DeepSeek V4 is the #1 open-weight model on a Vibe Code Benchmark and Kimi K2.6 is #2 [18]. Because that snippet is user-generated and lacks a score table or methodology, it should not drive the main ranking [18].

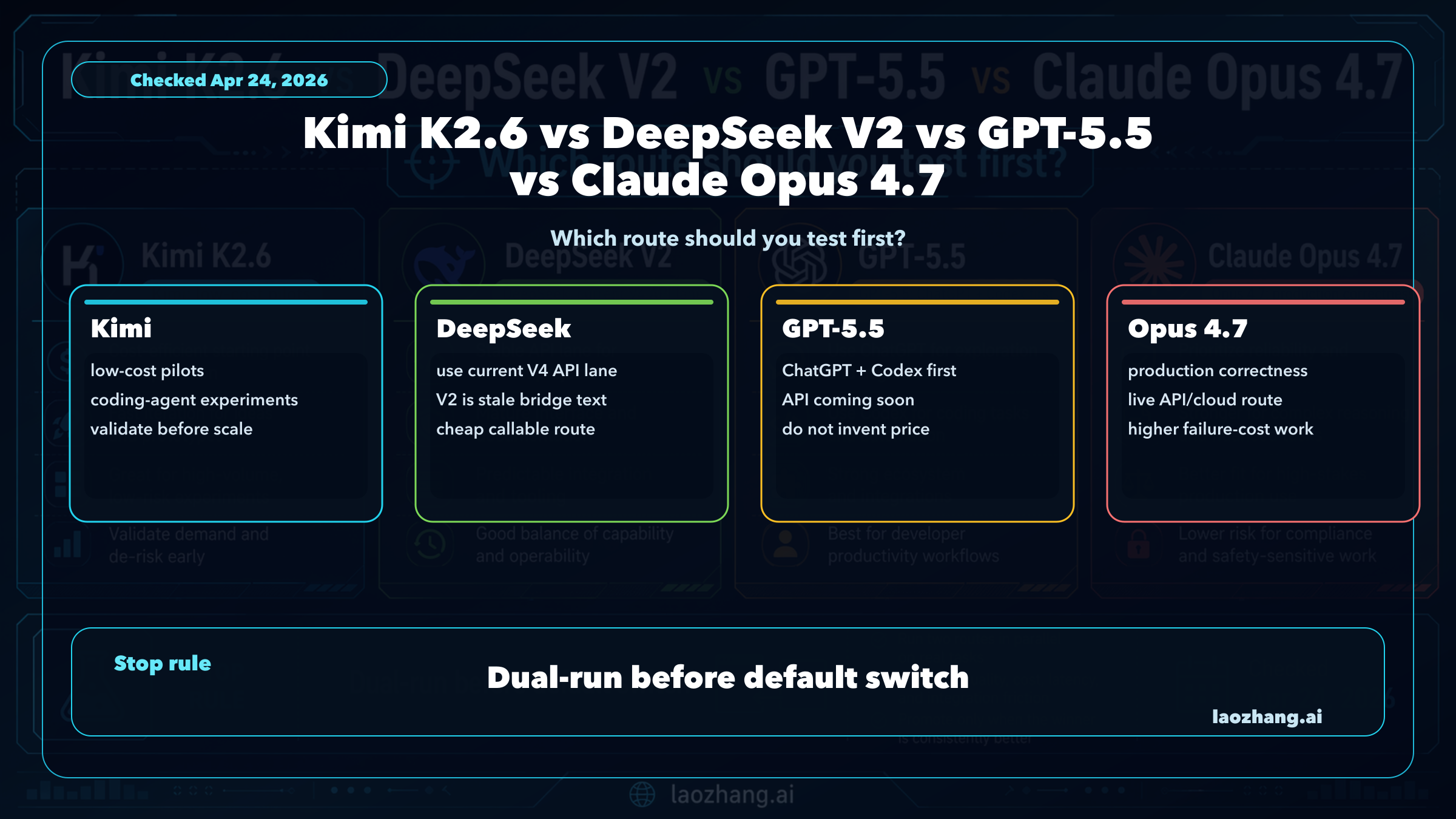

Which model should you test first?

- Test Claude Opus 4.7 first for science reasoning, no-tools expert Q&A, SWE-Bench-style software engineering, MCP-style workflows, and document-heavy multimodal work; those are Claude’s strongest cited areas [4][1].

- Test GPT-5.5 first for terminal-heavy agents, OS-operation tasks, and frontier math work; it leads the cited Terminal-Bench 2.0, OSWorld-Verified and FrontierMath results [4][5].

- Test GPT-5.5 Pro first when tool-augmented reasoning or browsing is central; it leads Humanity’s Last Exam with tools and BrowseComp in the shared table [4].

- Test DeepSeek V4 first when cost-performance is the primary constraint and you can run your own quality checks; the main cited advantage is the reported near-frontier performance at about one-sixth the cost of Opus 4.7 and GPT-5.5 [4].

- Test Kimi K2.6 first if you specifically want to evaluate its reported coding, agentic and browsing scores, but run the same prompts and harnesses yourself because the available Kimi data is less complete as a direct four-way comparison [11][13].

Evidence caveats

The benchmark picture is useful, but it is not a universal leaderboard. The evidence mixes GPT-5.5, GPT-5.5 Pro, DeepSeek-V4-Pro-Max, DeepSeek V4 Pro, Claude Opus 4.7 and Kimi K2.6 results from different sources and settings [3][4][5][11][13]. At least one GPT-5.5 comparison notes that benchmark values are vendor-reported, and OpenAI’s own ARC reporting says its GPT evaluations were run in a research environment with xhigh reasoning effort [5][8].

That does not make the numbers useless. It means close results should be treated as directional, while large gaps are more actionable. In the supplied evidence, the large actionable gaps are GPT-5.5’s Terminal-Bench lead, GPT-5.5’s FrontierMath lead over Claude, Claude’s Vision & Document Arena signal, and GPT-5.5 Pro’s tool-augmented HLE lead [4][5][1].
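
The directional-versus-actionable distinction can be made explicit with a simple cutoff. This is a sketch under a stated assumption: the article gives no numeric threshold, so the 2.0-point value in `ACTIONABLE_GAP_POINTS` is a hypothetical choice, and `classify_gap` is a helper name introduced here. The score pairs are the two-model comparisons quoted earlier in the article.

```python
# Hypothetical cutoff: the article does not define one, so 2.0 points is an assumption.
ACTIONABLE_GAP_POINTS = 2.0


def classify_gap(leader_score, runner_up_score, threshold=ACTIONABLE_GAP_POINTS):
    """Return (gap in percentage points, 'actionable' or 'directional')."""
    gap = round(leader_score - runner_up_score, 1)
    label = "actionable" if gap >= threshold else "directional"
    return gap, label


# (leader score, runner-up score) pairs quoted in the article, in percent.
COMPARISONS = {
    "Terminal-Bench 2.0, GPT-5.5 vs Claude Opus 4.7": (82.7, 69.4),
    "FrontierMath Tiers 1-3, GPT-5.5 vs Claude Opus 4.7": (51.7, 43.8),
    "HLE with tools, GPT-5.5 Pro vs Claude Opus 4.7": (57.2, 54.7),
    "OSWorld-Verified, GPT-5.5 vs Claude Opus 4.7": (78.7, 78.0),
    "GPQA Diamond, Claude Opus 4.7 vs GPT-5.5": (94.2, 93.6),
}

for name, (leader, runner_up) in COMPARISONS.items():
    gap, label = classify_gap(leader, runner_up)
    print(f"{name}: {gap} points ({label})")
```

A stricter or looser threshold will move borderline rows between the two labels, so pick the cutoff based on how much run-to-run variance you see in your own evaluations.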

For production decisions, the best benchmark is still your own task set: same prompts, same tool access, same context size, same latency constraints, and the same scoring rules across all candidate models.
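
A like-for-like comparison of that kind can be sketched as a small harness. Everything below is hypothetical scaffolding: `Task`, `exact_match`, `evaluate` and the two stub models are names introduced for illustration, and the stubs stand in for real API clients that you would wrap yourself. The point is only that every candidate sees the same tasks and is graded by the same scorer.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Task:
    prompt: str
    expected: str


def exact_match(output: str, expected: str) -> bool:
    """One shared scoring rule applied to every model."""
    return output.strip().lower() == expected.strip().lower()


def evaluate(
    models: Dict[str, Callable[[str], str]],
    tasks: List[Task],
    scorer: Callable[[str, str], bool] = exact_match,
) -> Dict[str, float]:
    """Run every model on the same tasks and return each model's pass rate."""
    if not tasks:
        raise ValueError("tasks must not be empty")
    results = {}
    for name, call_model in models.items():
        passed = sum(scorer(call_model(task.prompt), task.expected) for task in tasks)
        results[name] = passed / len(tasks)
    return results


# Stand-in models for demonstration only; replace with wrappers around real clients.
def stub_a(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "unknown"


def stub_b(prompt: str) -> str:
    return "unknown"


demo_tasks = [Task("What is 2 + 2?", "4"), Task("What is the capital of France?", "Paris")]
print(evaluate({"stub_a": stub_a, "stub_b": stub_b}, demo_tasks))
```

In practice the wrappers would also need to hold tool access, context size and latency limits constant across models; those controls are outside the scope of this sketch.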