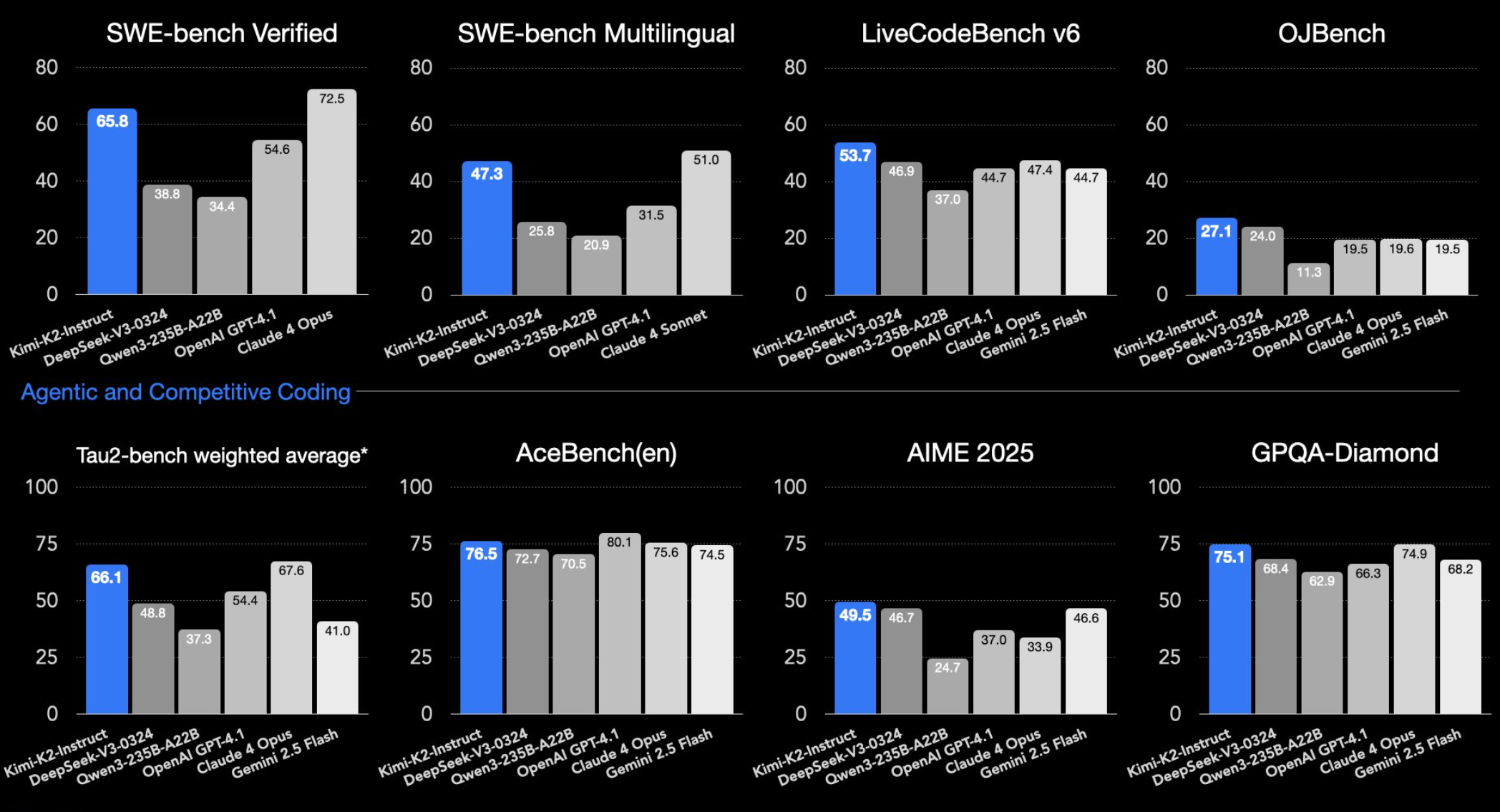

Benchmark charts make this look like a simple race, but this comparison is not a clean single leaderboard. The closest shared table among the cited sources compares GPT-5.5, GPT-5.5 Pro, Claude Opus 4.7, and DeepSeek-V4-Pro-Max; Kimi K2.6 is reported in separate Kimi-focused sources, including release coverage, Hugging Face, and LLM Stats [1][6][11][24]. Use the numbers as a shortlist for testing, not as a final universal ranking.

For DeepSeek V4, this article uses DeepSeek-V4-Pro-Max because that is the DeepSeek V4 variant with benchmark and cost rows in the cited sources [18][24]. GPT-5.5 and GPT-5.5 Pro are also kept separate where the sources report different results [24].

Quick verdict

- Best terminal-style coding-agent signal: GPT-5.5, with 82.7% on Terminal-Bench 2.0 in the shared comparison [24].

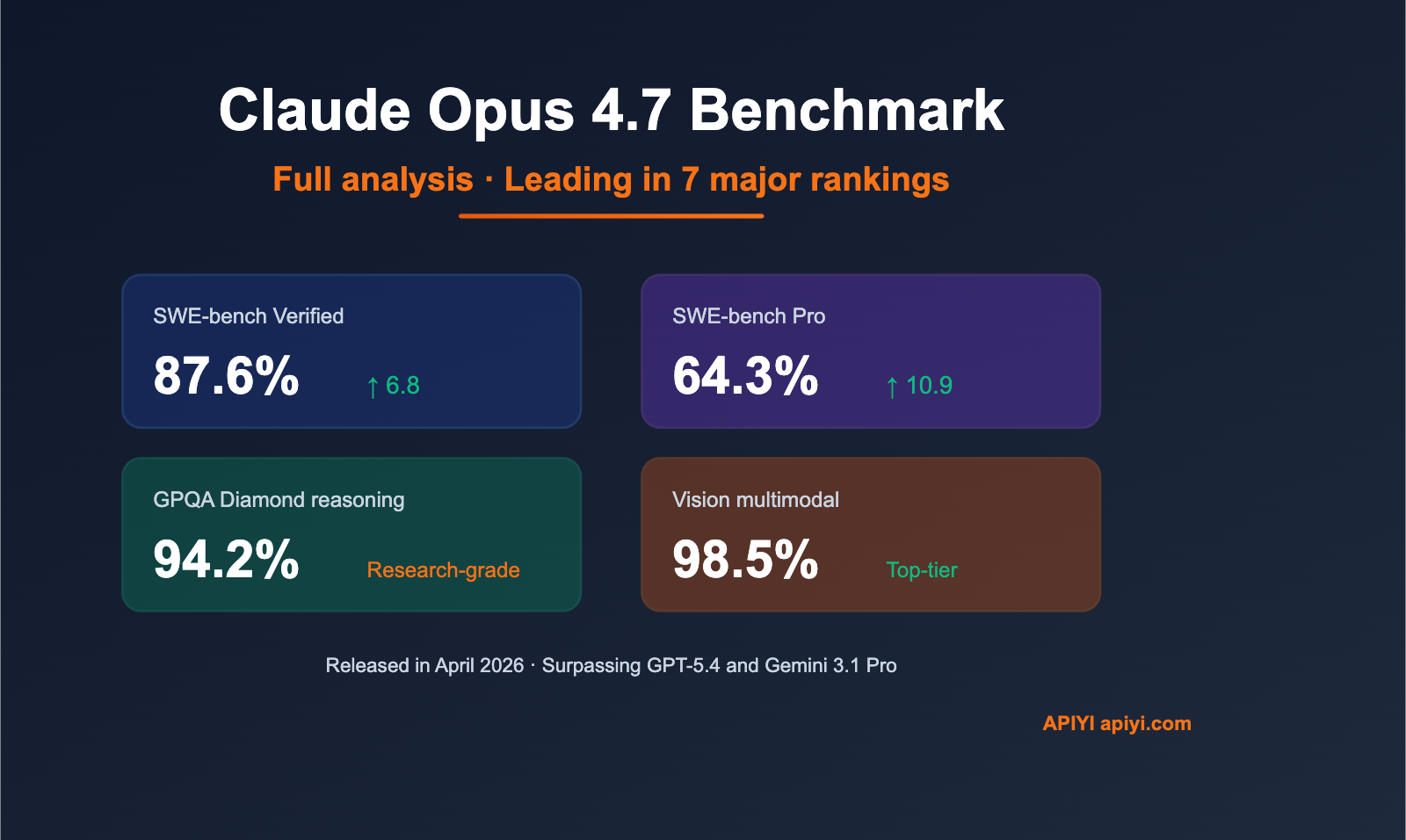

- Best cited software-repair signal: Claude Opus 4.7, with 64.3% on SWE-Bench Pro and 87.6% on SWE-Bench Verified in the cited data [18][24].

- Best open-weight candidate: Kimi K2.6, described as an open-weight 1T-parameter MoE model with 32B active parameters and strong coding benchmark rows on its Hugging Face model card [1][6].

- Best value candidate to validate: DeepSeek-V4-Pro-Max, which LLM Stats lists with 1.6T size, 1M context, 80.6% on SWE-Bench Verified, and $1.74/$3.48 in its cost columns [18].

- Key caveat: GPT-5.5 Pro is not the same comparison point as base GPT-5.5; in the shared table it leads BrowseComp and HLE with tools, the rows where it is reported [24].

Benchmark comparison table

A dash means the score was not found in the cited sources for that model, not that the model scored zero. The GPT-5.5, GPT-5.5 Pro, Claude Opus 4.7, and DeepSeek-V4-Pro-Max rows mostly come from one shared comparison, while Kimi K2.6 figures come from separate Kimi sources [1][6][24].

| Benchmark | GPT-5.5 | GPT-5.5 Pro | Claude Opus 4.7 | Kimi K2.6 | DeepSeek-V4-Pro-Max |
|---|---|---|---|---|---|
| GPQA Diamond | 93.6% | — | 94.2% | ≈91% | 90.1% |
| Humanity’s Last Exam, no tools | 41.4% | 43.1% | 46.9% | — | 37.7% |
| Humanity’s Last Exam, with tools | 52.2% | 57.2% | 54.7% | 54.0% | 48.2% |
| Terminal-Bench 2.0 | 82.7% | — | 69.4% | 66.7% | 67.9% |
| SWE-Bench Pro | 58.6% | — | 64.3% | 58.6% | 55.4% |
| BrowseComp | 84.4% | 90.1% | 79.3% | 83.2% | 83.4% |
| MCP Atlas / MCPAtlas Public | 75.3% | — | 79.1% | — | 73.6% |
| SWE-Bench Verified | — | — | 87.6% | 80.2% | 80.6% |
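To make the "dash is not zero" rule concrete, here is a minimal Python sketch that transcribes the table above and finds the leader of each row among the models that actually have a reported score. The names `MODELS`, `SCORES`, and `leader` are illustrative, not from any cited source, and the Kimi K2.6 GPQA Diamond value is entered as 91.0 even though the source gives it only approximately.

```python
from typing import Optional

MODELS = ["GPT-5.5", "GPT-5.5 Pro", "Claude Opus 4.7", "Kimi K2.6", "DeepSeek-V4-Pro-Max"]

# Scores in percent, in MODELS order. None marks a dash in the table:
# the score was not found in the cited sources, which is not the same as 0.0.
SCORES: dict[str, list[Optional[float]]] = {
    "GPQA Diamond": [93.6, None, 94.2, 91.0, 90.1],  # Kimi value is approximate
    "HLE, no tools": [41.4, 43.1, 46.9, None, 37.7],
    "HLE, with tools": [52.2, 57.2, 54.7, 54.0, 48.2],
    "Terminal-Bench 2.0": [82.7, None, 69.4, 66.7, 67.9],
    "SWE-Bench Pro": [58.6, None, 64.3, 58.6, 55.4],
    "BrowseComp": [84.4, 90.1, 79.3, 83.2, 83.4],
    "MCP Atlas": [75.3, None, 79.1, None, 73.6],
    "SWE-Bench Verified": [None, None, 87.6, 80.2, 80.6],
}


def leader(row: list[Optional[float]]) -> tuple[str, float, int]:
    """Return (model, score, number of models reported) for one benchmark row."""
    reported = [(score, name) for name, score in zip(MODELS, row) if score is not None]
    best_score, best_name = max(reported)
    return best_name, best_score, len(reported)


if __name__ == "__main__":
    for benchmark, row in SCORES.items():
        name, score, n_reported = leader(row)
        print(f"{benchmark}: {name} at {score}% ({n_reported} of {len(MODELS)} models reported)")
```

Reporting how many models appear in each row is a reminder that a "lead" on a row with three entries says less than a lead on a row with five.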

Best model by workload

| Priority | Start with | Why |
|---|---|---|
| Terminal-heavy coding agents | GPT-5.5 | It has the highest Terminal-Bench 2.0 score in the shared comparison at 82.7%. |
| Software-engineering repair | Claude Opus 4.7 | It leads the cited SWE-Bench Pro row and the cited SWE-Bench Verified row among these models. |
| Hard reasoning without tools | Claude Opus 4.7 | It leads GPQA Diamond and HLE without tools in the shared comparison. |
| Tool-assisted hard reasoning or browsing | GPT-5.5 Pro | It leads HLE with tools at 57.2% and BrowseComp at 90.1% where GPT-5.5 Pro is reported. |
| Open-weight deployment | Kimi K2.6 | It is the clearest open-weight model in the cited sources and has strong coding rows on Hugging Face. |
| Cost-sensitive hosted inference | DeepSeek-V4-Pro-Max, then Kimi K2.6 | DeepSeek is listed at $1.74/$3.48 in LLM Stats cost columns, while Kimi K2.6 is listed at $0.95/$4.00 in LLM Stats price columns. |
| 1M-token context needs | GPT-5.5, Claude Opus 4.7, or DeepSeek-V4-Pro-Max | The cited sources list 1M context for GPT-5.5, Claude Opus 4.7, and DeepSeek-V4-Pro-Max; Kimi K2.6 is reported around 256K context. |

Model-by-model notes

GPT-5.5

GPT-5.5 is the clearest terminal-agent pick in the cited comparison. OpenAI describes GPT-5.5 as built for complex tasks such as coding, research, and data analysis [38]. In the shared VentureBeat table, GPT-5.5 posts 82.7% on Terminal-Bench 2.0, above Claude Opus 4.7 at 69.4% and DeepSeek-V4-Pro-Max at 67.9% [24]. It also scores 93.6% on GPQA Diamond, 58.6% on SWE-Bench Pro, and 84.4% on BrowseComp in that table [24].

The important caveat is that GPT-5.5 Pro is reported separately. In the same shared table, GPT-5.5 Pro reaches 90.1% on BrowseComp and 57.2% on HLE with tools, but those rows should not be merged with base GPT-5.5 when comparing cost, latency, or effort settings [24].

For procurement context, BenchLM lists GPT-5.5 with a 1M-token context window, while one pricing report lists GPT-5.5 at $5 per million input tokens and $30 per million output tokens [27][36]. Treat that as a pricing signal to verify against current OpenAI API pricing before making a buying decision.

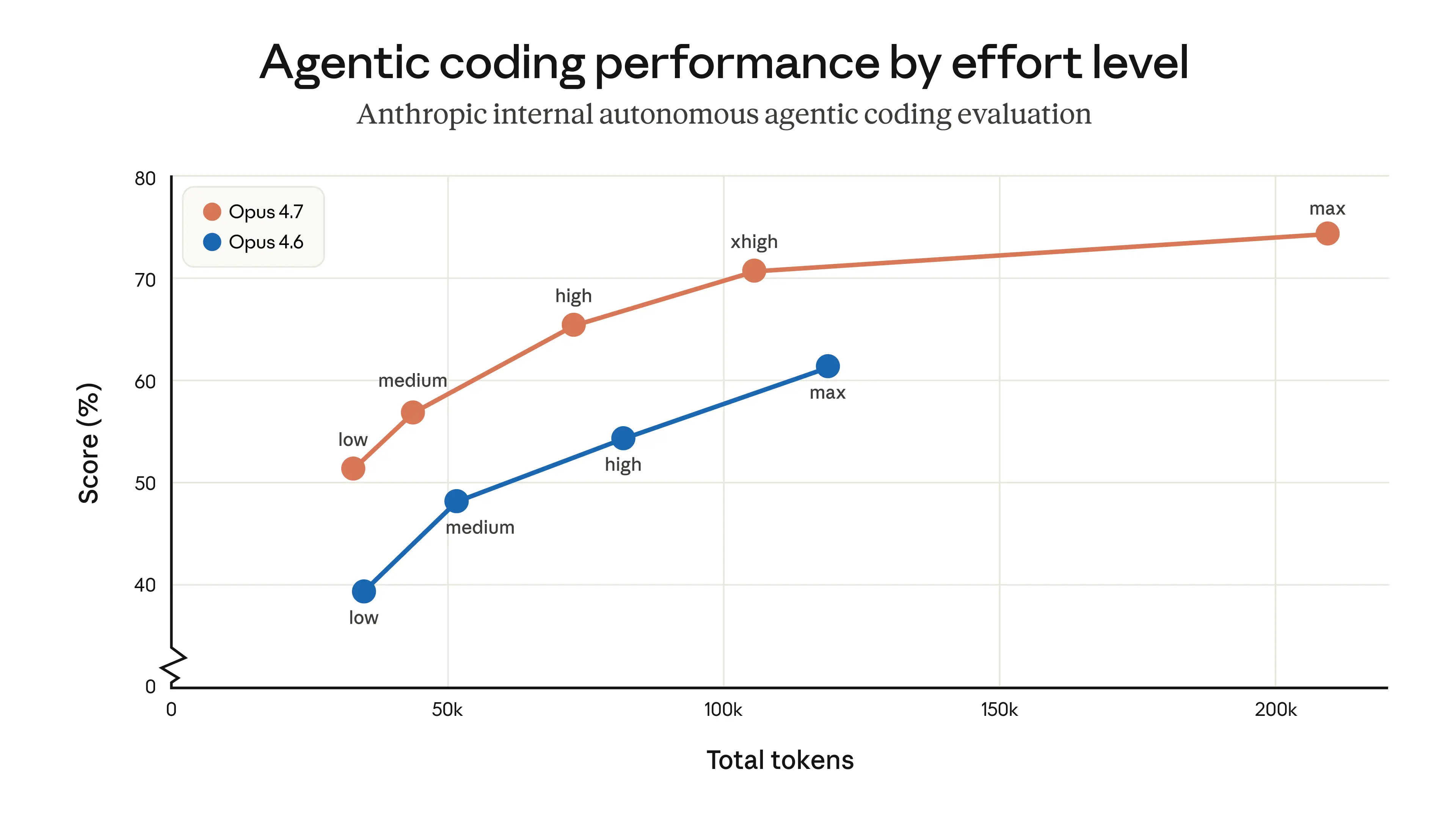

Claude Opus 4.7

Claude Opus 4.7 has the strongest cited software-repair results in this group. LLM Stats lists it at 87.6% on SWE-Bench Verified, and the shared VentureBeat table reports 64.3% on SWE-Bench Pro [18][24]. It also leads the shared GPQA Diamond row at 94.2%, HLE without tools at 46.9%, and MCP Atlas at 79.1% [24].

LLM Stats reports a 1M-token context window and $5/$25 per million-token pricing for Claude Opus 4.7 [16]. The main caveat is comparability: Anthropic notes that some of its benchmark results used internal implementations or updated harness parameters, and that some scores are not directly comparable to public leaderboard scores [17].

Kimi K2.6

Kimi K2.6 is the strongest open-weight candidate in the cited material. Release coverage describes it as an open-weight 1T-parameter MoE model with 32B active parameters, 384 experts, native multimodality, INT4 quantization, and 256K context [1]. Its Hugging Face model card reports 80.2% on SWE-Bench Verified, 58.6% on SWE-Bench Pro, 66.7% on Terminal-Bench 2.0, and 89.6 on LiveCodeBench v6 [6].

The same release coverage reports 54.0 on HLE with tools and 83.2 on BrowseComp for Kimi K2.6 [1]. LLM Stats lists Kimi K2.6 with 262K context, $0.95/$4.00 in its price columns, and an Open Source label [11]. The limitation is that Kimi’s figures do not come from the same shared table as GPT-5.5, Claude Opus 4.7, and DeepSeek-V4-Pro-Max, so close score differences should be treated as reasons to test rather than as definitive wins [1][6][24].

DeepSeek-V4-Pro-Max

DeepSeek-V4-Pro-Max is the value candidate rather than the clear all-around benchmark leader. LLM Stats lists it with 1.6T size, 1M context, 80.6% on SWE-Bench Verified, and $1.74/$3.48 in its cost columns [18]. In the shared VentureBeat comparison, it scores 90.1% on GPQA Diamond, 37.7% on HLE without tools, 48.2% on HLE with tools, 67.9% on Terminal-Bench 2.0, 55.4% on SWE-Bench Pro, 83.4% on BrowseComp, and 73.6% on MCP Atlas [24].

Those numbers make DeepSeek-V4-Pro-Max worth testing for cost-sensitive workloads, especially because the cited cost columns are below the cited GPT-5.5 and Claude Opus 4.7 price signals [16][18][36]. But the same shared table shows GPT-5.5 or Claude Opus 4.7 leading most of the reported benchmark rows, so DeepSeek should be validated on your own tasks before replacing a premium closed model [24].

Context and pricing signals

Pricing and context windows are not always reported by the same source or provider, so treat this as a procurement checklist rather than a final quote.

| Model | Cited context and pricing signal | Practical read |
|---|---|---|
| GPT-5.5 | BenchLM lists 1M context; one pricing report lists $5 input and $30 output per million tokens. | Premium closed-model option; verify live OpenAI pricing. |
| Claude Opus 4.7 | LLM Stats reports 1M context and $5/$25 per million-token pricing. | Premium coding, reasoning, and long-context option. |
| Kimi K2.6 | Release coverage reports 256K context; LLM Stats lists 262K context and $0.95/$4.00 in price columns. | Strong open-weight candidate; hosted pricing may vary by provider. |
| DeepSeek-V4-Pro-Max | LLM Stats lists 1M context, 1.6T size, 80.6% on SWE-Bench Verified, and $1.74/$3.48 in cost columns. | Strong value candidate if quality holds on your workload. |
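To turn these price signals into a rough budget, the sketch below multiplies a monthly token volume by the cited per-million-token prices. It rests on two assumptions that should be checked: that the paired LLM Stats figures for Kimi K2.6 and DeepSeek-V4-Pro-Max are input/output prices per million tokens (the sources describe them only as price or cost columns), and that the 200M-input, 40M-output workload is representative, which is a made-up example. The names `PRICES` and `monthly_cost` are illustrative.

```python
# Cited price signals in USD per million tokens, as (input, output).
# Assumption: the Kimi K2.6 and DeepSeek-V4-Pro-Max pairs are input/output prices;
# the sources label them only as price or cost columns.
PRICES: dict[str, tuple[float, float]] = {
    "GPT-5.5": (5.00, 30.00),
    "Claude Opus 4.7": (5.00, 25.00),
    "Kimi K2.6": (0.95, 4.00),
    "DeepSeek-V4-Pro-Max": (1.74, 3.48),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate spend in USD for a given number of input and output tokens."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 200M input tokens and 40M output tokens per month.
    for model in PRICES:
        print(f"{model}: ${monthly_cost(model, 200_000_000, 40_000_000):,.2f}")
```

Under those assumptions the example workload comes to about $2,200 for GPT-5.5, $2,000 for Claude Opus 4.7, $350 for Kimi K2.6, and $487.20 for DeepSeek-V4-Pro-Max. The ordering of the two lower-cost models depends on the input/output mix, so rerun the estimate with your own token counts and verify live pricing first.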

Why benchmark rankings can disagree

The cited rows do not test one skill. The comparison separates GPQA Diamond, HLE with and without tools, Terminal-Bench 2.0, SWE-Bench Pro, BrowseComp, MCP Atlas, and SWE-Bench Verified [18][24]. A model can lead one row and trail another because the tasks, tool access, and scoring harnesses differ.

Even the same named benchmark can vary across implementations. LLM Stats lists Claude Opus 4.7 at 87.6% on SWE-Bench Verified, while LMCouncil lists Claude Opus 4.7 at 83.5% ± 1.7 under its setup [18][30]. Anthropic also says some benchmark results used internal implementations or updated harness parameters, limiting direct comparability with public leaderboard scores [17].

That is why one- or two-point gaps should not drive a production decision by themselves. Public benchmarks are best used to narrow the shortlist, then your own evaluation should decide.
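A rough way to see why small gaps are fragile is to estimate the sampling noise on a pass rate. The sketch below treats each benchmark task as an independent pass/fail trial and compares a score gap against two standard errors. This is a simplification, not how any cited source computes its error bars. The 500-task size is an assumption based on the commonly cited size of SWE-Bench Verified, and the function names are illustrative.

```python
import math


def standard_error(score_pct: float, n_tasks: int) -> float:
    """Binomial standard error of a pass rate, in percentage points."""
    p = score_pct / 100.0
    return 100.0 * math.sqrt(p * (1.0 - p) / n_tasks)


def gap_exceeds_noise(a_pct: float, b_pct: float, n_tasks: int, z: float = 2.0) -> bool:
    """True if the gap between two scores is larger than z standard errors.

    Assumes the two scores are independent estimates on n_tasks tasks each,
    which ignores harness differences and any overlap between runs.
    """
    se_gap = math.hypot(standard_error(a_pct, n_tasks), standard_error(b_pct, n_tasks))
    return abs(a_pct - b_pct) > z * se_gap


if __name__ == "__main__":
    n = 500  # assumed task count; check the benchmark version you actually run
    print(gap_exceeds_noise(80.6, 80.2, n))  # DeepSeek-V4-Pro-Max vs Kimi K2.6
    print(gap_exceeds_noise(87.6, 80.6, n))  # Claude Opus 4.7 vs DeepSeek-V4-Pro-Max
```

Under these assumptions the 0.4-point gap between 80.6% and 80.2% falls well inside the noise, while the 7-point gap between 87.6% and 80.6% does not. Harness differences add further uncertainty that this calculation does not capture.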

How to evaluate the finalists

Before committing to one model, test the top two or three candidates on a representative internal eval set:

- Use your real prompts and files. Benchmark-style prompts are useful, but they rarely capture your repository, documents, policies, or user behavior.

- Match your tool environment. Coding-agent results can change when the model has or lacks terminal access, browsing, repository context, retrieval, or internal APIs.

- Measure cost and latency with the same settings. Higher-effort or Pro modes can change quality, spend, and response time.

- Review failures manually. For coding tasks, inspect tests, diffs, maintainability, security regressions, and hallucinated dependencies.

- Include at least one lower-cost challenger. Kimi K2.6 and DeepSeek-V4-Pro-Max are worth including if open weights or inference cost matter [1][18].
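The checklist above can be sketched as a small harness that runs every candidate over the same task set and records pass rate, latency, and the failures to review by hand. Everything here is hypothetical scaffolding: `Task`, `Result`, and `evaluate` are illustrative names, and `call_model` stands in for whatever client function you write for each provider, since no vendor API is assumed.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Task:
    prompt: str                    # a real prompt from your own workload
    check: Callable[[str], bool]   # returns True if the answer is acceptable


@dataclass
class Result:
    model: str
    pass_rate: float
    mean_latency_s: float
    failures: list[str]            # prompts that failed, for manual review


def evaluate(model: str, call_model: Callable[[str], str], tasks: list[Task]) -> Result:
    """Run one model over all tasks with identical settings and summarize."""
    if not tasks:
        raise ValueError("the eval set must contain at least one task")
    latencies: list[float] = []
    failures: list[str] = []
    for task in tasks:
        start = time.perf_counter()
        answer = call_model(task.prompt)
        latencies.append(time.perf_counter() - start)
        if not task.check(answer):
            failures.append(task.prompt)
    passed = len(tasks) - len(failures)
    return Result(model, passed / len(tasks), sum(latencies) / len(latencies), failures)


if __name__ == "__main__":
    # Stub standing in for a real provider client; replace with your own wrapper.
    def stub_model(prompt: str) -> str:
        return "4"

    tasks = [
        Task("What is 2 + 2?", lambda a: a.strip() == "4"),
        Task("Name the capital of France.", lambda a: "Paris" in a),
    ]
    print(evaluate("stub", stub_model, tasks))
```

Keeping the failed prompts in the result makes the manual review step cheap. Cost tracking could be added by having `call_model` also return token counts, which is left out here to keep the sketch short.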

Bottom line

If you want the safest high-end shortlist, test GPT-5.5 and Claude Opus 4.7 side by side: GPT-5.5 has the strongest cited Terminal-Bench 2.0 result, while Claude Opus 4.7 has the strongest cited SWE-Bench Pro and SWE-Bench Verified results [18][24]. If you need open weights, start with Kimi K2.6 [1][6]. If cost is the constraint, put DeepSeek-V4-Pro-Max into the evaluation, but validate it against your own workload before treating it as a replacement for the premium models [18][24].