The headline comparison sounds simple: Claude Opus 4.7 versus GPT-5.5 Spud. The evidence is not simple. Claude Opus 4.7 is documented in Anthropic’s own materials, including the claude-opus-4-7 model identifier for API use, and VentureBeat reported its public release. [8][1] GPT-5.5 Spud, by contrast, appears in the supplied evidence only through third-party pages discussing future or speculative OpenAI models, not through a primary OpenAI release note, model card, system card, or API document. [19][20]

That means the responsible conclusion is asymmetric: Claude Opus 4.7 can be evaluated as a real model in this evidence set; GPT-5.5 Spud cannot yet be treated as a verified released OpenAI model here. A clean benchmark ranking between the two is therefore not supported.

What is actually verified?

| Claim | Evidence status | What it means for teams |
|---|---|---|
| Claude Opus 4.7 exists as an Anthropic model | Verified in Anthropic’s own documentation, which points developers to claude-opus-4-7 via the Claude API. | It is reasonable to include Claude Opus 4.7 in internal evaluations. |
| Claude Opus 4.7 was publicly released | Reported by VentureBeat, with Anthropic’s official page as the primary anchor. | Public-release claims have stronger support than rumor-page claims. |
| GPT-5.5 Spud is a released OpenAI model | Not verified in the supplied evidence by a primary OpenAI source. The pages naming it are third-party articles about upcoming or speculative models. | Treat direct Spud performance claims as unconfirmed until OpenAI publishes primary documentation. |
| An independent Claude Opus 4.7 vs GPT-5.5 Spud replication exists | Not shown in the supplied evidence. | Do not make procurement or migration decisions from an unverified matchup. |

Why a direct winner is not justified yet

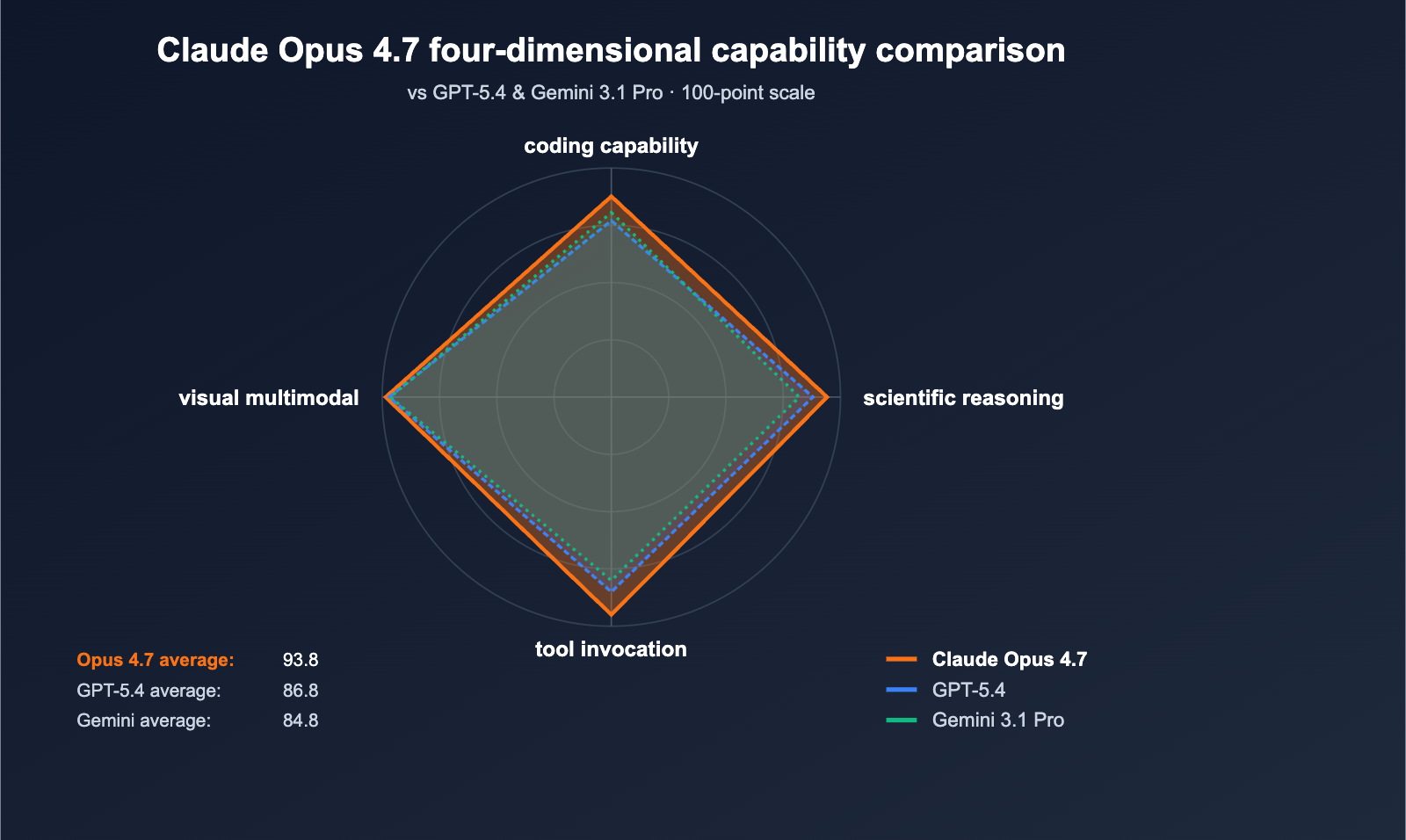

A benchmark can be directionally useful without being strong enough to decide a model switch. The broader LLM evaluation literature warns that static benchmarks face saturation effects, data contamination, and limited independent replication. [26] Those risks are especially important when the comparison involves a fresh model on one side and an unverified or unreleased model name on the other.

For a fair Claude Opus 4.7 vs GPT-5.5 Spud claim, teams would need at least five things: a primary OpenAI source for Spud, a stable model identifier, reproducible access conditions, disclosed benchmark settings, and independent apples-to-apples replication. The supplied evidence does not provide that package for Spud. [19][20][26]
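The five requirements above can be treated as a simple gate before any head-to-head claim is published. The following is a minimal sketch under stated assumptions: the `ComparisonEvidence` class, its field names, and both helper functions are hypothetical names introduced here for illustration, and the example values are placeholders rather than findings.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ComparisonEvidence:
    """Hypothetical checklist; the field names are illustrative only."""
    primary_vendor_source: bool
    stable_model_id: bool
    reproducible_access: bool
    disclosed_benchmark_settings: bool
    independent_replication: bool


def missing_requirements(evidence: ComparisonEvidence) -> list[str]:
    """Return the names of the requirements that are not yet satisfied."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def comparison_is_supported(evidence: ComparisonEvidence) -> bool:
    """A head-to-head claim is supported only when nothing is missing."""
    return not missing_requirements(evidence)


# Illustrative values only: one model with no requirement met, one with all met.
unverified = ComparisonEvidence(False, False, False, False, False)
complete = ComparisonEvidence(True, True, True, True, True)

print(missing_requirements(unverified))
print(comparison_is_supported(complete))
```

Listing the missing items by name, rather than returning a single pass/fail flag, makes it easier to say exactly what would need to be published before the comparison could be revisited.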

What makes a benchmark more credible?

The useful question is not “which leaderboard says my preferred model wins?” It is “which evidence is least likely to be contaminated, cherry-picked, or impossible to reproduce?”

A stronger benchmark signal usually has four traits:

- Recent or private tasks that are less likely to have been included in training data.

- Objective scoring rather than subjective judging where possible.

- Public methods, code, data, or harness details so others can inspect the setup.

- Independent replication across more than one evaluator or run.

The supplied benchmark-methodology sources point in the same direction: dynamic and contamination-limited benchmark designs are more informative than older static tests, but even stronger public benchmarks still do not replace testing on your own workload. [25][26][37]

LiveBench is a stronger public signal, but not a final answer

LiveBench is one of the stronger benchmark designs in the supplied evidence because it was built around contamination-limited tasks, frequently updated questions from recent information sources, procedural question generation, and objective ground-truth scoring. [37] The LiveBench site also links to a leaderboard, details, code, data, and paper, which makes the evaluation more inspectable than a chart with no reproducible setup. [36]

A later survey of LLM benchmarks says dynamic benchmark designs such as LiveBench reduce data-leakage risk. [25] That does not make any single LiveBench result definitive. It does make LiveBench more credible than many static benchmarks when the question is whether a model may have seen similar test items before.

SWE-bench is valuable, but easy to overread

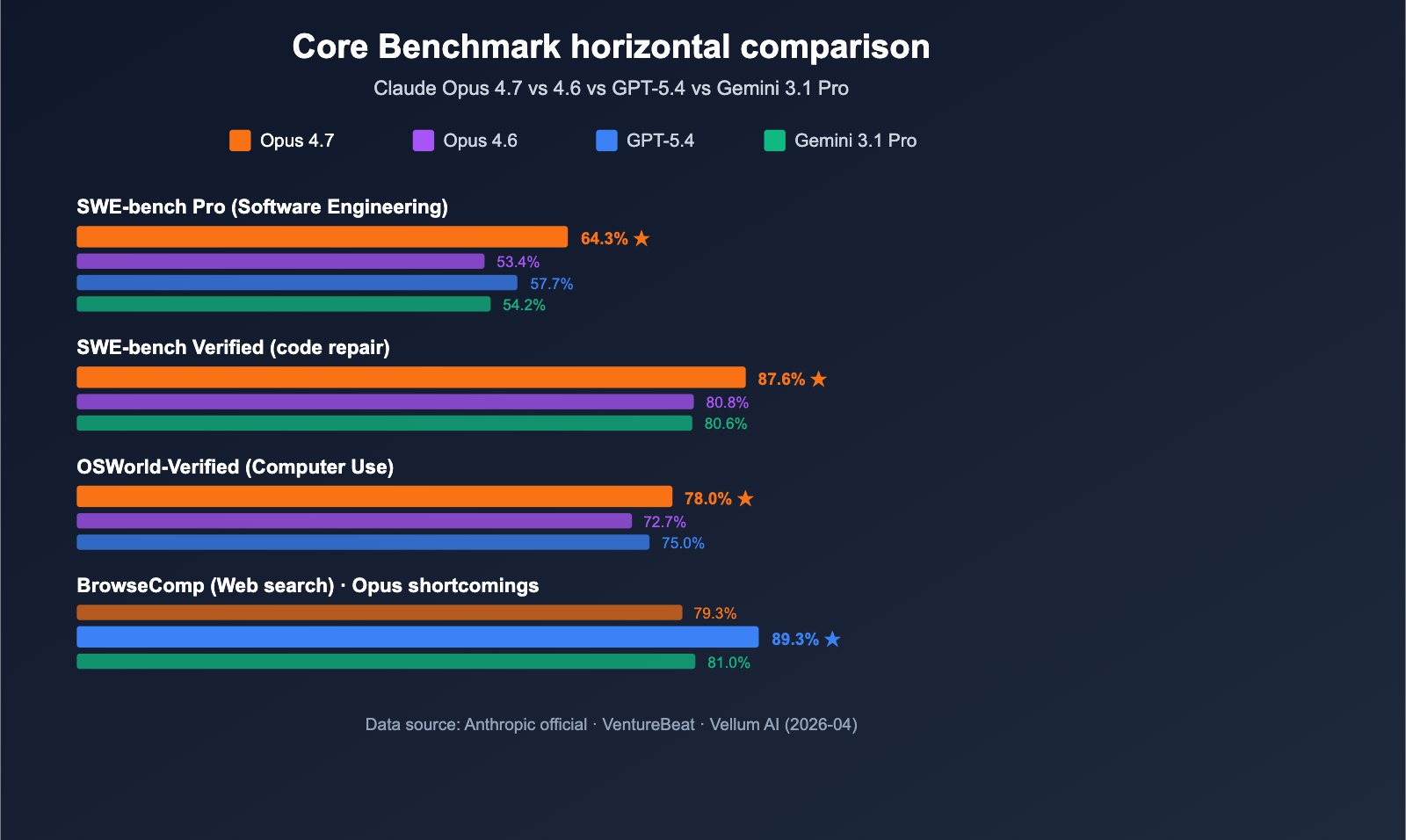

SWE-bench-style evaluations matter because they test software-engineering behavior closer to real development work than short coding puzzles. But “SWE-bench” is not one uniform signal. Variant, harness, tool access, retry policy, repository state, and scoring setup can all affect the result.

SWE-bench Live was designed to reduce pretraining contamination by limiting tasks to issues created between January 1, 2024 and April 20, 2025, and its authors note that leaderboard setups can differ substantially. [43] SWE-bench Pro is presented as a more challenging, contamination-resistant benchmark for longer-horizon software-engineering tasks. [44]
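The date-window idea can also be applied to an internal task pool. The sketch below is illustrative, not the SWE-bench Live implementation: the `Task` record, both helper functions, the sample tasks, and the training-cutoff date are hypothetical. Only the January 1, 2024 to April 20, 2025 window is taken from the text above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Task:
    """Hypothetical task record; real benchmark schemas will differ."""
    task_id: str
    created: date


def tasks_in_window(tasks, start, end):
    """Keep tasks whose creation date falls in [start, end], inclusive."""
    return [t for t in tasks if start <= t.created <= end]


def tasks_after_cutoff(tasks, cutoff):
    """Keep tasks created strictly after an assumed training cutoff."""
    return [t for t in tasks if t.created > cutoff]


# Sample tasks are invented for illustration.
pool = [
    Task("old-issue", date(2023, 6, 1)),
    Task("mid-issue", date(2024, 3, 15)),
    Task("new-issue", date(2025, 4, 20)),
]

# Window quoted above for SWE-bench Live.
windowed = tasks_in_window(pool, date(2024, 1, 1), date(2025, 4, 20))

# Placeholder cutoff; not a published figure for any model.
assumed_cutoff = date(2024, 12, 31)
fresh = tasks_after_cutoff(windowed, assumed_cutoff)

print([t.task_id for t in windowed])
print([t.task_id for t in fresh])
```

Filtering by creation date lowers the chance that a task appeared in training data, but it cannot rule out leakage through later copies or discussions of the same issue.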

At the same time, public GitHub-based coding benchmarks remain exposed to leakage risk. SWE-Bench++ argues that open-source software benchmarks face critical contamination risk and that solution leakage can skew leaderboard rankings. [45] A 2026 analysis of SWE-bench leaderboards also reports recent SWE-bench Verified submissions with data contamination. [47]

There is also a saturation problem. One benchmarking-infrastructure paper reports that results on SWE-bench Verified can drop to 23% on SWE-bench Pro. [46] SWE-ABS separately argues that the SWE-bench Verified leaderboard is approaching saturation and can show inflated success rates until tasks are adversarially strengthened. [49]

A practical benchmark credibility ladder

For model buyers, developers, and AI teams, the evidence should be weighted roughly like this:

| Evidence type | Why it deserves weight | Main caveat |

|---|---|---|

| Private internal evaluations | They match your codebase, prompts, latency limits, and failure tolerance. | They require careful design and repeatable harnesses. |

| Dynamic or contamination-limited public benchmarks | Recent, frequently updated tasks and objective scoring reduce leakage risk. [ | They still may not match your production workload. |

| SWE-bench Live and SWE-bench Pro | They target realistic software-engineering tasks with stronger contamination controls than older static setups. [ | Tooling and harness differences can change outcomes. [ |

| SWE-bench Verified | It is widely used for coding-agent comparisons. | Contamination, leakage, and saturation can distort raw rankings. [ |

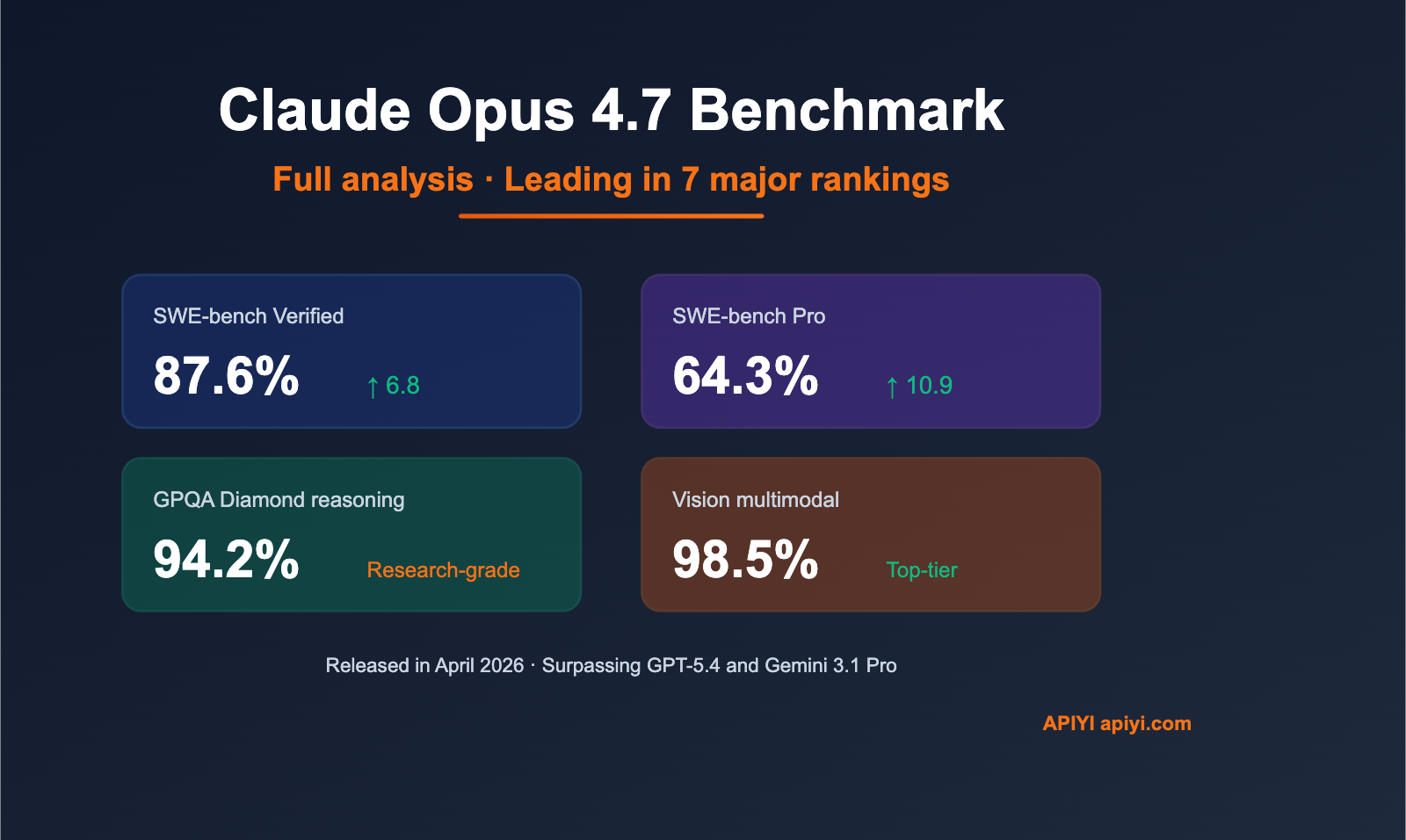

| Vendor launch charts | They show what the model maker claims as strengths. | They need independent replication before high-stakes decisions. [ |

| Rumor pages and SEO comparison posts | They can surface names or claims worth checking. | They are not primary evidence for an unverified model. [ |

How to test before switching models

If you are evaluating Claude Opus 4.7 against any available OpenAI, Google, Anthropic, or open model, use public benchmarks as a filter, not as the final decision.

- Confirm the exact model ID. For Claude Opus 4.7, Anthropic points developers to claude-opus-4-7 through the Claude API. [8]

- Use the same harness for every model. SWE-bench Live explicitly notes that leaderboard setups can differ substantially, so mismatched setups can turn into false model rankings. [43]

- Prefer recent or private tasks. This reduces the risk that tasks or solutions appeared in training data, which is the concern behind contamination-limited and contamination-resistant benchmark designs. [25][37][44]

- Record cost, latency, retries, tool permissions, and failure modes. A model that wins only after many expensive retries may not be the best production choice.

- Repeat the evaluation. A single leaderboard result should be treated as a hypothesis until internal tests or third-party replications support it. [26]
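The checklist above can be sketched as a small harness that gives every model the same tasks, retry budget, and repeat count, then reports pass rate alongside attempts and latency. This is a minimal sketch under stated assumptions: `RunRecord`, `run_task`, `evaluate`, and `summarize` are hypothetical names, the two "models" are stub functions standing in for real API clients (which are deliberately not shown), and the Wilson score interval is one common way to express uncertainty in a pass rate, not a method taken from the cited sources.

```python
import math
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    model_id: str
    task_id: str
    passed: bool
    attempts: int
    latency_s: float


def run_task(model_id, call_model, task_id, prompt, check, max_attempts):
    """Run one task with a fixed retry budget; record attempts and latency."""
    start = time.perf_counter()
    passed = False
    attempts = 0
    for attempts in range(1, max_attempts + 1):
        if check(call_model(prompt)):
            passed = True
            break
    elapsed = time.perf_counter() - start
    return RunRecord(model_id, task_id, passed, attempts, elapsed)


def evaluate(models, tasks, max_attempts=2, repeats=3):
    """Apply the same tasks, retry budget, and repeat count to every model."""
    records = []
    for model_id, call_model in models.items():
        for _ in range(repeats):
            for task_id, (prompt, check) in tasks.items():
                records.append(
                    run_task(model_id, call_model, task_id, prompt, check, max_attempts)
                )
    return records


def wilson_interval(passes, total, z=1.96):
    """Approximate 95% Wilson score interval for a pass rate."""
    if total == 0:
        return (0.0, 1.0)
    p = passes / total
    denom = 1 + z * z / total
    centre = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return (max(0.0, centre - half), min(1.0, centre + half))


def summarize(records):
    """Aggregate pass rate, interval, attempts, and latency per model."""
    summary = {}
    for model_id in sorted({r.model_id for r in records}):
        rows = [r for r in records if r.model_id == model_id]
        passes = sum(r.passed for r in rows)
        summary[model_id] = {
            "pass_rate": passes / len(rows),
            "interval": wilson_interval(passes, len(rows)),
            "mean_attempts": sum(r.attempts for r in rows) / len(rows),
            "mean_latency_s": sum(r.latency_s for r in rows) / len(rows),
        }
    return summary


# Stub models and a toy task, invented for illustration.
models = {
    "model-a": lambda prompt: prompt.upper(),
    "model-b": lambda prompt: prompt,
}
tasks = {"t1": ("ok", lambda output: output == "OK")}

summary = summarize(evaluate(models, tasks))
for model_id, stats in summary.items():
    print(model_id, stats)
```

With only three runs per model, the interval stays wide even when every run passes, which is one concrete reason to treat a single result as a hypothesis. Reporting mean attempts next to pass rate also exposes a model that succeeds only after repeated retries.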

What would change the verdict?

The conclusion would become stronger if the evidence set included a primary OpenAI announcement, model card, system card, or API document for GPT-5.5 Spud; a stable Spud model identifier; disclosed evaluation conditions; and independent benchmark entries using comparable harnesses and tool permissions.

It would become stronger still if those entries appeared on contamination-limited or contamination-resistant evaluations such as LiveBench, SWE-bench Live, or SWE-bench Pro, and if independent teams could reproduce the results. [37][43][44][26]

Limitations

This article uses only the supplied evidence. The absence of a primary OpenAI Spud source in this evidence set does not prove that no such source exists elsewhere; it means the claim is not verified here. [19][20]

Several benchmark-methodology sources cited here are arXiv, OpenReview, or SSRN records rather than final journal articles. They are still useful for understanding current evaluation design, contamination risk, and replication concerns, but their publication status should be kept in mind. [25][26][37][43][44][45][46][47][49]

Final verdict

Claude Opus 4.7 is verified in the supplied evidence; GPT-5.5 Spud is not verified here through primary OpenAI documentation. [8][1][19][20] A Claude Opus 4.7 vs GPT-5.5 Spud winner should not be published until Spud is confirmed, accessible under a stable model ID, and tested under comparable conditions.

For model selection, put the most weight on contamination-limited or contamination-resistant benchmarks with inspectable methods and repeated testing. LiveBench, SWE-bench Live, and SWE-bench Pro are more informative than static or vendor-only charts, but none is a substitute for a controlled evaluation on your own workload. [37][25][43][44][26]