Search and fact-check: have Kimi K2.6's instruction-following and self-correction abilities actually improved?

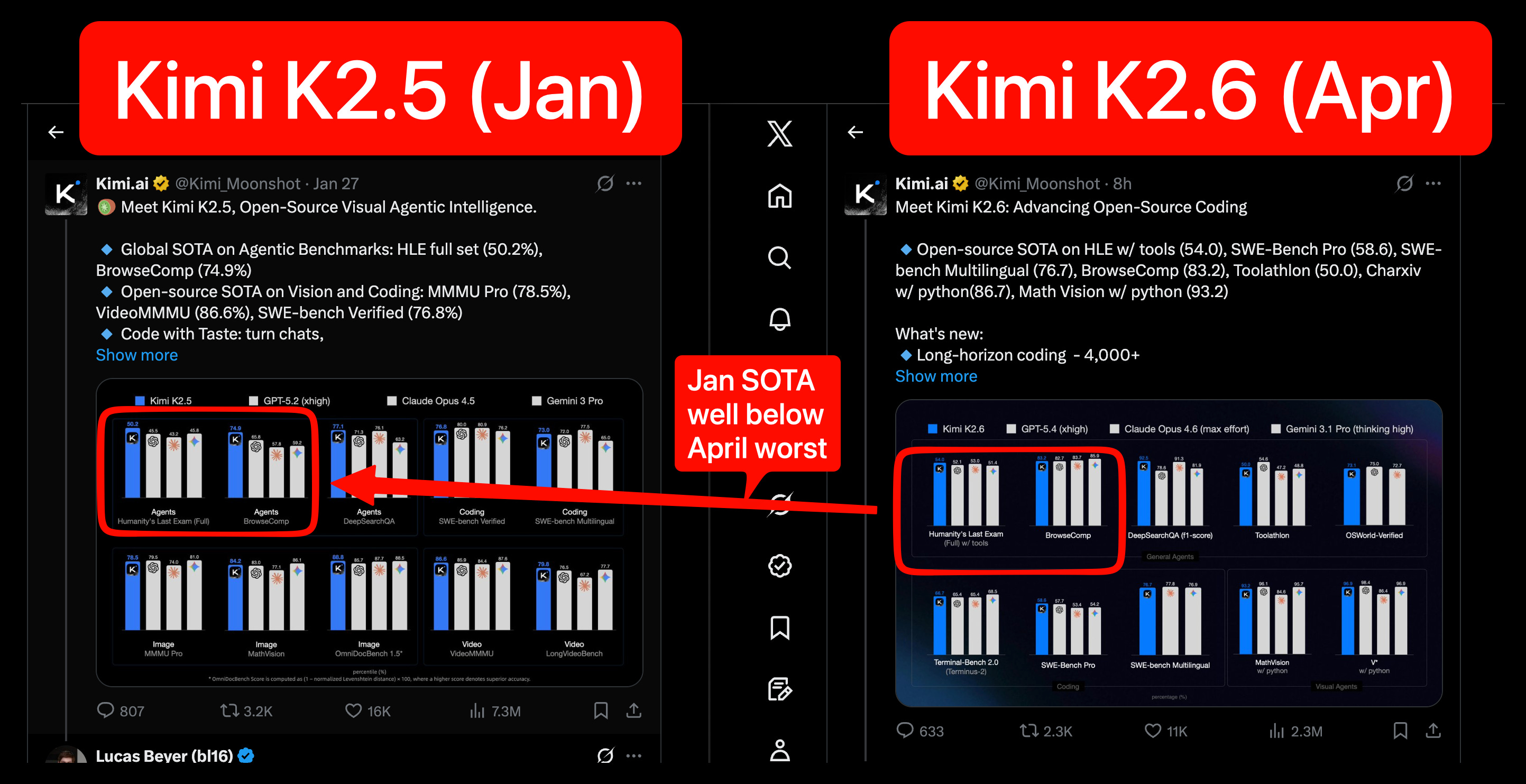


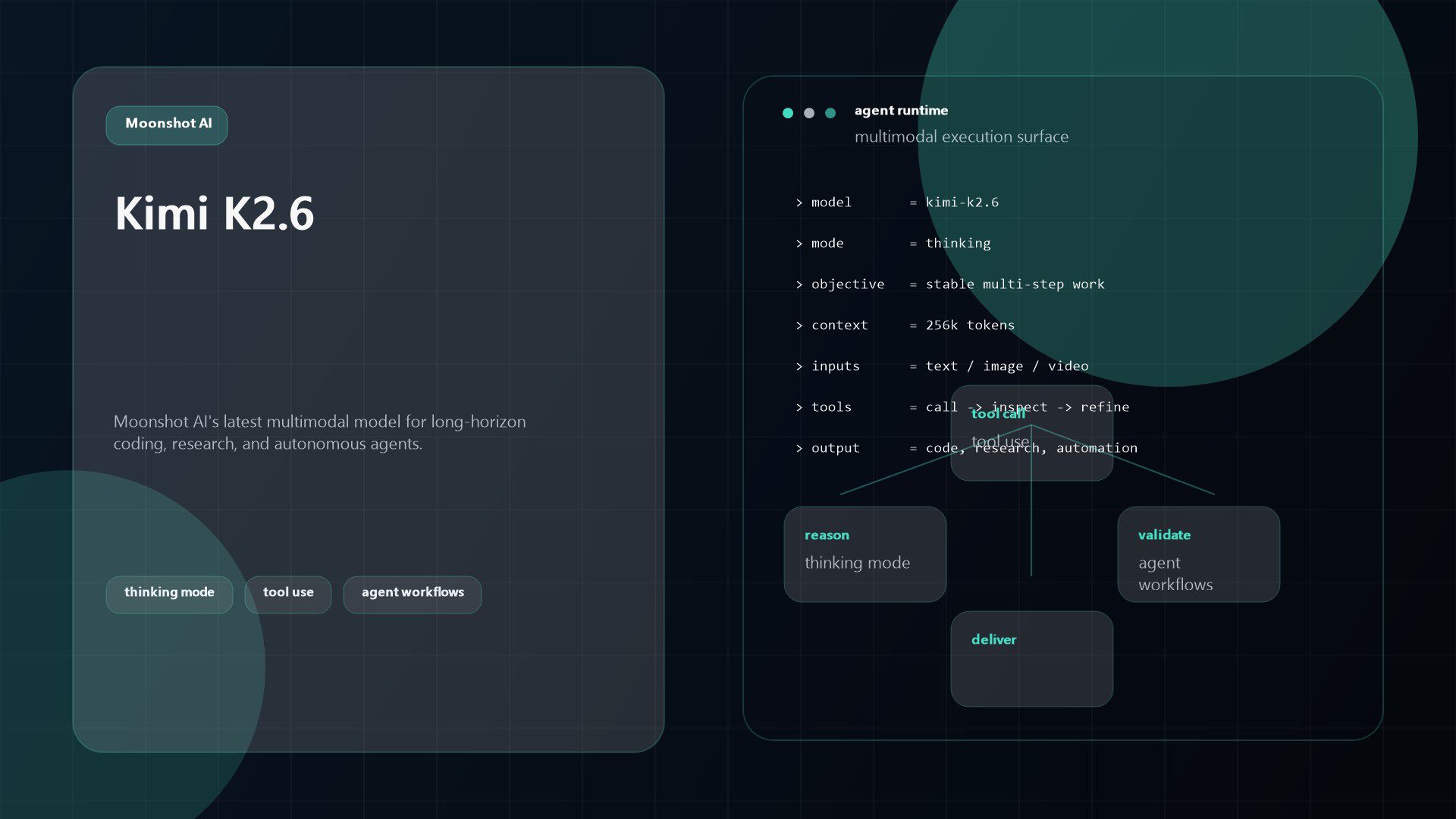


Research answer

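On the available evidence, it cannot be shown that Kimi K2.6 is clearly better than earlier versions at both instruction following and self-correction. The safer statement is that the Kimi K2 series already performs well at instruction following, but public, checkable evidence specific to K2.6 remains limited, and there is almost no direct quantitative data on its self-correction ability. [1][2][3][6]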

What can be confirmed

- The Kimi K2 paper reports that K2-Instruct was evaluated for instruction following on IFEval and Multi-Challenge, and that it ranks in the top tier among open-source models. [1]

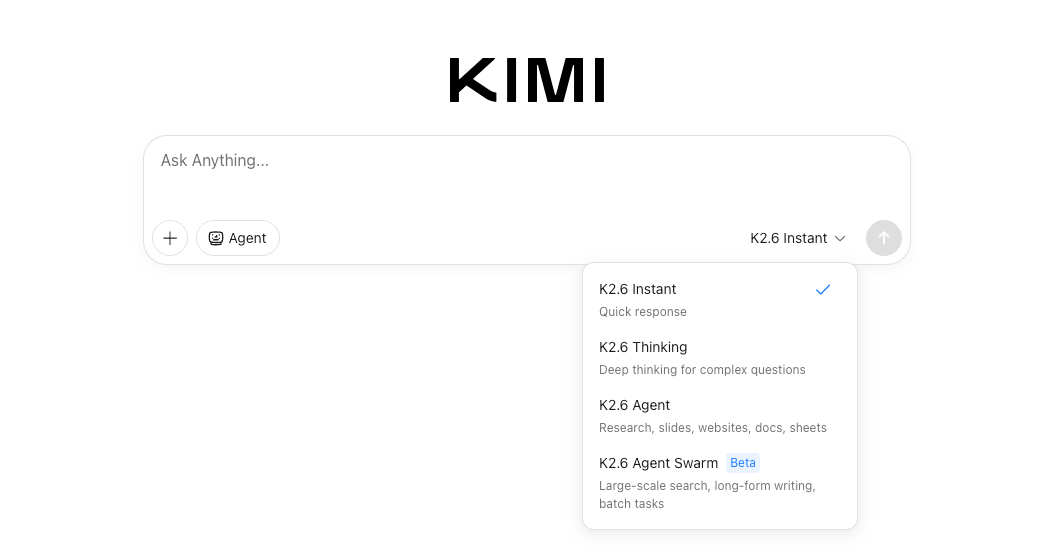

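- Kimi K2.6 has been officially released and is available through Workers AI and the Kimi API. [2][3]

- A third-party aggregation page shows Kimi 2.6 ranked 13th of 110 on a provisional leaderboard with an overall score of 83/100, but this measures overall performance, not instruction following or self-correction specifically. [6]

- IFEval is a benchmark designed to test whether a model obeys verifiable instructions covering format, length, keywords, and structure, so this kind of metric is a useful reference for checking whether instruction following has improved. [4][5]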
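To make "verifiable instructions" concrete, here is a minimal Python sketch of the kind of programmatic check such a benchmark relies on. It is not the official IFEval code: the checker names (`max_words`, `includes_keyword`, `bullet_count`), the `follow_rate` helper, and the sample response are all hypothetical, written only to illustrate the idea.

```python
import re
from typing import Callable, List

# Hypothetical checkers for illustration only; not the official IFEval code.
Check = Callable[[str], bool]


def max_words(limit: int) -> Check:
    """Length constraint: the response has at most `limit` whitespace-separated words."""
    return lambda text: len(text.split()) <= limit


def includes_keyword(keyword: str) -> Check:
    """Keyword constraint: the response mentions `keyword`, ignoring case."""
    return lambda text: keyword.lower() in text.lower()


def bullet_count(expected: int) -> Check:
    """Structure constraint: the response has exactly `expected` markdown bullets."""
    def check(text: str) -> bool:
        bullets = [ln for ln in text.splitlines() if re.match(r"^\s*[-*] ", ln)]
        return len(bullets) == expected
    return check


def follow_rate(response: str, checks: List[Check]) -> float:
    """Fraction of the given instructions that the response satisfies."""
    if not checks:
        raise ValueError("need at least one check")
    return sum(1 for c in checks if c(response)) / len(checks)


# Made-up response: it meets the length and keyword rules but has 2 bullets, not 3.
response = "- Kimi is a model\n- It follows instructions"
checks = [max_words(20), includes_keyword("kimi"), bullet_count(3)]
score = follow_rate(response, checks)
print(f"{score:.2f}")
```

Because every check is a deterministic function of the output text, two model versions can be compared on the same prompts without a human or model judge, which is why a published before-and-after score of this kind would count as direct evidence.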

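What could not be verified

- The available evidence contains no official IFEval scores comparing Kimi K2.6 with K2 or other earlier versions, no before-and-after tests, and no explicit statement of the form "instruction-following improved by X". [1][2][3][6]

- Nor does it provide any direct benchmark of Kimi K2.6's self-correction ability, such as quantitative results for error recovery, reflection, self-correction pass rate, or task re-planning success rate. [2][3][6]

- So a claim that "Kimi K2.6's instruction following and self-correction have genuinely and clearly improved" is not sufficiently supported by this material alone. [1][2][3][6]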
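For reference, a before-and-after comparison of the kind that is missing could look roughly like the Python sketch below. The source does not define "self-correction pass rate", so the definition used here (among tasks that fail on the first try, the share that pass after one retry) is an assumption, and the `Attempt` records are invented placeholders, not measurements of any Kimi model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Attempt:
    task_id: str
    passed_first: bool  # passed on the first try
    passed_retry: bool  # passed after seeing the failure and retrying once


def self_correction_rate(attempts: List[Attempt]) -> Optional[float]:
    """Among tasks that failed first, the share that passed on retry.

    Assumed definition for illustration; returns None when nothing failed,
    because the rate is undefined in that case.
    """
    failed = [a for a in attempts if not a.passed_first]
    if not failed:
        return None
    return sum(a.passed_retry for a in failed) / len(failed)


# Invented placeholder records for two hypothetical model versions.
old_run = [
    Attempt("t1", False, False),
    Attempt("t2", False, True),
    Attempt("t3", True, True),
    Attempt("t4", False, False),
]
new_run = [
    Attempt("t1", False, True),
    Attempt("t2", False, True),
    Attempt("t3", True, True),
    Attempt("t4", False, False),
]

old_rate = self_correction_rate(old_run)
new_rate = self_correction_rate(new_run)
delta = new_rate - old_rate
print(f"old={old_rate:.2f} new={new_rate:.2f} delta={delta:+.2f}")
```

A published figure of this shape, computed on the same task set for both versions, is what would turn "possibly better" into a checkable claim.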

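How to read this more accurately

- The claim that "the Kimi K2 family was already competitive at instruction following" has some support. [1]

- The stronger claim that "Kimi K2.6 is better than the previous generation" is possible, but this evidence does not adequately establish it. [2][3][6]

- For the claim that "self-correction ability has clearly improved", all I can say for now is: insufficient evidence. [2][3][6]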

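Conclusion

- Instruction following: there are indirect signs that the Kimi series has a solid base, but not enough public evidence to show a clear, quantifiable improvement in K2.6 over earlier versions. [1][4][5][6]

- Self-correction: the available evidence offers almost no direct support, so the claim could not be confirmed to a fact-checking standard. [2][3][6]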

If you like, as a next step I can organise this evidence into a short three-column fact-check summary: supported, contradicted, and unverified.



Sources

- [1] Moonshot AI Kimi K2.6 now available on Workers AI · Changelog. developers.cloudflare.com. https://developers.cloudflare.com/changelog/post/2026-04-20-kimi-k2-6-workers-ai/

- [2] Kimi K2.6 - Kimi API Platform. platform.kimi.ai

- [3] Kimi K2 is the large language model series developed by Moonshot ... github.com

- [4] Kimi K2.6: Open-Weight Agent Model - Verdent AI. verdent.ai

- [5] Kimi K2.6: Pricing, Benchmarks & Performance. llm-stats.com

- [6] Moonshot AI's new Kimi K2.6 swarms your complex tasks ... - ZDNET. zdnet.com

- [7] moonshotai/Kimi-K2.6 - Hugging Face. huggingface.co

- [8] [AINews] Moonshot Kimi K2.6: the world's leading Open Model refreshes to catch up to Opus 4.6 (ahead of DeepSeek v4?). latent.space

DeepSeek V4 rumors are back, and we learned our lesson not to get too excited, but in their deafening silence since v3.2, Moonshot has owned the crown of leading Chinese open model lab for all of 2026 to date, and K2.6 refreshes the lead that K2.5 established in January, with (presumably) more continued…

- [9] Moonshot AI Open-Sources Kimi K2.6 — The Coding Model That Runs for Days. chatlyai.app

Moonshot AI Open-Sources Kimi K2.6 — The Coding Model That Runs for Days. Moonshot AI Open-Sources Kimi K2.6 — The Coding Model That Works for Days Without You. Written by Muhammad Bin Habib. Explore what Kimi K2.6's release means for developers, and open-source AI. # Moonshot AI Open-Sources Kimi K2.6 — A Coding Model That Runs Autonomously for Days. Beijing / April 21, 2026 — Moonshot AI has released Kimi K2.6 to the open-source community — a model that executes complex engineering tasks for hours, sometimes days, without a human in the loop. Available immediately via Kimi.com, the…

- [10] Moonshot AI releases Kimi K2.6 with long-horizon coding and agent ... facebook.com

- [11] Moonshot AI Unveils Kimi K2.6, an Open-Weight Model Built for Benchmark Parity and Massive Agent Scale. linkedin.com

- [12] Kimi K2: Open Agentic Intelligence. arxiv.org

... K2-Instruct secures a top-tier position among open-source models. We evaluate instruction-following with IFEval and Multi-Challenge. On IFEval, Kimi-K2-Instruct

- [13] IFEval Benchmark 2026: 115 LLM Scores Ranked | BenchLM.ai. benchlm.ai

Instruction-Following Eval (IFEval). A benchmark that evaluates language models' ability to follow verifiable instructions such as formatting constraints, keyword inclusion/exclusion, length limits, and structural requirements. According to BenchLM.ai, GPT-5.4 Pro leads the IFEval benchmark with a score of 97, followed by GPT-5.4 (96) and GPT-5.3 Instant (96). 115 models have been evaluated on IFEval. ## About IFEval. IFEval uses verifiable instructions to objectively measure instruction-following ability. ## Leaderboard (115 models). ### What does IFEval measure? A benchmark that e…

- [14] Instruction Following Leaderboard: IFEval Rankings 2026 | Awesome Agents. awesomeagents.ai

Rankings of AI models on IFEval and IFBench, the two main benchmarks for measuring how reliably LLMs follow precise formatting, length, and content constraints. The gap between a model's IFEval and IFBench scores is a useful signal. The average IFBench score across assessed models is 0.649 - dramatically lower than IFEval's 0.844 average - which confirms that instruction generalization is truly harder than it looks on standard benchmarks. The 12-point gap between Qwen3.5-27B's IFEval score (95.0%) and IFBench score (76.5%) tells you something IFEval alone doesn't: the model's constraint-follo…

- [15] Kimi 2.6 Benchmarks 2026: Scores, Rankings & Performance. benchlm.ai

According to BenchLM.ai, Kimi 2.6 ranks #13 out of 110 models on the provisional leaderboard with an overall score of 83/100. ### How does Kimi 2.6 perform overall in AI benchmarks? Kimi 2.6 currently ranks #13 out of 110 models on BenchLM's provisional leaderboard with an overall score of 83. Kimi 2.6 has visible benchmark coverage in knowledge and understanding, but BenchLM does not currently assign it a global category rank there. Kimi 2.6 ranks #6 out of 110 models in coding and programming benchmarks with an average score of 89.8. Kimi 2.6 has visible benchmark coverage i…

- [16] Kimi K2 Evaluation Results: Top Open-Source Non-Reasoning Model for Coding. eval.16x.engineer

Our results show that Kimi K2 is the new top open-source non-reasoning model for coding tasks while maintaining competitive performance in writing. The most significant result is that Kimi K2's overall coding performance exceeds DeepSeek V3 (New), establishing it as the new top open-source non-reasoning model for coding tasks. Kimi K2 vs Top Models - Individual Coding Task Performance. * Complex task: For the benchmark visualization task, Kimi K2 scored 8.5, matching the performance of Claude Sonnet 4, Claude Opus 4, GPT-4.1 and Gemini 2.5 Pro. The model produced an effective side…

- [17] moonshotai/Kimi-K2.6 - vLLM Recipes. recipes.vllm.ai

- [18] Kimi K2.6 - Vals AI. vals.ai

kimi/kimi-k2.6-thinking. Release Date: 4/20/2026.

- [19] Instruction Following Benchmarks 2026: IFEval LLM Scores | BenchLM.ai. benchlm.ai

| 2 GPT-5.2 Pro OpenAI | Closed | Reasoning | 400K | 90 | 95% |. | 4 GPT-5.3 Codex OpenAI | Closed | Reasoning | 400K | 89 | 93% |. | 5 GPT-5.2 OpenAI | Closed | Reasoning | 400K | 88 | 94% |. | 6 GPT-5.3 Instant OpenAI | Closed | Reasoning | 128K | 87 | 96% |. | 7 GPT-5.3-Codex-Spark OpenAI | Closed | Reasoning | 256K | 87 | 92% |. | 9 GPT-5.2 Instant OpenAI | Closed | Reasoning | 128K | 85 | 95% |. | 10 GPT-5.2-Codex OpenAI | Closed | Reasoning | 400K | 85 | 92% |. | 15 GPT-5.1 OpenAI | Closed | Reasoning | 200K | 80 | 89% |. | 16 GPT-5 (high) OpenAI | Closed | Reasoning | 128K | 79 | 91% |…

- [20] GitHub - lightblue-tech/M-IFEval · GitHub. github.com

Evaluating instruction following is crucial to ensure these models perform reliably across different tasks and languages. Building upon the Instruction