
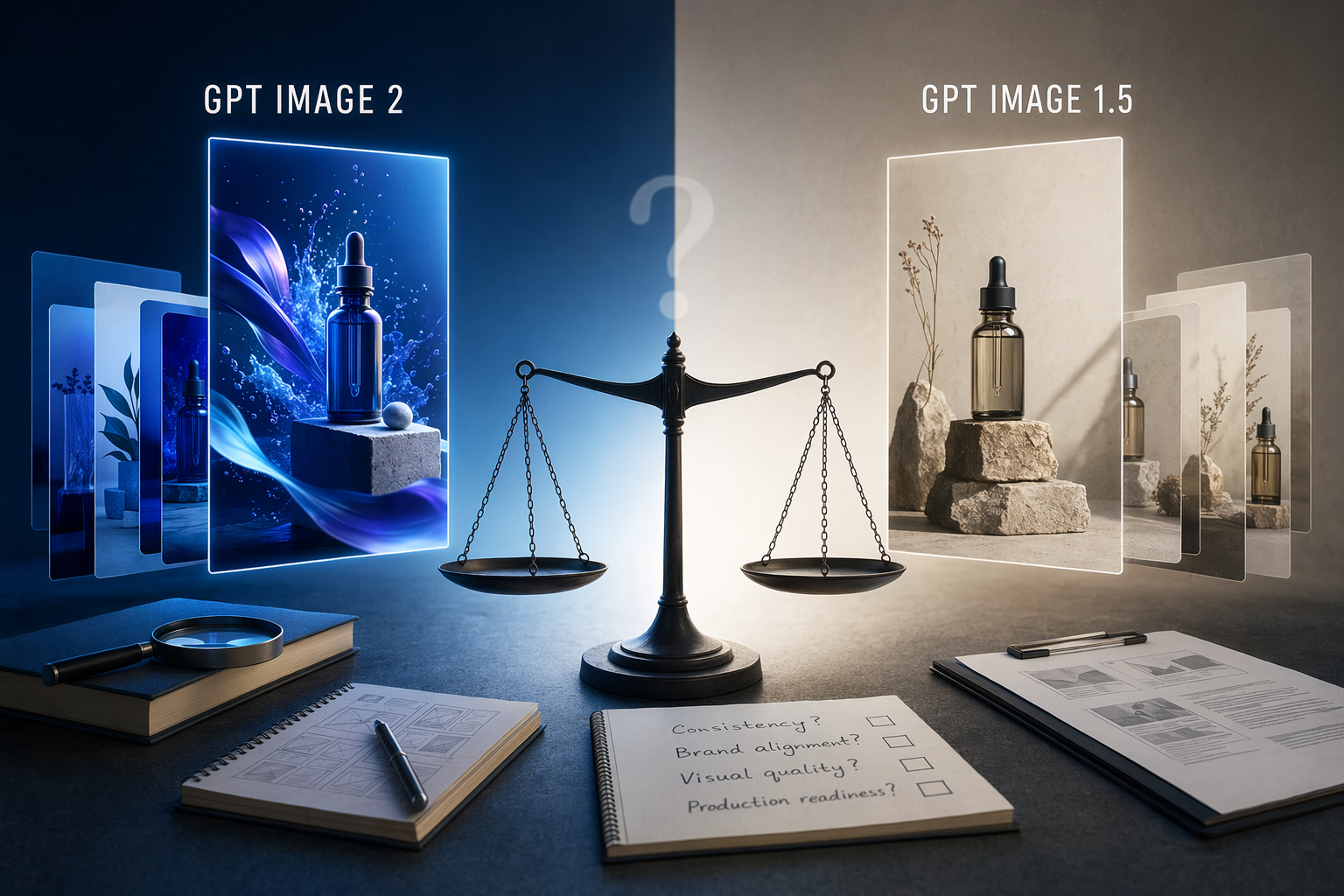

GPT Image 2 vs GPT Image 1.5: Is It More Reliable for Marketing Assets?

No: the available OpenAI sources document GPT Image 2 and GPT Image 1.5, but they do not include a head-to-head marketing benchmark, acceptance rate, or retry-rate comparison. The useful next step is a controlled pilot: use the same prompts, source images, brand constraints, and review rubric for both models.
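One way to keep such a pilot controlled is to generate the trial list programmatically, so that every prompt and source image is paired with both models under identical brand constraints. The sketch below is illustrative only: the `Trial` class, the `build_trials` helper, the file names, and the model strings are assumptions made for this example, not confirmed API identifiers, and no API call is made.

```python
from dataclasses import dataclass
from itertools import product

# Placeholder labels for the two models under test (assumed, not confirmed API model IDs).
MODELS = ("gpt-image-2", "gpt-image-1.5")


@dataclass(frozen=True)
class Trial:
    """One generation or edit request in the pilot (hypothetical structure)."""
    model: str
    prompt: str
    source_image: str
    brand_constraints: tuple[str, ...]


def build_trials(prompts, source_images, brand_constraints):
    """Pair each (prompt, source image) case with every model so inputs stay identical."""
    constraints = tuple(brand_constraints)
    cases = zip(prompts, source_images)
    return [
        Trial(model, prompt, image, constraints)
        for (prompt, image), model in product(cases, MODELS)
    ]


# Made-up example inputs; replace with real briefs and assets.
trials = build_trials(
    prompts=["Summer sale banner with headline 'Save 20%'", "Product shot on white background"],
    source_images=["banner_base.png", "product_front.png"],
    brand_constraints=["keep logo unchanged", "use brand palette only"],
)

for trial in trials:
    print(trial.model, "|", trial.prompt, "|", trial.source_image)
```

Because each case appears once per model with the same constraints, any difference in review outcomes can be attributed to the model rather than to the brief.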


Marketing teams do not need only attractive AI images. They need assets that preserve product details, follow the brief, keep required copy readable, and pass brand review with minimal rework. The available sources support a cautious answer: GPT Image 2 is documented, GPT Image 1.5 is documented, and OpenAI supports image generation and editing workflows, but the reviewed evidence does not prove GPT Image 2 is more reliable for marketing-ready variations. [30][12][15]

Verdict: unproven, not impossible

OpenAI has an API model page for GPT Image 2. [30] It also has an API model page for GPT Image 1.5, which describes GPT Image 1.5 as a state-of-the-art image generation model with better instruction following and adherence to prompts. [12] OpenAI’s image generation guide covers both prompt-based generations and edits to existing images. [15]


Key takeaways

- No: the available OpenAI sources document GPT Image 2 and GPT Image 1.5, but they do not include a head-to-head marketing benchmark, acceptance rate, or retry-rate comparison.

- The useful next step is a controlled pilot: use the same prompts, source images, brand constraints, and review rubric for both models.

- Score outputs on copy accuracy, product fidelity, brand consistency, edit precision, first-pass acceptance, and total retries.
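The scoring step in the last takeaway can be sketched as a small aggregation over reviewer records. This is a minimal sketch under stated assumptions: the `Review` class, the `summarize` helper, the 1 to 5 score scale, and the neutral labels `model_a` and `model_b` are all hypothetical, and the sample numbers are made up to show the calculation, not measured results for either model.

```python
from dataclasses import dataclass
from statistics import mean

# Rubric criteria taken from the takeaway above; the 1-5 scale is an assumption.
CRITERIA = ("copy_accuracy", "product_fidelity", "brand_consistency", "edit_precision")


@dataclass
class Review:
    """One reviewer verdict on one generated asset (hypothetical structure)."""
    model: str
    scores: dict               # criterion name -> score on the assumed 1-5 scale
    accepted_first_pass: bool  # True if the first output passed brand review
    retries: int               # regenerations needed before acceptance or abandonment


def summarize(reviews):
    """Aggregate per-model mean scores, first-pass acceptance rate, and total retries."""
    summary = {}
    for model in sorted({r.model for r in reviews}):
        rows = [r for r in reviews if r.model == model]
        summary[model] = {
            "mean_scores": {c: mean(r.scores[c] for r in rows) for c in CRITERIA},
            "first_pass_acceptance": sum(r.accepted_first_pass for r in rows) / len(rows),
            "total_retries": sum(r.retries for r in rows),
        }
    return summary


# Made-up sample data for illustration only.
reviews = [
    Review("model_a", {"copy_accuracy": 5, "product_fidelity": 4,
                       "brand_consistency": 4, "edit_precision": 3}, True, 0),
    Review("model_a", {"copy_accuracy": 3, "product_fidelity": 4,
                       "brand_consistency": 5, "edit_precision": 3}, False, 2),
    Review("model_b", {"copy_accuracy": 4, "product_fidelity": 4,
                       "brand_consistency": 4, "edit_precision": 4}, True, 1),
]

summary = summarize(reviews)
for model, stats in summary.items():
    print(model, stats)
```

Reporting acceptance and retries alongside the rubric means keeps the comparison tied to rework cost, which is the reliability question the article raises.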



Sources

- [12] GPT Image 1.5 Model | OpenAI API. developers.openai.com

- [15] Image generation | OpenAI API. developers.openai.com

Generations: generate images from scratch based on a text prompt. Edits: modify existing images.

- [20] Gpt-image-1.5 Prompting Guide - OpenAI Developers. developers.openai.com

Constraints: original design only; no trademarks; no watermarks; no logos. Include ONLY this packaging text (verbatim): "{short copy...

- [21] Image Evals for Image Generation and Editing Use Cases. developers.openai.com

- [30] GPT Image 2 Model | OpenAI API