OpenAI is not just adding convenience features to Codex. It is reshaping Codex into a delegated-work environment: a place where users can start multiple agent threads, connect tools through plugins, and, on supported Macs, let Codex operate graphical apps when normal integrations are not enough [17][18][21]. The direction is broader than coding, but it is not fully universal yet: OpenAI still frames Codex around developer workflows, and Computer Use is currently limited to macOS with regional exclusions at launch [18][23].

The core shift: from coding helper to delegated work surface

Codex began with a narrower identity. OpenAI introduced it as a cloud-based software engineering agent that could work on many tasks in parallel and was powered by codex-1, a model optimized for software engineering [13]. That developer DNA is still visible in the product today.

The newer Codex app, however, changes the shape of the product. OpenAI’s developer docs describe it as a desktop experience for working on Codex threads in parallel, with built-in worktree support, automations, and Git functionality; the same docs say Codex is included with ChatGPT Plus, Pro, Business, Edu, and Enterprise plans [17]. OpenAI’s Codex app announcement also described a macOS interface for managing multiple agents at once, running work in parallel, and collaborating with agents over long-running tasks [25].

That matters because a general computer-work agent needs more than code generation. It needs somewhere to keep jobs running, context for long tasks, and a way to coordinate multiple pieces of work at once. Codex is being moved in that direction.

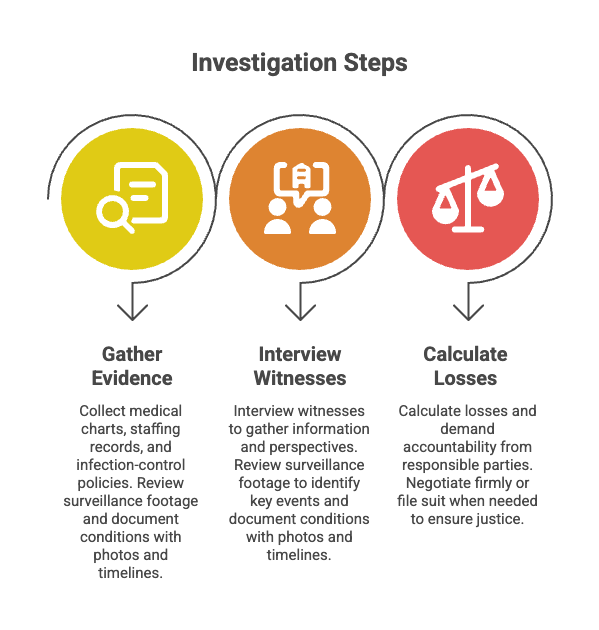

Computer Use is the biggest unlock

The clearest step beyond coding is Computer Use. OpenAI’s Codex documentation says users can install the Computer Use plugin and grant macOS Screen Recording and Accessibility permissions; once enabled, Codex can see and operate graphical user interfaces on macOS [18]. The same documentation says Computer Use is meant for cases where command-line tools or structured integrations are not enough, such as checking a desktop app, using a browser, changing app settings, working with a data source that is not available as a plugin, or reproducing a bug that only appears in a graphical interface [18].

OpenAI’s use-case docs put it even more directly: Codex can click, type, and navigate apps on a Mac, and users can hand off multi-step tasks across Mac apps, windows, browser sessions, and local files [19]. That is the practical boundary between a coding assistant and a computer operator. Instead of only producing code or instructions, Codex can act inside software.

The limitation is just as important as the capability. OpenAI says Computer Use is currently available on macOS, excluding the European Economic Area, the United Kingdom, and Switzerland at launch [18]. So Codex is moving toward everyday computer work, but it is not yet a general agent that operates every user’s computer everywhere.

Parallel agents turn Codex into a work queue

OpenAI is also building Codex around parallel work rather than one-off prompts. The Codex app is explicitly designed for parallel Codex threads, and its docs emphasize worktrees, automations, and Git functionality [17]. OpenAI’s app announcement framed the product as a way to manage multiple agents at once and collaborate on long-running tasks [25].

That design is still developer-oriented, but the pattern is broader: a user delegates several jobs, monitors progress, and jumps in when needed. For coding, that may mean testing a fix, updating a feature, or reviewing a repository. For computer work more generally, the same pattern can extend to tasks that move between apps, files, browser sessions, and connected services [19].

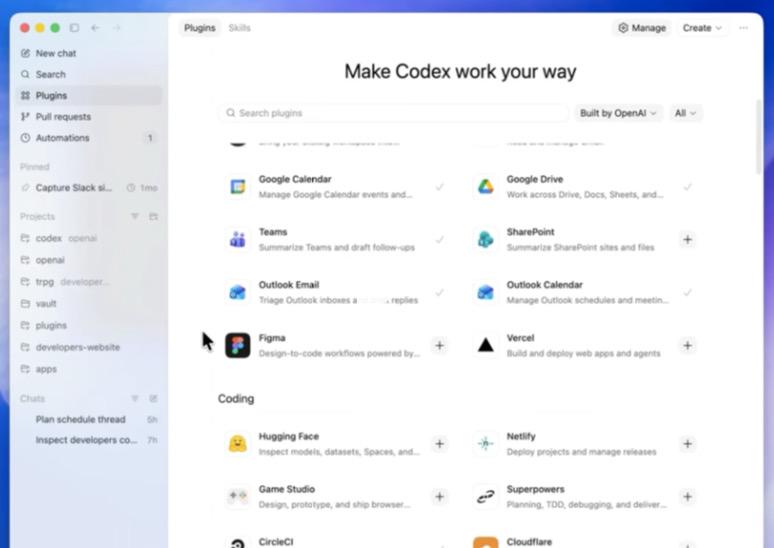

Plugins and integrations reduce the need to click around

Computer Use is only one way for Codex to act. OpenAI is also giving it a plugin and integration layer.

In the March 2026 Codex changelog, OpenAI said plugins became a first-class workflow: Codex could sync product-scoped plugins at startup, browse them in the plugins interface, and install or remove them with clearer authentication and setup handling [21]. In the April 2026 changelog, OpenAI listed additional plugin workflow improvements, including marketplace installation, remote bundle caching, and remote uninstall [20].

Codex has also been connected to team workflows. When OpenAI announced Codex general availability, it highlighted a Slack integration that lets teams delegate tasks or ask questions from a channel or thread, as well as a Codex SDK for embedding the same agent into workflows, tools, and apps [29].

The strategy is straightforward: use structured integrations where possible, and use Computer Use when an app, website, or local file workflow cannot be handled cleanly through an API or plugin. OpenAI’s own Computer Use docs describe that split by recommending Computer Use for tasks where command-line tools or structured integrations are not enough [18].
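That split can be read as a simple routing rule. The sketch below is purely illustrative and is not Codex's API or OpenAI's implementation: `Task`, `Route`, and `choose_route` are hypothetical names, and the ordering of plugins ahead of command-line tools is an assumption made for the example. It shows only the general idea of preferring a structured path and falling back to graphical control when none exists.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    """Hypothetical ways an agent could carry out a task."""
    PLUGIN = "plugin"
    COMMAND_LINE = "command_line"
    COMPUTER_USE = "computer_use"


@dataclass(frozen=True)
class Task:
    """Hypothetical task description; the flags are assumptions for illustration."""
    description: str
    plugin_available: bool = False  # a structured integration covers the task
    cli_available: bool = False     # a command-line tool covers the task


def choose_route(task: Task) -> Route:
    """Prefer structured paths; fall back to GUI control only when none applies."""
    if task.plugin_available:
        return Route.PLUGIN
    if task.cli_available:
        return Route.COMMAND_LINE
    return Route.COMPUTER_USE


if __name__ == "__main__":
    examples = [
        Task("post a status update", plugin_available=True),
        Task("run the test suite", cli_available=True),
        Task("reproduce a bug that only appears in a desktop app"),
    ]
    for task in examples:
        print(f"{task.description}: {choose_route(task).value}")
```

In this reading, Computer Use is the last resort rather than the default, which matches the documentation's framing of it as the option for work that structured tools cannot reach.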

Memory and automations point toward persistent agents

OpenAI is also working on persistence. A Codex announcement on OpenAI’s community forum says Codex is expanding beyond coding to support a broader range of work, while adding that the focus remains stronger developer workflows, better integrations, and less friction across projects [23]. The same announcement says OpenAI is releasing a preview of memory and that Codex will support future work scheduling and more proactive help with ongoing projects [23].

That is a notable shift in product intent. A coding assistant can answer a prompt. A persistent work agent needs to remember preferences, understand recurring workflows, and resume work over time. OpenAI is not saying Codex has become a fully autonomous office assistant; it is saying the product is being extended with the context and scheduling pieces that make longer-running delegation possible [23].

GPT-5.4 is the model layer behind the move

The product changes are being paired with a model strategy. OpenAI says GPT-5.4 is being released in ChatGPT, the API, and Codex, and describes it as its most capable and efficient frontier model for professional work [9]. OpenAI also says that, in Codex and the API, GPT-5.4 is its first general-purpose model with native computer-use capabilities, enabling agents to operate computers and carry out complex workflows across applications [9].

That is important because desktop control is not just a UI feature. For an agent to work across apps, it needs to interpret screens, decide what to do next, and recover when workflows do not behave exactly as expected. OpenAI is positioning GPT-5.4 as part of that capability stack [9].

What Codex still is not

Codex today is best described not as a finished general-purpose personal assistant but as a developer-first agent platform that is expanding into broader computer work.

The evidence for that caveat is in OpenAI’s own framing. The Codex app documentation still emphasizes Codex threads, worktrees, automations, and Git functionality [17]. The broader announcement says Codex is expanding beyond coding, but also says the focus remains developer workflows, integrations, and project friction [23]. And Computer Use, the most visibly general-purpose feature, is currently limited to macOS with launch exclusions in the EEA, UK, and Switzerland [18].

Bottom line

OpenAI is turning Codex into an everyday computer-work agent by combining three layers: a desktop app for parallel long-running agents, an action layer made of Computer Use plus plugins and integrations, and a model layer with GPT-5.4’s native computer-use capabilities [17][18][21][9]. The result is no longer just a tool that writes and edits code. It is becoming a workspace for delegating tasks across apps, files, browsers, and connected services, while still starting from, and largely serving, the developer workflow.