Search for: What is the best AI for coding?

There isn’t one universal “best AI for coding” right now. For real repo-level engineering, debugging, and multi-file work, Claude Code/Opus-class models look like the strongest overall choice in independent workflow comparisons, but benchmark leaders still vary by test and evaluation setup.[3][5]

Key takeaways

- There isn’t one universal “best AI for coding” right now. For real repo-level engineering, debugging, and multi-file work, Claude Code/Opus-class models look like the strongest overall choice in independent workflow comparisons, but benchmark leaders still vary by test and evaluation setup.[3][5]

- Claude Code (Opus 4.6) is rated strongest by Emergent for complex debugging, multi-file reasoning, and high-risk code changes in real workflows.[3]

Research answer

There isn’t one universal “best AI for coding” right now. For real repo-level engineering, debugging, and multi-file work, Claude Code/Opus-class models look like the strongest overall choice in independent workflow comparisons, but benchmark leaders still vary by test and evaluation setup.[3][5]

- Claude Code (Opus 4.6) is rated strongest by Emergent for complex debugging, multi-file reasoning, and high-risk code changes in real workflows.[3]

- Benchmark results are not consistent across setups. Awesome Agents reports GPT-5.4 leading SWE-bench Pro with custom agent scaffolding, while the same source says Claude Opus 4.5/4.6 comes out ahead in the Scale SEAL SWE-bench Pro evaluation when tooling is standardized.[5]

- The official SWE-bench leaderboard snippet in the search results currently shows Gemini 3 Flash (high reasoning) at 75.80 and GPT-5-2 Codex at 72.80 in its displayed rankings.[10]

- So the practical answer is:

  - For hardest coding tasks and agent-style repo work: Claude Code / Opus-class models.[3][5]

  - For benchmark-focused OpenAI workflows: GPT-5.x Codex-class models remain top-tier contenders.[5][10]

  - For “single objective winner”: Insufficient evidence, because different sources use different benchmarks, scaffolding, and workflow assumptions.[5][10]

If you want, I can do the next useful step and narrow this to one of these:

- best AI for VS Code/Cursor

- best AI coding model via API

- best free option

- best for Python/web apps/debugging specifically

Sources

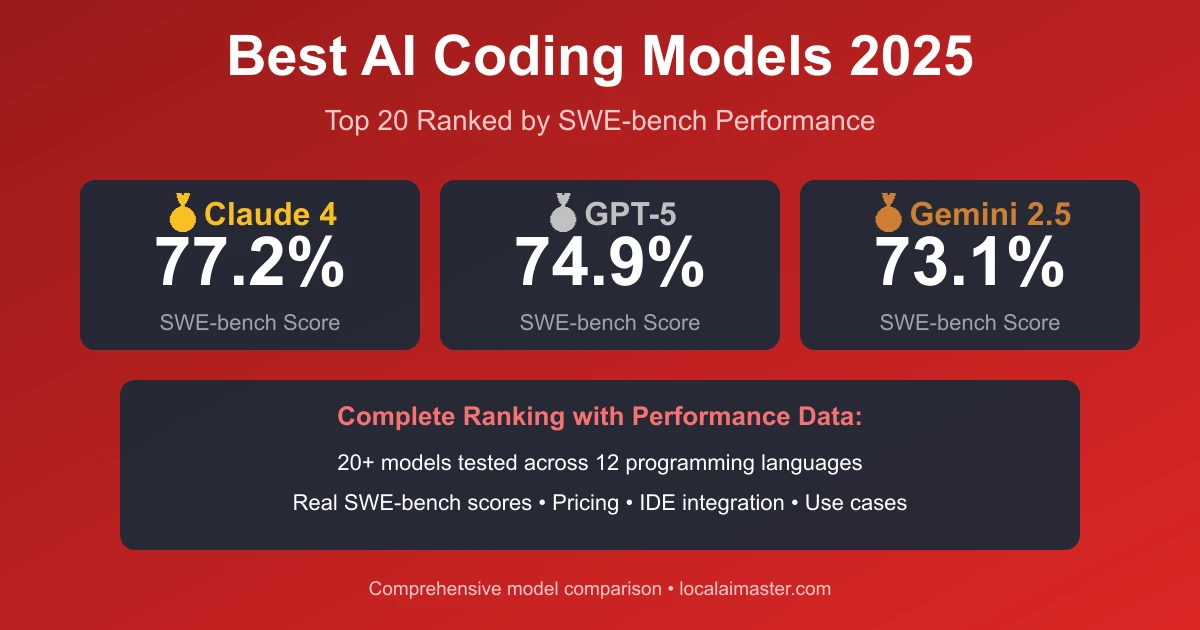

- [1] Best AI Coding Models 2026: SWE-Bench Leaderboard (Claude ... (localaimaster.com)

Best AI Coding Models 2026: SWE-Bench Leaderboard (Claude, GPT-5, Gemini). # Best AI Coding Models 2026: SWE-Bench Leaderboard (Claude, GPT-5, Gemini). ## Complete 2026 Rankings: Top 20 AI Coding Models. Based on comprehensive testing using SWE-bench Verified (the industry-standard benchmark for real-world coding tasks), performance analysis across 12 programming languages, and evaluation of 500+ production deployments. | Rank | Model | SWE-bench | Provider | Price/Month | Context | Best For |. ### Cloud Models (Claude 4, GPT-5, Gemini 2.5). **From Local Models to Cloud (Claude/GPT-5):*…

- [2] Best AI Coding Tools 2026: Complete Ranking by Real-World ... (nxcode.io)

. See our roundup of the best free AI coding tools for more options. For a broader look at AI tools beyond coding, see our best AI tools 2026 ranking. * [OpenAI Codex vs Cursor vs Claude Code: Which AI Coding Tool Should You Use in 2026?](https://www.nxcode.io/resources/news/openai-codex-vs-cursor-vs-…

- [3] Best AI Coding Tools in 2026 (Tested in Real Workflows) - Emergent (emergent.sh)

The mistake almost every comparison makes is evaluating models on generation quality, when real coding performance is determined by something else entirely, how well a system handles multi-step, repository-level work under pressure. | Complex debugging, multi-file reasoning, high-risk changes | Claude Code (Opus 4.6) | Maintains context across large codebases and survives iterative debugging without degrading |. | Daily coding, best developer experience inside an IDE | | Multi-model orchestration gives consistent results without breaking workflow flow |. | You are debugging across 6–8 files w…

- [4] Best AI for Coding 2026 - Top Coding Models - LLM Stats (llm-stats.com)

Compare the best AI models for coding using live arena results, benchmark performance, and real generation examples across code generation, debugging, and software engineering. 144 models, 7 coding arenas, 46 benchmarks. Ranked by Coding Arena + benchmarks. ## Current Best AI Models for Coding. These rankings are based on 726 blind votes in live coding arenas where users compare real code outputs without knowing which model generated them. ## How We Rank AI Coding Models. The 7 coding arenas cover distinct real-world tasks: React website generation (th…

- [5] Best AI Models for Code Generation - April 2026 | Awesome Agents (awesomeagents.ai)

GPT-5.4 leads SWE-bench Pro at 57.7% with custom agent scaffolding. | Rank | Model | Provider | SWE-bench Verified | SWE-bench Pro | LiveCodeBench | Price (Input/Output) | Verdict |. Its 80.8% on SWE-bench Verified stays at the top of the field, and the Scale SEAL evaluation of SWE-bench Pro - which uses standardized scaffolding for all models - puts Claude Opus 4.5/4.6 ahead of GPT-5.4 when agent tooling is controlled. With its own agent scaffolding, it reaches 57.7% on SWE-bench Pro - a harder variant of the original benchmark that draws from 1,865 tasks across 41 repositories in multiple l…

- [6] SWE-bench benchmark leaderboard in 2026: best AI for coding (bracai.eu)

SWE-bench benchmark leaderboard in 2026: best AI for coding. | 352.2 ±335.5 |. | 2 | GPT-5.4 (high) | 76.9% ±1.9 |. [Full Results](https:/…

- [10] SWE-bench Leaderboards (swebench.com)

| - [x] | 🆕 Gemini 3 Flash (high reasoning) | 75.80 | $0.36 | | 2026-02-17 | 2.0.0 |. | - [x] | 🆕 GPT-5-2 Codex | 72.80 | $0.45 | | 2026-02-19 | [2.0.0](https://github.com/SWE-agent/mini-…

- [11] Which Is the Best AI for Coding in 2026? (devspheretechnologies.com)

What is the Best AI for Coding in 2026? For modern development teams, choosing the best AI for coding is no longer just about finding a fancy autocomplete tool; it is about selecting a digital partner capable of reasoning through complex architectures. Whether you are building a high-performance SaaS platform or looking for the best AI for coding to streamline your internal scripts, this guide provides the authentic, updated data you need to make an informed decision. Most premium tools now offer “Model Switching.” This allows you to use the best AI model for coding for specific tasks. * **…