Choosing between GPT Image 2 and Nano Banana Pro is less about naming a permanent champion than about choosing the model whose strengths match your workload. The strongest sources here are official docs for model availability, rate limits, pricing, and generation parameters; the quality claims come from small hands-on tests and review-style comparisons [3][6][9][10][13][14][25][26]. Taken together, the evidence supports a practical split: start with GPT Image 2 for text-heavy and layout-heavy commercial assets, and start with Nano Banana Pro when photoreal lighting, skin texture, and Gemini-native image workflows matter most [3][6][10][26].

Evidence caveat: Pro, 2, and public benchmarks are not identical

AVB’s direct comparison tested GPT Image 2.0 against Nano Banana Pro, identified there as gemini-3-pro-image, across 10 prompts on April 22, 2026 [6]. Several other useful public head-to-heads in this source set compare GPT Image 2 with Nano Banana 2 rather than Nano Banana Pro [3][9][10]. Google’s developer docs describe Nano Banana image generation through the Gemini API, including aspect-ratio and 2K resolution parameters [26].

That naming mismatch matters. Model routes, provider policies, default settings, and product surfaces can change results. Treat Nano Banana 2 comparisons as adjacent evidence for Gemini/Nano Banana image workflows, not as a perfect substitute for your exact Nano Banana Pro endpoint [3][6][10][26].

Official docs are strongest for API facts: OpenAI lists gpt-image-2-2026-04-21 and tiered rate limits for GPT Image 2 [13], and OpenAI’s pricing page lists token prices for gpt-image-2 [14]. Google’s Gemini pricing page lists image-output pricing and a 1024×1024 token estimate [25]. Public quality benchmarks are weaker: the cited tests are small prompt sets, blind tests, or comparison posts rather than a standardized independent benchmark suite [3][6][9][10]. Some comparison pages also make very precise claims, such as text-accuracy percentages or leaderboard-style rankings, without enough methodology in the provided snippets to treat those numbers as decisive [5][8].

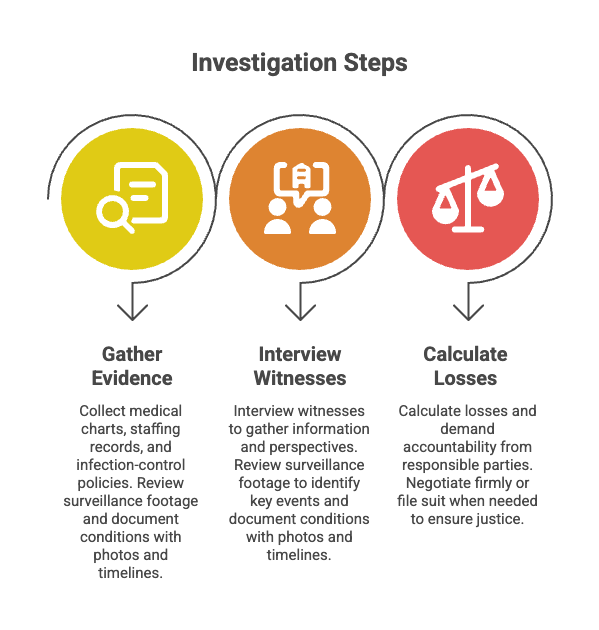

Quick decision matrix

| Workload | Better first test | Why |
|---|---|---|
| Exact English text, labels, menus, UI copy, posters, product labels | GPT Image 2 | Genspark reports a narrow GPT Image 2 edge on precise text and technical terminology, and AVB reports GPT Image 2.0 wins on in-image typography, manga dialogue panels, a bilingual menu, and a silkscreen gig poster |
| Layout-heavy ads, packaging, mockups, or commercial edits | GPT Image 2 | Vidguru’s 10-test blind benchmark says GPT-Image 2 won five rounds and tied the other five, with the biggest gap in image-editing fidelity, material logic, and layout-heavy commercial work |
| Photoreal portraits, UGC-style images, lifestyle ads, cinematic lighting | Nano Banana Pro | AVB reports Nano Banana Pro wins on photorealism, skin texture, and lighting in hyperreal portrait, UGC selfie, and athletic ad prompts |
| CJK typography polish or dramatic lighting | Test Nano Banana first, then verify on your endpoint | Genspark’s Nano Banana 2 comparison reports a narrow edge on CJK typography polish and dramatic lighting, but this is adjacent evidence rather than a direct Nano Banana Pro result |
| Product shots, e-commerce mockups, marketing infographics, anatomy diagrams | Benchmark both | Genspark reports the two models are effectively tied in these categories when prompted properly |
| Technical diagrams and labeled schematics | Benchmark both | Analytics Vidhya describes its annotated-diagram task as the closest contest, with both models rendering requested labels and data points accurately in that test |
| OpenAI-centered API stack, tiered rate limits, or Batch API economics | GPT Image 2 | OpenAI documents the GPT Image 2 model, tiered rate limits, token pricing, and lower Batch API pricing |
| Gemini-centered workflow with documented Nano Banana parameters | Nano Banana Pro / Gemini image workflow | Google’s Nano Banana image-generation docs show Gemini API usage with parameters such as aspect ratio and 2K resolution |
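
If you route requests programmatically, the matrix can be encoded as a small lookup. This is only a sketch: the workload tags and model labels below are hypothetical names chosen for illustration, not identifiers from either vendor's API, and anything not listed falls back to benchmarking both models.

```python
# Hypothetical workload tags mapped to the model worth testing first.
# Tags and labels are illustrative assumptions, not vendor identifiers.
FIRST_TEST = {
    "exact_text": "gpt-image-2",
    "layout_heavy_commercial": "gpt-image-2",
    "photoreal_portrait": "nano-banana-pro",
    "cjk_typography": "nano-banana-pro",  # adjacent evidence only; verify on your endpoint
    "product_shot": "benchmark-both",
    "technical_diagram": "benchmark-both",
}


def first_model_to_test(workload: str) -> str:
    """Return the suggested starting point, defaulting to a two-way benchmark."""
    return FIRST_TEST.get(workload, "benchmark-both")


print(first_model_to_test("exact_text"))
print(first_model_to_test("storyboard"))
```

Defaulting unknown workloads to "benchmark-both" keeps the lookup honest about how narrow the public evidence is.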

Benchmark findings

Text, typography, and structured layouts: GPT Image 2 has the clearest edge

Text rendering is the most consistent GPT Image 2 advantage in the provided comparisons. Genspark reports that GPT Image 2 has a narrow edge on precise text and technical terminology [3]. In AVB’s direct 10-prompt test, GPT Image 2.0 won the in-image typography, manga dialogue-panel, bilingual-menu, and silkscreen-gig-poster prompts [6]. Vidguru’s blind benchmark also favors GPT-Image 2 for layout-heavy commercial work, image-editing fidelity, and material logic [10].

The practical read: if a malformed headline, bad menu item, broken UI label, or inaccurate product callout makes the image unusable, GPT Image 2 is the more defensible first API to test [3][6][10].

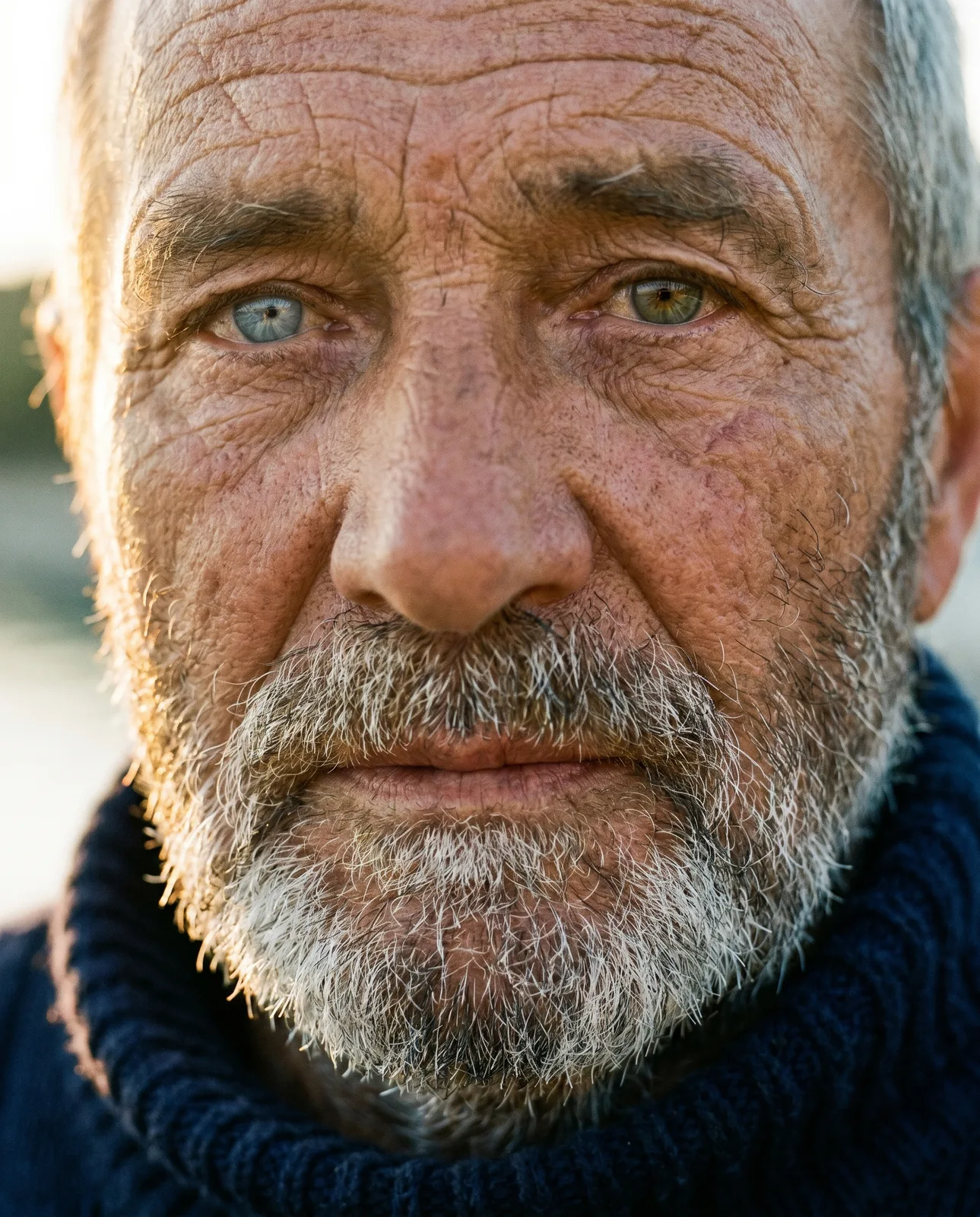

Photorealism and lighting: Nano Banana Pro’s strongest signal

Nano Banana Pro’s best direct evidence is in photoreal and lighting-heavy outputs. AVB reports that Nano Banana Pro won on photorealism, skin texture, and lighting in the hyperreal portrait, UGC selfie, and athletic ad prompts [6]. Genspark’s adjacent Nano Banana 2 comparison also reports a narrow edge for dramatic lighting [3].

That makes Nano Banana Pro a strong first candidate for editorial portraits, lifestyle campaigns, UGC-style ads, and cinematic creative where mood and naturalistic lighting matter more than exact text overlays [3][6].

Product shots, e-commerce, and infographics look close

For common commercial categories, the public evidence does not show a clean winner. Genspark reports that GPT Image 2 and Nano Banana 2 are effectively tied on photorealistic product shots, e-commerce mockups, marketing infographics, and anatomy diagrams when prompted properly [3]. For these workloads, the deciding factors are likely to be your prompt style, reference-image workflow, editing loop, latency in your stack, and cost per accepted output.

Technical diagrams are too close for a generic verdict

Diagram performance depends heavily on the style and scoring rubric. Analytics Vidhya describes its annotated-diagram task as the closest contest in its comparison: Nano Banana 2 produced a rigorous two-view engineering-style diagram, GPT Image 2 produced a visually strong blueprint-style result, and both rendered the requested labels and data points accurately in that test [9]. If you need exact dimensions, callouts, or engineering conventions, do not rely on a generic model ranking; test your actual diagram templates.

Prompt completion and refusals: one small test favored GPT Image 2

AVB reports that GPT Image 2.0 rendered all 10 prompts in its test, while Nano Banana Pro rendered 9 of 10 and refused a prominent-person CV prompt on policy grounds [6]. That is useful signal, but it should not be generalized too far. Refusal behavior can vary by prompt wording, API route, safety policy, product surface, and time.
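
To see why a 10-versus-9 result is weak evidence, it can help to put an uncertainty range around each completion rate. The sketch below uses the Wilson score interval, a standard statistical method that is not part of AVB's write-up; it is brought in here purely as an illustration, and the 95% level (z = 1.96) is an assumption.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 approximates 95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials and trials > 0")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Completion counts reported in AVB's 10-prompt test.
for label, rendered in [("GPT Image 2.0", 10), ("Nano Banana Pro", 9)]:
    low, high = wilson_interval(rendered, 10)
    print(f"{label}: {rendered}/10 rendered, plausible rate {low:.2f} to {high:.2f}")
```

With only 10 prompts per model the two ranges overlap heavily, which is the quantitative version of "do not generalize too far."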

Pricing: the headline image-output price is similar

OpenAI’s pricing page lists gpt-image-2 image input at $8.00 per 1M tokens, cached image input at $2.00 per 1M tokens, and image output at $30.00 per 1M tokens [14]. OpenAI’s materials also list GPT Image 2 text input at $5.00 per 1M tokens, cached text input at $1.25 per 1M tokens, and text output at $10.00 per 1M tokens [14][21].

Google’s Gemini pricing page lists image output at $30 per 1,000,000 tokens and says output images up to 1024×1024 consume 1,290 tokens, equivalent to $0.039 per image [25].
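
The per-image figure follows directly from the token price. A minimal sketch of the arithmetic, using only the numbers quoted above (the function name is illustrative):

```python
def price_per_image(output_tokens: int, usd_per_million_tokens: float) -> float:
    """Convert a per-token output price into a per-image price."""
    return output_tokens * usd_per_million_tokens / 1_000_000


# Google's documented estimate: 1,290 output tokens at $30 per 1M tokens.
gemini_1024 = price_per_image(1_290, 30.00)
print(f"${gemini_1024:.4f} per image")  # 1,290 * 30 / 1,000,000 = 0.0387, which rounds to $0.039
```

The same function works for any model priced per output token, provided you know how many tokens a given resolution consumes.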

So the public pricing docs do not support a simple claim that one model is always cheaper. Real cost can diverge based on prompt length, image inputs, reference images, resolution, edit loops, retries, refusals, caching, and routing [14][21][25][26]. OpenAI’s pricing docs also show lower Batch API rates for GPT Image 2, and OpenAI says the Batch API can save 50% on inputs and outputs for asynchronous jobs run over 24 hours [14][15]. If your workload is bulk generation rather than interactive creation, that can materially affect cost.
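
A rough way to compare real cost is to divide the per-attempt price by the share of outputs you actually keep, then apply any batch discount. The sketch below is an assumption-laden illustration: the $0.04 per-attempt cost and 60% acceptance rate are made-up inputs, and only the 50% batch saving comes from the cited OpenAI material.

```python
def cost_per_accepted_image(
    cost_per_attempt: float,
    acceptance_rate: float,
    batch_discount: float = 0.0,
) -> float:
    """Expected spend per usable image, counting retries and rejected outputs."""
    if not 0 < acceptance_rate <= 1:
        raise ValueError("acceptance_rate must be in (0, 1]")
    if not 0 <= batch_discount < 1:
        raise ValueError("batch_discount must be in [0, 1)")
    return cost_per_attempt * (1 - batch_discount) / acceptance_rate


# Hypothetical inputs: $0.04 per attempt, 60% of outputs accepted.
interactive = cost_per_accepted_image(0.04, 0.60)
batched = cost_per_accepted_image(0.04, 0.60, batch_discount=0.50)
print(f"interactive: ${interactive:.4f}, batched: ${batched:.4f}")
```

A model with a lower sticker price but a lower acceptance rate can end up more expensive per usable image, which is why acceptance rate belongs in the comparison.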

Production parameters and routing

OpenAI’s GPT Image 2 model page lists tiered TPM and IPM rate limits; the Free tier is not supported, and limits scale upward with usage tier [13]. Google’s Nano Banana image-generation docs show Gemini API examples with inline image inputs, aspect ratio, and 2K resolution parameters [26].

If you use third-party routing, verify limits against that provider rather than assuming they match the first-party API. For example, Fal’s GPT Image 2 page lists custom dimensions that must be multiples of 16, a maximum single edge of 3840px, a maximum aspect ratio of 3:1, and a total pixel range from 655,360 to 8,294,400; it also notes that routing and quota behavior can depend on how the provider is configured [17].
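
Constraints like these are easy to check before a request is sent. The following is a minimal sketch of a pre-flight validator built only from the limits quoted above from Fal's page; the function and constant names are illustrative, and the limits should be re-verified against the provider since they can change.

```python
# Limits as quoted from Fal's GPT Image 2 page; verify before relying on them.
MULTIPLE = 16
MAX_EDGE = 3840
MAX_ASPECT = 3            # longest edge may be at most 3x the shortest
MIN_PIXELS = 655_360
MAX_PIXELS = 8_294_400


def dimension_problems(width: int, height: int) -> list[str]:
    """Return a list of violated constraints; an empty list means the size passes."""
    if width <= 0 or height <= 0:
        return ["width and height must be positive"]
    problems = []
    if width % MULTIPLE or height % MULTIPLE:
        problems.append(f"both edges must be multiples of {MULTIPLE}")
    if max(width, height) > MAX_EDGE:
        problems.append(f"no edge may exceed {MAX_EDGE}px")
    if max(width, height) > MAX_ASPECT * min(width, height):
        problems.append(f"aspect ratio may not exceed {MAX_ASPECT}:1")
    if not MIN_PIXELS <= width * height <= MAX_PIXELS:
        problems.append(f"total pixels must be between {MIN_PIXELS} and {MAX_PIXELS}")
    return problems


for size in [(1024, 1024), (3840, 2160), (512, 512), (3840, 1024)]:
    print(size, dimension_problems(*size) or "ok")
```

Returning every violated rule, rather than failing on the first, makes it easier to correct a rejected size in one pass.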

Which API should you use?

Choose GPT Image 2 if you need:

- Precise text, labels, UI copy, menus, posters, or technical terminology [3][6].

- Layout-heavy commercial assets such as ads, packaging, product mockups, and structured brand graphics [10].

- OpenAI API integration with documented model access, tiered rate limits, and token pricing [13][14].

- Batch-friendly economics for asynchronous high-volume image jobs [14][15].

Choose Nano Banana Pro if you need:

- Photoreal portraits, lifestyle imagery, UGC-style creative, skin texture, or lighting-heavy ad concepts [6].

- A Gemini/Nano Banana workflow with documented API parameters such as aspect ratio and 2K resolution [26].

- CJK typography polish or dramatic lighting, provided your own Nano Banana Pro test confirms the adjacent Nano Banana 2 signal from Genspark [3].

- A pricing model where Google’s documented 1024×1024 estimate of 1,290 output tokens, or $0.039 per image, fits your budgeting workflow [25].

Run a private benchmark before committing

The public comparisons are useful for shortlisting, not for final vendor selection. A stronger production test should use your own prompts, references, brand rules, and failure criteria. Vidguru’s benchmark describes a useful baseline approach: first-take generations with identical prompts and identical references where relevant, scored on prompt adherence, commercial usability, text accuracy, physical logic, and reference fidelity [10].

For a practical internal benchmark, include 30–50 prompts across your real categories: product shots, ads, UI screens, diagrams, multilingual text, reference-image edits, brand layouts, and policy-sensitive edge cases. Score each output on text accuracy, prompt adherence, layout and spatial logic, reference fidelity, photorealism or style match, editability across follow-up prompts, artifact rate, latency, refusal rate, and cost per accepted image.
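
The scoring step can be kept very simple. Below is a hedged sketch of one way to record and summarize results; the `Result` fields, the 1-to-5 score scale, the model labels, and the sample rows are all hypothetical, and a real harness would track more of the criteria listed above, such as latency and artifact rate.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class Result:
    model: str                 # hypothetical label, e.g. "model-a"
    prompt_id: str
    refused: bool              # the API declined to generate
    accepted: bool             # a reviewer judged the output usable
    cost_usd: float
    scores: dict[str, int] = field(default_factory=dict)  # assumed 1-5 scale


def summarize(results: list[Result], model: str) -> dict[str, float]:
    """Aggregate refusal rate, acceptance rate, text score, and cost per accepted image."""
    rows = [r for r in results if r.model == model]
    if not rows:
        raise ValueError(f"no results for {model}")
    accepted = [r for r in rows if r.accepted]
    text_scores = [r.scores["text_accuracy"] for r in rows if "text_accuracy" in r.scores]
    total_cost = sum(r.cost_usd for r in rows)
    return {
        "refusal_rate": sum(r.refused for r in rows) / len(rows),
        "acceptance_rate": len(accepted) / len(rows),
        "mean_text_accuracy": mean(text_scores) if text_scores else float("nan"),
        "cost_per_accepted_image": total_cost / len(accepted) if accepted else float("inf"),
    }


# Made-up sample rows, for illustration only.
sample = [
    Result("model-a", "menu-01", False, True, 0.04, {"text_accuracy": 5}),
    Result("model-a", "menu-02", False, False, 0.04, {"text_accuracy": 2}),
    Result("model-a", "cv-01", True, False, 0.0),
]
print(summarize(sample, "model-a"))
```

Counting rejected and refused attempts in the cost total is deliberate: it is what turns a per-image list price into a cost per accepted image.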

The current evidence is enough to choose a starting point: GPT Image 2 for text and structure, Nano Banana Pro for photoreal lighting and Gemini workflows. It is not enough to replace a workload-specific benchmark.