If your decision is based on the clearest public benchmark signal, GPT Image 2 wins the text-to-image comparison. Artificial Analysis lists GPT Image 2 (high) first in its Text to Image Arena with a 1331 Elo score, ahead of GPT Image 1.5 and Nano Banana 2 in the visible ranking snippet [31]. But that is not the same as saying GPT Image 2 is the best choice for every workflow. Google’s Nano Banana documentation explicitly shows selectable 512, 1K, 2K, and 4K resolutions, and Google describes Nano Banana/Gemini image generation as supporting high-speed generation, prompt-based editing, and visual reasoning [35][43].

The short verdict

- Text-to-image benchmark winner: GPT Image 2. The strongest leaderboard evidence available here is Artificial Analysis, where GPT Image 2 (high) leads with 1331 Elo [31].

- Editing winner: too close to call from one leaderboard. Artificial Analysis lists GPT Image 2 (high) at 1251 Elo for editing and Nano Banana Pro at 1250, while GPT Image 1.5 leads that specific editing leaderboard at 1267 [30].

- Workflow winner: depends on your constraints. Nano Banana has clearer official evidence for 4K output options in the provided Google docs [35], while OpenAI provides clearer official GPT-image-2 pricing and tiered rate-limit details in the provided sources [13][14].

What exactly is being compared?

OpenAI’s developer documentation lists GPT Image 2 as gpt-image-2-2026-04-21 and shows tiered rate limits for the model [13]. OpenAI’s pricing page lists GPT-image-2 as a state-of-the-art image generation model and provides token-based prices for image inputs, cached image inputs, image outputs, text inputs, and cached text inputs [14].
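Because the model page lists a dated snapshot name, pinning that exact ID in code is a simple way to keep benchmark runs reproducible. The sketch below assumes GPT Image 2 uses the same Images API call shape as earlier GPT image models (`client.images.generate(...)` returning base64 data in `data[0].b64_json`) and that `1024x1024` is an accepted size; both are assumptions to check against the current API reference. The client is passed in so the function can be exercised offline with a stub.

```python
import base64
from types import SimpleNamespace

# Dated snapshot name as listed on OpenAI's model page [13].
MODEL_ID = "gpt-image-2-2026-04-21"


def generate_png(client, prompt: str, size: str = "1024x1024") -> bytes:
    """Generate one image and return its decoded bytes.

    Assumption: gpt-image-2 uses the same Images API shape as earlier GPT image
    models, returning base64-encoded image data in result.data[0].b64_json.
    """
    result = client.images.generate(model=MODEL_ID, prompt=prompt, size=size)
    return base64.b64decode(result.data[0].b64_json)


# Offline stub with the same call shape, so the function can be checked without
# network access. In production, pass openai.OpenAI() instead.
class _StubImages:
    def generate(self, **kwargs):
        self.last_kwargs = kwargs
        payload = base64.b64encode(b"\x89PNG fake").decode("ascii")
        return SimpleNamespace(data=[SimpleNamespace(b64_json=payload)])


stub_client = SimpleNamespace(images=_StubImages())
png_bytes = generate_png(stub_client, "Poster with the headline 'SPRING SALE'")
```

With an API key configured, `generate_png(OpenAI(), prompt)` (after `from openai import OpenAI`) would make the same call against the live service.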

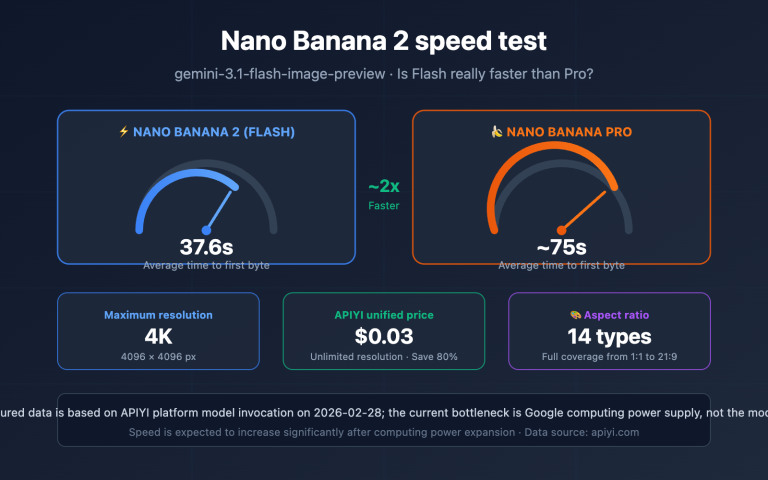

Nano Banana is less tidy as a label because the provided sources use it across multiple Gemini image-generation routes. Google’s image-generation documentation presents Nano Banana image generation through the Gemini API using gemini-3.1-flash-image-preview in the visible code example [35]. Google Skills describes Gemini 2.5 Flash Image, also called Nano Banana, as a model for high-speed image generation, prompt-based editing, and visual reasoning [43]. Artificial Analysis’ editing leaderboard snippet also refers to Nano Banana Pro as Gemini 3 Pro Image [30].

That naming difference matters: when a benchmark names Nano Banana 2, Nano Banana Pro, or Gemini Flash Image, it may not be measuring the same route.
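The naming drift is one reason to hard-code the exact model ID in every request. The sketch below uses the `gemini-3.1-flash-image-preview` name from Google's code example and requests a 4K image. The `image_config` fields (`aspect_ratio`, `image_size`) and the uppercase `"1K"`/`"2K"`/`"4K"` strings are assumptions based on the `google-genai` SDK's image settings; confirm them against the current docs, including how the 512 option is spelled. The client is injected so the function can be checked offline.

```python
from types import SimpleNamespace

# Model name from the visible code example in Google's image-generation docs [35].
MODEL_ID = "gemini-3.1-flash-image-preview"
# Assumed API strings for the 1K/2K/4K options; the docs also list 512, whose
# exact string value is not confirmed here, so it is left out.
ALLOWED_SIZES = {"1K", "2K", "4K"}


def generate_image_bytes(client, prompt: str, aspect_ratio: str = "16:9",
                         image_size: str = "4K") -> bytes:
    """Request one image and return the raw bytes of the first image part."""
    if image_size not in ALLOWED_SIZES:
        raise ValueError(f"unsupported image_size: {image_size!r}")
    response = client.models.generate_content(
        model=MODEL_ID,
        contents=[prompt],
        # A plain dict is used here in place of types.GenerateContentConfig.
        config={
            "response_modalities": ["TEXT", "IMAGE"],
            "image_config": {"aspect_ratio": aspect_ratio, "image_size": image_size},
        },
    )
    # Responses can mix text and image parts; return the first image payload.
    for part in response.candidates[0].content.parts:
        if getattr(part, "inline_data", None) is not None:
            return part.inline_data.data
    raise RuntimeError("response contained no image part")


# Offline stub mirroring the response shape; in production pass genai.Client()
# (after `from google import genai`) instead.
class _StubModels:
    def generate_content(self, **kwargs):
        self.last_kwargs = kwargs
        parts = [SimpleNamespace(inline_data=None, text="caption"),
                 SimpleNamespace(inline_data=SimpleNamespace(data=b"img"))]
        return SimpleNamespace(candidates=[SimpleNamespace(
            content=SimpleNamespace(parts=parts))])


stub_client = SimpleNamespace(models=_StubModels())
image = generate_image_bytes(stub_client, "Landscape product banner, studio lighting")
```

Rejecting unknown sizes up front keeps a typo like `"4k"` from silently falling back to a default resolution in a benchmark run.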

Benchmark comparison table

| Question | What the available evidence says | Practical read |
|---|---|---|
| Who leads text-to-image quality? | Artificial Analysis lists GPT Image 2 (high) first with 1331 Elo, followed by GPT Image 1.5 and Nano Banana 2 in the visible snippet. | GPT Image 2 has the strongest benchmark case for text-to-image. |
| Who leads image editing? | Artificial Analysis lists GPT Image 1.5 first at 1267 Elo, GPT Image 2 second at 1251, and Nano Banana Pro third at 1250. | GPT Image 2 and Nano Banana Pro look effectively tied in that snippet. |
| Do third-party arena reports agree? | Neurohive and CalcPro report a large GPT Image 2 lead, including a claimed +242 Elo margin in arena-style rankings. | Directionally pro-GPT, but verify live leaderboards and methodology. |
| Which is better for exact text and layouts? | Analytics Vidhya says GPT-image-2 makes sense when text inside images must be correct, prompts involve multiple constraints or layouts, or consistency matters. | GPT Image 2 is the safer first test for ads, posters, packaging, diagrams, and UI mockups with text. |
| Which has clearer 4K workflow evidence? | Google’s Nano Banana docs show a resolution setting with 512, 1K, 2K, and 4K options. | Nano Banana is easier to validate from official docs when 4K is a hard requirement. |
| Which is easier to price from official docs? | OpenAI lists GPT-image-2 pricing at $8 per 1M image input tokens, $2 per 1M cached image input tokens, and $30 per 1M image output tokens. | OpenAI’s official pricing is clearer in the provided source set. |
| Which is faster or cheaper? | Google Skills positions Nano Banana as high-speed. | Nano Banana may be better for fast or cost-sensitive production, but verify current pricing and latency. |

Text-to-image benchmarks: GPT Image 2 has the clearest lead

The cleanest benchmark signal in the provided sources is the Artificial Analysis text-to-image leaderboard snippet. It lists GPT Image 2 (high) as the top text-to-image model with a 1331 Elo score [31].

That does not prove GPT Image 2 will beat Nano Banana on every prompt. Elo-style leaderboards reflect aggregate preferences under a specific evaluation setup, and model rankings can shift as prompts, sampling, and model versions change. Still, if the question is which model has the stronger text-to-image benchmark evidence in this source set, the answer is GPT Image 2 [31].

Several third-party reports are even more favorable to GPT Image 2. Neurohive reports that GPT Image 2 took first place across image-generation categories with a +242 Elo lead over the nearest competitor, citing LM Arena [16]. CalcPro reports a 1512 text-to-image score and a +242 lead over Nano Banana 2 [28]. Those reports support the same general direction, but they should be treated as secondary evidence unless you verify the live leaderboard and evaluation method yourself.

Editing benchmarks: closer than the hype suggests

The editing picture is much less one-sided. In the Artificial Analysis editing leaderboard snippet, GPT Image 1.5 leads with 1267 Elo, GPT Image 2 (high) is second with 1251, and Nano Banana Pro is third with 1250 [30]. A one-point difference between GPT Image 2 and Nano Banana Pro is not enough to support a sweeping claim that GPT Image 2 dominates editing.
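Converting rating gaps into expected head-to-head preference rates makes the size of these gaps concrete. The sketch assumes the standard Elo logistic curve with a 400-point scale; whether each leaderboard fits its ratings on exactly this scale is an assumption, so treat the outputs as illustrative.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected share of head-to-head preferences won by A.

    Assumes the standard Elo logistic curve with a 400-point scale.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Artificial Analysis editing snippet: GPT Image 2 (high) 1251 vs Nano Banana Pro 1250.
one_point_gap = expected_score(1251, 1250)
# Third-party claim: a +242 Elo lead over the nearest competitor.
lead_242 = expected_score(1242, 1000)  # only the rating difference matters

print(f"1-point gap:   {one_point_gap:.3f}")
print(f"242-point gap: {lead_242:.3f}")
```

Under that assumption, the one-point editing gap implies roughly a 50.1% preference rate, effectively a coin flip, while the claimed +242 lead would imply roughly 80%.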

Another caveat: Arena.ai’s image-editing leaderboard snippet shows gemini-2.5-flash-image-preview (nano-banana) [29]. That makes it useful as evidence that Nano Banana is competitive in editing arenas, but not sufficient by itself to rank GPT Image 2 against Nano Banana in that leaderboard.

The practical conclusion: for editing, test both on your own image types. The available leaderboard evidence does not justify treating GPT Image 2 as an automatic editing winner over Nano Banana Pro [30].

Where GPT Image 2 earns its advantage

GPT Image 2 is the better first choice when the image has to obey text, layout, or instruction constraints. Analytics Vidhya’s comparison summary says GPT-image-2 makes sense when text inside images must be correct, prompts involve multiple constraints or layouts, or output consistency matters [6]. A hands-on comparison reached a similar mental model: GPT wins where “every character matters,” while Nano Banana wins where “every pixel of light matters” [3].

That maps directly to common production use cases. Start with GPT Image 2 for:

- Ad creatives with exact headlines or calls to action.

- Posters, menus, signage, and product labels.

- UI mockups, app screens, and web graphics with readable interface copy.

- Diagrams, infographics, and educational visuals with annotations.

- Prompts with many objects, spatial relationships, or layout rules.

This does not mean Nano Banana cannot do those tasks. It means the available comparison evidence gives GPT Image 2 the stronger first-test case when text and structure are expensive to fix later [3][6][31].

Where Nano Banana remains the practical pick

Nano Banana’s strongest supported advantage in this source set is workflow clarity around Gemini and high-resolution output. Google’s image-generation docs show aspect-ratio choices ranging across square, portrait, landscape, and ultrawide formats, plus selectable resolutions of 512, 1K, 2K, and 4K [35]. If 4K output is a hard requirement, that is easier to validate from the provided Google documentation than from the provided OpenAI snippets.

Nano Banana is also positioned around speed and iteration. Google Skills describes Gemini 2.5 Flash Image, or Nano Banana, as a model for high-speed image generation, prompt-based editing, and visual reasoning [43]. The hands-on comparison that ended with 2 GPT wins, 2 Nano Banana wins, and 2 ties also found a more balanced real-world picture than the most aggressive leaderboard headlines suggest [3].

Start with Nano Banana when:

- Your application already uses Gemini, Google AI Studio, or Google developer tooling [35][43].

- You need documented 4K image-generation options in the API path [35].

- You are generating high volumes of drafts, variants, or ideation images.

- Visual polish, lighting, and overall realism matter more than exact embedded text [3].

- Cost is a major constraint, while remembering that third-party cost claims should be verified against current pricing [6].

Pricing and rate limits: what the official sources show

OpenAI’s official pricing page gives a clear token-based structure for GPT-image-2. The provided pricing snippet lists image inputs at $8 per 1M tokens, cached image inputs at $2 per 1M tokens, image outputs at $30 per 1M tokens, text inputs at $5 per 1M tokens, and cached text inputs at $1.25 per 1M tokens [14].
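Token-based pricing makes per-request cost a simple sum of token counts times rates. The sketch below uses the listed GPT-image-2 prices; the token counts in the example job are hypothetical placeholders, because the provided snippets do not say how many tokens an input or output image consumes at a given size or quality. Replace them with measured usage from your own requests.

```python
from dataclasses import dataclass

# USD per 1M tokens for GPT-image-2, as listed in the pricing snippet [14].
PRICE_PER_M = {
    "text_input": 5.00,
    "cached_text_input": 1.25,
    "image_input": 8.00,
    "cached_image_input": 2.00,
    "image_output": 30.00,
}


@dataclass
class RequestTokens:
    """Token counts for one request. Defaults are zero; values are not measurements."""
    text_input: int = 0
    cached_text_input: int = 0
    image_input: int = 0
    cached_image_input: int = 0
    image_output: int = 0


def request_cost(tokens: RequestTokens) -> float:
    """Sum each token bucket times its per-token price, in USD."""
    return sum(
        getattr(tokens, bucket) * price / 1_000_000
        for bucket, price in PRICE_PER_M.items()
    )


# Hypothetical edit job: short prompt, one reference image, one output image.
job = RequestTokens(text_input=200, image_input=1_000, image_output=4_000)
per_request = request_cost(job)
print(f"per request:     ${per_request:.4f}")
print(f"10,000 requests: ${per_request * 10_000:,.2f}")
```

With these placeholder counts a request costs about $0.129, or about $1,290 per 10,000 requests. The image-output line accounts for $0.12 of that, so output token counts are the first number worth measuring.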

OpenAI’s GPT Image 2 model page also shows tiered rate limits. In the visible snippet, Free is not supported; Tier 1 is listed at 100,000 TPM and 5 IPM; and Tier 5 reaches 8,000,000 TPM and 250 IPM [13].
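The two limits interact, so a tier's effective throughput is whichever cap binds first. The sketch assumes IPM counts generated images and that all of a request's tokens count against TPM; both are simplifications to check against OpenAI's rate-limit docs, and the tokens-per-image values are hypothetical.

```python
# GPT Image 2 rate limits from the visible model-page snippet [13].
TIERS = {
    "tier_1": {"tpm": 100_000, "ipm": 5},
    "tier_5": {"tpm": 8_000_000, "ipm": 250},
}


def max_images_per_minute(tier: str, tokens_per_image: int) -> float:
    """Effective images/minute: the lower of the IPM cap and TPM / tokens per image."""
    limits = TIERS[tier]
    return float(min(limits["ipm"], limits["tpm"] / tokens_per_image))


def minutes_for_batch(n_images: int, tier: str, tokens_per_image: int) -> float:
    """Lower bound on wall-clock minutes to generate n_images at full throughput."""
    return n_images / max_images_per_minute(tier, tokens_per_image)


# Hypothetical token costs per image: a light request and a heavy one.
for tier in TIERS:
    for tokens in (5_000, 50_000):
        rate = max_images_per_minute(tier, tokens)
        print(f"{tier}, {tokens:>6} tokens/image: {rate:g} images/min, "
              f"1,000 images in >= {minutes_for_batch(1_000, tier, tokens):g} min")
```

Under these assumptions Tier 1 is IPM-bound at 5 images per minute for light requests but token-bound at 2 per minute once an image costs 50,000 tokens, so a 1,000-image batch takes at least 200 versus 500 minutes.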

For Nano Banana, the provided official Google image-generation snippet confirms model routing, aspect ratios, and resolution options, but it does not expose a comparable price table [35]. Analytics Vidhya says Nano Banana 2 is significantly cheaper at scale, especially with batch processing [6], but that is a third-party comparison claim. For budget planning, verify the current Google route, model variant, resolution, batch mode, and billing page before committing.

The best production strategy: route by job, not brand

For most serious workflows, the strongest answer is not one universal winner. It is prompt routing.

Use GPT Image 2 for tasks where mistakes are costly: exact copy, multilingual text, UI screens, diagrams, product packaging, signage, posters, and multi-constraint layouts. That recommendation matches the available benchmark lead in text-to-image and the comparison evidence around text and layout fidelity [3][6][31].

Use Nano Banana for fast visual exploration, Gemini-native apps, high-resolution workflows, 4K-oriented output paths, and images where final text can be added or corrected later in a design tool. That recommendation matches Google’s documented resolution options and the positioning of Nano Banana/Gemini image generation as high-speed and editing-capable [35][43].
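A routing layer can encode these rules directly. The sketch below is hypothetical: the job fields, the three-constraint threshold, and the route labels are illustrative choices, not part of either vendor's API, and the rule order (precision before resolution) is one reasonable policy among several.

```python
from dataclasses import dataclass

# Placeholder route labels; map them to the exact model IDs you deploy.
GPT_IMAGE_2 = "gpt-image-2"
NANO_BANANA = "nano-banana"


@dataclass
class ImageJob:
    """Hypothetical job description used only for routing decisions."""
    has_exact_text: bool = False    # headlines, labels, UI copy, annotations
    layout_constraints: int = 0     # number of spatial or layout rules in the prompt
    needs_4k: bool = False          # hard 4K output requirement
    gemini_native: bool = False     # app already built on Gemini tooling
    draft: bool = False             # ideation or variant; text can be fixed later


def route(job: ImageJob, layout_threshold: int = 3) -> str:
    # Precision first: wrong text or layout is expensive to fix after generation.
    if job.has_exact_text or job.layout_constraints >= layout_threshold:
        return GPT_IMAGE_2
    # Documented 4K options, Gemini integration, and fast drafts favor Nano Banana.
    if job.needs_4k or job.gemini_native or job.draft:
        return NANO_BANANA
    # No strong signal either way: fall back to a single default route.
    return GPT_IMAGE_2


print(route(ImageJob(has_exact_text=True)))
print(route(ImageJob(draft=True)))
```

Because the text check runs first, a job that needs both exact text and 4K goes to GPT Image 2. If 4K is a hard requirement in your pipeline, move that check to the top.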

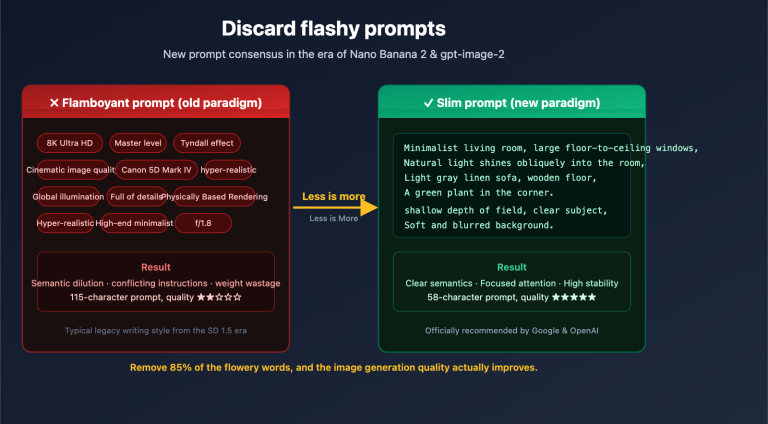

How to run your own fair benchmark

Public leaderboards are useful, but image generation is unusually prompt-sensitive. One hands-on comparison even concluded that prompt quality moved GPT Image 2 by a full tier, which can be a bigger effect than the model-vs-model gap in some tests [3].

A practical benchmark should include:

- The same prompts and reference images for both models. Do not compare a polished GPT prompt against a casual Nano Banana prompt.

- Separate scoring categories. Score text accuracy, prompt adherence, composition, photorealism, editing quality, latency, and cost separately.

- Real production constraints. Include your actual aspect ratios, resolution needs, rate limits, and budget assumptions, because those are documented differently across the provided OpenAI and Google sources [13][14][35].

- Exact model names. Record whether you tested GPT Image 2, Nano Banana 2, Nano Banana Pro, Gemini Flash Image, or another route, because the naming varies across sources [30][35][43].

- Blind review when possible. Human preference can change when reviewers know which model produced which image.
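Blind review and per-category scoring are easy to get wrong by hand. The sketch below shuffles each prompt's pair of outputs with a seeded RNG, keeps model identity in a private key, and averages scores per model ID and category only after unblinding. The file paths and scores are hypothetical; latency and cost are left out because they are measured rather than judged.

```python
import random
from collections import defaultdict
from statistics import mean

# Record exact model IDs, not marketing names.
MODELS = {"A": "gpt-image-2-2026-04-21", "B": "gemini-3.1-flash-image-preview"}
# Reviewer-judged categories; latency and cost are measured separately.
CATEGORIES = {"text_accuracy", "prompt_adherence", "composition",
              "photorealism", "editing_quality"}


def blind_pairs(outputs_a: dict, outputs_b: dict, seed: int = 0):
    """Pair both models' outputs per prompt in random left/right order.

    Returns (shown, key): `shown` is what reviewers see; `key` maps
    (prompt_id, slot) -> model label and stays hidden until scoring is done.
    """
    rng = random.Random(seed)
    shown, key = [], {}
    for pid in sorted(outputs_a.keys() & outputs_b.keys()):
        pair = [("A", outputs_a[pid]), ("B", outputs_b[pid])]
        rng.shuffle(pair)
        shown.append((pid, pair[0][1], pair[1][1]))
        key[(pid, "left")], key[(pid, "right")] = pair[0][0], pair[1][0]
    return shown, key


def unblind(scores, key) -> dict:
    """Average (prompt_id, slot, category, score) rows per (model ID, category)."""
    buckets = defaultdict(list)
    for pid, slot, category, score in scores:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        buckets[(MODELS[key[(pid, slot)]], category)].append(score)
    return {k: mean(v) for k, v in buckets.items()}


outputs_a = {"p1": "runs/a/p1.png", "p2": "runs/a/p2.png"}
outputs_b = {"p1": "runs/b/p1.png", "p2": "runs/b/p2.png"}
shown, key = blind_pairs(outputs_a, outputs_b, seed=7)
# Reviewers score only what they see, by slot; these numbers are made up.
scores = [("p1", "left", "text_accuracy", 4), ("p1", "right", "text_accuracy", 2),
          ("p2", "left", "text_accuracy", 3), ("p2", "right", "text_accuracy", 5)]
results = unblind(scores, key)
```

Only the person running the benchmark should see `key`; reviewers work from `shown` alone, which keeps model identity out of the scoring.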

Final verdict

GPT Image 2 wins the benchmark headline for text-to-image quality in the available evidence, with Artificial Analysis listing GPT Image 2 (high) first at 1331 Elo [31]. But Nano Banana remains a practical production choice where Gemini integration, documented 4K output options, speed, and cost-sensitive iteration matter more than maximum text fidelity [35][43].

If you are building a single default route, start with GPT Image 2 for text-heavy and layout-sensitive images. If you are building a production system, route tasks: GPT Image 2 for precision, Nano Banana for high-resolution Gemini-native iteration, and your own benchmark prompts for the final call.