GitHub remains a leading software development platform, and Business Insider notes that Microsoft acquired it in 2018 [14]. What has changed is the role GitHub is asking developers to accept for AI inside repositories. Copilot is no longer perceived only as a private autocomplete tool; it is increasingly discussed in relation to issues, pull requests, reviews, comments, and agent workflows where maintainers expect control [7][8].

That is why the current backlash is better understood as a trust problem than as a proven platform collapse.

Anger is documented; a mass departure is not

Available reporting supports developer frustration more strongly than it supports a sweeping exit narrative. The Register reported that unavoidable AI features had some developers looking at alternative code-hosting options, especially because maintainers wanted more control over Copilot activity in repositories [8]. Slashdot, covering the same controversy, cited a claim that GitHub’s move into Microsoft’s CoreAI group had helped shift some open-source community members from complaining about Copilot to actively moving away [1].

Those are serious warning signs. They are not, by themselves, evidence of a broad GitHub abandonment wave. The available sources do not include migration totals, enterprise churn data, or repository-level evidence showing that GitHub’s position has meaningfully collapsed. The narrower, better-supported conclusion is that developers are reassessing how much unchecked trust they want to place in GitHub as Microsoft pushes AI deeper into the platform [8][14].

Why Copilot became the flashpoint

The backlash is not simply about whether AI code completion is useful. It is about where Copilot is allowed to act.

The Register reported that the most popular GitHub Community discussion over the prior 12 months asked for a way to block Copilot from generating issues and pull requests in repositories [8]. The second most popular discussion, measured by upvotes, sought a fix for users’ inability to disable Copilot code reviews [8].

That distinction matters. An optional assistant in an editor is one thing. An AI system that can appear in issue queues, pull request flows, and review surfaces becomes part of repository governance. For maintainers, the concern is not only whether Copilot produces good code. It is whether project owners can set the rules for their own communities [8].

Product-quality complaints compound the control problem

Some frustration is also about Copilot’s perceived reliability as a tool. A GitHub Community discussion includes user allegations that Copilot in VS Code was unreliable and caused project damage [9]. That kind of thread should not be treated as an independent benchmark of Copilot across all users or workflows. But it helps explain why some developers no longer see unwanted Copilot activity as harmless automation [9].

When a tool is both hard to avoid and viewed by some users as unreliable, the argument shifts from productivity to consent.

Reliability incidents matter more when agents are in the loop

GitHub’s own status page shows why agentic workflows raise the stakes. On April 22, 2026, from 18:49 to 19:32 UTC, Copilot Cloud Agent sessions for the Agent HQ Codex agent failed to start from entry points including issue assignment and @copilot comment mentions [7]. GitHub said 0.5% of total Copilot Cloud Agent jobs were affected, or about 2,000 failed jobs, while Copilot and other agent sessions were unaffected [7].

That was not a platform-wide GitHub collapse. But it illustrates the operational risk created when teams route real work through AI agents. If developers assign issues to agents or trigger work through pull request comments, Copilot availability becomes part of delivery planning [7]. GitHub’s news page has also acknowledged recent availability incidents and said outages affect customers [10].

Microsoft’s AI roadmap changes the trust equation

Business Insider reported that Microsoft is reshuffling teams to bolster GitHub and overhaul it for AI coding and agents, as GitHub faces rivals such as Cursor and Claude Code [14]. From a product-strategy perspective, the direction is understandable: repositories, pull requests, issues, and reviews are natural places to embed AI coding tools.

Culturally, it is more sensitive. Many developers treat GitHub as shared software infrastructure. When Copilot features feel difficult to avoid, maintainers may read them less as optional productivity features and more as Microsoft using GitHub’s central position to distribute its AI strategy [8][14].

AI Credits make boundaries a budget issue

GitHub says Copilot is moving to usage-based billing and that, starting June 1, Copilot usage will consume GitHub AI Credits [10]. That does not prove every team will pay more. It does mean organizations need to understand where Copilot can run, who can trigger it, and how AI usage maps to budgets [10].

For teams already frustrated by Copilot activity in shared repository spaces, metered AI usage can make GitHub’s direction feel less like an optional assistant and more like a billable layer woven into the development workflow [8][10].

Not every anti-platform story is a GitHub exit

Broader developer-independence stories can get folded into the GitHub backlash even when they are not about GitHub specifically. David Heinemeier Hansson’s HEY profile identifies him as co-owner and CTO of 37signals and creator of Ruby on Rails [26]. His recent writing discusses 37signals’ cloud exit, including the arrival of twenty Dell R7625 servers and a plan to leave cloud complexity behind [17][22].

Those posts are about cloud infrastructure, not evidence of a documented GitHub departure. The distinction matters: skepticism toward centralized software platforms may be growing, but that is not the same as proof that developers are leaving GitHub en masse [17][22].

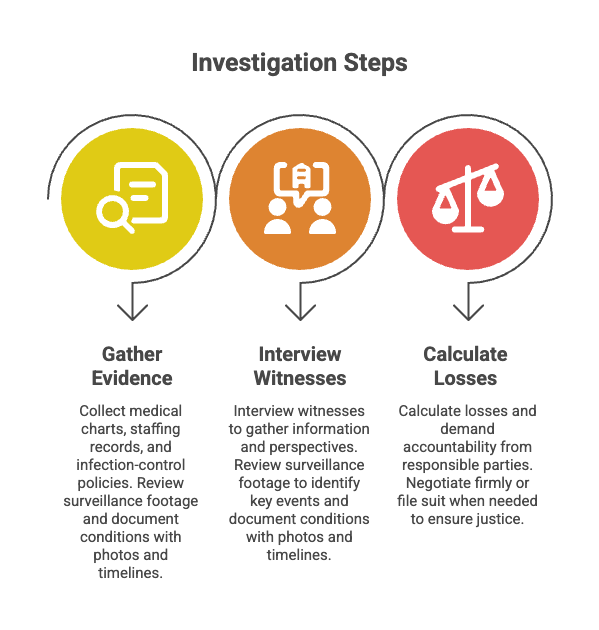

What engineering teams should do now

The practical response is not panic. It is to make GitHub and Copilot assumptions explicit.

- Audit Copilot entry points. Check where Copilot can appear or act in issues, pull requests, code reviews, comments, issue assignment, and @copilot mention workflows [7][8].

- Set repository-level policy. Decide which Copilot features are approved, restricted, or disallowed, especially for open-source projects and compliance-sensitive repositories [8].

- Review AI Credits before June 1. Copilot usage will consume GitHub AI Credits, so engineering, platform, and finance teams should understand how usage is counted [10].

- Plan for AI-agent incidents. If delivery depends on issue assignment, pull request comments, or agent sessions, treat Copilot availability as an operational dependency [7].

- Keep non-agent fallbacks simple. Critical repositories should still have clear human ownership, documented release procedures, and boring recovery paths when automation fails.
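One way to make the audit and policy items concrete is to write the repository's Copilot policy down as plain data that reviewers can diff. The Python sketch below is a minimal, hypothetical example. The entry-point labels paraphrase the list above. The `Decision` enum, the `undecided` and `agent_dependent` helpers, and the example policy are illustrative assumptions, not GitHub settings, configuration keys, or APIs. The sketch flags entry points that have no recorded decision. It also lists the entry points that remain enabled and therefore belong in an incident plan.

```python
from enum import Enum


class Decision(Enum):
    """Policy outcomes from the checklist: approved, restricted, or disallowed."""
    APPROVED = "approved"
    RESTRICTED = "restricted"
    DISALLOWED = "disallowed"


# Hypothetical labels for an internal review. They paraphrase the Copilot
# entry points named in this article; they are NOT GitHub API identifiers.
ENTRY_POINTS = (
    "issues",
    "pull_requests",
    "code_reviews",
    "comments",
    "issue_assignment",
    "copilot_mentions",
)


def undecided(policy: dict[str, Decision]) -> list[str]:
    """Return entry points with no recorded decision, in checklist order."""
    return [ep for ep in ENTRY_POINTS if ep not in policy]


def agent_dependent(policy: dict[str, Decision]) -> list[str]:
    """Return entry points left enabled (approved or restricted).

    These are the places where delivery could come to depend on Copilot
    availability, so they need a documented fallback.
    """
    return [
        ep for ep in ENTRY_POINTS
        if policy.get(ep) in (Decision.APPROVED, Decision.RESTRICTED)
    ]


if __name__ == "__main__":
    # Hypothetical policy for a compliance-sensitive repository.
    example_policy = {
        "issues": Decision.DISALLOWED,
        "pull_requests": Decision.RESTRICTED,
        "code_reviews": Decision.APPROVED,
        "issue_assignment": Decision.DISALLOWED,
    }
    print("Still undecided:", undecided(example_policy))
    print("Needs an incident plan:", agent_dependent(example_policy))
```

With the example policy, the script reports `comments` and `copilot_mentions` as still undecided. It reports `pull_requests` and `code_reviews` as the enabled surfaces that need a fallback. The value lies less in the code than in having one reviewable record of which Copilot surfaces a repository allows.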

Bottom line

The claim that developers are abandoning GitHub en masse is not supported by the evidence here. The stronger conclusion is that GitHub has a trust problem: Copilot is moving into shared development workflows, Microsoft is reportedly reorganizing GitHub around AI coding and agents, reliability incidents are more consequential, and usage-based AI billing is arriving [7][8][10][14].

GitHub still matters. The open question is how much control developers will demand as it becomes a more aggressive AI platform.