The fair comparison is narrower than the hype. Claude Opus 4.7 has more concrete public evidence for software engineering, tool use, context, and vision, while GPT-5.5’s strongest official data point is OpenAI’s 84.9% GDPval score for agents producing well-specified knowledge work across 44 occupations [2][3][14][24]. That makes Claude the better-supported first trial for coding and tool-heavy agents, but it does not prove Claude wins every category.

Verdict by use case

| Use case | Evidence-backed read | Why |

|---|---|---|

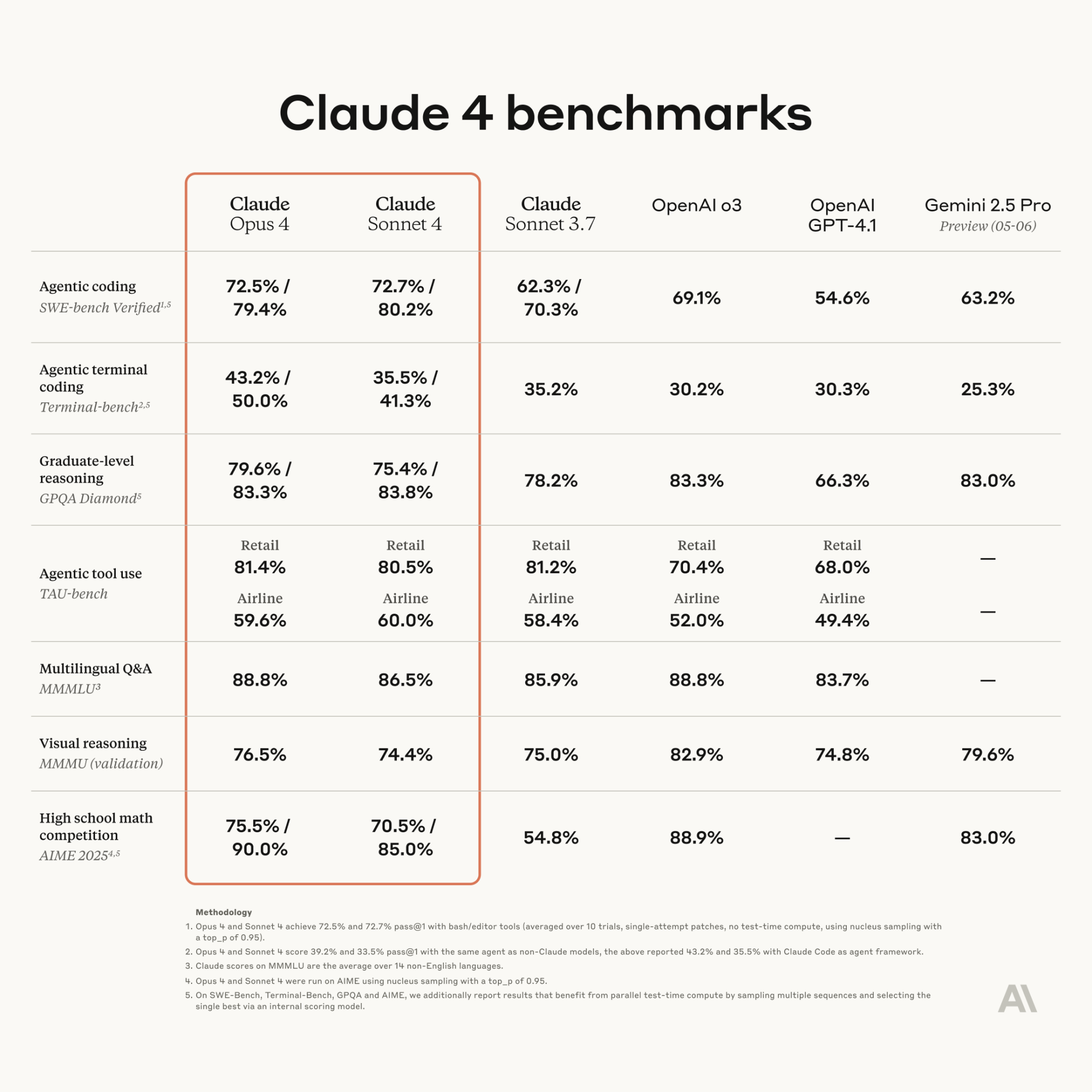

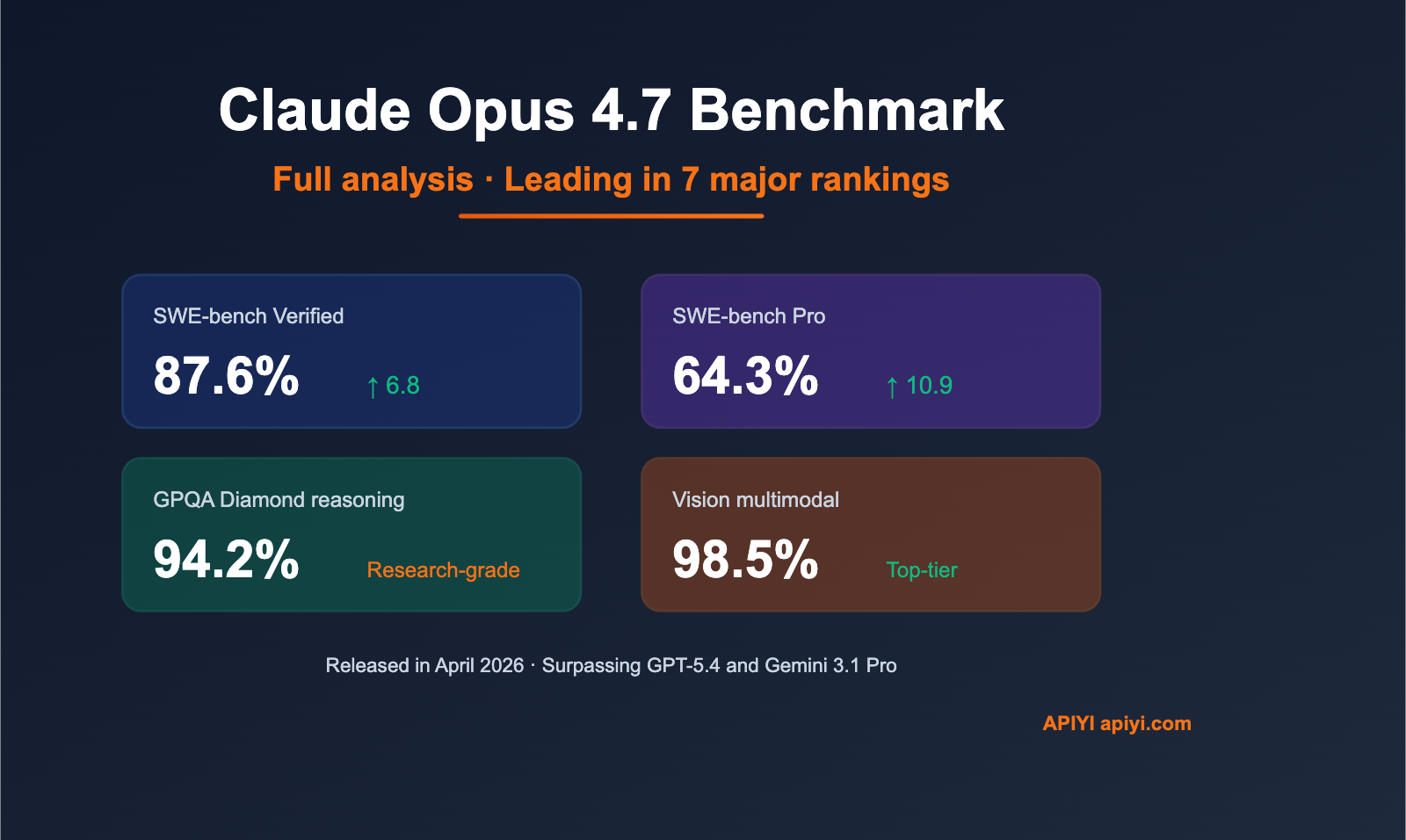

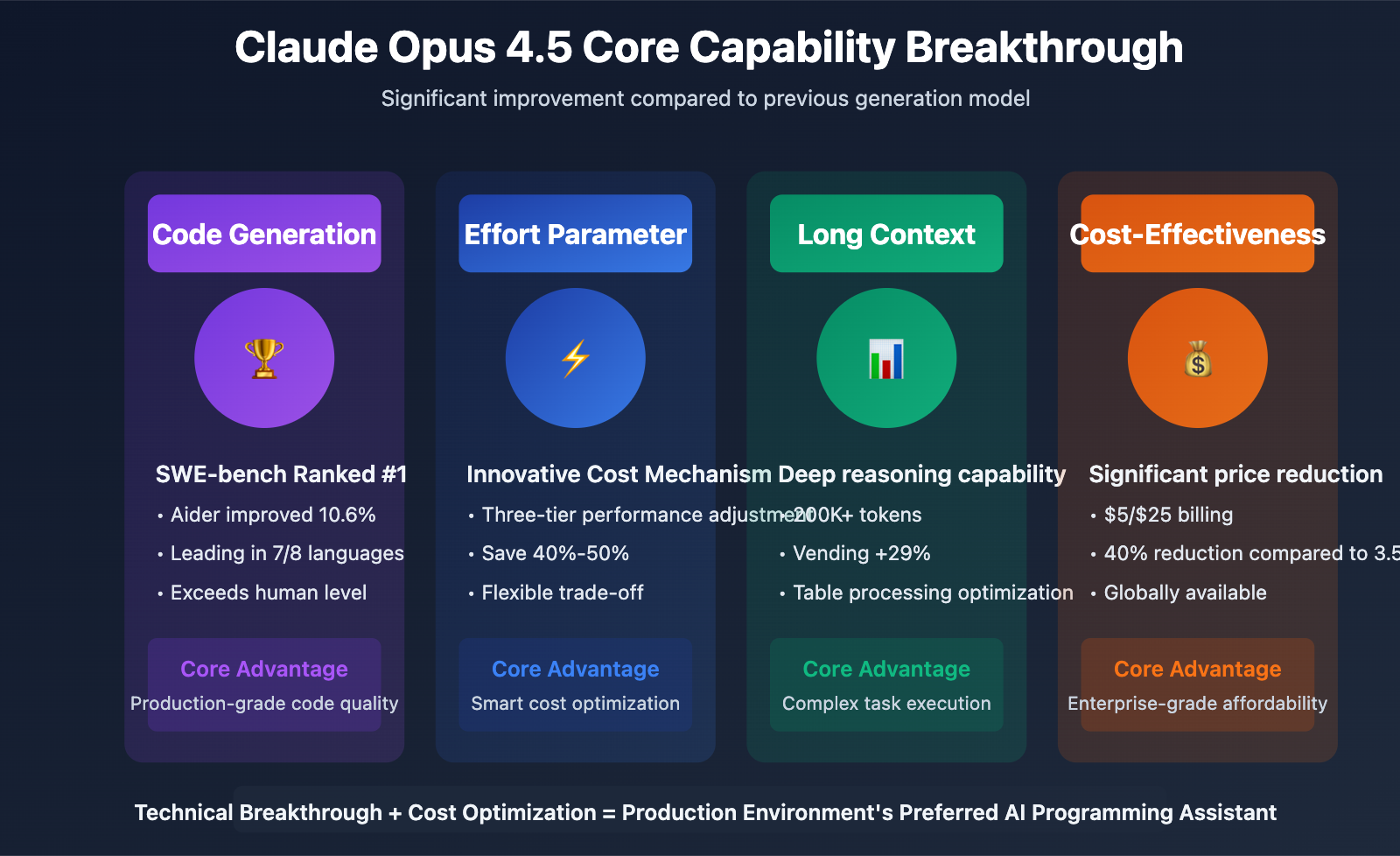

| Coding | Start with Claude Opus 4.7 | Vellum reports Claude Opus 4.7 at 87.6% on SWE-bench Verified and 64.3% on SWE-bench Pro, while BenchLM ranks it #2 for coding and programming with an average score of 95.3 [ |

| External-tool agents | Claude has the clearer tool-use benchmark | Vellum reports Claude Opus 4.7 at 77.3% on MCP-Atlas, compared with GPT-5.4 at 68.1%; that is useful, but it is not a GPT-5.5 comparison [ |

| Knowledge-work agents | GPT-5.5 deserves a serious trial | OpenAI reports GPT-5.5 at 84.9% on GDPval, which it describes as testing agents’ ability to produce well-specified knowledge work across 44 occupations [ |

| Deep research | No direct winner | BenchLM ranks Claude Opus 4.7 #1 in knowledge and understanding, but the supplied material does not include a shared GPT-5.5 deep-research benchmark [ |

| Design and UX | No responsible winner | The supplied sources focus on coding, tool use, knowledge work, context, vision, and cyber safeguards rather than design-specific evaluation [ |

| Context and vision | Claude has clearer supplied data | LLM Stats reports a 1M-token context window, 3.3x higher-resolution vision, and a new xhigh effort level for Claude Opus 4.7 [ |

| Access | Both are available through different surfaces | Anthropic says developers can use claude-opus-4-7 through the Claude API; an OpenAI developer community announcement says GPT-5.5 is available in Codex and ChatGPT [ |

Why this comparison is uneven

The strongest official Anthropic source confirms API availability for claude-opus-4-7 [16]. The richer performance picture for Claude comes from benchmark summaries and leaderboards, including BenchLM, Vellum, and LLM Stats [2][3][14].

For GPT-5.5, the strongest official source is OpenAI’s own announcement. It provides the 84.9% GDPval result and says OpenAI is deploying cyber safeguards for this level of capability while expanding access to cyber-permissive models [24]. The supplied OpenAI material does not include the same level of concrete GPT-5.5 detail for SWE-bench, design, vision, or a named deep-research benchmark [23][24].

That asymmetry matters. A model with more published numbers is not automatically better, but it is easier to justify in a procurement or engineering evaluation.

Coding: Claude has the stronger documented case

For software engineering, Claude Opus 4.7 has the clearest benchmark-backed argument. Vellum reports 87.6% on SWE-bench Verified and 64.3% on SWE-bench Pro, and BenchLM lists Claude Opus 4.7 as #2 in coding and programming benchmarks with an average score of 95.3 [2][3].

The main caveat is that Vellum’s direct OpenAI comparison is against GPT-5.4, not GPT-5.5. Vellum reports Claude Opus 4.7 ahead of GPT-5.4 on SWE-bench Pro and MCP-Atlas, but that cannot be cleanly extrapolated to GPT-5.5 [3].

For engineering teams, the practical approach is to test both models on the same repository tasks:

- Fix real backlog issues with failing tests.

- Refactor a complex module without changing behavior.

- Generate tests that catch known edge cases.

- Follow your style guide and architecture constraints.

- Use tools such as search, build logs, CI output, and package docs without inventing APIs.

Based on the cited evidence, Claude Opus 4.7 should be the first model to benchmark for coding, but not the only one.
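The repository tasks listed above can be scored side by side with a small harness. The following is a minimal sketch, not a reference implementation: `TaskResult`, `Attempt`, `run_suite`, and `pass_rates` are hypothetical names, and each model is assumed to be wrapped in an adapter that takes a task ID and returns whether the repository's tests pass after the model's attempt. No vendor API is called here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class TaskResult:
    task_id: str
    model: str
    passed: bool


# Hypothetical adapter type: takes a task ID and returns True when the
# repository's tests pass after the model's attempt. How the model is
# called and how tests are run is left to the adapter.
Attempt = Callable[[str], bool]


def run_suite(models: Dict[str, Attempt], task_ids: List[str]) -> List[TaskResult]:
    """Run every model on every task so both see the identical task set."""
    return [
        TaskResult(task_id, name, bool(attempt(task_id)))
        for task_id in task_ids
        for name, attempt in models.items()
    ]


def pass_rates(results: List[TaskResult]) -> Dict[str, float]:
    """Fraction of tasks passed, per model."""
    totals: Dict[str, int] = {}
    passes: Dict[str, int] = {}
    for r in results:
        totals[r.model] = totals.get(r.model, 0) + 1
        passes[r.model] = passes.get(r.model, 0) + int(r.passed)
    return {model: passes[model] / totals[model] for model in totals}


if __name__ == "__main__":
    # Stub adapters standing in for real model runs (illustrative only).
    stubs = {
        "model_a": lambda task_id: True,
        "model_b": lambda task_id: task_id != "refactor-billing",
    }
    tasks = ["fix-issue-101", "refactor-billing"]
    print(pass_rates(run_suite(stubs, tasks)))
```

Keeping the task list fixed across models is the point of the design: a pass rate is only comparable when both models attempted the same issues under the same test suite.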

Agents and tool use: two different signals

Claude’s strongest agentic signal in the supplied material is tool use. Vellum reports Claude Opus 4.7 at 77.3% on MCP-Atlas, ahead of GPT-5.4 at 68.1% [3]. If your agent needs to call tools, inspect external state, or coordinate MCP-style workflows, Claude has the clearer public benchmark trail.

GPT-5.5’s strongest official agent-related signal is different. OpenAI says GPT-5.5 scores 84.9% on GDPval, a benchmark for agents producing well-specified knowledge work across 44 occupations [24]. That supports testing GPT-5.5 for structured professional tasks, especially if your workflow already lives in ChatGPT or Codex [23][24].

The safest reading is split: Claude is better supported for tool-use evaluations, while GPT-5.5 is better documented for GDPval-style knowledge-work agents.

Deep research: promising, but not settled

The supplied evidence does not settle deep research. BenchLM ranks Claude Opus 4.7 #1 in knowledge and understanding and #2 overall on its provisional leaderboard, which supports Claude as a strong general knowledge model [2]. OpenAI’s GPT-5.5 page supports a different claim: strong performance on GDPval’s well-specified occupational knowledge-work tasks [24].

One supplied secondary source says GPT-5.4 led Claude Opus 4.7 on BrowseComp web research by 10 points, but that is about GPT-5.4, not GPT-5.5 [17]. It should not be used as proof that GPT-5.5 beats Claude Opus 4.7 on research.

If research quality matters, score both models on source retrieval, citation fidelity, contradiction handling, synthesis quality, and refusal to invent unsupported claims.
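One way to turn those five criteria into a comparable number is a weighted rubric. This is a sketch under stated assumptions: the 1 to 5 rating scale, the equal default weights, and the names `RESEARCH_CRITERIA` and `rubric_score` are illustrative choices, not something the cited sources prescribe.

```python
from typing import Dict, Optional, Sequence

# Criteria taken from the paragraph above; the snake_case keys are illustrative.
RESEARCH_CRITERIA = (
    "source_retrieval",
    "citation_fidelity",
    "contradiction_handling",
    "synthesis_quality",
    "refuses_unsupported_claims",
)


def rubric_score(
    ratings: Dict[str, int],
    criteria: Sequence[str] = RESEARCH_CRITERIA,
    weights: Optional[Dict[str, float]] = None,
) -> float:
    """Weighted mean of reviewer ratings on an assumed 1-5 scale."""
    weights = weights or {}
    missing = [c for c in criteria if c not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    total = 0.0
    weight_sum = 0.0
    for criterion in criteria:
        rating = ratings[criterion]
        if not 1 <= rating <= 5:
            raise ValueError(f"{criterion} rating {rating} is outside 1-5")
        weight = weights.get(criterion, 1.0)  # unlisted criteria weigh 1.0
        total += weight * rating
        weight_sum += weight
    if weight_sum <= 0:
        raise ValueError("weights must sum to a positive value")
    return total / weight_sum
```

Have the same reviewers rate both models' answers to the same prompts, ideally without knowing which model produced which answer, and compare the mean `rubric_score` per model. Raising the weight on `citation_fidelity` is one way to penalize invented sources more heavily.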

Design and UX: do not call a winner

There is no citation-backed design winner in the supplied material. The Claude sources emphasize coding, tool use, knowledge, context, vision, and reasoning [2][3][14]. The GPT-5.5 official source emphasizes GDPval, cyber safeguards, and access rather than UI design, product design, brand systems, or UX-specific benchmarks [24].

Design teams should run a practical test suite instead of relying on general model rankings. Useful prompts include turning a product requirement into a wireframe spec, critiquing a checkout flow, generating accessible design tokens, writing component documentation, and producing alternative UX copy. Score the outputs for specificity, accessibility, consistency, usability, and whether the model invents constraints.

Context, vision, cost, and safety signals

Claude has more concrete supplied data for long-context and multimodal work. LLM Stats reports Claude Opus 4.7 with a 1M-token context window, 3.3x higher-resolution vision, and a new xhigh effort level [14]. The same secondary source reports pricing at $5 per million input tokens and $25 per million output tokens, but pricing should be verified against current vendor pages before buying because the supplied official Anthropic snippet only confirms API access [14][16].
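To see what those reported rates would mean per request, the arithmetic can be sketched as follows. The $5 and $25 figures are the secondary-source numbers quoted above, not verified vendor pricing, and `estimate_cost_usd` is a hypothetical helper name.

```python
from decimal import Decimal

# Rates as reported by the secondary source; treat them as assumptions
# and confirm against the vendor's current pricing page.
INPUT_USD_PER_MTOK = Decimal("5")
OUTPUT_USD_PER_MTOK = Decimal("25")
TOKENS_PER_MTOK = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimated cost of one request in USD at the assumed rates."""
    input_cost = Decimal(input_tokens) / TOKENS_PER_MTOK * INPUT_USD_PER_MTOK
    output_cost = Decimal(output_tokens) / TOKENS_PER_MTOK * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


if __name__ == "__main__":
    # A long-context request: 200,000 input tokens and 4,000 output tokens.
    print(estimate_cost_usd(200_000, 4_000))
```

At these rates the example request works out to $1.00 of input plus $0.10 of output, so input volume dominates the bill for long-context workloads even though output tokens cost five times as much each.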

GPT-5.5 has the clearer official cyber-safety statement in this source set. OpenAI says it is deploying safeguards for GPT-5.5’s level of cyber capability and expanding access to cyber-permissive models [24]. That matters for teams evaluating security, cyber-defense, or governed enterprise workflows.

Which model should you choose?

Choose Claude Opus 4.7 first if your priority is:

- Repository-scale coding, debugging, refactoring, or test generation [2][3].

- Tool-use agents and MCP-style workflows [3].

- Long-context or vision-heavy tasks where the reported 1M-token context window and higher-resolution vision are relevant [14].

Choose GPT-5.5 first if your priority is:

- Workflows already centered on ChatGPT or Codex [23].

- GDPval-style professional knowledge work across occupations [24].

- Cyber-sensitive deployments where OpenAI’s stated safeguard posture is a key buying factor [24].

Do not choose either model solely on brand, launch hype, or a single leaderboard. The available evidence supports Claude as the first coding and tool-use trial, GPT-5.5 as a serious OpenAI-native knowledge-work trial, and custom evaluation for design or deep research [2][3][23][24].