Cloud giants’ AI infrastructure boom is best read as a conditional capex bet, not a simple bubble call. The largest platforms can justify building while AI compute is scarce, but the investment only pays off if enterprises turn experiments into recurring, high-margin cloud workloads.

The verdict: sustainable for now, but only if demand catches up

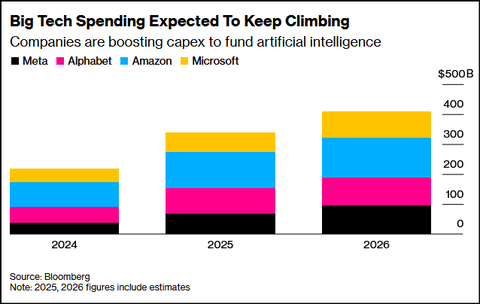

The spending numbers are huge, and they vary by source. Futurum estimates that Microsoft, Alphabet, Amazon, Meta, and Oracle have committed $660 billion to $690 billion of 2026 capital expenditure, nearly doubling 2025 levels [2]. Campaign US says Meta, Microsoft, Alphabet, and Amazon are on track to spend upward of $650 billion in 2026 on AI investments, focused on advanced data centers, specialized chips, and liquid-cooling systems [5]. Business Insider separately reported that Amazon, Microsoft, Meta, and Google were planning up to $725 billion in 2026 capex after first-quarter earnings updates [8].

Spending on that scale changes the debate. The question is no longer whether AI is strategically important; it is whether AI infrastructure will be used enough, and priced well enough, to earn back the buildout.

Why hyperscalers are building ahead of proof

For the biggest cloud platforms, underbuilding is its own risk. If AI workloads keep growing, companies with available data-center and chip capacity can capture demand when others are constrained. AInvest describes the 2026 data-center expansion as happening amid supply constraints and warns that infrastructure investment is running ahead of software value capture [7]. Campaign US similarly says the 2026 spending surge is aimed at infrastructure needed for next-generation generative AI, including advanced data centers, specialized chips, and liquid-cooling systems [5].

This does not make every dollar safe. It means the buildout is a strategic race for a scarce input: compute. In that kind of market, building early can be rational even before final demand is fully proven.

The enterprise ROI gap is the weak point

The hard part is that enterprise AI value is still uneven. McKinsey’s 2025 Global Survey found that nearly two-thirds of respondents say their organizations have not yet begun scaling AI across the enterprise; 64% say AI is enabling innovation, but only 39% report enterprise-level EBIT impact [27]. McKinsey also notes that organizations are beginning to redesign workflows and put senior leaders into AI governance roles as they try to capture bottom-line impact [22].

The more bearish evidence comes from coverage of MIT’s GenAI Divide. Digital Commerce 360 reported that, despite an estimated $30 billion to $40 billion in enterprise generative AI spending, 95% of organizations had not seen measurable financial return, while only 5% of integrated pilots were extracting millions in value [24]. That should be read as a warning signal rather than a final verdict on enterprise AI: the issue is not that no company can get value, but that value appears concentrated in a small set of scaled deployments.

The four signals to watch

1. Utilization

The central metric is whether GPUs and AI data centers stay full. High utilization turns expensive infrastructure into sellable capacity. Low utilization exposes overbuild and makes fixed costs harder to absorb.

2. Pricing power

AI compute needs to remain valuable enough to support margins. If cloud providers compete away pricing before enterprise usage matures, revenue growth may not translate into attractive returns.

3. Enterprise-level impact

Individual pilots and use-case wins are not enough. The more important signal is whether customers report measurable enterprise impact, where McKinsey’s survey still shows a gap between innovation benefits and EBIT impact [27].

4. Investor tolerance

Investors are not treating all AI capex the same. Fortune reported that after Alphabet, Meta, and Microsoft discussed higher AI spending, Meta fell more than 6% after hours, Microsoft was essentially flat, and Alphabet rose almost 7% [1]. That reaction suggests investors are judging each company’s path to returns, not simply rejecting AI infrastructure spending.
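The first two signals, utilization and pricing power, can be made concrete with simple payback arithmetic. The sketch below is illustrative only: the function name, the cost structure, and every number in it are hypothetical assumptions chosen for round arithmetic, not figures from any of the sources cited here. It shows how the time needed to earn back a fixed buildout stretches as utilization falls or as pricing is competed down toward the cost of serving each hour.

```python
import math


def payback_years(capex, capacity_hours_per_year, utilization,
                  price_per_hour, cost_per_sold_hour):
    """Years needed to recover capex from the margin on sold compute hours.

    Hypothetical simplification: ignores depreciation schedules, financing
    costs, taxes, and hardware refresh cycles.
    """
    sold_hours = capacity_hours_per_year * utilization
    annual_margin = sold_hours * (price_per_hour - cost_per_sold_hour)
    if annual_margin <= 0:
        # No margin means the buildout never pays back.
        return math.inf
    return capex / annual_margin


if __name__ == "__main__":
    # All inputs below are made-up illustrative values, not real estimates.
    capex = 1_000.0          # upfront buildout cost
    capacity_hours = 100.0   # sellable compute hours per year at 100% use
    cost_per_hour = 2.0      # power, staff, etc. per sold hour

    for utilization in (1.0, 0.75, 0.5):
        for price in (10.0, 6.0):
            years = payback_years(capex, capacity_hours, utilization,
                                  price, cost_per_hour)
            print(f"utilization={utilization:.0%} price={price:.1f} "
                  f"payback={years:.2f} years")
```

Under these assumptions, halving utilization doubles the payback period, and a price cut hurts more than proportionally because the per-hour serving cost does not fall with it. That is why the two signals matter together rather than separately.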

Who is most exposed?

The safest capex is capacity that can serve many paid workloads. A hyperscaler with broad enterprise cloud demand has more room for error than a company whose buildout depends on a narrower set of AI demand assumptions. Futurum notes that pure-play AI vendors led by OpenAI and Anthropic are growing rapidly, but their combined revenues remain a fraction of the infrastructure investment being deployed on their behalf [2].

That imbalance is the core risk. If enterprise customers keep AI stuck in pilots, infrastructure owners may still grow AI revenue, but not necessarily enough to justify the highest capex estimates. If customers redesign workflows and scale production AI, the same capacity can become a durable cloud growth engine [22][27].

Bottom line

Big Tech’s AI infrastructure bet can pay off, but it is not self-validating. The near-term spending is defensible because AI compute is strategically scarce and the largest platforms cannot afford to miss a major workload shift [5][7]. The long-term economics depend on whether enterprise AI demand becomes real, recurring, and profitable at scale.

The buildout looks smart if AI becomes a sticky cloud workload with high utilization and resilient pricing. It starts to look like overbuild if 2026 capex estimates in the $650 billion-plus range arrive faster than enterprises can convert AI pilots into measurable financial returns [2][5][24][27].